Open-Source Models in Practice - DeepSeek-R1-Distill-Qwen-7B - LoRA Fine-Tuning - LLaMA-Factory - Single Machine, Single GPU - V100 (Part 1)

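1. Introduction

The large language model field is moving fast, with new models appearing constantly. DeepSeek-R1-Distill-Qwen-7B has attracted many developers with its strong quality and performance, and LLaMA-Factory is a capable fine-tuning tool for adapting such models to specific needs.

This article walks through fine-tuning DeepSeek-R1-Distill-Qwen-7B with LLaMA-Factory, so that the model better serves our own use case.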

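2. Terminology

2.1. LoRA Fine-Tuning

LoRA (Low-Rank Adaptation) is a method for fine-tuning large language models (LLMs). It freezes the pretrained weights and trains small low-rank matrices added to selected layers. Because these matrices can be merged into the original weights after training, LoRA introduces no additional inference latency, and it significantly reduces the number of trainable parameters for downstream tasks while preserving model quality.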
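To make the "fewer trainable parameters" claim concrete, here is a minimal sketch that counts the LoRA parameters for this model. It assumes each adapted linear layer gets two matrices, A (rank x d_in) and B (d_out x rank), with no extra bias terms. The layer shapes come from the Qwen2Config printed in the training log later in this article, and the rank and target modules come from the training config (lora_rank: 8, lora_target: all). The function names are illustrative only.

```python
# Shapes from the Qwen2Config printed in the training log of this article.
HIDDEN = 3584          # hidden_size
INTERMEDIATE = 18944   # intermediate_size
NUM_HEADS = 28         # num_attention_heads
NUM_KV_HEADS = 4       # num_key_value_heads
NUM_LAYERS = 28        # num_hidden_layers
RANK = 8               # lora_rank in the training config
TOTAL_PARAMS = 7_635_801_600  # "all params" reported in the log


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters added to one linear layer: A (rank x d_in) + B (d_out x rank)."""
    return rank * (d_in + d_out)


def count_trainable() -> int:
    """Sum the LoRA parameters over the seven linear modules in every layer."""
    head_dim = HIDDEN // NUM_HEADS      # 128
    kv_dim = head_dim * NUM_KV_HEADS    # 512, grouped-query attention
    shapes = {
        "q_proj": (HIDDEN, HIDDEN),
        "k_proj": (HIDDEN, kv_dim),
        "v_proj": (HIDDEN, kv_dim),
        "o_proj": (HIDDEN, HIDDEN),
        "gate_proj": (HIDDEN, INTERMEDIATE),
        "up_proj": (HIDDEN, INTERMEDIATE),
        "down_proj": (INTERMEDIATE, HIDDEN),
    }
    per_layer = sum(lora_params(d_in, d_out, RANK) for d_in, d_out in shapes.values())
    return per_layer * NUM_LAYERS


trainable = count_trainable()
percent = 100 * trainable / TOTAL_PARAMS
print(f"trainable params: {trainable:,} ({percent:.4f}%)")
```

Under these assumptions the count agrees with the "trainable params: 20,185,088 ... trainable%: 0.2643" line in the training log.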

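2.2. Parameter-Efficient Fine-Tuning (PEFT)

PEFT methods fine-tune only a small number of (additional) model parameters while keeping most of the pretrained LLM's parameters frozen, which greatly reduces compute and storage costs.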

2.3. LLaMA-Factory

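LLaMA-Factory is an open-source framework for training, fine-tuning, and deploying large language models. Despite the name, it is not limited to LLaMA (Large Language Model Meta AI): it supports 100+ LLMs and VLMs, and bundles features such as data processing, model configuration, and training monitoring so that researchers and developers can work with these models more efficiently.

For the list of models supported by LLaMA-Factory, see the project README.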

2.4. DeepSeek-R1-Distill-Qwen-7B

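DeepSeek-R1-Distill-Qwen-7B is a model released by DeepSeek. It is a distilled model: a 7B Qwen base model (Qwen2.5-Math-7B, according to the DeepSeek-R1 model card) fine-tuned on samples generated by DeepSeek-R1, which transfers part of the larger model's reasoning ability into a much smaller model.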

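3. Prerequisites

3.1. Base Environment and Prerequisites

1. Operating system: CentOS 7

2. GPU: NVIDIA Tesla V100 32GB, CUDA Version: 12.2

3. Download the DeepSeek-R1-Distill-Qwen-7B model in advance

The model can be downloaded from either of the following two sources; ModelScope is the recommended first choice.

Hugging Face:

https://huggingface.co/deepseek-ai/DeepSeek-R1-Distill-Qwen-7B/tree/main

ModelScope:

ModelScope community (魔搭社区)

Download with either the SDK or Git, as needed.

Example download using git-lfs: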
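A minimal sketch of such a download, wrapping the usual `git lfs install` and `git clone` commands in Python. The clone URL is the Hugging Face repository listed above without the `/tree/main` suffix. The target directory is taken from the `model_name_or_path` used later in this article. The helper names `build_commands` and `download` are hypothetical, and the sketch assumes `git` and `git-lfs` are already installed.

```python
import subprocess
from pathlib import Path
from typing import List

# Repository URL from this article (web URL minus "/tree/main").
HF_REPO_URL = "https://huggingface.co/deepseek-ai/DeepSeek-R1-Distill-Qwen-7B"
# Assumed target: the model path used in the training config later on.
TARGET_DIR = "/data/model/DeepSeek-R1-Distill-Qwen-7B"


def build_commands(repo_url: str, target_dir: str) -> List[List[str]]:
    """Return the git commands to run, in order, without executing them."""
    return [
        ["git", "lfs", "install"],               # enable LFS hooks for this user
        ["git", "clone", repo_url, target_dir],  # LFS objects are fetched on checkout
    ]


def download(repo_url: str, target_dir: str) -> None:
    """Run the commands, stopping at the first failure."""
    Path(target_dir).parent.mkdir(parents=True, exist_ok=True)
    for cmd in build_commands(repo_url, target_dir):
        subprocess.run(cmd, check=True)


# Usage (downloads roughly 15 GB of weights, so it is left commented out):
# download(HF_REPO_URL, TARGET_DIR)
```

Keeping command construction separate from execution makes it easy to print and review the commands before starting a large download.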

3.2. Installing Anaconda

1. Update the system

sudo yum update -y
sudo yum upgrade -y

2. Download Anaconda

wget https://repo.anaconda.com/archive/Anaconda3-2022.10-Linux-x86_64.sh

3. Verify data integrity

sha256sum Anaconda3-2022.10-Linux-x86_64.sh

4. Run the Anaconda installation script

bash Anaconda3-2022.10-Linux-x86_64.sh

Installation directory: /opt/anaconda3

Note: the install location can also be specified directly when running the installer, by changing the command to:

bash Anaconda3-2022.10-Linux-x86_64.sh -p /opt/anaconda3

When prompted "Do you wish the installer to initialize Anaconda3 by running conda init?", answer yes.

If initialization was skipped, run:

/opt/anaconda3/bin/conda init

Note: during initialization, Anaconda writes its configuration to ~/.bashrc. Apply it with:

source ~/.bashrc

5. Verify the installation

conda --version

6. Configure mirror channels

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/
conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/
conda config --set show_channel_urls yes

3.3. Downloading LLaMA-Factory

Option 1: download directly

URL: GitHub - hiyouga/LLaMA-Factory: Unified Efficient Fine-Tuning of 100+ LLMs & VLMs (ACL 2024)

Option 2: clone the project with git

git clone --depth 1 https://github.com/hiyouga/LLaMA-Factory.git

Place the downloaded project under the /data/service directory.

3.4. Installing Dependencies

conda create --name llama_factory python=3.10

conda activate llama_factory

cd /data/service/LLaMA-Factory

pip install -e ".[torch,metrics]" -i https://pypi.tuna.tsinghua.edu.cn/simple

PS: hardware and software requirements
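One hardware point worth checking on a V100 is mixed-precision support. The V100 is a Volta GPU (compute capability 7.0), while native bf16 support starts with Ampere (compute capability 8.0). The sketch below is a rule of thumb only, not part of LLaMA-Factory: `pick_precision` is a hypothetical helper that suggests which of the `bf16` / `fp16` config flags to try first. The configuration in this article sets `bf16: true` and the run completed, so treat the result as a hint, not a requirement.

```python
from typing import Tuple


def pick_precision(compute_capability: Tuple[int, int]) -> str:
    """Suggest a mixed-precision flag from the GPU compute capability.

    Rule of thumb (an assumption of this sketch): GPUs with compute
    capability >= 8.0 have native bf16 support, older ones only fp16.
    """
    major, _minor = compute_capability
    return "bf16" if major >= 8 else "fp16"


def detect_compute_capability(device_index: int = 0) -> Tuple[int, int]:
    """Query the local GPU. Requires PyTorch with CUDA; imported lazily."""
    import torch

    return torch.cuda.get_device_capability(device_index)


# Tesla V100 reports compute capability (7, 0).
print(pick_precision((7, 0)))
```

On a machine with PyTorch installed, `pick_precision(detect_compute_capability())` gives the suggestion for the local GPU.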

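4. Implementation

4.1. Data Preparation

Two dataset formats are available: alpaca and sharegpt.

alpaca example format:

[
  {
    "instruction": "user instruction (required)",
    "input": "user input (optional)",
    "output": "model response (required)",
    "system": "system prompt (optional)",
    "history": [
      ["first-round instruction (optional)", "first-round response (optional)"],
      ["second-round instruction (optional)", "second-round response (optional)"]
    ]
  }
]

For data in the format above, the dataset description in dataset_info.json should be:

"dataset name": {
  "file_name": "data.json",
  "columns": {
    "prompt": "instruction",
    "query": "input",
    "response": "output",
    "system": "system",
    "history": "history"
  }
}

sharegpt example format: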

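- Compared with the alpaca format, the sharegpt format supports more roles, such as human, gpt, observation, and function. They form a list of objects in the conversations column.

- Note that human and observation must appear in odd positions, while gpt and function must appear in even positions.

[
  {
    "conversations": [
      {"from": "human", "value": "user instruction"},
      {"from": "function_call", "value": "tool arguments"},
      {"from": "observation", "value": "tool result"},
      {"from": "gpt", "value": "model response"}
    ],
    "system": "system prompt (optional)",
    "tools": "tool description (optional)"
  }
]

For data in the format above, the dataset description in dataset_info.json should be:

"dataset name": {
  "file_name": "data.json",
  "formatting": "sharegpt",
  "columns": {
    "messages": "conversations",
    "system": "system",
    "tools": "tools"
  }
}

The rest of this article uses the sharegpt format. Upload the test data to the /data/service/LLaMA-Factory/data/ directory with the file name ds_zh_demo.json. Sample test data: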

[
  {
    "conversations": [
      {"from": "user", "value": "什么是深度学习,它与传统机器学习有什么区别?"},
      {"from": "assistant", "value": "深度学习是机器学习的一个子领域,使用人工神经网络,尤其是深度神经网络,来进行数据建模。与传统机器学习方法相比,深度学习能够自动提取特征,无需手动特征工程,适合处理大规模数据集和复杂模式。"}
    ]
  },
  {
    "conversations": [
      {"from": "user", "value": "如何评估一个机器学习模型的性能?"},
      {"from": "assistant", "value": "评估机器学习模型的性能通常使用多种指标,包括准确率、精确率、召回率、F1-score、ROC曲线和AUC值。选择合适的指标取决于具体任务的性质和目标。"}
    ]
  }
]
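Before training, it can help to sanity-check the data file. The following is a minimal sketch of such a check, assuming the simple two-role layout used in ds_zh_demo.json (`user` and `assistant` turns that strictly alternate and come in pairs). It does not handle tool roles, and `validate_sharegpt` is a hypothetical helper, not a LLaMA-Factory function.

```python
import json
from typing import Any, Dict, List


def validate_sharegpt(
    records: List[Dict[str, Any]],
    user_tag: str = "user",
    assistant_tag: str = "assistant",
) -> List[str]:
    """Return a list of human-readable problems; an empty list means OK."""
    errors: List[str] = []
    for idx, record in enumerate(records):
        turns = record.get("conversations")
        if not isinstance(turns, list) or not turns:
            errors.append(f"record {idx}: 'conversations' is missing or empty")
            continue
        if len(turns) % 2 != 0:
            errors.append(f"record {idx}: expected an even number of turns, got {len(turns)}")
        for pos, turn in enumerate(turns):
            # Turns at index 0, 2, ... are the user; 1, 3, ... the assistant.
            expected = user_tag if pos % 2 == 0 else assistant_tag
            if turn.get("from") != expected:
                errors.append(f"record {idx}, turn {pos}: expected role '{expected}', got {turn.get('from')!r}")
            if not str(turn.get("value", "")).strip():
                errors.append(f"record {idx}, turn {pos}: empty 'value'")
    return errors


sample = json.loads(
    '[{"conversations": [{"from": "user", "value": "question"},'
    ' {"from": "assistant", "value": "answer"}]}]'
)
print(validate_sharegpt(sample))
```

For the real file, load it with `json.load(open(path, encoding="utf-8"))` and pass the resulting list to `validate_sharegpt`.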

Edit the dataset description file dataset_info.json:

vi /data/service/LLaMA-Factory/data/dataset_info.json

Add the following entry:

"ds_zh_demo": {
  "file_name": "ds_zh_demo.json",
  "formatting": "sharegpt",
  "columns": {
    "messages": "conversations"
  },
  "tags": {
    "role_tag": "from",
    "content_tag": "value",
    "user_tag": "user",
    "assistant_tag": "assistant"
  }
}
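If you would rather not edit the JSON by hand, the same entry can be added with a short script. This is a minimal sketch using only the standard library. `register_dataset` is a hypothetical helper, and the path in the usage comment is the one used in this article.

```python
import json
from pathlib import Path
from typing import Any, Dict

# The entry shown above, as a Python dict.
DS_ZH_DEMO: Dict[str, Any] = {
    "file_name": "ds_zh_demo.json",
    "formatting": "sharegpt",
    "columns": {"messages": "conversations"},
    "tags": {
        "role_tag": "from",
        "content_tag": "value",
        "user_tag": "user",
        "assistant_tag": "assistant",
    },
}


def register_dataset(info_path: Path, name: str, entry: Dict[str, Any]) -> Dict[str, Any]:
    """Add or overwrite one dataset entry, keeping all existing entries."""
    info: Dict[str, Any] = {}
    if info_path.exists():
        info = json.loads(info_path.read_text(encoding="utf-8"))
    info[name] = entry
    # ensure_ascii=False keeps any non-ASCII text in the file readable.
    info_path.write_text(json.dumps(info, ensure_ascii=False, indent=2) + "\n", encoding="utf-8")
    return info


# Usage:
# register_dataset(Path("/data/service/LLaMA-Factory/data/dataset_info.json"), "ds_zh_demo", DS_ZH_DEMO)
```

Parsing and re-serializing the file also catches a common hand-editing mistake: a missing comma between entries makes `json.loads` fail immediately.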

4.2. Preparing the Configuration File

1) Back up the original configuration file

cp /data/service/LLaMA-Factory/examples/train_lora/llama3_lora_sft.yaml /data/service/LLaMA-Factory/examples/train_lora/llama3_lora_sft.yaml.bak

2) Create the new configuration file

mv /data/service/LLaMA-Factory/examples/train_lora/llama3_lora_sft.yaml /data/service/LLaMA-Factory/examples/train_lora/ds_qwen7b_lora_sft.yaml

3) Edit the configuration file

vi /data/service/LLaMA-Factory/examples/train_lora/ds_qwen7b_lora_sft.yaml

Contents:

### model
model_name_or_path: /data/model/DeepSeek-R1-Distill-Qwen-7B
trust_remote_code: true

### method
stage: sft
do_train: true
finetuning_type: lora
lora_rank: 8
lora_target: all

### dataset
dataset: ds_zh_demo
template: deepseek3
cutoff_len: 4096
max_samples: 4019
overwrite_cache: true
preprocessing_num_workers: 16

### output
output_dir: /data/model/sft/DeepSeek-R1-Distill-Qwen-7B
logging_steps: 10
save_steps: 500
plot_loss: true
overwrite_output_dir: true

### train
per_device_train_batch_size: 1
gradient_accumulation_steps: 8
learning_rate: 1.0e-4
num_train_epochs: 1.0
lr_scheduler_type: cosine
warmup_ratio: 0.1
bf16: true
ddp_timeout: 180000000

### eval
val_size: 0.1
per_device_eval_batch_size: 1
eval_strategy: steps
eval_steps: 500

The following parameters deserve attention:

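- model_name_or_path: path to the model

- dataset: dataset name; must match the ds_zh_demo entry declared above in dataset_info.json

- template: chat template

- cutoff_len: maximum length of the input sequence in tokens; longer samples are truncated

- output_dir: where the fine-tuned weights are saved

- gradient_accumulation_steps: number of gradient accumulation steps. The effective batch size is per_device_train_batch_size × gradient_accumulation_steps. When GPU memory is insufficient, lower per_device_train_batch_size (or cutoff_len) and raise this value to keep the effective batch size unchanged

- num_train_epochs: number of training epochs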
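The batch-related settings above can be checked with a little arithmetic. The sketch below derives the train/eval split and the number of optimizer steps from max_samples, val_size, per_device_train_batch_size, gradient_accumulation_steps, and num_train_epochs. The rounding rules are an assumption about how the dataset split and the Hugging Face Trainer version used for this run behave (other versions may round differently), and the function names are illustrative.

```python
import math
from typing import Tuple


def split_sizes(total_samples: int, val_size: float) -> Tuple[int, int]:
    """Split into (train, eval); assumes the eval share is rounded up."""
    n_eval = math.ceil(total_samples * val_size)
    return total_samples - n_eval, n_eval


def optimization_steps(
    n_train: int,
    per_device_batch_size: int,
    grad_accum_steps: int,
    num_epochs: float,
    num_gpus: int = 1,
) -> int:
    """Optimizer steps; assumes leftover micro-batches at the end of an epoch are dropped."""
    batches_per_epoch = math.ceil(n_train / (per_device_batch_size * num_gpus))
    updates_per_epoch = max(batches_per_epoch // grad_accum_steps, 1)
    return math.ceil(num_epochs * updates_per_epoch)


n_train, n_eval = split_sizes(4019, 0.1)           # max_samples, val_size
steps = optimization_steps(n_train, 1, 8, 1.0)     # batch size, accumulation, epochs
effective_batch = 1 * 8 * 1                        # per-device x accumulation x GPUs
epoch_fraction = steps * effective_batch / n_train # share of the data actually seen

print(n_train, n_eval, steps, effective_batch, round(epoch_fraction, 4))
```

With the values in this article, the sketch gives 3,617 training examples, 402 evaluation examples, a total batch size of 8, and 452 optimization steps. These are the figures that appear in the training log in section 4.4.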

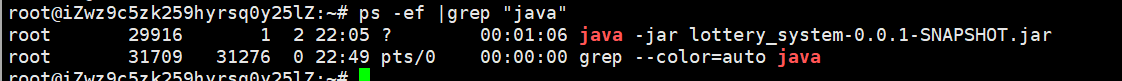

4.3. Launching Fine-Tuning

conda activate llama_factory

cd /data/service/LLaMA-Factory

llamafactory-cli train /data/service/LLaMA-Factory/examples/train_lora/ds_qwen7b_lora_sft.yaml

# Run in the background

nohup llamafactory-cli train /data/service/LLaMA-Factory/examples/train_lora/ds_qwen7b_lora_sft.yaml > output.log 2>&1 &

4.4. Fine-Tuning Results

[INFO|configuration_utils.py:1052] 2025-02-18 16:39:55,400 >> loading configuration file /data/model/DeepSeek-R1-Distill-Qwen-7B/generation_config.json

[INFO|configuration_utils.py:1099] 2025-02-18 16:39:55,400 >> Generate config GenerationConfig {
  "bos_token_id": 151646,
  "do_sample": true,
  "eos_token_id": 151643,
  "temperature": 0.6,
  "top_p": 0.95
}

[INFO|2025-02-18 16:39:55] llamafactory.model.model_utils.checkpointing:157 >> Gradient checkpointing enabled.

[INFO|2025-02-18 16:39:55] llamafactory.model.model_utils.attention:157 >> Using torch SDPA for faster training and inference.

[INFO|2025-02-18 16:39:55] llamafactory.model.adapter:157 >> Upcasting trainable params to float32.

[INFO|2025-02-18 16:39:55] llamafactory.model.adapter:157 >> Fine-tuning method: LoRA

[INFO|2025-02-18 16:39:55] llamafactory.model.model_utils.misc:157 >> Found linear modules: down_proj,o_proj,up_proj,k_proj,v_proj,q_proj,gate_proj

[INFO|2025-02-18 16:39:55] llamafactory.model.loader:157 >> trainable params: 20,185,088 || all params: 7,635,801,600 || trainable%: 0.2643

Detected kernel version 4.18.0, which is below the recommended minimum of 5.5.0; this can cause the process to hang. It is recommended to upgrade the kernel to the minimum version or higher.

[INFO|trainer.py:667] 2025-02-18 16:39:55,807 >> Using auto half precision backend

[INFO|trainer.py:2243] 2025-02-18 16:39:56,634 >> ***** Running training *****

[INFO|trainer.py:2244] 2025-02-18 16:39:56,634 >> Num examples = 3,617

[INFO|trainer.py:2245] 2025-02-18 16:39:56,634 >> Num Epochs = 1

[INFO|trainer.py:2246] 2025-02-18 16:39:56,634 >> Instantaneous batch size per device = 1

[INFO|trainer.py:2249] 2025-02-18 16:39:56,634 >> Total train batch size (w. parallel, distributed & accumulation) = 8

[INFO|trainer.py:2250] 2025-02-18 16:39:56,634 >> Gradient Accumulation steps = 8

[INFO|trainer.py:2251] 2025-02-18 16:39:56,634 >> Total optimization steps = 452

[INFO|trainer.py:2252] 2025-02-18 16:39:56,638 >> Number of trainable parameters = 20,185,088

  0%| | 0/452 [00:00<?, ?it/s]

/usr/local/miniconda3/envs/llama_factory/lib/python3.10/site-packages/torch/utils/checkpoint.py:295: FutureWarning: `torch.cpu.amp.autocast(args...)` is deprecated. Please use `torch.amp.autocast('cpu', args...)` instead.

  with torch.enable_grad(), device_autocast_ctx, torch.cpu.amp.autocast(**ctx.cpu_autocast_kwargs): # type: ignore[attr-defined]

100%|██████████| 452/452 [4:06:28<00:00, 31.87s/it]

[INFO|trainer.py:3705] 2025-02-18 20:46:24,795 >> Saving model checkpoint to /data/model/sft/DeepSeek-R1-Distill-Qwen-7B/checkpoint-452

[INFO|configuration_utils.py:670] 2025-02-18 20:46:24,819 >> loading configuration file /data/model/DeepSeek-R1-Distill-Qwen-7B/config.json

[INFO|configuration_utils.py:739] 2025-02-18 20:46:24,820 >> Model config Qwen2Config {"architectures": ["Qwen2ForCausalLM"],"attention_dropout": 0.0,"bos_token_id": 151643,"eos_token_id": 151643,"hidden_act": "silu","hidden_size": 3584,"initializer_range": 0.02,"intermediate_size": 18944,"max_position_embeddings": 131072,"max_window_layers": 28,"model_type": "qwen2","num_attention_heads": 28,"num_hidden_layers": 28,"num_key_value_heads": 4,"rms_norm_eps": 1e-06,"rope_scaling": null,"rope_theta": 10000,"sliding_window": null,"tie_word_embeddings": false,"torch_dtype": "bfloat16","transformers_version": "4.45.0","use_cache": true,"use_mrope": false,"use_sliding_window": false,"vocab_size": 152064

}

[INFO|tokenization_utils_base.py:2649] 2025-02-18 20:46:25,042 >> tokenizer config file saved in /data/model/sft/DeepSeek-R1-Distill-Qwen-7B/checkpoint-452/tokenizer_config.json

[INFO|tokenization_utils_base.py:2658] 2025-02-18 20:46:25,043 >> Special tokens file saved in /data/model/sft/DeepSeek-R1-Distill-Qwen-7B/checkpoint-452/special_tokens_map.json

[INFO|trainer.py:2505] 2025-02-18 20:46:25,377 >> Training completed. Do not forget to share your model on huggingface.co/models =)

100%|██████████| 452/452 [4:06:28<00:00, 32.72s/it]

[INFO|trainer.py:3705] 2025-02-18 20:46:25,379 >> Saving model checkpoint to /data/model/sft/DeepSeek-R1-Distill-Qwen-7B

[INFO|configuration_utils.py:670] 2025-02-18 20:46:25,401 >> loading configuration file /data/model/DeepSeek-R1-Distill-Qwen-7B/config.json

[INFO|configuration_utils.py:739] 2025-02-18 20:46:25,401 >> Model config Qwen2Config {"architectures": ["Qwen2ForCausalLM"],"attention_dropout": 0.0,"bos_token_id": 151643,"eos_token_id": 151643,"hidden_act": "silu","hidden_size": 3584,"initializer_range": 0.02,"intermediate_size": 18944,"max_position_embeddings": 131072,"max_window_layers": 28,"model_type": "qwen2","num_attention_heads": 28,"num_hidden_layers": 28,"num_key_value_heads": 4,"rms_norm_eps": 1e-06,"rope_scaling": null,"rope_theta": 10000,"sliding_window": null,"tie_word_embeddings": false,"torch_dtype": "bfloat16","transformers_version": "4.45.0","use_cache": true,"use_mrope": false,"use_sliding_window": false,"vocab_size": 152064

}

[INFO|tokenization_utils_base.py:2649] 2025-02-18 20:46:25,556 >> tokenizer config file saved in /data/model/sft/DeepSeek-R1-Distill-Qwen-7B/tokenizer_config.json

[INFO|tokenization_utils_base.py:2658] 2025-02-18 20:46:25,556 >> Special tokens file saved in /data/model/sft/DeepSeek-R1-Distill-Qwen-7B/special_tokens_map.json

{'loss': 3.6592, 'grad_norm': 0.38773563504219055, 'learning_rate': 2.173913043478261e-05, 'epoch': 0.02}

{'loss': 3.667, 'grad_norm': 0.698821485042572, 'learning_rate': 4.347826086956522e-05, 'epoch': 0.04}

{'loss': 3.4784, 'grad_norm': 0.41371676325798035, 'learning_rate': 6.521739130434783e-05, 'epoch': 0.07}

{'loss': 3.2962, 'grad_norm': 0.4966348111629486, 'learning_rate': 8.695652173913044e-05, 'epoch': 0.09}

{'loss': 3.0158, 'grad_norm': 0.333425909280777, 'learning_rate': 9.997605179330019e-05, 'epoch': 0.11}

{'loss': 3.2221, 'grad_norm': 0.3786776065826416, 'learning_rate': 9.970689785771798e-05, 'epoch': 0.13}

{'loss': 2.8439, 'grad_norm': 0.3683229386806488, 'learning_rate': 9.914027086842322e-05, 'epoch': 0.15}

{'loss': 3.0528, 'grad_norm': 0.42745739221572876, 'learning_rate': 9.82795618288397e-05, 'epoch': 0.18}

{'loss': 2.9092, 'grad_norm': 0.45462721586227417, 'learning_rate': 9.712992168898436e-05, 'epoch': 0.2}

{'loss': 3.1055, 'grad_norm': 0.5547119379043579, 'learning_rate': 9.56982305193869e-05, 'epoch': 0.22}

{'loss': 2.9412, 'grad_norm': 0.5830215811729431, 'learning_rate': 9.399305633701373e-05, 'epoch': 0.24}

{'loss': 2.7873, 'grad_norm': 0.5862609148025513, 'learning_rate': 9.202460382960448e-05, 'epoch': 0.27}

{'loss': 2.8255, 'grad_norm': 0.5828853845596313, 'learning_rate': 8.980465328528219e-05, 'epoch': 0.29}

{'loss': 2.6266, 'grad_norm': 0.6733331084251404, 'learning_rate': 8.734649009291585e-05, 'epoch': 0.31}

{'loss': 2.8745, 'grad_norm': 0.6904928684234619, 'learning_rate': 8.46648252351431e-05, 'epoch': 0.33}

{'loss': 2.8139, 'grad_norm': 0.7874809503555298, 'learning_rate': 8.177570724986628e-05, 'epoch': 0.35}

{'loss': 2.7818, 'grad_norm': 0.8345168232917786, 'learning_rate': 7.86964261870916e-05, 'epoch': 0.38}

{'loss': 2.7198, 'grad_norm': 0.8806198239326477, 'learning_rate': 7.544541013588645e-05, 'epoch': 0.4}

{'loss': 2.7231, 'grad_norm': 0.9481658935546875, 'learning_rate': 7.204211494069292e-05, 'epoch': 0.42}

{'loss': 2.7371, 'grad_norm': 0.9718573093414307, 'learning_rate': 6.850690776699573e-05, 'epoch': 0.44}

{'loss': 2.6862, 'grad_norm': 1.2056019306182861, 'learning_rate': 6.486094521315022e-05, 'epoch': 0.46}

{'loss': 2.4661, 'grad_norm': 1.200085163116455, 'learning_rate': 6.112604669781572e-05, 'epoch': 0.49}

{'loss': 2.4841, 'grad_norm': 1.1310691833496094, 'learning_rate': 5.732456388071247e-05, 'epoch': 0.51}

{'loss': 2.3755, 'grad_norm': 1.1279083490371704, 'learning_rate': 5.3479246898159063e-05, 'epoch': 0.53}

{'loss': 2.5552, 'grad_norm': 1.2654848098754883, 'learning_rate': 4.96131082139099e-05, 'epoch': 0.55}

{'loss': 2.6197, 'grad_norm': 1.3887016773223877, 'learning_rate': 4.574928490008264e-05, 'epoch': 0.58}

{'loss': 2.3773, 'grad_norm': 1.3009178638458252, 'learning_rate': 4.1910900172361764e-05, 'epoch': 0.6}

{'loss': 2.3881, 'grad_norm': 1.346793532371521, 'learning_rate': 3.812092500812646e-05, 'epoch': 0.62}

{'loss': 2.4821, 'grad_norm': 1.7273674011230469, 'learning_rate': 3.440204067565511e-05, 'epoch': 0.64}

{'loss': 2.3563, 'grad_norm': 1.529177188873291, 'learning_rate': 3.077650299710653e-05, 'epoch': 0.66}

{'loss': 2.1308, 'grad_norm': 1.5957469940185547, 'learning_rate': 2.7266009157601224e-05, 'epoch': 0.69}

{'loss': 2.1709, 'grad_norm': 1.4444897174835205, 'learning_rate': 2.3891567857490372e-05, 'epoch': 0.71}

{'loss': 2.275, 'grad_norm': 1.5686719417572021, 'learning_rate': 2.067337358489085e-05, 'epoch': 0.73}

{'loss': 2.2075, 'grad_norm': 1.5931408405303955, 'learning_rate': 1.7630685760908622e-05, 'epoch': 0.75}

{'loss': 2.1727, 'grad_norm': 1.7681787014007568, 'learning_rate': 1.4781713480810184e-05, 'epoch': 0.77}

{'loss': 2.3562, 'grad_norm': 1.742925763130188, 'learning_rate': 1.2143506540914128e-05, 'epoch': 0.8}

{'loss': 2.1187, 'grad_norm': 1.6716198921203613, 'learning_rate': 9.731853403356705e-06, 'epoch': 0.82}

{'loss': 2.2564, 'grad_norm': 1.915489912033081, 'learning_rate': 7.561186709365653e-06, 'epoch': 0.84}

{'loss': 2.261, 'grad_norm': 2.132519245147705, 'learning_rate': 5.644496906502233e-06, 'epoch': 0.86}

{'loss': 2.1632, 'grad_norm': 1.591231107711792, 'learning_rate': 3.9932545067728366e-06, 'epoch': 0.88}

{'loss': 2.1266, 'grad_norm': 1.584917664527893, 'learning_rate': 2.6173414408598827e-06, 'epoch': 0.91}

{'loss': 2.2944, 'grad_norm': 1.5982666015625, 'learning_rate': 1.524991919285429e-06, 'epoch': 0.93}

{'loss': 2.3799, 'grad_norm': 2.1475727558135986, 'learning_rate': 7.227431544266194e-07, 'epoch': 0.95}

{'loss': 2.1196, 'grad_norm': 1.6714484691619873, 'learning_rate': 2.153962382888841e-07, 'epoch': 0.97}

{'loss': 2.1427, 'grad_norm': 1.7334465980529785, 'learning_rate': 5.987410165758656e-09, 'epoch': 1.0}

{'train_runtime': 14788.7396, 'train_samples_per_second': 0.245, 'train_steps_per_second': 0.031, 'train_loss': 2.6206856934370193, 'epoch': 1.0}

***** train metrics *****
  epoch                    =     0.9997
  total_flos               = 100517734GF
  train_loss               =     2.6207
  train_runtime            = 4:06:28.73
  train_samples_per_second =      0.245
  train_steps_per_second   =      0.031

Figure saved at: /data/model/sft/DeepSeek-R1-Distill-Qwen-7B/training_loss.png

[WARNING|2025-02-18 20:46:25] llamafactory.extras.ploting:162 >> No metric eval_loss to plot.

[WARNING|2025-02-18 20:46:25] llamafactory.extras.ploting:162 >> No metric eval_accuracy to plot.

[INFO|trainer.py:4021] 2025-02-18 20:46:25,781 >>

***** Running Evaluation *****

[INFO|trainer.py:4023] 2025-02-18 20:46:25,781 >> Num examples = 402

[INFO|trainer.py:4026] 2025-02-18 20:46:25,781 >> Batch size = 1

100%|██████████| 402/402 [09:03<00:00, 1.35s/it]

[INFO|modelcard.py:449] 2025-02-18 20:55:30,409 >> Dropping the following result as it does not have all the necessary fields:

{'task': {'name': 'Causal Language Modeling', 'type': 'text-generation'}}

***** eval metrics *****
  epoch                   =     0.9997
  eval_loss               =     2.2648
  eval_runtime            = 0:09:04.62
  eval_samples_per_second =      0.738
  eval_steps_per_second   =      0.738

The generated weight files are saved in the output_dir, /data/model/sft/DeepSeek-R1-Distill-Qwen-7B.
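As a cross-check on the log above, the learning_rate values it reports can be reproduced from the configuration: learning_rate: 1.0e-4, warmup_ratio: 0.1, lr_scheduler_type: cosine, and 452 total optimization steps. The sketch below is a standalone reimplementation of a linear-warmup plus cosine-decay schedule. It assumes the warmup step count is rounded up, and `lr_at` is an illustrative name, not a library function.

```python
import math


def lr_at(
    step: int,
    total_steps: int = 452,      # "Total optimization steps" in the log
    base_lr: float = 1.0e-4,     # learning_rate in the config
    warmup_ratio: float = 0.1,   # warmup_ratio in the config
) -> float:
    """Learning rate after `step` optimizer steps."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)  # 46 here (assumed rounding)
    if step < warmup_steps:
        # Linear warmup from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Cosine decay from base_lr down to 0 over the remaining steps.
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# logging_steps is 10, so the log reports steps 10, 20, ..., 450.
for step in (10, 50, 450):
    print(step, lr_at(step))
```

The first logged value, 2.1739e-05 at step 10, equals 1.0e-4 × 10/46, which is consistent with 46 warmup steps. The peak is reached between the fourth and fifth log lines, after which the rate decays toward zero.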

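5. Additional Notes

5.1. dataset_info.json

This file lists all available datasets. To use a custom dataset, be sure to add a description of it to dataset_info.json, and select it by setting dataset: <dataset name> in the configuration.

"dataset name": {
  "hf_hub_url": "Hugging Face dataset repository URL (if specified, script_url and file_name are ignored)",
  "ms_hub_url": "ModelScope dataset repository URL (if specified, script_url and file_name are ignored)",
  "script_url": "name of the local folder containing the data loading script (if specified, file_name is ignored)",
  "file_name": "name of the dataset folder or file in this directory (required if none of the above is specified)",
  "formatting": "dataset format (optional, default: alpaca; either alpaca or sharegpt)",
  "ranking": "whether this is a preference dataset (optional, default: False)",
  "subset": "name of the dataset subset (optional, default: None)",
  "split": "dataset split to use (optional, default: train)",
  "folder": "folder name in the Hugging Face repository (optional, default: None)",
  "num_samples": "number of samples to use from this dataset (optional, default: None)",
  "columns (optional)": {
    "prompt": "column name for the prompt (default: instruction)",
    "query": "column name for the query (default: input)",
    "response": "column name for the response (default: output)",
    "history": "column name for the conversation history (default: None)",
    "messages": "column name for the message list (default: conversations)",
    "system": "column name for the system prompt (default: None)",
    "tools": "column name for the tool description (default: None)",
    "images": "column name for image inputs (default: None)",
    "videos": "column name for video inputs (default: None)",
    "audios": "column name for audio inputs (default: None)",
    "chosen": "column name for the preferred response (default: None)",
    "rejected": "column name for the rejected response (default: None)",
    "kto_tag": "column name for the KTO label (default: None)"
  },
  "tags (optional, used for the sharegpt format)": {
    "role_tag": "key in a message that identifies the sender (default: from)",
    "content_tag": "key in a message that holds the text content (default: value)",
    "user_tag": "role_tag value for the user (default: human)",
    "assistant_tag": "role_tag value for the assistant (default: gpt)",
    "observation_tag": "role_tag value for tool results (default: observation)",
    "function_tag": "role_tag value for tool calls (default: function_call)",
    "system_tag": "role_tag value for the system prompt (default: system; overrides the system column)"
  }
}

5.2. Custom Chat Templates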

Add your own chat template in template.py.

https://github.com/hiyouga/LLaMA-Factory/blob/main/src/llamafactory/data/template.py
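To illustrate what a template's slots do, here is a standalone sketch that mimics the `custom` example from the `register_template` docstring in that file. It does not import LLaMA-Factory. `fill_slot` and `render` are hypothetical helpers that only imitate how a `{{content}}` placeholder is substituted and how turns are concatenated. The real code works on token ids and supports more roles.

```python
from typing import Dict, List

# Slot strings copied from the `custom` example in the register_template docstring.
PREFIX = "<s>"
USER_SLOT = "<user>{{content}}\n<model>"
ASSISTANT_SLOT = "{{content}}</s>\n"


def fill_slot(slot: str, content: str) -> str:
    """Substitute the message text for the {{content}} placeholder."""
    return slot.replace("{{content}}", content)


def render(messages: List[Dict[str, str]]) -> str:
    """Concatenate prefix + formatted turns into one prompt string."""
    parts = [PREFIX]
    for message in messages:
        if message["role"] == "user":
            parts.append(fill_slot(USER_SLOT, message["content"]))
        elif message["role"] == "assistant":
            parts.append(fill_slot(ASSISTANT_SLOT, message["content"]))
        else:
            raise ValueError(f"Unexpected role: {message['role']}")
    return "".join(parts)


text = render(
    [
        {"role": "user", "content": "user prompt here"},
        {"role": "assistant", "content": "model response here"},
    ]
)
print(text)
```

The printed text has the same shape as the chat layout shown in the docstring: the prefix, then the user turn ending in `<model>`, then the response closed by `</s>`.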

# Copyright 2025 the LlamaFactory team.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

from dataclasses import dataclass
from typing import TYPE_CHECKING, Dict, List, Optional, Sequence, Tuple, Type, Union

from typing_extensions import override

from ..extras import logging
from ..extras.misc import check_version
from .data_utils import Role
from .formatter import EmptyFormatter, FunctionFormatter, StringFormatter, ToolFormatter
from .mm_plugin import get_mm_plugin


if TYPE_CHECKING:
    from transformers import PreTrainedTokenizer

    from ..hparams import DataArguments
    from .formatter import SLOTS, Formatter
    from .mm_plugin import BasePlugin
    from .tool_utils import FunctionCall


logger = logging.get_logger(__name__)


@dataclass

class Template:
    format_user: "Formatter"
    format_assistant: "Formatter"
    format_system: "Formatter"
    format_function: "Formatter"
    format_observation: "Formatter"
    format_tools: "Formatter"
    format_prefix: "Formatter"
    default_system: str
    stop_words: List[str]
    thought_words: Tuple[str, str]
    efficient_eos: bool
    replace_eos: bool
    replace_jinja_template: bool
    mm_plugin: "BasePlugin"

    def encode_oneturn(
        self,
        tokenizer: "PreTrainedTokenizer",
        messages: Sequence[Dict[str, str]],
        system: Optional[str] = None,
        tools: Optional[str] = None,
    ) -> Tuple[List[int], List[int]]:
        r"""
        Returns a single pair of token ids representing prompt and response respectively.
        """
        encoded_messages = self._encode(tokenizer, messages, system, tools)
        prompt_ids = []
        for encoded_ids in encoded_messages[:-1]:
            prompt_ids += encoded_ids

        response_ids = encoded_messages[-1]
        return prompt_ids, response_ids

    def encode_multiturn(
        self,
        tokenizer: "PreTrainedTokenizer",
        messages: Sequence[Dict[str, str]],
        system: Optional[str] = None,
        tools: Optional[str] = None,
    ) -> List[Tuple[List[int], List[int]]]:
        r"""
        Returns multiple pairs of token ids representing prompts and responses respectively.
        """
        encoded_messages = self._encode(tokenizer, messages, system, tools)
        return [(encoded_messages[i], encoded_messages[i + 1]) for i in range(0, len(encoded_messages), 2)]

    def extract_tool(self, content: str) -> Union[str, List["FunctionCall"]]:
        r"""
        Extracts tool message.
        """
        return self.format_tools.extract(content)

    def get_stop_token_ids(self, tokenizer: "PreTrainedTokenizer") -> List[int]:
        r"""
        Returns stop token ids.
        """
        stop_token_ids = {tokenizer.eos_token_id}
        for token in self.stop_words:
            stop_token_ids.add(tokenizer.convert_tokens_to_ids(token))

        return list(stop_token_ids)

    def _convert_elements_to_ids(self, tokenizer: "PreTrainedTokenizer", elements: "SLOTS") -> List[int]:
        r"""
        Converts elements to token ids.
        """
        token_ids = []
        for elem in elements:
            if isinstance(elem, str):
                if len(elem) != 0:
                    token_ids += tokenizer.encode(elem, add_special_tokens=False)
            elif isinstance(elem, dict):
                token_ids += [tokenizer.convert_tokens_to_ids(elem.get("token"))]
            elif isinstance(elem, set):
                if "bos_token" in elem and tokenizer.bos_token_id is not None:
                    token_ids += [tokenizer.bos_token_id]
                elif "eos_token" in elem and tokenizer.eos_token_id is not None:
                    token_ids += [tokenizer.eos_token_id]
            else:
                raise ValueError(f"Input must be string, set[str] or dict[str, str], got {type(elem)}")

        return token_ids

    def _encode(
        self,
        tokenizer: "PreTrainedTokenizer",
        messages: Sequence[Dict[str, str]],
        system: Optional[str],
        tools: Optional[str],
    ) -> List[List[int]]:
        r"""
        Encodes formatted inputs to pairs of token ids.
        Turn 0: prefix + system + query        resp
        Turn t: query                          resp
        """
        system = system or self.default_system
        encoded_messages = []
        for i, message in enumerate(messages):
            elements = []

            if i == 0:
                elements += self.format_prefix.apply()
                if system or tools:
                    tool_text = self.format_tools.apply(content=tools)[0] if tools else ""
                    elements += self.format_system.apply(content=(system + tool_text))

            if message["role"] == Role.USER.value:
                elements += self.format_user.apply(content=message["content"], idx=str(i // 2))
            elif message["role"] == Role.ASSISTANT.value:
                elements += self.format_assistant.apply(content=message["content"])
            elif message["role"] == Role.OBSERVATION.value:
                elements += self.format_observation.apply(content=message["content"])
            elif message["role"] == Role.FUNCTION.value:
                elements += self.format_function.apply(content=message["content"])
            else:
                raise NotImplementedError("Unexpected role: {}".format(message["role"]))

            encoded_messages.append(self._convert_elements_to_ids(tokenizer, elements))

        return encoded_messages

    @staticmethod
    def _add_or_replace_eos_token(tokenizer: "PreTrainedTokenizer", eos_token: str) -> None:
        r"""
        Adds or replaces eos token to the tokenizer.
        """
        is_added = tokenizer.eos_token_id is None
        num_added_tokens = tokenizer.add_special_tokens({"eos_token": eos_token})

        if is_added:
            logger.info_rank0(f"Add eos token: {tokenizer.eos_token}.")
        else:
            logger.info_rank0(f"Replace eos token: {tokenizer.eos_token}.")

        if num_added_tokens > 0:
            logger.warning_rank0("New tokens have been added, make sure `resize_vocab` is True.")

    def fix_special_tokens(self, tokenizer: "PreTrainedTokenizer") -> None:
        r"""
        Adds eos token and pad token to the tokenizer.
        """
        stop_words = self.stop_words
        if self.replace_eos:
            if not stop_words:
                raise ValueError("Stop words are required to replace the EOS token.")

            self._add_or_replace_eos_token(tokenizer, eos_token=stop_words[0])
            stop_words = stop_words[1:]

        if tokenizer.eos_token_id is None:
            self._add_or_replace_eos_token(tokenizer, eos_token="<|endoftext|>")

        if tokenizer.pad_token_id is None:
            tokenizer.pad_token = tokenizer.eos_token
            logger.info_rank0(f"Add pad token: {tokenizer.pad_token}")

        if stop_words:
            num_added_tokens = tokenizer.add_special_tokens(
                dict(additional_special_tokens=stop_words), replace_additional_special_tokens=False
            )
            logger.info_rank0("Add {} to stop words.".format(",".join(stop_words)))
            if num_added_tokens > 0:
                logger.warning_rank0("New tokens have been added, make sure `resize_vocab` is True.")

    @staticmethod
    def _jinja_escape(content: str) -> str:
        r"""
        Escape single quotes in content.
        """
        return content.replace("'", r"\'")

    @staticmethod
    def _convert_slots_to_jinja(slots: "SLOTS", tokenizer: "PreTrainedTokenizer", placeholder: str = "content") -> str:
        r"""
        Converts slots to jinja template.
        """
        slot_items = []
        for slot in slots:
            if isinstance(slot, str):
                slot_pieces = slot.split("{{content}}")
                if slot_pieces[0]:
                    slot_items.append("'" + Template._jinja_escape(slot_pieces[0]) + "'")
                if len(slot_pieces) > 1:
                    slot_items.append(placeholder)
                    if slot_pieces[1]:
                        slot_items.append("'" + Template._jinja_escape(slot_pieces[1]) + "'")
            elif isinstance(slot, set):  # do not use {{ eos_token }} since it may be replaced
                if "bos_token" in slot and tokenizer.bos_token_id is not None:
                    slot_items.append("'" + tokenizer.bos_token + "'")
                elif "eos_token" in slot and tokenizer.eos_token_id is not None:
                    slot_items.append("'" + tokenizer.eos_token + "'")
            elif isinstance(slot, dict):
                raise ValueError("Dict is not supported.")

        return " + ".join(slot_items)

    def _get_jinja_template(self, tokenizer: "PreTrainedTokenizer") -> str:
        r"""
        Returns the jinja template.
        """
        prefix = self._convert_slots_to_jinja(self.format_prefix.apply(), tokenizer)
        system = self._convert_slots_to_jinja(self.format_system.apply(), tokenizer, placeholder="system_message")
        user = self._convert_slots_to_jinja(self.format_user.apply(), tokenizer)
        assistant = self._convert_slots_to_jinja(self.format_assistant.apply(), tokenizer)
        jinja_template = ""
        if prefix:
            jinja_template += "{{ " + prefix + " }}"

        if self.default_system:
            jinja_template += "{% set system_message = '" + self._jinja_escape(self.default_system) + "' %}"

        jinja_template += (
            "{% if messages[0]['role'] == 'system' %}{% set loop_messages = messages[1:] %}"
            "{% set system_message = messages[0]['content'] %}{% else %}{% set loop_messages = messages %}{% endif %}"
            "{% if system_message is defined %}{{ " + system + " }}{% endif %}"
            "{% for message in loop_messages %}"
            "{% set content = message['content'] %}"
            "{% if message['role'] == 'user' %}"
            "{{ " + user + " }}"
            "{% elif message['role'] == 'assistant' %}"
            "{{ " + assistant + " }}"
            "{% endif %}"
            "{% endfor %}"
        )
        return jinja_template

    def fix_jinja_template(self, tokenizer: "PreTrainedTokenizer") -> None:
        r"""
        Replaces the jinja template in the tokenizer.
        """
        if tokenizer.chat_template is None or self.replace_jinja_template:
            try:
                tokenizer.chat_template = self._get_jinja_template(tokenizer)
            except ValueError as e:
                logger.info_rank0(f"Cannot add this chat template to tokenizer: {e}.")

    @staticmethod
    def _convert_slots_to_ollama(
        slots: "SLOTS", tokenizer: "PreTrainedTokenizer", placeholder: str = "content"
    ) -> str:
        r"""
        Converts slots to ollama template.
        """
        slot_items = []
        for slot in slots:
            if isinstance(slot, str):
                slot_pieces = slot.split("{{content}}")
                if slot_pieces[0]:
                    slot_items.append(slot_pieces[0])
                if len(slot_pieces) > 1:
                    slot_items.append("{{ " + placeholder + " }}")
                    if slot_pieces[1]:
                        slot_items.append(slot_pieces[1])
            elif isinstance(slot, set):  # do not use {{ eos_token }} since it may be replaced
                if "bos_token" in slot and tokenizer.bos_token_id is not None:
                    slot_items.append(tokenizer.bos_token)
                elif "eos_token" in slot and tokenizer.eos_token_id is not None:
                    slot_items.append(tokenizer.eos_token)
            elif isinstance(slot, dict):
                raise ValueError("Dict is not supported.")

        return "".join(slot_items)

    def _get_ollama_template(self, tokenizer: "PreTrainedTokenizer") -> str:
        r"""
        Returns the ollama template.
        """
        prefix = self._convert_slots_to_ollama(self.format_prefix.apply(), tokenizer)
        system = self._convert_slots_to_ollama(self.format_system.apply(), tokenizer, placeholder=".System")
        user = self._convert_slots_to_ollama(self.format_user.apply(), tokenizer, placeholder=".Content")
        assistant = self._convert_slots_to_ollama(self.format_assistant.apply(), tokenizer, placeholder=".Content")
        return (
            f"{prefix}{{{{ if .System }}}}{system}{{{{ end }}}}"
            f"""{{{{ range .Messages }}}}{{{{ if eq .Role "user" }}}}{user}"""
            f"""{{{{ else if eq .Role "assistant" }}}}{assistant}{{{{ end }}}}{{{{ end }}}}"""
        )

    def get_ollama_modelfile(self, tokenizer: "PreTrainedTokenizer") -> str:
        r"""
        Returns the ollama modelfile.

        TODO: support function calling.
        """
        modelfile = "# ollama modelfile auto-generated by llamafactory\n\n"
        modelfile += f'FROM .\n\nTEMPLATE """{self._get_ollama_template(tokenizer)}"""\n\n'

        if self.default_system:
            modelfile += f'SYSTEM """{self.default_system}"""\n\n'

        for stop_token_id in self.get_stop_token_ids(tokenizer):
            modelfile += f'PARAMETER stop "{tokenizer.convert_ids_to_tokens(stop_token_id)}"\n'

        modelfile += "PARAMETER num_ctx 4096\n"
        return modelfile


@dataclass

class Llama2Template(Template):
    @override
    def _encode(
        self,
        tokenizer: "PreTrainedTokenizer",
        messages: Sequence[Dict[str, str]],
        system: str,
        tools: str,
    ) -> List[List[int]]:
        system = system or self.default_system
        encoded_messages = []
        for i, message in enumerate(messages):
            elements = []

            system_text = ""
            if i == 0:
                elements += self.format_prefix.apply()
                if system or tools:
                    tool_text = self.format_tools.apply(content=tools)[0] if tools else ""
                    system_text = self.format_system.apply(content=(system + tool_text))[0]

            if message["role"] == Role.USER.value:
                elements += self.format_user.apply(content=system_text + message["content"])
            elif message["role"] == Role.ASSISTANT.value:
                elements += self.format_assistant.apply(content=message["content"])
            elif message["role"] == Role.OBSERVATION.value:
                elements += self.format_observation.apply(content=message["content"])
            elif message["role"] == Role.FUNCTION.value:
                elements += self.format_function.apply(content=message["content"])
            else:
                raise NotImplementedError("Unexpected role: {}".format(message["role"]))

            encoded_messages.append(self._convert_elements_to_ids(tokenizer, elements))

        return encoded_messages

    def _get_jinja_template(self, tokenizer: "PreTrainedTokenizer") -> str:
        prefix = self._convert_slots_to_jinja(self.format_prefix.apply(), tokenizer)
        system_message = self._convert_slots_to_jinja(
            self.format_system.apply(), tokenizer, placeholder="system_message"
        )
        user_message = self._convert_slots_to_jinja(self.format_user.apply(), tokenizer)
        assistant_message = self._convert_slots_to_jinja(self.format_assistant.apply(), tokenizer)
        jinja_template = ""
        if prefix:
            jinja_template += "{{ " + prefix + " }}"

        if self.default_system:
            jinja_template += "{% set system_message = '" + self._jinja_escape(self.default_system) + "' %}"

        jinja_template += (
            "{% if messages[0]['role'] == 'system' %}{% set loop_messages = messages[1:] %}"
            "{% set system_message = messages[0]['content'] %}{% else %}{% set loop_messages = messages %}{% endif %}"
            "{% for message in loop_messages %}"
            "{% if loop.index0 == 0 and system_message is defined %}"
            "{% set content = " + system_message + " + message['content'] %}"
            "{% else %}{% set content = message['content'] %}{% endif %}"
            "{% if message['role'] == 'user' %}"
            "{{ " + user_message + " }}"
            "{% elif message['role'] == 'assistant' %}"
            "{{ " + assistant_message + " }}"
            "{% endif %}"
            "{% endfor %}"
        )
        return jinja_template


TEMPLATES: Dict[str, "Template"] = {}


def register_template(
    name: str,
    format_user: Optional["Formatter"] = None,
    format_assistant: Optional["Formatter"] = None,
    format_system: Optional["Formatter"] = None,
    format_function: Optional["Formatter"] = None,
    format_observation: Optional["Formatter"] = None,
    format_tools: Optional["Formatter"] = None,
    format_prefix: Optional["Formatter"] = None,
    default_system: str = "",
    stop_words: Optional[Sequence[str]] = None,
    thought_words: Optional[Tuple[str, str]] = None,
    efficient_eos: bool = False,
    replace_eos: bool = False,
    replace_jinja_template: bool = False,
    mm_plugin: "BasePlugin" = get_mm_plugin(name="base"),
    template_class: Type["Template"] = Template,
) -> None:
    r"""
    Registers a chat template.

    To add the following chat template:
    ```
    <s><user>user prompt here
    <model>model response here</s>
    <user>user prompt here
    <model>model response here</s>
    ```

    The corresponding code should be:
    ```
    register_template(
        name="custom",
        format_user=StringFormatter(slots=["<user>{{content}}\n<model>"]),
        format_assistant=StringFormatter(slots=["{{content}}</s>\n"]),
        format_prefix=EmptyFormatter("<s>"),
    )
    ```
    """
    if name in TEMPLATES:
        raise ValueError(f"Template {name} already exists.")

    default_slots = ["{{content}}"] if efficient_eos else ["{{content}}", {"eos_token"}]
    default_user_formatter = StringFormatter(slots=["{{content}}"])
    default_assistant_formatter = StringFormatter(slots=default_slots)
    default_function_formatter = FunctionFormatter(slots=default_slots, tool_format="default")
    default_tool_formatter = ToolFormatter(tool_format="default")
    default_prefix_formatter = EmptyFormatter()
    TEMPLATES[name] = template_class(
        format_user=format_user or default_user_formatter,
        format_assistant=format_assistant or default_assistant_formatter,
        format_system=format_system or default_user_formatter,
        format_function=format_function or default_function_formatter,
        format_observation=format_observation or format_user or default_user_formatter,
        format_tools=format_tools or default_tool_formatter,
        format_prefix=format_prefix or default_prefix_formatter,
        default_system=default_system,
        stop_words=stop_words or [],
        thought_words=thought_words or ("<think>", "</think>"),
        efficient_eos=efficient_eos,
        replace_eos=replace_eos,
        replace_jinja_template=replace_jinja_template,
        mm_plugin=mm_plugin,
    )


def parse_template(tokenizer: "PreTrainedTokenizer") -> "Template":
    r"""
    Extracts a chat template from the tokenizer.
    """

    def find_diff(short_str: str, long_str: str) -> str:
        i, j = 0, 0
        diff = ""
        while i < len(short_str) and j < len(long_str):
            if short_str[i] == long_str[j]:
                i += 1
                j += 1
            else:
                diff += long_str[j]
                j += 1

        return diff

    prefix = tokenizer.decode(tokenizer.encode(""))

    messages = [{"role": "system", "content": 
"{{content}}"}]system_slot = tokenizer.apply_chat_template(messages, add_generation_prompt=False, tokenize=False)[len(prefix) :]messages = [{"role": "system", "content": ""}, {"role": "user", "content": "{{content}}"}]user_slot_empty_system = tokenizer.apply_chat_template(messages, add_generation_prompt=True, tokenize=False)user_slot_empty_system = user_slot_empty_system[len(prefix) :]messages = [{"role": "user", "content": "{{content}}"}]user_slot = tokenizer.apply_chat_template(messages, add_generation_prompt=True, tokenize=False)user_slot = user_slot[len(prefix) :]messages = [{"role": "user", "content": "{{content}}"}, {"role": "assistant", "content": "{{content}}"}]assistant_slot = tokenizer.apply_chat_template(messages, add_generation_prompt=False, tokenize=False)assistant_slot = assistant_slot[len(prefix) + len(user_slot) :]if len(user_slot) > len(user_slot_empty_system):default_system = find_diff(user_slot_empty_system, user_slot)sole_system = system_slot.replace("{{content}}", default_system, 1)user_slot = user_slot[len(sole_system) :]else: # if defaut_system is empty, user_slot_empty_system will be longer than user_slotdefault_system = ""return Template(format_user=StringFormatter(slots=[user_slot]),format_assistant=StringFormatter(slots=[assistant_slot]),format_system=StringFormatter(slots=[system_slot]),format_function=FunctionFormatter(slots=[assistant_slot], tool_format="default"),format_observation=StringFormatter(slots=[user_slot]),format_tools=ToolFormatter(tool_format="default"),format_prefix=EmptyFormatter(slots=[prefix]) if prefix else EmptyFormatter(),default_system=default_system,stop_words=[],thought_words=("<think>", "</think>"),efficient_eos=False,replace_eos=False,replace_jinja_template=False,mm_plugin=get_mm_plugin(name="base"),)def get_template_and_fix_tokenizer(tokenizer: "PreTrainedTokenizer", data_args: "DataArguments") -> "Template":r"""Gets chat template and fixes the tokenizer."""if data_args.template is None:if 
isinstance(tokenizer.chat_template, str):logger.warning_rank0("`template` was not specified, try parsing the chat template from the tokenizer.")template = parse_template(tokenizer)else:logger.warning_rank0("`template` was not specified, use `empty` template.")template = TEMPLATES["empty"] # placeholderelse:if data_args.template not in TEMPLATES:raise ValueError(f"Template {data_args.template} does not exist.")template = TEMPLATES[data_args.template]if template.mm_plugin.__class__.__name__ != "BasePlugin":check_version("transformers>=4.45.0")if data_args.train_on_prompt and template.efficient_eos:raise ValueError("Current template does not support `train_on_prompt`.")if data_args.tool_format is not None:logger.info_rank0(f"Using tool format: {data_args.tool_format}.")default_slots = ["{{content}}"] if template.efficient_eos else ["{{content}}", {"eos_token"}]template.format_function = FunctionFormatter(slots=default_slots, tool_format=data_args.tool_format)template.format_tools = ToolFormatter(tool_format=data_args.tool_format)template.fix_special_tokens(tokenizer)template.fix_jinja_template(tokenizer)return templateregister_template(name="alpaca",format_user=StringFormatter(slots=["### Instruction:\n{{content}}\n\n### Response:\n"]),format_assistant=StringFormatter(slots=["{{content}}", {"eos_token"}, "\n\n"]),default_system=("Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n"),replace_jinja_template=True,

)


register_template(
    name="aquila",
    format_user=StringFormatter(slots=["Human: {{content}}###Assistant:"]),
    format_assistant=StringFormatter(slots=["{{content}}###"]),
    format_system=StringFormatter(slots=["System: {{content}}###"]),
    default_system=(
        "A chat between a curious human and an artificial intelligence assistant. "
        "The assistant gives helpful, detailed, and polite answers to the human's questions."
    ),
    stop_words=["</s>"],
)


register_template(
    name="atom",
    format_user=StringFormatter(
        slots=[{"bos_token"}, "Human: {{content}}\n", {"eos_token"}, {"bos_token"}, "Assistant:"]
    ),
    format_assistant=StringFormatter(slots=["{{content}}\n", {"eos_token"}]),
)


register_template(
    name="baichuan",
    format_user=StringFormatter(slots=[{"token": "<reserved_102>"}, "{{content}}", {"token": "<reserved_103>"}]),
    efficient_eos=True,
)


register_template(
    name="baichuan2",
    format_user=StringFormatter(slots=["<reserved_106>{{content}}<reserved_107>"]),
    efficient_eos=True,
)


register_template(
    name="belle",
    format_user=StringFormatter(slots=["Human: {{content}}\n\nBelle: "]),
    format_assistant=StringFormatter(slots=["{{content}}", {"eos_token"}, "\n\n"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="bluelm",
    format_user=StringFormatter(slots=[{"token": "[|Human|]:"}, "{{content}}", {"token": "[|AI|]:"}]),
)


register_template(
    name="breeze",
    format_user=StringFormatter(slots=["[INST] {{content}} [/INST] "]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    efficient_eos=True,
)


register_template(
    name="chatglm2",
    format_user=StringFormatter(slots=["[Round {{idx}}]\n\n问:{{content}}\n\n答:"]),
    format_prefix=EmptyFormatter(slots=[{"token": "[gMASK]"}, {"token": "sop"}]),
    efficient_eos=True,
)


register_template(
    name="chatglm3",
    format_user=StringFormatter(slots=[{"token": "<|user|>"}, "\n", "{{content}}", {"token": "<|assistant|>"}]),
    format_assistant=StringFormatter(slots=["\n", "{{content}}"]),
    format_system=StringFormatter(slots=[{"token": "<|system|>"}, "\n", "{{content}}"]),
    format_function=FunctionFormatter(slots=["{{content}}"], tool_format="glm4"),
    format_observation=StringFormatter(
        slots=[{"token": "<|observation|>"}, "\n", "{{content}}", {"token": "<|assistant|>"}]
    ),
    format_tools=ToolFormatter(tool_format="glm4"),
    format_prefix=EmptyFormatter(slots=[{"token": "[gMASK]"}, {"token": "sop"}]),
    stop_words=["<|user|>", "<|observation|>"],
    efficient_eos=True,
)


register_template(
    name="chatml",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_observation=StringFormatter(slots=["<|im_start|>tool\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    stop_words=["<|im_end|>", "<|im_start|>"],
    replace_eos=True,
    replace_jinja_template=True,
)


# copied from chatml template
register_template(
    name="chatml_de",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_observation=StringFormatter(slots=["<|im_start|>tool\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    default_system="Du bist ein freundlicher und hilfsbereiter KI-Assistent.",
    stop_words=["<|im_end|>", "<|im_start|>"],
    replace_eos=True,
    replace_jinja_template=True,
)


register_template(
    name="codegeex2",
    format_prefix=EmptyFormatter(slots=[{"token": "[gMASK]"}, {"token": "sop"}]),
)


register_template(
    name="codegeex4",
    format_user=StringFormatter(slots=["<|user|>\n{{content}}<|assistant|>\n"]),
    format_system=StringFormatter(slots=["<|system|>\n{{content}}"]),
    format_function=FunctionFormatter(slots=["{{content}}"], tool_format="glm4"),
    format_observation=StringFormatter(slots=["<|observation|>\n{{content}}<|assistant|>\n"]),
    format_tools=ToolFormatter(tool_format="glm4"),
    format_prefix=EmptyFormatter(slots=["[gMASK]<sop>"]),
    default_system=(
        "你是一位智能编程助手,你叫CodeGeeX。你会为用户回答关于编程、代码、计算机方面的任何问题,"
        "并提供格式规范、可以执行、准确安全的代码,并在必要时提供详细的解释。"
    ),
    stop_words=["<|user|>", "<|observation|>"],
    efficient_eos=True,
)


register_template(
    name="cohere",
    format_user=StringFormatter(
        slots=[
            (
                "<|START_OF_TURN_TOKEN|><|USER_TOKEN|>{{content}}<|END_OF_TURN_TOKEN|>"
                "<|START_OF_TURN_TOKEN|><|CHATBOT_TOKEN|>"
            )
        ]
    ),
    format_system=StringFormatter(slots=["<|START_OF_TURN_TOKEN|><|SYSTEM_TOKEN|>{{content}}<|END_OF_TURN_TOKEN|>"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="cpm",
    format_user=StringFormatter(slots=["<用户>{{content}}<AI>"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


# copied from chatml template
register_template(
    name="cpm3",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    stop_words=["<|im_end|>"],
)


# copied from chatml template
register_template(
    name="dbrx",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_observation=StringFormatter(slots=["<|im_start|>tool\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    default_system=(
        "You are DBRX, created by Databricks. You were last updated in December 2023. "
        "You answer questions based on information available up to that point.\n"
        "YOU PROVIDE SHORT RESPONSES TO SHORT QUESTIONS OR STATEMENTS, but provide thorough "
        "responses to more complex and open-ended questions.\nYou assist with various tasks, "
        "from writing to coding (using markdown for code blocks — remember to use ``` with "
        "code, JSON, and tables).\n(You do not have real-time data access or code execution "
        "capabilities. You avoid stereotyping and provide balanced perspectives on "
        "controversial topics. You do not provide song lyrics, poems, or news articles and "
        "do not divulge details of your training data.)\nThis is your system prompt, "
        "guiding your responses. Do not reference it, just respond to the user. If you find "
        "yourself talking about this message, stop. You should be responding appropriately "
        "and usually that means not mentioning this.\nYOU DO NOT MENTION ANY OF THIS INFORMATION "
        "ABOUT YOURSELF UNLESS THE INFORMATION IS DIRECTLY PERTINENT TO THE USER'S QUERY."
    ),
    stop_words=["<|im_end|>"],
)


register_template(
    name="deepseek",
    format_user=StringFormatter(slots=["User: {{content}}\n\nAssistant:"]),
    format_system=StringFormatter(slots=["{{content}}\n\n"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="deepseek3",
    format_user=StringFormatter(slots=["<|User|>{{content}}<|Assistant|>"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="deepseekcoder",
    format_user=StringFormatter(slots=["### Instruction:\n{{content}}\n### Response:"]),
    format_assistant=StringFormatter(slots=["\n{{content}}\n<|EOT|>\n"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    default_system=(
        "You are an AI programming assistant, utilizing the DeepSeek Coder model, "
        "developed by DeepSeek Company, and you only answer questions related to computer science. "
        "For politically sensitive questions, security and privacy issues, "
        "and other non-computer science questions, you will refuse to answer.\n"
    ),
)


register_template(
    name="default",
    format_user=StringFormatter(slots=["Human: {{content}}\nAssistant:"]),
    format_assistant=StringFormatter(slots=["{{content}}", {"eos_token"}, "\n"]),
    format_system=StringFormatter(slots=["System: {{content}}\n"]),
)


register_template(
    name="empty",
    format_assistant=StringFormatter(slots=["{{content}}"]),
)


register_template(
    name="exaone",
    format_user=StringFormatter(slots=["[|user|]{{content}}\n[|assistant|]"]),
    format_assistant=StringFormatter(slots=["{{content}}", {"eos_token"}, "\n"]),
    format_system=StringFormatter(slots=["[|system|]{{content}}[|endofturn|]\n"]),
)


register_template(
    name="falcon",
    format_user=StringFormatter(slots=["User: {{content}}\nFalcon:"]),
    format_assistant=StringFormatter(slots=["{{content}}\n"]),
    efficient_eos=True,
)


register_template(
    name="fewshot",
    format_assistant=StringFormatter(slots=["{{content}}\n\n"]),
    efficient_eos=True,
)


register_template(
    name="gemma",
    format_user=StringFormatter(slots=["<start_of_turn>user\n{{content}}<end_of_turn>\n<start_of_turn>model\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<end_of_turn>\n"]),
    format_observation=StringFormatter(
        slots=["<start_of_turn>tool\n{{content}}<end_of_turn>\n<start_of_turn>model\n"]
    ),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="glm4",
    format_user=StringFormatter(slots=["<|user|>\n{{content}}<|assistant|>"]),
    format_assistant=StringFormatter(slots=["\n{{content}}"]),
    format_system=StringFormatter(slots=["<|system|>\n{{content}}"]),
    format_function=FunctionFormatter(slots=["{{content}}"], tool_format="glm4"),
    format_observation=StringFormatter(slots=["<|observation|>\n{{content}}<|assistant|>"]),
    format_tools=ToolFormatter(tool_format="glm4"),
    format_prefix=EmptyFormatter(slots=["[gMASK]<sop>"]),
    stop_words=["<|user|>", "<|observation|>"],
    efficient_eos=True,
)


register_template(
    name="granite3",
    format_user=StringFormatter(
        slots=[
            "<|start_of_role|>user<|end_of_role|>{{content}}<|end_of_text|>\n<|start_of_role|>assistant<|end_of_role|>"
        ]
    ),
    format_assistant=StringFormatter(slots=["{{content}}<|end_of_text|>\n"]),
    format_system=StringFormatter(slots=["<|start_of_role|>system<|end_of_role|>{{content}}<|end_of_text|>\n"]),
)


register_template(
    name="index",
    format_user=StringFormatter(slots=["reserved_0{{content}}reserved_1"]),
    format_system=StringFormatter(slots=["<unk>{{content}}"]),
    efficient_eos=True,
)


register_template(
    name="intern",
    format_user=StringFormatter(slots=["<|User|>:{{content}}\n<|Bot|>:"]),
    format_assistant=StringFormatter(slots=["{{content}}<eoa>\n"]),
    format_system=StringFormatter(slots=["<|System|>:{{content}}\n"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    default_system=(
        "You are an AI assistant whose name is InternLM (书生·浦语).\n"
        "- InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory "
        "(上海人工智能实验室). It is designed to be helpful, honest, and harmless.\n"
        "- InternLM (书生·浦语) can understand and communicate fluently in the language "
        "chosen by the user such as English and 中文."
    ),
    stop_words=["<eoa>"],
)


register_template(
    name="intern2",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    default_system=(
        "You are an AI assistant whose name is InternLM (书生·浦语).\n"
        "- InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory "
        "(上海人工智能实验室). It is designed to be helpful, honest, and harmless.\n"
        "- InternLM (书生·浦语) can understand and communicate fluently in the language "
        "chosen by the user such as English and 中文."
    ),
    stop_words=["<|im_end|>"],
)


register_template(
    name="llama2",
    format_user=StringFormatter(slots=[{"bos_token"}, "[INST] {{content}} [/INST]"]),
    format_system=StringFormatter(slots=["<<SYS>>\n{{content}}\n<</SYS>>\n\n"]),
    template_class=Llama2Template,
)


# copied from llama2 template
register_template(
    name="llama2_zh",
    format_user=StringFormatter(slots=[{"bos_token"}, "[INST] {{content}} [/INST]"]),
    format_system=StringFormatter(slots=["<<SYS>>\n{{content}}\n<</SYS>>\n\n"]),
    default_system="You are a helpful assistant. 你是一个乐于助人的助手。",
    template_class=Llama2Template,
)


register_template(
    name="llama3",
    format_user=StringFormatter(
        slots=[
            (
                "<|start_header_id|>user<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_assistant=StringFormatter(slots=["{{content}}<|eot_id|>"]),
    format_system=StringFormatter(slots=["<|start_header_id|>system<|end_header_id|>\n\n{{content}}<|eot_id|>"]),
    format_function=FunctionFormatter(slots=["{{content}}<|eot_id|>"], tool_format="llama3"),
    format_observation=StringFormatter(
        slots=[
            (
                "<|start_header_id|>ipython<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_tools=ToolFormatter(tool_format="llama3"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    stop_words=["<|eot_id|>", "<|eom_id|>"],
)


# copied from llama3 template
register_template(
    name="mllama",
    format_user=StringFormatter(
        slots=[
            (
                "<|start_header_id|>user<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_assistant=StringFormatter(slots=["{{content}}<|eot_id|>"]),
    format_system=StringFormatter(slots=["<|start_header_id|>system<|end_header_id|>\n\n{{content}}<|eot_id|>"]),
    format_function=FunctionFormatter(slots=["{{content}}<|eot_id|>"], tool_format="llama3"),
    format_observation=StringFormatter(
        slots=[
            (
                "<|start_header_id|>ipython<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_tools=ToolFormatter(tool_format="llama3"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    stop_words=["<|eot_id|>", "<|eom_id|>"],
    mm_plugin=get_mm_plugin(name="mllama", image_token="<|image|>"),
)


# copied from vicuna template
register_template(
    name="llava",
    format_user=StringFormatter(slots=["USER: {{content}} ASSISTANT:"]),
    default_system=(
        "A chat between a curious user and an artificial intelligence assistant. "
        "The assistant gives helpful, detailed, and polite answers to the user's questions."
    ),
    mm_plugin=get_mm_plugin(name="llava", image_token="<image>"),
)


# copied from vicuna template
register_template(
    name="llava_next",
    format_user=StringFormatter(slots=["USER: {{content}} ASSISTANT:"]),
    default_system=(
        "A chat between a curious user and an artificial intelligence assistant. "
        "The assistant gives helpful, detailed, and polite answers to the user's questions."
    ),
    mm_plugin=get_mm_plugin(name="llava_next", image_token="<image>"),
)


# copied from llama3 template
register_template(
    name="llava_next_llama3",
    format_user=StringFormatter(
        slots=[
            (
                "<|start_header_id|>user<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_assistant=StringFormatter(slots=["{{content}}<|eot_id|>"]),
    format_system=StringFormatter(slots=["<|start_header_id|>system<|end_header_id|>\n\n{{content}}<|eot_id|>"]),
    format_function=FunctionFormatter(slots=["{{content}}<|eot_id|>"], tool_format="llama3"),
    format_observation=StringFormatter(
        slots=[
            (
                "<|start_header_id|>ipython<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_tools=ToolFormatter(tool_format="llama3"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    stop_words=["<|eot_id|>", "<|eom_id|>"],
    mm_plugin=get_mm_plugin(name="llava_next", image_token="<image>"),
)


# copied from mistral template
register_template(
    name="llava_next_mistral",
    format_user=StringFormatter(slots=["[INST] {{content}}[/INST]"]),
    format_assistant=StringFormatter(slots=[" {{content}}", {"eos_token"}]),
    format_system=StringFormatter(slots=["{{content}}\n\n"]),
    format_function=FunctionFormatter(slots=["[TOOL_CALLS] {{content}}", {"eos_token"}], tool_format="mistral"),
    format_observation=StringFormatter(slots=["""[TOOL_RESULTS] {"content": {{content}}}[/TOOL_RESULTS]"""]),
    format_tools=ToolFormatter(tool_format="mistral"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    mm_plugin=get_mm_plugin(name="llava_next", image_token="<image>"),
    template_class=Llama2Template,
)


# copied from qwen template
register_template(
    name="llava_next_qwen",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_function=FunctionFormatter(slots=["{{content}}<|im_end|>\n"], tool_format="qwen"),
    format_observation=StringFormatter(
        slots=["<|im_start|>user\n<tool_response>\n{{content}}\n</tool_response><|im_end|>\n<|im_start|>assistant\n"]
    ),
    format_tools=ToolFormatter(tool_format="qwen"),
    default_system="You are a helpful assistant.",
    stop_words=["<|im_end|>"],
    mm_plugin=get_mm_plugin(name="llava_next", image_token="<image>"),
)


# copied from chatml template
register_template(
    name="llava_next_yi",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    stop_words=["<|im_end|>"],
    mm_plugin=get_mm_plugin(name="llava_next", image_token="<image>"),
)


# copied from vicuna template
register_template(
    name="llava_next_video",
    format_user=StringFormatter(slots=["USER: {{content}} ASSISTANT:"]),
    default_system=(
        "A chat between a curious user and an artificial intelligence assistant. "
        "The assistant gives helpful, detailed, and polite answers to the user's questions."
    ),
    mm_plugin=get_mm_plugin(name="llava_next_video", image_token="<image>", video_token="<video>"),
)


# copied from mistral template
register_template(
    name="llava_next_video_mistral",
    format_user=StringFormatter(slots=["[INST] {{content}}[/INST]"]),
    format_assistant=StringFormatter(slots=[" {{content}}", {"eos_token"}]),
    format_system=StringFormatter(slots=["{{content}}\n\n"]),
    format_function=FunctionFormatter(slots=["[TOOL_CALLS] {{content}}", {"eos_token"}], tool_format="mistral"),
    format_observation=StringFormatter(slots=["""[TOOL_RESULTS] {"content": {{content}}}[/TOOL_RESULTS]"""]),
    format_tools=ToolFormatter(tool_format="mistral"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    mm_plugin=get_mm_plugin(name="llava_next_video", image_token="<image>", video_token="<video>"),
    template_class=Llama2Template,
)


# copied from chatml template
register_template(
    name="llava_next_video_yi",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    stop_words=["<|im_end|>"],
    mm_plugin=get_mm_plugin(name="llava_next_video", image_token="<image>", video_token="<video>"),
)


# copied from chatml template
register_template(
    name="marco",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_observation=StringFormatter(slots=["<|im_start|>tool\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    default_system=(
        "你是一个经过良好训练的AI助手,你的名字是Marco-o1.由阿里国际数字商业集团的AI Business创造.\n## 重要!!!!!\n"
        "当你回答问题时,你的思考应该在<Thought>内完成,<Output>内输出你的结果。\n"
        "<Thought>应该尽可能是英文,但是有2个特例,一个是对原文中的引用,另一个是是数学应该使用markdown格式,<Output>内的输出需要遵循用户输入的语言。\n"
    ),
    stop_words=["<|im_end|>"],
)


# copied from chatml template
register_template(
    name="minicpm_v",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    stop_words=["<|im_end|>"],
    default_system="You are a helpful assistant.",
    mm_plugin=get_mm_plugin(name="minicpm_v", image_token="<image>", video_token="<video>"),
)


# copied from minicpm_v template
register_template(
    name="minicpm_o",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    stop_words=["<|im_end|>"],
    default_system="You are Qwen, created by Alibaba Cloud. You are a helpful assistant.",
    mm_plugin=get_mm_plugin(name="minicpm_v", image_token="<image>", video_token="<video>", audio_token="<audio>"),
)


# mistral tokenizer v3 tekken
register_template(
    name="ministral",
    format_user=StringFormatter(slots=["[INST]{{content}}[/INST]"]),
    format_system=StringFormatter(slots=["{{content}}\n\n"]),
    format_function=FunctionFormatter(slots=["[TOOL_CALLS]{{content}}", {"eos_token"}], tool_format="mistral"),
    format_observation=StringFormatter(slots=["""[TOOL_RESULTS]{"content": {{content}}}[/TOOL_RESULTS]"""]),
    format_tools=ToolFormatter(tool_format="mistral"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    template_class=Llama2Template,
)


# mistral tokenizer v3
register_template(
    name="mistral",
    format_user=StringFormatter(slots=["[INST] {{content}}[/INST]"]),
    format_assistant=StringFormatter(slots=[" {{content}}", {"eos_token"}]),
    format_system=StringFormatter(slots=["{{content}}\n\n"]),
    format_function=FunctionFormatter(slots=["[TOOL_CALLS] {{content}}", {"eos_token"}], tool_format="mistral"),
    format_observation=StringFormatter(slots=["""[TOOL_RESULTS] {"content": {{content}}}[/TOOL_RESULTS]"""]),
    format_tools=ToolFormatter(tool_format="mistral"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    template_class=Llama2Template,
)


# mistral tokenizer v7 tekken (copied from ministral)
register_template(
    name="mistral_small",
    format_user=StringFormatter(slots=["[INST]{{content}}[/INST]"]),
    format_system=StringFormatter(slots=["[SYSTEM_PROMPT]{{content}}[/SYSTEM_PROMPT]"]),
    format_function=FunctionFormatter(slots=["[TOOL_CALLS]{{content}}", {"eos_token"}], tool_format="mistral"),
    format_observation=StringFormatter(slots=["""[TOOL_RESULTS]{"content": {{content}}}[/TOOL_RESULTS]"""]),
    format_tools=ToolFormatter(tool_format="mistral"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="olmo",
    format_user=StringFormatter(slots=["<|user|>\n{{content}}<|assistant|>\n"]),
    format_prefix=EmptyFormatter(slots=[{"eos_token"}]),
)


register_template(
    name="openchat",
    format_user=StringFormatter(slots=["GPT4 Correct User: {{content}}", {"eos_token"}, "GPT4 Correct Assistant:"]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)


register_template(
    name="openchat-3.6",
    format_user=StringFormatter(
        slots=[
            (
                "<|start_header_id|>GPT4 Correct User<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>GPT4 Correct Assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    stop_words=["<|eot_id|>"],
)


# copied from chatml template
register_template(
    name="opencoder",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_observation=StringFormatter(slots=["<|im_start|>tool\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    default_system="You are OpenCoder, created by OpenCoder Team.",
    stop_words=["<|im_end|>"],
)


register_template(
    name="orion",
    format_user=StringFormatter(slots=["Human: {{content}}\n\nAssistant: ", {"eos_token"}]),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
)# copied from gemma template

register_template(name="paligemma",format_user=StringFormatter(slots=["<start_of_turn>user\n{{content}}<end_of_turn>\n<start_of_turn>model\n"]),format_assistant=StringFormatter(slots=["{{content}}<end_of_turn>\n"]),format_observation=StringFormatter(slots=["<start_of_turn>tool\n{{content}}<end_of_turn>\n<start_of_turn>model\n"]),format_prefix=EmptyFormatter(slots=[{"bos_token"}]),mm_plugin=get_mm_plugin(name="paligemma", image_token="<image>"),

)register_template(name="phi",format_user=StringFormatter(slots=["<|user|>\n{{content}}<|end|>\n<|assistant|>\n"]),format_assistant=StringFormatter(slots=["{{content}}<|end|>\n"]),format_system=StringFormatter(slots=["<|system|>\n{{content}}<|end|>\n"]),stop_words=["<|end|>"],

)register_template(name="phi_small",format_user=StringFormatter(slots=["<|user|>\n{{content}}<|end|>\n<|assistant|>\n"]),format_assistant=StringFormatter(slots=["{{content}}<|end|>\n"]),format_system=StringFormatter(slots=["<|system|>\n{{content}}<|end|>\n"]),format_prefix=EmptyFormatter(slots=[{"<|endoftext|>"}]),stop_words=["<|end|>"],

)register_template(name="phi4",format_user=StringFormatter(slots=["<|im_start|>user<|im_sep|>{{content}}<|im_end|><|im_start|>assistant<|im_sep|>"]),format_assistant=StringFormatter(slots=["{{content}}<|im_end|>"]),format_system=StringFormatter(slots=["<|im_start|>system<|im_sep|>{{content}}<|im_end|>"]),stop_words=["<|im_end|>"],

)# copied from ministral template

register_template(name="pixtral",format_user=StringFormatter(slots=["[INST]{{content}}[/INST]"]),format_system=StringFormatter(slots=["{{content}}\n\n"]),format_function=FunctionFormatter(slots=["[TOOL_CALLS]{{content}}", {"eos_token"}], tool_format="mistral"),format_observation=StringFormatter(slots=["""[TOOL_RESULTS]{"content": {{content}}}[/TOOL_RESULTS]"""]),format_tools=ToolFormatter(tool_format="mistral"),format_prefix=EmptyFormatter(slots=[{"bos_token"}]),mm_plugin=get_mm_plugin(name="pixtral", image_token="[IMG]"),template_class=Llama2Template,

)# copied from chatml template

register_template(name="qwen",format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),format_function=FunctionFormatter(slots=["{{content}}<|im_end|>\n"], tool_format="qwen"),format_observation=StringFormatter(slots=["<|im_start|>user\n<tool_response>\n{{content}}\n</tool_response><|im_end|>\n<|im_start|>assistant\n"]),format_tools=ToolFormatter(tool_format="qwen"),default_system="You are a helpful assistant.",stop_words=["<|im_end|>"],

)# copied from chatml template

register_template(name="qwen2_audio",format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),default_system="You are a helpful assistant.",stop_words=["<|im_end|>"],mm_plugin=get_mm_plugin(name="qwen2_audio", audio_token="<|AUDIO|>"),

)

# copied from qwen template
register_template(
    name="qwen2_vl",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    format_function=FunctionFormatter(slots=["{{content}}<|im_end|>\n"], tool_format="qwen"),
    format_observation=StringFormatter(
        slots=["<|im_start|>user\n<tool_response>\n{{content}}\n</tool_response><|im_end|>\n<|im_start|>assistant\n"]
    ),
    format_tools=ToolFormatter(tool_format="qwen"),
    default_system="You are a helpful assistant.",
    stop_words=["<|im_end|>"],
    mm_plugin=get_mm_plugin(name="qwen2_vl", image_token="<|image_pad|>", video_token="<|video_pad|>"),

)

register_template(
    name="sailor",
    format_user=StringFormatter(slots=["<|im_start|>question\n{{content}}<|im_end|>\n<|im_start|>answer\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    default_system=(
        "You are an AI assistant named Sailor created by Sea AI Lab. "
        "Your answer should be friendly, unbiased, faithful, informative and detailed."
    ),
    stop_words=["<|im_end|>"],

)

# copied from llama3 template
register_template(
    name="skywork_o1",
    format_user=StringFormatter(
        slots=[
            (
                "<|start_header_id|>user<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_assistant=StringFormatter(slots=["{{content}}<|eot_id|>"]),
    format_system=StringFormatter(slots=["<|start_header_id|>system<|end_header_id|>\n\n{{content}}<|eot_id|>"]),
    format_function=FunctionFormatter(slots=["{{content}}<|eot_id|>"], tool_format="llama3"),
    format_observation=StringFormatter(
        slots=[
            (
                "<|start_header_id|>ipython<|end_header_id|>\n\n{{content}}<|eot_id|>"
                "<|start_header_id|>assistant<|end_header_id|>\n\n"
            )
        ]
    ),
    format_tools=ToolFormatter(tool_format="llama3"),
    format_prefix=EmptyFormatter(slots=[{"bos_token"}]),
    default_system=(
        "You are Skywork-o1, a thinking model developed by Skywork AI, specializing in solving complex problems "
        "involving mathematics, coding, and logical reasoning through deep thought. When faced with a user's request, "
        "you first engage in a lengthy and in-depth thinking process to explore possible solutions to the problem. "
        "After completing your thoughts, you then provide a detailed explanation of the solution process "
        "in your response."
    ),
    stop_words=["<|eot_id|>", "<|eom_id|>"],

)

register_template(
    name="solar",
    format_user=StringFormatter(slots=["### User:\n{{content}}\n\n### Assistant:\n"]),
    format_system=StringFormatter(slots=["### System:\n{{content}}\n\n"]),
    efficient_eos=True,

)

register_template(
    name="starchat",
    format_user=StringFormatter(slots=["<|user|>\n{{content}}<|end|>\n<|assistant|>"]),
    format_assistant=StringFormatter(slots=["{{content}}<|end|>\n"]),
    format_system=StringFormatter(slots=["<|system|>\n{{content}}<|end|>\n"]),
    stop_words=["<|end|>"],

)

register_template(
    name="telechat",
    format_user=StringFormatter(slots=["<_user>{{content}}<_bot>"]),
    format_system=StringFormatter(slots=["<_system>{{content}}<_end>"]),

)

register_template(
    name="telechat2",
    format_user=StringFormatter(slots=["<_user>{{content}}<_bot>"]),
    format_system=StringFormatter(slots=["<_system>{{content}}"]),
    default_system=(
        "你是中国电信星辰语义大模型,英文名是TeleChat,你是由中电信人工智能科技有限公司和中国电信人工智能研究院(TeleAI)研发的人工智能助手。"
    ),

)

register_template(
    name="vicuna",
    format_user=StringFormatter(slots=["USER: {{content}} ASSISTANT:"]),
    default_system=(
        "A chat between a curious user and an artificial intelligence assistant. "
        "The assistant gives helpful, detailed, and polite answers to the user's questions."
    ),
    replace_jinja_template=True,

)

register_template(
    name="video_llava",
    format_user=StringFormatter(slots=["USER: {{content}} ASSISTANT:"]),
    default_system=(
        "A chat between a curious user and an artificial intelligence assistant. "
        "The assistant gives helpful, detailed, and polite answers to the user's questions."
    ),
    mm_plugin=get_mm_plugin(name="video_llava", image_token="<image>", video_token="<video>"),

)

register_template(
    name="xuanyuan",
    format_user=StringFormatter(slots=["Human: {{content}} Assistant:"]),
    default_system=(
        "以下是用户和人工智能助手之间的对话。用户以Human开头,人工智能助手以Assistant开头,"
        "会对人类提出的问题给出有帮助、高质量、详细和礼貌的回答,并且总是拒绝参与与不道德、"
        "不安全、有争议、政治敏感等相关的话题、问题和指示。\n"
    ),

)

register_template(
    name="xverse",
    format_user=StringFormatter(slots=["Human: {{content}}\n\nAssistant: "]),

)

register_template(
    name="yayi",
    format_user=StringFormatter(slots=[{"token": "<|Human|>"}, ":\n{{content}}\n\n", {"token": "<|YaYi|>"}, ":"]),
    format_assistant=StringFormatter(slots=["{{content}}\n\n"]),
    format_system=StringFormatter(slots=[{"token": "<|System|>"}, ":\n{{content}}\n\n"]),
    default_system=(
        "You are a helpful, respectful and honest assistant named YaYi "
        "developed by Beijing Wenge Technology Co.,Ltd. "
        "Always answer as helpfully as possible, while being safe. "
        "Your answers should not include any harmful, unethical, "
        "racist, sexist, toxic, dangerous, or illegal content. "
        "Please ensure that your responses are socially unbiased and positive in nature.\n\n"
        "If a question does not make any sense, or is not factually coherent, "
        "explain why instead of answering something not correct. "
        "If you don't know the answer to a question, please don't share false information."
    ),
    stop_words=["<|End|>"],

)

# copied from chatml template
register_template(
    name="yi",
    format_user=StringFormatter(slots=["<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n"]),
    format_assistant=StringFormatter(slots=["{{content}}<|im_end|>\n"]),
    format_system=StringFormatter(slots=["<|im_start|>system\n{{content}}<|im_end|>\n"]),
    stop_words=["<|im_end|>"],

)

register_template(
    name="yi_vl",
    format_user=StringFormatter(slots=["### Human: {{content}}\n### Assistant:"]),
    format_assistant=StringFormatter(slots=["{{content}}\n"]),
    default_system=(
        "This is a chat between an inquisitive human and an AI assistant. "
        "Assume the role of the AI assistant. Read all the images carefully, "
        "and respond to the human's questions with informative, helpful, detailed and polite answers. "
        "这是一个好奇的人类和一个人工智能助手之间的对话。假设你扮演这个AI助手的角色。"
        "仔细阅读所有的图像,并对人类的问题做出信息丰富、有帮助、详细的和礼貌的回答。\n\n"
    ),
    stop_words=["###"],
    efficient_eos=True,
    mm_plugin=get_mm_plugin(name="llava", image_token="<image>"),

)

register_template(
    name="yuan",
    format_user=StringFormatter(slots=["{{content}}", {"token": "<sep>"}]),
    format_assistant=StringFormatter(slots=["{{content}}<eod>\n"]),
    stop_words=["<eod>"],

)

register_template(
    name="zephyr",
    format_user=StringFormatter(slots=["<|user|>\n{{content}}", {"eos_token"}, "<|assistant|>\n"]),
    format_system=StringFormatter(slots=["<|system|>\n{{content}}", {"eos_token"}]),
    default_system="You are Zephyr, a helpful assistant.",

)

register_template(
    name="ziya",
    format_user=StringFormatter(slots=["<human>:{{content}}\n<bot>:"]),
    format_assistant=StringFormatter(slots=["{{content}}\n"]),

)
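To make the slot strings in the listing more concrete, here is a minimal sketch of how the `qwen` template's `format_system`, `format_user` and `format_assistant` slots could be filled to build a prompt. The `render_prompt` helper and the `QWEN_SLOTS` dictionary are hypothetical names introduced only for this illustration. This is plain string substitution of the `{{content}}` placeholder, not LLaMA-Factory's own rendering code, and it ignores tool calls, special-token handling and tokenization.

```python
from typing import Dict, List

# Slot strings copied from the "qwen" register_template(...) call in the listing.
# QWEN_SLOTS is a hypothetical container used only for this illustration.
QWEN_SLOTS: Dict[str, str] = {
    "system": "<|im_start|>system\n{{content}}<|im_end|>\n",
    "user": "<|im_start|>user\n{{content}}<|im_end|>\n<|im_start|>assistant\n",
    "assistant": "{{content}}<|im_end|>\n",
}
DEFAULT_SYSTEM = "You are a helpful assistant."


def render_prompt(messages: List[Dict[str, str]], system: str = DEFAULT_SYSTEM) -> str:
    """Fill each role's slot by replacing the {{content}} placeholder.

    Hypothetical helper: a simplified stand-in for what a chat template does.
    """
    parts = [QWEN_SLOTS["system"].replace("{{content}}", system)]
    for message in messages:
        slot = QWEN_SLOTS[message["role"]]
        parts.append(slot.replace("{{content}}", message["content"]))
    return "".join(parts)


prompt = render_prompt([{"role": "user", "content": "Hello"}])
print(prompt)
```

Note that the user slot already ends with the `<|im_start|>assistant\n` header, so the model's reply is generated directly after it and is terminated by the `<|im_end|>` stop word.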
