[YOLOv8] YOLOv8 Improvement Series (5): Replacing the Backbone with EfficientFormerV2

Homepage: HABUO

🍁 YOLOv8 Getting Started + Improvements column 🍁

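[YOLOv8 Improvement Series]:

[YOLOv8] YOLOv8 Architecture Walkthrough

YOLOv8 Improvement Series (1): Replacing the Backbone with EfficientViT

YOLOv8 Improvement Series (2): Replacing the Backbone with FasterNet

YOLOv8 Improvement Series (3): Replacing the Backbone with ConvNeXt V2

YOLOv8 Improvement Series (4): Replacing C2f, Swapping the Bottleneck in C2f for the FasterBlock from FasterNet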

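Contents

💯 I. Introduction to EfficientFormerV2

1. Overview

2. Design of EfficientFormerV2

2.1 Network Architecture Design

2.2 Network Architecture Diagram

2.3 Performance Comparison of Key Design Choices

3. Experimental Results

3.1 ImageNet-1K Classification

3.2 Downstream Tasks

4. Key Conclusions

💯 II. How to Add It, Step by Step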

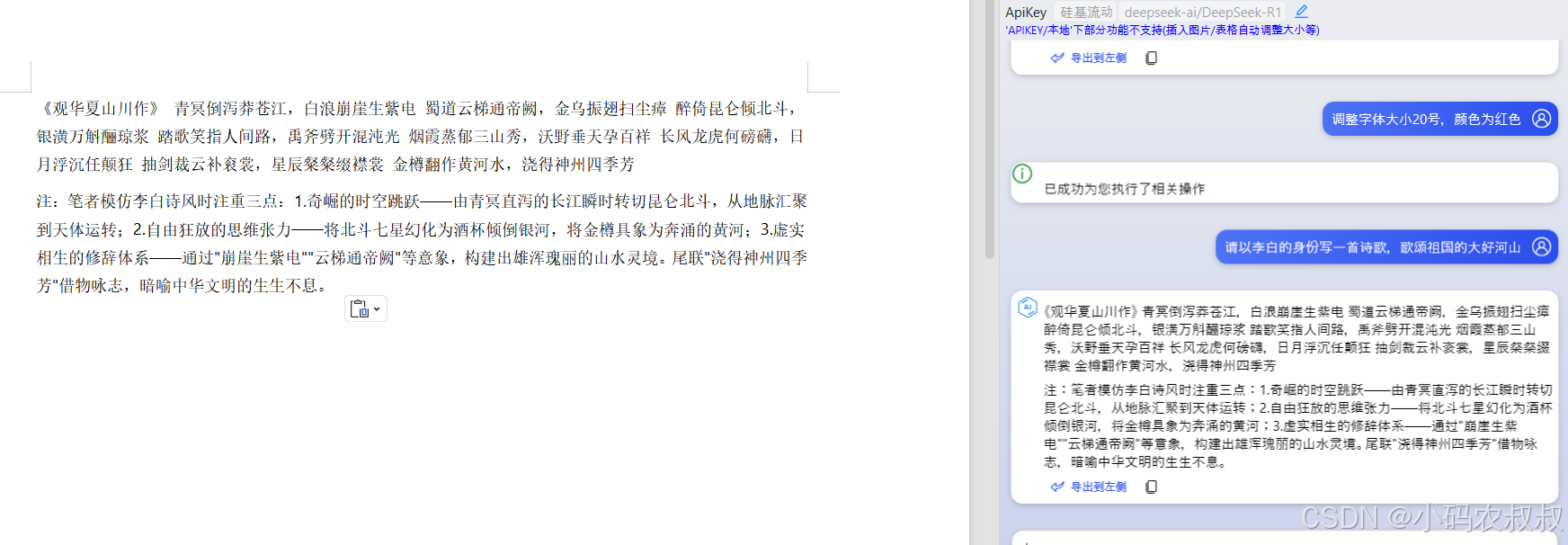

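Step ①: Create EfficientFormerV2.py

Step ②: Modify tasks.py

(1) Import the newly created EfficientFormerV2 file

(2) Modify the _predict_once function

(3) Modify the parse_model function

Step ③: Modify the yolov8.yaml file

Step ④: Verify that the module was added successfully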

💯 I. Introduction to EfficientFormerV2

- Paper title: "Rethinking Vision Transformers for MobileNet Size and Speed"

- Paper link: https://arxiv.org/pdf/2212.08059v1

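1. Overview

The paper introduces EfficientFormerV2, an efficient vision model that aims to match MobileNet in model size and inference speed on mobile devices while delivering accuracy comparable to Vision Transformers (ViTs).

The central question is whether a Transformer model can be made as small and as fast as MobileNet on mobile devices without giving up accuracy. To answer it, the authors propose EfficientFormerV2, which rests on two ideas:

- Revisit the design choices of ViTs and propose an improved supernet with low latency and high parameter efficiency.

- Introduce a fine-grained joint search strategy that optimizes latency and parameter count at the same time in order to find efficient architectures.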

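2. Design of EfficientFormerV2

2.1 Network Architecture Design

The design of EfficientFormerV2 is built on the following key improvements:

- Unified feed-forward network (FFN): local mixing modules such as pooling layers are replaced with depthwise convolutions (DWCONV) that are folded into the FFN, which simplifies the network structure.

- Improved multi-head self-attention (MHSA): local information is injected into the Value matrix and a Talking Head mechanism is added, which improves the attention module.

- Efficient attention: "Stride Attention" reduces the attention computation on high-resolution features to a fixed, lower resolution, which cuts computational cost.

- Attention downsampling: a downsampling strategy that combines local and global information, further improving performance.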
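To see why Stride Attention helps, it is enough to count tokens. The sketch below is illustrative arithmetic only. It assumes a 640×640 input (the default `resolution` in the code later in this post) and a stride of 2 at the 1/16 stage; the helper name `attention_cost` is hypothetical and does not come from the paper or the code.

```python
import math


def attention_cost(feature_size: int, stride: int = 1) -> dict:
    """Count tokens and per-head attention-matrix entries for a square feature map.

    `stride` models the downsampling applied before attention; 1 means none.
    """
    side = math.ceil(feature_size / stride)  # spatial side length seen by attention
    tokens = side * side                     # N = H * W
    return {"side": side, "tokens": tokens, "attn_entries": tokens * tokens}


# Assumption: 640x640 input, so the 1/16-resolution stage works on a 40x40 map.
full = attention_cost(40)               # attention on the full 40x40 map
strided = attention_cost(40, stride=2)  # attention after a stride-2 downsample

print(full)     # {'side': 40, 'tokens': 1600, 'attn_entries': 2560000}
print(strided)  # {'side': 20, 'tokens': 400, 'attn_entries': 160000}
print(full["attn_entries"] // strided["attn_entries"])  # 16
```

Halving the side length quarters the token count, so the attention matrix shrinks by a factor of 16 under these assumptions.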

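2.2 Network Architecture Diagram

The EfficientFormerV2 network has four stages that process features at 1/4, 1/8, 1/16, and 1/32 of the input resolution. The first two stages mainly use the unified FFN to capture local information; the last two stages combine local FFN blocks with global MHSA blocks to balance local and global modeling.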
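The four-stage layout can be made concrete with a few lines of arithmetic. The sketch below uses the S0 depths, widths, and `vit_num` from the configuration listed later in this post and assumes a 640×640 input. The block-selection rule mirrors the condition used in `eformer_block`, while the helper name `describe_stages` is hypothetical.

```python
import math

# Values copied from the S0 configuration listed later in this post.
DEPTHS = [2, 2, 6, 4]
WIDTHS = [32, 48, 96, 176]
VIT_NUM = 2       # number of trailing attention blocks in each of the last two stages
INPUT_SIZE = 640  # assumed square input


def describe_stages(depths, widths, vit_num, input_size):
    """Return one summary dict per stage: stride, feature-map side, channels, block types."""
    stages = []
    for index, (depth, width) in enumerate(zip(depths, widths)):
        stride = 2 ** (index + 2)  # 4, 8, 16, 32
        side = math.ceil(input_size / stride)
        blocks = [
            "AttnFFN" if index >= 2 and block_idx > depth - 1 - vit_num else "FFN"
            for block_idx in range(depth)
        ]
        stages.append({"stride": stride, "side": side, "channels": width, "blocks": blocks})
    return stages


stages = describe_stages(DEPTHS, WIDTHS, VIT_NUM, INPUT_SIZE)
for stage in stages:
    print(stage)
```

Under these assumptions the feature maps are 160, 80, 40, and 20 pixels on a side, and only the last two blocks of stages three and four use attention.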

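2.3 Performance Comparison of Key Design Choices

The paper validates the effect of each design choice experimentally. For example:

- The unified FFN design improves accuracy by 0.6% over the baseline without adding latency.

- Adding Talking Head and local information modeling raises accuracy further to 80.8%, with parameter count and latency unchanged.

- Stride Attention and attention downsampling markedly improve both accuracy and efficiency on high-resolution features.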
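Talking Head can be pictured as a small mixing step that lets attention heads exchange information, applied once before and once after the softmax. The following is a minimal pure-Python sketch with hand-picked logits and mixing weights (all values are made up for illustration, and the helper names are hypothetical). The code later in this post uses learned 1×1 convolutions over the head dimension for the same purpose.

```python
import math


def mix_heads(attn, weights):
    """Linearly mix attention maps across heads.

    attn:    list of H maps, each a list of query rows holding key scores.
    weights: H x H matrix; output head i = sum_j weights[i][j] * attn[j].
    """
    heads = len(attn)
    rows, cols = len(attn[0]), len(attn[0][0])
    return [
        [[sum(weights[i][j] * attn[j][r][c] for j in range(heads)) for c in range(cols)]
         for r in range(rows)]
        for i in range(heads)
    ]


def softmax_rows(attn):
    """Apply a softmax over the key dimension of every head and query row."""
    out = []
    for head in attn:
        new_head = []
        for row in head:
            peak = max(row)  # subtract the max for numerical stability
            exps = [math.exp(v - peak) for v in row]
            total = sum(exps)
            new_head.append([e / total for e in exps])
        out.append(new_head)
    return out


# Two heads, two queries, two keys; every number here is made up.
logits = [[[1.0, 0.0], [0.0, 1.0]],
          [[0.0, 2.0], [2.0, 0.0]]]
pre_mix = [[1.0, 0.5], [0.5, 1.0]]   # mixes logits before the softmax
post_mix = [[0.9, 0.1], [0.1, 0.9]]  # mixes probabilities after the softmax

attn = mix_heads(logits, pre_mix)
attn = softmax_rows(attn)
attn = mix_heads(attn, post_mix)

for head in attn:
    print([[round(v, 3) for v in row] for row in head])
```

In this toy example each row still sums to 1 after the second mix only because the rows of `post_mix` sum to 1; learned weights carry no such guarantee.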

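3. Experimental Results

3.1 ImageNet-1K Classification

EfficientFormerV2 was evaluated extensively on ImageNet-1K. The results show:

- EfficientFormerV2-S0 achieves 3.9% higher Top-1 accuracy than MobileNetV2 at the same latency and parameter count.

- EfficientFormerV2-S1 is 4.3% more accurate than MobileNetV2×1.4 at comparable latency, with a model that is 2× smaller.

- EfficientFormerV2-L, at the larger model scale, matches the accuracy of EfficientFormer-L7 while being 3.1× smaller.

In addition, EfficientFormerV2 shows low inference latency on mobile devices such as the iPhone 12 and Pixel 6, which demonstrates its efficiency in practical use.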

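3.2 Downstream Tasks

EfficientFormerV2 was also validated on downstream tasks: object detection, instance segmentation, and semantic segmentation.

- On MS COCO, EfficientFormerV2-L improves detection and segmentation by 3.3 APbox and 2.3 APmask, respectively, over EfficientFormer-L3 at the same model size.

- On ADE20K, the semantic segmentation performance (mIoU) of EfficientFormerV2-S2 is 5.2% higher than that of PoolFormer-S12, which confirms its effectiveness as a feature extractor.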

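4. Key Conclusions

By revisiting the design choices of ViTs and introducing a fine-grained joint search algorithm, EfficientFormerV2 reaches a model size and inference speed on mobile devices comparable to MobileNet while keeping high accuracy. The result offers a new approach to deploying Transformer models on resource-constrained hardware and a useful reference for future research.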

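💯 II. How to Add It, Step by Step

Step ①: Create EfficientFormerV2.py

Create the file (the import used in Step ② implies the path ultralytics/nn/backbone/EfficientFormerV2.py), then copy and paste the following code into it: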

    import os
    import copy
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import math
    from typing import Dict
    import itertools
    import numpy as np
    from timm.models.layers import DropPath, trunc_normal_, to_2tuple

    __all__ = ['efficientformerv2_s0', 'efficientformerv2_s1', 'efficientformerv2_s2', 'efficientformerv2_l']

    # Channel widths of the four stages for each model size
    EfficientFormer_width = {
        'L': [40, 80, 192, 384],  # 26m 83.3% 6attn
        'S2': [32, 64, 144, 288],  # 12m 81.6% 4attn dp0.02
        'S1': [32, 48, 120, 224],  # 6.1m 79.0
        'S0': [32, 48, 96, 176],  # 75.0 75.7
    }

    # Number of blocks in each of the four stages
    EfficientFormer_depth = {
        'L': [5, 5, 15, 10],  # 26m 83.3%
        'S2': [4, 4, 12, 8],  # 12m
        'S1': [3, 3, 9, 6],  # 79.0
        'S0': [2, 2, 6, 4],  # 75.7
    }

    # Per-block MLP expansion ratios, keyed by stage index
    # 26m
    expansion_ratios_L = {
        '0': [4, 4, 4, 4, 4],
        '1': [4, 4, 4, 4, 4],
        '2': [4, 4, 4, 4, 3, 3, 3, 3, 3, 3, 3, 4, 4, 4, 4],
        '3': [4, 4, 4, 3, 3, 3, 3, 4, 4, 4],
    }

    # 12m
    expansion_ratios_S2 = {
        '0': [4, 4, 4, 4],
        '1': [4, 4, 4, 4],
        '2': [4, 4, 3, 3, 3, 3, 3, 3, 4, 4, 4, 4],
        '3': [4, 4, 3, 3, 3, 3, 4, 4],
    }

    # 6.1m
    expansion_ratios_S1 = {
        '0': [4, 4, 4],
        '1': [4, 4, 4],
        '2': [4, 4, 3, 3, 3, 3, 4, 4, 4],
        '3': [4, 4, 3, 3, 4, 4],
    }

    # 3.5m
    expansion_ratios_S0 = {
        '0': [4, 4],
        '1': [4, 4],
        '2': [4, 3, 3, 3, 4, 4],
        '3': [4, 3, 3, 4],
    }


    class Attention4D(torch.nn.Module):
        def __init__(self, dim=384, key_dim=32, num_heads=8,
                     attn_ratio=4,
                     resolution=7,
                     act_layer=nn.ReLU,
                     stride=None):
            super().__init__()
            self.num_heads = num_heads
            self.scale = key_dim ** -0.5
            self.key_dim = key_dim
            self.nh_kd = nh_kd = key_dim * num_heads

            # Stride attention: downsample before attention, upsample afterwards
            if stride is not None:
                self.resolution = math.ceil(resolution / stride)
                self.stride_conv = nn.Sequential(
                    nn.Conv2d(dim, dim, kernel_size=3, stride=stride, padding=1, groups=dim),
                    nn.BatchNorm2d(dim), )
                self.upsample = nn.Upsample(scale_factor=stride, mode='bilinear')
            else:
                self.resolution = resolution
                self.stride_conv = None
                self.upsample = None

            self.N = self.resolution ** 2
            self.N2 = self.N
            self.d = int(attn_ratio * key_dim)
            self.dh = int(attn_ratio * key_dim) * num_heads
            self.attn_ratio = attn_ratio
            h = self.dh + nh_kd * 2
            self.q = nn.Sequential(nn.Conv2d(dim, self.num_heads * self.key_dim, 1),
                                   nn.BatchNorm2d(self.num_heads * self.key_dim), )
            self.k = nn.Sequential(nn.Conv2d(dim, self.num_heads * self.key_dim, 1),
                                   nn.BatchNorm2d(self.num_heads * self.key_dim), )
            self.v = nn.Sequential(nn.Conv2d(dim, self.num_heads * self.d, 1),
                                   nn.BatchNorm2d(self.num_heads * self.d),
                                   )
            # Depthwise conv that injects local information into the Value branch
            self.v_local = nn.Sequential(nn.Conv2d(self.num_heads * self.d, self.num_heads * self.d,
                                                   kernel_size=3, stride=1, padding=1, groups=self.num_heads * self.d),
                                         nn.BatchNorm2d(self.num_heads * self.d), )
            # Talking Head: 1x1 convs over the head dimension, before and after softmax
            self.talking_head1 = nn.Conv2d(self.num_heads, self.num_heads, kernel_size=1, stride=1, padding=0)
            self.talking_head2 = nn.Conv2d(self.num_heads, self.num_heads, kernel_size=1, stride=1, padding=0)

            self.proj = nn.Sequential(act_layer(),
                                      nn.Conv2d(self.dh, dim, 1),
                                      nn.BatchNorm2d(dim), )

            # Index table for the learned relative position biases
            points = list(itertools.product(range(self.resolution), range(self.resolution)))
            N = len(points)
            attention_offsets = {}
            idxs = []
            for p1 in points:
                for p2 in points:
                    offset = (abs(p1[0] - p2[0]), abs(p1[1] - p2[1]))
                    if offset not in attention_offsets:
                        attention_offsets[offset] = len(attention_offsets)
                    idxs.append(attention_offsets[offset])
            self.attention_biases = torch.nn.Parameter(
                torch.zeros(num_heads, len(attention_offsets)))
            self.register_buffer('attention_bias_idxs',
                                 torch.LongTensor(idxs).view(N, N))

        @torch.no_grad()
        def train(self, mode=True):
            super().train(mode)
            if mode and hasattr(self, 'ab'):
                del self.ab
            else:
                self.ab = self.attention_biases[:, self.attention_bias_idxs]

        def forward(self, x):  # x: (B, C, H, W)
            B, C, H, W = x.shape
            if self.stride_conv is not None:
                x = self.stride_conv(x)

            q = self.q(x).flatten(2).reshape(B, self.num_heads, -1, self.N).permute(0, 1, 3, 2)
            k = self.k(x).flatten(2).reshape(B, self.num_heads, -1, self.N).permute(0, 1, 2, 3)
            v = self.v(x)
            v_local = self.v_local(v)
            v = v.flatten(2).reshape(B, self.num_heads, -1, self.N).permute(0, 1, 3, 2)

            attn = (
                    (q @ k) * self.scale
                    +
                    (self.attention_biases[:, self.attention_bias_idxs]
                     if self.training else self.ab)
            )
            # attn = (q @ k) * self.scale
            attn = self.talking_head1(attn)
            attn = attn.softmax(dim=-1)
            attn = self.talking_head2(attn)

            x = (attn @ v)

            out = x.transpose(2, 3).reshape(B, self.dh, self.resolution, self.resolution) + v_local
            if self.upsample is not None:
                out = self.upsample(out)

            out = self.proj(out)
            return out


    def stem(in_chs, out_chs, act_layer=nn.ReLU):
        return nn.Sequential(
            nn.Conv2d(in_chs, out_chs // 2, kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(out_chs // 2),
            act_layer(),
            nn.Conv2d(out_chs // 2, out_chs, kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(out_chs),
            act_layer(),
        )


    class LGQuery(torch.nn.Module):
        def __init__(self, in_dim, out_dim, resolution1, resolution2):
            super().__init__()
            self.resolution1 = resolution1
            self.resolution2 = resolution2
            self.pool = nn.AvgPool2d(1, 2, 0)
            self.local = nn.Sequential(nn.Conv2d(in_dim, in_dim, kernel_size=3, stride=2, padding=1, groups=in_dim),
                                       )
            self.proj = nn.Sequential(nn.Conv2d(in_dim, out_dim, 1),
                                      nn.BatchNorm2d(out_dim), )

        def forward(self, x):
            local_q = self.local(x)
            pool_q = self.pool(x)
            q = local_q + pool_q
            q = self.proj(q)
            return q


    class Attention4DDownsample(torch.nn.Module):
        def __init__(self, dim=384, key_dim=16, num_heads=8,
                     attn_ratio=4,
                     resolution=7,
                     out_dim=None,
                     act_layer=None,
                     ):
            super().__init__()
            self.num_heads = num_heads
            self.scale = key_dim ** -0.5
            self.key_dim = key_dim
            self.nh_kd = nh_kd = key_dim * num_heads

            self.resolution = resolution

            self.d = int(attn_ratio * key_dim)
            self.dh = int(attn_ratio * key_dim) * num_heads
            self.attn_ratio = attn_ratio
            h = self.dh + nh_kd * 2

            if out_dim is not None:
                self.out_dim = out_dim
            else:
                self.out_dim = dim
            self.resolution2 = math.ceil(self.resolution / 2)
            self.q = LGQuery(dim, self.num_heads * self.key_dim, self.resolution, self.resolution2)

            self.N = self.resolution ** 2
            self.N2 = self.resolution2 ** 2

            self.k = nn.Sequential(nn.Conv2d(dim, self.num_heads * self.key_dim, 1),
                                   nn.BatchNorm2d(self.num_heads * self.key_dim), )
            self.v = nn.Sequential(nn.Conv2d(dim, self.num_heads * self.d, 1),
                                   nn.BatchNorm2d(self.num_heads * self.d),
                                   )
            self.v_local = nn.Sequential(nn.Conv2d(self.num_heads * self.d, self.num_heads * self.d,
                                                   kernel_size=3, stride=2, padding=1, groups=self.num_heads * self.d),
                                         nn.BatchNorm2d(self.num_heads * self.d), )

            self.proj = nn.Sequential(
                act_layer(),
                nn.Conv2d(self.dh, self.out_dim, 1),
                nn.BatchNorm2d(self.out_dim), )

            points = list(itertools.product(range(self.resolution), range(self.resolution)))
            points_ = list(itertools.product(range(self.resolution2), range(self.resolution2)))
            N = len(points)
            N_ = len(points_)
            attention_offsets = {}
            idxs = []
            for p1 in points_:
                for p2 in points:
                    size = 1
                    offset = (
                        abs(p1[0] * math.ceil(self.resolution / self.resolution2) - p2[0] + (size - 1) / 2),
                        abs(p1[1] * math.ceil(self.resolution / self.resolution2) - p2[1] + (size - 1) / 2))
                    if offset not in attention_offsets:
                        attention_offsets[offset] = len(attention_offsets)
                    idxs.append(attention_offsets[offset])
            self.attention_biases = torch.nn.Parameter(
                torch.zeros(num_heads, len(attention_offsets)))
            self.register_buffer('attention_bias_idxs',
                                 torch.LongTensor(idxs).view(N_, N))

        @torch.no_grad()
        def train(self, mode=True):
            super().train(mode)
            if mode and hasattr(self, 'ab'):
                del self.ab
            else:
                self.ab = self.attention_biases[:, self.attention_bias_idxs]

        def forward(self, x):  # x: (B, C, H, W)
            B, C, H, W = x.shape

            q = self.q(x).flatten(2).reshape(B, self.num_heads, -1, self.N2).permute(0, 1, 3, 2)
            k = self.k(x).flatten(2).reshape(B, self.num_heads, -1, self.N).permute(0, 1, 2, 3)
            v = self.v(x)
            v_local = self.v_local(v)
            v = v.flatten(2).reshape(B, self.num_heads, -1, self.N).permute(0, 1, 3, 2)

            attn = (
                    (q @ k) * self.scale
                    +
                    (self.attention_biases[:, self.attention_bias_idxs]
                     if self.training else self.ab)
            )
            # attn = (q @ k) * self.scale
            attn = attn.softmax(dim=-1)
            x = (attn @ v).transpose(2, 3)
            out = x.reshape(B, self.dh, self.resolution2, self.resolution2) + v_local

            out = self.proj(out)
            return out


    class Embedding(nn.Module):
        def __init__(self, patch_size=3, stride=2, padding=1,
                     in_chans=3, embed_dim=768, norm_layer=nn.BatchNorm2d,
                     light=False, asub=False, resolution=None, act_layer=nn.ReLU, attn_block=Attention4DDownsample):
            super().__init__()
            self.light = light
            self.asub = asub

            if self.light:
                self.new_proj = nn.Sequential(
                    nn.Conv2d(in_chans, in_chans, kernel_size=3, stride=2, padding=1, groups=in_chans),
                    nn.BatchNorm2d(in_chans),
                    nn.Hardswish(),
                    nn.Conv2d(in_chans, embed_dim, kernel_size=1, stride=1, padding=0),
                    nn.BatchNorm2d(embed_dim),
                )
                self.skip = nn.Sequential(
                    nn.Conv2d(in_chans, embed_dim, kernel_size=1, stride=2, padding=0),
                    nn.BatchNorm2d(embed_dim)
                )
            elif self.asub:
                # Attention downsampling combined with a strided conv branch
                self.attn = attn_block(dim=in_chans, out_dim=embed_dim,
                                       resolution=resolution, act_layer=act_layer)
                patch_size = to_2tuple(patch_size)
                stride = to_2tuple(stride)
                padding = to_2tuple(padding)
                self.conv = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size,
                                      stride=stride, padding=padding)
                self.bn = norm_layer(embed_dim) if norm_layer else nn.Identity()
            else:
                patch_size = to_2tuple(patch_size)
                stride = to_2tuple(stride)
                padding = to_2tuple(padding)
                self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size,
                                      stride=stride, padding=padding)
                self.norm = norm_layer(embed_dim) if norm_layer else nn.Identity()

        def forward(self, x):
            if self.light:
                out = self.new_proj(x) + self.skip(x)
            elif self.asub:
                out_conv = self.conv(x)
                out_conv = self.bn(out_conv)
                out = self.attn(x) + out_conv
            else:
                x = self.proj(x)
                out = self.norm(x)
            return out


    class Mlp(nn.Module):
        """
        Implementation of MLP with 1*1 convolutions.
        Input: tensor with shape [B, C, H, W]
        """

        def __init__(self, in_features, hidden_features=None,
                     out_features=None, act_layer=nn.GELU, drop=0., mid_conv=False):
            super().__init__()
            out_features = out_features or in_features
            hidden_features = hidden_features or in_features
            self.mid_conv = mid_conv
            self.fc1 = nn.Conv2d(in_features, hidden_features, 1)
            self.act = act_layer()
            self.fc2 = nn.Conv2d(hidden_features, out_features, 1)
            self.drop = nn.Dropout(drop)
            self.apply(self._init_weights)

            if self.mid_conv:
                # Depthwise 3x3 conv between the two 1x1 convs (the unified FFN)
                self.mid = nn.Conv2d(hidden_features, hidden_features, kernel_size=3, stride=1, padding=1,
                                     groups=hidden_features)
                self.mid_norm = nn.BatchNorm2d(hidden_features)

            self.norm1 = nn.BatchNorm2d(hidden_features)
            self.norm2 = nn.BatchNorm2d(out_features)

        def _init_weights(self, m):
            if isinstance(m, nn.Conv2d):
                trunc_normal_(m.weight, std=.02)
                if m.bias is not None:
                    nn.init.constant_(m.bias, 0)

        def forward(self, x):
            x = self.fc1(x)
            x = self.norm1(x)
            x = self.act(x)

            if self.mid_conv:
                x_mid = self.mid(x)
                x_mid = self.mid_norm(x_mid)
                x = self.act(x_mid)
            x = self.drop(x)

            x = self.fc2(x)
            x = self.norm2(x)

            x = self.drop(x)
            return x


    class AttnFFN(nn.Module):
        def __init__(self, dim, mlp_ratio=4.,
                     act_layer=nn.ReLU, norm_layer=nn.LayerNorm,
                     drop=0., drop_path=0.,
                     use_layer_scale=True, layer_scale_init_value=1e-5,
                     resolution=7, stride=None):
            super().__init__()

            self.token_mixer = Attention4D(dim, resolution=resolution, act_layer=act_layer, stride=stride)
            mlp_hidden_dim = int(dim * mlp_ratio)
            self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim,
                           act_layer=act_layer, drop=drop, mid_conv=True)

            self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
            self.use_layer_scale = use_layer_scale
            if use_layer_scale:
                self.layer_scale_1 = nn.Parameter(
                    layer_scale_init_value * torch.ones(dim).unsqueeze(-1).unsqueeze(-1), requires_grad=True)
                self.layer_scale_2 = nn.Parameter(
                    layer_scale_init_value * torch.ones(dim).unsqueeze(-1).unsqueeze(-1), requires_grad=True)

        def forward(self, x):
            if self.use_layer_scale:
                x = x + self.drop_path(self.layer_scale_1 * self.token_mixer(x))
                x = x + self.drop_path(self.layer_scale_2 * self.mlp(x))
            else:
                x = x + self.drop_path(self.token_mixer(x))
                x = x + self.drop_path(self.mlp(x))
            return x


    class FFN(nn.Module):
        def __init__(self, dim, pool_size=3, mlp_ratio=4.,
                     act_layer=nn.GELU,
                     drop=0., drop_path=0.,
                     use_layer_scale=True, layer_scale_init_value=1e-5):
            super().__init__()

            mlp_hidden_dim = int(dim * mlp_ratio)
            self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim,
                           act_layer=act_layer, drop=drop, mid_conv=True)

            self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
            self.use_layer_scale = use_layer_scale
            if use_layer_scale:
                self.layer_scale_2 = nn.Parameter(
                    layer_scale_init_value * torch.ones(dim).unsqueeze(-1).unsqueeze(-1), requires_grad=True)

        def forward(self, x):
            if self.use_layer_scale:
                x = x + self.drop_path(self.layer_scale_2 * self.mlp(x))
            else:
                x = x + self.drop_path(self.mlp(x))
            return x


    def eformer_block(dim, index, layers,
                      pool_size=3, mlp_ratio=4.,
                      act_layer=nn.GELU, norm_layer=nn.LayerNorm,
                      drop_rate=.0, drop_path_rate=0.,
                      use_layer_scale=True, layer_scale_init_value=1e-5, vit_num=1, resolution=7, e_ratios=None):
        blocks = []
        for block_idx in range(layers[index]):
            block_dpr = drop_path_rate * (
                    block_idx + sum(layers[:index])) / (sum(layers) - 1)
            mlp_ratio = e_ratios[str(index)][block_idx]
            # The last `vit_num` blocks of stages 2 and 3 use attention
            if index >= 2 and block_idx > layers[index] - 1 - vit_num:
                if index == 2:
                    stride = 2
                else:
                    stride = None
                blocks.append(AttnFFN(
                    dim, mlp_ratio=mlp_ratio,
                    act_layer=act_layer, norm_layer=norm_layer,
                    drop=drop_rate, drop_path=block_dpr,
                    use_layer_scale=use_layer_scale,
                    layer_scale_init_value=layer_scale_init_value,
                    resolution=resolution,
                    stride=stride,
                ))
            else:
                blocks.append(FFN(
                    dim, pool_size=pool_size, mlp_ratio=mlp_ratio,
                    act_layer=act_layer,
                    drop=drop_rate, drop_path=block_dpr,
                    use_layer_scale=use_layer_scale,
                    layer_scale_init_value=layer_scale_init_value,
                ))
        blocks = nn.Sequential(*blocks)
        return blocks


    class EfficientFormerV2(nn.Module):
        def __init__(self, layers, embed_dims=None,
                     mlp_ratios=4, downsamples=None,
                     pool_size=3,
                     norm_layer=nn.BatchNorm2d, act_layer=nn.GELU,
                     num_classes=1000,
                     down_patch_size=3, down_stride=2, down_pad=1,
                     drop_rate=0., drop_path_rate=0.,
                     use_layer_scale=True, layer_scale_init_value=1e-5,
                     fork_feat=True,
                     vit_num=0,
                     resolution=640,
                     e_ratios=expansion_ratios_L,
                     **kwargs):
            super().__init__()

            if not fork_feat:
                self.num_classes = num_classes
            self.fork_feat = fork_feat

            self.patch_embed = stem(3, embed_dims[0], act_layer=act_layer)

            network = []
            for i in range(len(layers)):
                stage = eformer_block(embed_dims[i], i, layers,
                                      pool_size=pool_size, mlp_ratio=mlp_ratios,
                                      act_layer=act_layer, norm_layer=norm_layer,
                                      drop_rate=drop_rate,
                                      drop_path_rate=drop_path_rate,
                                      use_layer_scale=use_layer_scale,
                                      layer_scale_init_value=layer_scale_init_value,
                                      resolution=math.ceil(resolution / (2 ** (i + 2))),
                                      vit_num=vit_num,
                                      e_ratios=e_ratios)
                network.append(stage)
                if i >= len(layers) - 1:
                    break
                if downsamples[i] or embed_dims[i] != embed_dims[i + 1]:
                    # downsampling between two stages
                    if i >= 2:
                        asub = True
                    else:
                        asub = False
                    network.append(
                        Embedding(
                            patch_size=down_patch_size, stride=down_stride,
                            padding=down_pad,
                            in_chans=embed_dims[i], embed_dim=embed_dims[i + 1],
                            resolution=math.ceil(resolution / (2 ** (i + 2))),
                            asub=asub,
                            act_layer=act_layer, norm_layer=norm_layer,
                        )
                    )

            self.network = nn.ModuleList(network)

            if self.fork_feat:
                # add a norm layer for each output
                self.out_indices = [0, 2, 4, 6]
                for i_emb, i_layer in enumerate(self.out_indices):
                    if i_emb == 0 and os.environ.get('FORK_LAST3', None):
                        layer = nn.Identity()
                    else:
                        layer = norm_layer(embed_dims[i_emb])
                    layer_name = f'norm{i_layer}'
                    self.add_module(layer_name, layer)

            # Output channels of each returned feature map; read by parse_model
            self.channel = [i.size(1) for i in self.forward(torch.randn(1, 3, resolution, resolution))]

        def forward_tokens(self, x):
            outs = []
            for idx, block in enumerate(self.network):
                x = block(x)
                if self.fork_feat and idx in self.out_indices:
                    norm_layer = getattr(self, f'norm{idx}')
                    x_out = norm_layer(x)
                    outs.append(x_out)
            return outs

        def forward(self, x):
            x = self.patch_embed(x)
            x = self.forward_tokens(x)
            return x


    def update_weight(model_dict, weight_dict):
        # Copy only the pretrained tensors whose name and shape match the model
        idx, temp_dict = 0, {}
        for k, v in weight_dict.items():
            if k in model_dict.keys() and np.shape(model_dict[k]) == np.shape(v):
                temp_dict[k] = v
                idx += 1
        model_dict.update(temp_dict)
        print(f'loading weights... {idx}/{len(model_dict)} items')
        return model_dict


    def efficientformerv2_s0(weights='', **kwargs):
        model = EfficientFormerV2(
            layers=EfficientFormer_depth['S0'],
            embed_dims=EfficientFormer_width['S0'],
            downsamples=[True, True, True, True, True],
            vit_num=2,
            drop_path_rate=0.0,
            e_ratios=expansion_ratios_S0,
            **kwargs)
        if weights:
            pretrained_weight = torch.load(weights)['model']
            model.load_state_dict(update_weight(model.state_dict(), pretrained_weight))
        return model


    def efficientformerv2_s1(weights='', **kwargs):
        model = EfficientFormerV2(
            layers=EfficientFormer_depth['S1'],
            embed_dims=EfficientFormer_width['S1'],
            downsamples=[True, True, True, True],
            vit_num=2,
            drop_path_rate=0.0,
            e_ratios=expansion_ratios_S1,
            **kwargs)
        if weights:
            pretrained_weight = torch.load(weights)['model']
            model.load_state_dict(update_weight(model.state_dict(), pretrained_weight))
        return model


    def efficientformerv2_s2(weights='', **kwargs):
        model = EfficientFormerV2(
            layers=EfficientFormer_depth['S2'],
            embed_dims=EfficientFormer_width['S2'],
            downsamples=[True, True, True, True],
            vit_num=4,
            drop_path_rate=0.02,
            e_ratios=expansion_ratios_S2,
            **kwargs)
        if weights:
            pretrained_weight = torch.load(weights)['model']
            model.load_state_dict(update_weight(model.state_dict(), pretrained_weight))
        return model


    def efficientformerv2_l(weights='', **kwargs):
        model = EfficientFormerV2(
            layers=EfficientFormer_depth['L'],
            embed_dims=EfficientFormer_width['L'],
            downsamples=[True, True, True, True],
            vit_num=6,
            drop_path_rate=0.1,
            e_ratios=expansion_ratios_L,
            **kwargs)
        if weights:
            pretrained_weight = torch.load(weights)['model']
            model.load_state_dict(update_weight(model.state_dict(), pretrained_weight))
        return model


    if __name__ == '__main__':
        # The checkpoint files named below must exist in the working directory
        inputs = torch.randn((1, 3, 640, 640))

        model = efficientformerv2_s0('eformer_s0_450.pth')
        res = model(inputs)
        for i in res:
            print(i.size())

        model = efficientformerv2_s1('eformer_s1_450.pth')
        res = model(inputs)
        for i in res:
            print(i.size())

        model = efficientformerv2_s2('eformer_s2_450.pth')
        res = model(inputs)
        for i in res:
            print(i.size())

        model = efficientformerv2_l('eformer_l_450.pth')
        res = model(inputs)
        for i in res:
            print(i.size())

Step ②: Modify tasks.py

(1) Import the newly created EfficientFormerV2 file

    from ultralytics.nn.backbone.EfficientFormerV2 import *

(2) Modify the _predict_once function
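The change to `_predict_once` comes down to one piece of bookkeeping: a backbone module returns a list of feature maps instead of a single tensor, and that list is padded to five entries so that each entry fills one layer slot in the output list `y`. The sketch below isolates that step, with strings standing in for tensors. The helper name `store_backbone_outputs` and the `save` set are hypothetical, and the sketch assumes the backbone is the first module, so slot positions coincide with layer indices. The full function follows.

```python
def store_backbone_outputs(features, save, y, slots=5):
    """Spread a backbone's feature list over `slots` consecutive layer outputs.

    features: feature maps returned by the backbone (at most `slots` of them).
    save:     set of layer indices whose outputs later layers will read.
    y:        running list of per-layer outputs; extended in place.
    Returns the last feature map, which becomes the input to the next layer.
    """
    # Left-pad with None so the deepest feature always lands in the last slot.
    padded = [None] * (slots - len(features)) + list(features)
    for slot_idx, feature in enumerate(padded):
        y.append(feature if slot_idx in save else None)
    return padded[-1]


# Strings stand in for tensors; the names are placeholders.
features = ["P2", "P3", "P4", "P5"]
save = {2, 3, 4}  # hypothetical save list
y = []
x = store_backbone_outputs(features, save, y)

print(y)  # [None, None, 'P3', 'P4', 'P5']
print(x)  # P5
```

Because the backbone occupies five slots, later layers can refer to its feature maps by index in the same way they refer to ordinary layers.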

    def _predict_once(self, x, profile=False, visualize=False, embed=None):
        """
        Perform a forward pass through the network.

        Args:
            x (torch.Tensor): The input tensor to the model.
            profile (bool): Print the computation time of each layer if True, defaults to False.
            visualize (bool): Save the feature maps of the model if True, defaults to False.
            embed (list, optional): A list of feature vectors/embeddings to return.

        Returns:
            (torch.Tensor): The last output of the model.
        """
        y, dt, embeddings = [], [], []  # outputs
        for idx, m in enumerate(self.model):
            if m.f != -1:  # if not from previous layer
                x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
            if profile:
                self._profile_one_layer(m, x, dt)
            if hasattr(m, 'backbone'):
                # A backbone returns a list of feature maps; pad it to 5 slots
                x = m(x)
                for _ in range(5 - len(x)):
                    x.insert(0, None)
                for i_idx, i in enumerate(x):
                    if i_idx in self.save:
                        y.append(i)
                    else:
                        y.append(None)
                # print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x if x_ is not None])}')
                x = x[-1]
            else:
                x = m(x)  # run
                y.append(x if m.i in self.save else None)  # save output

            # if type(x) in {list, tuple}:
            #     if idx == (len(self.model) - 1):
            #         if type(x[1]) is dict:
            #             print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x[1]["one2one"]])}')
            #         else:
            #             print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x[1]])}')
            #     else:
            #         print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x if x_ is not None])}')
            # elif type(x) is dict:
            #     print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x["one2one"]])}')
            # else:
            #     if not hasattr(m, 'backbone'):
            #         print(f'layer id:{idx:>2} {m.type:>50} output shape:{x.size()}')

            if visualize:
                feature_visualization(x, m.type, m.i, save_dir=visualize)
            if embed and m.i in embed:
                embeddings.append(nn.functional.adaptive_avg_pool2d(x, (1, 1)).squeeze(-1).squeeze(-1))  # flatten
                if m.i == max(embed):
                    return torch.unbind(torch.cat(embeddings, 1), dim=0)
        return x

(3) Modify the parse_model function
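Two small calculations recur throughout `parse_model`: the depth gain applied to repeat counts and the width scaling applied to output channels. The sketch below reproduces both in isolation. The scale values (depth 0.33, width 0.25, max_channels 1024) are assumptions chosen for illustration, and `make_divisible` here is a local stand-in that rounds up to a multiple of the divisor, not the library function itself.

```python
import math


def make_divisible(x, divisor):
    """Round x up to the nearest multiple of divisor (local stand-in helper)."""
    return math.ceil(x / divisor) * divisor


def depth_gain(n, depth):
    """Scale a repeat count; counts of 1 are left unchanged."""
    return max(round(n * depth), 1) if n > 1 else n


def scaled_channels(c2, width, max_channels):
    """Scale an output channel count and snap it to a multiple of 8."""
    return make_divisible(min(c2, max_channels) * width, 8)


# Assumed scale values, for illustration only.
depth, width, max_channels = 0.33, 0.25, 1024

repeats = [depth_gain(n, depth) for n in (1, 3, 6)]
channels = [scaled_channels(c, width, max_channels) for c in (64, 128, 256, 512, 1024)]

print(repeats)   # [1, 1, 2]
print(channels)  # [16, 32, 64, 128, 256]
```

Backbone constructors such as `efficientformerv2_s0` skip this scaling: in the function below the module is instantiated directly and its `channel` attribute supplies the output channels.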

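You can paste the code below directly into the corresponding location. Later improvements in this series will not require further changes to this function; wherever a change is needed, it will be pointed out separately. Note that the function refers to many module names by identifier, so any name that is not defined or imported in your copy of tasks.py will raise a NameError and has to be removed or provided.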

    def parse_model(d, ch, verbose=True, warehouse_manager=None):  # model_dict, input_channels(3)
        """Parse a YOLO model.yaml dictionary into a PyTorch model."""
        import ast

        # Args
        max_channels = float("inf")
        nc, act, scales = (d.get(x) for x in ("nc", "activation", "scales"))
        depth, width, kpt_shape = (d.get(x, 1.0) for x in ("depth_multiple", "width_multiple", "kpt_shape"))
        if scales:
            scale = d.get("scale")
            if not scale:
                scale = tuple(scales.keys())[0]
                LOGGER.warning(f"WARNING ⚠️ no model scale passed. Assuming scale='{scale}'.")
            if len(scales[scale]) == 3:
                depth, width, max_channels = scales[scale]
            elif len(scales[scale]) == 4:
                depth, width, max_channels, threshold = scales[scale]

        if act:
            Conv.default_act = eval(act)  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
            if verbose:
                LOGGER.info(f"{colorstr('activation:')} {act}")  # print

        if verbose:
            LOGGER.info(f"\n{'':>3}{'from':>20}{'n':>3}{'params':>10} {'module':<60}{'arguments':<50}")
        ch = [ch]
        layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
        is_backbone = False
        for i, (f, n, m, args) in enumerate(d["backbone"] + d["head"]):  # from, number, module, args
            try:
                if m == 'node_mode':
                    m = d[m]
                    if len(args) > 0:
                        if args[0] == 'head_channel':
                            args[0] = int(d[args[0]])
                t = m
                m = getattr(torch.nn, m[3:]) if 'nn.' in m else globals()[m]  # get module
            except:
                pass
            for j, a in enumerate(args):
                if isinstance(a, str):
                    with contextlib.suppress(ValueError):
                        try:
                            args[j] = locals()[a] if a in locals() else ast.literal_eval(a)
                        except:
                            args[j] = a

            n = n_ = max(round(n * depth), 1) if n > 1 else n  # depth gain
            if m in {
                Classify, Conv, ConvTranspose, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, Focus, BottleneckCSP, C1, C2, C2f, ELAN1, AConv, SPPELAN, C2fAttn, C3, C3TR, C3Ghost, nn.Conv2d, nn.ConvTranspose2d, DWConvTranspose2d, C3x, RepC3, PSA, SCDown, C2fCIB, C2f_Faster, C2f_ODConv,
                C2f_Faster_EMA, C2f_DBB, GSConv, GSConvns, VoVGSCSP, VoVGSCSPns, VoVGSCSPC, C2f_CloAtt, C3_CloAtt, SCConv, C2f_SCConv, C3_SCConv, C2f_ScConv, C3_ScConv,
                C3_EMSC, C3_EMSCP, C2f_EMSC, C2f_EMSCP, RCSOSA, KWConv, C2f_KW, C3_KW, DySnakeConv, C2f_DySnakeConv, C3_DySnakeConv,
                DCNv2, C3_DCNv2, C2f_DCNv2, DCNV3_YOLO, C3_DCNv3, C2f_DCNv3, C3_Faster, C3_Faster_EMA, C3_ODConv,
                OREPA, OREPA_LargeConv, RepVGGBlock_OREPA, C3_OREPA, C2f_OREPA, C3_DBB, C3_REPVGGOREPA, C2f_REPVGGOREPA,
                C3_DCNv2_Dynamic, C2f_DCNv2_Dynamic, C3_ContextGuided, C2f_ContextGuided, C3_MSBlock, C2f_MSBlock,
                C3_DLKA, C2f_DLKA, CSPStage, SPDConv, RepBlock, C3_EMBC, C2f_EMBC, SPPF_LSKA, C3_DAttention, C2f_DAttention,
                C3_Parc, C2f_Parc, C3_DWR, C2f_DWR, RFAConv, RFCAConv, RFCBAMConv, C3_RFAConv, C2f_RFAConv,
                C3_RFCBAMConv, C2f_RFCBAMConv, C3_RFCAConv, C2f_RFCAConv, C3_FocusedLinearAttention, C2f_FocusedLinearAttention,
                C3_AKConv, C2f_AKConv, AKConv, C3_MLCA, C2f_MLCA,
                C3_UniRepLKNetBlock, C2f_UniRepLKNetBlock, C3_DRB, C2f_DRB, C3_DWR_DRB, C2f_DWR_DRB, CSP_EDLAN,
                C3_AggregatedAtt, C2f_AggregatedAtt, DCNV4_YOLO, C3_DCNv4, C2f_DCNv4, HWD, SEAM,
                C3_SWC, C2f_SWC, C3_iRMB, C2f_iRMB, C3_iRMB_Cascaded, C2f_iRMB_Cascaded, C3_iRMB_DRB, C2f_iRMB_DRB, C3_iRMB_SWC, C2f_iRMB_SWC,
                C3_VSS, C2f_VSS, C3_LVMB, C2f_LVMB, RepNCSPELAN4, DBBNCSPELAN4, OREPANCSPELAN4, DRBNCSPELAN4, ADown, V7DownSampling,
                C3_DynamicConv, C2f_DynamicConv, C3_GhostDynamicConv, C2f_GhostDynamicConv, C3_RVB, C2f_RVB, C3_RVB_SE, C2f_RVB_SE, C3_RVB_EMA, C2f_RVB_EMA, DGCST,
                C3_RetBlock, C2f_RetBlock, C3_PKIModule, C2f_PKIModule, RepNCSPELAN4_CAA, C3_FADC, C2f_FADC, C3_PPA, C2f_PPA, SRFD, DRFD, RGCSPELAN,
                C3_Faster_CGLU, C2f_Faster_CGLU, C3_Star, C2f_Star, C3_Star_CAA, C2f_Star_CAA, C3_KAN, C2f_KAN, C3_EIEM, C2f_EIEM, C3_DEConv, C2f_DEConv,
                C3_SMPCGLU, C2f_SMPCGLU, C3_Heat, C2f_Heat, CSP_PTB, SimpleStem, VisionClueMerge, VSSBlock_YOLO, XSSBlock, GLSA, C2f_WTConv, WTConv2d, FeaturePyramidSharedConv,
                C2f_FMB, LDConv, C2f_gConv, C2f_WDBB, C2f_DeepDBB, C2f_AdditiveBlock, C2f_AdditiveBlock_CGLU, CSP_MSCB, C2f_MSMHSA_CGLU, CSP_PMSFA, C2f_MogaBlock,
                C2f_SHSA, C2f_SHSA_CGLU, C2f_SMAFB, C2f_SMAFB_CGLU, C2f_IdentityFormer, C2f_RandomMixing, C2f_PoolingFormer, C2f_ConvFormer, C2f_CaFormer,
                C2f_IdentityFormerCGLU, C2f_RandomMixingCGLU, C2f_PoolingFormerCGLU, C2f_ConvFormerCGLU, C2f_CaFormerCGLU, CSP_MutilScaleEdgeInformationEnhance, C2f_FFCM,
                C2f_SFHF, CSP_FreqSpatial, C2f_MSM, C2f_RAB, C2f_HDRAB, C2f_LFE, CSP_MutilScaleEdgeInformationSelect, C2f_SFA, C2f_CTA, C2f_CAMixer, MANet,
                MANet_FasterBlock, MANet_FasterCGLU, MANet_Star, C2f_HFERB, C2f_DTAB, C2f_ETB, C2f_JDPM, C2f_AP, PSConv, C2f_Kat, C2f_Faster_KAN, C2f_Strip, C2f_StripCGLU
            }:
                if args[0] == 'head_channel':
                    args[0] = d[args[0]]
                c1, c2 = ch[f], args[0]
                if c2 != nc:  # if c2 not equal to number of classes (i.e. for Classify() output)
                    c2 = make_divisible(min(c2, max_channels) * width, 8)
                if m is C2fAttn:
                    args[1] = make_divisible(min(args[1], max_channels // 2) * width, 8)  # embed channels
                    args[2] = int(max(round(min(args[2], max_channels // 2 // 32)) * width, 1) if args[2] > 1 else args[2])  # num heads

                args = [c1, c2, *args[1:]]
                if m in (KWConv, C2f_KW, C3_KW):
                    args.insert(2, f'layer{i}')
                    args.insert(2, warehouse_manager)
                if m in (DySnakeConv,):
                    c2 = c2 * 3
                if m in (RepNCSPELAN4, DBBNCSPELAN4, OREPANCSPELAN4, DRBNCSPELAN4, RepNCSPELAN4_CAA):
                    args[2] = make_divisible(min(args[2], max_channels) * width, 8)
                    args[3] = make_divisible(min(args[3], max_channels) * width, 8)

                if m in {
                    BottleneckCSP, C1, C2, C2f, C2fAttn, C3, C3TR, C3Ghost, C3x, RepC3, C2fCIB, C2f_Faster, C2f_ODConv, C2f_Faster_EMA, C2f_DBB,
                    VoVGSCSP, VoVGSCSPns, VoVGSCSPC, C2f_CloAtt, C3_CloAtt, C2f_SCConv, C3_SCConv, C2f_ScConv, C3_ScConv,
                    C3_EMSC, C3_EMSCP, C2f_EMSC, C2f_EMSCP, RCSOSA, C2f_KW, C3_KW, C2f_DySnakeConv, C3_DySnakeConv,
                    C3_DCNv2, C2f_DCNv2, C3_DCNv3, C2f_DCNv3, C3_Faster, C3_Faster_EMA, C3_ODConv, C3_OREPA, C2f_OREPA, C3_DBB,
                    C3_REPVGGOREPA, C2f_REPVGGOREPA, C3_DCNv2_Dynamic, C2f_DCNv2_Dynamic, C3_ContextGuided, C2f_ContextGuided, C3_MSBlock, C2f_MSBlock, C3_DLKA, C2f_DLKA, CSPStage, RepBlock, C3_EMBC, C2f_EMBC, C3_DAttention, C2f_DAttention,
                    C3_Parc, C2f_Parc, C3_DWR, C2f_DWR, C3_RFAConv, C2f_RFAConv, C3_RFCBAMConv, C2f_RFCBAMConv, C3_RFCAConv, C2f_RFCAConv,
                    C3_FocusedLinearAttention, C2f_FocusedLinearAttention, C3_AKConv, C2f_AKConv, C3_MLCA, C2f_MLCA,
                    C3_UniRepLKNetBlock, C2f_UniRepLKNetBlock, C3_DRB, C2f_DRB, C3_DWR_DRB, C2f_DWR_DRB, CSP_EDLAN,
                    C3_AggregatedAtt, C2f_AggregatedAtt, C3_DCNv4, C2f_DCNv4, C3_SWC, C2f_SWC,
                    C3_iRMB, C2f_iRMB, C3_iRMB_Cascaded, C2f_iRMB_Cascaded, C3_iRMB_DRB, C2f_iRMB_DRB, C3_iRMB_SWC, C2f_iRMB_SWC,
                    C3_VSS, C2f_VSS, C3_LVMB, C2f_LVMB, C3_DynamicConv, C2f_DynamicConv, C3_GhostDynamicConv, C2f_GhostDynamicConv,
                    C3_RVB, C2f_RVB, C3_RVB_SE, C2f_RVB_SE, C3_RVB_EMA, C2f_RVB_EMA, C3_RetBlock, C2f_RetBlock, C3_PKIModule, C2f_PKIModule,
                    C3_FADC, C2f_FADC, C3_PPA, C2f_PPA, RGCSPELAN, C3_Faster_CGLU, C2f_Faster_CGLU, C3_Star, C2f_Star, C3_Star_CAA, C2f_Star_CAA,
                    C3_KAN, C2f_KAN, C3_EIEM, C2f_EIEM, C3_DEConv, C2f_DEConv, C3_SMPCGLU, C2f_SMPCGLU, C3_Heat, C2f_Heat, CSP_PTB, XSSBlock, C2f_WTConv,
                    C2f_FMB, C2f_gConv, C2f_WDBB, C2f_DeepDBB, C2f_AdditiveBlock, C2f_AdditiveBlock_CGLU, CSP_MSCB, C2f_MSMHSA_CGLU, CSP_PMSFA, C2f_MogaBlock,
                    C2f_SHSA, C2f_SHSA_CGLU, C2f_SMAFB, C2f_SMAFB_CGLU, C2f_IdentityFormer, C2f_RandomMixing, C2f_PoolingFormer, C2f_ConvFormer, C2f_CaFormer,
                    C2f_IdentityFormerCGLU, C2f_RandomMixingCGLU, C2f_PoolingFormerCGLU, C2f_ConvFormerCGLU, C2f_CaFormerCGLU, CSP_MutilScaleEdgeInformationEnhance, C2f_FFCM,
                    C2f_SFHF, CSP_FreqSpatial, C2f_MSM, C2f_RAB, C2f_HDRAB, C2f_LFE, CSP_MutilScaleEdgeInformationSelect, C2f_SFA, C2f_CTA, C2f_CAMixer, MANet,
                    MANet_FasterBlock, MANet_FasterCGLU, MANet_Star, C2f_HFERB, C2f_DTAB, C2f_ETB, C2f_JDPM, C2f_AP, C2f_Kat, C2f_Faster_KAN, C2f_Strip, C2f_StripCGLU
                }:
                    args.insert(2, n)  # number of repeats
                    n = 1
            elif m in {AIFI, AIFI_RepBN}:
                args = [ch[f], *args]
                c2 = args[0]
            elif m in (HGStem, HGBlock, Ghost_HGBlock, Rep_HGBlock, Dynamic_HGBlock, EIEStem):
                c1, cm, c2 = ch[f], args[0], args[1]
                if c2 != nc:  # if c2 not equal to number of classes (i.e. for Classify() output)
                    c2 = make_divisible(min(c2, max_channels) * width, 8)
                    cm = make_divisible(min(cm, max_channels) * width, 8)
                args = [c1, cm, c2, *args[2:]]
                if m in (HGBlock, Ghost_HGBlock, Rep_HGBlock, Dynamic_HGBlock):
                    args.insert(4, n)  # number of repeats
                    n = 1
            elif m is ResNetLayer:
                c2 = args[1] if args[3] else args[1] * 4
            elif m is nn.BatchNorm2d:
                args = [ch[f]]
            elif m is Concat:
                c2 = sum(ch[x] for x in f)
            elif m in ((WorldDetect, ImagePoolingAttn) + DETECT_CLASS + V10_DETECT_CLASS + SEGMENT_CLASS + POSE_CLASS + OBB_CLASS):
                args.append([ch[x] for x in f])
                if m in SEGMENT_CLASS:
                    args[2] = make_divisible(min(args[2], max_channels) * width, 8)
                if m in (Segment_LSCD, Segment_TADDH, Segment_LSCSBD, Segment_LSDECD, Segment_RSCD):
                    args[3] = make_divisible(min(args[3], max_channels) * width, 8)
                if m in (Detect_LSCD, Detect_TADDH, Detect_LSCSBD, Detect_LSDECD, Detect_RSCD, v10Detect_LSCD, v10Detect_TADDH, v10Detect_RSCD, v10Detect_LSDECD):
                    args[1] = make_divisible(min(args[1], max_channels) * width, 8)
                if m in (Pose_LSCD, Pose_TADDH, Pose_LSCSBD, Pose_LSDECD, Pose_RSCD, OBB_LSCD, OBB_TADDH, OBB_LSCSBD, OBB_LSDECD, OBB_RSCD):
                    args[2] = make_divisible(min(args[2], max_channels) * width, 8)
            elif m is RTDETRDecoder:  # special case, channels arg must be passed in index 1
                args.insert(1, [ch[x] for x in f])
            elif m is Fusion:
                args[0] = d[args[0]]
                c1, c2 = [ch[x] for x in f], (sum([ch[x] for x in f]) if args[0] == 'concat' else ch[f[0]])
                args = [c1, args[0]]
            elif m is CBLinear:
                c2 = make_divisible(min(args[0][-1], max_channels) * width, 8)
                c1 = ch[f]
                args = [c1, [make_divisible(min(c2_, max_channels) * width, 8) for c2_ in args[0]], *args[1:]]
            elif m is CBFuse:
                c2 = ch[f[-1]]
            elif isinstance(m, str):
                t = m
                if len(args) == 2:
                    m = timm.create_model(m, pretrained=args[0], pretrained_cfg_overlay={'file': args[1]}, features_only=True)
                elif len(args) == 1:
                    m = timm.create_model(m, pretrained=args[0], features_only=True)
                c2 = m.feature_info.channels()
            elif m in {
                convnextv2_atto, convnextv2_femto, convnextv2_pico, convnextv2_nano, convnextv2_tiny, convnextv2_base, convnextv2_large, convnextv2_huge,
                fasternet_t0, fasternet_t1, fasternet_t2, fasternet_s, fasternet_m, fasternet_l,
                EfficientViT_M0, EfficientViT_M1, EfficientViT_M2, EfficientViT_M3, EfficientViT_M4, EfficientViT_M5,
                efficientformerv2_s0, efficientformerv2_s1, efficientformerv2_s2, efficientformerv2_l,
                vanillanet_5, vanillanet_6, vanillanet_7, vanillanet_8, vanillanet_9, vanillanet_10, vanillanet_11, vanillanet_12, vanillanet_13, vanillanet_13_x1_5, vanillanet_13_x1_5_ada_pool,
                RevCol,
                lsknet_t, lsknet_s,
                SwinTransformer_Tiny,
                repvit_m0_9, repvit_m1_0, repvit_m1_1, repvit_m1_5, repvit_m2_3,
                CSWin_tiny, CSWin_small, CSWin_base, CSWin_large,
                unireplknet_a, unireplknet_f, unireplknet_p, unireplknet_n, unireplknet_t, unireplknet_s, unireplknet_b, unireplknet_l, unireplknet_xl,
                transnext_micro, transnext_tiny, transnext_small, transnext_base,
                RMT_T, RMT_S, RMT_B, RMT_L,
                PKINET_T, PKINET_S, PKINET_B,
                MobileNetV4ConvSmall, MobileNetV4ConvMedium, MobileNetV4ConvLarge, MobileNetV4HybridMedium, MobileNetV4HybridLarge,
                starnet_s050, starnet_s100, starnet_s150, starnet_s1, starnet_s2, starnet_s3, starnet_s4
            }:
                if m is RevCol:
                    args[1] = [make_divisible(min(k, max_channels) * width, 8) for k in args[1]]
                    args[2] = [max(round(k * depth), 1) for k in args[2]]
                m = m(*args)
                c2 = m.channel
            elif m in {
                EMA, SpatialAttention, BiLevelRoutingAttention, BiLevelRoutingAttention_nchw,
                TripletAttention, CoordAtt, CBAM, BAMBlock, LSKBlock, ScConv, LAWDS, EMSConv, EMSConvP,
                SEAttention, CPCA, Partial_conv3, FocalModulation, EfficientAttention, MPCA, deformable_LKA,
                EffectiveSEModule, LSKA, SegNext_Attention, DAttention, MLCA, TransNeXt_AggregatedAttention,
                FocusedLinearAttention, LocalWindowAttention, ChannelAttention_HSFPN, ELA_HSFPN, CA_HSFPN, CAA_HSFPN, DySample, CARAFE, CAA, ELA, CAFM, AFGCAttention, EUCB, ContrastDrivenFeatureAggregation, FSA
            }:
                c2 = ch[f]
                args = [c2, *args]
                # print(args)
            elif m in {SimAM, SpatialGroupEnhance}:
                c2 = ch[f]
            elif m is ContextGuidedBlock_Down:
                c2 = ch[f] * 2
                args = [ch[f], c2, *args]
            elif m is BiFusion:
                c1 = [ch[x] for x in f]
                c2 = make_divisible(min(args[0], max_channels) * width, 8)
                args = [c1, c2]
            # --------------GOLD-YOLO--------------
            elif m in {SimFusion_4in, AdvPoolFusion}:
                c2 = sum(ch[x] for x in f)
            elif m is SimFusion_3in:
                c2 = args[0]
                if c2 != nc:  # if c2 not equal to number of classes (i.e. for Classify() output)
                    c2 = make_divisible(min(c2, max_channels) * width, 8)
                args = [[ch[f_] for f_ in f], c2]
            elif m is IFM:
                c1 = ch[f]
                c2 = sum(args[0])
                args = [c1, *args]
            elif m is InjectionMultiSum_Auto_pool:
                c1 = ch[f[0]]
                c2 = args[0]
                args = [c1, *args]
            elif m is PyramidPoolAgg:
                c2 = args[0]
                args = [sum([ch[f_] for f_ in f]), *args]
            elif m is TopBasicLayer:
                c2 = sum(args[1])
            # --------------GOLD-YOLO--------------

            # --------------ASF--------------
            elif m is Zoom_cat:
                c2 = sum(ch[x] for x in f)
            elif m is Add:
                c2 = ch[f[-1]]
            elif m in {ScalSeq, DynamicScalSeq}:
                c1 = [ch[x] for x in f]
                c2 = make_divisible(args[0] * width, 8)
                args = [c1, c2]
            elif m is asf_attention_model:
                args = [ch[f[-1]]]
            # --------------ASF--------------

            elif m is SDI:
                args = [[ch[x] for x in f]]
            elif m is Multiply:
                c2 = ch[f[0]]
            elif m is FocusFeature:
                c1 = [ch[x] for x in f]
                c2 = int(c1[1] * 0.5 * 3)
                args = [c1, *args]
            elif m is DASI:
                c1 = [ch[x] for x in f]
                args = [c1, c2]
            elif m is CSMHSA:
                c1 = [ch[x] for x in f]
                c2 = ch[f[-1]]
                args = [c1, c2]
            elif m is CFC_CRB:
                c1 = ch[f]
                c2 = c1 // 2
                args = [c1, *args]
            elif m is SFC_G2:
                c1 = [ch[x] for x in f]
                c2 = c1[0]
                args = [c1]
            elif m in {CGAFusion, CAFMFusion, SDFM, PSFM}:
                c2 = ch[f[1]]
                args = [c2, *args]
            elif m in {ContextGuideFusionModule}:
                c1 = [ch[x] for x in f]
                c2 = 2 * c1[1]
                args = [c1]
            # elif m in {PSA}:
            #     c2 = ch[f]
            #     args = [c2, *args]
            elif m in {SBA}:
                c1 = [ch[x] for x in f]
                c2 = c1[-1]
                args = [c1, c2]
            elif m in {WaveletPool}:
                c2 = ch[f] * 4
            elif m in {WaveletUnPool}:
                c2 = ch[f] // 4
            elif m in {CSPOmniKernel}:
                c2 = ch[f]
                args = [c2]
            elif m in {ChannelTransformer, PyramidContextExtraction}:
                c1 = [ch[x] for x in f]
                c2 = c1
                args = [c1]
            elif m in 
{RCM}:c2 = ch[f]args = [c2, *args]elif m in {DynamicInterpolationFusion}:c2 = ch[f[0]]args = [[ch[x] for x in f]]elif m in {FuseBlockMulti}:c2 = ch[f[0]]args = [c2]elif m in {CrossLayerChannelAttention, CrossLayerSpatialAttention}:c2 = [ch[x] for x in f]args = [c2[0], *args]elif m in {FreqFusion}:c2 = ch[f[0]]args = [[ch[x] for x in f], *args]elif m in {DynamicAlignFusion}:c2 = args[0]args = [[ch[x] for x in f], c2]elif m in {ConvEdgeFusion}:c2 = make_divisible(min(args[0], max_channels) * width, 8)args = [[ch[x] for x in f], c2]elif m in {MutilScaleEdgeInfoGenetator}:c1 = ch[f]c2 = [make_divisible(min(i, max_channels) * width, 8) for i in args[0]]args = [c1, c2]elif m in {MultiScaleGatedAttn}:c1 = [ch[x] for x in f]c2 = min(c1)args = [c1]elif m in {WFU, MultiScalePCA, MultiScalePCA_Down}:c1 = [ch[x] for x in f]c2 = c1[0]args = [c1]elif m in {GetIndexOutput}:c2 = ch[f][args[0]]elif m is HyperComputeModule:c1, c2 = ch[f], args[0]c2 = make_divisible(min(c2, max_channels) * width, 8)args = [c1, c2, threshold]else:c2 = ch[f]if isinstance(c2, list) and m not in {ChannelTransformer, PyramidContextExtraction, CrossLayerChannelAttention, CrossLayerSpatialAttention, MutilScaleEdgeInfoGenetator}:is_backbone = Truem_ = mm_.backbone = Trueelse:m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args) # modulet = str(m)[8:-2].replace('__main__.', '') # module typem.np = sum(x.numel() for x in m_.parameters()) # number paramsm_.i, m_.f, m_.type = i + 4 if is_backbone else i, f, t # attach index, 'from' index, typeif verbose:LOGGER.info(f"{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f} {t:<60}{str(args):<50}") # printsave.extend(x % (i + 4 if is_backbone else i) for x in ([f] if isinstance(f, int) else f) if x != -1) # append to savelistlayers.append(m_)if i == 0:ch = []if isinstance(c2, list) and m not in {ChannelTransformer, PyramidContextExtraction, CrossLayerChannelAttention, CrossLayerSpatialAttention, MutilScaleEdgeInfoGenetator}:ch.extend(c2)for _ in range(5 - 
len(ch)):ch.insert(0, 0)else:ch.append(c2)return nn.Sequential(*layers), sorted(save)第③步:yolov8.yaml文件修改

Create yolov8-EfficientFormerV2.yaml in the folder shown below:

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# 0-P1/2
# 1-P2/4
# 2-P3/8
# 3-P4/16
# 4-P5/32

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, efficientformerv2_s0, []] # 4
  - [-1, 1, SPPF, [1024, 5]] # 5

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 6
  - [[-1, 3], 1, Concat, [1]] # 7 cat backbone P4
  - [-1, 3, C2f, [512]] # 8

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 9
  - [[-1, 2], 1, Concat, [1]] # 10 cat backbone P3
  - [-1, 3, C2f, [256]] # 11 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]] # 12
  - [[-1, 8], 1, Concat, [1]] # 13 cat head P4
  - [-1, 3, C2f, [512]] # 14 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]] # 15
  - [[-1, 5], 1, Concat, [1]] # 16 cat head P5
  - [-1, 3, C2f, [1024]] # 17 (P5/32-large)

  - [[11, 14, 17], 1, Detect, [nc]] # Detect(P3, P4, P5)
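The layer indices in this YAML only make sense once you see how `parse_model` treats a backbone that returns a list of feature maps: the channel list `ch` is left-padded with zeros to five entries, so indices 0 to 4 line up with P1 to P5 and the SPPF layer becomes index 5. The following is a minimal standalone sketch of that bookkeeping, not the repository's code. The helper names are hypothetical, the four stage widths used for EfficientFormerV2-S0 are an assumption for illustration, and `make_divisible` is re-implemented here as a simple round-up to a multiple of 8.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor (sketch of the width-scaling helper)."""
    return math.ceil(x / divisor) * divisor


def pad_backbone_channels(feature_channels, slots=5):
    """Left-pad a backbone's channel list with zeros until it has `slots` entries."""
    ch = list(feature_channels)
    for _ in range(slots - len(ch)):
        ch.insert(0, 0)
    return ch


# Assumed (illustrative) channel widths of the four EfficientFormerV2-S0 stages.
s0_channels = [32, 48, 96, 176]

# After the backbone layer: index 0 is a placeholder for P1/2,
# indices 1..4 hold P2/4, P3/8, P4/16 and P5/32.
ch = pad_backbone_channels(s0_channels)

# Scale 'n' from the YAML: width = 0.25, max_channels = 1024.
width, max_channels = 0.25, 1024

# Layer 5: SPPF with a nominal 1024 output channels, scaled by width.
sppf_out = make_divisible(min(1024, max_channels) * width, 8)
ch.append(sppf_out)

# Layer 6: nn.Upsample keeps the channel count of its input.
ch.append(ch[-1])

# Layer 7: Concat of [-1, 3], i.e. the upsampled SPPF output plus backbone P4.
concat_p4 = ch[-1] + ch[3]

print(ch)         # channel list after layers 0..6
print(concat_p4)  # input channels seen by the first head C2f
```

Because the backbone occupies five slots while being a single YAML entry, every `from` index in the head (3 for P4, 2 for P3, 5 for SPPF) refers to positions in this padded list rather than to YAML row numbers.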

Step ④: Verify that the backbone was added successfully

In train.py, change the model configuration file to yolov8-EfficientFormerV2.yaml, then run the script.
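A train.py along these lines could be used for the check. This is only a sketch under stated assumptions: the YAML path, the `coco128.yaml` dataset name, and the helper names `build_train_kwargs` and `train` are all hypothetical, and the `ultralytics` import is deferred into the function so the file can be loaded even where the modified package is not installed.

```python
from pathlib import Path

# Hypothetical location of the config created in Step ③; adjust to your checkout.
CFG = Path("ultralytics/cfg/models/v8/yolov8-EfficientFormerV2.yaml")


def build_train_kwargs(data="coco128.yaml", epochs=100, imgsz=640, batch=16):
    """Collect the training arguments in one place (values are placeholders)."""
    return {"data": data, "epochs": epochs, "imgsz": imgsz, "batch": batch}


def train(cfg_path=CFG, **overrides):
    """Build the model from the YAML and start training."""
    # Imported lazily: this needs the modified ultralytics package from this tutorial,
    # with EfficientFormerV2 registered in parse_model.
    from ultralytics import YOLO

    model = YOLO(str(cfg_path))  # building the model runs parse_model on the YAML
    return model.train(**build_train_kwargs(**overrides))


# A one-epoch run is enough to confirm the model builds and trains:
# train(epochs=1)
```

If the integration worked, the layer table that `parse_model` logs when the model is built should list `efficientformerv2_s0` as the first entry, followed by SPPF and the head layers.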

🏋Not every seed will bloom, but sowing one beats a barren wilderness a hundredfold🏋
