深度学习项目--基于DenseNet网络的“乳腺癌图像识别”,准确率90%+,pytorch复现

- 🍨 本文为🔗365天深度学习训练营 中的学习记录博客

- 🍖 原作者:K同学啊

前言

- 如果说最经典的神经网络,

ResNet肯定是一个,从ResNet发布后,很多人做了修改,denseNet网络无疑是最成功的一个,它采用密集型连接,将通道数连接在一起; - 本文是基于上一篇复现DenseNet121模型,做一个乳腺癌图像识别,效果还行,准确率0.9+;

- CNN经典网络之“DenseNet”简介,源码研究与复现(pytorch): https://blog.csdn.net/weixin_74085818/article/details/146102290?spm=1001.2014.3001.5501

- 欢迎收藏 + 关注,本人将会持续更新

文章目录

- 1、导入数据

- 1、导入库

- 2、查看数据信息和导入数据

- 3、展示数据

- 4、数据导入

- 5、数据划分

- 6、动态加载数据

- 2、构建DenseNet121网络

- 3、模型训练

- 1、构建训练集

- 2、构建测试集

- 3、设置超参数

- 4、模型训练

- 5、结果可视化

- 6、模型评估

1、导入数据

1、导入库

import torch

import torch.nn as nn

import torchvision

import numpy as np

import os, PIL, pathlib

from collections import OrderedDict

import re

from torch.hub import load_state_dict_from_url# 设置设备

device = "cuda" if torch.cuda.is_available() else "cpu"device

'cuda'

2、查看数据信息和导入数据

data_dir = "./data/"data_dir = pathlib.Path(data_dir)# 类别数量

classnames = [str(path).split("\\")[0] for path in os.listdir(data_dir)]classnames

['0', '1']

3、展示数据

import matplotlib.pylab as plt

from PIL import Image # 获取文件名称

data_path_name = "./data/0/" # 不患病的

data_path_list = [f for f in os.listdir(data_path_name) if f.endswith(('jpg', 'png'))]# 创建画板

fig, axes = plt.subplots(2, 8, figsize=(16, 6))for ax, img_file in zip(axes.flat, data_path_list):path_name = os.path.join(data_path_name, img_file)img = Image.open(path_name) # 打开# 显示ax.imshow(img)ax.axis('off')plt.show()

4、数据导入

from torchvision import transforms, datasets # 数据统一格式

img_height = 224

img_width = 224 data_tranforms = transforms.Compose([transforms.Resize([img_height, img_width]),transforms.ToTensor(),transforms.Normalize( # 归一化mean=[0.485, 0.456, 0.406],std=[0.229, 0.224, 0.225] )

])# 加载所有数据

total_data = datasets.ImageFolder(root="./data/", transform=data_tranforms)

5、数据划分

# 大小 8 : 2

train_size = int(len(total_data) * 0.8)

test_size = len(total_data) - train_size train_data, test_data = torch.utils.data.random_split(total_data, [train_size, test_size])

6、动态加载数据

batch_size = 64train_dl = torch.utils.data.DataLoader(train_data,batch_size=batch_size,shuffle=True

)test_dl = torch.utils.data.DataLoader(test_data,batch_size=batch_size,shuffle=False

)

# 查看数据维度

for data, labels in train_dl:print("data shape[N, C, H, W]: ", data.shape)print("labels: ", labels)break

data shape[N, C, H, W]: torch.Size([64, 3, 224, 224])

labels: tensor([1, 1, 0, 0, 1, 1, 0, 0, 0, 1, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1,1, 0, 1, 1, 0, 0, 1, 1, 1, 0, 0, 0, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 0, 0,1, 0, 0, 1, 1, 1, 1, 1, 0, 0, 0, 1, 1, 0, 0, 1])

2、构建DenseNet121网络

import torch.nn.functional as F# 实现DenseBlock中的部件:DenseLayer

'''

1、BN + ReLU: 处理部分,首先进行归一化,然后在用激活函数ReLU

2、Bottlenck Layer:称为瓶颈层,这个层在yolov5中常用,但是yolov5中主要用于特征提取+维度降维,这里采用1 * 1卷积核 + 3 * 3的卷积核进行卷积操作,目的:减少输入输入特征维度

3、BN + ReLU:对 瓶颈层 数据进行归一化,ReLU激活函数,归一化可以确保梯度下降的时候较为平稳

4、3 * 3 生成新的特征图

'''

class _DenseLayer(nn.Sequential):def __init__(self, num_input_features, growth_rate, bn_size, drop_rate):''' num_input_features: 输入特征数,也就是通道数,在DenseNet中,每一层都会接受之前层的输出作为输入,故,这个数值通常会随着网络深度增加而增加growth_rate: 增长率,这个是 DenseNet的核心概念,决定了每一层为全局状态贡献的特征数量,他的用处主要在于决定了中间瓶颈层的输出通道,需要结合代码去研究bn_size: 瓶颈层中输出通道大小,含义:在使用1 * 1卷积核去提取特征数时,目标通道需要扩展到growth_rate的多少倍倍数, bn_size * growth_rate(输出维度)drop_rate: 使用Dropout的参数'''super(_DenseLayer, self).__init__()self.add_module("norm1", nn.BatchNorm2d(num_input_features))self.add_module("relu1", nn.ReLU(inplace=True))# 输出维度: bn_size * growth_rate, 1 * 1卷积核,步伐为1,只起到特征提取作用self.add_module("conv1", nn.Conv2d(num_input_features, bn_size * growth_rate, stride=1, kernel_size=1, bias=False))self.add_module("norm2", nn.BatchNorm2d(bn_size * growth_rate))self.add_module("relu2", nn.ReLU(inplace=True))# 输出通道:growth_rate, 维度计算:不变self.add_module("conv2", nn.Conv2d(bn_size * growth_rate, growth_rate, stride=1, kernel_size=3, padding=1, bias=False))self.drop_rate = drop_ratedef forward(self, x):new_features = super(_DenseLayer, self).forward(x) # 传播if self.drop_rate > 0:new_features = F.dropout(new_features, p=self.drop_rate, training=self.training) # self.training 继承nn.Sequential,是否训练模式# 模型融合,即,特征通道融合,形成新的特征图return torch.cat([x, new_features], dim=1) # (N, C, H, W) # 即 C1 + C2,通道上融合'''

DenseNet网络核心由DenseBlock模块组成,DenseBlock网络由DenseLayer组成,从 DenseLayer 可以看出,DenseBlock是密集连接,每一层的输入不仅包含前一层的输出,还包含网络中所有之前层的输出

'''

# 构建DenseBlock模块, 通过上图

class _DenseBlock(nn.Sequential):# num_layers 几层DenseLayer模块def __init__(self, num_layers, num_input_features, bn_size, growth_rate, drop_rate):super(_DenseBlock, self).__init__()for i in range(num_layers):layer = _DenseLayer(num_input_features + i * growth_rate, growth_rate, bn_size, drop_rate)self.add_module("denselayer%d" % (i + 1), layer)# Transition层,用于维度压缩# 组成:一个卷积层 + 一个池化层

class _Transition(nn.Sequential):def __init__(self, num_init_features, num_out_features):super(_Transition, self).__init__()self.add_module("norm", nn.BatchNorm2d(num_init_features))self.add_module("relu", nn.ReLU(inplace=True))self.add_module("conv", nn.Conv2d(num_init_features, num_out_features, kernel_size=1, stride=1, bias=False))# 降维self.add_module("pool", nn.AvgPool2d(2, stride=2))# 搭建DenseNet网络

class DenseNet(nn.Module):def __init__(self, growth_rate=32, block_config=(6, 12, 24, 16), num_init_features=64, bn_size=4, compression_rate=0.5, drop_rate=0.5, num_classes=1000):''' growth_rate、num_init_features、num_init_features、drop_rate 和denselayer一样block_config : 参数在 DenseNet 架构中用于指定每个 Dense Block 中包含的层数, 如:DenseNet-121: block_config=(6, 12, 24, 16) 表示第一个 Dense Block 包含 6 层,第二个包含 12 层,第三个包含 24 层,第四个包含 16 层。DenseNet-169: block_config=(6, 12, 32, 32)DenseNet-201: block_config=(6, 12, 48, 32)DenseNet-264: block_config=(6, 12, 64, 48)compression_rate: 压缩维度, DenseNet 中用于 Transition Layer(过渡层)的一个重要参数,它控制了从一个 Dense Block 到下一个 Dense Block 之间特征维度的压缩程度'''super(DenseNet, self).__init__()# 第一层卷积# OrderedDict,让模型层有序排列self.features = nn.Sequential(OrderedDict([# 输出维度:((w - k + 2 * p) / s) + 1("conv0", nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False)),("norm0", nn.BatchNorm2d(num_init_features)),("relu0", nn.ReLU(inplace=True)),("pool0", nn.MaxPool2d(3, stride=2, padding=1)) # 降维]))# 搭建DenseBlock层num_features = num_init_features# num_layers: 层数for i, num_layers in enumerate(block_config):block = _DenseBlock(num_layers, num_features, bn_size, growth_rate, drop_rate)# nn.Module 中features封装了nn.Sequentialself.features.add_module("denseblock%d" % (i + 1), block)''' # 这个计算反映了 DenseNet 中的一个关键特性:每一层输出的特征图(即新增加的通道数)由 growth_rate 决定,# 并且这些新生成的特征图会被传递给该 Dense Block 中的所有后续层以及下一个 Dense Block。'''num_features += num_layers * growth_rate # 叠加,每一次叠加# 判断是否需要使用Transition层if i != len(block_config) - 1:transition = _Transition(num_features, int(num_features*compression_rate)) # compression_rate 作用self.features.add_module("transition%d" % (i + 1), transition)num_features = int(num_features*compression_rate) # 更新维度# 最后一层self.features.add_module("norm5", nn.BatchNorm2d(num_features))self.features.add_module("relu5", nn.ReLU(inplace=True))# 分类层 self.classifier = nn.Linear(num_features, num_classes)# params initialization for m in self.modules(): if isinstance(m, nn.Conv2d): '''如果当前模块是一个二维卷积层 (nn.Conv2d),那么它的权重 (m.weight) 将通过 Kaiming 正态分布 (kaiming_normal_) 进行初始化。这种初始化方式特别适合与ReLU激活函数一起使用,有助于缓解深度网络中的梯度消失问题,促进有效的训练。 ''' nn.init.kaiming_normal_(m.weight) elif isinstance(m, nn.BatchNorm2d): ''' 对于二维批归一化层 (nn.BatchNorm2d),偏置项 (m.bias) 被初始化为0,而尺度因子 (m.weight) 被初始化为1。这意味着在没有数据经过的情况下,批归一化层不会对输入进行额外的缩放或偏移,保持输入不变。''' nn.init.constant_(m.bias, 0) nn.init.constant_(m.weight, 1) elif isinstance(m, nn.Linear): ''' 对于全连接层 (nn.Linear),只对其偏置项 (m.bias) 进行了初始化,设置为0''' nn.init.constant_(m.bias, 0)def forward(self, x):features = self.features(x)out = F.avg_pool2d(features, 7, stride=1).view(x.size(0), -1)out = self.classifier(out)return outmodel = DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 12, 16))model.to(device)

DenseNet((features): Sequential((conv0): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)(norm0): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu0): ReLU(inplace=True)(pool0): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)(denseblock1): _DenseBlock((denselayer1): _DenseLayer((norm1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(64, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer2): _DenseLayer((norm1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(96, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer3): _DenseLayer((norm1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer4): _DenseLayer((norm1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(160, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer5): _DenseLayer((norm1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(192, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer6): _DenseLayer((norm1): BatchNorm2d(224, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(224, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)))(transition1): _Transition((norm): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(pool): AvgPool2d(kernel_size=2, stride=2, padding=0))(denseblock2): _DenseBlock((denselayer1): _DenseLayer((norm1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer2): _DenseLayer((norm1): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(160, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer3): _DenseLayer((norm1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(192, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer4): _DenseLayer((norm1): BatchNorm2d(224, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(224, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer5): _DenseLayer((norm1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer6): _DenseLayer((norm1): BatchNorm2d(288, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(288, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer7): _DenseLayer((norm1): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(320, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer8): _DenseLayer((norm1): BatchNorm2d(352, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(352, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer9): _DenseLayer((norm1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(384, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer10): _DenseLayer((norm1): BatchNorm2d(416, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(416, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer11): _DenseLayer((norm1): BatchNorm2d(448, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(448, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer12): _DenseLayer((norm1): BatchNorm2d(480, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(480, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)))(transition2): _Transition((norm): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(pool): AvgPool2d(kernel_size=2, stride=2, padding=0))(denseblock3): _DenseBlock((denselayer1): _DenseLayer((norm1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer2): _DenseLayer((norm1): BatchNorm2d(288, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(288, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer3): _DenseLayer((norm1): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(320, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer4): _DenseLayer((norm1): BatchNorm2d(352, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(352, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer5): _DenseLayer((norm1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(384, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer6): _DenseLayer((norm1): BatchNorm2d(416, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(416, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer7): _DenseLayer((norm1): BatchNorm2d(448, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(448, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer8): _DenseLayer((norm1): BatchNorm2d(480, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(480, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer9): _DenseLayer((norm1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(512, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer10): _DenseLayer((norm1): BatchNorm2d(544, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(544, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer11): _DenseLayer((norm1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(576, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer12): _DenseLayer((norm1): BatchNorm2d(608, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(608, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)))(transition3): _Transition((norm): BatchNorm2d(640, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv): Conv2d(640, 320, kernel_size=(1, 1), stride=(1, 1), bias=False)(pool): AvgPool2d(kernel_size=2, stride=2, padding=0))(denseblock4): _DenseBlock((denselayer1): _DenseLayer((norm1): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(320, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer2): _DenseLayer((norm1): BatchNorm2d(352, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(352, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer3): _DenseLayer((norm1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(384, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer4): _DenseLayer((norm1): BatchNorm2d(416, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(416, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer5): _DenseLayer((norm1): BatchNorm2d(448, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(448, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer6): _DenseLayer((norm1): BatchNorm2d(480, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(480, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer7): _DenseLayer((norm1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(512, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer8): _DenseLayer((norm1): BatchNorm2d(544, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(544, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer9): _DenseLayer((norm1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(576, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer10): _DenseLayer((norm1): BatchNorm2d(608, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(608, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer11): _DenseLayer((norm1): BatchNorm2d(640, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(640, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer12): _DenseLayer((norm1): BatchNorm2d(672, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(672, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer13): _DenseLayer((norm1): BatchNorm2d(704, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(704, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer14): _DenseLayer((norm1): BatchNorm2d(736, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(736, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer15): _DenseLayer((norm1): BatchNorm2d(768, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(768, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False))(denselayer16): _DenseLayer((norm1): BatchNorm2d(800, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu1): ReLU(inplace=True)(conv1): Conv2d(800, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)(norm2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu2): ReLU(inplace=True)(conv2): Conv2d(128, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)))(norm5): BatchNorm2d(832, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu5): ReLU(inplace=True))(classifier): Linear(in_features=832, out_features=1000, bias=True)

)

3、模型训练

1、构建训练集

def train(dataloader, model, loss_fn, optimizer):size = len(dataloader.dataset)batch_size = len(dataloader)train_acc, train_loss = 0, 0 for X, y in dataloader:X, y = X.to(device), y.to(device)# 训练pred = model(X)loss = loss_fn(pred, y)# 梯度下降法optimizer.zero_grad()loss.backward()optimizer.step()# 记录train_loss += loss.item()train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()train_acc /= sizetrain_loss /= batch_sizereturn train_acc, train_loss

2、构建测试集

def test(dataloader, model, loss_fn):size = len(dataloader.dataset)batch_size = len(dataloader)test_acc, test_loss = 0, 0 with torch.no_grad():for X, y in dataloader:X, y = X.to(device), y.to(device)pred = model(X)loss = loss_fn(pred, y)test_loss += loss.item()test_acc += (pred.argmax(1) == y).type(torch.float).sum().item()test_acc /= sizetest_loss /= batch_sizereturn test_acc, test_loss

3、设置超参数

loss_fn = nn.CrossEntropyLoss() # 损失函数

learn_lr = 1e-4 # 超参数

optimizer = torch.optim.Adam(model.parameters(), lr=learn_lr) # 优化器

4、模型训练

通过实验发现,还是设置20轮次附件最好

import copytrain_acc = []

train_loss = []

test_acc = []

test_loss = []epoches = 20best_acc = 0 # 设置一个最佳准确率,作为最佳模型的判别指标for i in range(epoches):model.train()epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, optimizer)model.eval()epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)# 保存最佳模型到 best_model if epoch_test_acc > best_acc: best_acc = epoch_test_acc best_model = copy.deepcopy(model)train_acc.append(epoch_train_acc)train_loss.append(epoch_train_loss)test_acc.append(epoch_test_acc)test_loss.append(epoch_test_loss)# 获取当前的学习率 lr = optimizer.state_dict()['param_groups'][0]['lr']# 输出template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}')print(template.format(i + 1, epoch_train_acc*100, epoch_train_loss, epoch_test_acc*100, epoch_test_loss))PATH = './best_model.pth' # 保存的参数文件名

torch.save(best_model.state_dict(), PATH)print("Done")

Epoch: 1, Train_acc:79.3%, Train_loss:1.948, Test_acc:84.6%, Test_loss:1.079

Epoch: 2, Train_acc:85.3%, Train_loss:0.395, Test_acc:85.2%, Test_loss:0.721

Epoch: 3, Train_acc:87.3%, Train_loss:0.318, Test_acc:86.5%, Test_loss:0.526

Epoch: 4, Train_acc:89.0%, Train_loss:0.277, Test_acc:86.6%, Test_loss:0.494

Epoch: 5, Train_acc:89.0%, Train_loss:0.266, Test_acc:87.9%, Test_loss:0.400

Epoch: 6, Train_acc:89.6%, Train_loss:0.252, Test_acc:84.6%, Test_loss:0.524

Epoch: 7, Train_acc:90.3%, Train_loss:0.239, Test_acc:85.5%, Test_loss:0.445

Epoch: 8, Train_acc:90.2%, Train_loss:0.235, Test_acc:87.6%, Test_loss:0.359

Epoch: 9, Train_acc:90.0%, Train_loss:0.235, Test_acc:89.3%, Test_loss:0.298

Epoch:10, Train_acc:91.0%, Train_loss:0.220, Test_acc:89.5%, Test_loss:0.307

Epoch:11, Train_acc:90.8%, Train_loss:0.222, Test_acc:88.3%, Test_loss:0.316

Epoch:12, Train_acc:91.4%, Train_loss:0.210, Test_acc:83.3%, Test_loss:0.516

Epoch:13, Train_acc:91.5%, Train_loss:0.208, Test_acc:91.3%, Test_loss:0.247

Epoch:14, Train_acc:91.5%, Train_loss:0.206, Test_acc:90.1%, Test_loss:0.269

Epoch:15, Train_acc:92.0%, Train_loss:0.199, Test_acc:91.1%, Test_loss:0.242

Epoch:16, Train_acc:92.1%, Train_loss:0.194, Test_acc:89.4%, Test_loss:0.285

Epoch:17, Train_acc:92.4%, Train_loss:0.193, Test_acc:91.0%, Test_loss:0.229

Epoch:18, Train_acc:92.4%, Train_loss:0.188, Test_acc:88.0%, Test_loss:0.317

Epoch:19, Train_acc:92.7%, Train_loss:0.182, Test_acc:89.2%, Test_loss:0.285

Epoch:20, Train_acc:92.6%, Train_loss:0.182, Test_acc:78.5%, Test_loss:0.728

Done

5、结果可视化

import matplotlib.pyplot as plt

#隐藏警告

import warnings

warnings.filterwarnings("ignore") #忽略警告信息epochs_range = range(epoches)plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)plt.plot(epochs_range, train_acc, label='Training Accuracy')

plt.plot(epochs_range, test_acc, label='Test Accuracy')

plt.legend(loc='lower right')

plt.title('Training Accuracy')plt.subplot(1, 2, 2)

plt.plot(epochs_range, train_loss, label='Training Loss')

plt.plot(epochs_range, test_loss, label='Test Loss')

plt.legend(loc='upper right')

plt.title('Training= Loss')

plt.show()

在20轮测试集准确率变化比较大,从跑的几次实验来看,这次是偶然事件,测试集损失率后面一直稳定在0.3附件,测试准确率一直在0.8、0.89、0.90附件徘徊

6、模型评估

# 将参数加载到model当中

best_model.load_state_dict(torch.load(PATH, map_location=device))

epoch_test_acc, epoch_test_loss = test(test_dl, best_model, loss_fn)print(epoch_test_acc, epoch_test_loss)

0.9134651249533756 0.24670581874393283

相关文章:

深度学习项目--基于DenseNet网络的“乳腺癌图像识别”,准确率90%+,pytorch复现

🍨 本文为🔗365天深度学习训练营 中的学习记录博客🍖 原作者:K同学啊 前言 如果说最经典的神经网络,ResNet肯定是一个,从ResNet发布后,很多人做了修改,denseNet网络无疑是最成功的…...

级联树SELECTTREE格式调整

步骤: 1、将全部列表设置成Map<Long, List<Obejct>> map的格式,方便查看每个父级对应的子列表,减少循环次数 2、对这个map进行递归,重新进行级联树的集合调整,将子集放置在对应的childs里面。 public Dyna…...

解决std::iterator对rapidjson编译事项)

编译RTTR 0.9.6 (CMake + vs2019)解决std::iterator对rapidjson编译事项

RTTR编译 使用CMake和VS2019 x64编译RTTR 0.9.6指南一、下载RTTR 0.9.6并配置CMake二、在VS2019上编译RTTR 0.9.6解决rapidjson与C17兼容性问题 三、安装RTTR四、最简单的还是用vcpkg 使用CMake和VS2019 x64编译RTTR 0.9.6指南 本文将指导您完成从下载RTTR 0.9.6到使用CMake生…...

深入理解JavaScript构造函数与原型链:从原理到最佳实践

一、开篇:为什么需要理解原型链? 在JavaScript开发中,90%以上的"诡异"bug都与原型链机制相关。理解构造函数与原型链的运行原理,不仅能帮助我们写出更优雅的代码,还能在框架源码阅读、性能优化等场景中游刃…...

【Linux 指北】常用 Linux 指令汇总

第一章、常用基本指令 # 注意: # #表示管理员 # $表示普通用户 [rootlocalhost Practice]# 说明此处表示管理员01. ls 指令 语法: ls [选项][目录或文件] 功能:对于目录,该命令列出该目录下的所有子目录与文件。对于文件…...

第27周JavaSpringboot 前后端联调

电商前后端联调课程笔记 一、项目启动与环境搭建 1.1 项目启动 在学习电商项目的前后端联调之前,需要先掌握如何启动项目。项目启动是整个开发流程的基础,只有成功启动项目,才能进行后续的开发与调试工作。 1.1.1 环境安装 环境安装是项…...

QT中的布局管理

在 Qt 中,布局管理器(如 QHBoxLayout 和 QVBoxLayout)的构造函数可以接受一个 QWidget* 参数,用于指定该布局的父控件。如果指定了父控件,布局会自动将其管理的控件添加到父控件中。 在你的代码中,QHBoxLa…...

.net 6.0 webapi支持 xml返回xml json返回json

// 添加控制器并配置格式化器 var builder WebApplication.CreateBuilder(); builder.Services.AddControllers(options > {options.Filters.Add<ContentTypeFilter>();options.ReturnHttpNotAcceptable true; // 强制要求Accept头匹配// 添加 XML 格式化器options.…...

docker 搭建alpine下nginx1.26/mysql8.0/php7.4环境

docker 搭建alpine下nginx1.26/mysql8.0/php7.4环境 docker-compose.yml services:mysql-8.0:container_name: mysql-8.0image: mysql:8.0restart: always#ports:#- "3306:3306"volumes:- ./etc/mysql/conf.d/mysql.cnf:/etc/mysql/conf.d/mysql.cnf:ro- ./var/log…...

Android7上移植I2C-tools

一,下载源码 cd hardware/libhardware/tests git clone https://git.kernel.org/pub/scm/utils/i2c-tools/i2c-tools.git 二, 在 i2c-tools 目录添加 Android.mk 编译文件 LOCAL_PATH: $(call my-dir)################### i2c-tools ###############…...

Centos 7 修改语言和输入源为中文+修改终端快捷键复制为Ctrl+C、粘贴为Ctrl+V

目录 修改语言和输入源为中文 1、设置 2、Region & Language(区域和语言) 3、Add an Input Source(添加输入源) 4、修改语言为中文 5、Restart(重启) 6、Log Out (注销) …...

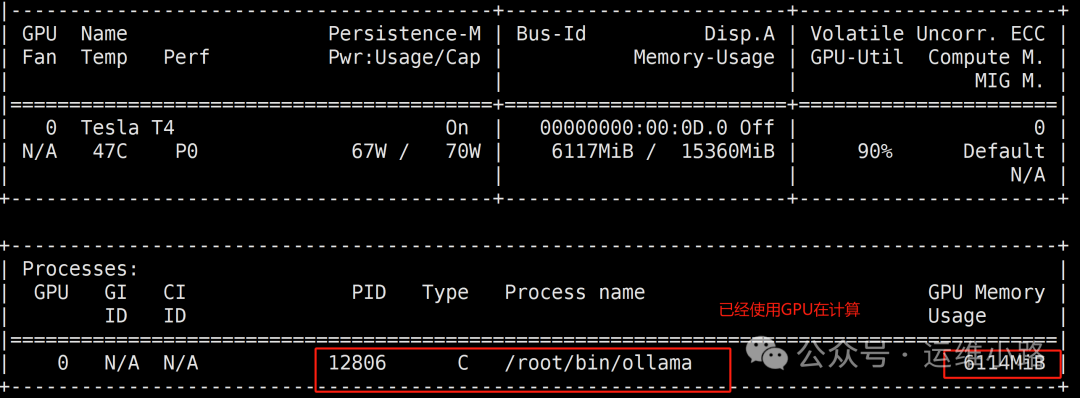

DeepSeek-进阶版部署(Linux+GPU)

前面几个小节讲解的Win和Linux部署DeepSeek的比较简单的方法,而且采用的模型也是最小的,作为测试体验使用是没问题的。如果要在生产环境使用还是需要用到GPU来实现,下面我将以有一台带上GPU显卡的Linux机器来部署DeepSeek。这里还只是先体验单…...

疯狂安卓入门,crayandroid

系列文章目录 文章目录 系列文章目录第一组 ViewGroup 为基类帧布局约束布局 第二组 TextView 及其子类button时钟 AnalogClock 和 TextClock计时器 第三组 ImageView 及其子类第四组 AdapterView 及其子类AutoCompleteTextView 的功能和用法ExapndaleListViewAdapterViewFlipp…...

批量测试IP和域名联通性

最近需要测试IP和域名的联通性,因数量很多,单个ping占用时间较长。考虑使用Python和Bat解决。考虑到依托的环境,Bat可以在Windows直接运行。所以直接Bat处理。 方法1 echo off for /f %%i in (E:\封禁IP\ipall.txt) do (ping %%i -n 1 &…...

Python——计算机网络

一.ip 1.ip的定义 IP是“Internet Protocol”的缩写,即“互联网协议”。它是用于计算机网络通信的基础协议之一,属于TCP/IP协议族中的网络层协议。IP协议的主要功能是负责将数据包从源主机传输到目标主机,并确保数据能够在复杂的网络环境中正…...

一招解决Pytorch GPU版本安装慢的问题

Pytorch是一个流行的深度学习框架,广泛应用于计算机视觉、自然语言处理等领域。安装Pytorch GPU版本可以充分利用GPU的并行计算能力,加速模型的训练和推理过程。接下来,我们将详细介绍如何在Windows操作系统上安装Pytorch GPU版本。 查看是否…...

股票交易所官方api接口有哪些?获取和使用需要满足什么条件

炒股自动化:申请官方API接口,散户也可以 python炒股自动化(0),申请券商API接口 python炒股自动化(1),量化交易接口区别 Python炒股自动化(2):获取…...

MoonSharp 文档五

目录 13.Coroutines(协程) Lua中的协程 从CLR代码中的协程 从CLR代码中的协程作为CLR迭代器 注意事项 抢占式协程 14.Hardwire descriptors(硬编码描述符) 为什么需要“硬编码” 什么是“硬编码” 如何进行硬编码 硬编…...

框架源码私享笔记(02)Mybatis核心框架原理 | 一条SQL透析核心组件功能特性

最近在思考一个问题:如何能够更好的分享主流框架源码学习笔记(主要是源码部分)?让有缘刷到的同学既可以有所收获,还能保持对相关技术架构探讨学习热情和兴趣。以及自己也保持较高的分享热情和动力。 今天尝试用一个SQL查询作为引…...

如何重置 MySQL root 用户的登录密码?

重置 MySQL root 密码的核心步骤是绕过权限验证登录数据库并更新密码字段。以下是具体方法: 方法一:通过 --SKIP-GRANT-TABLES 模式修改密码 停止 MySQL 服务 Windows:在命令行执行 net stop mysql(服务名可能为 mysql80 或 mysql…...

ArrayList底层结构和源码分析笔记

参考视频:韩顺平Java集合 ArrayList特点 ArrayList 可以加入 null,包括多个。 ArrayList 是由数组来实现数据存储的 ArrayList 基本等同于 Vector,除了 ArrayList 是线程不安全(执行效率高)。在多线程情况下…...

Centos离线安装gcc

文章目录 Centos离线安装gcc1. gcc是什么?2. gcc下载地址3. gcc的安装4. 安装结果验证 Centos离线安装gcc 1. gcc是什么? GCC(GNU Compiler Collection)是 GNU 项目下的开源编译器套件,主要用于将 C、C 等编程语言的源…...

flutter 图片资源路径管理

1. 创建统一资源管理类 创建一个单独的 Dart 文件(比如 manager.dart),将所有图片路径集中管理。这样在引用图片时,不需要每次都手动输入完整路径,只需通过常量引用即可。 //manager.dartclass Manager { static co…...

[内网渗透] 红日靶场2

环境配置 靶场地址: http://vulnstack.qiyuanxuetang.net/vuln/wiki/ 环境配置可以看这个: https://www.bilibili.com/video/BV1De4y1a7Ps/?spm_id_from333.337.search-card.all.click&vd_sourcecf73ac8de9b7c0322b1bccf77de91c5dNAT模式分配111段, DHCP也要更改 再添加…...

【cocos creator】游戏优化,内存,性能,包体积大小,加载,drawcall优化

参考: https://blog.csdn.net/qq_47012987/article/details/140169024 内存泄露排查 使用chrome测试cocos creator内存泄漏问题手游内存优化cocos creator优化Creator资源自动释放逻辑:所有 cc.Asset 实例都拥有成员函数 addRef 和 decRef,分…...

MySQL 企业版 TDE加密后 测试和问题汇总

一、测试keyring file 1.1 当keyring file文件丢失或者被篡改 结论:不影响当前正在运行的数据库,但是在重启服务后会启动失败出现报错。 tail -n 100 /var/log/mysql/error.log 报错信息如下: 2025-03-12T08:04:54.668847Z 1 [ERROR] [M…...

Unity 封装一个依赖于MonoBehaviour的计时器(下) 链式调用

[Unity] 封装一个依赖于MonoBehaviour的计时器(上)-CSDN博客 目录 1.加入等待间隔时间"永远执行方法 2.修改为支持链式调用 实现链式调用 管理"链式"调度顺序 3.测试 即时方法编辑 "永久"方法 链式调用 4.总结 1.加入等待间隔时间&qu…...

petalinux环境下给linux-xlnx源码打补丁

在调试88e1512芯片时官方驱动无法满足我的应用方式,因此修改了marvell.c源码,但是在做bsp包重新创建新工程时发现之前的修改没有生效,因此查找了一下资料发现可以通过打补丁的方式添加到工程文件中,便于管理。 操作步骤 一、获取…...

套接字缓冲区以及Net_device

基础网络模型图 一般网络设计分为三层架构和五层设计: 一、三层架构 用户空间的应用层 位于最上层,是用户直接使用的网络应用程序,如浏览器、邮件客户端、即时通讯软件等。这些程序通过系统调用(如 socket 接口)向内核…...

2024下半年真题 系统架构设计师 案例分析

案例一 软件架构 关于人工智能系统的需求分析,给出十几个需求。 a.系统发生业务故障时,3秒内启动 XXX,属于可靠性 b.系统中的数据进行导出,要求在3秒内完成,属于可用性 c.质量属性描述,XXX,属…...