llama.cpp LLM_CHAT_TEMPLATE_DEEPSEEK_3

- 1. `LLAMA_VOCAB_PRE_TYPE_DEEPSEEK3_LLM`

- 2. `static const std::map<std::string, llm_chat_template> LLM_CHAT_TEMPLATES`

- 3. `LLM_CHAT_TEMPLATE_DEEPSEEK_3`

- References

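It is unwise to hype Chinese large language models while disparaging American ones.

Water is the main chemical component of the human body, making up about 50% to 70% of body weight; the "water content" (hype) of large language models is unlikely to be much lower.

Technological progress depends on real capability, not on sentiment and slogans.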

llama.cpp

https://github.com/ggerganov/llama.cpp

1. LLAMA_VOCAB_PRE_TYPE_DEEPSEEK3_LLM

`LLAMA_VOCAB_PRE_TYPE_DEEPSEEK3_LLM`, `LLAMA_VOCAB_PRE_TYPE_DEEPSEEK_LLM` and `LLAMA_VOCAB_PRE_TYPE_DEEPSEEK_CODER`

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/include/llama.h

enum llama_vocab_type {
    LLAMA_VOCAB_TYPE_NONE = 0, // For models without vocab
    LLAMA_VOCAB_TYPE_SPM  = 1, // LLaMA tokenizer based on byte-level BPE with byte fallback
    LLAMA_VOCAB_TYPE_BPE  = 2, // GPT-2 tokenizer based on byte-level BPE
    LLAMA_VOCAB_TYPE_WPM  = 3, // BERT tokenizer based on WordPiece
    LLAMA_VOCAB_TYPE_UGM  = 4, // T5 tokenizer based on Unigram
    LLAMA_VOCAB_TYPE_RWKV = 5, // RWKV tokenizer based on greedy tokenization
};

// pre-tokenization types
enum llama_vocab_pre_type {
    LLAMA_VOCAB_PRE_TYPE_DEFAULT        = 0,
    LLAMA_VOCAB_PRE_TYPE_LLAMA3         = 1,
    LLAMA_VOCAB_PRE_TYPE_DEEPSEEK_LLM   = 2,
    LLAMA_VOCAB_PRE_TYPE_DEEPSEEK_CODER = 3,
    LLAMA_VOCAB_PRE_TYPE_FALCON         = 4,
    LLAMA_VOCAB_PRE_TYPE_MPT            = 5,
    LLAMA_VOCAB_PRE_TYPE_STARCODER      = 6,
    LLAMA_VOCAB_PRE_TYPE_GPT2           = 7,
    LLAMA_VOCAB_PRE_TYPE_REFACT         = 8,
    LLAMA_VOCAB_PRE_TYPE_COMMAND_R      = 9,
    LLAMA_VOCAB_PRE_TYPE_STABLELM2      = 10,
    LLAMA_VOCAB_PRE_TYPE_QWEN2          = 11,
    LLAMA_VOCAB_PRE_TYPE_OLMO           = 12,
    LLAMA_VOCAB_PRE_TYPE_DBRX           = 13,
    LLAMA_VOCAB_PRE_TYPE_SMAUG          = 14,
    LLAMA_VOCAB_PRE_TYPE_PORO           = 15,
    LLAMA_VOCAB_PRE_TYPE_CHATGLM3       = 16,
    LLAMA_VOCAB_PRE_TYPE_CHATGLM4       = 17,
    LLAMA_VOCAB_PRE_TYPE_VIKING         = 18,
    LLAMA_VOCAB_PRE_TYPE_JAIS           = 19,
    LLAMA_VOCAB_PRE_TYPE_TEKKEN         = 20,
    LLAMA_VOCAB_PRE_TYPE_SMOLLM         = 21,
    LLAMA_VOCAB_PRE_TYPE_CODESHELL      = 22,
    LLAMA_VOCAB_PRE_TYPE_BLOOM          = 23,
    LLAMA_VOCAB_PRE_TYPE_GPT3_FINNISH   = 24,
    LLAMA_VOCAB_PRE_TYPE_EXAONE         = 25,
    LLAMA_VOCAB_PRE_TYPE_CHAMELEON      = 26,
    LLAMA_VOCAB_PRE_TYPE_MINERVA        = 27,
    LLAMA_VOCAB_PRE_TYPE_DEEPSEEK3_LLM  = 28,
};

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-hparams.h

// bump if necessary
#define LLAMA_MAX_LAYERS  512
#define LLAMA_MAX_EXPERTS 256 // DeepSeekV3

enum llama_expert_gating_func_type {
    LLAMA_EXPERT_GATING_FUNC_TYPE_NONE    = 0,
    LLAMA_EXPERT_GATING_FUNC_TYPE_SOFTMAX = 1,
    LLAMA_EXPERT_GATING_FUNC_TYPE_SIGMOID = 2,
};

2. static const std::map<std::string, llm_chat_template> LLM_CHAT_TEMPLATES

`LLM_CHAT_TEMPLATE_DEEPSEEK_3`, `LLM_CHAT_TEMPLATE_DEEPSEEK_2` and `LLM_CHAT_TEMPLATE_DEEPSEEK`

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-chat.h

enum llm_chat_template {
    LLM_CHAT_TEMPLATE_CHATML,
    LLM_CHAT_TEMPLATE_LLAMA_2,
    LLM_CHAT_TEMPLATE_LLAMA_2_SYS,
    LLM_CHAT_TEMPLATE_LLAMA_2_SYS_BOS,
    LLM_CHAT_TEMPLATE_LLAMA_2_SYS_STRIP,
    LLM_CHAT_TEMPLATE_MISTRAL_V1,
    LLM_CHAT_TEMPLATE_MISTRAL_V3,
    LLM_CHAT_TEMPLATE_MISTRAL_V3_TEKKEN,
    LLM_CHAT_TEMPLATE_MISTRAL_V7,
    LLM_CHAT_TEMPLATE_PHI_3,
    LLM_CHAT_TEMPLATE_PHI_4,
    LLM_CHAT_TEMPLATE_FALCON_3,
    LLM_CHAT_TEMPLATE_ZEPHYR,
    LLM_CHAT_TEMPLATE_MONARCH,
    LLM_CHAT_TEMPLATE_GEMMA,
    LLM_CHAT_TEMPLATE_ORION,
    LLM_CHAT_TEMPLATE_OPENCHAT,
    LLM_CHAT_TEMPLATE_VICUNA,
    LLM_CHAT_TEMPLATE_VICUNA_ORCA,
    LLM_CHAT_TEMPLATE_DEEPSEEK,
    LLM_CHAT_TEMPLATE_DEEPSEEK_2,
    LLM_CHAT_TEMPLATE_DEEPSEEK_3,
    LLM_CHAT_TEMPLATE_COMMAND_R,
    LLM_CHAT_TEMPLATE_LLAMA_3,
    LLM_CHAT_TEMPLATE_CHATGML_3,
    LLM_CHAT_TEMPLATE_CHATGML_4,
    LLM_CHAT_TEMPLATE_MINICPM,
    LLM_CHAT_TEMPLATE_EXAONE_3,
    LLM_CHAT_TEMPLATE_RWKV_WORLD,
    LLM_CHAT_TEMPLATE_GRANITE,
    LLM_CHAT_TEMPLATE_GIGACHAT,
    LLM_CHAT_TEMPLATE_MEGREZ,
    LLM_CHAT_TEMPLATE_UNKNOWN,
};

`{ "deepseek3", LLM_CHAT_TEMPLATE_DEEPSEEK_3 }`, `{ "deepseek2", LLM_CHAT_TEMPLATE_DEEPSEEK_2 }` and `{ "deepseek", LLM_CHAT_TEMPLATE_DEEPSEEK }`

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-chat.cpp

static const std::map<std::string, llm_chat_template> LLM_CHAT_TEMPLATES = {
    { "chatml",            LLM_CHAT_TEMPLATE_CHATML            },
    { "llama2",            LLM_CHAT_TEMPLATE_LLAMA_2           },
    { "llama2-sys",        LLM_CHAT_TEMPLATE_LLAMA_2_SYS       },
    { "llama2-sys-bos",    LLM_CHAT_TEMPLATE_LLAMA_2_SYS_BOS   },
    { "llama2-sys-strip",  LLM_CHAT_TEMPLATE_LLAMA_2_SYS_STRIP },
    { "mistral-v1",        LLM_CHAT_TEMPLATE_MISTRAL_V1        },
    { "mistral-v3",        LLM_CHAT_TEMPLATE_MISTRAL_V3        },
    { "mistral-v3-tekken", LLM_CHAT_TEMPLATE_MISTRAL_V3_TEKKEN },
    { "mistral-v7",        LLM_CHAT_TEMPLATE_MISTRAL_V7        },
    { "phi3",              LLM_CHAT_TEMPLATE_PHI_3             },
    { "phi4",              LLM_CHAT_TEMPLATE_PHI_4             },
    { "falcon3",           LLM_CHAT_TEMPLATE_FALCON_3          },
    { "zephyr",            LLM_CHAT_TEMPLATE_ZEPHYR            },
    { "monarch",           LLM_CHAT_TEMPLATE_MONARCH           },
    { "gemma",             LLM_CHAT_TEMPLATE_GEMMA             },
    { "orion",             LLM_CHAT_TEMPLATE_ORION             },
    { "openchat",          LLM_CHAT_TEMPLATE_OPENCHAT          },
    { "vicuna",            LLM_CHAT_TEMPLATE_VICUNA            },
    { "vicuna-orca",       LLM_CHAT_TEMPLATE_VICUNA_ORCA       },
    { "deepseek",          LLM_CHAT_TEMPLATE_DEEPSEEK          },
    { "deepseek2",         LLM_CHAT_TEMPLATE_DEEPSEEK_2        },
    { "deepseek3",         LLM_CHAT_TEMPLATE_DEEPSEEK_3        },
    { "command-r",         LLM_CHAT_TEMPLATE_COMMAND_R         },
    { "llama3",            LLM_CHAT_TEMPLATE_LLAMA_3           },
    { "chatglm3",          LLM_CHAT_TEMPLATE_CHATGML_3         },
    { "chatglm4",          LLM_CHAT_TEMPLATE_CHATGML_4         },
    { "minicpm",           LLM_CHAT_TEMPLATE_MINICPM           },
    { "exaone3",           LLM_CHAT_TEMPLATE_EXAONE_3          },
    { "rwkv-world",        LLM_CHAT_TEMPLATE_RWKV_WORLD        },
    { "granite",           LLM_CHAT_TEMPLATE_GRANITE           },
    { "gigachat",          LLM_CHAT_TEMPLATE_GIGACHAT          },
    { "megrez",            LLM_CHAT_TEMPLATE_MEGREZ            },
};
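The map above ties a short template name such as "deepseek3" to its enum value. The sketch below shows how such a lookup could behave. It is a trimmed-down illustration, not the llama.cpp code: the `demo_*` names are hypothetical, only the DeepSeek entries are copied, and using `std::map::at` is an assumption (suggested by the `std::out_of_range` handler in `llm_chat_detect_template` later in this post, but the body of `llm_chat_template_from_str` is not shown here).

```cpp
#include <map>
#include <stdexcept>
#include <string>

// Hypothetical, trimmed-down stand-in for llm_chat_template (DeepSeek entries only).
enum demo_chat_template {
    DEMO_CHAT_TEMPLATE_DEEPSEEK,
    DEMO_CHAT_TEMPLATE_DEEPSEEK_2,
    DEMO_CHAT_TEMPLATE_DEEPSEEK_3,
    DEMO_CHAT_TEMPLATE_UNKNOWN,
};

// Same name-to-enum shape as LLM_CHAT_TEMPLATES, reduced to three entries.
static const std::map<std::string, demo_chat_template> DEMO_CHAT_TEMPLATES = {
    { "deepseek",  DEMO_CHAT_TEMPLATE_DEEPSEEK   },
    { "deepseek2", DEMO_CHAT_TEMPLATE_DEEPSEEK_2 },
    { "deepseek3", DEMO_CHAT_TEMPLATE_DEEPSEEK_3 },
};

// Assumption: the lookup uses std::map::at, which throws std::out_of_range
// when the key is missing.
demo_chat_template demo_template_from_str(const std::string & name) {
    return DEMO_CHAT_TEMPLATES.at(name);
}

// Hypothetical convenience wrapper: unknown names map to DEMO_CHAT_TEMPLATE_UNKNOWN
// instead of propagating the exception.
demo_chat_template demo_template_or_unknown(const std::string & name) {
    try {
        return demo_template_from_str(name);
    } catch (const std::out_of_range &) {
        return DEMO_CHAT_TEMPLATE_UNKNOWN;
    }
}
```

With this sketch, `demo_template_or_unknown("deepseek3")` yields `DEMO_CHAT_TEMPLATE_DEEPSEEK_3`, while a name that is not a key, such as "deepseek-v3", falls through to `DEMO_CHAT_TEMPLATE_UNKNOWN`.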

3. LLM_CHAT_TEMPLATE_DEEPSEEK_3

`LLM_CHAT_TEMPLATE_DEEPSEEK_3`, `LLM_CHAT_TEMPLATE_DEEPSEEK_2` and `LLM_CHAT_TEMPLATE_DEEPSEEK`

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-chat.cpp

// Simple version of "llama_apply_chat_template" that only works with strings
// This function uses heuristic checks to determine commonly used template. It is not a jinja parser.
int32_t llm_chat_apply_template(
    llm_chat_template tmpl,
    const std::vector<const llama_chat_message *> & chat,
    std::string & dest, bool add_ass) {
    // Taken from the research: https://github.com/ggerganov/llama.cpp/issues/5527
    std::stringstream ss;
    if (tmpl == LLM_CHAT_TEMPLATE_CHATML) {
        // chatml template
        for (auto message : chat) {
            ss << "<|im_start|>" << message->role << "\n" << message->content << "<|im_end|>\n";
        }
        if (add_ass) {
            ss << "<|im_start|>assistant\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V7) {
        // Official mistral 'v7' template
        // See: https://huggingface.co/mistralai/Mistral-Large-Instruct-2411#basic-instruct-template-v7
        for (auto message : chat) {
            std::string role(message->role);
            std::string content(message->content);
            if (role == "system") {
                ss << "[SYSTEM_PROMPT] " << content << "[/SYSTEM_PROMPT]";
            } else if (role == "user") {
                ss << "[INST] " << content << "[/INST]";
            } else {
                ss << " " << content << "</s>";
            }
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V1
            || tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V3
            || tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V3_TEKKEN) {
        // See: https://github.com/mistralai/cookbook/blob/main/concept-deep-dive/tokenization/chat_templates.md
        // See: https://github.com/mistralai/cookbook/blob/main/concept-deep-dive/tokenization/templates.md
        std::string leading_space = tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V1 ? " " : "";
        std::string trailing_space = tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V3_TEKKEN ? "" : " ";
        bool trim_assistant_message = tmpl == LLM_CHAT_TEMPLATE_MISTRAL_V3;
        bool is_inside_turn = false;
        for (auto message : chat) {
            if (!is_inside_turn) {
                ss << leading_space << "[INST]" << trailing_space;
                is_inside_turn = true;
            }
            std::string role(message->role);
            std::string content(message->content);
            if (role == "system") {
                ss << content << "\n\n";
            } else if (role == "user") {
                ss << content << leading_space << "[/INST]";
            } else {
                ss << trailing_space << (trim_assistant_message ? trim(content) : content) << "</s>";
                is_inside_turn = false;
            }
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_LLAMA_2
            || tmpl == LLM_CHAT_TEMPLATE_LLAMA_2_SYS
            || tmpl == LLM_CHAT_TEMPLATE_LLAMA_2_SYS_BOS
            || tmpl == LLM_CHAT_TEMPLATE_LLAMA_2_SYS_STRIP) {
        // llama2 template and its variants
        // [variant] support system message
        // See: https://huggingface.co/blog/llama2#how-to-prompt-llama-2
        bool support_system_message = tmpl != LLM_CHAT_TEMPLATE_LLAMA_2;
        // [variant] add BOS inside history
        bool add_bos_inside_history = tmpl == LLM_CHAT_TEMPLATE_LLAMA_2_SYS_BOS;
        // [variant] trim spaces from the input message
        bool strip_message = tmpl == LLM_CHAT_TEMPLATE_LLAMA_2_SYS_STRIP;
        // construct the prompt
        bool is_inside_turn = true; // skip BOS at the beginning
        ss << "[INST] ";
        for (auto message : chat) {
            std::string content = strip_message ? trim(message->content) : message->content;
            std::string role(message->role);
            if (!is_inside_turn) {
                is_inside_turn = true;
                ss << (add_bos_inside_history ? "<s>[INST] " : "[INST] ");
            }
            if (role == "system") {
                if (support_system_message) {
                    ss << "<<SYS>>\n" << content << "\n<</SYS>>\n\n";
                } else {
                    // if the model does not support system message, we still include it in the first message, but without <<SYS>>
                    ss << content << "\n";
                }
            } else if (role == "user") {
                ss << content << " [/INST]";
            } else {
                ss << content << "</s>";
                is_inside_turn = false;
            }
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_PHI_3) {
        // Phi 3
        for (auto message : chat) {
            std::string role(message->role);
            ss << "<|" << role << "|>\n" << message->content << "<|end|>\n";
        }
        if (add_ass) {
            ss << "<|assistant|>\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_PHI_4) {
        // chatml template
        for (auto message : chat) {
            ss << "<|im_start|>" << message->role << "<|im_sep|>" << message->content << "<|im_end|>";
        }
        if (add_ass) {
            ss << "<|im_start|>assistant<|im_sep|>";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_FALCON_3) {
        // Falcon 3
        for (auto message : chat) {
            std::string role(message->role);
            ss << "<|" << role << "|>\n" << message->content << "\n";
        }
        if (add_ass) {
            ss << "<|assistant|>\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_ZEPHYR) {
        // zephyr template
        for (auto message : chat) {
            ss << "<|" << message->role << "|>" << "\n" << message->content << "<|endoftext|>\n";
        }
        if (add_ass) {
            ss << "<|assistant|>\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_MONARCH) {
        // mlabonne/AlphaMonarch-7B template (the <s> is included inside history)
        for (auto message : chat) {
            std::string bos = (message == chat.front()) ? "" : "<s>"; // skip BOS for first message
            ss << bos << message->role << "\n" << message->content << "</s>\n";
        }
        if (add_ass) {
            ss << "<s>assistant\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_GEMMA) {
        // google/gemma-7b-it
        std::string system_prompt = "";
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                // there is no system message for gemma, but we will merge it with user prompt, so nothing is broken
                system_prompt = trim(message->content);
                continue;
            }
            // in gemma, "assistant" is "model"
            role = role == "assistant" ? "model" : message->role;
            ss << "<start_of_turn>" << role << "\n";
            if (!system_prompt.empty() && role != "model") {
                ss << system_prompt << "\n\n";
                system_prompt = "";
            }
            ss << trim(message->content) << "<end_of_turn>\n";
        }
        if (add_ass) {
            ss << "<start_of_turn>model\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_ORION) {
        // OrionStarAI/Orion-14B-Chat
        std::string system_prompt = "";
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                // there is no system message support, we will merge it with user prompt
                system_prompt = message->content;
                continue;
            } else if (role == "user") {
                ss << "Human: ";
                if (!system_prompt.empty()) {
                    ss << system_prompt << "\n\n";
                    system_prompt = "";
                }
                ss << message->content << "\n\nAssistant: </s>";
            } else {
                ss << message->content << "</s>";
            }
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_OPENCHAT) {
        // openchat/openchat-3.5-0106,
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                ss << message->content << "<|end_of_turn|>";
            } else {
                role[0] = toupper(role[0]);
                ss << "GPT4 Correct " << role << ": " << message->content << "<|end_of_turn|>";
            }
        }
        if (add_ass) {
            ss << "GPT4 Correct Assistant:";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_VICUNA || tmpl == LLM_CHAT_TEMPLATE_VICUNA_ORCA) {
        // eachadea/vicuna-13b-1.1 (and Orca variant)
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                // Orca-Vicuna variant uses a system prefix
                if (tmpl == LLM_CHAT_TEMPLATE_VICUNA_ORCA) {
                    ss << "SYSTEM: " << message->content << "\n";
                } else {
                    ss << message->content << "\n\n";
                }
            } else if (role == "user") {
                ss << "USER: " << message->content << "\n";
            } else if (role == "assistant") {
                ss << "ASSISTANT: " << message->content << "</s>\n";
            }
        }
        if (add_ass) {
            ss << "ASSISTANT:";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_DEEPSEEK) {
        // deepseek-ai/deepseek-coder-33b-instruct
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                ss << message->content;
            } else if (role == "user") {
                ss << "### Instruction:\n" << message->content << "\n";
            } else if (role == "assistant") {
                ss << "### Response:\n" << message->content << "\n<|EOT|>\n";
            }
        }
        if (add_ass) {
            ss << "### Response:\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_COMMAND_R) {
        // CohereForAI/c4ai-command-r-plus
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                ss << "<|START_OF_TURN_TOKEN|><|SYSTEM_TOKEN|>" << trim(message->content) << "<|END_OF_TURN_TOKEN|>";
            } else if (role == "user") {
                ss << "<|START_OF_TURN_TOKEN|><|USER_TOKEN|>" << trim(message->content) << "<|END_OF_TURN_TOKEN|>";
            } else if (role == "assistant") {
                ss << "<|START_OF_TURN_TOKEN|><|CHATBOT_TOKEN|>" << trim(message->content) << "<|END_OF_TURN_TOKEN|>";
            }
        }
        if (add_ass) {
            ss << "<|START_OF_TURN_TOKEN|><|CHATBOT_TOKEN|>";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_LLAMA_3) {
        // Llama 3
        for (auto message : chat) {
            std::string role(message->role);
            ss << "<|start_header_id|>" << role << "<|end_header_id|>\n\n" << trim(message->content) << "<|eot_id|>";
        }
        if (add_ass) {
            ss << "<|start_header_id|>assistant<|end_header_id|>\n\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_CHATGML_3) {
        // chatglm3-6b
        ss << "[gMASK]" << "sop";
        for (auto message : chat) {
            std::string role(message->role);
            ss << "<|" << role << "|>" << "\n " << message->content;
        }
        if (add_ass) {
            ss << "<|assistant|>";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_CHATGML_4) {
        ss << "[gMASK]" << "<sop>";
        for (auto message : chat) {
            std::string role(message->role);
            ss << "<|" << role << "|>" << "\n" << message->content;
        }
        if (add_ass) {
            ss << "<|assistant|>";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_MINICPM) {
        // MiniCPM-3B-OpenHermes-2.5-v2-GGUF
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "user") {
                ss << LU8("<用户>");
                ss << trim(message->content);
                ss << "<AI>";
            } else {
                ss << trim(message->content);
            }
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_DEEPSEEK_2) {
        // DeepSeek-V2
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                ss << message->content << "\n\n";
            } else if (role == "user") {
                ss << "User: " << message->content << "\n\n";
            } else if (role == "assistant") {
                ss << "Assistant: " << message->content << LU8("<|end▁of▁sentence|>");
            }
        }
        if (add_ass) {
            ss << "Assistant:";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_DEEPSEEK_3) {
        // DeepSeek-V3
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                ss << message->content << "\n\n";
            } else if (role == "user") {
                ss << LU8("<|User|>") << message->content;
            } else if (role == "assistant") {
                ss << LU8("<|Assistant|>") << message->content << LU8("<|end▁of▁sentence|>");
            }
        }
        if (add_ass) {
            ss << LU8("<|Assistant|>");
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_EXAONE_3) {
        // ref: https://huggingface.co/LGAI-EXAONE/EXAONE-3.0-7.8B-Instruct/discussions/8#66bae61b1893d14ee8ed85bb
        // EXAONE-3.0-7.8B-Instruct
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "system") {
                ss << "[|system|]" << trim(message->content) << "[|endofturn|]\n";
            } else if (role == "user") {
                ss << "[|user|]" << trim(message->content) << "\n";
            } else if (role == "assistant") {
                ss << "[|assistant|]" << trim(message->content) << "[|endofturn|]\n";
            }
        }
        if (add_ass) {
            ss << "[|assistant|]";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_RWKV_WORLD) {
        // this template requires the model to have "\n\n" as EOT token
        for (auto message : chat) {
            std::string role(message->role);
            if (role == "user") {
                ss << "User: " << message->content << "\n\nAssistant:";
            } else {
                ss << message->content << "\n\n";
            }
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_GRANITE) {
        // IBM Granite template
        for (const auto & message : chat) {
            std::string role(message->role);
            ss << "<|start_of_role|>" << role << "<|end_of_role|>";
            if (role == "assistant_tool_call") {
                ss << "<|tool_call|>";
            }
            ss << message->content << "<|end_of_text|>\n";
        }
        if (add_ass) {
            ss << "<|start_of_role|>assistant<|end_of_role|>\n";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_GIGACHAT) {
        // GigaChat template
        bool has_system = !chat.empty() && std::string(chat[0]->role) == "system";
        // Handle system message if present
        if (has_system) {
            ss << "<s>" << chat[0]->content << "<|message_sep|>";
        } else {
            ss << "<s>";
        }
        // Process remaining messages
        for (size_t i = has_system ? 1 : 0; i < chat.size(); i++) {
            std::string role(chat[i]->role);
            if (role == "user") {
                ss << "user<|role_sep|>" << chat[i]->content << "<|message_sep|>"
                   << "available functions<|role_sep|>[]<|message_sep|>";
            } else if (role == "assistant") {
                ss << "assistant<|role_sep|>" << chat[i]->content << "<|message_sep|>";
            }
        }
        // Add generation prompt if needed
        if (add_ass) {
            ss << "assistant<|role_sep|>";
        }
    } else if (tmpl == LLM_CHAT_TEMPLATE_MEGREZ) {
        // Megrez template
        for (auto message : chat) {
            std::string role(message->role);
            ss << "<|role_start|>" << role << "<|role_end|>" << message->content << "<|turn_end|>";
        }
        if (add_ass) {
            ss << "<|role_start|>assistant<|role_end|>";
        }
    } else {
        // template not supported
        return -1;
    }
    dest = ss.str();
    return dest.size();
}
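To see what the `LLM_CHAT_TEMPLATE_DEEPSEEK_3` branch produces, the following standalone sketch re-implements just that branch. It is an illustration under stated assumptions, not the upstream function: `demo_message` and `demo_format_deepseek3` are hypothetical names, `std::string` fields replace the `llama_chat_message` pointers, and the token strings are copied as they appear in the listing above without the `LU8(...)` wrapper, so the exact characters should be checked against the model's tokenizer.

```cpp
#include <sstream>
#include <string>
#include <vector>

// Hypothetical stand-in for llama_chat_message, using std::string fields.
struct demo_message {
    std::string role;
    std::string content;
};

// Mirrors the DeepSeek-V3 branch: the system text is followed by a blank line,
// user turns get a <|User|> prefix, and assistant turns get an <|Assistant|>
// prefix plus an end-of-sentence marker. Messages with any other role are skipped.
std::string demo_format_deepseek3(const std::vector<demo_message> & chat, bool add_ass) {
    std::ostringstream ss;
    for (const auto & message : chat) {
        if (message.role == "system") {
            ss << message.content << "\n\n";
        } else if (message.role == "user") {
            ss << "<|User|>" << message.content;
        } else if (message.role == "assistant") {
            ss << "<|Assistant|>" << message.content << "<|end▁of▁sentence|>";
        }
    }
    if (add_ass) {
        // Generation prompt: leave the assistant turn open for the model to fill in.
        ss << "<|Assistant|>";
    }
    return ss.str();
}
```

For a chat holding a system message "Be brief." and a user message "Hi", calling `demo_format_deepseek3(chat, true)` returns "Be brief.", a blank line, then `<|User|>Hi<|Assistant|>`. Unlike the DeepSeek-V2 branch, no newline separates a user turn from the following assistant turn.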

llm_chat_template llm_chat_detect_template(const std::string & tmpl) {
    try {
        return llm_chat_template_from_str(tmpl);
    } catch (const std::out_of_range &) {
        // ignore
    }
    auto tmpl_contains = [&tmpl](const char * haystack) -> bool {
        return tmpl.find(haystack) != std::string::npos;
    };
    if (tmpl_contains("<|im_start|>")) {
        return tmpl_contains("<|im_sep|>")
            ? LLM_CHAT_TEMPLATE_PHI_4
            : LLM_CHAT_TEMPLATE_CHATML;
    } else if (tmpl.find("mistral") == 0 || tmpl_contains("[INST]")) {
        if (tmpl_contains("[SYSTEM_PROMPT]")) {
            return LLM_CHAT_TEMPLATE_MISTRAL_V7;
        } else if (
            // catches official 'v1' template
            tmpl_contains("' [INST] ' + system_message")
            // catches official 'v3' and 'v3-tekken' templates
            || tmpl_contains("[AVAILABLE_TOOLS]")
        ) {
            // Official mistral 'v1', 'v3' and 'v3-tekken' templates
            // See: https://github.com/mistralai/cookbook/blob/main/concept-deep-dive/tokenization/chat_templates.md
            // See: https://github.com/mistralai/cookbook/blob/main/concept-deep-dive/tokenization/templates.md
            if (tmpl_contains(" [INST]")) {
                return LLM_CHAT_TEMPLATE_MISTRAL_V1;
            } else if (tmpl_contains("\"[INST]\"")) {
                return LLM_CHAT_TEMPLATE_MISTRAL_V3_TEKKEN;
            }
            return LLM_CHAT_TEMPLATE_MISTRAL_V3;
        } else {
            // llama2 template and its variants
            // [variant] support system message
            // See: https://huggingface.co/blog/llama2#how-to-prompt-llama-2
            bool support_system_message = tmpl_contains("<<SYS>>");
            bool add_bos_inside_history = tmpl_contains("bos_token + '[INST]");
            bool strip_message = tmpl_contains("content.strip()");
            if (strip_message) {
                return LLM_CHAT_TEMPLATE_LLAMA_2_SYS_STRIP;
            } else if (add_bos_inside_history) {
                return LLM_CHAT_TEMPLATE_LLAMA_2_SYS_BOS;
            } else if (support_system_message) {
                return LLM_CHAT_TEMPLATE_LLAMA_2_SYS;
            } else {
                return LLM_CHAT_TEMPLATE_LLAMA_2;
            }
        }
    } else if (tmpl_contains("<|assistant|>") && tmpl_contains("<|end|>")) {
        return LLM_CHAT_TEMPLATE_PHI_3;
    } else if (tmpl_contains("<|assistant|>") && tmpl_contains("<|user|>")) {
        return LLM_CHAT_TEMPLATE_FALCON_3;
    } else if (tmpl_contains("<|user|>") && tmpl_contains("<|endoftext|>")) {
        return LLM_CHAT_TEMPLATE_ZEPHYR;
    } else if (tmpl_contains("bos_token + message['role']")) {
        return LLM_CHAT_TEMPLATE_MONARCH;
    } else if (tmpl_contains("<start_of_turn>")) {
        return LLM_CHAT_TEMPLATE_GEMMA;
    } else if (tmpl_contains("'\\n\\nAssistant: ' + eos_token")) {
        // OrionStarAI/Orion-14B-Chat
        return LLM_CHAT_TEMPLATE_ORION;
    } else if (tmpl_contains("GPT4 Correct ")) {
        // openchat/openchat-3.5-0106
        return LLM_CHAT_TEMPLATE_OPENCHAT;
    } else if (tmpl_contains("USER: ") && tmpl_contains("ASSISTANT: ")) {
        // eachadea/vicuna-13b-1.1 (and Orca variant)
        if (tmpl_contains("SYSTEM: ")) {
            return LLM_CHAT_TEMPLATE_VICUNA_ORCA;
        }
        return LLM_CHAT_TEMPLATE_VICUNA;
    } else if (tmpl_contains("### Instruction:") && tmpl_contains("<|EOT|>")) {
        // deepseek-ai/deepseek-coder-33b-instruct
        return LLM_CHAT_TEMPLATE_DEEPSEEK;
    } else if (tmpl_contains("<|START_OF_TURN_TOKEN|>") && tmpl_contains("<|USER_TOKEN|>")) {
        // CohereForAI/c4ai-command-r-plus
        return LLM_CHAT_TEMPLATE_COMMAND_R;
    } else if (tmpl_contains("<|start_header_id|>") && tmpl_contains("<|end_header_id|>")) {
        return LLM_CHAT_TEMPLATE_LLAMA_3;
    } else if (tmpl_contains("[gMASK]sop")) {
        // chatglm3-6b
        return LLM_CHAT_TEMPLATE_CHATGML_3;
    } else if (tmpl_contains("[gMASK]<sop>")) {
        return LLM_CHAT_TEMPLATE_CHATGML_4;
    } else if (tmpl_contains(LU8("<用户>"))) {
        // MiniCPM-3B-OpenHermes-2.5-v2-GGUF
        return LLM_CHAT_TEMPLATE_MINICPM;
    } else if (tmpl_contains("'Assistant: ' + message['content'] + eos_token")) {
        return LLM_CHAT_TEMPLATE_DEEPSEEK_2;
    } else if (tmpl_contains(LU8("<|Assistant|>")) && tmpl_contains(LU8("<|User|>")) && tmpl_contains(LU8("<|end▁of▁sentence|>"))) {
        return LLM_CHAT_TEMPLATE_DEEPSEEK_3;
    } else if (tmpl_contains("[|system|]") && tmpl_contains("[|assistant|]") && tmpl_contains("[|endofturn|]")) {
        // ref: https://huggingface.co/LGAI-EXAONE/EXAONE-3.0-7.8B-Instruct/discussions/8#66bae61b1893d14ee8ed85bb
        // EXAONE-3.0-7.8B-Instruct
        return LLM_CHAT_TEMPLATE_EXAONE_3;
    } else if (tmpl_contains("rwkv-world")) {
        return LLM_CHAT_TEMPLATE_RWKV_WORLD;
    } else if (tmpl_contains("<|start_of_role|>")) {
        return LLM_CHAT_TEMPLATE_GRANITE;
    } else if (tmpl_contains("message['role'] + additional_special_tokens[0] + message['content'] + additional_special_tokens[1]")) {
        return LLM_CHAT_TEMPLATE_GIGACHAT;
    } else if (tmpl_contains("<|role_start|>")) {
        return LLM_CHAT_TEMPLATE_MEGREZ;
    }
    return LLM_CHAT_TEMPLATE_UNKNOWN;
}
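The detection function never parses the Jinja template. It only checks whether characteristic substrings occur, and the first matching branch wins. The sketch below isolates the three DeepSeek checks to make that behaviour easy to try. The `demo_*` names are hypothetical, the marker strings are copied as they appear in the listing above, and the surrounding branches (which in the real function run earlier and can match first) are left out.

```cpp
#include <string>

// Hypothetical result type covering only the DeepSeek families.
enum demo_detected {
    DEMO_DETECTED_DEEPSEEK,
    DEMO_DETECTED_DEEPSEEK_2,
    DEMO_DETECTED_DEEPSEEK_3,
    DEMO_DETECTED_UNKNOWN,
};

// Substring heuristics for the DeepSeek templates, checked in the same relative
// order as in the listing above. This is not a Jinja parser.
demo_detected demo_detect_deepseek(const std::string & tmpl) {
    auto contains = [&tmpl](const char * needle) -> bool {
        return tmpl.find(needle) != std::string::npos;
    };
    if (contains("### Instruction:") && contains("<|EOT|>")) {
        return DEMO_DETECTED_DEEPSEEK;   // deepseek-coder style
    }
    if (contains("'Assistant: ' + message['content'] + eos_token")) {
        return DEMO_DETECTED_DEEPSEEK_2; // DeepSeek-V2 style
    }
    // DeepSeek-V3 requires all three markers to be present.
    if (contains("<|Assistant|>") && contains("<|User|>") && contains("<|end▁of▁sentence|>")) {
        return DEMO_DETECTED_DEEPSEEK_3;
    }
    return DEMO_DETECTED_UNKNOWN;
}
```

A template string that contains `<|User|>` and `<|Assistant|>` but not the end-of-sentence marker falls through to `DEMO_DETECTED_UNKNOWN`, which shows why a model whose embedded template deviates slightly from the expected text may need its template selected by name instead.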

References

[1] Yongqiang Cheng, https://yongqiang.blog.csdn.net/

[2] huggingface/gguf, https://github.com/huggingface/huggingface.js/tree/main/packages/gguf

相关文章:

llama.cpp LLM_CHAT_TEMPLATE_DEEPSEEK_3

llama.cpp LLM_CHAT_TEMPLATE_DEEPSEEK_3 1. LLAMA_VOCAB_PRE_TYPE_DEEPSEEK3_LLM2. static const std::map<std::string, llm_chat_template> LLM_CHAT_TEMPLATES3. LLM_CHAT_TEMPLATE_DEEPSEEK_3References 不宜吹捧中国大语言模型的同时,又去贬低美国大语言…...

【玩转 Postman 接口测试与开发2_014】第11章:测试现成的 API 接口(下)——自动化接口测试脚本实战演练 + 测试集合共享

《API Testing and Development with Postman》最新第二版封面 文章目录 3 接口自动化测试实战3.1 测试环境的改造3.2 对列表查询接口的测试3.3 对查询单个实例的测试3.4 对新增接口的测试3.5 对修改接口的测试3.6 对删除接口的测试 4 测试集合的共享操作4.1 分享 Postman 集合…...

Linux03——常见的操作命令

root用户以及权限 Linux系统的超级管理员用户是:root用户 su命令 可以切换用户,语法:su [-] [用户名]- 表示切换后加载环境变量,建议带上用户可以省略,省略默认切换到root su命令是用于账户切换的系统命令ÿ…...

w188校园商铺管理系统设计与实现

🙊作者简介:多年一线开发工作经验,原创团队,分享技术代码帮助学生学习,独立完成自己的网站项目。 代码可以查看文章末尾⬇️联系方式获取,记得注明来意哦~🌹赠送计算机毕业设计600个选题excel文…...

leetcode——二叉树的最近公共祖先(java)

给定一个二叉树, 找到该树中两个指定节点的最近公共祖先。 百度百科中最近公共祖先的定义为:“对于有根树 T 的两个节点 p、q,最近公共祖先表示为一个节点 x,满足 x 是 p、q 的祖先且 x 的深度尽可能大(一个节点也可以是它自己的…...

基于FPGA的BT656编解码

概述 BT656全称为“ITU-R BT.656-4”或简称“BT656”,是一种用于数字视频传输的接口标准。它规定了数字视频信号的编码方式、传输格式以及接口电气特性。在物理层面上,BT656接口通常包含10根线(在某些应用中可能略有不同,但标准配置为10根)。这些线分别用于传输视频数据、…...

解锁数据结构密码:层次树与自引用树的设计艺术与API实践

1. 引言:为什么选择层次树和自引用树? 数据结构是编程中的基石之一,尤其是在处理复杂关系和层次化数据时,树形结构常常是最佳选择。层次树(Hierarchical Tree)和自引用树(Self-referencing Tree…...

本地快速部署DeepSeek-R1模型——2025新年贺岁

一晃年初六了,春节长假余额马上归零了。今天下午在我的电脑上成功部署了DeepSeek-R1模型,抽个时间和大家简单分享一下过程: 概述 DeepSeek模型 是一家由中国知名量化私募巨头幻方量化创立的人工智能公司,致力于开发高效、高性能…...

WAWA鱼2024年终总结,关键词:成长

前言 本来想着偷懒一下,不写2024年终总结了,因为24年上半年还在忙毕业,下半年在忙转正,其实没什么太多好写的。结果被an_da和学弟催更了,哈哈哈,感谢大家对我近况的关注,学校内容基本都忘的差不…...

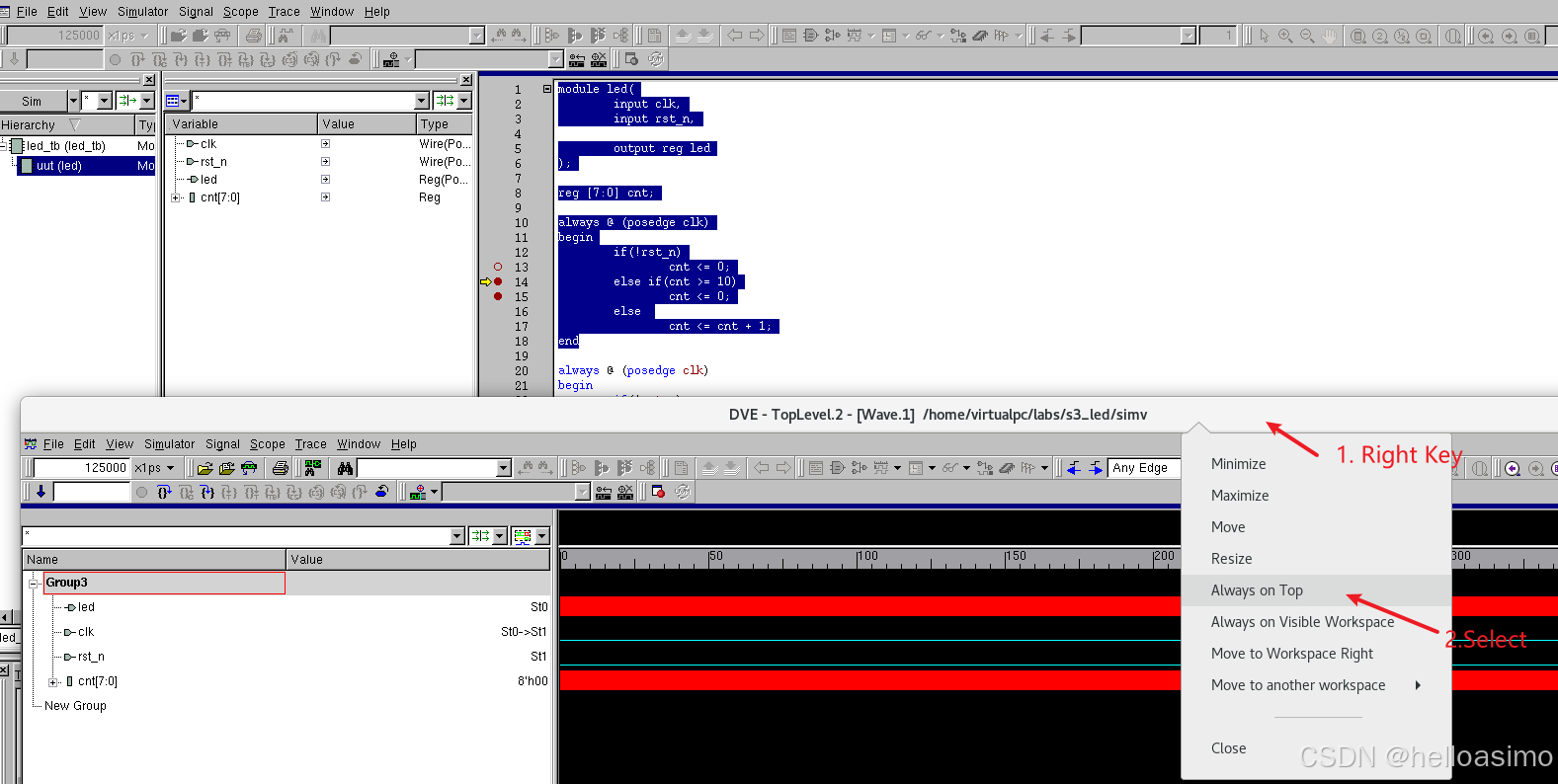

使用VCS进行单步调试的步骤

使用VCS对SystemVerilog进行单步调试的步骤如下: 1. 编译设计 使用-debug_all或-debug_pp选项编译设计,生成调试信息。 我的4个文件: 1.led.v module led(input clk,input rst_n,output reg led );reg [7:0] cnt;always (posedge clk) beg…...

【Elasticsearch】硬件资源优化

🧑 博主简介:CSDN博客专家,历代文学网(PC端可以访问:https://literature.sinhy.com/#/?__c1000,移动端可微信小程序搜索“历代文学”)总架构师,15年工作经验,精通Java编…...

Elasticsearch 指南 [8.17] | Search APIs

Search API 返回与请求中定义的查询匹配的搜索结果。 http GET /my-index-000001/_search Request GET /<target>/_search GET /_search POST /<target>/_search POST /_search Prerequisites 如果启用了 Elasticsearch 安全功能,针对目标数据流…...

QT+mysql+python 效果:

# This Python file uses the following encoding: utf-8 import sysfrom PySide6.QtWidgets import QApplication, QWidget,QMessageBox from PySide6.QtGui import QStandardItemModel, QStandardItem # 导入需要的类# Important: # 你需要通过以下指令把 form.ui转为ui…...

Java 序列化和反序列化作用

Java 序列化和反序列化的核心作用是将对象转换为可存储或传输的字节流(序列化),以及从字节流恢复对象(反序列化)。以下是详细说明和示例: 作用 持久化存储 将对象保存到文件或数据库,重启后仍可…...

【4】阿里面试题整理

[1]. 介绍一下数据库死锁 数据库死锁是指两个或多个事务,由于互相请求对方持有的资源而造成的互相等待的状态,导致它们都无法继续执行。 死锁会导致事务阻塞,系统性能下降甚至应用崩溃。 比如:事务T1持有资源R1并等待R2&#x…...

回顾生化之父三上真司的游戏思想

1. 放养式野蛮成长路线,开创生存恐怖类型 三上进入capcom后,没有培训,没有师傅手把手的指导,而是每天摸索写策划书,老员工给出不行的评语后,扔掉旧的重写新的。 然后突然就成为游戏总监,进入开…...

Java循环操作哪个快

文章目录 Java循环操作哪个快一、引言二、循环操作性能对比1、普通for循环与增强for循环1.1、代码示例 2、for循环与while循环2.1、代码示例 3、循环优化技巧3.1、代码示例 三、循环操作的适用场景四、使用示例五、总结 Java循环操作哪个快 一、引言 在Java开发中,…...

Maven jar 包下载失败问题处理

Maven jar 包下载失败问题处理 1.配置好国内的Maven源2.重新下载3. 其他问题 1.配置好国内的Maven源 打开⾃⼰的 Idea 检测 Maven 的配置是否正确,正确的配置如下图所示: 检查项⼀共有两个: 确认右边的两个勾已经选中,如果没有请…...

【Numpy核心编程攻略:Python数据处理、分析详解与科学计算】1.25 视觉风暴:NumPy驱动数据可视化

1.25 视觉风暴:NumPy驱动数据可视化 目录 #mermaid-svg-i3nKPm64ZuQ9UcNI {font-family:"trebuchet ms",verdana,arial,sans-serif;font-size:16px;fill:#333;}#mermaid-svg-i3nKPm64ZuQ9UcNI .error-icon{fill:#552222;}#mermaid-svg-i3nKPm64ZuQ9UcNI …...

Baklib推动数字化内容管理解决方案助力企业数字化转型

内容概要 在当今信息爆炸的时代,数字化内容管理成为企业提升效率和竞争力的关键。企业在面对大量数据时,如何高效地存储、分类与检索信息,直接关系到其经营的成败。数字化内容管理不仅限于简单的文档存储,更是整合了文档、图像、…...

读书笔记 | 《最小阻力之路》:用结构思维重塑人生愿景

一、核心理念:结构决定行为轨迹 橡皮筋模型:愿景张力的本质 书中提出:人类行为始终沿着"现状"与"愿景"之间的张力路径运动,如同橡皮筋拉伸产生的动力。 案例:音乐家每日练习的坚持,不…...

React中使用箭头函数定义事件处理程序

React中使用箭头函数定义事件处理程序 为什么使用箭头函数?1. 传递动态参数2. 避免闭包问题3. 确保每个方块的事件处理程序是独立的4. 代码可读性和维护性 示例代码总结 在React开发中,处理事件是一个常见的任务。特别是当我们需要传递动态参数时&#x…...

高阶开发基础——快速入门C++并发编程6——大作业:实现一个超级迷你的线程池

目录 实现一个无返回的线程池 完全代码实现 Reference 实现一个无返回的线程池 实现一个简单的线程池非常简单,我们首先聊一聊线程池的定义: 线程池(Thread Pool) 是一种并发编程的设计模式,用于管理和复用多个线程…...

少样本提示词模板

文章目录 少样本提示词模板 少样本提示词模板 少样本提示是一种基于机器学习的技术,利用少量的样本(即提示词的示例部分)来引导模型对特定任务进行学习和执行。这些示例能让模型理解开发者期望它完成的任务的类型和风格。在给定的任务中&…...

SQLGlot:用SQLGlot解析SQL

几十年来,结构化查询语言(SQL)一直是与数据库交互的实际语言。在一段时间内,不同的数据库在支持通用SQL语法的同时演变出了不同的SQL风格,也就是方言。这可能是SQL被广泛采用和流行的原因之一。 SQL解析是解构SQL查询…...

代码随想录算法训练营Day35

第九章 动态规划part03 正式开始背包问题,背包问题还是挺难的,虽然大家可能看了很多背包问题模板代码,感觉挺简单,但基本理解的都不够深入。 如果是直接从来没听过背包问题,可以先看文字讲解慢慢了解 这是干什么的。 …...

ECharts 样式设置

ECharts 样式设置 引言 ECharts 是一款功能强大的可视化库,广泛用于数据可视化。样式设置是 ECharts 中的重要一环,它能够帮助开发者根据需求调整图表的视觉效果,使其更加美观和易于理解。本文将详细介绍 ECharts 的样式设置,包…...

【腾讯前端面试】纯css画图形

之前参加腾讯面试,第一轮是笔试,面试官发的试卷里有一题手写css画一个扇形、一个平行四边形……笔试时间还是比较充裕的,但是我对这题完全没有思路😭于是就空着了,最后也没过。 今天偶然翻到廖雪峰大佬的博客里提到了关…...

的解决方法)

DBeaver连接MySQL提示Access denied for user ‘‘@‘ip‘ (using password: YES)的解决方法

在使用DBeaver连接MySQL数据库时,如果遇到“Access denied for user ip (using password: YES)”的错误提示,说明用户认证失败。此问题通常与数据库用户权限、配置错误或网络设置有关。本文将详细介绍解决此问题的步骤。 一、检查用户名和密码 首先&am…...

截止到2025年2月1日,Linux的Wayland还有哪些问题是需要解决的?

截至2025年2月1日,Wayland需要解决的核心问题可按权重从高到低排序如下: 1. 屏幕共享与远程桌面的完整支持(权重:★★★★★) 问题:企业场景(如 腾讯会议)、开发者远程调试依赖稳定的屏幕共享功能。当前Wayland依赖PipeWire和XWayland,存在权限管理复杂、多显示器选择…...