使用C语言操作kafka ---- librdkafka

1 安装librdkafka

git clone https://github.com/edenhill/librdkafka.git

cd librdkafka

git checkout v1.7.0

./configure

make

sudo make install

sudo ldconfig

在librdkafka的examples目录下会有示例程序。比如consumer的启动需要下列参数

./consumer <broker> <group.id> <topic1> <topic2>..

指定broker、group id、topic(可以订阅多个)。示例:

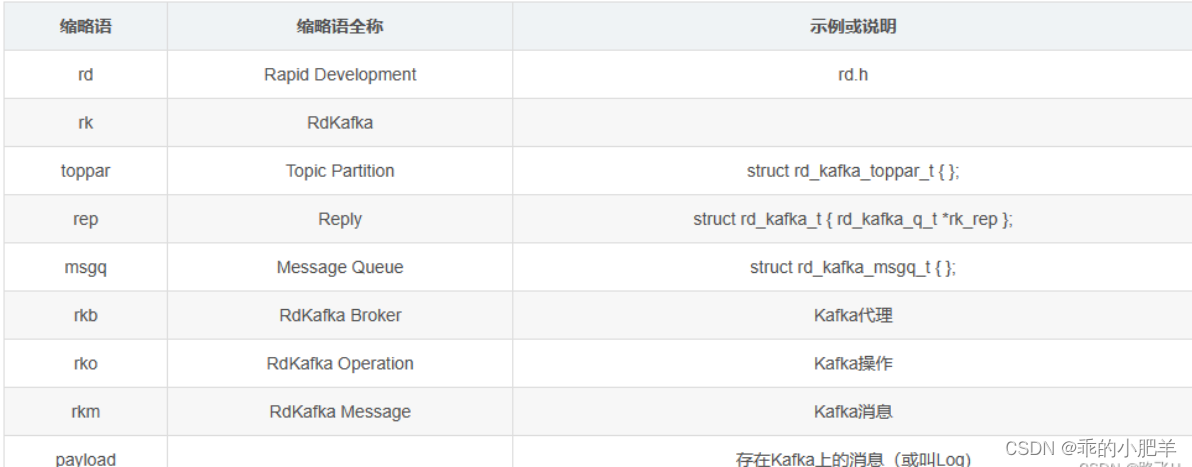

指定broker、group id、topic(可以订阅多个)。示例:缩略语介绍:

2 开启kafka相关服务

2.1 启动zookeeper

启动zookeeper可以通过下面的脚本来启动zookeeper服务,当然,也可以自己独立搭建zookeeper的集群来实现。这里我们直接使用kafka自带的zookeeper。

cd bin/

# 前台运行:

sh zookeeper-server-start.sh ../config/zookeeper.properties# 后台运行:

sh zookeeper-server-start.sh -daemon ../config/zookeeper.properties

可以通过命令lsof -i:2181 查看zookeeper是否启动成功。

$ lsof -i:2181

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

java 74930 fly 96u IPv6 734467 0t0 TCP *:2181 (LISTEN)

2.2 启动Kafka

启动kafka(kafka安装路径的bin目录下执行),默认启动端口9092。

sh kafka-server-start.sh -daemon ../config/server.properties

2.3 创建topic

sh kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test

参数说明:

–create 是创建主题的的动作指令。

–zookeeper 指定kafka所连接的zookeeper服务地址。

–replicator-factor 指定了副本因子(即副本数量); 表示该topic需要在不同的broker中保存几份,这里设置成1,表示在两个broker中保存两份Partitions分区数。

–partitions 指定分区个数;多通道,类似车道。

–topic 指定所要创建主题的名称,比如test。

3 c语言操作kafka的范例

3.1 消费者

在librdkafka\examples下有consumer.c文件,该文件是一个c语言操作kafka的代码范例,内容如下。

/*** Simple high-level balanced Apache Kafka consumer* using the Kafka driver from librdkafka* (https://github.com/edenhill/librdkafka)*/#include <stdio.h>

#include <signal.h>

#include <string.h>

#include <ctype.h>/* Typical include path would be <librdkafka/rdkafka.h>, but this program* is builtin from within the librdkafka source tree and thus differs. */

//#include <librdkafka/rdkafka.h>

#include "rdkafka.h"static volatile sig_atomic_t run = 1;/*** @brief Signal termination of program*/

static void stop (int sig) {run = 0;

}/*** @returns 1 if all bytes are printable, else 0.*/

static int is_printable (const char *buf, size_t size) {size_t i;for (i = 0 ; i < size ; i++)if (!isprint((int)buf[i]))return 0;return 1;

}int main (int argc, char **argv) {rd_kafka_t *rk; /* Consumer instance handle */rd_kafka_conf_t *conf; /* Temporary configuration object */rd_kafka_resp_err_t err; /* librdkafka API error code */char errstr[512]; /* librdkafka API error reporting buffer */const char *brokers; /* Argument: broker list */const char *groupid; /* Argument: Consumer group id */char **topics; /* Argument: list of topics to subscribe to */int topic_cnt; /* Number of topics to subscribe to */rd_kafka_topic_partition_list_t *subscription; /* Subscribed topics */int i;/** Argument validation*/if (argc < 4) {fprintf(stderr,"%% Usage: ""%s <broker> <group.id> <topic1> <topic2>..\n",argv[0]);return 1;}brokers = argv[1];groupid = argv[2];topics = &argv[3];topic_cnt = argc - 3;/** Create Kafka client configuration place-holder*/conf = rd_kafka_conf_new(); // 创建配置文件/* Set bootstrap broker(s) as a comma-separated list of* host or host:port (default port 9092).* librdkafka will use the bootstrap brokers to acquire the full* set of brokers from the cluster. */if (rd_kafka_conf_set(conf, "bootstrap.servers", brokers,errstr, sizeof(errstr)) != RD_KAFKA_CONF_OK) {fprintf(stderr, "%s\n", errstr);rd_kafka_conf_destroy(conf);return 1;}/* Set the consumer group id.* All consumers sharing the same group id will join the same* group, and the subscribed topic' partitions will be assigned* according to the partition.assignment.strategy* (consumer config property) to the consumers in the group. */if (rd_kafka_conf_set(conf, "group.id", groupid,errstr, sizeof(errstr)) != RD_KAFKA_CONF_OK) {fprintf(stderr, "%s\n", errstr);rd_kafka_conf_destroy(conf);return 1;}/* If there is no previously committed offset for a partition* the auto.offset.reset strategy will be used to decide where* in the partition to start fetching messages.* By setting this to earliest the consumer will read all messages* in the partition if there was no previously committed offset. */if (rd_kafka_conf_set(conf, "auto.offset.reset", "earliest",errstr, sizeof(errstr)) != RD_KAFKA_CONF_OK) {fprintf(stderr, "%s\n", errstr);rd_kafka_conf_destroy(conf);return 1;}/** Create consumer instance.** NOTE: rd_kafka_new() takes ownership of the conf object* and the application must not reference it again after* this call.*/// 创建一个kafka消费者rk = rd_kafka_new(RD_KAFKA_CONSUMER, conf, errstr, sizeof(errstr));if (!rk) {fprintf(stderr,"%% Failed to create new consumer: %s\n", errstr);return 1;}conf = NULL; /* Configuration object is now owned, and freed,* by the rd_kafka_t instance. *//* Redirect all messages from per-partition queues to* the main queue so that messages can be consumed with one* call from all assigned partitions.** The alternative is to poll the main queue (for events)* and each partition queue separately, which requires setting* up a rebalance callback and keeping track of the assignment:* but that is more complex and typically not recommended. */rd_kafka_poll_set_consumer(rk);// poll机制,设置消费者实例到poll中/* Convert the list of topics to a format suitable for librdkafka */// 创建主题分区列表subscription = rd_kafka_topic_partition_list_new(topic_cnt);for (i = 0 ; i < topic_cnt ; i++)rd_kafka_topic_partition_list_add(subscription,topics[i],/* the partition is ignored* by subscribe() */RD_KAFKA_PARTITION_UA);/* Subscribe to the list of topics */err = rd_kafka_subscribe(rk, subscription);if (err) {fprintf(stderr,"%% Failed to subscribe to %d topics: %s\n",subscription->cnt, rd_kafka_err2str(err));rd_kafka_topic_partition_list_destroy(subscription);rd_kafka_destroy(rk);return 1;}fprintf(stderr,"%% Subscribed to %d topic(s), ""waiting for rebalance and messages...\n",subscription->cnt);rd_kafka_topic_partition_list_destroy(subscription);/* Signal handler for clean shutdown */signal(SIGINT, stop);/* Subscribing to topics will trigger a group rebalance* which may take some time to finish, but there is no need* for the application to handle this idle period in a special way* since a rebalance may happen at any time.* Start polling for messages. */while (run) {rd_kafka_message_t *rkm;rkm = rd_kafka_consumer_poll(rk, 100);if (!rkm)continue; /* Timeout: no message within 100ms,* try again. This short timeout allows* checking for `run` at frequent intervals.*//* consumer_poll() will return either a proper message* or a consumer error (rkm->err is set). */if (rkm->err) {/* Consumer errors are generally to be considered* informational as the consumer will automatically* try to recover from all types of errors. */fprintf(stderr,"%% Consumer error: %s\n",rd_kafka_message_errstr(rkm));rd_kafka_message_destroy(rkm);continue;}/* Proper message. */printf("Message on %s [%"PRId32"] at offset %"PRId64":\n",rd_kafka_topic_name(rkm->rkt), rkm->partition,rkm->offset);/* Print the message key. */if (rkm->key && is_printable(rkm->key, rkm->key_len))printf(" Key: %.*s\n",(int)rkm->key_len, (const char *)rkm->key);else if (rkm->key)printf(" Key: (%d bytes)\n", (int)rkm->key_len);/* Print the message value/payload. */if (rkm->payload && is_printable(rkm->payload, rkm->len))printf(" Value: %.*s\n",(int)rkm->len, (const char *)rkm->payload);else if (rkm->payload)printf(" Value: (%d bytes)\n", (int)rkm->len);rd_kafka_message_destroy(rkm);}/* Close the consumer: commit final offsets and leave the group. */fprintf(stderr, "%% Closing consumer\n");rd_kafka_consumer_close(rk);/* Destroy the consumer */rd_kafka_destroy(rk);return 0;

}

3.2 生产者

在librdkafka\examples下有producer.c文件,该文件是一个c语言操作kafka的代码范例,内容如下。

/*** Simple Apache Kafka producer* using the Kafka driver from librdkafka* (https://github.com/edenhill/librdkafka)*/#include <stdio.h>

#include <signal.h>

#include <string.h>/* Typical include path would be <librdkafka/rdkafka.h>, but this program* is builtin from within the librdkafka source tree and thus differs. */

#include "rdkafka.h"static volatile sig_atomic_t run = 1;/*** @brief Signal termination of program*/

static void stop (int sig) {run = 0;fclose(stdin); /* abort fgets() */

}/*** @brief Message delivery report callback.** This callback is called exactly once per message, indicating if* the message was succesfully delivered* (rkmessage->err == RD_KAFKA_RESP_ERR_NO_ERROR) or permanently* failed delivery (rkmessage->err != RD_KAFKA_RESP_ERR_NO_ERROR).** The callback is triggered from rd_kafka_poll() and executes on* the application's thread.*/

static void dr_msg_cb (rd_kafka_t *rk,const rd_kafka_message_t *rkmessage, void *opaque) {if (rkmessage->err)fprintf(stderr, "%% Message delivery failed: %s\n",rd_kafka_err2str(rkmessage->err));elsefprintf(stderr,"%% Message delivered (%zd bytes, ""partition %"PRId32")\n",rkmessage->len, rkmessage->partition);/* The rkmessage is destroyed automatically by librdkafka */

}int main (int argc, char **argv) {rd_kafka_t *rk; /* Producer instance handle */rd_kafka_conf_t *conf; /* Temporary configuration object */char errstr[512]; /* librdkafka API error reporting buffer */char buf[512]; /* Message value temporary buffer */const char *brokers; /* Argument: broker list */const char *topic; /* Argument: topic to produce to *//** Argument validation*/if (argc != 3) {fprintf(stderr, "%% Usage: %s <broker> <topic>\n", argv[0]);return 1;}brokers = argv[1];topic = argv[2];/** Create Kafka client configuration place-holder*/conf = rd_kafka_conf_new();/* Set bootstrap broker(s) as a comma-separated list of* host or host:port (default port 9092).* librdkafka will use the bootstrap brokers to acquire the full* set of brokers from the cluster. */if (rd_kafka_conf_set(conf, "bootstrap.servers", brokers,errstr, sizeof(errstr)) != RD_KAFKA_CONF_OK) {fprintf(stderr, "%s\n", errstr);return 1;}/* Set the delivery report callback.* This callback will be called once per message to inform* the application if delivery succeeded or failed.* See dr_msg_cb() above.* The callback is only triggered from rd_kafka_poll() and* rd_kafka_flush(). */rd_kafka_conf_set_dr_msg_cb(conf, dr_msg_cb);/** Create producer instance.** NOTE: rd_kafka_new() takes ownership of the conf object* and the application must not reference it again after* this call.*/rk = rd_kafka_new(RD_KAFKA_PRODUCER, conf, errstr, sizeof(errstr));if (!rk) {fprintf(stderr,"%% Failed to create new producer: %s\n", errstr);return 1;}/* Signal handler for clean shutdown */signal(SIGINT, stop);fprintf(stderr,"%% Type some text and hit enter to produce message\n""%% Or just hit enter to only serve delivery reports\n""%% Press Ctrl-C or Ctrl-D to exit\n");while (run && fgets(buf, sizeof(buf), stdin)) {size_t len = strlen(buf);rd_kafka_resp_err_t err;if (buf[len-1] == '\n') /* Remove newline */buf[--len] = '\0';if (len == 0) {/* Empty line: only serve delivery reports */rd_kafka_poll(rk, 0/*non-blocking */);continue;}/** Send/Produce message.* This is an asynchronous call, on success it will only* enqueue the message on the internal producer queue.* The actual delivery attempts to the broker are handled* by background threads.* The previously registered delivery report callback* (dr_msg_cb) is used to signal back to the application* when the message has been delivered (or failed).*/retry:err = rd_kafka_producev(/* Producer handle */rk,/* Topic name */RD_KAFKA_V_TOPIC(topic),/* Make a copy of the payload. */RD_KAFKA_V_MSGFLAGS(RD_KAFKA_MSG_F_COPY),/* Message value and length */RD_KAFKA_V_VALUE(buf, len),/* Per-Message opaque, provided in* delivery report callback as* msg_opaque. */RD_KAFKA_V_OPAQUE(NULL),/* End sentinel */RD_KAFKA_V_END);if (err) {/** Failed to *enqueue* message for producing.*/fprintf(stderr,"%% Failed to produce to topic %s: %s\n",topic, rd_kafka_err2str(err));if (err == RD_KAFKA_RESP_ERR__QUEUE_FULL) {/* If the internal queue is full, wait for* messages to be delivered and then retry.* The internal queue represents both* messages to be sent and messages that have* been sent or failed, awaiting their* delivery report callback to be called.** The internal queue is limited by the* configuration property* queue.buffering.max.messages */rd_kafka_poll(rk, 1000/*block for max 1000ms*/);goto retry;}} else {fprintf(stderr, "%% Enqueued message (%zd bytes) ""for topic %s\n",len, topic);}/* A producer application should continually serve* the delivery report queue by calling rd_kafka_poll()* at frequent intervals.* Either put the poll call in your main loop, or in a* dedicated thread, or call it after every* rd_kafka_produce() call.* Just make sure that rd_kafka_poll() is still called* during periods where you are not producing any messages* to make sure previously produced messages have their* delivery report callback served (and any other callbacks* you register). */rd_kafka_poll(rk, 0/*non-blocking*/);}/* Wait for final messages to be delivered or fail.* rd_kafka_flush() is an abstraction over rd_kafka_poll() which* waits for all messages to be delivered. */fprintf(stderr, "%% Flushing final messages..\n");rd_kafka_flush(rk, 10*1000 /* wait for max 10 seconds */);/* If the output queue is still not empty there is an issue* with producing messages to the clusters. */if (rd_kafka_outq_len(rk) > 0)fprintf(stderr, "%% %d message(s) were not delivered\n",rd_kafka_outq_len(rk));/* Destroy the producer instance */rd_kafka_destroy(rk);return 0;

}

3.3 生产者和消费者的交互

(1)启动消费者。

./consumer localhost:9092 0 test

1

显示:

% Subscribed to 1 topic(s), waiting for rebalance and messages...

1

(2)启动生产者。

./producer localhost:9092 test

总结

- 一个分区只能被一个消费者读取。如果一个topic只有一个分区,多个消费者读取时只有一个消费者能读到数据;单个分区开启多个消费者去读取数据是没有意义的。

相关文章:

使用C语言操作kafka ---- librdkafka

1 安装librdkafka git clone https://github.com/edenhill/librdkafka.git cd librdkafka git checkout v1.7.0 ./configure make sudo make install sudo ldconfig 在librdkafka的examples目录下会有示例程序。比如consumer的启动需要下列参数 ./consumer <broker> &…...

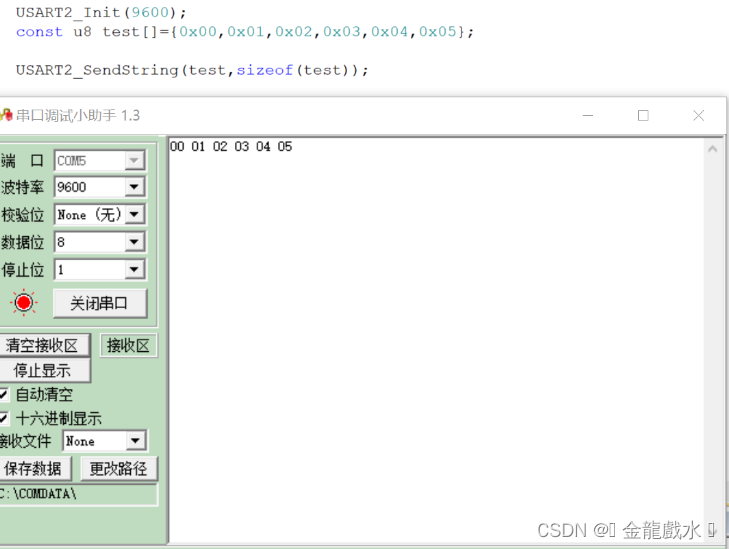

误用STM32串口发送标志位 “USART_FLAG_TXE” “USART_FLAG_TC”造成的BUG

当你使用串口发送数据时是否出现过这样的情况: 1.发送时第一个字节丢失。 2.发送时出现莫名的字节丢失。 3.各种情况字节丢失。 1.先了解一下串口发送的流程图(手动描绘): 可以假想USART_FLAG_TXE是用于检测"弹仓"&…...

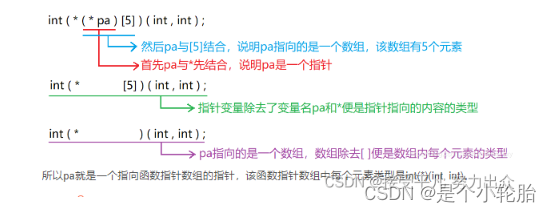

指针(三)

函数指针 定义:整型指针是指向整形的指针,数组指针式指向数组的指针,其实函数指针就是指向函数的指针。 函数指针基础: ()优先级要高于*;一个变量除去了变量名,便是它的变量类型;一个指针变量…...

labelimg遇到的标签修改问题:修改一张图像的标签时,保存后导致classes.txt改变

问题描述:修改一张图像的标签时候, classes.txt 会同步更新,导致重新生成了 classes.txt 但是这个 classes.txt 只有你现在写的那个类别名,以前的没有了。 解决:设置一个 predefined_classes.txt,内容和模…...

Spring Cloud Gateway使用和配置

Spring Cloud Gateway是Spring官方基于Spring 5.0,Spring Boot 2.0和Project Reactor等技术开发的网关,Spring Cloud Gateway旨在为微服务架构提供一种简单而有效的统一的API路由管理方式。Spring Cloud Gateway作为Spring Cloud生态系中的网关ÿ…...

RT-Thread 时钟管理

时钟管理 时钟是非常重要的概念,和朋友出去游玩需要约定时间,完成任务也需要花费时间,生活离不开时间。 操作系统也一样,需要通过时间来规范其任务的执行,操作系统中最小的时间单位是时钟节拍(OS Tick&…...

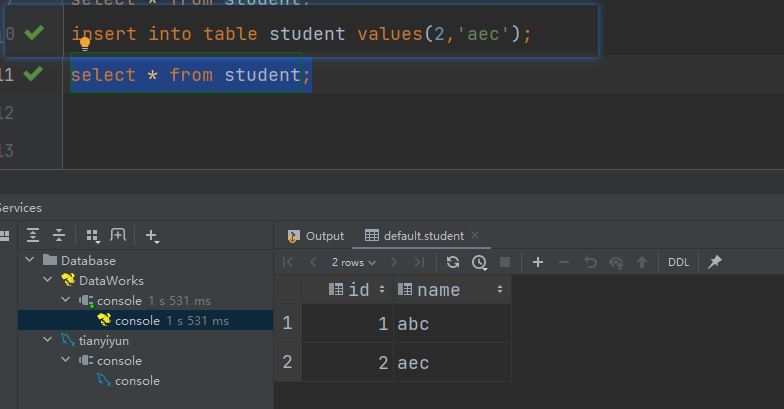

User: zhangflink is not allowed to impersonate zhangflink

使用hive2连接进行添加数据是报错: [08S01][1] Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.mr.MapRedTask. User: zhangflink is not allowed to impersonate zhangflink 有些文章说需要修…...

深入理解Sentinel系列-1.初识Sentinel

👏作者简介:大家好,我是爱吃芝士的土豆倪,24届校招生Java选手,很高兴认识大家📕系列专栏:Spring源码、JUC源码、Kafka原理、分布式技术原理🔥如果感觉博主的文章还不错的话ÿ…...

vue中字典的使用

1.引入字典 dicts: [order_status,product_type],2.表单中使用 select下拉 <el-form-item label"订单状态" prop"orderStatus"><el-select v-model"form.orderStatus" clearable placeholder"请输入订单状态" :disabled"…...

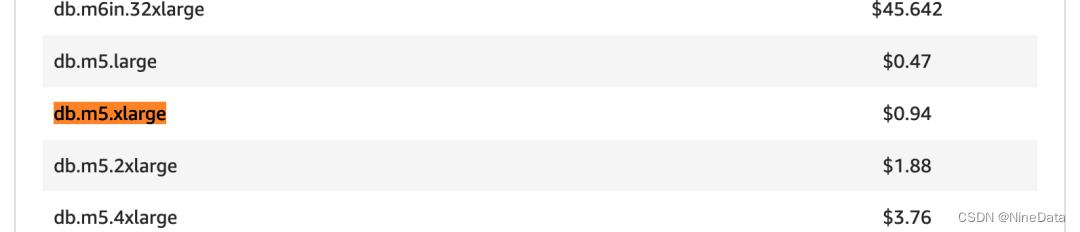

AWS基于x86 vs Graviton(ARM)的RDS MySQL性能对比

概述 这是一个系列。在前面,我们测试了阿里云经济版(“ARM”)与标准版的性能/价格对比;华为云x86规格与ARM(鲲鹏增强)版的性能/价格对比。现在,再来看看AWS的ARM版本的RDS情况 在2018年&#…...

ESP32 蓝牙音箱无法链接上电脑的解决:此项不起作用,请确保你的蓝牙设备仍可检测到

ESP32 被我加了放大器后通过A2DP链接手机播放一直正常,但是怎么都链接不到电脑,蓝牙设备可以被发现和配对,但是始终无法连接,显示: 此项不起作用,请确保你的蓝牙设备仍可检测到,然后再试一次 …...

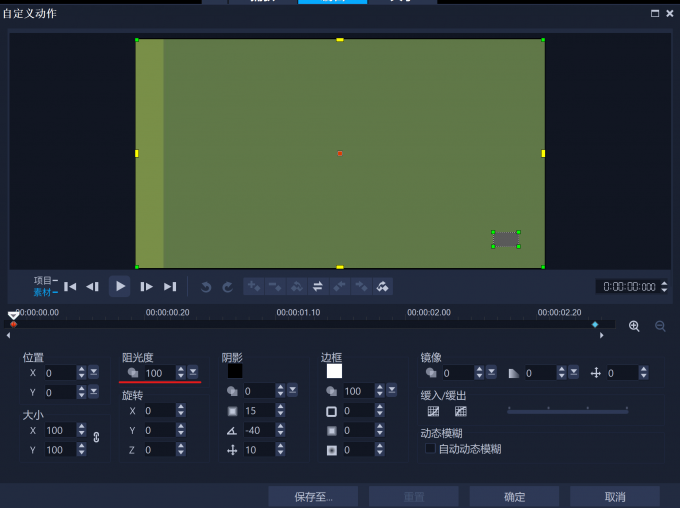

会声会影2024软件还包含了视频教学以及模板素材

会声会影2024中文版是一款加拿大公司Corel发布的视频编软件。会声会影2024官方版支持视频合并、剪辑、屏幕录制、光盘制作、添加特效、字幕和配音等功能,用户可以快速上手。会声会影2024软件还包含了视频教学以及模板素材,让用户剪辑视频更加的轻松。 会…...

[Swift]RxSwift常见用法详解

RxSwift 是 ReactiveX API 的 Swift 版。它是一个基于 Swift 事件驱动的库,用于处理异步和基于事件的代码。 GitHub:https://github.com/ReactiveX/RxSwift 一、安装 首先,你需要安装 RxSwift。你可以使用 CocoaPods,Carthage 或者 Swift …...

探索鸿蒙_ArkTs开发语言

ArkTs 在正常的网页开发中,实现一个效果,需要htmlcssjs三种语言实现。 但是使用ArkTs语言,就能全部实现了。 ArkTs是基于TypeScript的,但是呢,TypeScript是基于javascript的,所以ArkTs不但能完成js的工作&a…...

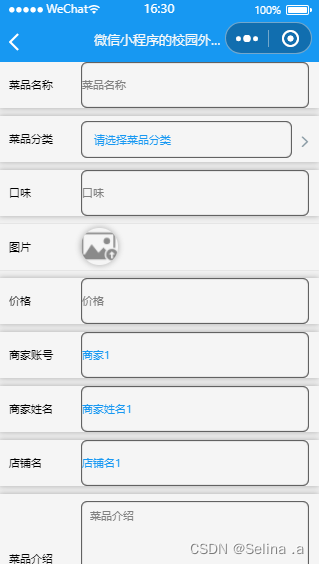

案例049:基于微信小程序的校园外卖平台设计与实现

文末获取源码 开发语言:Java 框架:SSM JDK版本:JDK1.8 数据库:mysql 5.7 开发软件:eclipse/myeclipse/idea Maven包:Maven3.5.4 小程序框架:uniapp 小程序开发软件:HBuilder X 小程序…...

通过提示工程释放人工智能

在快速发展的技术领域,人工智能 (AI) 处于前沿,不断重塑我们与数字系统的交互。这一演变的一个关键方面是大型语言模型 (LLM) 的开发和完善,它在从客户服务机器人到高级数据分析的各种应用中已变得不可或缺。利用这些法学硕士的潜力的核心是提…...

亚马逊云科技Serverless视频内容摘要提取方案

概述 随着GenAI的普及,视频内容摘要生成成为一个备受关注的领域。通过将视频内容转化为文本,可以探索到更广泛的应用场景,其中包括: 视频搜索与索引:将视频内容转化为文本形式,可以方便地进行搜索和索引操作…...

c语言:整数与浮点数在内存中的存储方式

整数在内存中的存储: 在计算机内存中,整数通常以二进制形式存储。计算机使用一定数量的比特(bit)来表示整数,比如32位或64位。在存储整数时,计算机使用补码形式来表示负数,而使用原码形式来表示…...

dockerdesktop 导出镜像,导入镜像

总体思路 备份时 容器 > 镜像 > 本地文件 恢复时 本地文件 > 镜像 > 容器 备份步骤 首先,把容器生成为镜像 docker commit [容器名称] [镜像名称] 示例 docker commit nginx mynginx然后,把镜像备份为本地文件,如果使用的是Docker Desktop,打包备份的文件会自动存…...

2-Django、Flask和Tornado三大主流框架对比

在Python的web开发框架中,目前使用量最高的几个是Django、Flask和Tornado, 经常会有人拿这几个对比,相信大家的初步印象应该是 Django大而全、Flask小而精、Tornado性能高。 了解常用框架 Django 主要特点是大而全,集成了很多组件,例如: Mo…...

基于Fire2012算法与FastLED库的Arduino LED篝火制作全攻略

1. 项目概述:用代码点燃一场永不熄灭的数字篝火夏夜、星空、朋友围坐,篝火带来的温暖与氛围是露营的灵魂。但现实是,很多营地禁止明火,或者在城市阳台、室内空间,生一堆真正的火既不安全也不现实。作为一名玩了十多年A…...

手机号归属地查询系统:3步构建可视化定位工具

手机号归属地查询系统:3步构建可视化定位工具 【免费下载链接】location-to-phone-number This a project to search a location of a specified phone number, and locate the map to the phone number location. 项目地址: https://gitcode.com/gh_mirrors/lo/l…...

基于Claude的AI招聘系统:从简历解析到智能评估全流程实践

1. 项目概述:当Claude成为你的招聘官最近在GitHub上看到一个挺有意思的项目,叫“hire-from-claude”。光看名字,你可能会觉得有点玄乎,难道是要让AI来面试和招聘人类?其实,这个项目的核心思路,是…...

系统管理员AI编程实战:基于Claude的运维自动化脚本开发指南

1. 项目概述:一个面向系统管理员的Claude-Code学习与实践仓库最近在整理自己的技术栈时,发现很多系统管理员同行对如何将大型语言模型(LLM)高效地融入日常运维工作流感到困惑。大家普遍觉得这些AI工具很强大,但具体到写…...

多数人支持!微软或把 Xbox 重新品牌化为 XBOX,回归最初形式

Xbox 品牌重塑:从民意调查到账号更名微软 Xbox 首席执行官阿莎夏尔马在 X(原推特)上发起民意调查,询问粉丝微软应使用 Xbox 还是 XBOX,结果多数人支持 XBOX,随后公司将其 X 账号更名。不过,Xbox…...

基于视觉语言模型的智能体框架:让AI看懂界面并自动操作

1. 项目概述:当AI学会“看”与“想”最近在探索AI与视觉结合的领域时,我深度体验了landing-ai团队开源的vision-agent项目。这不仅仅是一个工具库,它更像是一个为大型语言模型(LLM)装上了“眼睛”和“手”的智能体框架…...

Agent 的记忆也会被投毒:长期记忆安全的六阶段框架

过去,我们更习惯把大模型的风险理解为“这一轮输入有没有问题”“这一轮输出会不会越界”。但有了长期记忆之后,风险结构发生了变化。恶意内容不一定在当场触发,也不一定在同一轮任务里显现出来。它可以先悄悄进入记忆,在几天后、…...

基于Slack Bolt与OpenAI API构建企业级AI助手:从集成部署到高级应用

1. 项目概述:当ChatGPT遇上Slack,团队协作的智能革命 如果你和我一样,每天的工作都泡在Slack里,与团队沟通、同步进度、处理各种消息,那你一定也经历过这样的时刻:一个技术问题卡住了,需要快速…...

Go语言轻量级规则引擎Airules:高性能架构与微服务实践

1. 项目概述:从“Airules”看现代规则引擎的轻量化实践最近在GitHub上看到一个挺有意思的项目,叫“Airules”。光看名字,你可能会联想到“AI规则”或者“空气规则”,其实它的全称是“Air Rules”,直译过来就是“空气规…...

锂电池安全使用指南:从原理到实践,避免常见风险

1. 项目概述:从“能用”到“用好”的锂电安全课如果你玩过任何需要脱离电源线工作的电子项目,无论是给一个Arduino小车供电,还是驱动一架四轴飞行器,最终都绕不开一个核心问题:电源。从最基础的碱性电池,到…...