【Audio/Video FFmpeg Study】 Running the Example Programs (Ongoing Updates)

For environment setup, see the previous article.

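Copy `mux.c` (the muxing example shipped in FFmpeg's `doc/examples`) into `main.c`, and use the `unused` attribute to silence the unused-parameter warnings. Note the double parentheses: the GCC/Clang syntax is `__attribute__((unused))`, not `__attribute__(unused)`. In the listing below it is applied to the `oc` parameter of `open_audio()`, `open_video()` and `close_stream()`, which those functions never use.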

/** Copyright (c) 2003 Fabrice Bellard** Permission is hereby granted, free of charge, to any person obtaining a copy* of this software and associated documentation files (the "Software"), to deal* in the Software without restriction, including without limitation the rights* to use, copy, modify, merge, publish, distribute, sublicense, and/or sell* copies of the Software, and to permit persons to whom the Software is* furnished to do so, subject to the following conditions:** The above copyright notice and this permission notice shall be included in* all copies or substantial portions of the Software.** THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR* IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,* FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL* THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER* LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,* OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN* THE SOFTWARE.*//*** @file libavformat muxing API usage example* @example mux.c** Generate a synthetic audio and video signal and mux them to a media file in* any supported libavformat format. The default codecs are used.*/#include <stdlib.h>

#include <stdio.h>

#include <string.h>

#include <math.h>#include <libavutil/avassert.h>

#include <libavutil/channel_layout.h>

#include <libavutil/opt.h>

#include <libavutil/mathematics.h>

#include <libavutil/timestamp.h>

#include <libavcodec/avcodec.h>

#include <libavformat/avformat.h>

#include <libswscale/swscale.h>

#include <libswresample/swresample.h>#define STREAM_DURATION 10.0

#define STREAM_FRAME_RATE 25 /* 25 images/s */

#define STREAM_PIX_FMT AV_PIX_FMT_YUV420P /* default pix_fmt */#define SCALE_FLAGS SWS_BICUBIC// a wrapper around a single output AVStream

typedef struct OutputStream {AVStream *st;AVCodecContext *enc;/* pts of the next frame that will be generated */int64_t next_pts;int samples_count;AVFrame *frame;AVFrame *tmp_frame;AVPacket *tmp_pkt;float t, tincr, tincr2;struct SwsContext *sws_ctx;struct SwrContext *swr_ctx;

} OutputStream;static void log_packet(const AVFormatContext *fmt_ctx, const AVPacket *pkt)

{AVRational *time_base = &fmt_ctx->streams[pkt->stream_index]->time_base;printf("pts:%s pts_time:%s dts:%s dts_time:%s duration:%s duration_time:%s stream_index:%d\n",av_ts2str(pkt->pts), av_ts2timestr(pkt->pts, time_base),av_ts2str(pkt->dts), av_ts2timestr(pkt->dts, time_base),av_ts2str(pkt->duration), av_ts2timestr(pkt->duration, time_base),pkt->stream_index);

}static int write_frame(AVFormatContext *fmt_ctx, AVCodecContext *c,AVStream *st, AVFrame *frame, AVPacket *pkt)

{int ret;// send the frame to the encoderret = avcodec_send_frame(c, frame);if (ret < 0) {fprintf(stderr, "Error sending a frame to the encoder: %s\n",av_err2str(ret));exit(1);}while (ret >= 0) {ret = avcodec_receive_packet(c, pkt);if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF)break;else if (ret < 0) {fprintf(stderr, "Error encoding a frame: %s\n", av_err2str(ret));exit(1);}/* rescale output packet timestamp values from codec to stream timebase */av_packet_rescale_ts(pkt, c->time_base, st->time_base);pkt->stream_index = st->index;/* Write the compressed frame to the media file. */log_packet(fmt_ctx, pkt);ret = av_interleaved_write_frame(fmt_ctx, pkt);/* pkt is now blank (av_interleaved_write_frame() takes ownership of* its contents and resets pkt), so that no unreferencing is necessary.* This would be different if one used av_write_frame(). */if (ret < 0) {fprintf(stderr, "Error while writing output packet: %s\n", av_err2str(ret));exit(1);}}return ret == AVERROR_EOF ? 1 : 0;

}/* Add an output stream. */

static void add_stream(OutputStream *ost, AVFormatContext *oc,const AVCodec **codec,enum AVCodecID codec_id)

{AVCodecContext *c;int i;/* find the encoder */*codec = avcodec_find_encoder(codec_id);if (!(*codec)) {fprintf(stderr, "Could not find encoder for '%s'\n",avcodec_get_name(codec_id));exit(1);}ost->tmp_pkt = av_packet_alloc();if (!ost->tmp_pkt) {fprintf(stderr, "Could not allocate AVPacket\n");exit(1);}ost->st = avformat_new_stream(oc, NULL);if (!ost->st) {fprintf(stderr, "Could not allocate stream\n");exit(1);}ost->st->id = oc->nb_streams-1;c = avcodec_alloc_context3(*codec);if (!c) {fprintf(stderr, "Could not alloc an encoding context\n");exit(1);}ost->enc = c;switch ((*codec)->type) {case AVMEDIA_TYPE_AUDIO:c->sample_fmt = (*codec)->sample_fmts ?(*codec)->sample_fmts[0] : AV_SAMPLE_FMT_FLTP;c->bit_rate = 64000;c->sample_rate = 44100;if ((*codec)->supported_samplerates) {c->sample_rate = (*codec)->supported_samplerates[0];for (i = 0; (*codec)->supported_samplerates[i]; i++) {if ((*codec)->supported_samplerates[i] == 44100)c->sample_rate = 44100;}}av_channel_layout_copy(&c->ch_layout, &(AVChannelLayout)AV_CHANNEL_LAYOUT_STEREO);ost->st->time_base = (AVRational){ 1, c->sample_rate };break;case AVMEDIA_TYPE_VIDEO:c->codec_id = codec_id;c->bit_rate = 400000;/* Resolution must be a multiple of two. */c->width = 352;c->height = 288;/* timebase: This is the fundamental unit of time (in seconds) in terms* of which frame timestamps are represented. For fixed-fps content,* timebase should be 1/framerate and timestamp increments should be* identical to 1. 
*/ost->st->time_base = (AVRational){ 1, STREAM_FRAME_RATE };c->time_base = ost->st->time_base;c->gop_size = 12; /* emit one intra frame every twelve frames at most */c->pix_fmt = STREAM_PIX_FMT;if (c->codec_id == AV_CODEC_ID_MPEG2VIDEO) {/* just for testing, we also add B-frames */c->max_b_frames = 2;}if (c->codec_id == AV_CODEC_ID_MPEG1VIDEO) {/* Needed to avoid using macroblocks in which some coeffs overflow.* This does not happen with normal video, it just happens here as* the motion of the chroma plane does not match the luma plane. */c->mb_decision = 2;}break;default:break;}/* Some formats want stream headers to be separate. */if (oc->oformat->flags & AVFMT_GLOBALHEADER)c->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

}/**************************************************************/

/* audio output */static AVFrame *alloc_audio_frame(enum AVSampleFormat sample_fmt,const AVChannelLayout *channel_layout,int sample_rate, int nb_samples)

{AVFrame *frame = av_frame_alloc();if (!frame) {fprintf(stderr, "Error allocating an audio frame\n");exit(1);}frame->format = sample_fmt;av_channel_layout_copy(&frame->ch_layout, channel_layout);frame->sample_rate = sample_rate;frame->nb_samples = nb_samples;if (nb_samples) {if (av_frame_get_buffer(frame, 0) < 0) {fprintf(stderr, "Error allocating an audio buffer\n");exit(1);}}return frame;

}static void open_audio(__attribute__((unused)) AVFormatContext *oc, const AVCodec *codec,OutputStream *ost, AVDictionary *opt_arg)

{AVCodecContext *c;int nb_samples;int ret;AVDictionary *opt = NULL;c = ost->enc;/* open it */av_dict_copy(&opt, opt_arg, 0);ret = avcodec_open2(c, codec, &opt);av_dict_free(&opt);if (ret < 0) {fprintf(stderr, "Could not open audio codec: %s\n", av_err2str(ret));exit(1);}/* init signal generator */ost->t = 0;ost->tincr = 2 * M_PI * 110.0 / c->sample_rate;/* increment frequency by 110 Hz per second */ost->tincr2 = 2 * M_PI * 110.0 / c->sample_rate / c->sample_rate;if (c->codec->capabilities & AV_CODEC_CAP_VARIABLE_FRAME_SIZE)nb_samples = 10000;elsenb_samples = c->frame_size;ost->frame = alloc_audio_frame(c->sample_fmt, &c->ch_layout,c->sample_rate, nb_samples);ost->tmp_frame = alloc_audio_frame(AV_SAMPLE_FMT_S16, &c->ch_layout,c->sample_rate, nb_samples);/* copy the stream parameters to the muxer */ret = avcodec_parameters_from_context(ost->st->codecpar, c);if (ret < 0) {fprintf(stderr, "Could not copy the stream parameters\n");exit(1);}/* create resampler context */ost->swr_ctx = swr_alloc();if (!ost->swr_ctx) {fprintf(stderr, "Could not allocate resampler context\n");exit(1);}/* set options */av_opt_set_chlayout (ost->swr_ctx, "in_chlayout", &c->ch_layout, 0);av_opt_set_int (ost->swr_ctx, "in_sample_rate", c->sample_rate, 0);av_opt_set_sample_fmt(ost->swr_ctx, "in_sample_fmt", AV_SAMPLE_FMT_S16, 0);av_opt_set_chlayout (ost->swr_ctx, "out_chlayout", &c->ch_layout, 0);av_opt_set_int (ost->swr_ctx, "out_sample_rate", c->sample_rate, 0);av_opt_set_sample_fmt(ost->swr_ctx, "out_sample_fmt", c->sample_fmt, 0);/* initialize the resampling context */if ((ret = swr_init(ost->swr_ctx)) < 0) {fprintf(stderr, "Failed to initialize the resampling context\n");exit(1);}

}/* Prepare a 16 bit dummy audio frame of 'frame_size' samples and* 'nb_channels' channels. */

static AVFrame *get_audio_frame(OutputStream *ost)

{AVFrame *frame = ost->tmp_frame;int j, i, v;int16_t *q = (int16_t*)frame->data[0];/* check if we want to generate more frames */if (av_compare_ts(ost->next_pts, ost->enc->time_base,STREAM_DURATION, (AVRational){ 1, 1 }) > 0)return NULL;for (j = 0; j <frame->nb_samples; j++) {v = (int)(sin(ost->t) * 10000);for (i = 0; i < ost->enc->ch_layout.nb_channels; i++)*q++ = v;ost->t += ost->tincr;ost->tincr += ost->tincr2;}frame->pts = ost->next_pts;ost->next_pts += frame->nb_samples;return frame;

}/** encode one audio frame and send it to the muxer* return 1 when encoding is finished, 0 otherwise*/

static int write_audio_frame(AVFormatContext *oc, OutputStream *ost)

{AVCodecContext *c;AVFrame *frame;int ret;int dst_nb_samples;c = ost->enc;frame = get_audio_frame(ost);if (frame) {/* convert samples from native format to destination codec format, using the resampler *//* compute destination number of samples */dst_nb_samples = av_rescale_rnd(swr_get_delay(ost->swr_ctx, c->sample_rate) + frame->nb_samples,c->sample_rate, c->sample_rate, AV_ROUND_UP);av_assert0(dst_nb_samples == frame->nb_samples);/* when we pass a frame to the encoder, it may keep a reference to it* internally;* make sure we do not overwrite it here*/ret = av_frame_make_writable(ost->frame);if (ret < 0)exit(1);/* convert to destination format */ret = swr_convert(ost->swr_ctx,ost->frame->data, dst_nb_samples,(const uint8_t **)frame->data, frame->nb_samples);if (ret < 0) {fprintf(stderr, "Error while converting\n");exit(1);}frame = ost->frame;frame->pts = av_rescale_q(ost->samples_count, (AVRational){1, c->sample_rate}, c->time_base);ost->samples_count += dst_nb_samples;}return write_frame(oc, c, ost->st, frame, ost->tmp_pkt);

}/**************************************************************/

/* video output */static AVFrame *alloc_frame(enum AVPixelFormat pix_fmt, int width, int height)

{AVFrame *frame;int ret;frame = av_frame_alloc();if (!frame)return NULL;frame->format = pix_fmt;frame->width = width;frame->height = height;/* allocate the buffers for the frame data */ret = av_frame_get_buffer(frame, 0);if (ret < 0) {fprintf(stderr, "Could not allocate frame data.\n");exit(1);}return frame;

}static void open_video(__attribute__((unused)) AVFormatContext *oc, const AVCodec *codec,OutputStream *ost, AVDictionary *opt_arg)

{int ret;AVCodecContext *c = ost->enc;AVDictionary *opt = NULL;av_dict_copy(&opt, opt_arg, 0);/* open the codec */ret = avcodec_open2(c, codec, &opt);av_dict_free(&opt);if (ret < 0) {fprintf(stderr, "Could not open video codec: %s\n", av_err2str(ret));exit(1);}/* allocate and init a re-usable frame */ost->frame = alloc_frame(c->pix_fmt, c->width, c->height);if (!ost->frame) {fprintf(stderr, "Could not allocate video frame\n");exit(1);}/* If the output format is not YUV420P, then a temporary YUV420P* picture is needed too. It is then converted to the required* output format. */ost->tmp_frame = NULL;if (c->pix_fmt != AV_PIX_FMT_YUV420P) {ost->tmp_frame = alloc_frame(AV_PIX_FMT_YUV420P, c->width, c->height);if (!ost->tmp_frame) {fprintf(stderr, "Could not allocate temporary video frame\n");exit(1);}}/* copy the stream parameters to the muxer */ret = avcodec_parameters_from_context(ost->st->codecpar, c);if (ret < 0) {fprintf(stderr, "Could not copy the stream parameters\n");exit(1);}

}/* Prepare a dummy image. */

static void fill_yuv_image(AVFrame *pict, int frame_index,int width, int height)

{int x, y, i;i = frame_index;/* Y */for (y = 0; y < height; y++)for (x = 0; x < width; x++)pict->data[0][y * pict->linesize[0] + x] = x + y + i * 3;/* Cb and Cr */for (y = 0; y < height / 2; y++) {for (x = 0; x < width / 2; x++) {pict->data[1][y * pict->linesize[1] + x] = 128 + y + i * 2;pict->data[2][y * pict->linesize[2] + x] = 64 + x + i * 5;}}

}static AVFrame *get_video_frame(OutputStream *ost)

{AVCodecContext *c = ost->enc;/* check if we want to generate more frames */if (av_compare_ts(ost->next_pts, c->time_base,STREAM_DURATION, (AVRational){ 1, 1 }) > 0)return NULL;/* when we pass a frame to the encoder, it may keep a reference to it* internally; make sure we do not overwrite it here */if (av_frame_make_writable(ost->frame) < 0)exit(1);if (c->pix_fmt != AV_PIX_FMT_YUV420P) {/* as we only generate a YUV420P picture, we must convert it* to the codec pixel format if needed */if (!ost->sws_ctx) {ost->sws_ctx = sws_getContext(c->width, c->height,AV_PIX_FMT_YUV420P,c->width, c->height,c->pix_fmt,SCALE_FLAGS, NULL, NULL, NULL);if (!ost->sws_ctx) {fprintf(stderr,"Could not initialize the conversion context\n");exit(1);}}fill_yuv_image(ost->tmp_frame, ost->next_pts, c->width, c->height);sws_scale(ost->sws_ctx, (const uint8_t * const *) ost->tmp_frame->data,ost->tmp_frame->linesize, 0, c->height, ost->frame->data,ost->frame->linesize);} else {fill_yuv_image(ost->frame, ost->next_pts, c->width, c->height);}ost->frame->pts = ost->next_pts++;return ost->frame;

}/** encode one video frame and send it to the muxer* return 1 when encoding is finished, 0 otherwise*/

static int write_video_frame(AVFormatContext *oc, OutputStream *ost)

{return write_frame(oc, ost->enc, ost->st, get_video_frame(ost), ost->tmp_pkt);

}static void close_stream(__attribute__((unused)) AVFormatContext *oc, OutputStream *ost)

{avcodec_free_context(&ost->enc);av_frame_free(&ost->frame);av_frame_free(&ost->tmp_frame);av_packet_free(&ost->tmp_pkt);sws_freeContext(ost->sws_ctx);swr_free(&ost->swr_ctx);

}/**************************************************************/

/* media file output */int main(int argc, char **argv)

{OutputStream video_st = { 0 }, audio_st = { 0 };const AVOutputFormat *fmt;const char *filename;AVFormatContext *oc;const AVCodec *audio_codec, *video_codec;int ret;int have_video = 0, have_audio = 0;int encode_video = 0, encode_audio = 0;AVDictionary *opt = NULL;int i;if (argc < 2) {printf("usage: %s output_file\n""API example program to output a media file with libavformat.\n""This program generates a synthetic audio and video stream, encodes and\n""muxes them into a file named output_file.\n""The output format is automatically guessed according to the file extension.\n""Raw images can also be output by using '%%d' in the filename.\n""\n", argv[0]);return 1;}filename = argv[1];for (i = 2; i+1 < argc; i+=2) {if (!strcmp(argv[i], "-flags") || !strcmp(argv[i], "-fflags"))av_dict_set(&opt, argv[i]+1, argv[i+1], 0);}/* allocate the output media context */avformat_alloc_output_context2(&oc, NULL, NULL, filename);if (!oc) {printf("Could not deduce output format from file extension: using MPEG.\n");avformat_alloc_output_context2(&oc, NULL, "mpeg", filename);}if (!oc)return 1;fmt = oc->oformat;/* Add the audio and video streams using the default format codecs* and initialize the codecs. */if (fmt->video_codec != AV_CODEC_ID_NONE) {add_stream(&video_st, oc, &video_codec, fmt->video_codec);have_video = 1;encode_video = 1;}if (fmt->audio_codec != AV_CODEC_ID_NONE) {add_stream(&audio_st, oc, &audio_codec, fmt->audio_codec);have_audio = 1;encode_audio = 1;}/* Now that all the parameters are set, we can open the audio and* video codecs and allocate the necessary encode buffers. 
*/if (have_video)open_video(oc, video_codec, &video_st, opt);if (have_audio)open_audio(oc, audio_codec, &audio_st, opt);av_dump_format(oc, 0, filename, 1);/* open the output file, if needed */if (!(fmt->flags & AVFMT_NOFILE)) {ret = avio_open(&oc->pb, filename, AVIO_FLAG_WRITE);if (ret < 0) {fprintf(stderr, "Could not open '%s': %s\n", filename,av_err2str(ret));return 1;}}/* Write the stream header, if any. */ret = avformat_write_header(oc, &opt);if (ret < 0) {fprintf(stderr, "Error occurred when opening output file: %s\n",av_err2str(ret));return 1;}while (encode_video || encode_audio) {/* select the stream to encode */if (encode_video &&(!encode_audio || av_compare_ts(video_st.next_pts, video_st.enc->time_base,audio_st.next_pts, audio_st.enc->time_base) <= 0)) {encode_video = !write_video_frame(oc, &video_st);} else {encode_audio = !write_audio_frame(oc, &audio_st);}}av_write_trailer(oc);/* Close each codec. */if (have_video)close_stream(oc, &video_st);if (have_audio)close_stream(oc, &audio_st);if (!(fmt->flags & AVFMT_NOFILE))/* Close the output file. */avio_closep(&oc->pb);/* free the stream */avformat_free_context(oc);return 0;

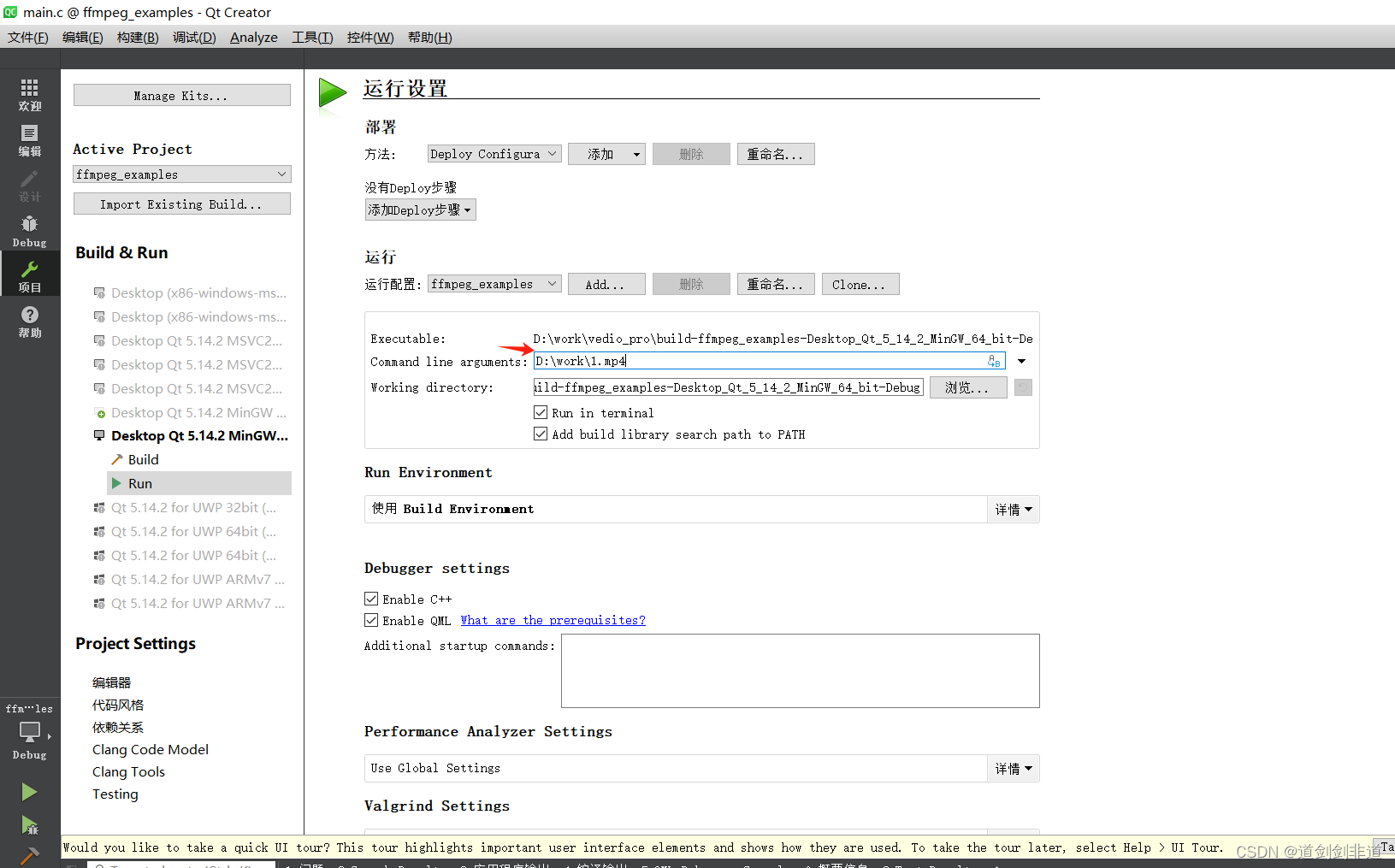

}在项目 cmd 位置输入 参数

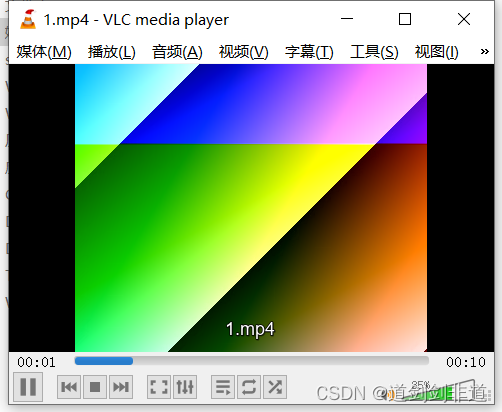

After the program runs, play the generated file with a media player.

To be continued...

相关文章:

【音视频 ffmpeg 学习】 跑示例程序 持续更新中

环境准备 在上一篇文章 把mux.c 拷贝到main.c 中 使用 attribute(unused) 消除警告 __attribute__(unused)/** Copyright (c) 2003 Fabrice Bellard** Permission is hereby granted, free of charge, to any person obtaining a copy* of this software and associated docu…...

前端axios与python库requests的区别

当涉及到发送HTTP请求时,Axios和Python中的requests库都是常用的工具。下面是它们的详细说明: Axios: Axios是一个基于Promise的HTTP客户端,主要用于浏览器和Node.js环境中发送HTTP请求。以下是Axios的一些特点和用法࿱…...

达梦数据库文档

1:达梦数据库(DM8)简介 达梦数据库管理系统是武汉达梦公司推出的具有完全自主知识产权的高性能数据库管理系统,简称DM。达梦数据库管理系统目前最新的版本是8.0版本,简称DM8。 DM8是达梦公司在总结DM系列产品研发与应用经验的基础上…...

CorelDRAW2024新功能有哪些?CorelDRAW2024最新版本更新怎么样?

CorelDRAW2024新功能有哪些?CorelDRAW2024最新版本更新怎么样?让我们带您详细了解! CorelDRAW Graphics Suite 是矢量制图行业的标杆软件,2024年全新版本为您带来多项新功能和优化改进。本次更新强调易用性,包括更强大…...

基于Mapify的在线艺术地图设计

地图是传递空间信息的有效载体,更加美观、生动的地图产品也是我们追求目标。 那么,我们如何才能制出如下图所示这样一幅艺术性较高的地图呢?今天我们来一探究竟吧! 按照惯例,现将网址给出: https://www.m…...

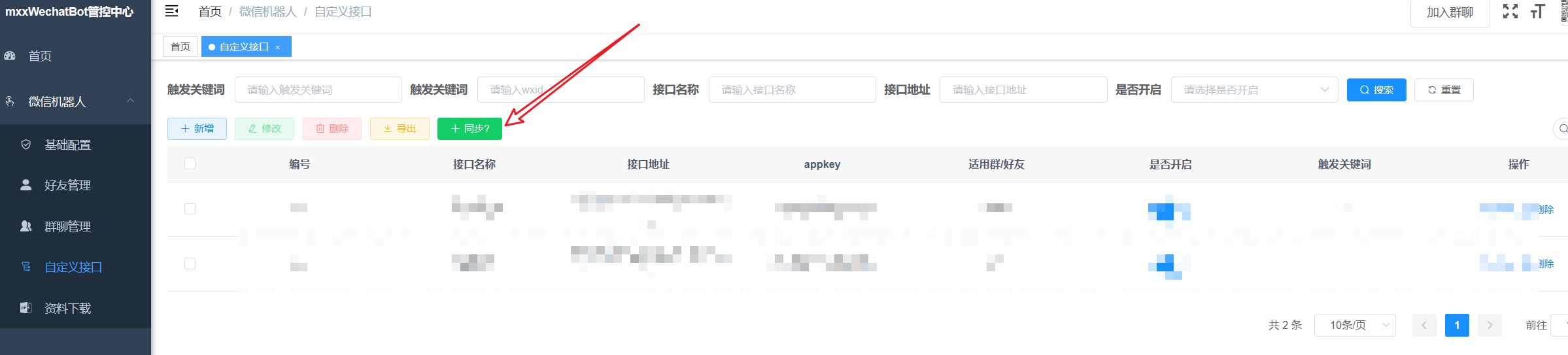

mxxWechatBot微信机器人V2版本文档说明

大家伙,我是雄雄,欢迎关注微信公众号:雄雄的小课堂。 先看这里 一、前言二、mxxWechatBot流程图三、怎么使用? 一、前言 经过不断地探索与研究,mxxWechatBot正式上线,届时全面开放使用。 mxxWechatBot&am…...

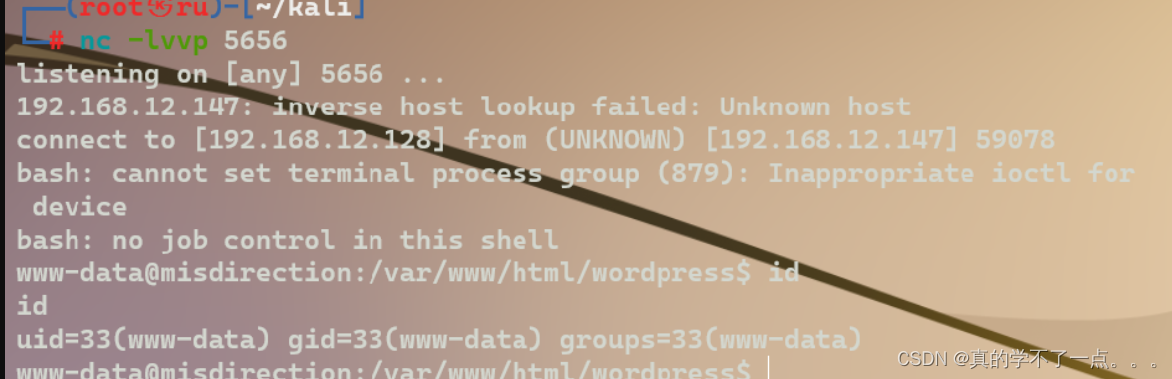

红队打靶练习:MISDIRECTION: 1

信息收集 1、arp ┌──(root㉿ru)-[~/kali] └─# arp-scan -l Interface: eth0, type: EN10MB, MAC: 00:0c:29:69:c7:bf, IPv4: 192.168.12.128 Starting arp-scan 1.10.0 with 256 hosts (https://github.com/royhills/arp-scan) 192.168.12.1 00:50:56:c0:00:08 …...

Jmeter吞吐量控制器总结

吞吐量控制器(Throughput Controller) 场景: 在同一个线程组里, 有10个并发, 7个做A业务, 3个做B业务,要模拟这种场景,可以通过吞吐量模拟器来实现。 添加吞吐量控制器 用法1: Percent Executions 在一个线程组内分别建立两个吞吐量控制器, 分别放业务A和业务B …...

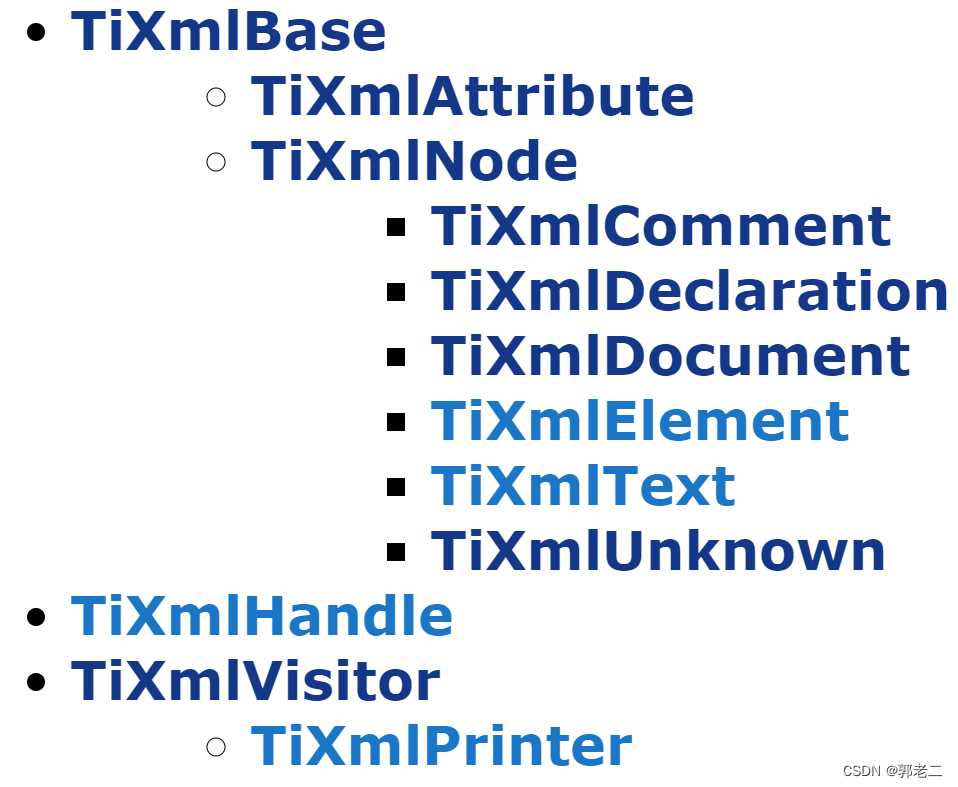

【XML】TinyXML 详解(二):接口详解

【C】郭老二博文之:C目录 1、XML测试文件(laoer.xml) <?xml version"1.0" standalone"no" ?> <!-- Hello World !--> <root><child name"childName" id"1"><c_child…...

【机器学习】人工智能概述

人工智能(Artificial Intelligence,简称AI)是一门研究如何使机器能够像人一样思考、学习和执行任务的学科。它是计算机科学的一个重要分支,涉及机器学习、自然语言处理、计算机视觉等多个领域。 人工智能的概念最早可以追溯到20世…...

flink 实时写入 hudi 参数推荐

数据湖任务并行度计算...

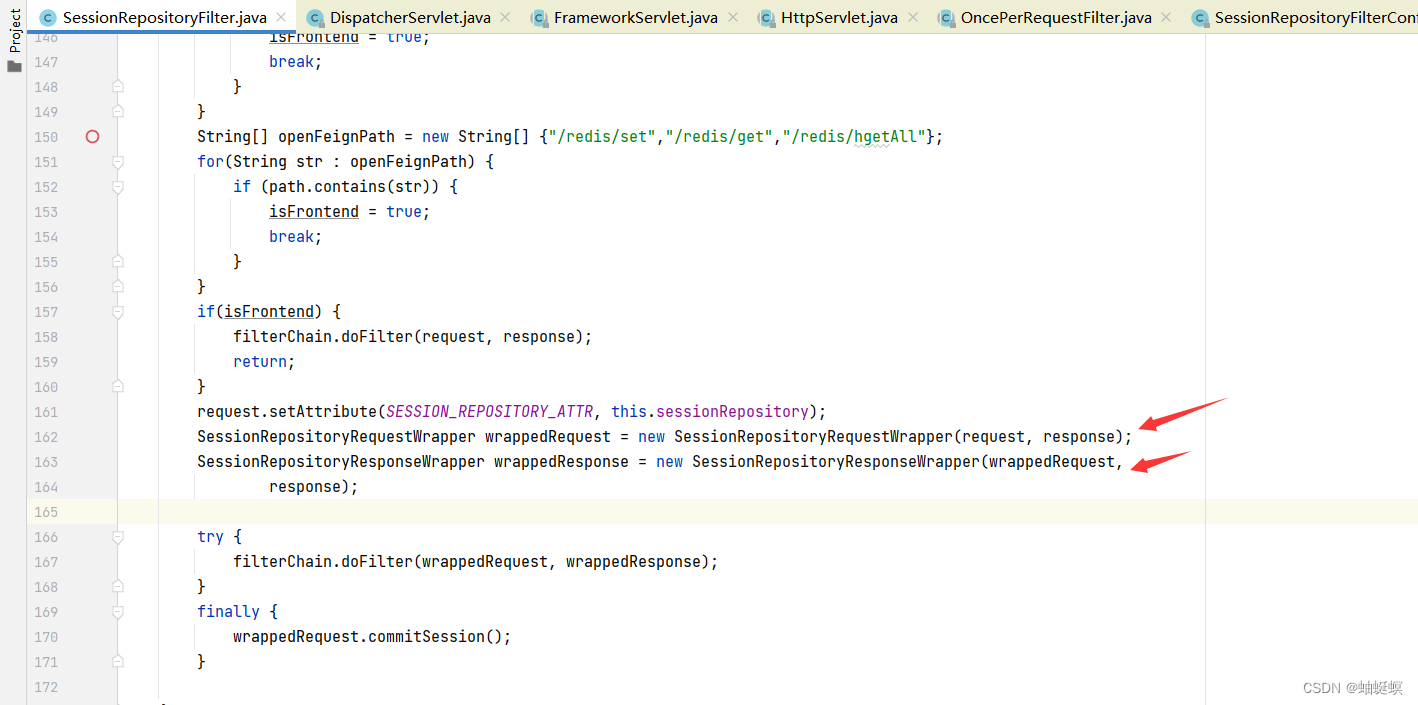

传统项目基于tomcat cookie单体会话升级分布式会话解决方案

传统捞项目基于servlet容器 cookie单体会话改造分布式会话方案 ##引入redis,spring-session依赖 <!--redis依赖 --><dependency><groupId>org.springframework.boot</groupId><artifactId>spring-boot-starter-data-redis</artifactId>&…...

)

Unity 关于json数据的解析方式(LitJson.dll插件)

关于json数据的解析方式(LitJson.dll插件) void ParseItemJson(){TextAsset itemText Resources.Load<TextAsset>("Items");//读取Resources中Items文件,需要将Items文件放到Resources文件夹中string itemJson itemText.te…...

智能监控平台/视频共享融合系统EasyCVR海康设备国标GB28181接入流程

TSINGSEE青犀视频监控汇聚平台EasyCVR可拓展性强、视频能力灵活、部署轻快,可支持的主流标准协议有国标GB28181、RTSP/Onvif、RTMP等,以及支持厂家私有协议与SDK接入,包括海康Ehome、海大宇等设备的SDK等。平台既具备传统安防视频监控的能力&…...

expdp到ASM 文件系统 并拷贝

1.创建asm导出数据目录 sql>select name,total_mb,free_mb from v$asm_diskgroup; 确认集群asm磁盘组环境 asmcmd>cd DGDSDB asmcmd>mkdir dpbak asmcmd>ls -l sql>conn / as sysdba create directory expdp_asm_dir as DGDSDB/dpbak; create directory expdp_l…...

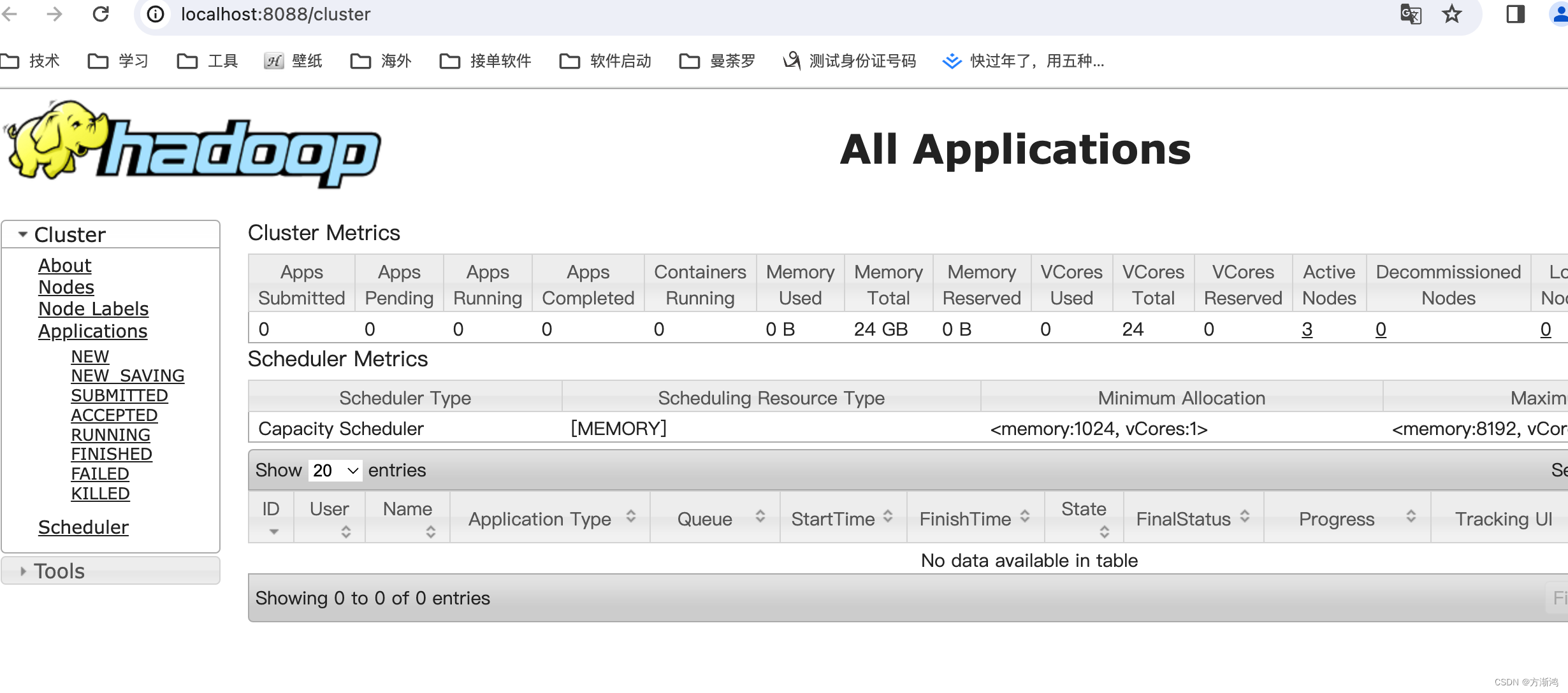

【2023】通过docker安装hadoop以及常见报错

💻目录 1、准备2、安装镜像2.1、创建centos-ssh的镜像2.2、创建hadoop的镜像 3、配置ssh网络3.1、搭建同一网段的网络3.2、配置host实现互相之间可以免密登陆3.3、查看是否成功 4、安装配置Hadoop4.1、添加存储文件夹4.2、添加指定配置4.3、同步数据 5、测试启动5.1…...

Baumer工业相机堡盟工业相机如何通过NEOAPI SDK获取相机当前实时帧率(C++)

Baumer工业相机堡盟工业相机如何通过NEOAPI SDK获取相机当前实时帧率(C) Baumer工业相机Baumer工业相机的帧率的技术背景Baumer工业相机的帧率获取方式CameraExplorer如何查看相机帧率信息在NEOAPI SDK里通过函数获取相机帧率(C) …...

SpringBoot项目部署及多环境

1、多环境 2、项目部署上线 原始前端 / 后端项目宝塔Linux容器容器平台 3、前后端联调 4、项目扩展和规划 多环境 程序员鱼皮-参考文章 本地开发:localhost(127.0.0.1) 多环境:指同一套项目代码在把不同的阶段需要根据实际…...

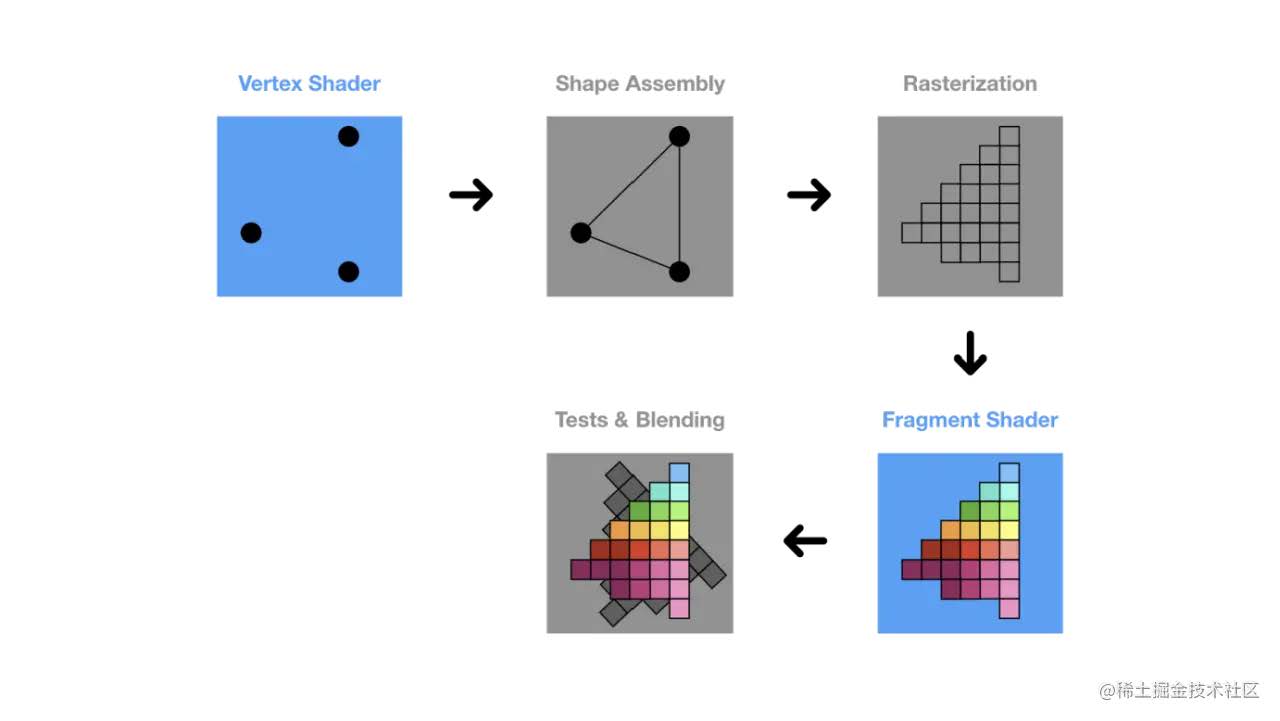

WebGL以及wasm的介绍以及简单应用

简介 下面主要介绍了WebGL和wasm,是除了html,css,js以外Web标准所支持的另外两个大件 前者实现复杂的图形处理,后者提供高效的代码迁移以及代码执行效率 WebGL 简介 首先,浏览器里的游戏是怎么做到这种交互又显示不同的画面的? 试想用我们的前端三件套实现一下.好像可以…...

JS和TS的基础语法学习以及babel的基本使用

简介 本文主要介绍了一下js和ts的基础语法,为前端开发zuo JavaScript 更详细的 JavaScript 学习资料:https://developer.mozilla.org/zh-CN/docs/Web/JavaScript 简介 定位 : JavaScript 是一种动态语言,它包含类型、运算符、标准内置( bu…...

别再被Linux的free命令骗了!手把手教你读懂‘可用内存’和‘实际空闲内存’的区别

别再被Linux的free命令骗了!手把手教你读懂‘可用内存’和‘实际空闲内存’的区别 刚接触Linux服务器管理时,看到free -m输出里那个触目惊心的"free"数值,我的第一反应是:"天哪,内存快用完了࿰…...

开源协作平台Penny:为女性开发者打造包容性技术社区

1. 项目概述:一个为女性开发者量身定制的开源协作平台最近在GitHub上闲逛,发现了一个挺有意思的项目,叫“WomenBuilt/penny”。光看这个名字,你可能会有点摸不着头脑,这“penny”是啥?一个记账应用…...

RHClaw红队工具集:模块化CLI框架提升安全研究效率

1. 项目概述与核心价值最近在和一些做安全研究的朋友交流时,发现一个挺有意思的现象:大家手里或多或少都攒了一些自己写的、或者从开源社区淘来的“小工具”。这些工具往往功能单一但极其锋利,比如一个专门用来解析特定协议头的脚本ÿ…...

基于TEA加密的QQ号码逆向查询技术实现

基于TEA加密的QQ号码逆向查询技术实现 【免费下载链接】phone2qq 项目地址: https://gitcode.com/gh_mirrors/ph/phone2qq 在数字身份管理领域,用户经常面临忘记QQ号码但记得绑定手机号的情况。传统找回方式依赖官方验证流程,耗时较长且操作复杂…...

DroidCam OBS插件终极指南:零成本将手机变身高清直播摄像头

DroidCam OBS插件终极指南:零成本将手机变身高清直播摄像头 【免费下载链接】droidcam-obs-plugin DroidCam OBS Source 项目地址: https://gitcode.com/gh_mirrors/dr/droidcam-obs-plugin 还在为专业直播设备价格昂贵而烦恼?想用手机摄像头获得…...

Linux系统级音频处理:JDSP4Linux架构、DSP效果器与实战调音指南

1. 项目概述:从“听个响”到“听个准”的桌面音频革命如果你是一个对电脑音质有追求的Linux用户,或者是一个音频领域的开发者,那么你很可能经历过这样的困扰:系统自带的音频管理就像个“大锅饭”,所有声音都混在一起&a…...

)

从IMU到GPS:手把手教你用ESKF实现机器人定位(附代码避坑指南)

从IMU到GPS:手把手教你用ESKF实现机器人定位(附代码避坑指南) 在机器人定位领域,误差状态卡尔曼滤波(Error-State Kalman Filter, ESKF)正逐渐成为处理IMU和GPS数据融合的主流方法。本文将带您深入理解ESK…...

GIS制图必备:GlobalMapper 20制作1:100万标准图幅的完整指南与命名规则详解

GIS制图实战:GlobalMapper 20标准图幅生成与命名规范全解析 在测绘与地理信息行业,标准图幅不仅是数据管理的基石,更是跨部门协作的通用语言。当我们面对1:100万比例尺的地形图分幅时,每一个经纬网格的划分、每一组编号的生成&…...

工程师着装文化变迁:从安全规范到效率优化

1. 项目概述:从“着装规范”到工程师文化观察那天早上,我像往常一样,准备去马萨诸塞州纳蒂克的MathWorks公司拜访。出门前,我习惯性地套上了长裤。七月的波士顿,夏天终于姗姗来迟,气温宜人,其实…...

谷歌账户注册改用发短信验证,注重隐私者如何创建新账户成焦点?

谷歌账户注册方式变更 2026年3月8日下午2点20分,anon28387880称谷歌创建新账户时用二维码取代短信验证,自己试过无法再用二维码注册。扫描智能手机二维码会触发手机向谷歌发短信验证手机号。据说这是为安全考虑,能增加钓鱼难度,但…...