院子摄像头的监控

院子摄像头的监控和禁止区域入侵检测相比,多了2个功能:1)如果检测到有人入侵,则把截图保存起来,2)如果检测到有人入侵,则向数据库插入一条事件数据。

打开checkingfence.py,添加如下代码:

# -*- coding: utf-8 -*-'''

禁止区域检测主程序

摄像头对准围墙那一侧用法:

python checkingfence.py

python checkingfence.py --filename tests/yard_01.mp4

'''# import the necessary packages

from oldcare.track import CentroidTracker

from oldcare.track import TrackableObject

from imutils.video import FPS

import numpy as np

import imutils

import argparse

import time

import dlib

import cv2

import os

import subprocess# 得到当前时间

current_time = time.strftime('%Y-%m-%d %H:%M:%S',time.localtime(time.time()))

print('[INFO] %s 禁止区域检测程序启动了.'%(current_time))# 传入参数

ap = argparse.ArgumentParser()

ap.add_argument("-f", "--filename", required=False, default = '',help="")

args = vars(ap.parse_args())# 全局变量

prototxt_file_path='models/mobilenet_ssd/MobileNetSSD_deploy.prototxt'

# Contains the Caffe deep learning model files.

#We’ll be using a MobileNet Single Shot Detector (SSD),

#“Single Shot Detectors for object detection”.

model_file_path='models/mobilenet_ssd/MobileNetSSD_deploy.caffemodel'

output_fence_path = 'supervision/fence'

input_video = args['filename']

skip_frames = 30 # of skip frames between detections

# your python path

python_path = '/home/reed/anaconda3/envs/tensorflow/bin/python' # 超参数

# minimum probability to filter weak detections

minimum_confidence = 0.80 # 物体识别模型能识别的物体(21种)

CLASSES = ["background", "aeroplane", "bicycle", "bird", "boat","bottle", "bus", "car", "cat", "chair", "cow", "diningtable","dog", "horse", "motorbike", "person", "pottedplant", "sheep","sofa", "train", "tvmonitor"]# if a video path was not supplied, grab a reference to the webcam

if not input_video:print("[INFO] starting video stream...")vs = cv2.VideoCapture(0)time.sleep(2)

else:print("[INFO] opening video file...")vs = cv2.VideoCapture(input_video)# 加载物体识别模型

print("[INFO] loading model...")

net = cv2.dnn.readNetFromCaffe(prototxt_file_path, model_file_path)# initialize the frame dimensions (we'll set them as soon as we read

# the first frame from the video)

W = None

H = None# instantiate our centroid tracker, then initialize a list to store

# each of our dlib correlation trackers, followed by a dictionary to

# map each unique object ID to a TrackableObject

ct = CentroidTracker(maxDisappeared=40, maxDistance=50)

trackers = []

trackableObjects = {}# initialize the total number of frames processed thus far, along

# with the total number of objects that have moved either up or down

totalFrames = 0

totalDown = 0

totalUp = 0# start the frames per second throughput estimator

fps = FPS().start()# loop over frames from the video stream

while True:# grab the next frame and handle if we are reading from either# VideoCapture or VideoStreamret, frame = vs.read()# if we are viewing a video and we did not grab a frame then we# have reached the end of the videoif input_video and not ret:breakif not input_video:frame = cv2.flip(frame, 1)# resize the frame to have a maximum width of 500 pixels (the# less data we have, the faster we can process it), then convert# the frame from BGR to RGB for dlibframe = imutils.resize(frame, width=500)rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)# if the frame dimensions are empty, set themif W is None or H is None:(H, W) = frame.shape[:2]# initialize the current status along with our list of bounding# box rectangles returned by either (1) our object detector or# (2) the correlation trackersstatus = "Waiting"rects = []# check to see if we should run a more computationally expensive# object detection method to aid our trackerif totalFrames % skip_frames == 0:# set the status and initialize our new set of object trackersstatus = "Detecting"trackers = []# convert the frame to a blob and pass the blob through the# network and obtain the detectionsblob = cv2.dnn.blobFromImage(frame, 0.007843, (W, H), 127.5)net.setInput(blob)detections = net.forward()# loop over the detectionsfor i in np.arange(0, detections.shape[2]):# extract the confidence (i.e., probability) associated# with the predictionconfidence = detections[0, 0, i, 2]# filter out weak detections by requiring a minimum# confidenceif confidence > minimum_confidence:# extract the index of the class label from the# detections listidx = int(detections[0, 0, i, 1])# if the class label is not a person, ignore itif CLASSES[idx] != "person":continue# compute the (x, y)-coordinates of the bounding box# for the objectbox = detections[0, 0, i, 3:7]*np.array([W, H, W, H])(startX, startY, endX, endY) = box.astype("int")# construct a dlib rectangle object from the bounding# box coordinates and then start the dlib correlation# trackertracker = dlib.correlation_tracker()rect = dlib.rectangle(startX, startY, endX, endY)tracker.start_track(rgb, rect)# add the tracker to our list of trackers so we can# utilize it during skip framestrackers.append(tracker)# otherwise, we should utilize our object *trackers* rather than#object *detectors* to obtain a higher frame processing throughputelse:# loop over the trackersfor tracker in trackers:# set the status of our system to be 'tracking' rather# than 'waiting' or 'detecting'status = "Tracking"# update the tracker and grab the updated positiontracker.update(rgb)pos = tracker.get_position()# unpack the position objectstartX = int(pos.left())startY = int(pos.top())endX = int(pos.right())endY = int(pos.bottom())# draw a rectangle around the peoplecv2.rectangle(frame, (startX, startY), (endX, endY),(0, 255, 0), 2)# add the bounding box coordinates to the rectangles listrects.append((startX, startY, endX, endY))# draw a horizontal line in the center of the frame -- once an# object crosses this line we will determine whether they were# moving 'up' or 'down'cv2.line(frame, (0, H // 2), (W, H // 2), (0, 255, 255), 2)# use the centroid tracker to associate the (1) old object# centroids with (2) the newly computed object centroidsobjects = ct.update(rects)# loop over the tracked objectsfor (objectID, centroid) in objects.items():# check to see if a trackable object exists for the current# object IDto = trackableObjects.get(objectID, None)# if there is no existing trackable object, create oneif to is None:to = TrackableObject(objectID, centroid)# otherwise, there is a trackable object so we can utilize it# to determine directionelse:# the difference between the y-coordinate of the *current*# centroid and the mean of *previous* centroids will tell# us in which direction the object is moving (negative for# 'up' and positive for 'down')y = [c[1] for c in to.centroids]direction = centroid[1] - np.mean(y)to.centroids.append(centroid)# check to see if the object has been counted or notif not to.counted:# if the direction is negative (indicating the object# is moving up) AND the centroid is above the center# line, count the objectif direction < 0 and centroid[1] < H // 2:totalUp += 1to.counted = True# if the direction is positive (indicating the object# is moving down) AND the centroid is below the# center line, count the objectelif direction > 0 and centroid[1] > H // 2:totalDown += 1to.counted = Truecurrent_time = time.strftime('%Y-%m-%d %H:%M:%S',time.localtime(time.time()))event_desc = '有人闯入禁止区域!!!'event_location = '院子'print('[EVENT] %s, 院子, 有人闯入禁止区域!!!' %(current_time))cv2.imwrite(os.path.join(output_fence_path, 'snapshot_%s.jpg' %(time.strftime('%Y%m%d_%H%M%S'))), frame)# insert into databasecommand = '%s inserting.py --event_desc %s --event_type 4 --event_location %s' %(python_path, event_desc, event_location)p = subprocess.Popen(command, shell=True) # store the trackable object in our dictionarytrackableObjects[objectID] = to# draw both the ID of the object and the centroid of the# object on the output frametext = "ID {}".format(objectID)cv2.putText(frame, text, (centroid[0] - 10, centroid[1] - 10),cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 255, 0), 2)cv2.circle(frame, (centroid[0], centroid[1]), 4, (0, 255, 0), -1)# construct a tuple of information we will be displaying on the# frameinfo = [#("Up", totalUp),("Down", totalDown),("Status", status),]# loop over the info tuples and draw them on our framefor (i, (k, v)) in enumerate(info):text = "{}: {}".format(k, v)cv2.putText(frame, text, (10, H - ((i * 20) + 20)),cv2.FONT_HERSHEY_SIMPLEX, 0.6, (0, 0, 255), 2)# show the output framecv2.imshow("Prohibited Area", frame)k = cv2.waitKey(1) & 0xff if k == 27:break# increment the total number of frames processed thus far and# then update the FPS countertotalFrames += 1fps.update()# stop the timer and display FPS information

fps.stop()

print("[INFO] elapsed time: {:.2f}".format(fps.elapsed())) # 14.19

print("[INFO] approx. FPS: {:.2f}".format(fps.fps())) # 90.43# close any open windows

vs.release()

cv2.destroyAllWindows()执行下行命令即可运行程序:

python checkingfence.py --filename tests/yard_01.mp4同学们如果可以把摄像头挂在高处,也可以通过摄像头捕捉画面。程序运行方式如下:

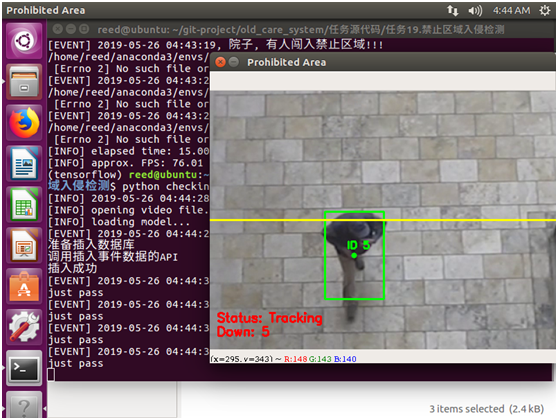

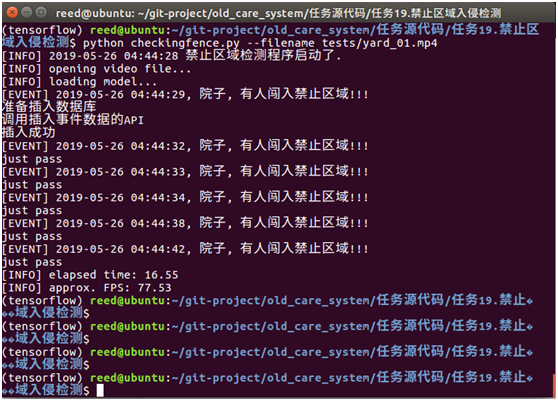

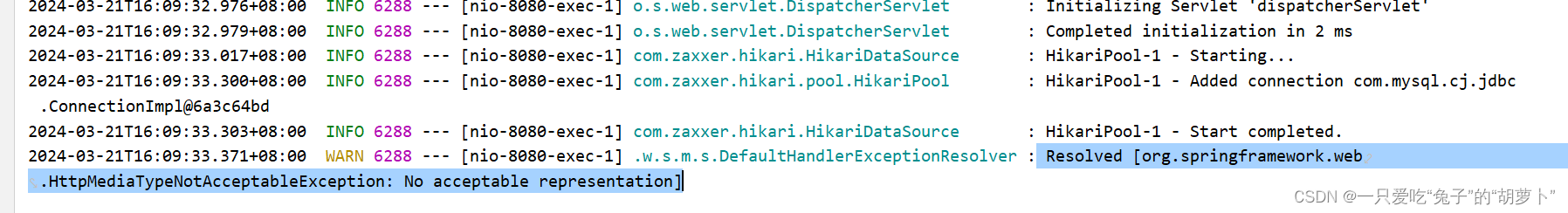

python checkingfence.py程序运行结果如下图:

图1 程序运行效果

图2 程序运行控制台的输出

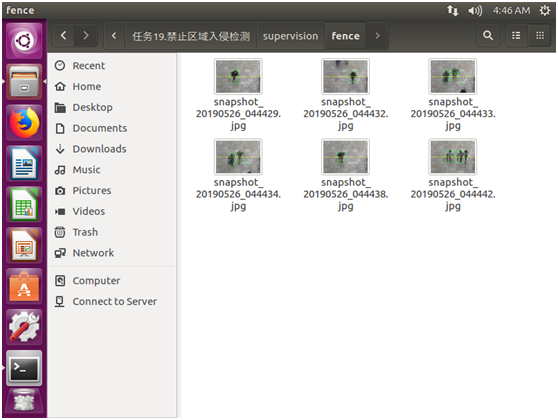

supervision/fence目录下出现了入侵的截图。

图3 入侵截图被保存

相关文章:

院子摄像头的监控

院子摄像头的监控和禁止区域入侵检测相比,多了2个功能:1)如果检测到有人入侵,则把截图保存起来,2)如果检测到有人入侵,则向数据库插入一条事件数据。 打开checkingfence.py,添加如下…...

SpringBoot3使用响应Result类返回的响应状态码为406

Resolved [org.springframework.web.HttpMediaTypeNotAcceptableException: No acceptable representation] 解决方法:Result类上加上Data注解...

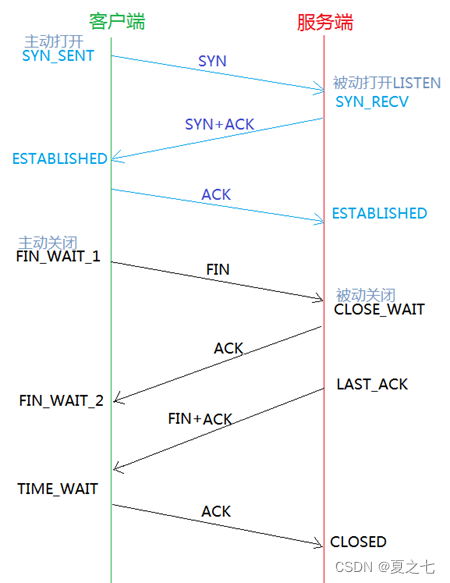

基础:TCP四次挥手做了什么,为什么要挥手?

1. TCP 四次挥手在做些什么 1. 第一次挥手 : 1)挥手作用:主机1发送指令告诉主机2,我没有数据发送给你了。 2)数据处理:主机1(可以是客户端,也可以是服务端),…...

Android Studio实现内容丰富的安卓校园二手交易平台(带聊天功能)

获取源码请点击文章末尾QQ名片联系,源码不免费,尊重创作,尊重劳动 项目编号083 1.开发环境android stuido jdk1.8 eclipse mysql tomcat 2.功能介绍 安卓端: 1.注册登录 2.查看二手商品列表 3.发布二手商品 4.商品详情 5.聊天功能…...

第十一届蓝桥杯省赛第一场真题

2065. 整除序列 - AcWing题库 #include <bits/stdc.h> using namespace std; #define int long long//记得开long long void solve(){int n;cin>>n;while(n){cout<<n<< ;n/2;} } signed main(){int t1;while(t--)solve();return 0; } 2066. 解码 - …...

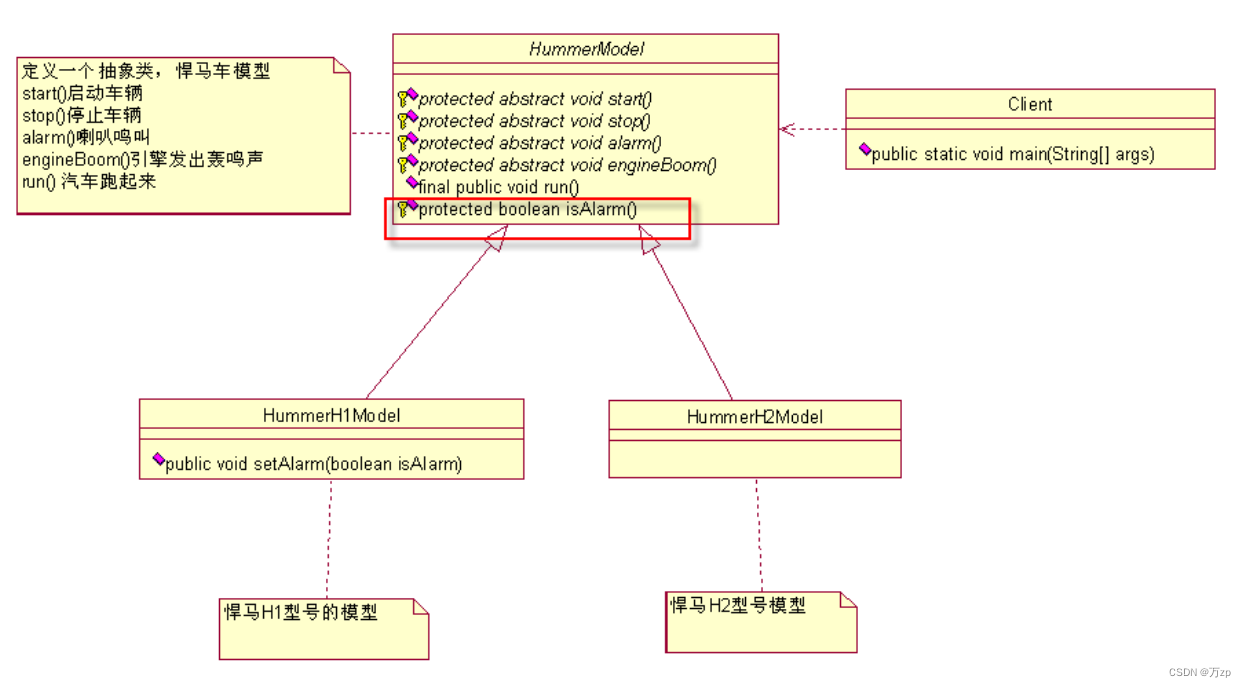

设计模式 模板方法模式

01.如果接到一个任务,要求设计不同型号的悍马车 02.设计一个悍马车的抽象类(模具,车模) public abstract class HummerModel {/** 首先,这个模型要能够被发动起来,别管是手摇发动,还是电力发动…...

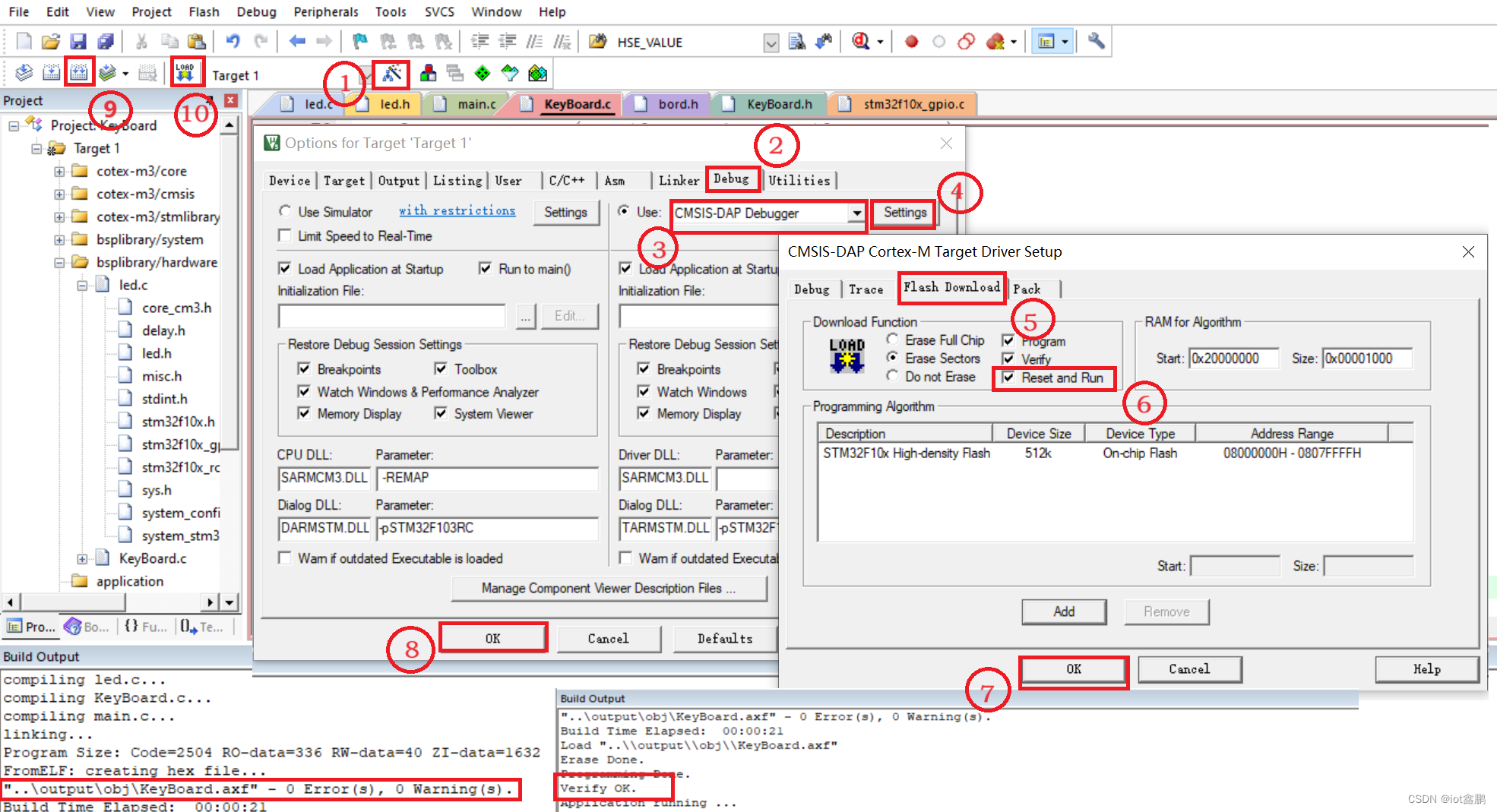

【STM32嵌入式系统设计与开发】——6矩阵按键应用(4x4)

这里写目录标题 一、任务描述二、任务实施1、SingleKey工程文件夹创建2、函数编辑(1)主函数编辑(2)LED IO初始化函数(LED_Init())(3)开发板矩阵键盘IO初始化(ExpKeyBordInit())&…...

乐优商城(九)数据同步RabbitMQ

1. 项目问题分析 现在项目中有三个独立的微服务: 商品微服务:原始数据保存在 MySQL 中,从 MySQL 中增删改查商品数据。搜索微服务:原始数据保存在 ES 的索引库中,从 ES 中查询商品数据。商品详情微服务:做…...

XSS-labs详解

xss-labs下载地址https://github.com/do0dl3/xss-labs 进入靶场点击图片,开始我们的XSS之旅! Less-1 查看源码 代码从 URL 的 GET 参数中取得 "name" 的值,然后输出一个居中的标题,内容是 "欢迎用户" 后面…...

设计模式——模板方法模式封装.net Core读取不同类型的文件

1、模板方法模式 模板方法模式:定义一个操作中的算法骨架,而将一些步骤延迟到子类中,模板方法使得子类可以不改变一个算法的结构即可重定义该算法的某些特定步骤。 特点:通过把不变的行为搬移到超类,去除子类中重复的代…...

[思考记录]技术欠账

最近对某开发项目做回顾梳理,除了进一步思考整理相关概念和问题外,一个重要的任务就是清理“技术欠账”。 这个“技术欠账”是指在这个项目的初期,会有意无意偏向快速实现,想先做出来、用起来,进而在实现过程中做出…...

React - 实现菜单栏滚动

简介 本文将会基于react实现滚动菜单栏功能。 技术实现 实现效果 点击菜单,内容区域会自动滚动到对应卡片。内容区域滑动,指定菜单栏会被选中。 ScrollMenu.js import {useRef, useState} from "react"; import ./ScrollMenu.css;export co…...

线性筛选(欧拉筛选)-洛谷P3383

#include <bits/stdc.h> using namespace std; int main() {std::ios::sync_with_stdio(false); cin.tie(nullptr); //为了加速int n, q;cin >> n >> q; vector<int>num(n 1); //定义数字表vector<int>prime; //定义素数表数组num[1] …...

企业微信可以更换公司主体吗?

企业微信变更主体有什么作用?当我们的企业因为各种原因需要注销或已经注销,或者运营变更等情况,企业微信无法继续使用原主体继续使用时,可以申请企业主体变更,变更为新的主体。企业微信变更主体的条件有哪些࿱…...

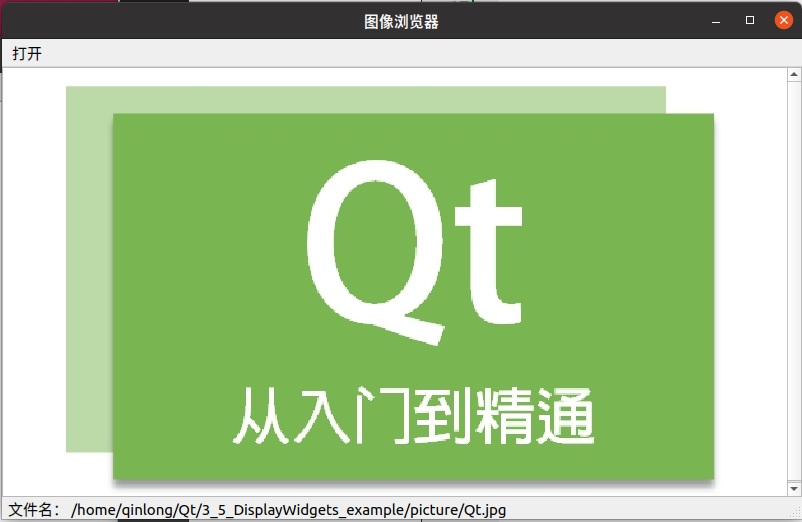

Qt教程 — 3.6 深入了解Qt 控件:Display Widgets部件(2)

目录 1 Display Widgets简介 2 如何使用Display Widgets部件 2.1 QTextBrowser组件-简单的文本浏览器 2.2 QGraphicsView组件-简单的图像浏览器 Display Widgets将分为两篇文章介绍 文章1(Qt教程 — 3.5 深入了解Qt 控件:Display Widgets部件-CSDN…...

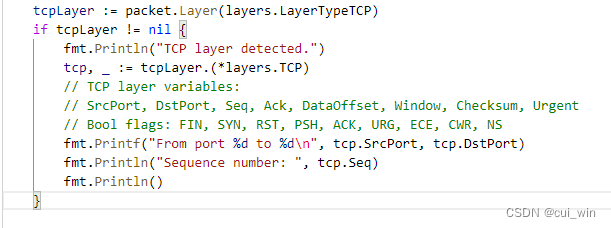

Golang案例开发之gopacket抓包三次握手四次分手(3)

文章目录 前言一、理论知识三次握手四次分手二、代码实践1.模拟客户端和服务器端2.三次握手代码3.四次分手代码验证代码完整代码总结前言 TCP通讯的三次握手和四次分手,有很多文章都在介绍了,当我们了解了gopacket这个工具的时候,我们当然是用代码实践一下,我们的理论。本…...

如何减少pdf的文件大小?pdf压缩工具介绍

文件发不出去,有时就会耽误工作进度,文件太大无法发送,这应该是大家在发送PDF时,常常会碰到的问题吧,那么PDF文档压缩大小怎么做呢?因此我们需要对pdf压缩后再发送,那么有没有好用的pdf压缩工具…...

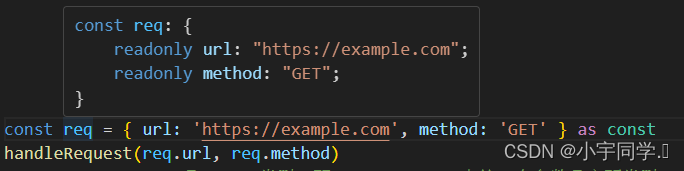

TypeScript基础类型

string、number、bolean 直接在变量后面添加即可。 let myName: string Tomfunction sayHello(person: string) {return hello, person } let user Tom let array [1, 2, 3] console.log(sayHello(user))function greet(person: string, date: Date): string {console.lo…...

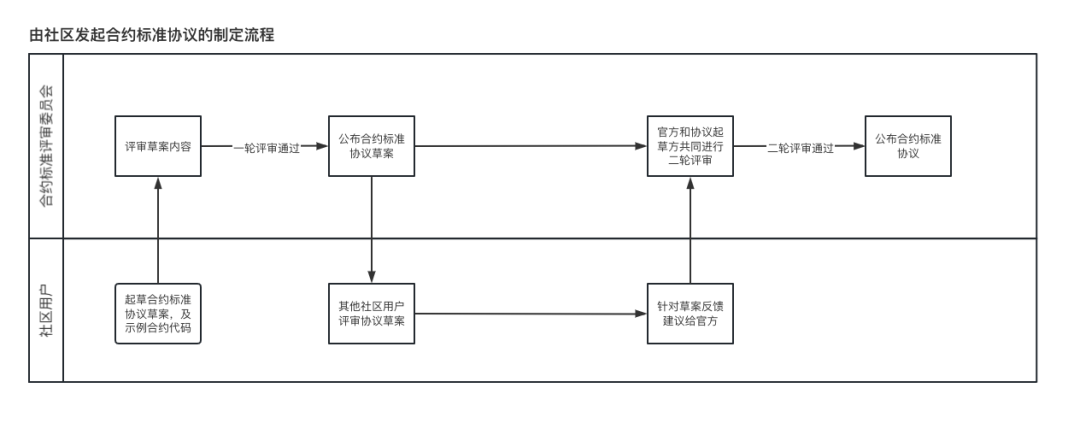

长安链智能合约标准协议第二草案——BNS与DID协议邀请社区用户评审

长安链智能合约标准协议 在智能合约编写过程中,不同的产品及开发人员对业务理解和编程习惯不同,即使同一业务所编写的合约在具体实现上也可能有很大差异,在运维或业务对接中面临较大的学习和理解成本,现有公链合约协议规范又不能完…...

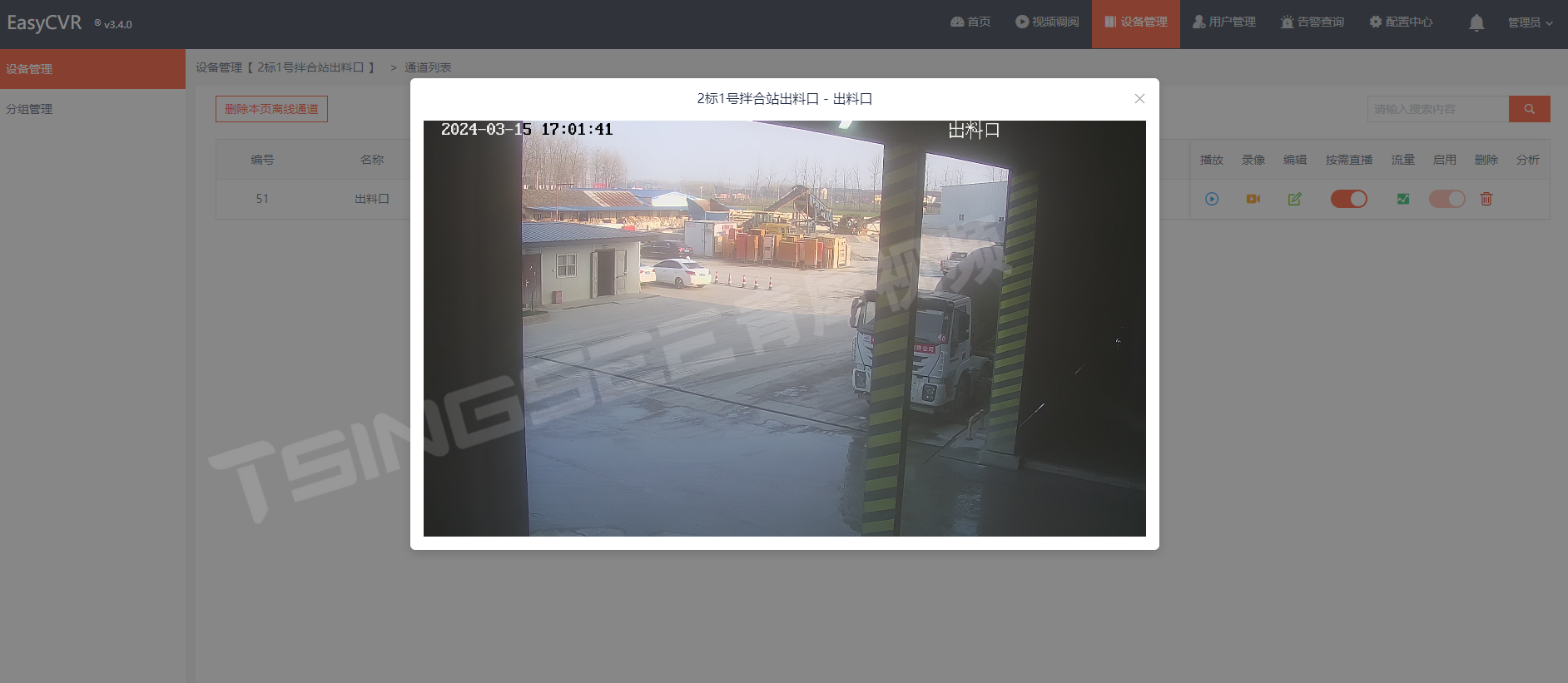

安防监控视频汇聚平台EasyCVR接入海康Ehome设备,设备在线但视频无法播放是什么原因?

安防视频监控/视频集中存储/云存储/磁盘阵列EasyCVR平台可拓展性强、视频能力灵活、部署轻快,可支持的主流标准协议有国标GB28181、RTSP/Onvif、RTMP等,以及支持厂家私有协议与SDK接入,包括海康Ehome、海大宇等设备的SDK等。平台既具备传统安…...

Onekey终极指南:如何5分钟快速获取Steam游戏清单的免费神器

Onekey终极指南:如何5分钟快速获取Steam游戏清单的免费神器 【免费下载链接】Onekey Onekey Steam Depot Manifest Downloader 项目地址: https://gitcode.com/gh_mirrors/one/Onekey 还在为复杂的Steam游戏清单下载而头疼吗?想要备份游戏资源却不…...

GitLab External Wiki代理权限绕过漏洞深度解析

1. 这个漏洞不是“修个补丁”就能完事的——它暴露的是 GitLab 权限模型里一个被长期忽视的逻辑断层GitLab 安全漏洞 CVE-2025-2614,光看编号容易误以为是又一个常规的越权或 XSS 类型漏洞。但我在实际复现和审计过程中发现,它根本不是配置疏漏或代码拼写…...

Owl-Alpha 新手快速上手指南

在处理大规模数据或构建高性能应用时,我们常常会遇到一个棘手的问题:如何在不阻塞主线程的情况下,高效地执行耗时任务?无论是处理图像、解析大型文件,还是进行复杂的数学运算,传统的单线程模式往往会让界面…...

独立站内容分层:一层给 SEO,一层给 GEO

你的内容在喂两个完全不同的"阅读者" 你的博客文章,从来都不只有一个读者。 传统认知里,独立站内容的读者只有两类:真人访客和搜索引擎爬虫。SEO 优化的一切工作,本质上都是在讨好后者,顺带服务前者。 但…...

基于Arduino与nRF24L01+的无线传感器平台设计与部署指南

1. 项目概述与设计思路如果你和我一样,喜欢在阳台或者小院子里种点蔬菜瓜果,那你肯定也遇到过这样的烦恼:出门几天,心里总惦记着家里的番茄苗是不是缺水了,小温室里的温度会不会太高。传统的温湿度计只能让你在现场读数…...

LaTeX公式一键转Word:3步告别数学公式编辑烦恼

LaTeX公式一键转Word:3步告别数学公式编辑烦恼 【免费下载链接】LaTeX2Word-Equation Copy LaTeX Equations as Word Equations, a Chrome Extension 项目地址: https://gitcode.com/gh_mirrors/la/LaTeX2Word-Equation 还在为Word文档中的数学公式编辑而抓狂…...

榨干Codex!OpenAI工程师亲授Codex真正用法

你可能把 Codex 当编程助手用,改改代码,跑跑测试。但它的能力远不止于此。OpenAI 的客户支持工程师 Jason(jxnlco)告诉你,Codex 其实是一套完整的电脑工作系统,从语音输入到自动化,从浏览器操控…...

OmenSuperHub:基于WMI BIOS控制的高性能笔记本硬件管理方案

OmenSuperHub:基于WMI BIOS控制的高性能笔记本硬件管理方案 【免费下载链接】OmenSuperHub Control Omen laptop performance, fan speeds, and keyboard lighting, and unlock power limits. 项目地址: https://gitcode.com/gh_mirrors/om/OmenSuperHub 在惠…...

ATTiny85通用开发板PCB-4设计:集成电源、音频与诊断的一站式DIY平台

1. PCB-4:一个为四款经典ATTiny85项目而生的通用开发板如果你玩过一阵子电子DIY,特别是对小巧、低功耗的微控制器项目感兴趣,那你很可能听说过或者自己动手做过基于ATTiny85芯片的小玩意儿。这颗只有8个引脚的“小巨人”,以其极低…...

清华大学学位论文LaTeX模板:告别格式烦恼的终极指南

清华大学学位论文LaTeX模板:告别格式烦恼的终极指南 【免费下载链接】thuthesis LaTeX Thesis Template for Tsinghua University 项目地址: https://gitcode.com/gh_mirrors/th/thuthesis 还在为论文格式调整而烦恼吗?清华大学thuthesis LaTeX模…...