llama.cpp LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK2

llama.cpp LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK2

- 1. `LLM_ARCH_DEEPSEEK` and `LLM_ARCH_DEEPSEEK2`

- 2. `LLM_ARCH_DEEPSEEK` and `LLM_ARCH_DEEPSEEK2`

- 3. `struct ggml_cgraph * build_deepseek()` and `struct ggml_cgraph * build_deepseek2()`

- References

不宜吹捧中国大语言模型的同时,又去贬低美国大语言模型。

水是人体的主要化学成分,约占体重的 50% 至 70%。大语言模型的含水量也不会太少。

llama.cpp

https://github.com/ggerganov/llama.cpp

1. LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK2

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-arch.h

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-arch.cpp

LLM_ARCH_DEEPSEEKandLLM_ARCH_DEEPSEEK2

//

// gguf constants (sync with gguf.py)

//enum llm_arch {LLM_ARCH_LLAMA,LLM_ARCH_DECI,LLM_ARCH_FALCON,LLM_ARCH_BAICHUAN,LLM_ARCH_GROK,LLM_ARCH_GPT2,LLM_ARCH_GPTJ,LLM_ARCH_GPTNEOX,LLM_ARCH_MPT,LLM_ARCH_STARCODER,LLM_ARCH_REFACT,LLM_ARCH_BERT,LLM_ARCH_NOMIC_BERT,LLM_ARCH_JINA_BERT_V2,LLM_ARCH_BLOOM,LLM_ARCH_STABLELM,LLM_ARCH_QWEN,LLM_ARCH_QWEN2,LLM_ARCH_QWEN2MOE,LLM_ARCH_QWEN2VL,LLM_ARCH_PHI2,LLM_ARCH_PHI3,LLM_ARCH_PHIMOE,LLM_ARCH_PLAMO,LLM_ARCH_CODESHELL,LLM_ARCH_ORION,LLM_ARCH_INTERNLM2,LLM_ARCH_MINICPM,LLM_ARCH_MINICPM3,LLM_ARCH_GEMMA,LLM_ARCH_GEMMA2,LLM_ARCH_STARCODER2,LLM_ARCH_MAMBA,LLM_ARCH_XVERSE,LLM_ARCH_COMMAND_R,LLM_ARCH_COHERE2,LLM_ARCH_DBRX,LLM_ARCH_OLMO,LLM_ARCH_OLMO2,LLM_ARCH_OLMOE,LLM_ARCH_OPENELM,LLM_ARCH_ARCTIC,LLM_ARCH_DEEPSEEK,LLM_ARCH_DEEPSEEK2,LLM_ARCH_CHATGLM,LLM_ARCH_BITNET,LLM_ARCH_T5,LLM_ARCH_T5ENCODER,LLM_ARCH_JAIS,LLM_ARCH_NEMOTRON,LLM_ARCH_EXAONE,LLM_ARCH_RWKV6,LLM_ARCH_RWKV6QWEN2,LLM_ARCH_GRANITE,LLM_ARCH_GRANITE_MOE,LLM_ARCH_CHAMELEON,LLM_ARCH_WAVTOKENIZER_DEC,LLM_ARCH_UNKNOWN,

};

{ LLM_ARCH_DEEPSEEK, "deepseek" }and{ LLM_ARCH_DEEPSEEK2, "deepseek2" }

static const std::map<llm_arch, const char *> LLM_ARCH_NAMES = {{ LLM_ARCH_LLAMA, "llama" },{ LLM_ARCH_DECI, "deci" },{ LLM_ARCH_FALCON, "falcon" },{ LLM_ARCH_GROK, "grok" },{ LLM_ARCH_GPT2, "gpt2" },{ LLM_ARCH_GPTJ, "gptj" },{ LLM_ARCH_GPTNEOX, "gptneox" },{ LLM_ARCH_MPT, "mpt" },{ LLM_ARCH_BAICHUAN, "baichuan" },{ LLM_ARCH_STARCODER, "starcoder" },{ LLM_ARCH_REFACT, "refact" },{ LLM_ARCH_BERT, "bert" },{ LLM_ARCH_NOMIC_BERT, "nomic-bert" },{ LLM_ARCH_JINA_BERT_V2, "jina-bert-v2" },{ LLM_ARCH_BLOOM, "bloom" },{ LLM_ARCH_STABLELM, "stablelm" },{ LLM_ARCH_QWEN, "qwen" },{ LLM_ARCH_QWEN2, "qwen2" },{ LLM_ARCH_QWEN2MOE, "qwen2moe" },{ LLM_ARCH_QWEN2VL, "qwen2vl" },{ LLM_ARCH_PHI2, "phi2" },{ LLM_ARCH_PHI3, "phi3" },{ LLM_ARCH_PHIMOE, "phimoe" },{ LLM_ARCH_PLAMO, "plamo" },{ LLM_ARCH_CODESHELL, "codeshell" },{ LLM_ARCH_ORION, "orion" },{ LLM_ARCH_INTERNLM2, "internlm2" },{ LLM_ARCH_MINICPM, "minicpm" },{ LLM_ARCH_MINICPM3, "minicpm3" },{ LLM_ARCH_GEMMA, "gemma" },{ LLM_ARCH_GEMMA2, "gemma2" },{ LLM_ARCH_STARCODER2, "starcoder2" },{ LLM_ARCH_MAMBA, "mamba" },{ LLM_ARCH_XVERSE, "xverse" },{ LLM_ARCH_COMMAND_R, "command-r" },{ LLM_ARCH_COHERE2, "cohere2" },{ LLM_ARCH_DBRX, "dbrx" },{ LLM_ARCH_OLMO, "olmo" },{ LLM_ARCH_OLMO2, "olmo2" },{ LLM_ARCH_OLMOE, "olmoe" },{ LLM_ARCH_OPENELM, "openelm" },{ LLM_ARCH_ARCTIC, "arctic" },{ LLM_ARCH_DEEPSEEK, "deepseek" },{ LLM_ARCH_DEEPSEEK2, "deepseek2" },{ LLM_ARCH_CHATGLM, "chatglm" },{ LLM_ARCH_BITNET, "bitnet" },{ LLM_ARCH_T5, "t5" },{ LLM_ARCH_T5ENCODER, "t5encoder" },{ LLM_ARCH_JAIS, "jais" },{ LLM_ARCH_NEMOTRON, "nemotron" },{ LLM_ARCH_EXAONE, "exaone" },{ LLM_ARCH_RWKV6, "rwkv6" },{ LLM_ARCH_RWKV6QWEN2, "rwkv6qwen2" },{ LLM_ARCH_GRANITE, "granite" },{ LLM_ARCH_GRANITE_MOE, "granitemoe" },{ LLM_ARCH_CHAMELEON, "chameleon" },{ LLM_ARCH_WAVTOKENIZER_DEC, "wavtokenizer-dec" },{ LLM_ARCH_UNKNOWN, "(unknown)" },

};

2. LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK2

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama-arch.cpp

LLM_ARCH_DEEPSEEKandLLM_ARCH_DEEPSEEK2

static const std::map<llm_arch, std::map<llm_tensor, const char *>> LLM_TENSOR_NAMES = {{LLM_ARCH_LLAMA,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_GATE_EXP, "blk.%d.ffn_gate.%d" },{ LLM_TENSOR_FFN_DOWN_EXP, "blk.%d.ffn_down.%d" },{ LLM_TENSOR_FFN_UP_EXP, "blk.%d.ffn_up.%d" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_DECI,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_GATE_EXP, "blk.%d.ffn_gate.%d" },{ LLM_TENSOR_FFN_DOWN_EXP, "blk.%d.ffn_down.%d" },{ LLM_TENSOR_FFN_UP_EXP, "blk.%d.ffn_up.%d" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_BAICHUAN,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_FALCON,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_NORM_2, "blk.%d.attn_norm_2" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_GROK,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE_EXP, "blk.%d.ffn_gate.%d" },{ LLM_TENSOR_FFN_DOWN_EXP, "blk.%d.ffn_down.%d" },{ LLM_TENSOR_FFN_UP_EXP, "blk.%d.ffn_up.%d" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },{ LLM_TENSOR_LAYER_OUT_NORM, "blk.%d.layer_output_norm" },{ LLM_TENSOR_ATTN_OUT_NORM, "blk.%d.attn_output_norm" },},},{LLM_ARCH_GPT2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_POS_EMBD, "position_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },},},{LLM_ARCH_GPTJ,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },},},{LLM_ARCH_GPTNEOX,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_MPT,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output"},{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_ACT, "blk.%d.ffn.act" },{ LLM_TENSOR_POS_EMBD, "position_embd" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm"},{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm"},},},{LLM_ARCH_STARCODER,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_POS_EMBD, "position_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },},},{LLM_ARCH_REFACT,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_BERT,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_TOKEN_EMBD_NORM, "token_embd_norm" },{ LLM_TENSOR_TOKEN_TYPES, "token_types" },{ LLM_TENSOR_POS_EMBD, "position_embd" },{ LLM_TENSOR_ATTN_OUT_NORM, "blk.%d.attn_output_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_LAYER_OUT_NORM, "blk.%d.layer_output_norm" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_CLS, "cls" },{ LLM_TENSOR_CLS_OUT, "cls.output" },},},{LLM_ARCH_NOMIC_BERT,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_TOKEN_EMBD_NORM, "token_embd_norm" },{ LLM_TENSOR_TOKEN_TYPES, "token_types" },{ LLM_TENSOR_ATTN_OUT_NORM, "blk.%d.attn_output_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_LAYER_OUT_NORM, "blk.%d.layer_output_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_JINA_BERT_V2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_TOKEN_EMBD_NORM, "token_embd_norm" },{ LLM_TENSOR_TOKEN_TYPES, "token_types" },{ LLM_TENSOR_ATTN_NORM_2, "blk.%d.attn_norm_2" },{ LLM_TENSOR_ATTN_OUT_NORM, "blk.%d.attn_output_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_LAYER_OUT_NORM, "blk.%d.layer_output_norm" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_CLS, "cls" },},},{LLM_ARCH_BLOOM,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_TOKEN_EMBD_NORM, "token_embd_norm" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },},},{LLM_ARCH_STABLELM,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },},},{LLM_ARCH_QWEN,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_QWEN2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_QWEN2VL,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_QWEN2MOE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },{ LLM_TENSOR_FFN_GATE_INP_SHEXP, "blk.%d.ffn_gate_inp_shexp" },{ LLM_TENSOR_FFN_GATE_SHEXP, "blk.%d.ffn_gate_shexp" },{ LLM_TENSOR_FFN_DOWN_SHEXP, "blk.%d.ffn_down_shexp" },{ LLM_TENSOR_FFN_UP_SHEXP, "blk.%d.ffn_up_shexp" },},},{LLM_ARCH_PHI2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_PHI3,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FACTORS_LONG, "rope_factors_long" },{ LLM_TENSOR_ROPE_FACTORS_SHORT, "rope_factors_short" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_PHIMOE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FACTORS_LONG, "rope_factors_long" },{ LLM_TENSOR_ROPE_FACTORS_SHORT, "rope_factors_short" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_PLAMO,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_CODESHELL,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_ORION,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_INTERNLM2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_MINICPM,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ROPE_FACTORS_LONG, "rope_factors_long" },{ LLM_TENSOR_ROPE_FACTORS_SHORT, "rope_factors_short" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_GATE_EXP, "blk.%d.ffn_gate.%d" },{ LLM_TENSOR_FFN_DOWN_EXP, "blk.%d.ffn_down.%d" },{ LLM_TENSOR_FFN_UP_EXP, "blk.%d.ffn_up.%d" },},},{LLM_ARCH_MINICPM3,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FACTORS_LONG, "rope_factors_long" },{ LLM_TENSOR_ROPE_FACTORS_SHORT, "rope_factors_short" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q_A_NORM, "blk.%d.attn_q_a_norm" },{ LLM_TENSOR_ATTN_KV_A_NORM, "blk.%d.attn_kv_a_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_Q_A, "blk.%d.attn_q_a" },{ LLM_TENSOR_ATTN_Q_B, "blk.%d.attn_q_b" },{ LLM_TENSOR_ATTN_KV_A_MQA, "blk.%d.attn_kv_a_mqa" },{ LLM_TENSOR_ATTN_KV_B, "blk.%d.attn_kv_b" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },},},{LLM_ARCH_GEMMA,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_GEMMA2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_POST_NORM, "blk.%d.post_attention_norm" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_POST_NORM, "blk.%d.post_ffw_norm" },},},{LLM_ARCH_STARCODER2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_MAMBA,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_SSM_IN, "blk.%d.ssm_in" },{ LLM_TENSOR_SSM_CONV1D, "blk.%d.ssm_conv1d" },{ LLM_TENSOR_SSM_X, "blk.%d.ssm_x" },{ LLM_TENSOR_SSM_DT, "blk.%d.ssm_dt" },{ LLM_TENSOR_SSM_A, "blk.%d.ssm_a" },{ LLM_TENSOR_SSM_D, "blk.%d.ssm_d" },{ LLM_TENSOR_SSM_OUT, "blk.%d.ssm_out" },},},{LLM_ARCH_XVERSE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_COMMAND_R,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },},},{LLM_ARCH_COHERE2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_DBRX,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_OUT_NORM, "blk.%d.attn_output_norm" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_OLMO,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_OLMO2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_POST_NORM, "blk.%d.post_attention_norm" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },{ LLM_TENSOR_FFN_POST_NORM, "blk.%d.post_ffw_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_OLMOE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_OPENELM,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_ARCTIC,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_NORM_EXPS, "blk.%d.ffn_norm_exps" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_DEEPSEEK,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },{ LLM_TENSOR_FFN_GATE_INP_SHEXP, "blk.%d.ffn_gate_inp_shexp" },{ LLM_TENSOR_FFN_GATE_SHEXP, "blk.%d.ffn_gate_shexp" },{ LLM_TENSOR_FFN_DOWN_SHEXP, "blk.%d.ffn_down_shexp" },{ LLM_TENSOR_FFN_UP_SHEXP, "blk.%d.ffn_up_shexp" },},},{LLM_ARCH_DEEPSEEK2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q_A_NORM, "blk.%d.attn_q_a_norm" },{ LLM_TENSOR_ATTN_KV_A_NORM, "blk.%d.attn_kv_a_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_Q_A, "blk.%d.attn_q_a" },{ LLM_TENSOR_ATTN_Q_B, "blk.%d.attn_q_b" },{ LLM_TENSOR_ATTN_KV_A_MQA, "blk.%d.attn_kv_a_mqa" },{ LLM_TENSOR_ATTN_KV_B, "blk.%d.attn_kv_b" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },{ LLM_TENSOR_FFN_GATE_INP_SHEXP, "blk.%d.ffn_gate_inp_shexp" },{ LLM_TENSOR_FFN_GATE_SHEXP, "blk.%d.ffn_gate_shexp" },{ LLM_TENSOR_FFN_DOWN_SHEXP, "blk.%d.ffn_down_shexp" },{ LLM_TENSOR_FFN_UP_SHEXP, "blk.%d.ffn_up_shexp" },{ LLM_TENSOR_FFN_EXP_PROBS_B, "blk.%d.exp_probs_b" },},},{LLM_ARCH_CHATGLM,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },},},{LLM_ARCH_BITNET,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_SUB_NORM, "blk.%d.attn_sub_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_SUB_NORM, "blk.%d.ffn_sub_norm" },},},{LLM_ARCH_T5,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_DEC_OUTPUT_NORM, "dec.output_norm" },{ LLM_TENSOR_DEC_ATTN_NORM, "dec.blk.%d.attn_norm" },{ LLM_TENSOR_DEC_ATTN_Q, "dec.blk.%d.attn_q" },{ LLM_TENSOR_DEC_ATTN_K, "dec.blk.%d.attn_k" },{ LLM_TENSOR_DEC_ATTN_V, "dec.blk.%d.attn_v" },{ LLM_TENSOR_DEC_ATTN_OUT, "dec.blk.%d.attn_o" },{ LLM_TENSOR_DEC_ATTN_REL_B, "dec.blk.%d.attn_rel_b" },{ LLM_TENSOR_DEC_CROSS_ATTN_NORM, "dec.blk.%d.cross_attn_norm" },{ LLM_TENSOR_DEC_CROSS_ATTN_Q, "dec.blk.%d.cross_attn_q" },{ LLM_TENSOR_DEC_CROSS_ATTN_K, "dec.blk.%d.cross_attn_k" },{ LLM_TENSOR_DEC_CROSS_ATTN_V, "dec.blk.%d.cross_attn_v" },{ LLM_TENSOR_DEC_CROSS_ATTN_OUT, "dec.blk.%d.cross_attn_o" },{ LLM_TENSOR_DEC_CROSS_ATTN_REL_B, "dec.blk.%d.cross_attn_rel_b" },{ LLM_TENSOR_DEC_FFN_NORM, "dec.blk.%d.ffn_norm" },{ LLM_TENSOR_DEC_FFN_GATE, "dec.blk.%d.ffn_gate" },{ LLM_TENSOR_DEC_FFN_DOWN, "dec.blk.%d.ffn_down" },{ LLM_TENSOR_DEC_FFN_UP, "dec.blk.%d.ffn_up" },{ LLM_TENSOR_ENC_OUTPUT_NORM, "enc.output_norm" },{ LLM_TENSOR_ENC_ATTN_NORM, "enc.blk.%d.attn_norm" },{ LLM_TENSOR_ENC_ATTN_Q, "enc.blk.%d.attn_q" },{ LLM_TENSOR_ENC_ATTN_K, "enc.blk.%d.attn_k" },{ LLM_TENSOR_ENC_ATTN_V, "enc.blk.%d.attn_v" },{ LLM_TENSOR_ENC_ATTN_OUT, "enc.blk.%d.attn_o" },{ LLM_TENSOR_ENC_ATTN_REL_B, "enc.blk.%d.attn_rel_b" },{ LLM_TENSOR_ENC_FFN_NORM, "enc.blk.%d.ffn_norm" },{ LLM_TENSOR_ENC_FFN_GATE, "enc.blk.%d.ffn_gate" },{ LLM_TENSOR_ENC_FFN_DOWN, "enc.blk.%d.ffn_down" },{ LLM_TENSOR_ENC_FFN_UP, "enc.blk.%d.ffn_up" },},},{LLM_ARCH_T5ENCODER,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ENC_OUTPUT_NORM, "enc.output_norm" },{ LLM_TENSOR_ENC_ATTN_NORM, "enc.blk.%d.attn_norm" },{ LLM_TENSOR_ENC_ATTN_Q, "enc.blk.%d.attn_q" },{ LLM_TENSOR_ENC_ATTN_K, "enc.blk.%d.attn_k" },{ LLM_TENSOR_ENC_ATTN_V, "enc.blk.%d.attn_v" },{ LLM_TENSOR_ENC_ATTN_OUT, "enc.blk.%d.attn_o" },{ LLM_TENSOR_ENC_ATTN_REL_B, "enc.blk.%d.attn_rel_b" },{ LLM_TENSOR_ENC_FFN_NORM, "enc.blk.%d.ffn_norm" },{ LLM_TENSOR_ENC_FFN_GATE, "enc.blk.%d.ffn_gate" },{ LLM_TENSOR_ENC_FFN_DOWN, "enc.blk.%d.ffn_down" },{ LLM_TENSOR_ENC_FFN_UP, "enc.blk.%d.ffn_up" },},},{LLM_ARCH_JAIS,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_QKV, "blk.%d.attn_qkv" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },},},{LLM_ARCH_NEMOTRON,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_EXAONE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ROPE_FREQS, "rope_freqs" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_ATTN_ROT_EMBD, "blk.%d.attn_rot_embd" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_RWKV6,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_TOKEN_EMBD_NORM, "token_embd_norm" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_NORM_2, "blk.%d.attn_norm_2" },{ LLM_TENSOR_TIME_MIX_W1, "blk.%d.time_mix_w1" },{ LLM_TENSOR_TIME_MIX_W2, "blk.%d.time_mix_w2" },{ LLM_TENSOR_TIME_MIX_LERP_X, "blk.%d.time_mix_lerp_x" },{ LLM_TENSOR_TIME_MIX_LERP_W, "blk.%d.time_mix_lerp_w" },{ LLM_TENSOR_TIME_MIX_LERP_K, "blk.%d.time_mix_lerp_k" },{ LLM_TENSOR_TIME_MIX_LERP_V, "blk.%d.time_mix_lerp_v" },{ LLM_TENSOR_TIME_MIX_LERP_R, "blk.%d.time_mix_lerp_r" },{ LLM_TENSOR_TIME_MIX_LERP_G, "blk.%d.time_mix_lerp_g" },{ LLM_TENSOR_TIME_MIX_LERP_FUSED, "blk.%d.time_mix_lerp_fused" },{ LLM_TENSOR_TIME_MIX_FIRST, "blk.%d.time_mix_first" },{ LLM_TENSOR_TIME_MIX_DECAY, "blk.%d.time_mix_decay" },{ LLM_TENSOR_TIME_MIX_DECAY_W1, "blk.%d.time_mix_decay_w1" },{ LLM_TENSOR_TIME_MIX_DECAY_W2, "blk.%d.time_mix_decay_w2" },{ LLM_TENSOR_TIME_MIX_KEY, "blk.%d.time_mix_key" },{ LLM_TENSOR_TIME_MIX_VALUE, "blk.%d.time_mix_value" },{ LLM_TENSOR_TIME_MIX_RECEPTANCE, "blk.%d.time_mix_receptance" },{ LLM_TENSOR_TIME_MIX_GATE, "blk.%d.time_mix_gate" },{ LLM_TENSOR_TIME_MIX_LN, "blk.%d.time_mix_ln" },{ LLM_TENSOR_TIME_MIX_OUTPUT, "blk.%d.time_mix_output" },{ LLM_TENSOR_CHANNEL_MIX_LERP_K, "blk.%d.channel_mix_lerp_k" },{ LLM_TENSOR_CHANNEL_MIX_LERP_R, "blk.%d.channel_mix_lerp_r" },{ LLM_TENSOR_CHANNEL_MIX_KEY, "blk.%d.channel_mix_key" },{ LLM_TENSOR_CHANNEL_MIX_VALUE, "blk.%d.channel_mix_value" },{ LLM_TENSOR_CHANNEL_MIX_RECEPTANCE, "blk.%d.channel_mix_receptance" },},},{LLM_ARCH_RWKV6QWEN2,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_TIME_MIX_W1, "blk.%d.time_mix_w1" },{ LLM_TENSOR_TIME_MIX_W2, "blk.%d.time_mix_w2" },{ LLM_TENSOR_TIME_MIX_LERP_X, "blk.%d.time_mix_lerp_x" },{ LLM_TENSOR_TIME_MIX_LERP_FUSED, "blk.%d.time_mix_lerp_fused" },{ LLM_TENSOR_TIME_MIX_FIRST, "blk.%d.time_mix_first" },{ LLM_TENSOR_TIME_MIX_DECAY, "blk.%d.time_mix_decay" },{ LLM_TENSOR_TIME_MIX_DECAY_W1, "blk.%d.time_mix_decay_w1" },{ LLM_TENSOR_TIME_MIX_DECAY_W2, "blk.%d.time_mix_decay_w2" },{ LLM_TENSOR_TIME_MIX_KEY, "blk.%d.time_mix_key" },{ LLM_TENSOR_TIME_MIX_VALUE, "blk.%d.time_mix_value" },{ LLM_TENSOR_TIME_MIX_RECEPTANCE, "blk.%d.time_mix_receptance" },{ LLM_TENSOR_TIME_MIX_GATE, "blk.%d.time_mix_gate" },{ LLM_TENSOR_TIME_MIX_OUTPUT, "blk.%d.time_mix_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_GRANITE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },},},{LLM_ARCH_GRANITE_MOE,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE_INP, "blk.%d.ffn_gate_inp" },{ LLM_TENSOR_FFN_GATE_EXPS, "blk.%d.ffn_gate_exps" },{ LLM_TENSOR_FFN_DOWN_EXPS, "blk.%d.ffn_down_exps" },{ LLM_TENSOR_FFN_UP_EXPS, "blk.%d.ffn_up_exps" },},},{LLM_ARCH_CHAMELEON,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_ATTN_NORM, "blk.%d.attn_norm" },{ LLM_TENSOR_ATTN_Q, "blk.%d.attn_q" },{ LLM_TENSOR_ATTN_K, "blk.%d.attn_k" },{ LLM_TENSOR_ATTN_V, "blk.%d.attn_v" },{ LLM_TENSOR_ATTN_OUT, "blk.%d.attn_output" },{ LLM_TENSOR_FFN_NORM, "blk.%d.ffn_norm" },{ LLM_TENSOR_FFN_GATE, "blk.%d.ffn_gate" },{ LLM_TENSOR_FFN_DOWN, "blk.%d.ffn_down" },{ LLM_TENSOR_FFN_UP, "blk.%d.ffn_up" },{ LLM_TENSOR_ATTN_Q_NORM, "blk.%d.attn_q_norm" },{ LLM_TENSOR_ATTN_K_NORM, "blk.%d.attn_k_norm" },},},{LLM_ARCH_WAVTOKENIZER_DEC,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },{ LLM_TENSOR_TOKEN_EMBD_NORM, "token_embd_norm" },{ LLM_TENSOR_CONV1D, "conv1d" },{ LLM_TENSOR_CONVNEXT_DW, "convnext.%d.dw" },{ LLM_TENSOR_CONVNEXT_NORM, "convnext.%d.norm" },{ LLM_TENSOR_CONVNEXT_PW1, "convnext.%d.pw1" },{ LLM_TENSOR_CONVNEXT_PW2, "convnext.%d.pw2" },{ LLM_TENSOR_CONVNEXT_GAMMA, "convnext.%d.gamma" },{ LLM_TENSOR_OUTPUT_NORM, "output_norm" },{ LLM_TENSOR_OUTPUT, "output" },{ LLM_TENSOR_POS_NET_CONV1, "posnet.%d.conv1" },{ LLM_TENSOR_POS_NET_CONV2, "posnet.%d.conv2" },{ LLM_TENSOR_POS_NET_NORM, "posnet.%d.norm" },{ LLM_TENSOR_POS_NET_NORM1, "posnet.%d.norm1" },{ LLM_TENSOR_POS_NET_NORM2, "posnet.%d.norm2" },{ LLM_TENSOR_POS_NET_ATTN_NORM, "posnet.%d.attn_norm" },{ LLM_TENSOR_POS_NET_ATTN_Q, "posnet.%d.attn_q" },{ LLM_TENSOR_POS_NET_ATTN_K, "posnet.%d.attn_k" },{ LLM_TENSOR_POS_NET_ATTN_V, "posnet.%d.attn_v" },{ LLM_TENSOR_POS_NET_ATTN_OUT, "posnet.%d.attn_output" },},},{LLM_ARCH_UNKNOWN,{{ LLM_TENSOR_TOKEN_EMBD, "token_embd" },},},

};

3. struct ggml_cgraph * build_deepseek() and struct ggml_cgraph * build_deepseek2()

/home/yongqiang/llm_work/llama_cpp_25_01_05/llama.cpp/src/llama.cpp

struct ggml_cgraph * build_deepseek()

struct ggml_cgraph * build_deepseek() {struct ggml_cgraph * gf = ggml_new_graph_custom(ctx0, model.max_nodes(), false);// mutable variable, needed during the last layer of the computation to skip unused tokensint32_t n_tokens = this->n_tokens;const int64_t n_embd_head = hparams.n_embd_head_v;GGML_ASSERT(n_embd_head == hparams.n_embd_head_k);GGML_ASSERT(n_embd_head == hparams.n_rot);struct ggml_tensor * cur;struct ggml_tensor * inpL;inpL = llm_build_inp_embd(ctx0, lctx, hparams, ubatch, model.tok_embd, cb);// inp_pos - contains the positionsstruct ggml_tensor * inp_pos = build_inp_pos();// KQ_mask (mask for 1 head, it will be broadcasted to all heads)struct ggml_tensor * KQ_mask = build_inp_KQ_mask();const float kq_scale = hparams.f_attention_scale == 0.0f ? 1.0f/sqrtf(float(n_embd_head)) : hparams.f_attention_scale;for (int il = 0; il < n_layer; ++il) {struct ggml_tensor * inpSA = inpL;// normcur = llm_build_norm(ctx0, inpL, hparams,model.layers[il].attn_norm, NULL,LLM_NORM_RMS, cb, il);cb(cur, "attn_norm", il);// self-attention{// rope freq factors for llama3; may return nullptr for llama2 and other modelsstruct ggml_tensor * rope_factors = build_rope_factors(il);// compute Q and K and RoPE themstruct ggml_tensor * Qcur = llm_build_lora_mm(lctx, ctx0, model.layers[il].wq, cur);cb(Qcur, "Qcur", il);if (model.layers[il].bq) {Qcur = ggml_add(ctx0, Qcur, model.layers[il].bq);cb(Qcur, "Qcur", il);}struct ggml_tensor * Kcur = llm_build_lora_mm(lctx, ctx0, model.layers[il].wk, cur);cb(Kcur, "Kcur", il);if (model.layers[il].bk) {Kcur = ggml_add(ctx0, Kcur, model.layers[il].bk);cb(Kcur, "Kcur", il);}struct ggml_tensor * Vcur = llm_build_lora_mm(lctx, ctx0, model.layers[il].wv, cur);cb(Vcur, "Vcur", il);if (model.layers[il].bv) {Vcur = ggml_add(ctx0, Vcur, model.layers[il].bv);cb(Vcur, "Vcur", il);}Qcur = ggml_rope_ext(ctx0, ggml_reshape_3d(ctx0, Qcur, n_embd_head, n_head, n_tokens), inp_pos, rope_factors,n_rot, rope_type, n_ctx_orig, freq_base, freq_scale,ext_factor, attn_factor, beta_fast, beta_slow);cb(Qcur, "Qcur", il);Kcur = ggml_rope_ext(ctx0, ggml_reshape_3d(ctx0, Kcur, n_embd_head, n_head_kv, n_tokens), inp_pos, rope_factors,n_rot, rope_type, n_ctx_orig, freq_base, freq_scale,ext_factor, attn_factor, beta_fast, beta_slow);cb(Kcur, "Kcur", il);cur = llm_build_kv(ctx0, lctx, kv_self, gf,model.layers[il].wo, model.layers[il].bo,Kcur, Vcur, Qcur, KQ_mask, n_tokens, kv_head, n_kv, kq_scale, cb, il);}if (il == n_layer - 1) {// skip computing output for unused tokensstruct ggml_tensor * inp_out_ids = build_inp_out_ids();n_tokens = n_outputs;cur = ggml_get_rows(ctx0, cur, inp_out_ids);inpSA = ggml_get_rows(ctx0, inpSA, inp_out_ids);}struct ggml_tensor * ffn_inp = ggml_add(ctx0, cur, inpSA);cb(ffn_inp, "ffn_inp", il);cur = llm_build_norm(ctx0, ffn_inp, hparams,model.layers[il].ffn_norm, NULL,LLM_NORM_RMS, cb, il);cb(cur, "ffn_norm", il);if ((uint32_t) il < hparams.n_layer_dense_lead) {cur = llm_build_ffn(ctx0, lctx, cur,model.layers[il].ffn_up, NULL, NULL,model.layers[il].ffn_gate, NULL, NULL,model.layers[il].ffn_down, NULL, NULL,NULL,LLM_FFN_SILU, LLM_FFN_PAR, cb, il);cb(cur, "ffn_out", il);} else {// MoE branchggml_tensor * moe_out =llm_build_moe_ffn(ctx0, lctx, cur,model.layers[il].ffn_gate_inp,model.layers[il].ffn_up_exps,model.layers[il].ffn_gate_exps,model.layers[il].ffn_down_exps,nullptr,n_expert, n_expert_used,LLM_FFN_SILU, false,false, hparams.expert_weights_scale,LLAMA_EXPERT_GATING_FUNC_TYPE_SOFTMAX,cb, il);cb(moe_out, "ffn_moe_out", il);// FFN shared expert{ggml_tensor * ffn_shexp = llm_build_ffn(ctx0, lctx, cur,model.layers[il].ffn_up_shexp, NULL, NULL,model.layers[il].ffn_gate_shexp, NULL, NULL,model.layers[il].ffn_down_shexp, NULL, NULL,NULL,LLM_FFN_SILU, LLM_FFN_PAR, cb, il);cb(ffn_shexp, "ffn_shexp", il);cur = ggml_add(ctx0, moe_out, ffn_shexp);cb(cur, "ffn_out", il);}}cur = ggml_add(ctx0, cur, ffn_inp);cur = lctx.cvec.apply_to(ctx0, cur, il);cb(cur, "l_out", il);// input for next layerinpL = cur;}cur = inpL;cur = llm_build_norm(ctx0, cur, hparams,model.output_norm, NULL,LLM_NORM_RMS, cb, -1);cb(cur, "result_norm", -1);// lm_headcur = llm_build_lora_mm(lctx, ctx0, model.output, cur);cb(cur, "result_output", -1);ggml_build_forward_expand(gf, cur);return gf;}

struct ggml_cgraph * build_deepseek2()

struct ggml_cgraph * build_deepseek2() {struct ggml_cgraph * gf = ggml_new_graph_custom(ctx0, model.max_nodes(), false);// mutable variable, needed during the last layer of the computation to skip unused tokensint32_t n_tokens = this->n_tokens;bool is_lite = (hparams.n_layer == 27);// We have to pre-scale kq_scale and attn_factor to make the YaRN RoPE work correctly.// See https://github.com/ggerganov/llama.cpp/discussions/7416 for detailed explanation.const float mscale = attn_factor * (1.0f + hparams.rope_yarn_log_mul * logf(1.0f / freq_scale));const float kq_scale = 1.0f*mscale*mscale/sqrtf(float(hparams.n_embd_head_k));const float attn_factor_scaled = 1.0f / (1.0f + 0.1f * logf(1.0f / freq_scale));const uint32_t n_embd_head_qk_rope = hparams.n_rot;const uint32_t n_embd_head_qk_nope = hparams.n_embd_head_k - hparams.n_rot;const uint32_t kv_lora_rank = hparams.n_lora_kv;struct ggml_tensor * cur;struct ggml_tensor * inpL;// {n_embd, n_tokens}inpL = llm_build_inp_embd(ctx0, lctx, hparams, ubatch, model.tok_embd, cb);// inp_pos - contains the positionsstruct ggml_tensor * inp_pos = build_inp_pos();// KQ_mask (mask for 1 head, it will be broadcasted to all heads)struct ggml_tensor * KQ_mask = build_inp_KQ_mask();for (int il = 0; il < n_layer; ++il) {struct ggml_tensor * inpSA = inpL;// normcur = llm_build_norm(ctx0, inpL, hparams,model.layers[il].attn_norm, NULL,LLM_NORM_RMS, cb, il);cb(cur, "attn_norm", il);// self_attention{struct ggml_tensor * q = NULL;if (!is_lite) {// {n_embd, q_lora_rank} * {n_embd, n_tokens} -> {q_lora_rank, n_tokens}q = ggml_mul_mat(ctx0, model.layers[il].wq_a, cur);cb(q, "q", il);q = llm_build_norm(ctx0, q, hparams,model.layers[il].attn_q_a_norm, NULL,LLM_NORM_RMS, cb, il);cb(q, "q", il);// {q_lora_rank, n_head * hparams.n_embd_head_k} * {q_lora_rank, n_tokens} -> {n_head * hparams.n_embd_head_k, n_tokens}q = ggml_mul_mat(ctx0, model.layers[il].wq_b, q);cb(q, "q", il);} else {q = ggml_mul_mat(ctx0, model.layers[il].wq, cur);cb(q, "q", il);}// split into {n_head * n_embd_head_qk_nope, n_tokens}struct ggml_tensor * q_nope = ggml_view_3d(ctx0, q, n_embd_head_qk_nope, n_head, n_tokens,ggml_row_size(q->type, hparams.n_embd_head_k),ggml_row_size(q->type, hparams.n_embd_head_k * n_head),0);cb(q_nope, "q_nope", il);// and {n_head * n_embd_head_qk_rope, n_tokens}struct ggml_tensor * q_pe = ggml_view_3d(ctx0, q, n_embd_head_qk_rope, n_head, n_tokens,ggml_row_size(q->type, hparams.n_embd_head_k),ggml_row_size(q->type, hparams.n_embd_head_k * n_head),ggml_row_size(q->type, n_embd_head_qk_nope));cb(q_pe, "q_pe", il);// {n_embd, kv_lora_rank + n_embd_head_qk_rope} * {n_embd, n_tokens} -> {kv_lora_rank + n_embd_head_qk_rope, n_tokens}struct ggml_tensor * kv_pe_compresseed = ggml_mul_mat(ctx0, model.layers[il].wkv_a_mqa, cur);cb(kv_pe_compresseed, "kv_pe_compresseed", il);// split into {kv_lora_rank, n_tokens}struct ggml_tensor * kv_compressed = ggml_view_2d(ctx0, kv_pe_compresseed, kv_lora_rank, n_tokens,kv_pe_compresseed->nb[1],0);cb(kv_compressed, "kv_compressed", il);// and {n_embd_head_qk_rope, n_tokens}struct ggml_tensor * k_pe = ggml_view_3d(ctx0, kv_pe_compresseed, n_embd_head_qk_rope, 1, n_tokens,kv_pe_compresseed->nb[1],kv_pe_compresseed->nb[1],ggml_row_size(kv_pe_compresseed->type, kv_lora_rank));cb(k_pe, "k_pe", il);kv_compressed = ggml_cont(ctx0, kv_compressed); // TODO: the CUDA backend does not support non-contiguous normkv_compressed = llm_build_norm(ctx0, kv_compressed, hparams,model.layers[il].attn_kv_a_norm, NULL,LLM_NORM_RMS, cb, il);cb(kv_compressed, "kv_compressed", il);// {kv_lora_rank, n_head * (n_embd_head_qk_nope + n_embd_head_v)} * {kv_lora_rank, n_tokens} -> {n_head * (n_embd_head_qk_nope + n_embd_head_v), n_tokens}struct ggml_tensor * kv = ggml_mul_mat(ctx0, model.layers[il].wkv_b, kv_compressed);cb(kv, "kv", il);// split into {n_head * n_embd_head_qk_nope, n_tokens}struct ggml_tensor * k_nope = ggml_view_3d(ctx0, kv, n_embd_head_qk_nope, n_head, n_tokens,ggml_row_size(kv->type, n_embd_head_qk_nope + hparams.n_embd_head_v),ggml_row_size(kv->type, n_head * (n_embd_head_qk_nope + hparams.n_embd_head_v)),0);cb(k_nope, "k_nope", il);// and {n_head * n_embd_head_v, n_tokens}struct ggml_tensor * v_states = ggml_view_3d(ctx0, kv, hparams.n_embd_head_v, n_head, n_tokens,ggml_row_size(kv->type, (n_embd_head_qk_nope + hparams.n_embd_head_v)),ggml_row_size(kv->type, (n_embd_head_qk_nope + hparams.n_embd_head_v)*n_head),ggml_row_size(kv->type, (n_embd_head_qk_nope)));cb(v_states, "v_states", il);v_states = ggml_cont(ctx0, v_states);cb(v_states, "v_states", il);v_states = ggml_view_2d(ctx0, v_states, hparams.n_embd_head_v * n_head, n_tokens,ggml_row_size(kv->type, hparams.n_embd_head_v * n_head),0);cb(v_states, "v_states", il);q_pe = ggml_cont(ctx0, q_pe); // TODO: the CUDA backend used to not support non-cont. RoPE, investigate removing thisq_pe = ggml_rope_ext(ctx0, q_pe, inp_pos, nullptr,n_rot, rope_type, n_ctx_orig, freq_base, freq_scale,ext_factor, attn_factor_scaled, beta_fast, beta_slow);cb(q_pe, "q_pe", il);// shared RoPE keyk_pe = ggml_cont(ctx0, k_pe); // TODO: the CUDA backend used to not support non-cont. RoPE, investigate removing thisk_pe = ggml_rope_ext(ctx0, k_pe, inp_pos, nullptr,n_rot, rope_type, n_ctx_orig, freq_base, freq_scale,ext_factor, attn_factor_scaled, beta_fast, beta_slow);cb(k_pe, "k_pe", il);struct ggml_tensor * q_states = ggml_concat(ctx0, q_nope, q_pe, 0);cb(q_states, "q_states", il);struct ggml_tensor * k_states = ggml_concat(ctx0, k_nope, ggml_repeat(ctx0, k_pe, q_pe), 0);cb(k_states, "k_states", il);cur = llm_build_kv(ctx0, lctx, kv_self, gf,model.layers[il].wo, NULL,k_states, v_states, q_states, KQ_mask, n_tokens, kv_head, n_kv, kq_scale, cb, il);}if (il == n_layer - 1) {// skip computing output for unused tokensstruct ggml_tensor * inp_out_ids = build_inp_out_ids();n_tokens = n_outputs;cur = ggml_get_rows(ctx0, cur, inp_out_ids);inpSA = ggml_get_rows(ctx0, inpSA, inp_out_ids);}struct ggml_tensor * ffn_inp = ggml_add(ctx0, cur, inpSA);cb(ffn_inp, "ffn_inp", il);cur = llm_build_norm(ctx0, ffn_inp, hparams,model.layers[il].ffn_norm, NULL,LLM_NORM_RMS, cb, il);cb(cur, "ffn_norm", il);if ((uint32_t) il < hparams.n_layer_dense_lead) {cur = llm_build_ffn(ctx0, lctx, cur,model.layers[il].ffn_up, NULL, NULL,model.layers[il].ffn_gate, NULL, NULL,model.layers[il].ffn_down, NULL, NULL,NULL,LLM_FFN_SILU, LLM_FFN_PAR, cb, il);cb(cur, "ffn_out", il);} else {// MoE branchggml_tensor * moe_out =llm_build_moe_ffn(ctx0, lctx, cur,model.layers[il].ffn_gate_inp,model.layers[il].ffn_up_exps,model.layers[il].ffn_gate_exps,model.layers[il].ffn_down_exps,model.layers[il].ffn_exp_probs_b,n_expert, n_expert_used,LLM_FFN_SILU, hparams.expert_weights_norm,true, hparams.expert_weights_scale,(enum llama_expert_gating_func_type) hparams.expert_gating_func,cb, il);cb(moe_out, "ffn_moe_out", il);// FFN shared expert{ggml_tensor * ffn_shexp = llm_build_ffn(ctx0, lctx, cur,model.layers[il].ffn_up_shexp, NULL, NULL,model.layers[il].ffn_gate_shexp, NULL, NULL,model.layers[il].ffn_down_shexp, NULL, NULL,NULL,LLM_FFN_SILU, LLM_FFN_PAR, cb, il);cb(ffn_shexp, "ffn_shexp", il);cur = ggml_add(ctx0, moe_out, ffn_shexp);cb(cur, "ffn_out", il);}}cur = ggml_add(ctx0, cur, ffn_inp);cur = lctx.cvec.apply_to(ctx0, cur, il);cb(cur, "l_out", il);// input for next layerinpL = cur;}cur = inpL;cur = llm_build_norm(ctx0, cur, hparams,model.output_norm, NULL,LLM_NORM_RMS, cb, -1);cb(cur, "result_norm", -1);// lm_headcur = ggml_mul_mat(ctx0, model.output, cur);cb(cur, "result_output", -1);ggml_build_forward_expand(gf, cur);return gf;}

case LLM_ARCH_DEEPSEEK:andcase LLM_ARCH_DEEPSEEK2:

switch (model.arch) {case LLM_ARCH_LLAMA:case LLM_ARCH_MINICPM:case LLM_ARCH_GRANITE:case LLM_ARCH_GRANITE_MOE:{result = llm.build_llama();} break;case LLM_ARCH_DECI:{result = llm.build_deci();} break;case LLM_ARCH_BAICHUAN:{result = llm.build_baichuan();} break;case LLM_ARCH_FALCON:{result = llm.build_falcon();} break;case LLM_ARCH_GROK:{result = llm.build_grok();} break;case LLM_ARCH_STARCODER:{result = llm.build_starcoder();} break;case LLM_ARCH_REFACT:{result = llm.build_refact();} break;case LLM_ARCH_BERT:case LLM_ARCH_JINA_BERT_V2:case LLM_ARCH_NOMIC_BERT:{result = llm.build_bert();} break;case LLM_ARCH_BLOOM:{result = llm.build_bloom();} break;case LLM_ARCH_MPT:{result = llm.build_mpt();} break;case LLM_ARCH_STABLELM:{result = llm.build_stablelm();} break;case LLM_ARCH_QWEN:{result = llm.build_qwen();} break;case LLM_ARCH_QWEN2:{result = llm.build_qwen2();} break;case LLM_ARCH_QWEN2VL:{lctx.n_pos_per_token = 4;result = llm.build_qwen2vl();} break;case LLM_ARCH_QWEN2MOE:{result = llm.build_qwen2moe();} break;case LLM_ARCH_PHI2:{result = llm.build_phi2();} break;case LLM_ARCH_PHI3:case LLM_ARCH_PHIMOE:{result = llm.build_phi3();} break;case LLM_ARCH_PLAMO:{result = llm.build_plamo();} break;case LLM_ARCH_GPT2:{result = llm.build_gpt2();} break;case LLM_ARCH_CODESHELL:{result = llm.build_codeshell();} break;case LLM_ARCH_ORION:{result = llm.build_orion();} break;case LLM_ARCH_INTERNLM2:{result = llm.build_internlm2();} break;case LLM_ARCH_MINICPM3:{result = llm.build_minicpm3();} break;case LLM_ARCH_GEMMA:{result = llm.build_gemma();} break;case LLM_ARCH_GEMMA2:{result = llm.build_gemma2();} break;case LLM_ARCH_STARCODER2:{result = llm.build_starcoder2();} break;case LLM_ARCH_MAMBA:{result = llm.build_mamba();} break;case LLM_ARCH_XVERSE:{result = llm.build_xverse();} break;case LLM_ARCH_COMMAND_R:{result = llm.build_command_r();} break;case LLM_ARCH_COHERE2:{result = llm.build_cohere2();} break;case LLM_ARCH_DBRX:{result = llm.build_dbrx();} break;case LLM_ARCH_OLMO:{result = llm.build_olmo();} break;case LLM_ARCH_OLMO2:{result = llm.build_olmo2();} break;case LLM_ARCH_OLMOE:{result = llm.build_olmoe();} break;case LLM_ARCH_OPENELM:{result = llm.build_openelm();} break;case LLM_ARCH_GPTNEOX:{result = llm.build_gptneox();} break;case LLM_ARCH_ARCTIC:{result = llm.build_arctic();} break;case LLM_ARCH_DEEPSEEK:{result = llm.build_deepseek();} break;case LLM_ARCH_DEEPSEEK2:{result = llm.build_deepseek2();} break;case LLM_ARCH_CHATGLM:{result = llm.build_chatglm();} break;case LLM_ARCH_BITNET:{result = llm.build_bitnet();} break;case LLM_ARCH_T5:{if (lctx.is_encoding) {result = llm.build_t5_enc();} else {result = llm.build_t5_dec();}} break;case LLM_ARCH_T5ENCODER:{result = llm.build_t5_enc();} break;case LLM_ARCH_JAIS:{result = llm.build_jais();} break;case LLM_ARCH_NEMOTRON:{result = llm.build_nemotron();} break;case LLM_ARCH_EXAONE:{result = llm.build_exaone();} break;case LLM_ARCH_RWKV6:{result = llm.build_rwkv6();} break;case LLM_ARCH_RWKV6QWEN2:{result = llm.build_rwkv6qwen2();} break;case LLM_ARCH_CHAMELEON:{result = llm.build_chameleon();} break;case LLM_ARCH_WAVTOKENIZER_DEC:{result = llm.build_wavtokenizer_dec();} break;default:GGML_ABORT("fatal error");}

References

[1] Yongqiang Cheng, https://yongqiang.blog.csdn.net/

[2] huggingface/gguf, https://github.com/huggingface/huggingface.js/tree/main/packages/gguf

相关文章:

llama.cpp LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK2

llama.cpp LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK2 1. LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK22. LLM_ARCH_DEEPSEEK and LLM_ARCH_DEEPSEEK23. struct ggml_cgraph * build_deepseek() and struct ggml_cgraph * build_deepseek2()References 不宜吹捧中国大语言模型的同…...

检测到联想鼠标自动调出运行窗口,鼠标自己作为键盘操作

联想鼠标会自动时不时的调用“运行”窗口 然后鼠标自己作为键盘输入 然后打开这个网页 (不是点击了什么鼠标外加按键,这个鼠标除了左右和中间滚轮,没有其他按键了)...

-bash: ./uninstall.command: /bin/sh^M: 坏的解释器: 没有那个文件或目录

终端报错: -bash: ./uninstall.command: /bin/sh^M: 坏的解释器: 没有那个文件或目录原因:由于文件行尾符不匹配导致的。当脚本文件在Windows环境中创建或编辑后,行尾符为CRLF(即回车和换行,\r\n)…...

15天基础内容总复习

总复习 一.day01内容 1.JVM,JRE,JDK的关系 JVM: java虚拟机,用来运行java程序的,JVM本身是不夸平台的,每个操作系统都需要安装针对本操作系统的JVM所以: java通过jvm的不夸平台实现了java的跨平台JRE:java运行环境,包含jvm和核心类库JDK:java开发工具包,包含开发工具和JRE三…...

星火大模型接入及文本生成HTTP流式、非流式接口(JAVA)

文章目录 一、接入星火大模型二、基于JAVA实现HTTP非流式接口1.配置2.接口实现(1)分析接口请求(2)代码实现 3.功能测试(1)测试对话功能(2)测试记住上下文功能 三、基于JAVA实现HTTP流…...

如何将电脑桌面默认的C盘设置到D盘?详细操作步骤!

将电脑桌面默认的C盘设置到D盘的详细操作步骤! 本博文介绍如何将电脑桌面(默认为C盘)设置在D盘下。 首先,在D盘建立文件夹Desktop,完整的路径为D:\Desktop。winR,输入Regedit命令。(或者单击【…...

toRow和markRow的用法以及使用场景

Vue3 Raw API 完整指南 1. toRaw vs markRaw 1.1 基本概念 toRaw: 返回响应式对象的原始对象,用于临时获取原始数据结构,标记过后将会失去响应式markRaw: 标记一个对象永远不会转换为响应式对象,返回对象本身 1.2 使用对比 // toRaw 示例…...

Java中ExecutorService接口介绍、应用场景和示例代码

概述 ExecutorService 是 Java 中用于管理线程池的接口,它属于 java.util.concurrent 包。它提供了用于管理并发任务的功能,包括任务的提交、执行和线程池的生命周期管理。以下是对 ExecutorService 的详细讲解、应用场景和示例代码。 1. 详细讲解 1.…...

java 判断Date是上午还是下午

我要用Java生成表格统计信息,如下图所示: 所以就诞生了本文的内容。 在 Java 里,判断 Date 对象代表的时间是上午还是下午有多种方式,下面为你详细介绍不同的实现方法。 方式一:使用 java.util.Calendar Calendar 类…...

开源 CSS 框架 Tailwind CSS v4.0

开源 CSS 框架 Tailwind CSS v4.0 于 1 月 22 日正式发布,除了显著提升性能、简化配置体验外,还增强了功能特性,具体如下1: 性能提升 采用全新的高性能引擎 Oxide,带来了构建速度的巨大飞跃: 全量构建速度…...

)

微信小程序中实现进入页面时数字跳动效果(自定义animate-numbers组件)

微信小程序中实现进入页面时数字跳动效果 1. 组件定义,新建animate-numbers组件1.1 index.js1.2 wxml1.3 wxss 2. 使用组件 1. 组件定义,新建animate-numbers组件 1.1 index.js // components/animate-numbers/index.js Component({properties: {number: {type: Number,value…...

Kafka生产者ACK参数与同步复制

目录 生产者的ACK参数 ack等于0 ack等于1(默认) ack等于-1或all Kafka的同步复制 使用误区 生产者的ACK参数 Kafka的ack机制可以保证生产者发送的消息被broker接收成功。 Kafka producer有三种ack机制 ,分别是 0,1…...

C语言------数组从入门到精通

1.一维数组 目标:通过思维导图了解学习一维数组的核心知识点: 1.1定义 使用 类型名 数组名[数组长度]; 定义数组。 // 示例: int arr[5]; 1.2一维数组初始化 数组的初始化可以分为静态初始化和动态初始化两种方式。 它们的主要区别在于初始化的时机和内存分配的方…...

FLTK - FLTK1.4.1 - 搭建模板,将FLTK自带的实现搬过来做实验

文章目录 FLTK - FLTK1.4.1 - 搭建模板,将FLTK自带的实现搬过来做实验概述笔记my_fltk_test.cppfltk_test.hfltk_test.cxx用adjuster工程试了一下,好使。END FLTK - FLTK1.4.1 - 搭建模板,将FLTK自带的实现搬过来做实验 概述 用fluid搭建UI…...

postgres基准测试工具pgbench如何使用自定义的表结构和自定义sql

使用 pgbench 进行 PostgreSQL 性能测试时,可以自定义表结构和测试脚本来更好地模拟实际使用场景。以下是一个示例,说明如何自定义表结构和测试脚本。 自定义表结构 创建自定义表结构的 SQL 脚本。例如,创建一个名为 custom_schema.sql 的文…...

开发者交流平台项目部署到阿里云服务器教程

本文使用PuTTY软件在本地Windows系统远程控制Linux服务器;其中,Windows系统为Windows 10专业版,Linux系统为CentOS 7.6 64位。 1.工具软件的准备 maven:https://archive.apache.org/dist/maven/maven-3/3.6.1/binaries/apache-m…...

长期研究计划)

Seed Edge- AGI(人工智能通用智能)长期研究计划

Seed Edge 是字节跳动豆包大模型团队推出的 AGI(人工智能通用智能)长期研究计划12。以下是对它的具体介绍1: 名称含义 “Seed” 即豆包大模型团队名称,“Edge” 代表最前沿的 AGI 探索,整体意味着该项目将在 AGI 领域…...

DeepSeek学术写作测评第二弹:数据分析、图表解读,效果怎么样?

我是娜姐 迪娜学姐 ,一个SCI医学期刊编辑,探索用AI工具提效论文写作和发表。 针对最近全球热议的DeepSeek开源大模型,娜姐昨天分析了关于论文润色、中译英的详细效果测评: DeepSeek学术写作测评第一弹:论文润色&#…...

从单体应用到微服务的迁移过程

目录 1. 理解单体应用与微服务架构2. 微服务架构的优势3. 迁移的步骤步骤 1:评估当前单体应用步骤 2:确定服务边界步骤 3:逐步拆分单体应用步骤 4:微服务的基础设施和工具步骤 5:管理和优化微服务步骤 6:逐…...

Direct2D 极速教程(2) —— 画淳平

极速导航 创建新项目:002-DrawJunpeiWIC 是什么用 WIC 加载图片画淳平 创建新项目:002-DrawJunpei 右键解决方案 -> 添加 -> 新建项目 选择"空项目",项目名称为 “002-DrawJunpei”,然后按"创建" 将 “…...

Lustre Core 语法 - 比较表达式

概述 Lustre v6 中的 Lustre Core 部分支持的表达式种类中,支持比较表达式。相关的表达式包括 , <>, <, >, <, >。 相应的文法定义为 Expression :: Expression Expression | Expression <> Expression | Expression < Expression |…...

] 的使用详解)

C# 中 [MethodImpl(MethodImplOptions.Synchronized)] 的使用详解

总目录 前言 在C#中,[MethodImpl(MethodImplOptions.Synchronized)] 是一个特性(attribute),用于标记方法,使其在执行时自动获得锁。这类似于Java中的 synchronized 关键字,确保同一时刻只有一个线程可以执…...

在win11系统笔记本中使用Ollama部署deepseek制作一个本地AI小助手!原来如此简单!!!

大家新年好啊,明天就是蛇年啦,蛇年快乐! 最近DeepSeek真的太火了,我也跟随B站,使用Ollama在一台Win11系统的笔记本电脑部署了DeepSeek。由于我的云服务器性能很差,虽然笔记本的性能也一般,但是…...

03.01、三合一

03.01、[简单] 三合一 1、题目描述 三合一。描述如何只用一个数组来实现三个栈。 你应该实现push(stackNum, value)、pop(stackNum)、isEmpty(stackNum)、peek(stackNum)方法。stackNum表示栈下标,value表示压入的值。 构造函数会传入一个stackSize参数…...

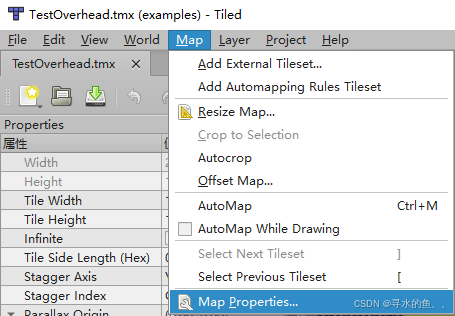

【Super Tilemap Editor使用详解】(十五):从 TMX 文件导入地图(Importing from TMX files)

Super Tilemap Editor 支持从 TMX 文件(Tiled Map Editor 的文件格式)导入图块地图。通过导入 TMX 文件,你可以将 Tiled 中设计的地图快速转换为 Unity 中的图块地图,并自动创建图块地图组(Tilemap Group)。以下是详细的导入步骤和准备工作。 一、导入前的准备工作 在导…...

在FreeBSD下安装Ollama并体验DeepSeek r1大模型

在FreeBSD下安装Ollama并体验DeepSeek r1大模型 在FreeBSD下安装Ollama 直接使用pkg安装即可: sudo pkg install ollama 安装完成后,提示: You installed ollama: the AI model runner. To run ollama, plese open 2 terminals. 1. In t…...

低代码系统-产品架构案例介绍、明道云(十一)

明道云HAP-超级应用平台(Hyper Application Platform),其实就是企业级应用平台,跟微搭类似。 通过自设计底层架构,兼容各种平台,使用低代码做到应用搭建、应用运维。 企业级应用平台最大的特点就是隐藏在冰山下的功能很深…...

)

编解码技术:最大秩距离码(Maximum Rank Distance Code)

最大秩距离码(Maximum Rank Distance Code,简称MRD码)是一类用于处理矩阵或线性空间中错误校正的编码。其主要特点是在矩阵数据结构中具备检测和纠正错误的能力,设计目标是实现给定矩阵尺寸和错误纠正能力下的最大可能码字数。MRD…...

Linux 4.19内核中的内存管理:x86_64架构下的实现与源码解析

在现代操作系统中,内存管理是核心功能之一,它直接影响系统的性能、稳定性和多任务处理能力。Linux 内核在 x86_64 架构下,通过复杂的机制实现了高效的内存管理,涵盖了虚拟内存、分页机制、内存分配、内存映射、内存保护、缓存管理等多个方面。本文将深入探讨这些机制,并结…...

python:taichi 绘制太极图

安装 pip install taichi pip install opencv-python pycairo where ti # -- taichi 高性能可视化 Demo 展览 ti gallery D:\Python39\Lib\site-packages\taichi\examples\algorithm\circle-packing\ 点击图片,执行 circle_packing_image.py 可见 编写 taijitu.py 如…...