【NLP的python库(03/4) 】: 全面概述

一、说明

Python 对自然语言处理库有丰富的支持。从文本处理、标记化文本并确定其引理开始,到句法分析、解析文本并分配句法角色,再到语义处理,例如识别命名实体、情感分析和文档分类,一切都由至少一个库提供。那么,你从哪里开始呢?

本文的目标是为每个核心 NLP 任务提供相关 Python 库的概述。这些库通过简要说明进行了解释,并给出了 NLP 任务的具体代码片段。继续我对 NLP 博客文章的介绍,本文仅显示用于文本处理、句法和语义分析以及文档语义等核心 NLP 任务的库。此外,在 NLP 实用程序类别中,还提供了用于语料库管理和数据集的库。

涵盖以下库:

- NLTK

- TextBlob

- Spacy

- SciKit Learn

- Gensim

二、核心自然语言处理任务

2.1 文本处理

任务:标记化、词形还原、词干提取、部分标记

NLTK 库为文本处理提供了一个完整的工具包,包括标记化、词干提取和词形还原。

from nltk.tokenize import sent_tokenize, word_tokenizeparagraph = '''Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an "AI winter"), followed by new approaches, success, and renewed funding. AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.'''sentences = []

for sent in sent_tokenize(paragraph):sentences.append(word_tokenize(sent))sentences[0]

# ['Artificial', 'intelligence', 'was', 'founded', 'as', 'an', 'academic', 'discipline'

使用 TextBlob,支持相同的文本处理任务。它与NLTK的区别在于更高级的语义结果和易于使用的数据结构:解析句子已经生成了丰富的语义信息。

from textblob import TextBlobtext = '''

Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an "AI winter"), followed by new approaches, success, and renewed funding. AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.

'''blob = TextBlob(text)blob.ngrams()

#[WordList(['Artificial', 'intelligence', 'was']),

# WordList(['intelligence', 'was', 'founded']),

# WordList(['was', 'founded', 'as']),blob.tokens

# WordList(['Artificial', 'intelligence', 'was', 'founded', 'as', 'an', 'academic', 'discipline', 'in', '1956', ',', 'and', 'in',

借助现代 NLP 库 Spacy,文本处理只是主要语义任务的丰富管道中的第一步。与其他库不同,它需要首先加载目标语言的模型。最近的模型不是启发式的,而是人工神经网络,尤其是变压器,它提供了更丰富的抽象,可以更好地与其他模型相结合。

import spacy

nlp = spacy.load('en_core_web_lg')text = '''

Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an "AI winter"), followed by new approaches, success, and renewed funding. AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.

'''doc = nlp(text)

tokens = [token for token in doc]print(tokens)

# [Artificial, intelligence, was, founded, as, an, academic, discipline

2.2 文本语法

任务:解析、词性标记、名词短语提取

从 NLTK 开始,支持所有语法任务。它们的输出作为 Python 原生数据结构提供,并且始终可以显示为简单的文本输出。

from nltk.tokenize import word_tokenize

from nltk import pos_tag, RegexpParser# Source: Wikipedia, Artificial Intelligence, https://en.wikipedia.org/wiki/Artificial_intelligence

text = '''

Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an "AI winter"), followed by new approaches, success, and renewed funding. AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.

'''pos_tag(word_tokenize(text))

# [('Artificial', 'JJ'),

# ('intelligence', 'NN'),

# ('was', 'VBD'),

# ('founded', 'VBN'),

# ('as', 'IN'),

# ('an', 'DT'),

# ('academic', 'JJ'),

# ('discipline', 'NN'),# noun chunk parser

# source: https://www.nltk.org/book_1ed/ch07.html

grammar = "NP: {<DT>?<JJ>*<NN>}"

parser = RegexpParser(grammar)parser.parse(pos_tag(word_tokenize(text)))

#(S

# (NP Artificial/JJ intelligence/NN)

# was/VBD

# founded/VBN

# as/IN

# (NP an/DT academic/JJ discipline/NN)

# in/IN

# 1956/CD

文本 Blob 在处理文本时立即提供 POS 标记。使用另一种方法,创建包含丰富语法信息的解析树。

from textblob import TextBlobtext = '''

Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an "AI winter"), followed by new approaches, success, and renewed funding. AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.

'''blob = TextBlob(text)

blob.tags

#[('Artificial', 'JJ'),

# ('intelligence', 'NN'),

# ('was', 'VBD'),

# ('founded', 'VBN'),blob.parse()

# Artificial/JJ/B-NP/O

# intelligence/NN/I-NP/O

# was/VBD/B-VP/O

# founded/VBN/I-VP/O

Spacy 库使用转换器神经网络来支持其语法任务。

import spacy

nlp = spacy.load('en_core_web_lg')for token in nlp(text):print(f'{token.text:<20}{token.pos_:>5}{token.tag_:>5}')#Artificial ADJ JJ

#intelligence NOUN NN

#was AUX VBD

#founded VERB VBN

2.3 文本语义

任务:命名实体识别、词义消歧、语义角色标记

语义分析是NLP方法开始不同的领域。使用 NLTK 时,生成的语法信息将在字典中查找以识别例如命名实体。因此,在处理较新的文本时,可能无法识别实体。

from nltk import download as nltk_download

from nltk.tokenize import word_tokenize

from nltk import pos_tag, ne_chunknltk_download('maxent_ne_chunker')

nltk_download('words')# Source: Wikipedia, Spacecraft, https://en.wikipedia.org/wiki/Spacecraft

text = '''

As of 2016, only three nations have flown crewed spacecraft: USSR/Russia, USA, and China. The first crewed spacecraft was Vostok 1, which carried Soviet cosmonaut Yuri Gagarin into space in 1961, and completed a full Earth orbit. There were five other crewed missions which used a Vostok spacecraft. The second crewed spacecraft was named Freedom 7, and it performed a sub-orbital spaceflight in 1961 carrying American astronaut Alan Shepard to an altitude of just over 187 kilometers (116 mi). There were five other crewed missions using Mercury spacecraft.

'''pos_tag(word_tokenize(text))

# [('Artificial', 'JJ'),

# ('intelligence', 'NN'),

# ('was', 'VBD'),

# ('founded', 'VBN'),

# ('as', 'IN'),

# ('an', 'DT'),

# ('academic', 'JJ'),

# ('discipline', 'NN'),# noun chunk parser

# source: https://www.nltk.org/book_1ed/ch07.html

print(ne_chunk(pos_tag(word_tokenize(text))))

# (S

# As/IN

# of/IN

# [...]

# (ORGANIZATION USA/NNP)

# [...]

# which/WDT

# carried/VBD

# (GPE Soviet/JJ)

# cosmonaut/NN

# (PERSON Yuri/NNP Gagarin/NNP)

Spacy 库使用的转换器模型包含一个隐式的“时间戳”:它们的训练时间。这决定了模型使用了哪些文本,因此模型能够识别哪些文本。

import spacy

nlp = spacy.load('en_core_web_lg')text = '''

As of 2016, only three nations have flown crewed spacecraft: USSR/Russia, USA, and China. The first crewed spacecraft was Vostok 1, which carried Soviet cosmonaut Yuri Gagarin into space in 1961, and completed a full Earth orbit. There were five other crewed missions which used a Vostok spacecraft. The second crewed spacecraft was named Freedom 7, and it performed a sub-orbital spaceflight in 1961 carrying American astronaut Alan Shepard to an altitude of just over 187 kilometers (116 mi). There were five other crewed missions using Mercury spacecraft.

'''doc = nlp(paragraph)

for token in doc.ents:print(f'{token.text:<25}{token.label_:<15}')# 2016 DATE

# only three CARDINAL

# USSR GPE

# Russia GPE

# USA GPE

# China GPE

# first ORDINAL

# Vostok 1 PRODUCT

# Soviet NORP

# Yuri Gagarin PERSON

2.4 文档语义

任务:文本分类、主题建模、情感分析、毒性识别

情感分析也是NLP方法差异不同的任务:在词典中查找单词含义与在单词或文档向量上编码的学习单词相似性。

TextBlob 具有内置的情感分析,可返回文本中的极性(整体正面或负面内涵)和主观性(个人意见的程度)。

from textblob import TextBlobtext = '''

Artificial intelligence was founded as an academic discipline in 1956, and in the years since it has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an "AI winter"), followed by new approaches, success, and renewed funding. AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge, and imitating animal behavior. In the first decades of the 21st century, highly mathematical and statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.

'''blob = TextBlob(text)

blob.sentiment

#Sentiment(polarity=0.16180290297937355, subjectivity=0.42155589508530683)

Spacy 不包含文本分类功能,但可以作为单独的管道步骤进行扩展。下面的代码很长,包含几个 Spacy 内部对象和数据结构 - 以后的文章将更详细地解释这一点。

## train single label categorization from multi-label dataset

def convert_single_label(dataset, filename):db = DocBin()nlp = spacy.load('en_core_web_lg')for index, fileid in enumerate(dataset):cat_dict = {cat: 0 for cat in dataset.categories()}cat_dict[dataset.categories(fileid).pop()] = 1doc = nlp(get_text(fileid))doc.cats = cat_dictdb.add(doc)db.to_disk(filename)## load trained model and apply to text

nlp = spacy.load('textcat_multilabel_model/model-best')text = dataset.raw(42)doc = nlp(text)estimated_cats = sorted(doc.cats.items(), key=lambda i:float(i[1]), reverse=True)print(dataset.categories(42))

# ['orange']print(estimated_cats)

# [('nzdlr', 0.998894989490509), ('money-supply', 0.9969857335090637), ... ('orange', 0.7344251871109009),

SciKit Learn 是一个通用的机器学习库,提供许多聚类和分类算法。它仅适用于数字输入,因此需要对文本进行矢量化,例如使用 GenSims 预先训练的词向量,或使用内置的特征矢量化器。仅举一个例子,这里有一个片段,用于将原始文本转换为单词向量,然后将 KMeans分类器应用于它们。

from sklearn.feature_extraction import DictVectorizer

from sklearn.cluster import KMeansvectorizer = DictVectorizer(sparse=False)

x_train = vectorizer.fit_transform(dataset['train'])kmeans = KMeans(n_clusters=8, random_state=0, n_init="auto").fit(x_train)print(kmeans.labels_.shape)

# (8551, )print(kmeans.labels_)

# [4 4 4 ... 6 6 6]

最后,Gensim是一个专门用于大规模语料库的主题分类的库。以下代码片段加载内置数据集,矢量化每个文档的令牌,并执行聚类分析算法 LDA。仅在 CPU 上运行时,这些最多可能需要 15 分钟。

# source: https://radimrehurek.com/gensim/auto_examples/tutorials/run_lda.html, https://radimrehurek.com/gensim/auto_examples/howtos/run_downloader_api.htmlimport logging

import gensim.downloader as api

from gensim.corpora import Dictionary

from gensim.models import LdaModellogging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)docs = api.load('text8')

dictionary = Dictionary(docs)

corpus = [dictionary.doc2bow(doc) for doc in docs]_ = dictionary[0]

id2word = dictionary.id2token# Define and train the model

model = LdaModel(corpus=corpus,id2word=id2word,chunksize=2000,alpha='auto',eta='auto',iterations=400,num_topics=10,passes=20,eval_every=None

)print(model.num_topics)

# 10print(model.top_topics(corpus)[6])

# ([(4.201401e-06, 'done'),

# (4.1998064e-06, 'zero'),

# (4.1478743e-06, 'eight'),

# (4.1257395e-06, 'one'),

# (4.1166854e-06, 'two'),

# (4.085097e-06, 'six'),

# (4.080696e-06, 'language'),

# (4.050306e-06, 'system'),

# (4.041121e-06, 'network'),

# (4.0385708e-06, 'internet'),

# (4.0379923e-06, 'protocol'),

# (4.035399e-06, 'open'),

# (4.033435e-06, 'three'),

# (4.0334166e-06, 'interface'),

# (4.030141e-06, 'four'),

# (4.0283044e-06, 'seven'),

# (4.0163245e-06, 'no'),

# (4.0149207e-06, 'i'),

# (4.0072555e-06, 'object'),

# (4.007036e-06, 'programming')],

三、公用事业

3.1 语料库管理

NLTK为JSON格式的纯文本,降价甚至Twitter提要提供语料库阅读器。它通过传递文件路径来创建,然后提供基本统计信息以及迭代器以处理所有找到的文件。

from nltk.corpus.reader.plaintext import PlaintextCorpusReadercorpus = PlaintextCorpusReader('wikipedia_articles', r'.*\.txt')print(corpus.fileids())

# ['AI_alignment.txt', 'AI_safety.txt', 'Artificial_intelligence.txt', 'Machine_learning.txt', ...]print(len(corpus.sents()))

# 47289print(len(corpus.words()))

# 1146248

Gensim 处理文本文件以形成每个文档的词向量表示,然后可用于其主要用例主题分类。文档需要由包装遍历目录的迭代器处理,然后将语料库构建为词向量集合。但是,这种语料库表示很难外部化并与其他库重用。以下片段是上面的摘录 - 它将加载 Gensim 中包含的数据集,然后创建一个基于词向量的表示。

import gensim.downloader as api

from gensim.corpora import Dictionarydocs = api.load('text8')

dictionary = Dictionary(docs)

corpus = [dictionary.doc2bow(doc) for doc in docs]print('Number of unique tokens: %d' % len(dictionary))

# Number of unique tokens: 253854print('Number of documents: %d' % len(corpus))

# Number of documents: 1701

3.2 数据

NLTK提供了几个即用型数据集,例如路透社新闻摘录,欧洲议会会议记录以及古腾堡收藏的开放书籍。请参阅完整的数据集和模型列表。

from nltk.corpus import reutersprint(len(reuters.fileids()))

#10788print(reuters.categories()[:43])

# ['acq', 'alum', 'barley', 'bop', 'carcass', 'castor-oil', 'cocoa', 'coconut', 'coconut-oil', 'coffee', 'copper', 'copra-cake', 'corn', 'cotton', 'cotton-oil', 'cpi', 'cpu', 'crude', 'dfl', 'dlr', 'dmk', 'earn', 'fuel', 'gas', 'gnp', 'gold', 'grain', 'groundnut', 'groundnut-oil', 'heat', 'hog', 'housing', 'income', 'instal-debt', 'interest', 'ipi', 'iron-steel', 'jet', 'jobs', 'l-cattle', 'lead', 'lei', 'lin-oil']

SciKit Learn包括来自新闻组,房地产甚至IT入侵检测的数据集,请参阅完整列表。这是后者的快速示例。

from sklearn.datasets import fetch_20newsgroupsdataset = fetch_20newsgroups()

dataset.data[1]

# "From: guykuo@carson.u.washington.edu (Guy Kuo)\nSubject: SI Clock Poll - Final Call\nSummary: Final call for SI clock reports\nKeywords: SI,acceleration,clock,upgrade\nArticle-I.D.: shelley.1qvfo9INNc3s\nOrganization: University of Washington\nLines: 11\nNNTP-Posting-Host: carson.u.washington.edu\n\nA fair number of brave souls who upgraded their SI clock oscillator have\nshared their experiences for this poll.

四、结论

对于 Python 中的 NLP 项目,存在大量的库选择。为了帮助您入门,本文提供了 NLP 任务驱动的概述,其中包含紧凑的库解释和代码片段。从文本处理开始,您了解了如何从文本创建标记和引理。继续语法分析,您学习了如何生成词性标签和句子的语法结构。到达语义,识别文本中的命名实体以及文本情感也可以在几行代码中解决。对于语料库管理和访问预结构化数据集的其他任务,您还看到了库示例。总而言之,本文应该让你在处理核心 NLP 任务时为下一个 NLP 项目提供一个良好的开端。

相关文章:

【NLP的python库(03/4) 】: 全面概述

一、说明 Python 对自然语言处理库有丰富的支持。从文本处理、标记化文本并确定其引理开始,到句法分析、解析文本并分配句法角色,再到语义处理,例如识别命名实体、情感分析和文档分类,一切都由至少一个库提供。那么,你…...

面试理论篇三

关于异常机制篇 异常描述 目录 关于异常机制篇异常描述 注:自用 1,Java中的异常分为哪几类?各自的特点是什么? Java中的异常 可以分为 可查异常(Checked Exception)、运行时异常(Runtime Exception) 和 错误(Error)三类。可查异…...

ShardingSphere|shardingJDBC - 在使用数据分片功能情况下无法配置读写分离

问题场景: 最近在学习ShardingSphere,跟着教程一步步做shardingJDBC,但是想在开启数据分片的时候还能使用读写分离,一直失败,开始是一直能读写分离,但是分偏见规则感觉不生效,一直好像是走不进去…...

char s1[len + 1]; 报错说需要常量?

在C中,字符数组的大小必须是常量表达式,不能使用变量 len 作为数组大小。为了解决这个问题,你可以使用 new 运算符动态分配字符数组的内存,但在使用完后需要手动释放。 还有啥是只能这样的,还是说所有的动态都需要new&…...

【Linux】CentOS-6.8超详细安装教程

文章目录 1.CentOS介绍:2.必要准备:3.创建虚拟机:4 .安装系统 1.CentOS介绍: CentOS是一种基于开放源代码的Linux操作系统,它以其稳定性、安全性和可靠性而闻名,它有以下特点: 开源性࿱…...

【Java 进阶篇】MySQL启动与关闭、目录结构以及 SQL 相关概念

MySQL 服务启动与关闭 MySQL是一个常用的关系型数据库管理系统,通过启动和关闭MySQL服务,可以控制数据库的运行状态。本节将介绍如何在Windows和Linux系统上启动和关闭MySQL服务。 在Windows上启动和关闭MySQL服务 启动MySQL服务 在Windows上&#x…...

Android 11.0 mt6771新增分区功能实现一

1.前言 在11.0的系统开发中,在对某些特殊模块中关于数据的存储方面等需要新增分区来保存, 所以就需要在系统分区新增分区,接下来就来实现这个功能 2.mt6771新增分区功能实现一的核心类 build/make/core/Makefile build/make/core/board_config.mk build/make/core/config…...

LiveData简单使用

1.LiveData是基于观察者模式,可以用于处理消息的订阅分发的组件。 LiveData组件有以下特性: 1) 可以感知Activity、Fragment生命周期变化,因为他把自己注册成LifecycleObserver。 2) LiveData可以注册多个观察者,只有数据…...

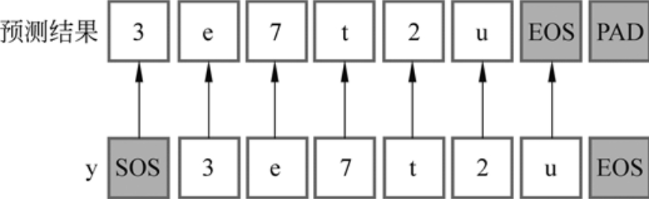

手动实现Transformer

Transformer和BERT可谓是LLM的基础模型,彻底搞懂极其必要。Transformer最初设想是作为文本翻译模型使用的,而BERT模型构建使用了Transformer的部分组件,如果理解了Transformer,则能很轻松地理解BERT。 一.Transformer模型架构 1…...

leetcode456 132 Pattern

给定数组,找到 i < j < k i < j < k i<j<k,使得 n u m s [ i ] < n u m s [ k ] < n u m s [ j ] nums[i] < nums[k] < nums[j] nums[i]<nums[k]<nums[j] 最开始肯定想着三重循环,时间复杂度 O ( n 3 )…...

WordPress外贸建站Astra免费版教程指南(2023)

在WordPress的外贸建站主题中,有许多备受欢迎的主题,如AAvada、Astra、Hello、Kadence等最佳WordPress外贸主题,它们都能满足建站需求并在市场上广受认可。然而,今天我要介绍的是一个不断颠覆建站人员思维的黑马——Astra主题。 …...

Vue之ElementUI实现登陆及注册

目录 编辑 前言 一、ElementUI简介 1. 什么是ElementUI 2. 使用ElementUI的优势 3. ElementUI的应用场景 二、登陆注册前端界面开发 1. 修改端口号 2. 下载ElementUI所需的js依赖 2.1 添加Element-UI模块 2.2 导入Element-UI模块 2.3 测试Element-UI是否能用 3.编…...

网络代理的多面应用:保障隐私、增强安全和数据获取

随着互联网的发展,网络代理在网络安全、隐私保护和数据获取方面变得日益重要。本文将深入探讨网络代理的多面应用,特别关注代理如何保障隐私、增强安全性以及为数据获取提供支持。 1. 代理服务器的基本原理 代理服务器是一种位于客户端和目标服务器之间…...

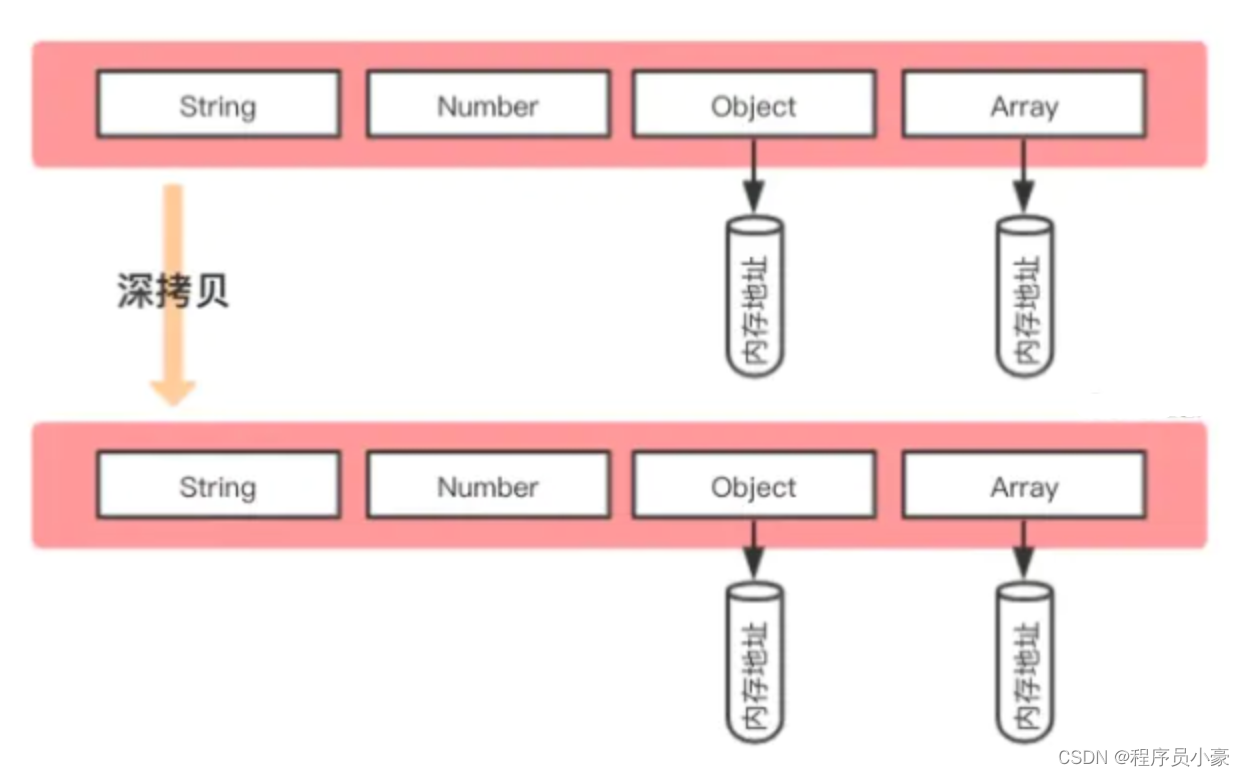

字节一面:深拷贝浅拷贝的区别?如何实现一个深拷贝?

前言 最近博主在字节面试中遇到这样一个面试题,这个问题也是前端面试的高频问题,我们经常需要对后端返回的数据进行处理才能渲染到页面上,一般我们会讲数据进行拷贝,在副本对象里进行处理,以免玷污原始数据,…...

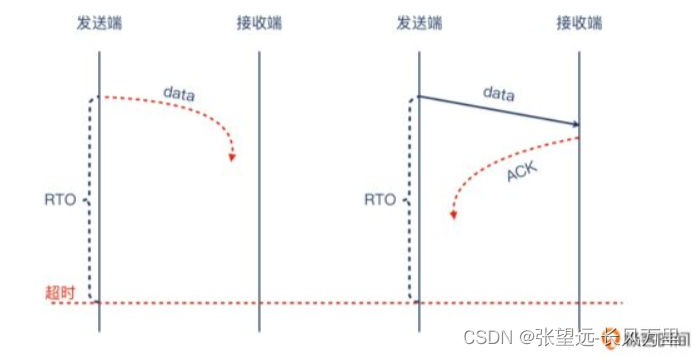

协议-TCP协议-基础概念02-TCP握手被拒绝-内核参数-指数退避原则-TCP窗口-TCP重传

协议-TCP协议-基础概念02-TCP握手被拒绝-TCP窗口 参考来源: 《极客专栏-网络排查案例课》 TCP连接都是TCP协议沟通的吗? 不是 如果服务端不想接受这次握手,它会怎么做呢? 内核参数中与TCP重试有关的参数(两个) -net.ipv4.tc…...

PDF文件压缩软件 PDF Squeezer mac中文版软件特点

PDF Squeezer mac是一款macOS平台上的PDF文件压缩软件,可以帮助用户快速地压缩PDF文件,从而减小文件大小,使其更容易共享、存储和传输。PDF Squeezer使用先进的压缩算法,可以在不影响文件质量的情况下减小文件大小。 PDF Squeezer…...

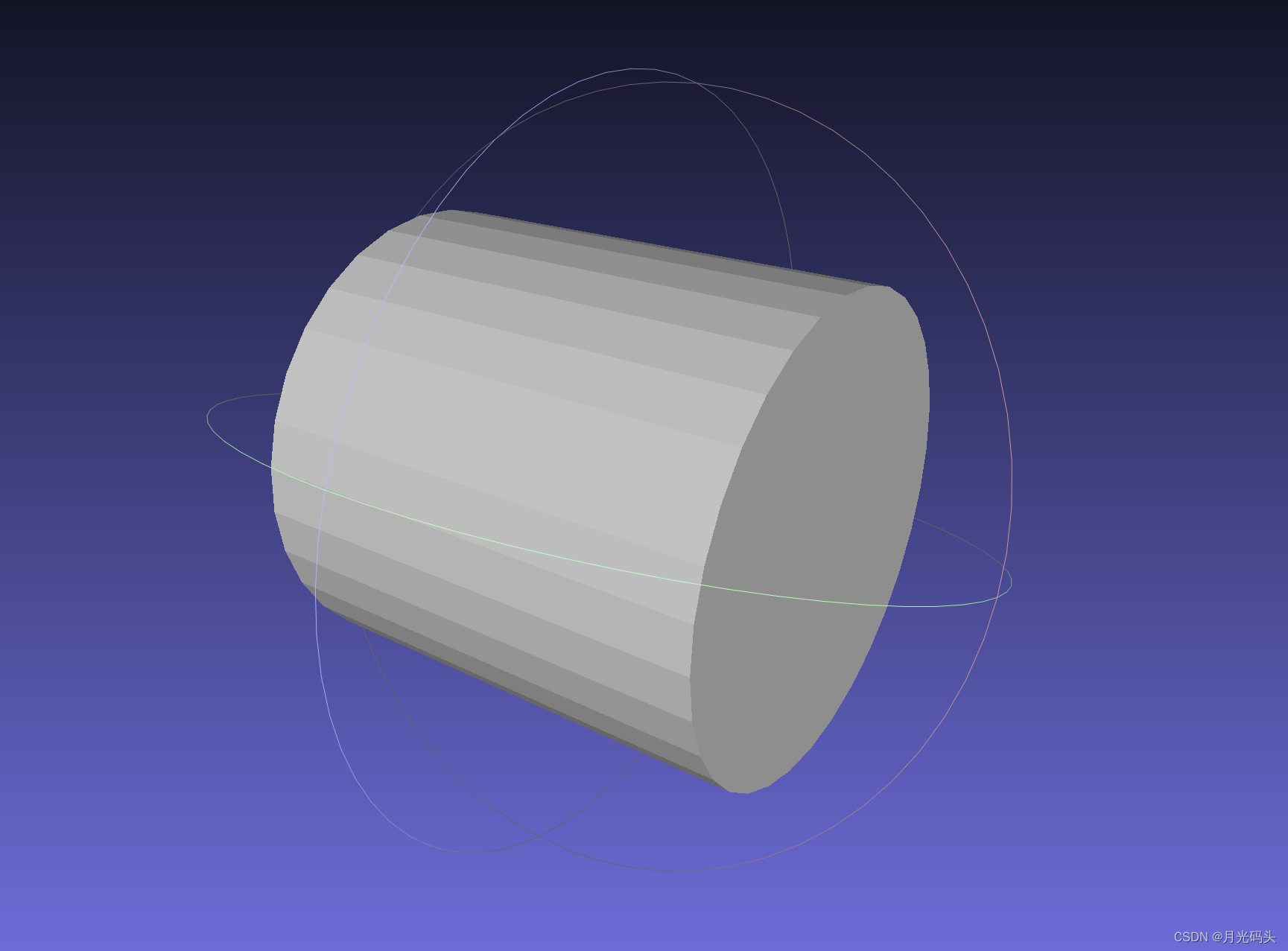

VS+Qt+opencascade三维绘图stp/step/igs/stl格式图形读取显示

程序示例精选 VSQtopencascade三维绘图stp/step/igs/stl格式图形读取显示 如需安装运行环境或远程调试,见文章底部个人QQ名片,由专业技术人员远程协助! 前言 这篇博客针对《VSQtopencascade三维绘图stp/step/igs/stl格式图形读取显示》编写…...

如何在Ubuntu中切换root用户和普通用户

问题 大家在新装Ubuntu之后,有没有发现自己进入不了root用户,su root后输入密码根本进入不了,这怎么回事呢? 打开Ubuntu命令终端; 输入命令:su root; 回车提示输入密码; 提示&…...

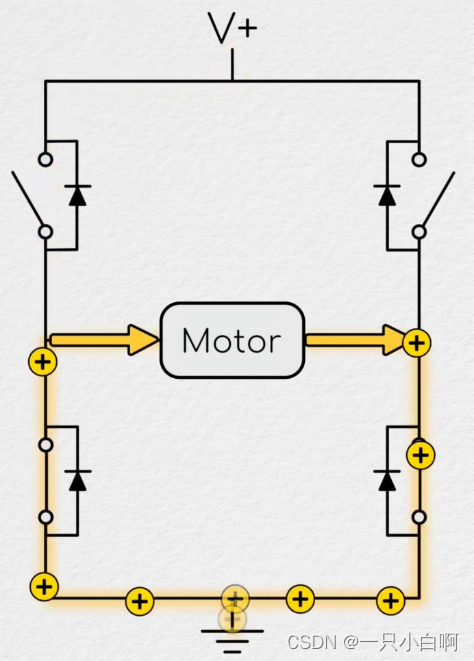

从零开始之了解电机及其控制(10)空间矢量理论

与一维数字转子位置不同,电流和电压都是二维的。可以在矩形笛卡尔平面中考虑这些尺寸。 用旋转角度和幅度来描述向量 虽然电流命令的幅度和施加的电压是进入控制器的误差项的函数,它们施加的角度是 d-q 轴方向的函数,因此也是转子位置的函数。…...

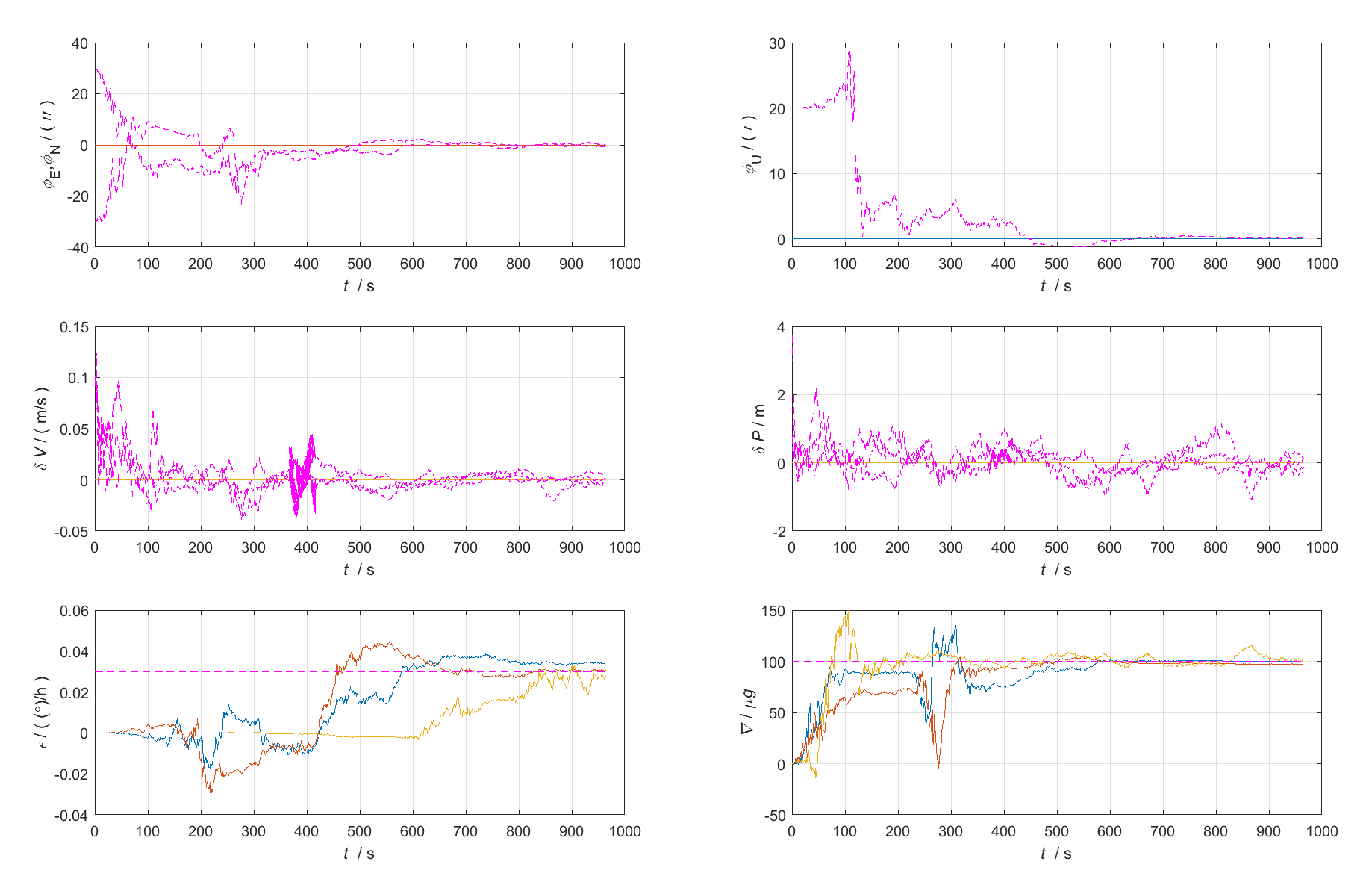

PSINS工具箱学习(一)下载安装初始化、SINS-GPS组合导航仿真、习惯约定与常用变量符号、数据导入转换、绘图显示

原始 Markdown文档、Visio流程图、XMind思维导图见:https://github.com/LiZhengXiao99/Navigation-Learning 文章目录 一、前言二、相关资源三、下载安装初始化1、下载PSINSyymmdd.rar工具箱文件2、解压文件3、初始化4、启动工具箱导览 四、习惯约定与常用变量符号1…...

)

Perplexity学术模式尚未开放的4个隐藏功能(仅限IEEE Fellow级用户测试通道泄露)

更多请点击: https://intelliparadigm.com 第一章:Perplexity学术模式尚未开放的4个隐藏功能(仅限IEEE Fellow级用户测试通道泄露) 离线语义缓存预热接口 Perplexity 内部测试版暴露了 /v2/academic/cache/warmup 端点ÿ…...

)

AI全领域热点速递(2026年5月11日)

💌 关心家人,从每日报平安开始。万年历提醒微信小程序,您值得体验。📰 每日整理AI领域核心动态,精选有价值资讯,精简可读,适合收藏备查。🤖 AI全领域热点速递(2026年5月1…...

)

面试题:文本表示方法详解——One-hot、Word2Vec、上下文表示、BERT词向量全解析(NLP基础高频考点)

1. 为什么面试官总爱问“文本表示方法”?1.1 这个问题的本质是什么任何 NLP 系统,不管是情感分析、文本分类、搜索推荐、智能客服,还是今天的大模型应用,本质上都绕不开一个前提:机器并不真正认识“文字”,…...

代码骨架生成器:从原理到实践,打造高效项目脚手架

1. 项目概述:从零到一的代码骨架生成器在软件开发领域,尤其是团队协作或个人快速启动新项目时,我们常常会陷入一种重复性的“仪式感”中:创建项目目录结构、初始化版本控制、配置构建工具、设置代码规范、编写基础配置文件……这些…...

为初创团队搭建统一的大模型api网关以控制开发成本

🚀 告别海外账号与网络限制!稳定直连全球优质大模型,限时半价接入中。 👉 点击领取海量免费额度 为初创团队搭建统一的大模型API网关以控制开发成本 对于初创技术团队而言,快速验证产品想法、迭代功能是生存的关键。在…...

Elasticsearch 查询日志:每个查询一行协调器级别日志,适用于 ES|QL、DSL、SQL 和 EQL

作者:来自 Elastic Najwa Harif 及 Valentin Crettaz 通过 Elasticsearch 查询日志,可以轻松理解查询对集群性能的影响。每个请求由一条协调器级别日志记录,覆盖 ES|QL、DSL、SQL 和 EQL,并提供完整的查询文本、追踪信息、可选用户…...

学习如何用CC-Switch + Claude Code 接入 DeepSeek-V4-Pro

1.概述 1.1.关键词 Claude Code:Anthropic 出品的 AI 编程命令行工具。在终端里让 AI 帮你写代码、改 Bug、分析项目。 CC-Switch:开源的图形化配置管理工具。一键切换 Claude Code 背后使用的模型,不用手动改配置文件。 1.2.目的 使用C…...

Stata 数据处理实战:时间序列数据的日期转换与聚合

1. 时间序列数据处理的常见痛点 刚接触时间序列分析的朋友们,经常会遇到这样的困扰:从Excel导入的数据明明是日期格式,到了Stata里却变成了看不懂的字符;想按周汇总销售数据,却发现系统根本不认识"2023-W15"…...

SAP ABAP BADI AC_DOCUMENT:跨越VF01/MIRO/AFAB的智能凭证替代实战

1. 为什么需要AC_DOCUMENT BADI? 在SAP标准业务流程中,GGB1提供的凭证替代功能已经能满足大部分常规需求。但实际业务往往更复杂——比如销售开票时,需要根据付款条件动态替换税科目;发票校验时,要根据供应商信息自动填…...

SKILLS All-in-one:开源AI Agent技能库,标准化Prompt与工具函数,提升开发效率

1. 项目定位与核心价值如果你和我一样,在过去一年里深度使用过 Claude Code、ChatGPT 或者尝试搭建自己的 AI Agent 工作流,那你一定遇到过这个痛点:每次想给 AI 装个新“技能”,都得自己从头写 Prompt、设计工具调用逻辑、处理错…...