Understanding FFmpeg in Depth: Using the libavformat API (Part 1)

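libavformat (lavf) is a library for handling various media container formats. Its two main purposes are demuxing, i.e. splitting a media file into its component streams, and the reverse process of muxing, i.e. writing supplied data in a specified container format. It also has an I/O module that supports several protocols for accessing data (file, tcp, http and others). In FFmpeg releases before 4.0 you must call av_register_all() before using lavf, to register all compiled-in muxers, demuxers and protocols; the function was deprecated in 4.0 and removed in 5.0, so code written against newer releases should not call it. Unless you are absolutely sure you will not use libavformat's network capabilities, you should also call avformat_network_init(); recent FFmpeg documentation describes this call as optional, kept mainly to work around thread-safety issues with older GnuTLS or OpenSSL libraries.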

1. Muxing Media Streams

Muxing takes encoded data in the form of AVPackets and writes it, in the specified container format, to a file or another output byte stream.

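The main API calls that perform muxing are:

Initialization: avformat_alloc_output_context2();

Creating media streams (if any): avformat_new_stream();

Writing the file header: avformat_write_header();

Writing packets: av_write_frame() / av_interleaved_write_frame();

Writing the file trailer: av_write_trailer();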

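Code example:

From the official source tree: /doc/examples/muxing.c. The listing appears to correspond to the FFmpeg 5.0 era API: it uses const AVCodec / const AVOutputFormat pointers together with the channels and channel_layout fields, which later releases replaced with AVChannelLayout (ch_layout).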

#include <stdlib.h>
#include <stdio.h>
#include <string.h>
#include <math.h>

#include <libavutil/avassert.h>
#include <libavutil/channel_layout.h>
#include <libavutil/opt.h>
#include <libavutil/mathematics.h>
#include <libavutil/timestamp.h>
#include <libavcodec/avcodec.h>
#include <libavformat/avformat.h>
#include <libswscale/swscale.h>
#include <libswresample/swresample.h>

#define STREAM_DURATION   10.0
#define STREAM_FRAME_RATE 25 /* 25 images/s */
#define STREAM_PIX_FMT    AV_PIX_FMT_YUV420P /* default pix_fmt */

#define SCALE_FLAGS SWS_BICUBIC

// a wrapper around a single output AVStream
typedef struct OutputStream {
    AVStream *st;
    AVCodecContext *enc;

    /* pts of the next frame that will be generated */
    int64_t next_pts;
    int samples_count;

    AVFrame *frame;
    AVFrame *tmp_frame;

    AVPacket *tmp_pkt;

    float t, tincr, tincr2;

    struct SwsContext *sws_ctx;
    struct SwrContext *swr_ctx;
} OutputStream;

static void log_packet(const AVFormatContext *fmt_ctx, const AVPacket *pkt)
{
    AVRational *time_base = &fmt_ctx->streams[pkt->stream_index]->time_base;

    printf("pts:%s pts_time:%s dts:%s dts_time:%s duration:%s duration_time:%s stream_index:%d\n",
           av_ts2str(pkt->pts), av_ts2timestr(pkt->pts, time_base),
           av_ts2str(pkt->dts), av_ts2timestr(pkt->dts, time_base),
           av_ts2str(pkt->duration), av_ts2timestr(pkt->duration, time_base),
           pkt->stream_index);
}

static int write_frame(AVFormatContext *fmt_ctx, AVCodecContext *c,
                       AVStream *st, AVFrame *frame, AVPacket *pkt)
{
    int ret;

    // send the frame to the encoder
    ret = avcodec_send_frame(c, frame);
    if (ret < 0) {
        fprintf(stderr, "Error sending a frame to the encoder: %s\n",
                av_err2str(ret));
        exit(1);
    }

    while (ret >= 0) {
        ret = avcodec_receive_packet(c, pkt);
        if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF)
            break;
        else if (ret < 0) {
            fprintf(stderr, "Error encoding a frame: %s\n", av_err2str(ret));
            exit(1);
        }

        /* rescale output packet timestamp values from codec to stream timebase */
        av_packet_rescale_ts(pkt, c->time_base, st->time_base);
        pkt->stream_index = st->index;

        /* Write the compressed frame to the media file. */
        log_packet(fmt_ctx, pkt);
        ret = av_interleaved_write_frame(fmt_ctx, pkt);
        /* pkt is now blank (av_interleaved_write_frame() takes ownership of
         * its contents and resets pkt), so that no unreferencing is necessary.
         * This would be different if one used av_write_frame(). */
        if (ret < 0) {
            fprintf(stderr, "Error while writing output packet: %s\n", av_err2str(ret));
            exit(1);
        }
    }

    return ret == AVERROR_EOF ? 1 : 0;
}

/* Add an output stream. */
static void add_stream(OutputStream *ost, AVFormatContext *oc,
                       const AVCodec **codec,
                       enum AVCodecID codec_id)
{
    AVCodecContext *c;
    int i;

    /* find the encoder */
    *codec = avcodec_find_encoder(codec_id);
    if (!(*codec)) {
        fprintf(stderr, "Could not find encoder for '%s'\n",
                avcodec_get_name(codec_id));
        exit(1);
    }

    ost->tmp_pkt = av_packet_alloc();
    if (!ost->tmp_pkt) {
        fprintf(stderr, "Could not allocate AVPacket\n");
        exit(1);
    }

    ost->st = avformat_new_stream(oc, NULL);
    if (!ost->st) {
        fprintf(stderr, "Could not allocate stream\n");
        exit(1);
    }
    ost->st->id = oc->nb_streams-1;
    c = avcodec_alloc_context3(*codec);
    if (!c) {
        fprintf(stderr, "Could not alloc an encoding context\n");
        exit(1);
    }
    ost->enc = c;

    switch ((*codec)->type) {
    case AVMEDIA_TYPE_AUDIO:
        c->sample_fmt  = (*codec)->sample_fmts ?
            (*codec)->sample_fmts[0] : AV_SAMPLE_FMT_FLTP;
        c->bit_rate    = 64000;
        c->sample_rate = 44100;
        if ((*codec)->supported_samplerates) {
            c->sample_rate = (*codec)->supported_samplerates[0];
            for (i = 0; (*codec)->supported_samplerates[i]; i++) {
                if ((*codec)->supported_samplerates[i] == 44100)
                    c->sample_rate = 44100;
            }
        }
        c->channels       = av_get_channel_layout_nb_channels(c->channel_layout);
        c->channel_layout = AV_CH_LAYOUT_STEREO;
        if ((*codec)->channel_layouts) {
            c->channel_layout = (*codec)->channel_layouts[0];
            for (i = 0; (*codec)->channel_layouts[i]; i++) {
                if ((*codec)->channel_layouts[i] == AV_CH_LAYOUT_STEREO)
                    c->channel_layout = AV_CH_LAYOUT_STEREO;
            }
        }
        c->channels        = av_get_channel_layout_nb_channels(c->channel_layout);
        ost->st->time_base = (AVRational){ 1, c->sample_rate };
        break;

    case AVMEDIA_TYPE_VIDEO:
        c->codec_id = codec_id;

        c->bit_rate = 400000;
        /* Resolution must be a multiple of two. */
        c->width    = 352;
        c->height   = 288;
        /* timebase: This is the fundamental unit of time (in seconds) in terms
         * of which frame timestamps are represented. For fixed-fps content,
         * timebase should be 1/framerate and timestamp increments should be
         * identical to 1. */
        ost->st->time_base = (AVRational){ 1, STREAM_FRAME_RATE };
        c->time_base       = ost->st->time_base;

        c->gop_size = 12; /* emit one intra frame every twelve frames at most */
        c->pix_fmt  = STREAM_PIX_FMT;
        if (c->codec_id == AV_CODEC_ID_MPEG2VIDEO) {
            /* just for testing, we also add B-frames */
            c->max_b_frames = 2;
        }
        if (c->codec_id == AV_CODEC_ID_MPEG1VIDEO) {
            /* Needed to avoid using macroblocks in which some coeffs overflow.
             * This does not happen with normal video, it just happens here as
             * the motion of the chroma plane does not match the luma plane. */
            c->mb_decision = 2;
        }
        break;

    default:
        break;
    }

    /* Some formats want stream headers to be separate. */
    if (oc->oformat->flags & AVFMT_GLOBALHEADER)
        c->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;
}

/**************************************************************/
/* audio output */

static AVFrame *alloc_audio_frame(enum AVSampleFormat sample_fmt,
                                  uint64_t channel_layout,
                                  int sample_rate, int nb_samples)
{
    AVFrame *frame = av_frame_alloc();
    int ret;

    if (!frame) {
        fprintf(stderr, "Error allocating an audio frame\n");
        exit(1);
    }

    frame->format         = sample_fmt;
    frame->channel_layout = channel_layout;
    frame->sample_rate    = sample_rate;
    frame->nb_samples     = nb_samples;

    if (nb_samples) {
        ret = av_frame_get_buffer(frame, 0);
        if (ret < 0) {
            fprintf(stderr, "Error allocating an audio buffer\n");
            exit(1);
        }
    }

    return frame;
}

static void open_audio(AVFormatContext *oc, const AVCodec *codec,
                       OutputStream *ost, AVDictionary *opt_arg)
{
    AVCodecContext *c;
    int nb_samples;
    int ret;
    AVDictionary *opt = NULL;

    c = ost->enc;

    /* open it */
    av_dict_copy(&opt, opt_arg, 0);
    ret = avcodec_open2(c, codec, &opt);
    av_dict_free(&opt);
    if (ret < 0) {
        fprintf(stderr, "Could not open audio codec: %s\n", av_err2str(ret));
        exit(1);
    }

    /* init signal generator */
    ost->t     = 0;
    ost->tincr = 2 * M_PI * 110.0 / c->sample_rate;
    /* increment frequency by 110 Hz per second */
    ost->tincr2 = 2 * M_PI * 110.0 / c->sample_rate / c->sample_rate;

    if (c->codec->capabilities & AV_CODEC_CAP_VARIABLE_FRAME_SIZE)
        nb_samples = 10000;
    else
        nb_samples = c->frame_size;

    ost->frame     = alloc_audio_frame(c->sample_fmt, c->channel_layout,
                                       c->sample_rate, nb_samples);
    ost->tmp_frame = alloc_audio_frame(AV_SAMPLE_FMT_S16, c->channel_layout,
                                       c->sample_rate, nb_samples);

    /* copy the stream parameters to the muxer */
    ret = avcodec_parameters_from_context(ost->st->codecpar, c);
    if (ret < 0) {
        fprintf(stderr, "Could not copy the stream parameters\n");
        exit(1);
    }

    /* create resampler context */
    ost->swr_ctx = swr_alloc();
    if (!ost->swr_ctx) {
        fprintf(stderr, "Could not allocate resampler context\n");
        exit(1);
    }

    /* set options */
    av_opt_set_int(ost->swr_ctx, "in_channel_count", c->channels, 0);
    av_opt_set_int(ost->swr_ctx, "in_sample_rate", c->sample_rate, 0);
    av_opt_set_sample_fmt(ost->swr_ctx, "in_sample_fmt", AV_SAMPLE_FMT_S16, 0);
    av_opt_set_int(ost->swr_ctx, "out_channel_count", c->channels, 0);
    av_opt_set_int(ost->swr_ctx, "out_sample_rate", c->sample_rate, 0);
    av_opt_set_sample_fmt(ost->swr_ctx, "out_sample_fmt", c->sample_fmt, 0);

    /* initialize the resampling context */
    if ((ret = swr_init(ost->swr_ctx)) < 0) {
        fprintf(stderr, "Failed to initialize the resampling context\n");
        exit(1);
    }
}

/* Prepare a 16 bit dummy audio frame of 'frame_size' samples and
 * 'nb_channels' channels. */
static AVFrame *get_audio_frame(OutputStream *ost)
{
    AVFrame *frame = ost->tmp_frame;
    int j, i, v;
    int16_t *q = (int16_t*)frame->data[0];

    /* check if we want to generate more frames */
    if (av_compare_ts(ost->next_pts, ost->enc->time_base,
                      STREAM_DURATION, (AVRational){ 1, 1 }) > 0)
        return NULL;

    for (j = 0; j < frame->nb_samples; j++) {
        v = (int)(sin(ost->t) * 10000);
        for (i = 0; i < ost->enc->channels; i++)
            *q++ = v;
        ost->t     += ost->tincr;
        ost->tincr += ost->tincr2;
    }

    frame->pts = ost->next_pts;
    ost->next_pts += frame->nb_samples;

    return frame;
}

/*
 * encode one audio frame and send it to the muxer
 * return 1 when encoding is finished, 0 otherwise
 */
static int write_audio_frame(AVFormatContext *oc, OutputStream *ost)
{
    AVCodecContext *c;
    AVFrame *frame;
    int ret;
    int dst_nb_samples;

    c = ost->enc;

    frame = get_audio_frame(ost);

    if (frame) {
        /* convert samples from native format to destination codec format, using the resampler */
        /* compute destination number of samples */
        dst_nb_samples = av_rescale_rnd(swr_get_delay(ost->swr_ctx, c->sample_rate) + frame->nb_samples,
                                        c->sample_rate, c->sample_rate, AV_ROUND_UP);
        av_assert0(dst_nb_samples == frame->nb_samples);

        /* when we pass a frame to the encoder, it may keep a reference to it
         * internally;
         * make sure we do not overwrite it here
         */
        ret = av_frame_make_writable(ost->frame);
        if (ret < 0)
            exit(1);

        /* convert to destination format */
        ret = swr_convert(ost->swr_ctx,
                          ost->frame->data, dst_nb_samples,
                          (const uint8_t **)frame->data, frame->nb_samples);
        if (ret < 0) {
            fprintf(stderr, "Error while converting\n");
            exit(1);
        }
        frame = ost->frame;

        frame->pts = av_rescale_q(ost->samples_count, (AVRational){1, c->sample_rate}, c->time_base);
        ost->samples_count += dst_nb_samples;
    }

    return write_frame(oc, c, ost->st, frame, ost->tmp_pkt);
}

/**************************************************************/
/* video output */

static AVFrame *alloc_picture(enum AVPixelFormat pix_fmt, int width, int height)
{
    AVFrame *picture;
    int ret;

    picture = av_frame_alloc();
    if (!picture)
        return NULL;

    picture->format = pix_fmt;
    picture->width  = width;
    picture->height = height;

    /* allocate the buffers for the frame data */
    ret = av_frame_get_buffer(picture, 0);
    if (ret < 0) {
        fprintf(stderr, "Could not allocate frame data.\n");
        exit(1);
    }

    return picture;
}

static void open_video(AVFormatContext *oc, const AVCodec *codec,
                       OutputStream *ost, AVDictionary *opt_arg)
{
    int ret;
    AVCodecContext *c = ost->enc;
    AVDictionary *opt = NULL;

    av_dict_copy(&opt, opt_arg, 0);

    /* open the codec */
    ret = avcodec_open2(c, codec, &opt);
    av_dict_free(&opt);
    if (ret < 0) {
        fprintf(stderr, "Could not open video codec: %s\n", av_err2str(ret));
        exit(1);
    }

    /* allocate and init a re-usable frame */
    ost->frame = alloc_picture(c->pix_fmt, c->width, c->height);
    if (!ost->frame) {
        fprintf(stderr, "Could not allocate video frame\n");
        exit(1);
    }

    /* If the output format is not YUV420P, then a temporary YUV420P
     * picture is needed too. It is then converted to the required
     * output format. */
    ost->tmp_frame = NULL;
    if (c->pix_fmt != AV_PIX_FMT_YUV420P) {
        ost->tmp_frame = alloc_picture(AV_PIX_FMT_YUV420P, c->width, c->height);
        if (!ost->tmp_frame) {
            fprintf(stderr, "Could not allocate temporary picture\n");
            exit(1);
        }
    }

    /* copy the stream parameters to the muxer */
    ret = avcodec_parameters_from_context(ost->st->codecpar, c);
    if (ret < 0) {
        fprintf(stderr, "Could not copy the stream parameters\n");
        exit(1);
    }
}

/* Prepare a dummy image. */
static void fill_yuv_image(AVFrame *pict, int frame_index,
                           int width, int height)
{
    int x, y, i;

    i = frame_index;

    /* Y */
    for (y = 0; y < height; y++)
        for (x = 0; x < width; x++)
            pict->data[0][y * pict->linesize[0] + x] = x + y + i * 3;

    /* Cb and Cr */
    for (y = 0; y < height / 2; y++) {
        for (x = 0; x < width / 2; x++) {
            pict->data[1][y * pict->linesize[1] + x] = 128 + y + i * 2;
            pict->data[2][y * pict->linesize[2] + x] = 64 + x + i * 5;
        }
    }
}

static AVFrame *get_video_frame(OutputStream *ost)
{
    AVCodecContext *c = ost->enc;

    /* check if we want to generate more frames */
    if (av_compare_ts(ost->next_pts, c->time_base,
                      STREAM_DURATION, (AVRational){ 1, 1 }) > 0)
        return NULL;

    /* when we pass a frame to the encoder, it may keep a reference to it
     * internally; make sure we do not overwrite it here */
    if (av_frame_make_writable(ost->frame) < 0)
        exit(1);

    if (c->pix_fmt != AV_PIX_FMT_YUV420P) {
        /* as we only generate a YUV420P picture, we must convert it
         * to the codec pixel format if needed */
        if (!ost->sws_ctx) {
            ost->sws_ctx = sws_getContext(c->width, c->height,
                                          AV_PIX_FMT_YUV420P,
                                          c->width, c->height,
                                          c->pix_fmt,
                                          SCALE_FLAGS, NULL, NULL, NULL);
            if (!ost->sws_ctx) {
                fprintf(stderr,
                        "Could not initialize the conversion context\n");
                exit(1);
            }
        }
        fill_yuv_image(ost->tmp_frame, ost->next_pts, c->width, c->height);
        sws_scale(ost->sws_ctx, (const uint8_t * const *) ost->tmp_frame->data,
                  ost->tmp_frame->linesize, 0, c->height, ost->frame->data,
                  ost->frame->linesize);
    } else {
        fill_yuv_image(ost->frame, ost->next_pts, c->width, c->height);
    }

    ost->frame->pts = ost->next_pts++;

    return ost->frame;
}

/*
 * encode one video frame and send it to the muxer
 * return 1 when encoding is finished, 0 otherwise
 */
static int write_video_frame(AVFormatContext *oc, OutputStream *ost)
{
    return write_frame(oc, ost->enc, ost->st, get_video_frame(ost), ost->tmp_pkt);
}

static void close_stream(AVFormatContext *oc, OutputStream *ost)
{
    avcodec_free_context(&ost->enc);
    av_frame_free(&ost->frame);
    av_frame_free(&ost->tmp_frame);
    av_packet_free(&ost->tmp_pkt);
    sws_freeContext(ost->sws_ctx);
    swr_free(&ost->swr_ctx);
}

/**************************************************************/
/* media file output */

int main(int argc, char **argv)
{
    OutputStream video_st = { 0 }, audio_st = { 0 };
    const AVOutputFormat *fmt;
    const char *filename;
    AVFormatContext *oc;
    const AVCodec *audio_codec, *video_codec;
    int ret;
    int have_video = 0, have_audio = 0;
    int encode_video = 0, encode_audio = 0;
    AVDictionary *opt = NULL;
    int i;

    if (argc < 2) {
        printf("usage: %s output_file\n"
               "API example program to output a media file with libavformat.\n"
               "This program generates a synthetic audio and video stream, encodes and\n"
               "muxes them into a file named output_file.\n"
               "The output format is automatically guessed according to the file extension.\n"
               "Raw images can also be output by using '%%d' in the filename.\n"
               "\n", argv[0]);
        return 1;
    }

    filename = argv[1];
    for (i = 2; i+1 < argc; i+=2) {
        if (!strcmp(argv[i], "-flags") || !strcmp(argv[i], "-fflags"))
            av_dict_set(&opt, argv[i]+1, argv[i+1], 0);
    }

    /* allocate the output media context */
    avformat_alloc_output_context2(&oc, NULL, NULL, filename);
    if (!oc) {
        printf("Could not deduce output format from file extension: using MPEG.\n");
        avformat_alloc_output_context2(&oc, NULL, "mpeg", filename);
    }
    if (!oc)
        return 1;

    fmt = oc->oformat;

    /* Add the audio and video streams using the default format codecs
     * and initialize the codecs. */
    if (fmt->video_codec != AV_CODEC_ID_NONE) {
        add_stream(&video_st, oc, &video_codec, fmt->video_codec);
        have_video = 1;
        encode_video = 1;
    }
    if (fmt->audio_codec != AV_CODEC_ID_NONE) {
        add_stream(&audio_st, oc, &audio_codec, fmt->audio_codec);
        have_audio = 1;
        encode_audio = 1;
    }

    /* Now that all the parameters are set, we can open the audio and
     * video codecs and allocate the necessary encode buffers. */
    if (have_video)
        open_video(oc, video_codec, &video_st, opt);

    if (have_audio)
        open_audio(oc, audio_codec, &audio_st, opt);

    av_dump_format(oc, 0, filename, 1);

    /* open the output file, if needed */
    if (!(fmt->flags & AVFMT_NOFILE)) {
        ret = avio_open(&oc->pb, filename, AVIO_FLAG_WRITE);
        if (ret < 0) {
            fprintf(stderr, "Could not open '%s': %s\n", filename,
                    av_err2str(ret));
            return 1;
        }
    }

    /* Write the stream header, if any. */
    ret = avformat_write_header(oc, &opt);
    if (ret < 0) {
        fprintf(stderr, "Error occurred when opening output file: %s\n",
                av_err2str(ret));
        return 1;
    }

    while (encode_video || encode_audio) {
        /* select the stream to encode */
        if (encode_video &&
            (!encode_audio || av_compare_ts(video_st.next_pts, video_st.enc->time_base,
                                            audio_st.next_pts, audio_st.enc->time_base) <= 0)) {
            encode_video = !write_video_frame(oc, &video_st);
        } else {
            encode_audio = !write_audio_frame(oc, &audio_st);
        }
    }

    /* Write the trailer, if any. The trailer must be written before you
     * close the CodecContexts open when you wrote the header; otherwise
     * av_write_trailer() may try to use memory that was freed on
     * av_codec_close(). */
    av_write_trailer(oc);

    /* Close each codec. */
    if (have_video)
        close_stream(oc, &video_st);
    if (have_audio)
        close_stream(oc, &audio_st);

    if (!(fmt->flags & AVFMT_NOFILE))
        /* Close the output file. */
        avio_closep(&oc->pb);

    /* free the stream */
    avformat_free_context(oc);

    return 0;
}
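
The example leans on two timestamp operations: write_frame() rescales packet timestamps from the codec time base to the stream time base (av_packet_rescale_ts()), and the main loop uses av_compare_ts() to decide whether the next frame to encode should be video or audio. The sketch below models both in plain C so the arithmetic can be followed without linking FFmpeg. It is only an illustration under stated assumptions: Rational, rescale_ts(), compare_ts() and video_goes_next() are hypothetical names, not FFmpeg API, and unlike the real functions they handle only non-negative timestamps and do not guard against 64-bit overflow.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for AVRational: one tick lasts num/den seconds. */
typedef struct {
    int num;
    int den;
} Rational;

/* Convert a non-negative timestamp from time base `from` to time base `to`,
 * rounding to the nearest tick. Simplified analogue of av_rescale_q();
 * no overflow protection. */
static int64_t rescale_ts(int64_t ts, Rational from, Rational to)
{
    assert(ts >= 0 && from.num > 0 && from.den > 0 && to.num > 0 && to.den > 0);
    int64_t mul = (int64_t)from.num * to.den;
    int64_t div = (int64_t)from.den * to.num;
    return (ts * mul + div / 2) / div;
}

/* Compare two timestamps expressed in different time bases.
 * Returns -1, 0 or 1 when a is earlier than, equal to, or later than b.
 * Simplified analogue of av_compare_ts(); no overflow protection. */
static int compare_ts(int64_t ts_a, Rational tb_a, int64_t ts_b, Rational tb_b)
{
    assert(tb_a.den > 0 && tb_b.den > 0);
    /* Cross-multiply so both sides share the denominator tb_a.den * tb_b.den. */
    int64_t lhs = ts_a * tb_a.num * (int64_t)tb_b.den;
    int64_t rhs = ts_b * tb_b.num * (int64_t)tb_a.den;
    return (lhs > rhs) - (lhs < rhs);
}

/* Same condition as the stream-selection test in main():
 * returns 1 when the next frame to encode should be video. */
static int video_goes_next(int encode_video, int encode_audio,
                           int64_t video_pts, Rational video_tb,
                           int64_t audio_pts, Rational audio_tb)
{
    return encode_video &&
           (!encode_audio ||
            compare_ts(video_pts, video_tb, audio_pts, audio_tb) <= 0);
}
```

With a video time base of 1/25 and an audio time base of 1/44100, video frame 1 (0.04 s) is later than audio sample 1024 (about 0.023 s), so the loop would encode audio first. The 1/90000 time base used in the checks is only an illustrative target. Always encoding the stream whose next timestamp is earlier keeps the two streams roughly in step, which in turn keeps the amount of data av_interleaved_write_frame() has to buffer small.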

深入理解FFmpeg--libavformat接口使用(一)

libavformat(lavf)是一个用于处理各种媒体容器格式的库。它的主要两个目的是去复用(即将媒体文件拆分为组件流)和复用的反向过程(以指定的容器格式写入提供的数据)。它还有一个I/O模块,支持多种…...

坚持日更的意义何在?

概述 日更,就是每天更新一次或一篇文章。 坚持日更,就是坚持每天更新一次或一篇文章。 这里用了坚持,实际上不是恰当的表述,正确的感觉应该是让日更当作习惯,然后,让自己习惯每天去更新一篇文章。 日更…...

内容长度不同的div如何自动对齐展示

平时我们经常会遇到页面内容div结构相同页,这时为了美观我们会希望div会对齐展示,但当div里的文字长度不一时又不想写固定高度,就会出现div长度长长短短,此时实现样式可以这样写: .e-commerce-Wrap {display: flex;fle…...

Qt中https的使用,报错TLS initialization failed和不能打开ssl.lib问题解决

前言 在现代应用程序中,安全地传输数据变得越来越重要。Qt提供了一套完整的网络API来支持HTTP和HTTPS通信。然而,在实际开发过程中,开发者可能会遇到SSL相关的错误,例如“TLS initialization failed”,cantt open ssl…...

P2p网络性能测度及监测系统模型

P2p网络性能测度及监测系统模型 网络IP性能参数 IP包传输时延时延变化误差率丢失率虚假率吞吐量可用性连接性测度单向延迟测度单向分组丢失测度往返延迟测度 OSI中的位置-> 网络层 用途 面相业务的网络分布式计算网络游戏IP软件电话流媒体分发多媒体通信 业务质量 通过…...

zookeeper相关总结

1. ZooKeeper 的架构 ZooKeeper 采用主从架构(Leader-Follower 模型),包括以下组件: Leader:负责处理所有写请求和协调事务一致性。Follower:处理读请求并转发写请求给 Leader。参与 Leader 选举和事务提…...

【openwrt】Openwrt系统新增普通用户指南

文章目录 1 如何新增普通用户2 如何以普通用户权限运行服务3 普通用户如何访问root账户的ubus服务4 其他权限控制5 参考 Openwrt系统在默认情况下只提供一个 root账户,所有的服务都是以 root权限运行的,包括 WebUI也是通过root账户访问的,…...

【GD32】从零开始学GD32单片机 | WDGT看门狗定时器+独立看门狗和窗口看门狗例程(GD32F470ZGT6)

1. 简介 看门狗从本质上来说也是一个定时器,它是用来监测硬件或软件的故障的;它的工作原理大概就是开启后内部定时器会按照设置的频率更新,在程序运行过程中我们需不断地重装载看门狗,以使它不溢出;如果硬件或软件发生…...

详解曼达拉升级:如何用网络拓扑结构扩容BSV区块链

发表时间:2024年5月24日 BSV曼达拉升级是对BSV基础设施的战略性重塑,意在显著增强其性能,运行效率和可扩容。该概念于2018年提出,其战略落地将使BSV区块链顺利过渡,从现有的基于单一集成功能组件的网络拓扑结构&am…...

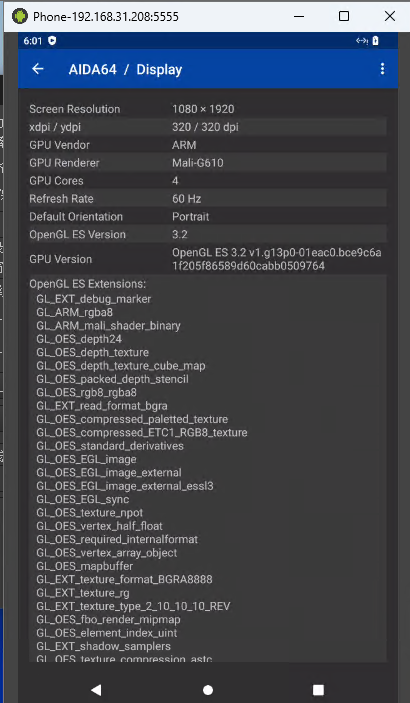

编译打包自己的云手机(redroid)镜像

前言 香橙派上跑云手机可以看之前的文章: 香橙派5plus上跑云手机方案一 redroid(带硬件加速)香橙派5plus上跑云手机方案二 waydroid 还有一个cuttlefish方案没说,后面再研究,cuttlefish的优势在于可以自定义内核且selinux是开启的…...

自动驾驶的规划控制简介

自动驾驶的规划控制是自动驾驶系统中的核心组成部分,它负责生成安全、合理且高效的行驶轨迹,并控制车辆按照这个轨迹行驶。规划控制分为几个层次,通常包括行为决策(Behavior Planning)、轨迹规划(Trajector…...

java配置nginx网络安全,防止国外ip访问,自动添加黑名单,需手动重新加载nginx

通过访问日志自动添加国外ip黑名单 创建一个类,自己添加一个main启动类即可测试 import lombok.AccessLevel; import lombok.NoArgsConstructor; import lombok.extern.slf4j.Slf4j; import org.json.JSONArray; import org.json.JSONObject; import org.sp…...

ARP协议

计算机网络资料下载:CSDN ARP协议 APR(address resolution protocol):地址解析协议,用于实现从IP地址到MAC地址的映射,即访问目标ip地址的mac地址。 网络层及以上采用的ip地址来标记网络接口,但是以太数据帧的传输,…...

Qt程序图标更改以及程序打包

Qt程序图标更改以及程序打包 1 windows1.1 cmake1.1.1 修改.exe程序图标1.1.2 修改显示页面左上角图标 1.2 qmake1.2.1 修改.exe程序图标1.2.2 修改显示页面左上角图标 2 程序打包2.1 MinGW2.2 Visual Studio 3 参考链接 QT6 6.7.2 1 windows 1.1 cmake 1.1.1 修改.exe程序图…...

普通人还有必要学习 Python 之类的编程语言吗?

在开始前分享一些编程的资料需要的同学评论888即可拿走 是我根据网友给的问题精心整理的对于编程的重要性,这里就不详谈了。 未来,我们和机器的交流会越来越多,编程可以简单看作是和机器对话并分发给机器任务。机器不仅越来越强大࿰…...

「Python」基于Gunicorn、Flask和Docker的高并发部署

目标预期 使用Gunicorn作为WSGI HTTP服务器,提供高效的Python应用服务。使用Flask作为轻量级Web应用框架,快速开发Web应用。利用Docker容器化技术,确保应用的可移植性和一致性。实现高并发处理,提高应用的响应速度和稳定性。过程 环境准备:安装Docker和Docker Compose。编…...

在攻防演练中遇到的一个“有马蜂的蜜罐”

在攻防演练中遇到的一个“有马蜂的蜜罐” 有趣的结论,请一路看到文章结尾 在前几天的攻防演练中,我跟队友的气氛氛围都很好,有说有笑,恐怕也是全场话最多、笑最多的队伍了。 也是因为我们遇到了许多相当有趣的事情,其…...

一文了解MySQL的表级锁

文章目录 ☃️概述☃️表级锁❄️❄️介绍❄️❄️表锁❄️❄️元数据锁❄️❄️意向锁⛷️⛷️⛷️ 介绍 ☃️概述 锁是计算机协调多个进程或线程并发访问某一资源的机制。在数据库中,除传统的计算资源(CPU、RAM、I/O)的争用以外࿰…...

LVS+Keepalive高可用

1、keepalive 调度器的高可用 vip地址主备之间的切换,主在工作时,vip地址只在主上,vip漂移到备服务器。 在主备的优先级不变的情况下,主恢复工作,vip会飘回到住服务器 1、配优先级 2、配置vip和真实服务器 3、主…...

网络安全防御【防火墙安全策略用户认证综合实验】

目录 一、实验拓扑图 二、实验要求 三、实验思路 四、实验步骤 1、打开ensp防火墙的web服务(带内管理的工作模式) 2、在FW1的web网页中网络相关配置 3、交换机LSW6(总公司)的相关配置: 4、路由器相关接口配置&a…...

Adobe-GenP:创意工具普惠化的技术破局实践

Adobe-GenP:创意工具普惠化的技术破局实践 【免费下载链接】Adobe-GenP Adobe CC 2019/2020/2021/2022/2023 GenP Universal Patch 3.0 项目地址: https://gitcode.com/gh_mirrors/ad/Adobe-GenP 一、问题象限:创意产业的授权困境与技术挑战 1.1…...

低查重AI教材写作攻略:工具选择、流程步骤与案例解析

谁没有过为教材框架而苦恼的经历呢?面对一片空白的文档,有时甚至会傻傻地发愣半个小时。该先讲解概念,还是当即提供案例呢?章节划分应该根据逻辑还是按课时进行?即使经常调整大纲,最终得到的结果要么不符合…...

终极免费CAJ转PDF解决方案:caj2pdf完整使用指南

终极免费CAJ转PDF解决方案:caj2pdf完整使用指南 【免费下载链接】caj2pdf Convert CAJ (China Academic Journals) files to PDF. 转换中国知网 CAJ 格式文献为 PDF。佛系转换,成功与否,皆是玄学。 项目地址: https://gitcode.com/gh_mirro…...

龙芯k - 走马观碑组MPU驱动移植僖

先回顾:三次握手(建立连接)核心流程(实际版) 为了让挥手流程衔接更顺畅,咱们先快速回顾三次握手的实际核心,避免上下文脱节: 第一步(客户端→服务器)…...

TypeScript 快速上手:环境配置与编译模型

1. 前言 TypeScript 在游戏开发领域的应用日益广泛,Cocos Creator、Egret、LayaAir 等引擎均将其作为主要开发语言,PuerTS 方案也让 Unity 开发者能够以 TypeScript 编写逻辑。对于具备 C# 或 C 背景的开发者而言,TypeScript 的类型系统并不…...

为什么你的Spring Boot 4.0 Agent始终“不就绪”?7步诊断清单+ClassLoader隔离冲突终极解法

第一章:Spring Boot 4.0 Agent-Ready 架构演进与核心挑战Spring Boot 4.0 将 JVM Agent 集成能力提升为核心架构特性,标志着从“可监控”迈向“原生可观测”的范式跃迁。该版本深度重构了启动生命周期、类加载器隔离机制与 Bean 注册流程,使字…...

网易云音乐永久直链解析API完整指南:高效获取稳定音乐链接

网易云音乐永久直链解析API完整指南:高效获取稳定音乐链接 【免费下载链接】netease-cloud-music-api 网易云音乐直链解析 API 项目地址: https://gitcode.com/gh_mirrors/ne/netease-cloud-music-api 还在为网易云音乐分享链接频繁失效而烦恼吗?…...

小白也能玩转AI配音!Fish Speech 1.5一键部署实战指南

小白也能玩转AI配音!Fish Speech 1.5一键部署实战指南 想让你的文字变成专业级语音吗?Fish Speech 1.5作为一款强大的AI语音合成工具,支持12种语言和声音克隆功能,现在通过CSDN星图镜像,只需简单几步就能快速体验。本…...

)

从URDF到MoveIt!手把手教你为六轴机械臂配置运动规划(避坑指南)

从URDF到MoveIt!六轴机械臂运动规划实战全解析 当你第一次在RViz中看到自己设计的六轴机械臂模型时,那种成就感难以言表。但很快你会发现,静态展示只是万里长征的第一步——如何让这个钢铁手臂真正"活"起来?这就是MoveI…...

)

避坑指南:Node-RED读取西门子PLC模拟量值,为什么你的DB块数据总是0?(附S7-1200配置全流程)

Node-RED与西门子S7-1200 PLC通信避坑实战:从DB块数据异常到稳定读取的完整解决方案 当工业物联网项目遇到Node-RED与西门子PLC通信时,DB块数据读取为0的问题就像一道无形的墙,让不少开发者陷入调试泥潭。上周深夜,我的工作站屏幕…...