[Linux] ClickHouse Deployment

A ClickHouse cluster uses ZooKeeper to keep replicas in sync, so a ZooKeeper cluster has to be set up before ClickHouse is deployed.

1. Uninstall

# List the installed ClickHouse packages:

[root@localhost ~]# yum list installed | grep clickhouse

# Remove the packages:

[root@localhost ~]# yum remove -y clickhouse-common-static

[root@localhost ~]# yum remove -y clickhouse-common-static-dbg

# After the removal, confirm that nothing is left:

[root@localhost ~]# yum list installed | grep clickhouse

# Delete the related configuration files and directories:

[root@localhost ~]# rm -rf /var/lib/clickhouse /var/log/clickhouse-server /var/run/clickhouse-server /etc/clickhouse-* /app/clickhouse
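The removal step names packages one at a time. As a rough sketch, the package names can instead be filtered out of the `yum list installed` output. `clickhouse_packages` is a helper name made up for this example; it is not part of yum or ClickHouse, and it assumes each package is listed on a single line with the name in the first column.

```shell
# Hypothetical helper: read `yum list installed`-style text on stdin and
# print the first column of every line whose package name starts with "clickhouse".
clickhouse_packages() {
    awk '$1 ~ /^clickhouse/ { print $1 }'
}
```

On a real host this could be wired up as `yum list installed | clickhouse_packages | xargs -r yum remove -y` (run as root; `-r` is the GNU xargs flag that skips the command when the list is empty).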

2. Create the user

# Check whether the user exists: look in /etc/passwd, or use the id command

[root@localhost ~]# getent passwd clickhouse | awk -F: '{print $1}'

# Show the groups the user belongs to

[root@localhost ~]# groups clickhouse

# Delete the user

[root@localhost ~]# userdel -r clickhouse

# Create the clickhouse user and group, and set a password

[root@localhost ~]# groupadd clickhouse

[root@localhost ~]# useradd clickhouse -g clickhouse

[root@localhost ~]# passwd clickhouse

# Password entered at the prompt in this example: abc#CK@2000
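The check-then-create sequence can be folded into a small script that is safe to run more than once. This is only a sketch: `user_exists` and `ensure_clickhouse_user` are names invented for this example, and the second function assumes it is run as root.

```shell
# Return success if the given account name exists on this machine.
user_exists() {
    id -u "$1" >/dev/null 2>&1
}

# Create the clickhouse group and user only when they are missing.
# Must be run as root; setting the password is left to `passwd clickhouse`.
ensure_clickhouse_user() {
    getent group clickhouse >/dev/null || groupadd clickhouse
    user_exists clickhouse || useradd clickhouse -g clickhouse
}
```

Calling `ensure_clickhouse_user` a second time does nothing, which avoids the "already exists" errors that `groupadd` and `useradd` would otherwise report.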

3. Install dependencies

[root@localhost ~]# yum list | grep libtool

[root@localhost ~]# yum install -y libtool unixODBC

4. Install ClickHouse

# The RPM packages are in /app/clickhouse:

[root@localhost ~]# ls /app/clickhouse

clickhouse-client-20.11.5.18-2.noarch.rpm

clickhouse-common-static-20.11.5.18-2.x86_64.rpm

clickhouse-server-20.11.5.18-2.noarch.rpm

# Install:

[root@localhost ~]# cd /app/clickhouse

[root@localhost clickhouse]# rpm -ivh *.rpm

# Check what was installed:

[root@localhost clickhouse]# rpm -qa | grep clickhouse

# Create the log and data directories:

[root@localhost clickhouse]# mkdir -p log/clickhouse-server data

# Change the file ownership:

[root@localhost clickhouse]# chown -R clickhouse:clickhouse /app/clickhouse /etc/clickhouse-server

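The installation creates the following directories:

- /etc/clickhouse-server : the server configuration directory, containing the global configuration config.xml and the user configuration users.xml.

- /var/lib/clickhouse : the default data directory. It is usually changed so that data is stored on a large-capacity disk.

- /var/log/clickhouse-server : the default log directory. It is usually changed so that logs are stored on a large-capacity disk.

- Executables under /usr/bin:

  - clickhouse: the main executable.

  - clickhouse-server: a symbolic link to the clickhouse executable, used to start the server.

  - clickhouse-client: a symbolic link to the clickhouse executable, used to start the client.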
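The symbolic links listed above can be checked with a short helper. This is a minimal sketch that assumes GNU `readlink` (for the `-f` flag); `points_to` is a name chosen for this example only.

```shell
# Succeed when both paths resolve to the same real file,
# e.g. when the first argument is a symlink to the second.
points_to() {
    [ "$(readlink -f "$1")" = "$(readlink -f "$2")" ]
}
```

For example, `points_to /usr/bin/clickhouse-server /usr/bin/clickhouse && echo "link OK"` prints the message only if the server command resolves to the main executable.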

5. Edit the configuration files

[root@localhost clickhouse]# cd /etc/clickhouse-server

[root@localhost clickhouse-server]# vim config.xml

[root@localhost clickhouse-server]# vim metrika.xml

[root@localhost clickhouse-server]# vim users.xml

5.1 Main configuration file: /etc/clickhouse-server/config.xml

<?xml version="1.0"?><!--NOTE: User and query level settings are set up in "users.xml" file.If you have accidentally specified user-level settings here, server won't start.You can either move the settings to the right place inside "users.xml" fileor add <skip_check_for_incorrect_settings>1</skip_check_for_incorrect_settings> here.--><yandex><logger><!-- Possible levels: https://github.com/pocoproject/poco/blob/poco-1.9.4-release/Foundation/include/Poco/Logger.h#L105 --><level>trace</level><log>/app/clickhouse/log/clickhouse-server/clickhouse-server.log</log><errorlog>/app/clickhouse/log/clickhouse-server/clickhouse-server.err.log</errorlog><size>1000M</size><count>10</count><!-- <console>1</console> --> <!-- Default behavior is autodetection (log to console if not daemon mode and is tty) --><!-- Per level overrides (legacy):For example to suppress logging of the ConfigReloader you can use:NOTE: levels.logger is reserved, see below.--><!--<levels><ConfigReloader>none</ConfigReloader></levels>--><!-- Per level overrides:For example to suppress logging of the RBAC for default user you can use:(But please note that the logger name maybe changed from version to version, even after minor upgrade)--><!--<levels><logger><name>ContextAccess (default)</name><level>none</level></logger><logger><name>DatabaseOrdinary (test)</name><level>none</level></logger></levels>--></logger><send_crash_reports><!-- Changing <enabled> to true allows sending crash reports to --><!-- the ClickHouse core developers team via Sentry https://sentry.io --><!-- Doing so at least in pre-production environments is highly appreciated --><enabled>false</enabled><!-- Change <anonymize> to true if you don't feel comfortable attaching the server hostname to the crash report --><anonymize>false</anonymize><!-- Default endpoint should be changed to different Sentry DSN only if you have --><!-- some in-house engineers or hired consultants who're going to debug ClickHouse issues for you 
--><endpoint>https://6f33034cfe684dd7a3ab9875e57b1c8d@o388870.ingest.sentry.io/5226277</endpoint></send_crash_reports><!--display_name>production</display_name--> <!-- It is the name that will be shown in the client --><http_port>8123</http_port><tcp_port>9000</tcp_port><mysql_port>9004</mysql_port><!-- For HTTPS and SSL over native protocol. --><!--<https_port>8443</https_port><tcp_port_secure>9440</tcp_port_secure>--><!-- Used with https_port and tcp_port_secure. Full ssl options list: https://github.com/ClickHouse-Extras/poco/blob/master/NetSSL_OpenSSL/include/Poco/Net/SSLManager.h#L71 --><openSSL><server> <!-- Used for https server AND secure tcp port --><!-- openssl req -subj "/CN=localhost" -new -newkey rsa:2048 -days 365 -nodes -x509 -keyout /etc/clickhouse-server/server.key -out /etc/clickhouse-server/server.crt --><certificateFile>/etc/clickhouse-server/server.crt</certificateFile><privateKeyFile>/etc/clickhouse-server/server.key</privateKeyFile><!-- openssl dhparam -out /etc/clickhouse-server/dhparam.pem 4096 --><dhParamsFile>/etc/clickhouse-server/dhparam.pem</dhParamsFile><verificationMode>none</verificationMode><loadDefaultCAFile>true</loadDefaultCAFile><cacheSessions>true</cacheSessions><disableProtocols>sslv2,sslv3</disableProtocols><preferServerCiphers>true</preferServerCiphers></server><client> <!-- Used for connecting to https dictionary source and secured Zookeeper communication --><loadDefaultCAFile>true</loadDefaultCAFile><cacheSessions>true</cacheSessions><disableProtocols>sslv2,sslv3</disableProtocols><preferServerCiphers>true</preferServerCiphers><!-- Use for self-signed: <verificationMode>none</verificationMode> --><invalidCertificateHandler><!-- Use for self-signed: <name>AcceptCertificateHandler</name> --><name>RejectCertificateHandler</name></invalidCertificateHandler></client></openSSL><!-- Default root page on http[s] server. 
For example load UI from https://tabix.io/ when opening http://localhost:8123 --><!--<http_server_default_response><![CDATA[<html ng-app="SMI2"><head><base href="http://ui.tabix.io/"></head><body><div ui-view="" class="content-ui"></div><script src="http://loader.tabix.io/master.js"></script></body></html>]]></http_server_default_response>--><!-- Port for communication between replicas. Used for data exchange. --><interserver_http_port>9009</interserver_http_port><!-- Hostname that is used by other replicas to request this server.If not specified, than it is determined analogous to 'hostname -f' command.This setting could be used to switch replication to another network interface.--><!--<interserver_http_host>example.yandex.ru</interserver_http_host>--><!-- Listen specified host. use :: (wildcard IPv6 address), if you want to accept connections both with IPv4 and IPv6 from everywhere. --><listen_host>::</listen_host><!-- Same for hosts with disabled ipv6: --><!-- <listen_host>0.0.0.0</listen_host> --><!-- Default values - try listen localhost on ipv4 and ipv6: --><!--<listen_host>::1</listen_host><listen_host>127.0.0.1</listen_host>--><!-- Don't exit if ipv6 or ipv4 unavailable, but listen_host with this protocol specified --><!-- <listen_try>0</listen_try> --><!-- Allow listen on same address:port --><!-- <listen_reuse_port>0</listen_reuse_port> --><!-- <listen_backlog>64</listen_backlog> --><max_connections>4096</max_connections><keep_alive_timeout>3</keep_alive_timeout><!-- Maximum number of concurrent queries. --><max_concurrent_queries>100</max_concurrent_queries><!-- Maximum memory usage (resident set size) for server process.Zero value or unset means default. 
Default is "max_server_memory_usage_to_ram_ratio" of available physical RAM.If the value is larger than "max_server_memory_usage_to_ram_ratio" of available physical RAM, it will be cut down.The constraint is checked on query execution time.If a query tries to allocate memory and the current memory usage plus allocation is greaterthan specified threshold, exception will be thrown.It is not practical to set this constraint to small values like just a few gigabytes,because memory allocator will keep this amount of memory in caches and the server will deny service of queries.--><max_server_memory_usage>0</max_server_memory_usage><!-- Maximum number of threads in the Global thread pool.This will default to a maximum of 10000 threads if not specified.This setting will be useful in scenarios where there are a large numberof distributed queries that are running concurrently but are idling mostof the time, in which case a higher number of threads might be required.--><max_thread_pool_size>10000</max_thread_pool_size><!-- On memory constrained environments you may have to set this to value larger than 1.--><max_server_memory_usage_to_ram_ratio>0.9</max_server_memory_usage_to_ram_ratio><!-- Simple server-wide memory profiler. 
Collect a stack trace at every peak allocation step (in bytes).Data will be stored in system.trace_log table with query_id = empty string.Zero means disabled.--><total_memory_profiler_step>4194304</total_memory_profiler_step><!-- Collect random allocations and deallocations and write them into system.trace_log with 'MemorySample' trace_type.The probability is for every alloc/free regardless to the size of the allocation.Note that sampling happens only when the amount of untracked memory exceeds the untracked memory limit,which is 4 MiB by default but can be lowered if 'total_memory_profiler_step' is lowered.You may want to set 'total_memory_profiler_step' to 1 for extra fine grained sampling.--><total_memory_tracker_sample_probability>0</total_memory_tracker_sample_probability><!-- Set limit on number of open files (default: maximum). This setting makes sense on Mac OS X because getrlimit() fails to retrievecorrect maximum value. --><!-- <max_open_files>262144</max_open_files> --><!-- Size of cache of uncompressed blocks of data, used in tables of MergeTree family.In bytes. Cache is single for server. Memory is allocated only on demand.Cache is used when 'use_uncompressed_cache' user setting turned on (off by default).Uncompressed cache is advantageous only for very short queries and in rare cases.--><uncompressed_cache_size>8589934592</uncompressed_cache_size><!-- Approximate size of mark cache, used in tables of MergeTree family.In bytes. Cache is single for server. Memory is allocated only on demand.You should not lower this value.--><mark_cache_size>5368709120</mark_cache_size><!-- Path to data directory, with trailing slash. --><path>/app/clickhouse/</path><!-- Path to temporary data for processing hard queries. 
--><tmp_path>/app/clickhouse/tmp/</tmp_path><!-- Policy from the <storage_configuration> for the temporary files.If not set <tmp_path> is used, otherwise <tmp_path> is ignored.Notes:- move_factor is ignored- keep_free_space_bytes is ignored- max_data_part_size_bytes is ignored- you must have exactly one volume in that policy--><!-- <tmp_policy>tmp</tmp_policy> --><!-- Directory with user provided files that are accessible by 'file' table function. --><user_files_path>/var/lib/clickhouse/user_files/</user_files_path><!-- LDAP server definitions. --><ldap_servers><!-- List LDAP servers with their connection parameters here to later 1) use them as authenticators for dedicated local users,who have 'ldap' authentication mechanism specified instead of 'password', or to 2) use them as remote user directories.Parameters:host - LDAP server hostname or IP, this parameter is mandatory and cannot be empty.port - LDAP server port, default is 636 if enable_tls is set to true, 389 otherwise.auth_dn_prefix, auth_dn_suffix - prefix and suffix used to construct the DN to bind to.Effectively, the resulting DN will be constructed as auth_dn_prefix + escape(user_name) + auth_dn_suffix string.Note, that this implies that auth_dn_suffix should usually have comma ',' as its first non-space character.enable_tls - flag to trigger use of secure connection to the LDAP server.Specify 'no' for plain text (ldap://) protocol (not recommended).Specify 'yes' for LDAP over SSL/TLS (ldaps://) protocol (recommended, the default).Specify 'starttls' for legacy StartTLS protocol (plain text (ldap://) protocol, upgraded to TLS).tls_minimum_protocol_version - the minimum protocol version of SSL/TLS.Accepted values are: 'ssl2', 'ssl3', 'tls1.0', 'tls1.1', 'tls1.2' (the default).tls_require_cert - SSL/TLS peer certificate verification behavior.Accepted values are: 'never', 'allow', 'try', 'demand' (the default).tls_cert_file - path to certificate file.tls_key_file - path to certificate key 
file.tls_ca_cert_file - path to CA certificate file.tls_ca_cert_dir - path to the directory containing CA certificates.tls_cipher_suite - allowed cipher suite (in OpenSSL notation).Example:<my_ldap_server><host>localhost</host><port>636</port><auth_dn_prefix>uid=</auth_dn_prefix><auth_dn_suffix>,ou=users,dc=example,dc=com</auth_dn_suffix><enable_tls>yes</enable_tls><tls_minimum_protocol_version>tls1.2</tls_minimum_protocol_version><tls_require_cert>demand</tls_require_cert><tls_cert_file>/path/to/tls_cert_file</tls_cert_file><tls_key_file>/path/to/tls_key_file</tls_key_file><tls_ca_cert_file>/path/to/tls_ca_cert_file</tls_ca_cert_file><tls_ca_cert_dir>/path/to/tls_ca_cert_dir</tls_ca_cert_dir><tls_cipher_suite>ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:AES256-GCM-SHA384</tls_cipher_suite></my_ldap_server>--></ldap_servers><!-- Sources to read users, roles, access rights, profiles of settings, quotas. --><user_directories><users_xml><!-- Path to configuration file with predefined users. --><path>users.xml</path></users_xml><local_directory><!-- Path to folder where users created by SQL commands are stored. 
--><path>/app/clickhouse/access/</path></local_directory><!-- To add an LDAP server as a remote user directory of users that are not defined locally, define a single 'ldap' sectionwith the following parameters:server - one of LDAP server names defined in 'ldap_servers' config section above.This parameter is mandatory and cannot be empty.roles - section with a list of locally defined roles that will be assigned to each user retrieved from the LDAP server.If no roles are specified, user will not be able to perform any actions after authentication.If any of the listed roles is not defined locally at the time of authentication, the authenthication attemptwill fail as if the provided password was incorrect.Example:<ldap><server>my_ldap_server</server><roles><my_local_role1 /><my_local_role2 /></roles></ldap>--></user_directories><!-- Default profile of settings. --><default_profile>default</default_profile><!-- Comma-separated list of prefixes for user-defined settings. --><custom_settings_prefixes></custom_settings_prefixes><!-- System profile of settings. This settings are used by internal processes (Buffer storage, Distributed DDL worker and so on). --><!-- <system_profile>default</system_profile> --><!-- Default database. --><default_database>default</default_database><!-- Server time zone could be set here.Time zone is used when converting between String and DateTime types,when printing DateTime in text formats and parsing DateTime from text,it is used in date and time related functions, if specific time zone was not passed as an argument.Time zone is specified as identifier from IANA time zone database, like UTC or Africa/Abidjan.If not specified, system time zone at server startup is used.Please note, that server could display time zone alias instead of specified name.Example: W-SU is an alias for Europe/Moscow and Zulu is an alias for UTC.--><timezone>Asia/Shanghai</timezone><!-- You can specify umask here (see "man umask"). 
Server will apply it on startup.Number is always parsed as octal. Default umask is 027 (other users cannot read logs, data files, etc; group can only read).--><!-- <umask>022</umask> --><!-- Perform mlockall after startup to lower first queries latencyand to prevent clickhouse executable from being paged out under high IO load.Enabling this option is recommended but will lead to increased startup time for up to a few seconds.--><mlock_executable>true</mlock_executable><!-- Reallocate memory for machine code ("text") using huge pages. Highly experimental. --><remap_executable>false</remap_executable><!-- Configuration of clusters that could be used in Distributed tables.https://clickhouse.tech/docs/en/operations/table_engines/distributed/--><remote_servers incl="clickhouse_remote_servers" ><!-- Test only shard config for testing distributed storage --><!-- <test_shard_localhost> --><!-- Inter-server per-cluster secret for Distributed queriesdefault: no secret (no authentication will be performed)If set, then Distributed queries will be validated on shards, so at least:- such cluster should exist on the shard,- such cluster should have the same secret.And also (and which is more important), the initial_user willbe used as current user for the query.Right now the protocol is pretty simple and it only takes into account:- cluster name- queryAlso it will be nice if the following will be implemented:- source hostname (see interserver_http_host), but then it will depends from DNS,it can use IP address instead, but then the you need to get correct on the initiator node.- target hostname / ip address (same notes as for source hostname)- time-based security tokens--><!-- <secret></secret> --><!-- <shard> --><!-- Optional. Whether to write data to just one of the replicas. Default: false (write data to all replicas). --><!-- <internal_replication>false</internal_replication> --><!-- Optional. Shard weight when writing data. Default: 1. 
--><!-- <weight>1</weight> --><!-- <replica><host>localhost</host><port>9000</port> --><!-- Optional. Priority of the replica for load_balancing. Default: 1 (less value has more priority). --><!-- <priority>1</priority> --><!-- </replica></shard></test_shard_localhost><test_cluster_two_shards_localhost><shard><replica><host>localhost</host><port>9000</port></replica></shard><shard><replica><host>localhost</host><port>9000</port></replica></shard></test_cluster_two_shards_localhost><test_cluster_two_shards><shard><replica><host>127.0.0.1</host><port>9000</port></replica></shard><shard><replica><host>127.0.0.2</host><port>9000</port></replica></shard></test_cluster_two_shards><test_cluster_two_shards_internal_replication><shard><internal_replication>true</internal_replication><replica><host>127.0.0.1</host><port>9000</port></replica></shard><shard><internal_replication>true</internal_replication><replica><host>127.0.0.2</host><port>9000</port></replica></shard></test_cluster_two_shards_internal_replication><test_shard_localhost_secure><shard><replica><host>localhost</host><port>9440</port><secure>1</secure></replica></shard></test_shard_localhost_secure><test_unavailable_shard><shard><replica><host>localhost</host><port>9000</port></replica></shard><shard><replica><host>localhost</host><port>1</port></replica></shard></test_unavailable_shard> --></remote_servers><!-- The list of hosts allowed to use in URL-related storage engines and table functions.If this section is not present in configuration, all hosts are allowed.--><remote_url_allow_hosts><!-- Host should be specified exactly as in URL. The name is checked before DNS resolution.Example: "yandex.ru", "yandex.ru." and "www.yandex.ru" are different hosts.If port is explicitly specified in URL, the host:port is checked as a whole.If host specified here without port, any port with this host allowed."yandex.ru" -> "yandex.ru:443", "yandex.ru:80" etc. 
is allowed, but "yandex.ru:80" -> only "yandex.ru:80" is allowed.If the host is specified as IP address, it is checked as specified in URL. Example: "[2a02:6b8:a::a]".If there are redirects and support for redirects is enabled, every redirect (the Location field) is checked.--><!-- Regular expression can be specified. RE2 engine is used for regexps.Regexps are not aligned: don't forget to add ^ and $. Also don't forget to escape dot (.) metacharacter(forgetting to do so is a common source of error).--></remote_url_allow_hosts><!-- If element has 'incl' attribute, then for it's value will be used corresponding substitution from another file.By default, path to file with substitutions is /etc/metrika.xml. It could be changed in config in 'include_from' element.Values for substitutions are specified in /yandex/name_of_substitution elements in that file.--><!--<include_from>/etc/clickhouse-server/metrika.xml</include_from> --><!-- ZooKeeper is used to store metadata about replicas, when using Replicated tables.Optional. If you don't use replicated tables, you could omit that.See https://clickhouse.yandex/docs/en/table_engines/replication/--><zookeeper incl="zookeeper-servers" optional="true" /><!-- Substitutions for parameters of replicated tables.Optional. If you don't use replicated tables, you could omit that.See https://clickhouse.yandex/docs/en/table_engines/replication/#creating-replicated-tables--><macros incl="macros" optional="true" /><!-- Reloading interval for embedded dictionaries, in seconds. Default: 3600. --><builtin_dictionaries_reload_interval>3600</builtin_dictionaries_reload_interval><!-- Maximum session timeout, in seconds. Default: 3600. --><max_session_timeout>3600</max_session_timeout><!-- Default session timeout, in seconds. Default: 60. --><default_session_timeout>60</default_session_timeout><!-- Sending data to Graphite for monitoring. Several sections can be defined. 
--><!--interval - send every X secondroot_path - prefix for keyshostname_in_path - append hostname to root_path (default = true)metrics - send data from table system.metricsevents - send data from table system.eventsasynchronous_metrics - send data from table system.asynchronous_metrics--><!--<graphite><host>localhost</host><port>42000</port><timeout>0.1</timeout><interval>60</interval><root_path>one_min</root_path><hostname_in_path>true</hostname_in_path><metrics>true</metrics><events>true</events><events_cumulative>false</events_cumulative><asynchronous_metrics>true</asynchronous_metrics></graphite><graphite><host>localhost</host><port>42000</port><timeout>0.1</timeout><interval>1</interval><root_path>one_sec</root_path><metrics>true</metrics><events>true</events><events_cumulative>false</events_cumulative><asynchronous_metrics>false</asynchronous_metrics></graphite>--><!-- Serve endpoint for Prometheus monitoring. --><!--endpoint - mertics path (relative to root, statring with "/")port - port to setup server. If not defined or 0 than http_port usedmetrics - send data from table system.metricsevents - send data from table system.eventsasynchronous_metrics - send data from table system.asynchronous_metricsstatus_info - send data from different component from CH, ex: Dictionaries status--><!--<prometheus><endpoint>/metrics</endpoint><port>9363</port><metrics>true</metrics><events>true</events><asynchronous_metrics>true</asynchronous_metrics><status_info>true</status_info></prometheus>--><!-- Query log. Used only for queries with setting log_queries = 1. --><query_log><!-- What table to insert data. 
If table is not exist, it will be created.When query log structure is changed after system update,then old table will be renamed and new table will be created automatically.--><database>system</database><table>query_log</table><!--PARTITION BY expr https://clickhouse.yandex/docs/en/table_engines/custom_partitioning_key/Example:event_datetoMonday(event_date)toYYYYMM(event_date)toStartOfHour(event_time)--><partition_by>toYYYYMM(event_date)</partition_by><!-- Instead of partition_by, you can provide full engine expression (starting with ENGINE = ) with parameters,Example: <engine>ENGINE = MergeTree PARTITION BY toYYYYMM(event_date) ORDER BY (event_date, event_time) SETTINGS index_granularity = 1024</engine>--><!-- Interval of flushing data. --><flush_interval_milliseconds>7500</flush_interval_milliseconds></query_log><!-- Trace log. Stores stack traces collected by query profilers.See query_profiler_real_time_period_ns and query_profiler_cpu_time_period_ns settings. --><trace_log><database>system</database><table>trace_log</table><partition_by>toYYYYMM(event_date)</partition_by><flush_interval_milliseconds>7500</flush_interval_milliseconds></trace_log><!-- Query thread log. Has information about all threads participated in query execution.Used only for queries with setting log_query_threads = 1. 
--><query_thread_log><database>system</database><table>query_thread_log</table><partition_by>toYYYYMM(event_date)</partition_by><flush_interval_milliseconds>7500</flush_interval_milliseconds></query_thread_log><!-- Uncomment if use part log.Part log contains information about all actions with parts in MergeTree tables (creation, deletion, merges, downloads).<part_log><database>system</database><table>part_log</table><flush_interval_milliseconds>7500</flush_interval_milliseconds></part_log>--><!-- Uncomment to write text log into table.Text log contains all information from usual server log but stores it in structured and efficient way.The level of the messages that goes to the table can be limited (<level>), if not specified all messages will go to the table.<text_log><database>system</database><table>text_log</table><flush_interval_milliseconds>7500</flush_interval_milliseconds><level></level></text_log>--><!-- Metric log contains rows with current values of ProfileEvents, CurrentMetrics collected with "collect_interval_milliseconds" interval. --><metric_log><database>system</database><table>metric_log</table><flush_interval_milliseconds>7500</flush_interval_milliseconds><collect_interval_milliseconds>1000</collect_interval_milliseconds></metric_log><!--Asynchronous metric log contains values of metrics fromsystem.asynchronous_metrics.--><asynchronous_metric_log><database>system</database><table>asynchronous_metric_log</table><!--Asynchronous metrics are updated once a minute, so there isno need to flush more often.--><flush_interval_milliseconds>60000</flush_interval_milliseconds></asynchronous_metric_log><!--OpenTelemetry log contains OpenTelemetry trace spans.--><opentelemetry_span_log><!--The default table creation code is insufficient, this <engine> specis a workaround. There is no 'event_time' for this log, but two times,start and finish. 
It is sorted by finish time, to avoid insertingdata too far away in the past (probably we can sometimes insert a spanthat is seconds earlier than the last span in the table, due to a racebetween several spans inserted in parallel). This gives the spans aglobal order that we can use to e.g. retry insertion into some externalsystem.--><engine>engine MergeTreepartition by toYYYYMM(finish_date)order by (finish_date, finish_time_us, trace_id)</engine><database>system</database><table>opentelemetry_span_log</table><flush_interval_milliseconds>7500</flush_interval_milliseconds></opentelemetry_span_log><!-- Crash log. Stores stack traces for fatal errors.This table is normally empty. --><crash_log><database>system</database><table>crash_log</table><partition_by /><flush_interval_milliseconds>1000</flush_interval_milliseconds></crash_log><!-- Parameters for embedded dictionaries, used in Yandex.Metrica.See https://clickhouse.yandex/docs/en/dicts/internal_dicts/--><!-- Path to file with region hierarchy. --><!-- <path_to_regions_hierarchy_file>/opt/geo/regions_hierarchy.txt</path_to_regions_hierarchy_file> --><!-- Path to directory with files containing names of regions --><!-- <path_to_regions_names_files>/opt/geo/</path_to_regions_names_files> --><!-- Configuration of external dictionaries. See:https://clickhouse.yandex/docs/en/dicts/external_dicts/--><dictionaries_config>*_dictionary.xml</dictionaries_config><!-- Uncomment if you want data to be compressed 30-100% better.Don't do that if you just started using ClickHouse.--><compression incl="clickhouse_compression"><!--<!- - Set of variants. Checked in order. Last matching case wins. If nothing matches, lz4 will be used. - -><case><!- - Conditions. All must be satisfied. Some conditions may be omitted. - -><min_part_size>10000000000</min_part_size> <!- - Min part size in bytes. - -><min_part_size_ratio>0.01</min_part_size_ratio> <!- - Min size of part relative to whole table size. 
- ->
            <!- - What compression method to use. - ->
            <method>zstd</method>
        </case>
    -->
    </compression>

    <!-- Allow to execute distributed DDL queries (CREATE, DROP, ALTER, RENAME) on cluster.
         Works only if ZooKeeper is enabled. Comment it if such functionality isn't required. -->
    <distributed_ddl>
        <!-- Path in ZooKeeper to queue with DDL queries -->
        <path>/clickhouse/task_queue/ddl</path>

        <!-- Settings from this profile will be used to execute DDL queries -->
        <!-- <profile>default</profile> -->

        <!-- Controls how much ON CLUSTER queries can be run simultaneously. -->
        <!-- <pool_size>1</pool_size> -->
    </distributed_ddl>

    <!-- Settings to fine tune MergeTree tables. See documentation in source code, in MergeTreeSettings.h -->
    <!--
    <merge_tree>
        <max_suspicious_broken_parts>5</max_suspicious_broken_parts>
    </merge_tree>
    -->

    <!-- Protection from accidental DROP.
         If size of a MergeTree table is greater than max_table_size_to_drop (in bytes) than table could not be dropped with any DROP query.
         If you want do delete one table and don't want to change clickhouse-server config, you could create special file <clickhouse-path>/flags/force_drop_table and make DROP once.
         By default max_table_size_to_drop is 50GB; max_table_size_to_drop=0 allows to DROP any tables.
         The same for max_partition_size_to_drop.
         Uncomment to disable protection.
    -->
    <!-- <max_table_size_to_drop>0</max_table_size_to_drop> -->
    <!-- <max_partition_size_to_drop>0</max_partition_size_to_drop> -->

    <!-- Example of parameters for GraphiteMergeTree table engine -->
    <graphite_rollup_example>
        <pattern>
            <regexp>click_cost</regexp>
            <function>any</function>
            <retention>
                <age>0</age>
                <precision>3600</precision>
            </retention>
            <retention>
                <age>86400</age>
                <precision>60</precision>
            </retention>
        </pattern>
        <default>
            <function>max</function>
            <retention>
                <age>0</age>
                <precision>60</precision>
            </retention>
            <retention>
                <age>3600</age>
                <precision>300</precision>
            </retention>
            <retention>
                <age>86400</age>
                <precision>3600</precision>
            </retention>
        </default>
    </graphite_rollup_example>

    <!-- Directory in <clickhouse-path> containing schema files for various input formats.
         The directory will be created if it doesn't exist.
    -->
    <format_schema_path>/app/clickhouse/format_schemas/</format_schema_path>

    <!-- Default query masking rules, matching lines would be replaced with something else in the logs
         (both text logs and system.query_log).
         name - name for the rule (optional)
         regexp - RE2 compatible regular expression (mandatory)
         replace - substitution string for sensitive data (optional, by default - six asterisks)
    -->
    <query_masking_rules>
        <rule>
            <name>hide encrypt/decrypt arguments</name>
            <regexp>((?:aes_)?(?:encrypt|decrypt)(?:_mysql)?)\s*\(\s*(?:'(?:\\'|.)+'|.*?)\s*\)</regexp>
            <!-- or more secure, but also more invasive:
                 (aes_\w+)\s*\(.*\)
            -->
            <replace>\1(???)</replace>
        </rule>
    </query_masking_rules>

    <!-- Uncomment to use custom http handlers.
         rules are checked from top to bottom, first match runs the handler
             url - to match request URL, you can use 'regex:' prefix to use regex match(optional)
             methods - to match request method, you can use commas to separate multiple method matches(optional)
             headers - to match request headers, match each child element(child element name is header name), you can use 'regex:' prefix to use regex match(optional)
         handler is request handler
             type - supported types: static, dynamic_query_handler, predefined_query_handler
             query - use with predefined_query_handler type, executes query when the handler is called
             query_param_name - use with dynamic_query_handler type, extracts and executes the value corresponding to the <query_param_name> value in HTTP request params
             status - use with static type, response status code
             content_type - use with static type, response content-type
             response_content - use with static type, Response content sent to client, when using the prefix 'file://' or 'config://', find the content from the file or configuration send to client.

    <http_handlers>
        <rule>
            <url>/</url>
            <methods>POST,GET</methods>
            <headers><pragma>no-cache</pragma></headers>
            <handler>
                <type>dynamic_query_handler</type>
                <query_param_name>query</query_param_name>
            </handler>
        </rule>

        <rule>
            <url>/predefined_query</url>
            <methods>POST,GET</methods>
            <handler>
                <type>predefined_query_handler</type>
                <query>SELECT * FROM system.settings</query>
            </handler>
        </rule>

        <rule>
            <handler>
                <type>static</type>
                <status>200</status>
                <content_type>text/plain; charset=UTF-8</content_type>
                <response_content>config://http_server_default_response</response_content>
            </handler>
        </rule>
    </http_handlers>
    -->

    <!-- Uncomment to disable ClickHouse internal DNS caching. -->
    <!-- <disable_internal_dns_cache>1</disable_internal_dns_cache> -->
</yandex>

5.2 Cluster configuration (two approaches)

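Method 1: Edit /etc/clickhouse-server/config.xml directly

<!-- Change the port (the default port 9000 conflicts with HDFS) -->
<tcp_port>9002</tcp_port>

<!-- Set the time zone -->
<timezone>Asia/Shanghai</timezone>

<!-- Listening address. "::" accepts connections from any IPv4 or IPv6 client. If the machine itself does not support IPv6, ClickHouse cannot be reached with this setting; use 0.0.0.0 instead. -->
<listen_host>::</listen_host>

<!-- Cluster configuration -->
<remote_servers>
    <!-- 3 shards, 1 replica each -->
    <test_cluster_three_shards_internal_replication>
        <shard>
            <!-- Whether to write data to only one of the replicas. The default is false, meaning data is written to all replicas, which can cause duplicates and inconsistency when replicated tables are used. Set it to true here: the Distributed table then writes to a single replica only, and synchronizing the other replicas is left to the replicated tables and ZooKeeper. -->
            <internal_replication>true</internal_replication>
            <replica>
                <host>cdh01</host>
                <port>9002</port>
            </replica>
        </shard>
        <shard>
            <internal_replication>true</internal_replication>
            <replica>
                <host>cdh02</host>
                <port>9002</port>
            </replica>
        </shard>
        <shard>
            <internal_replication>true</internal_replication>
            <replica>
                <host>cdh03</host>
                <port>9002</port>
            </replica>
        </shard>
    </test_cluster_three_shards_internal_replication>
</remote_servers>

<!-- ZooKeeper configuration -->
<zookeeper>
    <node>
        <host>cdh01</host>
        <port>2181</port>
    </node>
    <node>
        <host>cdh02</host>
        <port>2181</port>
    </node>
    <node>
        <host>cdh03</host>
        <port>2181</port>
    </node>
</zookeeper>

<!-- Replication identifiers, also called macros. They uniquely identify a replica, so every instance has its own values. -->
<macros>
    <!-- Shard number of the current node; must be unique within the cluster. The three nodes use 01, 02 and 03. -->
    <shard>01</shard>
    <!-- Unique replica identifier; must be unique among the replicas of a single shard. The three nodes use cdh01, cdh02 and cdh03. -->
    <replica>cdh01</replica>
</macros>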

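Method 2: Create a configuration file named metrika.xml (/etc/clickhouse-server/metrika.xml) that holds remote_servers, zookeeper, macros…

<yandex>
    <!-- ClickHouse cluster configuration tag; the name is fixed -->
    <clickhouse_remote_servers>
        <!-- Cluster name. It can be chosen freely but must not contain a dot. The name here stands for a cluster with 3 shards and 1 replica per shard. Note that the block below, as written, defines a single <shard> with three <replica> entries. -->
        <!-- A shard is a server that holds part of the data; to read all of the data, every shard must be accessed. -->
        <!-- A replica is a server that stores a copy of a shard's data; to read all of that data, accessing any one replica is enough. -->
        <clickhouse_cluster_3shards_1replicas>
            <!-- Shard. A ClickHouse cluster can be split into several shards, each of which stores data; a shard can be thought of as one node of the ClickHouse cluster. One or any number of shards can be configured, each shard can have one or any number of replicas, and different shards may have different numbers of replicas. With only one shard configured, a query is better described as a remote query than as a distributed query. -->
            <shard>
                <!-- Default is false: a write sends the data to all replicas. When set to true, a write picks one healthy replica, and synchronization happens automatically in the background. -->
                <internal_replication>true</internal_replication>
                <!-- Replicas of the shard. By default each shard has one replica; more can be configured. With replicas configured, a read can pick one available replica from each shard. If a replica is unavailable, the next one is tried in turn. This improves availability. -->
                <replica>
                    <host>2206:410:a10::111</host>
                    <port>9000</port>
                    <user>default</user>
                    <password>CK#password@2000</password>
                </replica>
                <replica>
                    <host>2206:410:a10::222</host>
                    <port>9000</port>
                    <user>default</user>
                    <password>CK#password@2000</password>
                </replica>
                <replica>
                    <host>2206:420:a10::333</host>
                    <port>9000</port>
                    <user>default</user>
                    <password>CK#password@2000</password>
                </replica>
            </shard>
        </clickhouse_cluster_3shards_1replicas>
    </clickhouse_remote_servers>

    <!-- ZooKeeper cluster -->
    <zookeeper-servers>
        <node index="1">
            <host>[2106:440:a30::444]</host>
            <port>2181</port>
        </node>
        <node index="2">
            <host>[2106:440:a30::555]</host>
            <port>2181</port>
        </node>
        <node index="3">
            <host>[2106:440:a30::666]</host>
            <port>2181</port>
        </node>
    </zookeeper-servers>

    <!-- Macros that distinguish the ClickHouse nodes; each node needs different values -->
    <macros>
        <shard>01</shard>
        <replica>[2206:410:a10::111]</replica>
    </macros>

    <!-- Setting ip to "::/0" allows access from any IP address, both IPv4 and IPv6 -->
    <networks>
        <ip>::/0</ip>
    </networks>

    <!-- Data compression settings for MergeTree tables -->
    <clickhouse_compression>
        <case>
            <!-- Minimum size of a data part -->
            <min_part_size>10000000000</min_part_size>
            <!-- Ratio of the data part size to the table size -->
            <min_part_size_ratio>0.01</min_part_size_ratio>
            <!-- Compression method -->
            <method>lz4</method>
        </case>
    </clickhouse_compression>
</yandex>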

Next, use the <include_from> tag in the global configuration file config.xml to import the configuration defined above.

<zookeeper incl="zookeeper-servers" optional="true" />
<remote_servers incl="clickhouse_remote_servers" optional="true" />
<macros incl="macros" optional="true" />
<include_from>/etc/clickhouse-server/metrika.xml</include_from>
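The remote_servers, zookeeper and macros sections come together when a replicated table is created across the cluster. The following is a minimal sketch, not part of the original setup: the helper name `replicated_table_ddl`, the table name, the columns and the ZooKeeper path layout `/clickhouse/tables/{shard}/...` are all assumptions chosen for illustration. The function only prints a statement and does not connect to any server.

```shell
# Hypothetical helper: print a CREATE TABLE statement that relies on the
# {shard} and {replica} macros defined per node in the <macros> section.
# Arguments: cluster name, database, table (all illustrative).
replicated_table_ddl() {
    cluster="$1"; db="$2"; table="$3"
    # {shard} and {replica} are left untouched by the shell; ClickHouse
    # substitutes them from each node's macros when the table is created.
    cat <<EOF
CREATE TABLE ${db}.${table} ON CLUSTER ${cluster}
(
    event_date Date,
    id UInt64
)
ENGINE = ReplicatedMergeTree('/clickhouse/tables/{shard}/${db}/${table}', '{replica}')
PARTITION BY toYYYYMM(event_date)
ORDER BY id;
EOF
}

# Cluster name taken from the method 1 example; table name is made up.
replicated_table_ddl test_cluster_three_shards_internal_replication default events_local
```

The printed statement could be pasted into a `clickhouse-client` session. ON CLUSTER statements depend on the distributed_ddl section and on a working ZooKeeper connection.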

5.3 User configuration file: /etc/clickhouse-server/users.xml

<?xml version="1.0"?>
<yandex>
    <!-- Profiles of settings. -->
    <profiles>
        <!-- Default settings. -->
        <default>
            <!-- Maximum memory usage for processing single query, in bytes. -->
            <max_memory_usage>10000000000</max_memory_usage>

            <!-- Use cache of uncompressed blocks of data. Meaningful only for processing many of very short queries. -->
            <use_uncompressed_cache>0</use_uncompressed_cache>

            <!-- How to choose between replicas during distributed query processing.
                 random - choose random replica from set of replicas with minimum number of errors
                 nearest_hostname - from set of replicas with minimum number of errors, choose replica
                  with minimum number of different symbols between replica's hostname and local hostname
                  (Hamming distance).
                 in_order - first live replica is chosen in specified order.
                 first_or_random - if first replica one has higher number of errors, pick a random one from replicas with minimum number of errors.
            -->
            <load_balancing>random</load_balancing>
        </default>

        <openapi>
            <!-- Maximum memory usage for processing single query, in bytes. -->
            <max_memory_usage>10000000000</max_memory_usage>

            <!-- Use cache of uncompressed blocks of data. Meaningful only for processing many of very short queries. -->
            <use_uncompressed_cache>0</use_uncompressed_cache>

            <!-- How to choose between replicas during distributed query processing.
                 random - choose random replica from set of replicas with minimum number of errors
                 nearest_hostname - from set of replicas with minimum number of errors, choose replica
                  with minimum number of different symbols between replica's hostname and local hostname
                  (Hamming distance).
                 in_order - first live replica is chosen in specified order.
                 first_or_random - if first replica one has higher number of errors, pick a random one from replicas with minimum number of errors.
            -->
            <load_balancing>random</load_balancing>
        </openapi>

        <!-- Profile that allows only read queries. -->
        <readonly>
            <readonly>1</readonly>
        </readonly>
    </profiles>

    <!-- Users and ACL. -->
    <users>
        <!-- If user name was not specified, 'default' user is used. -->
        <default>
            <!-- Password could be specified in plaintext or in SHA256 (in hex format).

                 If you want to specify password in plaintext (not recommended), place it in 'password' element.
                 Example: <password>qwerty</password>.
                 Password could be empty.

                 If you want to specify SHA256, place it in 'password_sha256_hex' element.
                 Example: <password_sha256_hex>65e84be33532fb784c48129675f9eff3a682b27168c0ea744b2cf58ee02337c5</password_sha256_hex>
                 Restrictions of SHA256: impossibility to connect to ClickHouse using MySQL JS client (as of July 2019).

                 If you want to specify double SHA1, place it in 'password_double_sha1_hex' element.
                 Example: <password_double_sha1_hex>e395796d6546b1b65db9d665cd43f0e858dd4303</password_double_sha1_hex>

                 If you want to specify a previously defined LDAP server (see 'ldap_servers' in main config) for authentication, place its name in 'server' element inside 'ldap' element.
                 Example: <ldap><server>my_ldap_server</server></ldap>

                 How to generate decent password:
                 Execute: PASSWORD=$(base64 < /dev/urandom | head -c8); echo "$PASSWORD"; echo -n "$PASSWORD" | sha256sum | tr -d '-'
                 In first line will be password and in second - corresponding SHA256.

                 How to generate double SHA1:
                 Execute: PASSWORD=$(base64 < /dev/urandom | head -c8); echo "$PASSWORD"; echo -n "$PASSWORD" | sha1sum | tr -d '-' | xxd -r -p | sha1sum | tr -d '-'
                 In first line will be password and in second - corresponding double SHA1.
            -->
            <password>ecsb_crb#CLICKHOUSE2021</password>

            <!-- List of networks with open access.

                 To open access from everywhere, specify:
                    <ip>::/0</ip>

                 To open access only from localhost, specify:
                    <ip>::1</ip>
                    <ip>127.0.0.1</ip>

                 Each element of list has one of the following forms:
                 <ip> IP-address or network mask. Examples: 213.180.204.3 or 10.0.0.1/8 or 10.0.0.1/255.255.255.0
                     2a02:6b8::3 or 2a02:6b8::3/64 or 2a02:6b8::3/ffff:ffff:ffff:ffff::.
                 <host> Hostname. Example: server01.yandex.ru.
                     To check access, DNS query is performed, and all received addresses compared to peer address.
                 <host_regexp> Regular expression for host names. Example, ^server\d\d-\d\d-\d\.yandex\.ru$
                     To check access, DNS PTR query is performed for peer address and then regexp is applied.
                     Then, for result of PTR query, another DNS query is performed and all received addresses compared to peer address.
                     Strongly recommended that regexp is ends with $
                 All results of DNS requests are cached till server restart.
            -->
            <networks incl="networks" replace="replace">
                <ip>::/0</ip>
            </networks>

            <!-- Settings profile for user. -->
            <profile>default</profile>

            <!-- Quota for user. -->
            <quota>default</quota>

            <!-- User can create other users and grant rights to them. -->
            <!-- <access_management>1</access_management> -->
        </default>

        <openapi>
            <!-- Password could be specified in plaintext or in SHA256 (in hex format).

                 If you want to specify password in plaintext (not recommended), place it in 'password' element.
                 Example: <password>qwerty</password>.
                 Password could be empty.

                 If you want to specify SHA256, place it in 'password_sha256_hex' element.
                 Example: <password_sha256_hex>65e84be33532fb784c48129675f9eff3a682b27168c0ea744b2cf58ee02337c5</password_sha256_hex>
                 Restrictions of SHA256: impossibility to connect to ClickHouse using MySQL JS client (as of July 2019).

                 If you want to specify double SHA1, place it in 'password_double_sha1_hex' element.
                 Example: <password_double_sha1_hex>e395796d6546b1b65db9d665cd43f0e858dd4303</password_double_sha1_hex>

                 If you want to specify a previously defined LDAP server (see 'ldap_servers' in main config) for authentication, place its name in 'server' element inside 'ldap' element.
                 Example: <ldap><server>my_ldap_server</server></ldap>

                 How to generate decent password:
                 Execute: PASSWORD=$(base64 < /dev/urandom | head -c8); echo "$PASSWORD"; echo -n "$PASSWORD" | sha256sum | tr -d '-'
                 In first line will be password and in second - corresponding SHA256.

                 How to generate double SHA1:
                 Execute: PASSWORD=$(base64 < /dev/urandom | head -c8); echo "$PASSWORD"; echo -n "$PASSWORD" | sha1sum | tr -d '-' | xxd -r -p | sha1sum | tr -d '-'
                 In first line will be password and in second - corresponding double SHA1.
            -->
            <password>CK#password@2000</password>

            <!-- List of networks with open access.

                 To open access from everywhere, specify:
                    <ip>::/0</ip>

                 To open access only from localhost, specify:
                    <ip>::1</ip>
                    <ip>127.0.0.1</ip>

                 Each element of list has one of the following forms:
                 <ip> IP-address or network mask. Examples: 213.180.204.3 or 10.0.0.1/8 or 10.0.0.1/255.255.255.0
                     2a02:6b8::3 or 2a02:6b8::3/64 or 2a02:6b8::3/ffff:ffff:ffff:ffff::.
                 <host> Hostname. Example: server01.yandex.ru.
                     To check access, DNS query is performed, and all received addresses compared to peer address.
                 <host_regexp> Regular expression for host names. Example, ^server\d\d-\d\d-\d\.yandex\.ru$
                     To check access, DNS PTR query is performed for peer address and then regexp is applied.
                     Then, for result of PTR query, another DNS query is performed and all received addresses compared to peer address.
                     Strongly recommended that regexp is ends with $
                 All results of DNS requests are cached till server restart.
            -->
            <networks incl="networks" replace="replace">
                <ip>::/0</ip>
            </networks>

            <!-- Settings profile for user. -->
            <profile>default</profile>

            <!-- Quota for user. -->
            <quota>default</quota>

            <!-- User can create other users and grant rights to them. -->
            <!-- <access_management>1</access_management> -->
        </openapi>
    </users>

    <!-- Quotas. -->
    <quotas>
        <!-- Name of quota. -->
        <default>
            <!-- Limits for time interval. You could specify many intervals with different limits. -->
            <interval>
                <!-- Length of interval. -->
                <duration>3600</duration>

                <!-- No limits. Just calculate resource usage for time interval. -->
                <queries>0</queries>
                <errors>0</errors>
                <result_rows>0</result_rows>
                <read_rows>0</read_rows>
                <execution_time>0</execution_time>
            </interval>
        </default>
    </quotas>
</yandex>
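The comments in users.xml advise against plaintext passwords and describe the `password_sha256_hex` element as an alternative. A minimal sketch of producing that value from the shell, assuming `sha256sum` (GNU coreutils) and `awk` are available; the function name `ck_password_sha256` is made up for this example:

```shell
# Hypothetical helper: print the SHA-256 hex digest of a password,
# suitable for the <password_sha256_hex> element in users.xml.
ck_password_sha256() {
    # printf avoids the trailing newline that a plain echo would hash as well;
    # awk keeps only the digest and drops the "-" file marker from sha256sum.
    printf '%s' "$1" | sha256sum | awk '{print $1}'
}

# Example call with the sample password used in the config comments
ck_password_sha256 'qwerty'
```

The printed digest would go into `<password_sha256_hex>…</password_sha256_hex>` in place of the plaintext `<password>` element for that user.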

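6. Copy the configuration files to the other machines

# Local machine to a remote machine

[root@localhost clickhouse]# scp -r /etc/clickhouse-server/* root@10.130.149.33:/etc/clickhouse-server

# Remote machine to the local machine

[root@localhost clickhouse]# scp root@10.130.149.58:/etc/clickhouse-server/* /etc/clickhouse-server

# After copying, adjust the <macros> values (shard, replica) on each machine, since they must be unique per node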
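Since the macros section has to differ on every node, a copied configuration still needs a per-node edit. The sketch below prints the `<macros>` fragment for each host; it assumes the three hosts cdh01, cdh02 and cdh03 from method 1 with one shard per node, and the helper name `render_macros` is hypothetical.

```shell
# Hypothetical helper: print the <macros> block for one node.
# $1 = shard number as a plain integer (1, 2, 3, ...), $2 = replica name.
render_macros() {
    printf '<macros>\n'
    printf '    <shard>%02d</shard>\n' "$1"
    printf '    <replica>%s</replica>\n' "$2"
    printf '</macros>\n'
}

# One shard per node, using the hostname as the replica name.
i=1
for host in cdh01 cdh02 cdh03; do
    echo "# macros for ${host}"
    render_macros "$i" "$host"
    i=$((i + 1))
done
```

Each printed fragment would replace the `<macros>` section in that node's config.xml (or metrika.xml when method 2 is used).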

7. Generate the key files

This step is optional.

[root@localhost clickhouse-server]# openssl req -subj "/CN=localhost" -new -newkey rsa:2048 -days 365 -nodes -x509 -keyout /etc/clickhouse-server/server.key -out /etc/clickhouse-server/server.crt

[root@localhost clickhouse-server]# openssl dhparam -out /etc/clickhouse-server/dhparam.pem 4096

8. Start the ClickHouse service

[root@localhost clickhouse]# su clickhouse

[clickhouse@localhost clickhouse]$ clickhouse-server start

9. Log in with the client

# clickhouse-client --host=cdh02 --port=9002 -m

[clickhouse@localhost clickhouse]$ clickhouse-client --user openapi --password 'CK#password@2000' --multiline

# Check the cluster status

localhost.novalocal :) select * from system.clusters;

# Verify the ZooKeeper connection

localhost.novalocal :) SELECT * FROM system.zookeeper WHERE path = '/clickhouse';

# Exit

localhost.novalocal :) exit;
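The cluster check can also be scripted instead of being read by eye. The following is a rough sketch under stated assumptions: the helper name `missing_cluster_hosts` is invented, and it expects tab-separated rows of cluster, shard_num and host_name on standard input, such as the output of a `system.clusters` query in TSV format. The sample rows below are made up and stand in for real server output.

```shell
# Hypothetical helper: read rows "cluster<TAB>shard_num<TAB>host_name" on stdin
# and print every expected host that does not appear for the given cluster.
# $1 = cluster name, remaining arguments = expected host names.
missing_cluster_hosts() {
    cluster="$1"; shift
    rows="$(cat)"
    for host in "$@"; do
        # awk exits 0 when a row matches both cluster and host, 1 otherwise
        printf '%s\n' "$rows" | awk -F '\t' -v c="$cluster" -v h="$host" \
            '$1 == c && $3 == h { found = 1 } END { exit (found ? 0 : 1) }' \
            || echo "$host"
    done
}

# Sample input standing in for the output of:
#   clickhouse-client --query "SELECT cluster, shard_num, host_name FROM system.clusters FORMAT TSV"
printf 'c1\t1\tcdh01\nc1\t2\tcdh02\n' | missing_cluster_hosts c1 cdh01 cdh02 cdh03
```

With the sample rows, only cdh03 is reported as missing. An empty result means every expected host is listed for the cluster.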


相关文章:

【Linux】ClickHouse 部署

搭建Clickhouse集群时,需要使用Zookeeper去实现集群副本之间的同步,所以需要先搭建zookeeper集群 1、卸载 # 检查有哪些clickhouse依赖包: [rootlocalhost ~]# yum list installed | grep clickhouse# 移除依赖包: [rootlocalho…...

js的小知识

以下是一些 JavaScript 的小知识点,适合不同水平的开发者: 1. 变量声明 使用 let、const 和 var 声明变量。let 和 const 块级作用域,而 var 是函数作用域。const 声明的变量不可重新赋值,但对象的属性仍然可以修改。 2. 箭头函…...

一些swift问题

写得比较快,如果有问题请私信。 序列化和反序列化 反序列化的jsonString2只是给定的任意json字符串 private func p_testDecodeTable() {let arr ["recordID123456", "recordID2"]// 序列化[string] -> json datalet jsonData try? JSO…...

Nginx安装配置详解

Nginx Nginx官网 Tengine翻译的Nginx中文文档 轻量级的Web服务器,主要有反向代理、负载均衡的功能。 能够支撑5万的并发量,运行时内存和CPU占用低,配置简单,运行稳定。 写在前 uWSGI与Nginx的关系 1. 安装 Windows 官网 Stabl…...

汽车免拆诊断案例 | 2010款起亚赛拉图车发动机转速表指针不动

故障现象 一辆2010款起亚赛拉图车,搭载G4ED 发动机,累计行驶里程约为17.2万km。车主反映,车辆行驶正常,但组合仪表上的发动机转速表指针始终不动。 故障诊断 接车后进行路试,车速表、燃油存量表及发动机冷却温度…...

在ubuntu上安装最新版的clang

方法一: 执行如下的命令: # 下载安装脚本wget https://apt.llvm.org/llvm.sh chmod x llvm.sh # 开始下载, 输入需要安装的版本号。 sudo ./llvm.sh <version number>方法二 添加软件下载源。 请根据自己的Ubuntu系统版本添加&…...

使用Django REST framework构建RESTful API

使用Django REST framework构建RESTful API Django REST framework简介 安装Django REST framework 创建Django项目 创建Django应用 配置Django项目 创建模型 迁移数据库 创建序列化器 创建视图 配置URL 配置全局URL 配置认证和权限 测试API 使用Postman测试API 分页 过滤和排序…...

「Mac畅玩鸿蒙与硬件14」鸿蒙UI组件篇4 - Toggle 和 Checkbox 组件

在鸿蒙开发中,Toggle 和 Checkbox 是常用的交互组件,分别用于实现开关切换和多项选择。Toggle 提供多种类型以适应不同场景,而 Checkbox 支持自定义样式及事件回调。本篇将详细介绍这两个组件的基本用法,并通过实战展示它们的组合应用。 关键词 Toggle 组件Checkbox 组件开…...

Kotlin协程suspend的理解

suspend修饰符,它可以告诉编译器,该函数需要在协程中执行。作为开发者,您可以把挂起函数看作是普通函数,只不过它可能会在某些时刻挂起和恢复而已。协程的挂起就是退出方法,等到一定条件到来会重新执行这个方法&#x…...

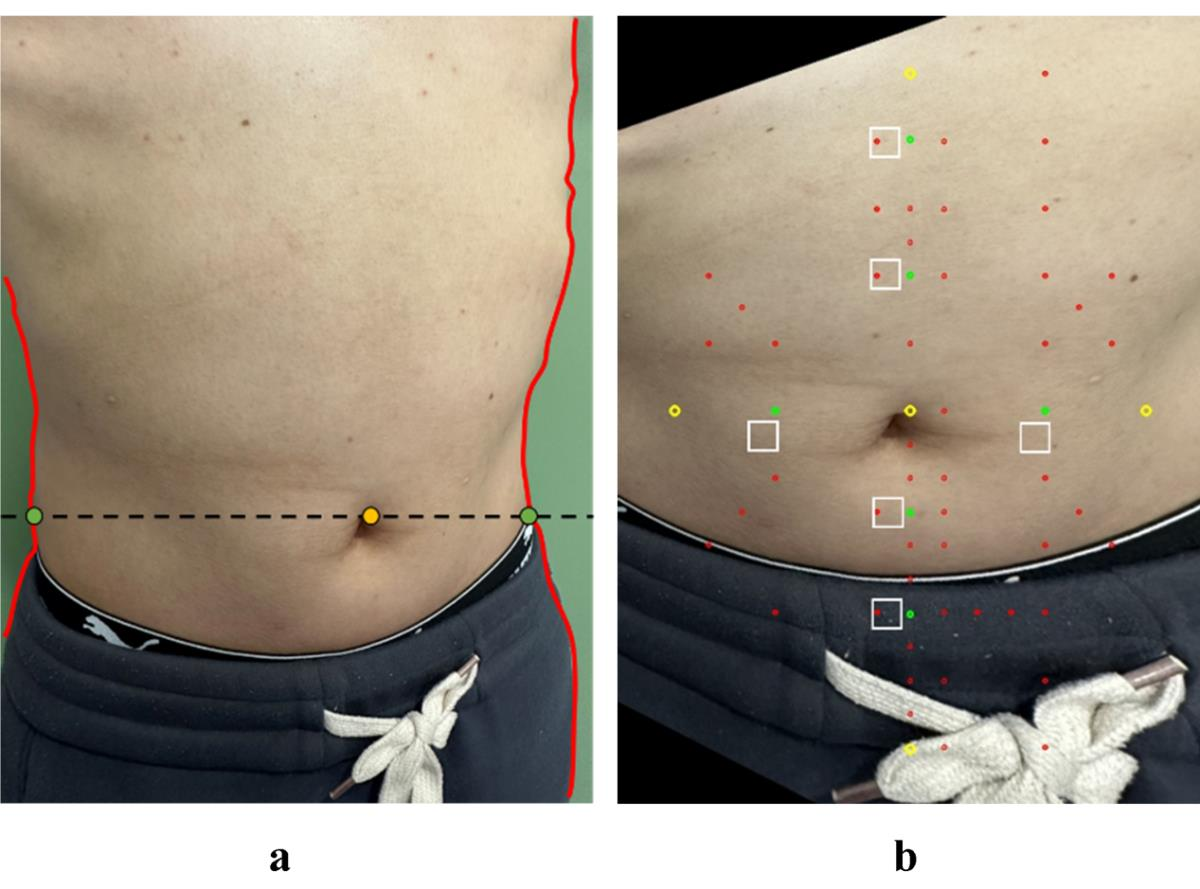

基于AI深度学习的中医针灸实训室腹针穴位智能辅助定位系统开发

在中医针灸的传统治疗中,穴位取穴的精确度对于治疗效果至关重要。然而,传统的定位方法,如体表标志法、骨度折量法和指寸法,由于观察角度、个体差异(如人体姿态和皮肤纹理)以及环境因素的干扰,往…...

51单片机教程(二)- 创建项目

1 创建项目 创建项目存储文件夹:C51Project 打开Keil5软件,选择 Project -> New uVision Project: 选择项目路径,即刚才创建的文件夹 选择芯片,选择 Microchip(微型集成电路)࿰…...

Rust 图形界面开发——使用 GTK 创建跨平台 GUI

第五章 图形界面开发 第一节 使用 GTK 创建跨平台 GUI GTK(GIMP Toolkit)是一个流行的开源跨平台图形用户界面库,适用于创建桌面应用程序。结合 Rust 的 gtk-rs 库,开发者能够高效地构建现代化 GUI 应用。本节将详细探讨 GTK 的…...

Hellinger Distance(赫林格距离)

Hellinger Distance(赫林格距离)是一种用于衡量两个概率分布相似度的距离度量。它通常用于概率统计、信息论和机器学习中,以评估两个分布之间的相似性。Hellinger距离的值介于0和1之间,其中0表示两个分布完全相同,1表示…...

【系统架构设计师】七、设计模式

7.1 设计模式概述 设计经验在实践者之间日益广泛地利用,描述这些共同问题和解决这些问题的方案就形成了所谓的模式。 7.1.1 设计模式的历史 建筑师Christopher Alexander首先提出了模式概念,他将模式分为了三个部分: 特定的情景ÿ…...

新工具可绕过 Google Chrome 的新 Cookie 加密系统

一位研究人员发布了一款工具,用于绕过 Google 新推出的 App-Bound 加密 cookie 盗窃防御措施并从 Chrome 网络浏览器中提取已保存的凭据。 这款工具名为“Chrome-App-Bound-Encryption-Decryption”,由网络安全研究员亚历山大哈格纳 (Alexander Hagenah…...

模型拆解(三):EGNet、FMFINet、MJRBM

文章目录 一、EGNet1.1编码器:VGG16的扩展网络 二、EMFINet2.1编码器:三分支并行卷积编码器2.2CFFM:级联特征融合模块2.3Edge Module:突出边缘提取模块2.4Bridge Module:桥接器2.5解码器:深度特征融合解码器…...

齐次线性微分方程的解的性质与结构

内容来源 常微分方程(第四版) (王高雄,周之铭,朱思铭,王寿松) 高等教育出版社 齐次线性微分方程定义 d n x d t n a 1 ( t ) d n − 1 x d t n − 1 ⋯ a n − 1 ( t ) d x d t a n ( t ) x 0 \frac{\mathrm{d}^nx}{\mathrm{d}t^n} a_1(t)\frac{\mathrm{d}^{n-1}x}{\math…...

Python-Celery-基础用法总结-安装-配置-启动

文章目录 1.安装 Celery2.配置 Celery3.启动 Worker4.调用任务5.任务装饰器选项6.任务状态7.定期任务8.高级特性9.监控和管理 Celery 是一个基于分布式消息传递的异步任务队列。它专注于实时操作,但也支持调度。Celery 可以与 Django, Flask, Pyramid 等 Web 框架集…...

- 2024最新版前端秋招面试短期突击面试题【100道】)

vue中的nextTick() - 2024最新版前端秋招面试短期突击面试题【100道】

nextTick() - 2024最新版前端秋招面试短期突击面试题【100道】 🔄 在Vue.js中,nextTick 是一个重要的方法,用于在下次DOM更新循环结束之后执行回调函数。理解 nextTick 的原理和用法可以帮助你更好地处理DOM更新和异步操作。以下是关于 next…...

5G学习笔记三之物理层、数据链路层、RRC层协议

5G学习笔记三之物理层、数据链路层、RRC层协议 物理层位于无线接口协议栈的最底层,作用:提供了物理介质中比特流传输所需要的所有功能。 1.3.1 传输信道的类型 物理层为MAC层和更高层提供信息传输的服务,其中,物理层提供的服务…...

Claude帮用户找回40万美元Bitcoin:AI在密码破解上真正擅长的是什么?

一名美国男子在2013年买了5个BTC,2015年在醉酒后修改钱包密码,忘记了新密码。 11年后,他用Claude找回了价值40万美元的资产。 网友:AI真的很神奇。 但很少有人问这个问题:Claude到底是怎么做到的,以及更重要…...

C51可重入函数原理与实践指南

1. 理解C51中的可重入函数概念 在8051单片机开发中,可重入函数(Reentrant Function)是一个关键但常被误解的概念。与通用计算机上的C语言开发不同,由于8051架构的特殊限制,标准C51函数默认都是不可重入的。这源于8051硬件设计的几个固有特点&…...

处理智能体的不确定性:重试、回退与人工介入

一个让AI“不任性”的实战手册——该认错时认错,该求助时求助先讲一个让我至今心有余悸的事。 去年做的一个金融Agent,任务是每天自动从十几家券商网站抓取研报,提取关键的投资评级和目标价,然后汇总成一张表发给基金经理。上线跑…...

5G 网络优化工程师是骗局吗?从业15年资深老工程师实话实说

01 5G 网优岗位,本身真实靠谱很多人一刷到 5G 网络优化工程师这个岗位,第一反应都是犹豫、怀疑:这到底是不是收割小白的骗局?我在通信行业深耕整整 15 年,也拿到过华为高级工程师认证,今天以业内老兵的身份…...

AI工程师实战技能树:从特征工程到MLOps的完整指南

1. 项目概述与核心价值最近在GitHub上看到一个挺有意思的仓库,叫tqviet1978/ai-skills。光看名字,你可能会觉得这又是一个关于AI技能学习的普通教程合集。但当我点进去仔细研究后,发现它的定位和内容组织方式,与市面上大多数“AI学…...

终极指南:在Windows上安装安卓应用的简单解决方案

终极指南:在Windows上安装安卓应用的简单解决方案 【免费下载链接】APK-Installer An Android Application Installer for Windows 项目地址: https://gitcode.com/GitHub_Trending/ap/APK-Installer 你是否曾经希望在Windows电脑上直接运行手机应用…...

从零打造专属机械键盘:基于CircuitPython的USB HID输入设备实践

1. 项目概述:打造你的专属“一键”键盘如果你对市面上千篇一律的键盘感到厌倦,或者一直想亲手制作一个独一无二的输入设备,那么这个项目就是为你准备的。今天,我们不谈那些复杂的全尺寸客制化键盘,而是从一个精巧、有趣…...

开源轻量CRM系统skill-twenty-crm技术解析与全栈部署指南

1. 项目概述与核心价值最近在GitHub上看到一个挺有意思的项目,叫devchaudhary24k/skill-twenty-crm。光看这个名字,你可能会有点懵,这“Skill Twenty CRM”到底是个啥?作为一个在软件开发和团队协作领域摸爬滚打多年的老手&#x…...

高校图书馆未公开的Perplexity学术协议:解锁DOI深度解析、跨库引文追踪与灰色文献捕获权限

更多请点击: https://codechina.net 第一章:高校图书馆未公开的Perplexity学术协议全景解析 Perplexity学术协议并非官方发布的标准规范,而是国内部分高校图书馆在采购或对接Perplexity Pro教育版API服务时,经谈判形成的定制化协…...

Borderless Gaming终极指南:如何轻松实现无边框游戏窗口管理

Borderless Gaming终极指南:如何轻松实现无边框游戏窗口管理 【免费下载链接】Borderless-Gaming Play your favorite games in a borderless window; no more time consuming alt-tabs. 项目地址: https://gitcode.com/gh_mirrors/bo/Borderless-Gaming 你…...