Kafka To HBase To Hive

目录

1.在HBase中创建表

2.写入API

2.1普通模式写入hbase(逐条写入)

2.2普通模式写入hbase(buffer写入)

2.3设计模式写入hbase(buffer写入)

3.HBase表映射至Hive中

1.在HBase中创建表

hbase(main):003:0> create_namespace 'events_db'

hbase(main):004:0> create 'events_db:users','profile','region','registration'

hbase(main):005:0> create 'events_db:user_friend','uf'

hbase(main):006:0> create 'events_db:events','schedule','location','creator','remark'

hbase(main):007:0> create 'events_db:event_attendee','euat'

hbase(main):008:0> create 'events_db:train','eu'

hbase(main):011:0> list_namespace_tables 'events_db'

TABLE

event_attendee

events

train

user_friend

users

5 row(s)

2.写入API

2.1普通模式写入hbase(逐条写入)

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.HConstants;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import org.apache.hadoop.hbase.client.Put;

import org.apache.hadoop.hbase.client.Table;

import org.apache.hadoop.hbase.util.Bytes;

import org.apache.kafka.clients.consumer.ConsumerConfig;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import org.apache.kafka.common.serialization.StringDeserializer;import java.io.IOException;

import java.time.Duration;import java.util.ArrayList;

import java.util.Collections;

import java.util.Properties;/*** 将Kafka中的topic为userfriends中的数据消费到hbase中* hbase中的表为events_db:user_friend*/

public class UserFriendToHB {static int num = 0; //计数器public static void main(String[] args) {Properties properties = new Properties();properties.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "kb129:9092");properties.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);properties.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG,StringDeserializer.class);properties.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG,"earliest");properties.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG,"false");properties.put(ConsumerConfig.GROUP_ID_CONFIG, "user_friend_group1");KafkaConsumer<String, String> consumer = new KafkaConsumer<>(properties);consumer.subscribe(Collections.singleton("userfriends"));//配置hbase信息,连接hbase数据库Configuration conf = HBaseConfiguration.create();conf.set(HConstants.HBASE_DIR, "hdfs://kb129:9000/hbase");conf.set(HConstants.ZOOKEEPER_QUORUM, "kb129");conf.set(HConstants.CLIENT_PORT_STR, "2181");Connection connection = null;try {connection = ConnectionFactory.createConnection(conf);Table ufTable = connection.getTable(TableName.valueOf("events_db:user_friend"));ArrayList<Put> datas = new ArrayList<>();while (true){ConsumerRecords<String, String> poll = consumer.poll(Duration.ofMillis(100));//每次for循环前清空datasdatas.clear();for (ConsumerRecord<String, String> record : poll) {//System.out.println(record.value());String[] split = record.value().split(",");int i = (split[0] + split[1]).hashCode();Put put = new Put(Bytes.toBytes(i));put.addColumn(Bytes.toBytes("uf"), Bytes.toBytes("userid"), split[0].getBytes());put.addColumn("uf".getBytes(), "friend".getBytes(),split[1].getBytes());datas.add(put);}num = num + datas.size();System.out.println("---------num:" + num);if (datas.size() > 0){ufTable.put(datas);}try {Thread.sleep(10);} catch (InterruptedException e) {throw new RuntimeException(e);}}} catch (IOException e) {throw new RuntimeException(e);}}

}

2.2普通模式写入hbase(buffer写入)

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.HConstants;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import org.apache.hadoop.hbase.util.Bytes;

import org.apache.kafka.clients.consumer.ConsumerConfig;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import org.apache.kafka.common.serialization.StringDeserializer;import java.io.IOException;

import java.time.Duration;

import java.util.ArrayList;

import java.util.Collections;

import java.util.Properties;/*** 将Kafka中的topic为userfriends中的数据消费到hbase中* hbase中的表为events_db:user_friend*/

public class UserFriendToHB2 {static int num = 0; //计数器public static void main(String[] args) {Properties properties = new Properties();properties.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "kb129:9092");properties.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);properties.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG,StringDeserializer.class);properties.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG,"earliest");properties.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG,"false");properties.put(ConsumerConfig.GROUP_ID_CONFIG, "user_friend_group1");KafkaConsumer<String, String> consumer = new KafkaConsumer<>(properties);consumer.subscribe(Collections.singleton("userfriends"));//配置hbase信息,连接hbase数据库Configuration conf = HBaseConfiguration.create();conf.set(HConstants.HBASE_DIR, "hdfs://kb129:9000/hbase");conf.set(HConstants.ZOOKEEPER_QUORUM, "kb129");conf.set(HConstants.CLIENT_PORT_STR, "2181");Connection connection = null;try {connection = ConnectionFactory.createConnection(conf);BufferedMutatorParams bufferedMutatorParams = new BufferedMutatorParams(TableName.valueOf("events_db:user_friend"));bufferedMutatorParams.setWriteBufferPeriodicFlushTimeoutMs(10000);//设置超时flush时间最大值bufferedMutatorParams.writeBufferSize(10*1024*1024);//设置缓存大小flushBufferedMutator bufferedMutator = connection.getBufferedMutator(bufferedMutatorParams) ;ArrayList<Put> datas = new ArrayList<>();while (true){ConsumerRecords<String, String> poll = consumer.poll(Duration.ofMillis(100));datas.clear(); //每次for循环前清空datasfor (ConsumerRecord<String, String> record : poll) {//System.out.println(record.value());String[] split = record.value().split(",");int i = (split[0] + split[1]).hashCode();Put put = new Put(Bytes.toBytes(i));put.addColumn(Bytes.toBytes("uf"), Bytes.toBytes("userid"), split[0].getBytes());put.addColumn("uf".getBytes(), "friend".getBytes(),split[1].getBytes());datas.add(put);}num = num + datas.size();System.out.println("---------num:" + num);if (datas.size() > 0){bufferedMutator.mutate(datas);}}} catch (IOException e) {throw new RuntimeException(e);}}

}

2.3设计模式写入hbase(buffer写入)

(1)Iworker接口

public interface IWorker {void fillData(String targetName);

}(2)worker实现类

import nj.zb.kb23.kafkatohbase.oop.writer.IWriter;

import org.apache.kafka.clients.consumer.ConsumerConfig;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import org.apache.kafka.common.serialization.StringDeserializer;import java.time.Duration;

import java.util.Collections;

import java.util.Properties;public class Worker implements IWorker {private KafkaConsumer<String, String> consumer = null;private IWriter writer = null;public Worker(String topicName, String consumerGroupId, IWriter writer) {this.writer = writer;Properties properties = new Properties();properties.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "kb129:9092");properties.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);properties.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);properties.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");properties.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, "false");properties.put(ConsumerConfig.GROUP_ID_CONFIG, consumerGroupId);consumer = new KafkaConsumer<>(properties);consumer.subscribe(Collections.singleton(topicName));}@Overridepublic void fillData(String targetName) {int num = 0;while (true) {ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(100));int returnNum = writer.write(targetName, records);num += returnNum;System.out.println("---------num:" + num);}}

}

(3)IWriter接口

import org.apache.kafka.clients.consumer.ConsumerRecords;/*** 完成kafka消费出的数据 ConsumerRecords 的组装和写入到指定类型的数据库 指定table 的工作*/

public interface IWriter {int write(String targetTableName, ConsumerRecords<String, String> records);

}(4)writer实现类

import nj.zb.kb23.kafkatohbase.oop.handler.IParseRecord;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.HConstants;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import org.apache.kafka.clients.consumer.ConsumerRecords;import java.io.IOException;

import java.util.List;public class HBaseWriter implements IWriter{private Connection connection = null;private BufferedMutator bufferedMutator = null;private IParseRecord handler = null;/*** 初始化HBaseWriter对象*/public HBaseWriter(IParseRecord handler) {this.handler = handler;Configuration conf = HBaseConfiguration.create();conf.set(HConstants.HBASE_DIR, "hdfs://kb129:9000/hbase");conf.set(HConstants.ZOOKEEPER_QUORUM, "kb129");conf.set(HConstants.CLIENT_PORT_STR, "2181");try {connection = ConnectionFactory.createConnection(conf);} catch (IOException e) {throw new RuntimeException(e);}}private void getBufferedMutator(String targetTableName){BufferedMutatorParams bufferedMutatorParams = new BufferedMutatorParams(TableName.valueOf(targetTableName));bufferedMutatorParams.setWriteBufferPeriodicFlushTimeoutMs(10000);//设置超时flush时间最大值bufferedMutatorParams.writeBufferSize(10*1024*1024);//设置缓存大小flushif (bufferedMutator == null){try {bufferedMutator = connection.getBufferedMutator(bufferedMutatorParams);} catch (IOException e) {throw new RuntimeException(e);}}}@Overridepublic int write(String targetTableName, ConsumerRecords<String, String> records) {if (records.count() > 0) {this.getBufferedMutator(targetTableName);List<Put> datas = handler.parse(records);try {bufferedMutator.mutate(datas);} catch (IOException e) {throw new RuntimeException(e);}return datas.size();}else {return 0;}}

}

(5)IParseRecord接口

import org.apache.hadoop.hbase.client.Put;

import org.apache.kafka.clients.consumer.ConsumerRecords;import java.util.List;/*** 将record 装配成 put*/

public interface IParseRecord {List<Put> parse(ConsumerRecords<String, String> records);

}(6)具体表对应的handler类(包装Put)

UsersHandler

import org.apache.hadoop.hbase.client.Put;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;import java.util.ArrayList;

import java.util.List;public class UsersHandler implements IParseRecord{List<Put> datas = new ArrayList<>();@Overridepublic List<Put> parse(ConsumerRecords<String, String> records) {datas.clear();for (ConsumerRecord<String, String> record : records) {String[] users = record.value().split(",");Put put = new Put(users[0].getBytes());put.addColumn("profile".getBytes(), "birthyear".getBytes(), users[2].getBytes());put.addColumn("profile".getBytes(), "gender".getBytes(), users[3].getBytes());put.addColumn("region".getBytes(), "locale".getBytes(), users[1].getBytes());if (users.length > 4){put.addColumn("registration".getBytes(), "joinedAt".getBytes(), users[4].getBytes());}if (users.length > 5){put.addColumn("region".getBytes(), "location".getBytes(), users[5].getBytes());}if (users.length > 6){put.addColumn("region".getBytes(), "timezone".getBytes(), users[6].getBytes());}datas.add(put);}return datas;}

}

TrainHandler

import org.apache.hadoop.hbase.client.Put;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;import java.util.ArrayList;

import java.util.List;public class TrainHandler implements IParseRecord{List<Put> datas = new ArrayList<>();@Overridepublic List<Put> parse(ConsumerRecords<String, String> records) {datas.clear();for (ConsumerRecord<String, String> record : records) {String[] trains = record.value().split(",");double random = Math.random();Put put = new Put((trains[0]+trains[1]+random).getBytes());put.addColumn("eu".getBytes(), "user".getBytes(), trains[0].getBytes());put.addColumn("eu".getBytes(), "event".getBytes(), trains[1].getBytes());put.addColumn("eu".getBytes(), "invited".getBytes(), trains[2].getBytes());put.addColumn("eu".getBytes(), "timestamp".getBytes(), trains[3].getBytes());put.addColumn("eu".getBytes(), "interested".getBytes(), trains[4].getBytes());put.addColumn("eu".getBytes(), "not_interested".getBytes(), trains[5].getBytes());datas.add(put);}return datas;}

}

EventsHandler

import org.apache.hadoop.hbase.client.Put;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;import java.util.ArrayList;

import java.util.List;public class EventsHandler implements IParseRecord {List<Put> datas = new ArrayList<>();@Overridepublic List<Put> parse(ConsumerRecords<String, String> records) {datas.clear();for (ConsumerRecord<String, String> record : records) {String[] events = record.value().split(",");Put put = new Put(events[0].getBytes());put.addColumn("creator".getBytes(), "user_id".getBytes(),events[1].getBytes());put.addColumn("schedule".getBytes(), "start_time".getBytes(),events[2].getBytes());put.addColumn("location".getBytes(), "city".getBytes(),events[3].getBytes());put.addColumn("location".getBytes(), "state".getBytes(),events[4].getBytes());put.addColumn("location".getBytes(), "zip".getBytes(),events[5].getBytes());put.addColumn("location".getBytes(), "country".getBytes(),events[6].getBytes());put.addColumn("location".getBytes(), "lat".getBytes(),events[7].getBytes());put.addColumn("location".getBytes(), "lng".getBytes(),events[8].getBytes());put.addColumn("remark".getBytes(), "common_words".getBytes(),events[9].getBytes());datas.add(put);}return datas;}

}

EventAttendHandler

import org.apache.hadoop.hbase.client.Put;

import org.apache.hadoop.hbase.util.Bytes;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;import java.util.ArrayList;

import java.util.List;public class EventAttendHandler implements IParseRecord{@Overridepublic List<Put> parse(ConsumerRecords<String, String> records) {List<Put> datas = new ArrayList<>();for (ConsumerRecord<String, String> record : records) {String[] splits = record.value().split(",");Put put = new Put((splits[0] + splits[1] + splits[2]).getBytes());put.addColumn(Bytes.toBytes("euat"), Bytes.toBytes("eventid"), splits[0].getBytes());put.addColumn("euat".getBytes(), "friendid".getBytes(),splits[1].getBytes());put.addColumn("euat".getBytes(), "state".getBytes(),splits[2].getBytes());datas.add(put);}return datas;}

}

(7)主程序

import nj.zb.kb23.kafkatohbase.oop.handler.*;

import nj.zb.kb23.kafkatohbase.oop.worker.Worker;

import nj.zb.kb23.kafkatohbase.oop.writer.HBaseWriter;

import nj.zb.kb23.kafkatohbase.oop.writer.IWriter;

/*** 将Kafka中的topic为...中的数据消费到hbase中* hbase中的表为events_db:...*/

public class KfkToHbTest {static int num = 0; //计数器public static void main(String[] args) {//IParseRecord handler = new EventAttendHandler();//IWriter writer = new HBaseWriter(handler);//String topic = "eventattendees";//String consumerGroupId = "eventattendees_group1";//String targetName = "events_db:event_attendee";//Worker worker = new Worker(topic, consumerGroupId, writer);//worker.fillData(targetName);/*EventsHandler eventsHandler = new EventsHandler();IWriter writer = new HBaseWriter(eventsHandler);Worker worker = new Worker("events", "events_group1", writer);worker.fillData("events_db:eventsb");*//*UsersHandler usersHandler = new UsersHandler();IWriter writer = new HBaseWriter(usersHandler);Worker worker = new Worker("users_raw", "users_group1", writer);worker.fillData("events_db:users");*/TrainHandler trainHandler = new TrainHandler();IWriter writer = new HBaseWriter(trainHandler);Worker worker = new Worker("train", "train_group1", writer);worker.fillData("events_db:train2");}

}

3.HBase表映射至Hive中

create database if not exists events;

use events;create external table hb_users(userId string,birthyear int,gender string,locale string,location string,timezone string,joinedAt string

)stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

with SERDEPROPERTIES ('hbase.columns.mapping'=':key,profile:birthyear,profile:gender,region:locale,region:location,region:timezone,registration:joinedAt')

tblproperties ('hbase.table.name'='events_db:users');select * from hb_users limit 3;

select count(1) from hb_users;--orc格式创建内部表存储映射外部表,安全保存数据,创建好可以直接删除hbase中的表

create table users stored as orc as select * from hb_users;

select * from users limit 3;

select count(1) from users;

drop table hb_users;--38209 1494

select count(*) from users where birthyear is null;select round(avg(birthyear), 0) from users;

select `floor`(avg(birthyear)) from users;-- 处理空字段,覆盖写入

withtb as ( select `floor`(avg(birthyear)) avgAge from users ),tb2 as ( select userId, nvl(birthyear, tb.avgAge),gender,locale,location,timezone,joinedAt from users,tb)

insert overwrite table users

select * from tb2;-- 查询到性别中空字符串109个

select count(gender) count from users where gender is null or gender = "";--------------------------------------------------------

create external table hb_events(event_id string,user_id string,start_time string,city string,state string,zip string,country string,lat float,lng float,common_words string

)stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

with SERDEPROPERTIES ('hbase.columns.mapping'=':key,creator:user_id,schedule:start_time,location:city,location:state,location:zip,location:country,location:lat,location:lng,remark:common_words')

tblproperties ('hbase.table.name'='events_db:events');select * from hb_events limit 10;

create table events stored as orc as select * from hb_events;

select count(*) from hb_events;

select count(*) from events;

drop table hb_events;select event_id from events group by event_id having count(event_id) >1;

withtb as (select event_id, row_number() over (partition by event_id) rn from events)

select event_id from tb where rn > 1;select user_id, count(event_id) num from events group by user_id order by num desc;-----------------------------------------------------

create external table if not exists hb_user_friend(row_key string,userid string,friendid string

)stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

with SERDEPROPERTIES ('hbase.columns.mapping'=':key,uf:userid,uf:friend')

tblproperties ('hbase.table.name'='events_db:user_friend');select * from hb_user_friend limit 3;

create table user_friend stored as orc as select * from hb_user_friend;

select count(*) from hb_user_friend;

select count(*) from user_friend;

drop table hb_user_friend;-----------------------------------------------------------

create external table if not exists hb_event_attendee(row_key string,eventid string,friendid string,attendtype string

)stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

with SERDEPROPERTIES ('hbase.columns.mapping'=':key,euat:eventid,euat:friendid,euat:state')

tblproperties ('hbase.table.name'='events_db:event_attendee');select * from hb_event_attendee limit 3;

select count(*) from hb_event_attendee;

create table event_attendee stored as orc as select * from hb_event_attendee;

select * from event_attendee limit 3;

select count(*) from event_attendee;

drop table hb_event_attendee;--------------------------------------------------------------

create external table if not exists hb_train(row_key string,userid string,eventid string,invited string,`timestamp` string,interested string

)stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

with SERDEPROPERTIES ('hbase.columns.mapping'=':key,eu:user,eu:event,eu:invited,eu:timestamp,eu:interested')

tblproperties ('hbase.table.name'='events_db:train');select * from hb_train limit 3;

select count(*) from hb_train;

create table train stored as orc as select * from hb_train;

select * from train limit 3;

select count(*) from train;

drop table hb_train;-----------------------------------------------

create external table locale(locale_id int,locale string

)

row format delimited fields terminated by '\t'

location '/events/data/locale';select * from locale;create external table time_zone(time_zone_id int,time_zone string

)

row format delimited fields terminated by ','

location '/events/data/timezone';select * from time_zone;相关文章:

Kafka To HBase To Hive

目录 1.在HBase中创建表 2.写入API 2.1普通模式写入hbase(逐条写入) 2.2普通模式写入hbase(buffer写入) 2.3设计模式写入hbase(buffer写入) 3.HBase表映射至Hive中 1.在HBase中创建表 hbase(main):00…...

python pandas.DataFrame 直接写入Clickhouse

import pandas as pd import sqlalchemy from clickhouse_sqlalchemy import Table, engines from sqlalchemy import create_engine, MetaData, Column import urllib.parsehost 1.1.1.1 user default password default db test port 8123 # http连接端口 engine create…...

德语中第二虚拟式在主动态的形式,柯桥哪里可以学德语

德语中第二虚拟式在主动态的形式 1. 对于大多数的动词,一般使用这样的一般现在时时态: wrde 动词原形 例句:Wenn es nicht so viel kosten wrde, wrde ich mir ein Haus am Meer kaufen. 如果不花这么多钱,我会在海边买一栋房…...

[Python进阶] 消息框、弹窗:tkinter库

6.16 消息框、弹窗:tkinter 6.16.1 前言 应用程序中的提示信息处理程序是非常重要的部分,用户要知道他输入的资料到底正不正确,或者是应用程序有一些提示信息要告诉用户,都必须通过提示信息处理程序来显示适当的信息,…...

(免费领源码)java#Springboot#mysql装修选购网站99192-计算机毕业设计项目选题推荐

摘 要 随着科学技术,计算机迅速的发展。在如今的社会中,市场上涌现出越来越多的新型的产品,人们有了不同种类的选择拥有产品的方式,而电子商务就是随着人们的需求和网络的发展涌动出的产物,电子商务网站是建立在企业与…...

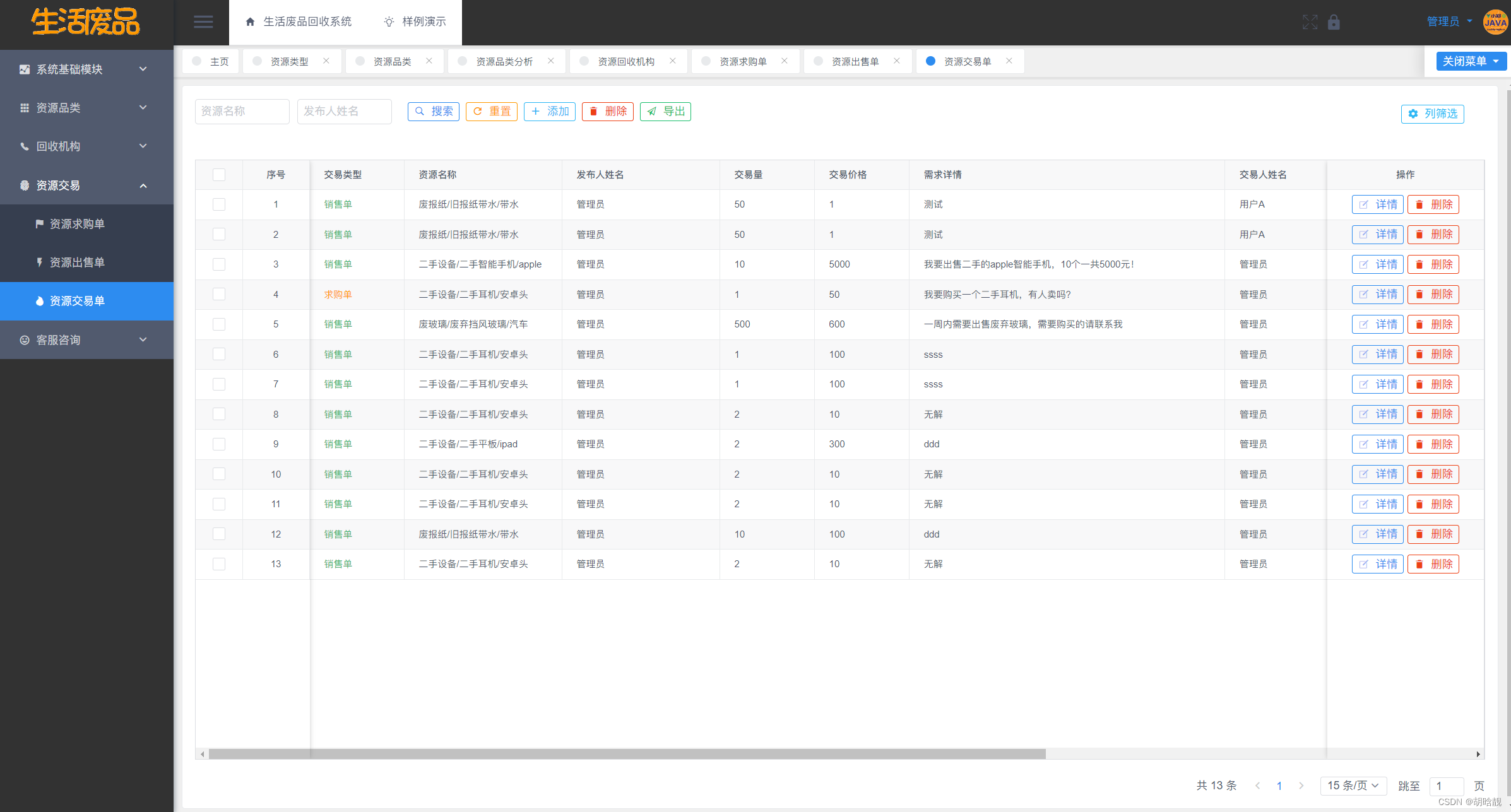

生活废品回收系统 JAVA语言设计和实现

目录 一、系统介绍 二、系统下载 三、系统截图 一、系统介绍 基于VueSpringBootMySQL的生活废品回收系统包含资源类型模块、资源品类模块、回收机构模块、回收机构模块、资源销售单模块、资源交易单模块、资源交易单模块,还包含系统自带的用户管理、部门管理、角…...

redhat/centos 配置本地yum源

- 详细步骤(首先需要将iso文件上传到服务器): 1. mkdir /media/cdrom #新建镜像文件挂载目录2. cd /usr/local/src #进入系统镜像文件存放目录3. ls #列出目录文件,可以看到刚刚上传的系统镜像文件4. mount -t iso9660 -o loop /usr/local/src/rhel-s…...

FLStudio2024汉化破解版在哪可以下载?

水果音乐制作软件FLStudio是一款功能强大的音乐创作软件,全名:Fruity Loops Studio。水果音乐制作软件FLStudio内含教程、软件、素材,是一个完整的软件音乐制作环境或数字音频工作站... FL Studio21简称FL 21,全称 Fruity Loops Studio 21,因此国人习惯叫…...

Java 音频处理,音频流转音频文件,获取音频播放时长

1.背景 最近对接了一款智能手表,手环,可以应用与老人与儿童监控,环卫工人监控,农场畜牧业监控,宠物监控等,其中用到了音频传输,通过平台下发语音包,发送远程命令录制当前设备音频并…...

Spring Boot发送邮件

在现代的互联网应用中,发送电子邮件是一项常见的功能需求。Spring Boot提供了简单且强大的邮件发送功能,使得在应用中集成邮件发送变得非常容易。本文将介绍如何在Spring Boot中发送电子邮件,并提供一个完整的示例。 1. 准备工作 在开始之前…...

智慧矿山:AI算法助力!刮板机监测,生产效率和安全性提升!

工作面刮板机在煤矿等采矿场景中起着重要作用。为了提高其生产效率和安全性,研究人员开发了一种基于 AI 算法的刮板机监测技术。 在传统的刮板机监测中,通常需要人工观察和判断刮板机的状态。这种方法存在许多问题,如主观性、耗时和易出错等。…...

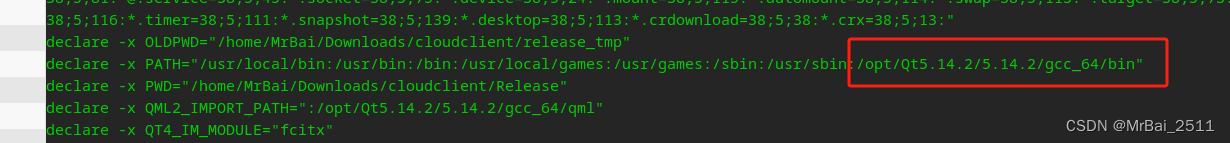

Qt跨平台(统信UOS)各种坑解决办法

记录Qt跨平台的坑,方便日后翻阅。 一、环境安装 本人用的是qt 5.14.2.直接在官网下载即可。地址:Index of /archive/qt/5.14/5.14.2 下载linux版本。 下载之后 添加可执行权限。 chmod 777 qt-opensource-linux-x64-5.14.2.run 然后执行。 出现坑1…...

ORB-SLAM3算法1之Ubuntu18.04+ROS-melodic安装ORB-SLAM3及各种问题解决

文章目录 0 引言1 安装依赖1.1 opencv安装1.2 Eigen3安装1.3 Pangolin安装1.4 其他2 编译安装ORB-SLAM32.1 build.sh2.2 build_ros.sh0 引言 ORB-SLAM3,在之前ORB-SLAM和ORB-SLAM2的基础上,新增了IMU多传感器融合SLAM,这是第一个能够使用针孔和鱼眼镜头模型通过单目、立体和…...

git学习笔记之用命令行解决冲突

背景 一般来说,当使用git检测到源分支和目标分支发生冲突时,我们习惯用IDE在本地进行冲突的解决,再合并、push。 但如果冲突文件不多,我们大可以直接用命令行去解决冲突。 方法 第一种方法: 找到所有的>>>…...

C语言中的内联汇编是什么?如何使用内联汇编进行底层编程?

C语言中的内联汇编是一种高级编程技术,允许开发者在C代码中嵌入汇编代码,以实现对特定处理器指令的直接控制和优化。内联汇编通常用于底层编程,例如操作系统开发、嵌入式系统编程和性能关键的应用程序。本文将详细介绍内联汇编的概念、语法和…...

react笔记基础部分(组件生命周期路由)

注意点: class是一个关键字, 类。 所以react 写class, 用classname ,会自动编译替换class 点击方法: <button onClick {this.sendData}>给父元素传值</button>常用的插件: 需要引入才能使用的…...

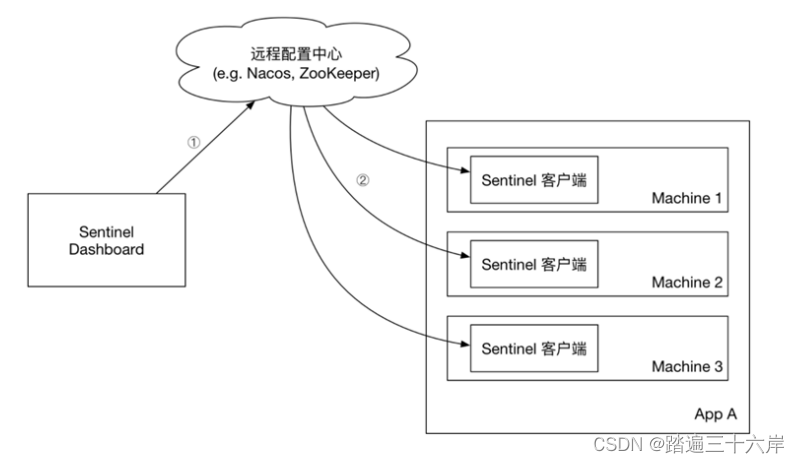

Sentinel授权规则和规则持久化

大家好我是苏麟 , 今天说说Sentinel规则持久化. 授权规则 授权规则可以对请求方来源做判断和控制。 授权规则 基本规则 授权规则可以对调用方的来源做控制,有白名单和黑名单两种方式。 白名单:来源(origin)在白名单内的调用…...

垃圾回收)

JVM(三) 垃圾回收

一、自动垃圾回收 1.1 C/C++的内存管理 在C/C++这类没有自动垃圾回收机制的语言中,一个对象如果不再使用,需要手动释放,否则就会出现内存泄漏。我们称这种释放对象的过程为垃圾回收,而需要程序员编写代码进行回收的方式为手动回收。 内存泄漏指的是不再使用的对象在系统中…...

vue3中使用svg并封装成组件

打包svg地图 安装插件 yarn add vite-plugin-svg-icons -D # or npm i vite-plugin-svg-icons -D # or pnpm install vite-plugin-svg-icons -D使用插件 vite.config.ts import { VantResolver } from unplugin-vue-components/resolvers import { createSvgIconsPlugin } from…...

实验六:DHCP、DNS、Apache、FTP服务器的安装和配置

1. (其它) 掌握Linux下DHCP、DNS、Apache、FTP服务器的安装和配置,在Linux服务器上部署JavaWeb应用 完成单元八的实训内容。 1、安装 JDK 2、安装 MySQL 3、部署JavaWeb应用 安装jdk 教程连接:linux安装jdk8详细步骤-CSDN博客 Jdk来源:linu…...

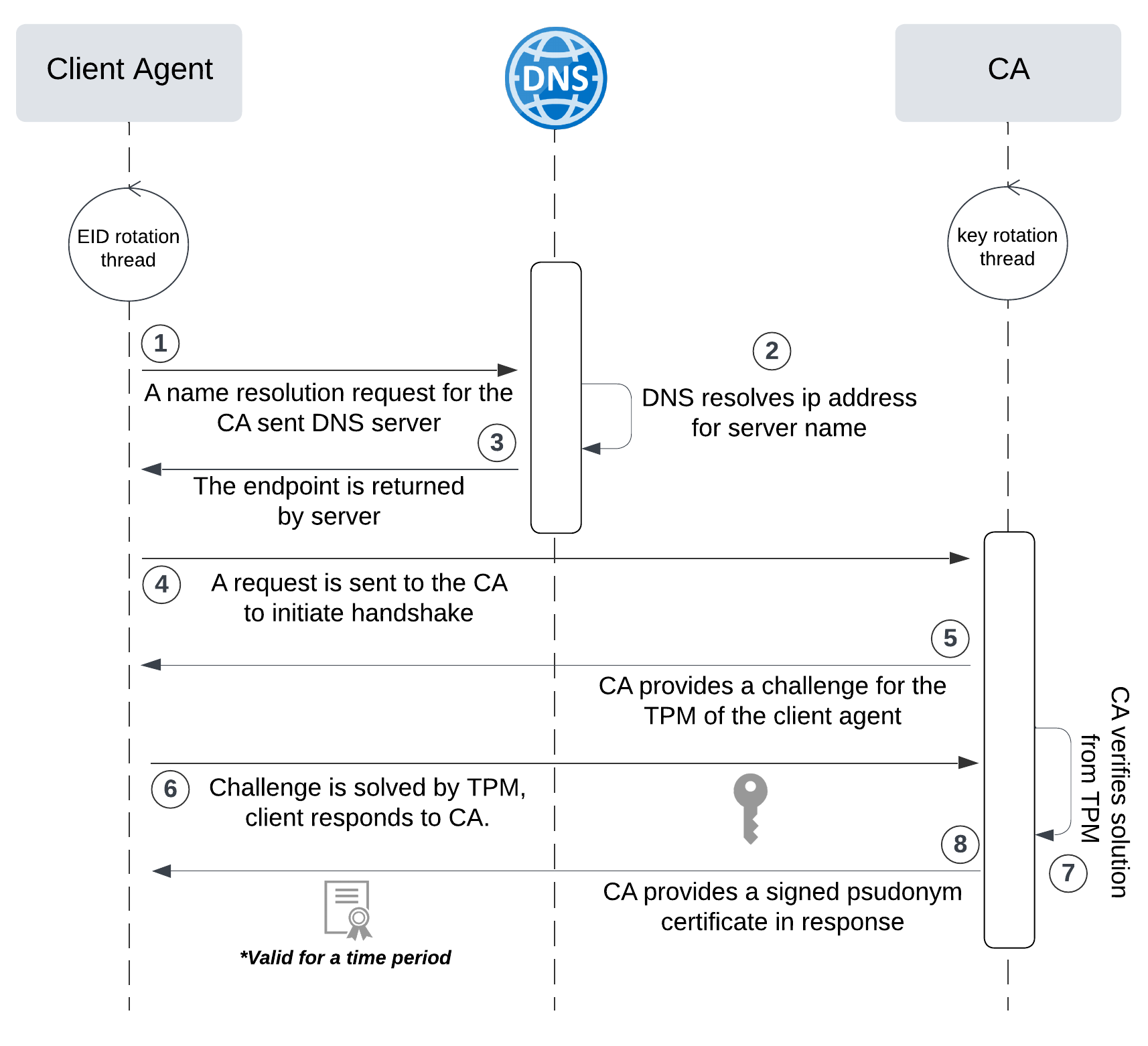

网络六边形受到攻击

大家读完觉得有帮助记得关注和点赞!!! 抽象 现代智能交通系统 (ITS) 的一个关键要求是能够以安全、可靠和匿名的方式从互联车辆和移动设备收集地理参考数据。Nexagon 协议建立在 IETF 定位器/ID 分离协议 (…...

:にする)

日语学习-日语知识点小记-构建基础-JLPT-N4阶段(33):にする

日语学习-日语知识点小记-构建基础-JLPT-N4阶段(33):にする 1、前言(1)情况说明(2)工程师的信仰2、知识点(1) にする1,接续:名词+にする2,接续:疑问词+にする3,(A)は(B)にする。(2)復習:(1)复习句子(2)ために & ように(3)そう(4)にする3、…...

基于ASP.NET+ SQL Server实现(Web)医院信息管理系统

医院信息管理系统 1. 课程设计内容 在 visual studio 2017 平台上,开发一个“医院信息管理系统”Web 程序。 2. 课程设计目的 综合运用 c#.net 知识,在 vs 2017 平台上,进行 ASP.NET 应用程序和简易网站的开发;初步熟悉开发一…...

渗透实战PortSwigger靶场-XSS Lab 14:大多数标签和属性被阻止

<script>标签被拦截 我们需要把全部可用的 tag 和 event 进行暴力破解 XSS cheat sheet: https://portswigger.net/web-security/cross-site-scripting/cheat-sheet 通过爆破发现body可以用 再把全部 events 放进去爆破 这些 event 全部可用 <body onres…...

Go 语言接口详解

Go 语言接口详解 核心概念 接口定义 在 Go 语言中,接口是一种抽象类型,它定义了一组方法的集合: // 定义接口 type Shape interface {Area() float64Perimeter() float64 } 接口实现 Go 接口的实现是隐式的: // 矩形结构体…...

linux arm系统烧录

1、打开瑞芯微程序 2、按住linux arm 的 recover按键 插入电源 3、当瑞芯微检测到有设备 4、松开recover按键 5、选择升级固件 6、点击固件选择本地刷机的linux arm 镜像 7、点击升级 (忘了有没有这步了 估计有) 刷机程序 和 镜像 就不提供了。要刷的时…...

数据链路层的主要功能是什么

数据链路层(OSI模型第2层)的核心功能是在相邻网络节点(如交换机、主机)间提供可靠的数据帧传输服务,主要职责包括: 🔑 核心功能详解: 帧封装与解封装 封装: 将网络层下发…...

Python如何给视频添加音频和字幕

在Python中,给视频添加音频和字幕可以使用电影文件处理库MoviePy和字幕处理库Subtitles。下面将详细介绍如何使用这些库来实现视频的音频和字幕添加,包括必要的代码示例和详细解释。 环境准备 在开始之前,需要安装以下Python库:…...

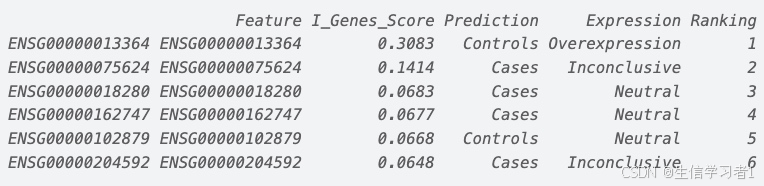

【数据分析】R版IntelliGenes用于生物标志物发现的可解释机器学习

禁止商业或二改转载,仅供自学使用,侵权必究,如需截取部分内容请后台联系作者! 文章目录 介绍流程步骤1. 输入数据2. 特征选择3. 模型训练4. I-Genes 评分计算5. 输出结果 IntelliGenesR 安装包1. 特征选择2. 模型训练和评估3. I-Genes 评分计…...

NXP S32K146 T-Box 携手 SD NAND(贴片式TF卡):驱动汽车智能革新的黄金组合

在汽车智能化的汹涌浪潮中,车辆不再仅仅是传统的交通工具,而是逐步演变为高度智能的移动终端。这一转变的核心支撑,来自于车内关键技术的深度融合与协同创新。车载远程信息处理盒(T-Box)方案:NXP S32K146 与…...