zookeeper kafka集群配置

一.下载安装包

地址:https://download.csdn.net/download/cyw8998/16579797

二.配置文件

zookeeper.properties

dataDir=/data/kafka/zookeeper_data/zookeeper

# the port at which the clients will connect

clientPort=2181

# disable the per-ip limit on the number of connections since this is a non-production config

maxClientCnxns=0

# Disable the adminserver by default to avoid port conflicts.

# Set the port to something non-conflicting if choosing to enable this

admin.enableServer=false

# admin.serverPort=8080

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

server.0=172.16.2.217:2888:3888

server.1=172.16.2.216:2888:3888

kafka server.properties

broker.id=1

############################# Server Basics ############################## The id of the broker. This must be set to a unique integer for each broker.

broker.id=1############################# Socket Server Settings ############################## The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://172.16.2.216:9092# Hostname and port the broker will advertise to producers and consumers. If not set,

# it uses the value for "listeners" if configured. Otherwise, it will use the value

# returned from java.net.InetAddress.getCanonicalHostName().

advertised.listeners=PLAINTEXT://172.16.2.216:9092# Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details

#listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL# The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=3# The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=8# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600############################# Log Basics ############################## A comma separated list of directories under which to store log files

log.dirs=/data/kafka/kafka_data/kafka-logs# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1############################# Internal Topic Settings #############################

# The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"

# For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1############################# Log Flush Policy ############################## Messages are immediately written to the filesystem but by default we only fsync() to sync

# the OS cache lazily. The following configurations control the flush of data to disk.

# There are a few important trade-offs here:

# 1. Durability: Unflushed data may be lost if you are not using replication.

# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.

# The settings below allow one to configure the flush policy to flush data after a period of time or

# every N messages (or both). This can be done globally and overridden on a per-topic basis.# The number of messages to accept before forcing a flush of data to disk

#log.flush.interval.messages=10000# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000############################# Log Retention Policy ############################## The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168# A size-based retention policy for logs. Segments are pruned from the log unless the remaining

# segments drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000############################# Zookeeper ############################## Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=172.16.2.216:2181,172.16.2.217:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000############################# Group Coordinator Settings ############################## The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

broker.id=0

############################# Server Basics ############################## The id of the broker. This must be set to a unique integer for each broker.

broker.id=0############################# Socket Server Settings ############################## The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://172.16.2.217:9092# Hostname and port the broker will advertise to producers and consumers. If not set,

# it uses the value for "listeners" if configured. Otherwise, it will use the value

# returned from java.net.InetAddress.getCanonicalHostName().

advertised.listeners=PLAINTEXT://172.16.2.217:9092# Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details

#listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL# The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=3# The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=8# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600############################# Log Basics ############################## A comma separated list of directories under which to store log files

log.dirs=/data/kafka/kafka_data/kafka-logs# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1############################# Internal Topic Settings #############################

# The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"

# For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1############################# Log Flush Policy ############################## Messages are immediately written to the filesystem but by default we only fsync() to sync

# the OS cache lazily. The following configurations control the flush of data to disk.

# There are a few important trade-offs here:

# 1. Durability: Unflushed data may be lost if you are not using replication.

# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.

# The settings below allow one to configure the flush policy to flush data after a period of time or

# every N messages (or both). This can be done globally and overridden on a per-topic basis.# The number of messages to accept before forcing a flush of data to disk

#log.flush.interval.messages=10000# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000############################# Log Retention Policy ############################## The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168# A size-based retention policy for logs. Segments are pruned from the log unless the remaining

# segments drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000############################# Zookeeper ############################## Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=172.16.2.217:2181,172.16.2.216:2181# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000############################# Group Coordinator Settings ############################## The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

相关文章:

zookeeper kafka集群配置

一.下载安装包 地址:https://download.csdn.net/download/cyw8998/16579797 二.配置文件 zookeeper.properties dataDir/data/kafka/zookeeper_data/zookeeper # the port at which the clients will connect clientPort2181 # disable the per-ip limit on the…...

Java IO 基础知识

IO 流简介 IO 即 Input/Output,输入和输出。数据输入到计算机内存的过程即输入,反之输出到外部存储(比如数据库,文件,远程主机)的过程即输出。数据传输过程类似于水流,因此称为 IO 流。IO 流在…...

【报错处理】MR/Spark 使用 BulkLoad 方式传输到 HBase 发生报错: NullPointerException

博主希望能够得到大家的点赞收藏支持!非常感谢 点赞,收藏是情分,不点是本分。祝你身体健康,事事顺心! Spark 通过 BulkLoad 方式传输到 HBase,我发现会出现空指针异常。简单写下如何解决的。 原理…...

域7:安全运营 第17章 事件的预防和响应

第七域包括 16、17、18、19 章。 事件的预防和响应是安全运营管理的核心环节,对于组织有效识别、评估、控制和减轻网络安全威胁至关重要。这一过程是循环往复的,要求组织不断总结经验,优化策略,提升整体防护能力。通过持续的监测、…...

Linux常见基本指令 +外壳shell + 权限的理解

下面这篇文章主要介绍了一些Linux的基本指令及其周边知识, 以及shell的简单理解和权限的理解. 目录 前言1.基本指令及其周边知识1.1 ADD类touch [file]文件的时间mkdir [directory]cp [file/directory]echo [file]输出重定向Linux中, 一切皆文件 1.2 DELETE类rmdirrm通配符关机…...

Android Framework AMS(07)service组件启动分析-1(APP到AMS流程解读)

该系列文章总纲链接:专题总纲目录 Android Framework 总纲 本章关键点总结 & 说明: 说明:本章节主要解读应用层service组件启动的2种方式startService和bindService,以及从APP层到AMS调用之间的打通。关注思维导图中左侧部分即…...

详解)

深度学习:领域适应(Domain Adaptation)详解

领域适应(Domain Adaptation)详解 领域适应是机器学习中的一个重要研究领域,它解决的问题是模型在一个领域(源域)上训练得到的知识如何迁移到另一个有所差异的领域(目标域)上。领域适应特别重要…...

华三服务器R4900 G5在图形界面使用PMC阵列卡(P460-B4)创建RAID,并安装系统(中文教程)

环境以用户需求安装Centos7.9,服务器使用9块900G硬盘,创建RAID1和RAID6,留一块作为热备盘。 使用笔记本通过HDM管理口()登录 使用VGA()线连接显示器和使用usb线连接键盘鼠标,进行窗…...

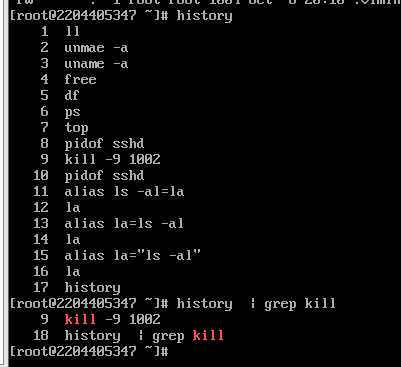

Linux实验三

Linux实验三 实验步骤: 一、登录进入 CentOS7 系统,打开并进入终端,使用 su root 切换到 root 用户 ; 二、将主机名称修改为 个人学号,并完成以下操作: 1、使用 uname -a 查看系统内核信息&#x…...

Vue预渲染:深入探索prerender-spa-plugin与vue-meta-info的联合应用

在前端开发的浪潮中,Vue.js凭借其轻量级、易上手和高效的特点,赢得了广大开发者的青睐。然而,单页面应用(SPA)在SEO方面的短板一直是开发者们需要面对的挑战。为了优化SEO,预渲染技术应运而生,而…...

使用`ThreadLocal`来优化鉴权逻辑并不能直接解决Web应用中session共享的问题

使用ThreadLocal来优化鉴权逻辑并不能直接解决Web应用中session共享的问题。实际上,ThreadLocal和session共享是两个不同的概念,它们解决的问题也不同。 ThreadLocal的作用 ThreadLocal是Java中提供的一个线程局部变量类,它可以让每个线程都拥有一个独立的变量副本,这样线…...

Python implement for PID

Python,serves as language for calculation of any domain 待更 Reference PID pythonPID git...

C++中的initializer_list类

目录 initializer_list类 介绍 基本使用 常见函数 initializer_list类 介绍 initializer_list类是C11新增的类,其原型如下: template<class T> class initializer_list; 有了initializer_list,一些容器也可以实现列表初始化&am…...

持续科技创新 高德亮相2024中国测绘地理信息科技年会

图为博览会期间, 自然资源部党组成员、副部长刘国洪前往高德企业展台参观。 10月15日,2024中国测绘地理信息科学技术年会暨中国测绘地理信息技术装备博览会在郑州召开。作为国内领先的地图厂商,高德地图凭借高精度高动态导航地图技术应用受邀参会。 本…...

深入理解HTTP Cookie

🍑个人主页:Jupiter. 🚀 所属专栏:Linux从入门到进阶 欢迎大家点赞收藏评论😊 目录 HTTP Cookie定义工作原理分类安全性用途 认识 cookie基本格式实验测试 cookie 当我们登录了B站过后,为什么下次访问B站就…...

Python多进程编程:使用`multiprocessing.Queue`进行进程间通信

Python多进程编程:使用multiprocessing.Queue进行进程间通信 1. 什么是multiprocessing.Queue?2. 为什么需要multiprocessing.Queue?3. 如何使用multiprocessing.Queue?3.1 基本用法3.2 队列的其他操作3.3 队列的阻塞与超时 4. 适…...

Docker 常见命令

命令库:docker ps | Docker Docs 安装docker apt install docker.io docker ps -a 作用:显示所有容器 docker logs -f frps 作用:持续输出容器名称为frps的日志信息(监控) docker restart frps 作用:重…...

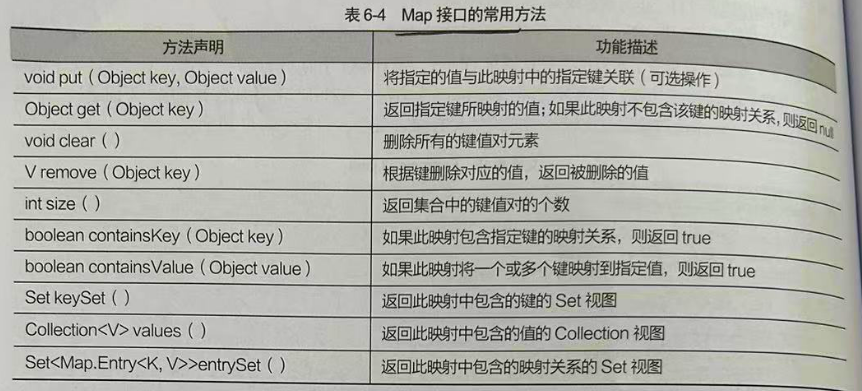

Map 双列集合根接口 HashMap TreeMap

Map接口是一种双列集合,它的每一个元素都包含一个键对象Key和值Value 键和值直接存在一种对应关系 称为映射 从Map集中中访问元素, 只要指定了Key 就是找到对应的Value 常用方法 HashMap实现类无重复键无序 它是Map 接口的一个实现类,用于存储键值映射关系,并且HashMap 集合没…...

相关总结)

Pip源设置(清华源)相关总结

1、临时使用 pip install -i https://pypi.tuna.tsinghua.edu.cn/simple some-package 2、永久更改pip源 升级 pip 到最新的版本 (>10.0.0) 后进行配置: pip install pip -U pip config set global.index-url https://pypi.tuna.tsinghua.edu.cn/simple 如…...

编程入门攻略

编程小白如何成为大神?大学新生的最佳入门攻略 编程已成为当代大学生的必备技能,但面对众多编程语言和学习资源,新生们常常感到迷茫。如何选择适合自己的编程语言?如何制定有效的学习计划?如何避免常见的学习陷阱&…...

智能检索新范式,让AIAgent自主决策,提升RAG效率100%!

市面上的 RAG 系统,不管叫什么名字,本质上只有两种做法: 第一种,一次性检索。把用户的 query 向量化,从语料库里捞出 Top-K 个文档片段,拼成一个大 prompt 塞给模型。GraphRAG、HippoRAG、LightRAG 都属于…...

中兴光猫终极管理指南:解锁工厂模式与Telnet权限的实战教程

中兴光猫终极管理指南:解锁工厂模式与Telnet权限的实战教程 【免费下载链接】zteOnu A tool that can open ZTE onu device factory mode 项目地址: https://gitcode.com/gh_mirrors/zt/zteOnu 掌握中兴光猫的设备管理和权限获取能力是网络管理员和技术爱好者…...

AI学习 - 大模型基础入门

AI学习 - 大模型基础入门 从零开始:Ollama 安装 → 本地模型运行 → Python 代码接入 → 理解核心概念 摘要 本文记录了在 Windows 上使用 Ollama 部署本地大模型、并通过 Python 代码接入调用的完整过程。内容涵盖:Ollama 安装与模型拉取、大模型基础概…...

DS4Windows终极指南:3步让PS手柄在PC上完美运行游戏

DS4Windows终极指南:3步让PS手柄在PC上完美运行游戏 【免费下载链接】DS4Windows Like those other ds4tools, but sexier 项目地址: https://gitcode.com/gh_mirrors/ds/DS4Windows 还在为PS手柄连接Windows电脑后无法识别而烦恼吗?🎮…...

)

别再只用鼠标了!用Leap Motion手势控制Unity游戏,保姆级配置避坑指南(2024版)

2024年Unity手势交互开发实战:Leap Motion从配置到游戏逻辑全解析在游戏开发领域,交互方式的创新往往能带来全新的体验。想象一下,玩家不再需要键盘鼠标,仅凭自然的手部动作就能操控游戏角色——这正是Leap Motion手势识别技术为U…...

利用FTDI芯片MPSSE模式构建Arduino兼容开发环境

1. 项目概述:当FTDI芯片遇上Arduino生态如果你手头有一些闲置的FTDI USB转串口模块,比如常见的FT232R、FT2232H,或者像我一样,从某个旧设备上拆下来一块FT2232C的老古董,除了用来给单片机烧录程序或者做串口调试&#…...

DIY智能USB充电器:基于电流检测与双稳态继电器的零功耗节能方案

1. 项目概述:打造一款智能、节能的USB手机充电器作为一名电子爱好者,我经常折腾各种电源项目。市面上很多手机充电器,包括一些原装货,都存在一个通病:手机充满电后,充电器依然插在插座上,内部电…...

DeTikZify:基于AI的TikZ图形程序自动生成技术深度解析

DeTikZify:基于AI的TikZ图形程序自动生成技术深度解析 【免费下载链接】DeTikZify Synthesizing Graphics Programs for Scientific Figures and Sketches with TikZ. 项目地址: https://gitcode.com/gh_mirrors/de/DeTikZify DeTikZify是一款革命性的多模态…...

茉莉花插件:如何让中文文献管理效率提升300%

茉莉花插件:如何让中文文献管理效率提升300% 【免费下载链接】jasminum A Zotero add-on to retrive CNKI meta data. 一个简单的Zotero 插件,用于识别中文元数据 项目地址: https://gitcode.com/gh_mirrors/ja/jasminum 还在为中文文献的元数据抓…...

从Stable Diffusion到DiT:为什么说Transformer是扩散模型的下一站?

从Stable Diffusion到DiT:Transformer如何重塑扩散模型的未来 在图像生成领域,扩散模型正经历着从U-Net架构向Transformer架构的范式转移。这一转变不仅仅是技术组件的简单替换,而是代表着生成式AI在可扩展性、训练效率和模型容量方面的重大突…...