Kylin Server V10 下自动安装并配置Kafka

Kafka是一个分布式的、分区的、多副本的消息发布-订阅系统,它提供了类似于JMS的特性,但在设计上完全不同,它具有消息持久化、高吞吐、分布式、多客户端支持、实时等特性,适用于离线和在线的消息消费,如常规的消息收集、网站活性采集、聚合统计系统运营数据(监测数据)、日志收集等大量数据的互联网服务的数据收集场景。

1. 查看操作系统信息

[root@localhost ~]# cat /etc/.kyinfo

[dist]

name=Kylin

milestone=Server-V10-GFB-Release-ZF9_01-2204-Build03

arch=arm64

beta=False

time=2023-01-09 11:04:36

dist_id=Kylin-Server-V10-GFB-Release-ZF9_01-2204-Build03-arm64-2023-01-09 11:04:36

[servicekey]

key=0080176

[os]

to=

term=2024-05-16

2. 编辑setup.sh安装脚本

#!/bin/bash

###########################################################################################

# @programe : setup.sh

# @version : 3.8.1

# @function@ :

# @campany :

# @dep. :

# @writer : Liu Cheng ji

# @phone : 18037139992

# @date : 2024-09-24

############################################################################################getent group kafka >/dev/null || groupadd -r kafka

getent passwd kafka >/dev/null || useradd -r -g kafka -d /var/lib/kafka \-s /sbin/nologin -c "Kafka user" kafkatar -zxvf kafka.tar.gz -C /usr/local/

mkdir /usr/local/kafka/logs -p

cp -f ./config/server.properties /usr/local/kafka/config/

chown -R kafka:kafka /usr/local/kafkacp ./config/kafka.sh /etc/profile.d/

chown root:root /etc/profile.d/kafka.sh

chmod 644 /etc/profile.d/kafka.sh

source /etc/profilecp ./config/kafka.service /usr/lib/systemd/system/

chown root:root /usr/lib/systemd/system/kafka.service

chmod 644 /usr/lib/systemd/system/kafka.servicecp ./config/kafka.conf /usr/lib/tmpfiles.d/

chown root:root /usr/lib/tmpfiles.d/kafka.conf

chmod 0644 /usr/lib/tmpfiles.d/kafka.conf

systemd-tmpfiles --create /usr/lib/tmpfiles.d/kafka.confsystemctl unmask kafka.service

systemctl daemon-reload

service_power_on_status=`systemctl is-enabled kafka`

if [ $service_power_on_status != 'enabled' ]; thensystemctl enable kafka.service

fiecho "+--------------------------------------------------------------------------------------------------------------+"

echo "| Kafka 3.8.1 Install Sucesses |"

echo "+--------------------------------------------------------------------------------------------------------------+"3. 设置环境变量配置文件kafka.sh

export KAFKA_HOME=/usr/local/kafka

export PATH=$KAFKA_HOME/bin:$PATH

4. 设置kafka.service文件

[Unit]

Requires=zookeeper.service

After=zookeeper.service[Service]

Type=simple

LimitNOFILE=1048576

ExecStart=/usr/local/kafka/bin/kafka-server-start.sh /usr/local/kafka/config/server.properties

ExecStop=/usr/local/kafka/bin/kafka-server-stop.sh

Restart=Always[Install]

WantedBy=multi-user.target

5. 编写 kafka.conf 文件

d /var/lib/kafka 0775 kafka kafka -

6. 根据需要优化设置server.properties文件

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.#

# This configuration file is intended for use in ZK-based mode, where Apache ZooKeeper is required.

# See kafka.server.KafkaConfig for additional details and defaults

############################## Server Basics ############################## The id of the broker. This must be set to a unique integer for each broker.

broker.id=0############################# Socket Server Settings ############################## The address the socket server listens on. If not configured, the host name will be equal to the value of

# java.net.InetAddress.getCanonicalHostName(), with PLAINTEXT listener name, and port 9092.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

listeners=PLAINTEXT://:15903# Listener name, hostname and port the broker will advertise to clients.

# If not set, it uses the value for "listeners".

advertised.listeners=PLAINTEXT://your.host.name:15903# Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details

#listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL# The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=128# The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=65# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600############################# Log Basics ############################## A comma separated list of directories under which to store log files

log.dirs=/usr/local/kafka/logs# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1############################# Internal Topic Settings #############################

# The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"

# For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1############################# Log Flush Policy ############################## Messages are immediately written to the filesystem but by default we only fsync() to sync

# the OS cache lazily. The following configurations control the flush of data to disk.

# There are a few important trade-offs here:

# 1. Durability: Unflushed data may be lost if you are not using replication.

# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.

# The settings below allow one to configure the flush policy to flush data after a period of time or

# every N messages (or both). This can be done globally and overridden on a per-topic basis.# The number of messages to accept before forcing a flush of data to disk

#log.flush.interval.messages=10000# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000############################# Log Retention Policy ############################## The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168# A size-based retention policy for logs. Segments are pruned from the log unless the remaining

# segments drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824# The maximum size of a log segment file. When this size is reached a new log segment will be created.

#log.segment.bytes=1073741824# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000############################# Zookeeper ############################## Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=localhost:15902# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000############################# Group Coordinator Settings ############################## The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

7. 配置说明

相关文章:

Kylin Server V10 下自动安装并配置Kafka

Kafka是一个分布式的、分区的、多副本的消息发布-订阅系统,它提供了类似于JMS的特性,但在设计上完全不同,它具有消息持久化、高吞吐、分布式、多客户端支持、实时等特性,适用于离线和在线的消息消费,如常规的消息收集、…...

windows环境下cmd窗口打开就进入到对应目录,一般人都不知道~

前言 很久以前,我还在上一家公司的时候,有一次我看到我同事打开cmd窗口的方式,瞬间把我惊呆了。原来他打开cmd窗口的方式,不是一般的在开始里面输入cmd,然后打开cmd窗口。而是另外一种方式。 我这个同事是个技术控&a…...

企微SCRM价格解析及其性价比分析

内容概要 在如今的数字化时代,企业对于客户关系管理的需求日益增长,而企微SCRM(Social Customer Relationship Management)作为一款新兴的客户管理工具,正好满足了这一需求。本文旨在为大家深入解析企微SCRM的价格体系…...

【SpringMVC】记录一次Bug——mvc:resources设置静态资源不过滤导致WEB-INF下的资源无法访问

SpringMVC 记录一次bug 其实都是小毛病,但是为了以后再出毛病,记录一下: mvc:resources设置静态资源不过滤问题 SpringMVC中配置的核心Servlet——DispatcherServlet,为了可以拦截到所有的请求(JSP页面除外…...

【React】React 生命周期完全指南

🌈个人主页: 鑫宝Code 🔥热门专栏: 闲话杂谈| 炫酷HTML | JavaScript基础 💫个人格言: "如无必要,勿增实体" 文章目录 React 生命周期完全指南一、生命周期概述二、生命周期的三个阶段2.1 挂载阶段&a…...

【NLP】使用 SpaCy、ollama 创建用于命名实体识别的合成数据集

命名实体识别 (NER) 是自然语言处理 (NLP) 中的一项重要任务,用于自动识别和分类文本中的实体,例如人物、位置、组织等。尽管它很重要,但手动注释大型数据集以进行 NER 既耗时又费钱。受本文 ( https://huggingface.co/blog/synthetic-data-s…...

【C++练习】二进制到十进制的转换器

题目:二进制到十进制的转换器 描述 编写一个程序,将用户输入的8位二进制数转换成对应的十进制数并输出。如果用户输入的二进制数不是8位,则程序应提示用户输入无效,并终止运行。 要求 程序应首先提示用户输入一个8位二进制数。…...

Vue功能菜单的异步加载、动态渲染

实际的Vue应用中,常常需要提供功能菜单,例如:文件下载、用户注册、数据采集、信息查询等等。每个功能菜单项,对应某个.vue组件。下面的代码,提供了一种独特的异步加载、动态渲染功能菜单的构建方法: <s…...

)

云技术基础学习(一)

内容预览 ≧∀≦ゞ 声明导语云技术历史 云服务概述云服务商与部署模式1. 公有云服务商2. 私有云部署3. 混合云模式 云服务分类1. 基础设施即服务(IaaS)2. 平台即服务(PaaS)3. 软件即服务(SaaS) 云架构云架构…...

【优选算法篇】微位至简,数之恢宏——解构 C++ 位运算中的理与美

文章目录 C 位运算详解:基础题解与思维分析前言第一章:位运算基础应用1.1 判断字符是否唯一(easy)解法(位图的思想)C 代码实现易错点提示时间复杂度和空间复杂度 1.2 丢失的数字(easy࿰…...

MFC工控项目实例二十九主对话框调用子对话框设定参数值

在主对话框调用子对话框设定参数值,使用theApp变量实现。 子对话框各参数变量 CString m_strTypeName; CString m_strBrand; CString m_strRemark; double m_edit_min; double m_edit_max; double m_edit_time2; double …...

Java | Leetcode Java题解之第546题移除盒子

题目: 题解: class Solution {int[][][] dp;public int removeBoxes(int[] boxes) {int length boxes.length;dp new int[length][length][length];return calculatePoints(boxes, 0, length - 1, 0);}public int calculatePoints(int[] boxes, int l…...

【前端】Svelte:响应性声明

Svelte 的响应性声明机制简化了动态更新 UI 的过程,让开发者不需要手动追踪数据变化。通过 $ 前缀与响应式声明语法,Svelte 能够自动追踪依赖关系,实现数据变化时的自动重新渲染。在本教程中,我们将详细探讨 Svelte 的响应性声明机…...

PostgreSQL 性能优化全方位指南:深度提升数据库效率

PostgreSQL 性能优化全方位指南:深度提升数据库效率 别忘了请点个赞收藏关注支持一下博主喵!!! 在现代互联网应用中,数据库性能优化是系统优化中至关重要的一环,尤其对于数据密集型和高并发的应用而言&am…...

Flutter鸿蒙next 使用 BLoC 模式进行状态管理详解

1. 引言 在 Flutter 中,随着应用规模的扩大,管理应用中的状态变得越来越复杂。为了处理这种复杂性,许多开发者选择使用不同的状态管理方案。其中,BLoC(Business Logic Component)模式作为一种流行的状态管…...

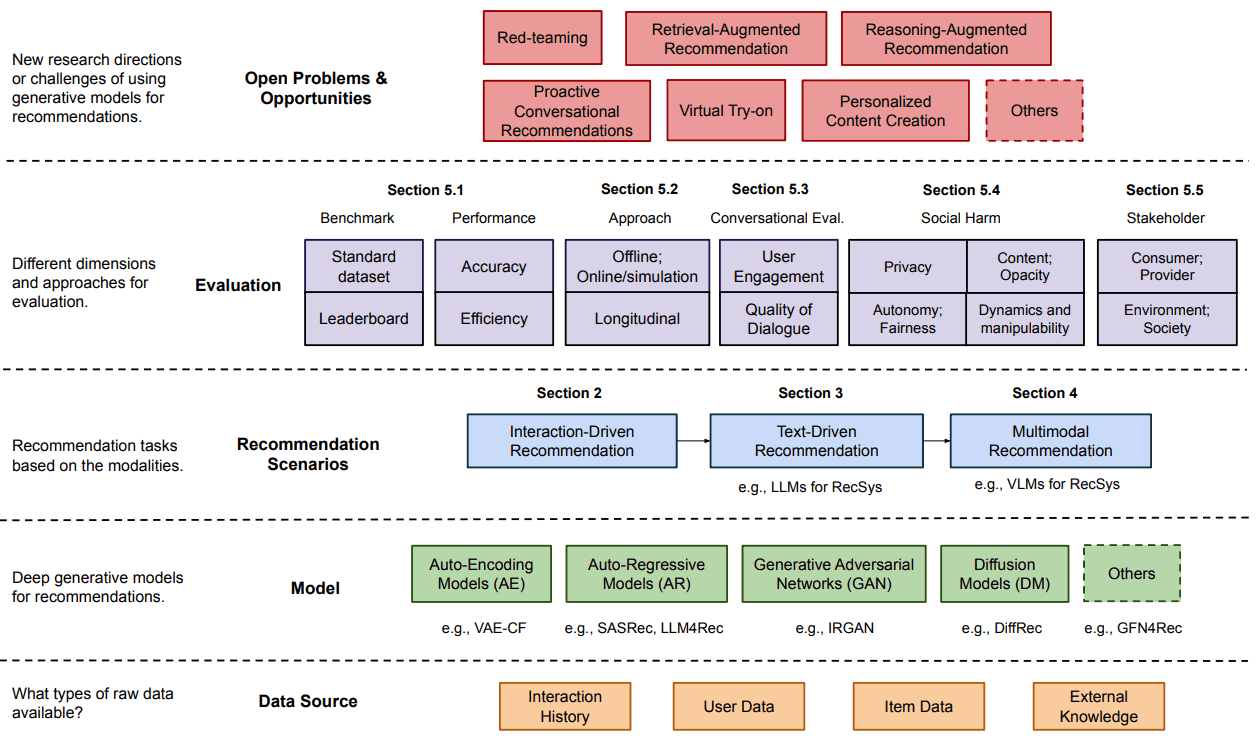

Gen-RecSys——一个通过生成和大规模语言模型发展起来的推荐系统

概述 生成模型的进步对推荐系统的发展产生了重大影响。传统的推荐系统是 “狭隘的专家”,只能捕捉特定领域内的用户偏好和项目特征,而现在生成模型增强了这些系统的功能,据报道,其性能优于传统方法。这些模型为推荐的概念和实施带…...

Android 重新定义一个广播修改系统时间,避免系统时间混乱

有时候,搞不懂为什么手机设备无法准确定义系统时间,出现混乱或显示与实际不符,需要重置或重新设定一次才行,也是真的够无语的!! vendor/mediatek/proprietary/packages/apps/MtkSettings/AndroidManifest.…...

第3章:角色扮演提示-Claude应用开发教程

更多教程,请访问claude应用开发教程 设置 运行以下设置单元以加载您的 API 密钥并建立 get_completion 辅助函数。 !pip install anthropic# Import pythons built-in regular expression library import re import anthropic# Retrieve the API_KEY & MODEL…...

【FAQ】HarmonyOS SDK 闭源开放能力 —Vision Kit

1.问题描述: 人脸活体检测页面会有声音提示,如何控制声音开关? 解决方案: 活体检测暂无声音控制开关,但可通过其他能力控制系统音量,从而控制音量。 活体检测页面固定音频流设置的是8(无障碍…...

【问题解决】Tomcat由低于8版本升级到高版本使用Tomcat自带连接池报错无法找到表空间的问题

问题复现 项目上历史项目为解决漏洞扫描从Tomcat 6.0升级到了9.0版本,服务启动的日志显示如下警告,数据源是通过JNDI方式在server.xml中配置的,控制台上狂刷无法找到表空间的错误(没截图) 报错: 06-Nov-…...

UE4动画蓝图实战:用双骨骼IK节点搞定手部穿模,附完整蓝图节点截图

UE4动画蓝图实战:双骨骼IK节点解决手部穿模的完整指南在角色动画开发中,手部穿模问题堪称"视觉杀手"。想象一下精心设计的角色挥拳时,拳头直接穿过墙壁或敌人身体——这种违和感足以毁掉整个场景的沉浸感。本文将彻底解决这个痛点&…...

Kerberos身份认证原理与实战排错指南

1. 为什么今天还要花时间搞懂 Kerberos?——一个被低估的“老协议”正在悄悄支撑着你的日常你每天登录公司内网查邮件、访问财务系统提交报销、用 Jenkins 构建代码、甚至在 Windows 域环境中打开一台同事的共享文件夹……这些看似顺滑的操作背后,大概率…...

告别虚拟机卡顿:在Windows 11的WSL2里搞定Lichee Nano交叉编译环境

告别虚拟机卡顿:在Windows 11的WSL2里搞定Lichee Nano交叉编译环境 对于嵌入式开发者来说,配置开发环境往往是个令人头疼的问题。传统虚拟机方案虽然能提供完整的Linux体验,但资源占用高、启动慢、与宿主系统交互不便等问题一直困扰着开发者。…...

从Gamma函数到泊松分布:一个概率论中的含参量积分实用案例解析

Gamma函数与泊松分布:概率论中的数学之美 在数据科学和机器学习的实践中,概率分布构成了建模的基石。当我们深入探究这些分布背后的数学原理时,Gamma函数以其优雅的性质和广泛的应用脱颖而出。它不仅连接了离散与连续概率世界,更在…...

告别虚频困扰:用VASP+DynaPhoPy搞定高温材料声子谱的保姆级教程

高温材料声子谱计算实战:从虚频困境到非谐解决方案 引言:虚频问题的根源与突破路径 在计算材料学领域,声子谱分析是理解材料动力学稳定性和热力学性质的核心手段。然而许多研究者都遭遇过这样的困境:对实验合成的材料进行简谐近似…...

录音会议纪要整理不同使用场景,实用口碑选择建议

针对不同场景的录音整理需求(短录音、中长录音、长内容深度整理),本文基于实际使用体验,分享不同场景下的工具选择建议与使用心得。一、场景一:短录音(15-60分钟,发音清晰)典型场景&…...

ARM PMU性能监控单元原理与实践指南

1. ARM PMU性能监控单元概述性能监控单元(PMU)是现代ARM处理器中用于硬件级性能分析的核心组件。它通过一组可编程的硬件计数器,实现对处理器内部各种关键事件的精确测量。这些事件涵盖了从指令执行、缓存访问到内存子系统行为等处理器活动的…...

【DeepSeek开源协议识别权威指南】:20年合规专家亲授3大协议陷阱与5步精准识别法

更多请点击: https://intelliparadigm.com 第一章:DeepSeek开源协议识别的底层逻辑与合规价值 DeepSeek系列模型(如DeepSeek-V2、DeepSeek-Coder)虽以“开源”名义发布,但其实际许可状态需通过结构化协议解析才能准确…...

基于双T振荡器的正弦波LED调光电路设计与实践

1. 项目概述:用双T振荡器实现正弦波LED调光最近在捣鼓一些氛围灯项目,总感觉用单片机PWM做的呼吸灯效果有点“硬”,那种线性的明暗变化看久了难免审美疲劳。于是翻出以前模拟电路的老本行,琢磨着能不能用纯硬件的方式,…...

ZMJS,把 JavaScript 解释器放进 SAP ABAP 应用服务器之后,很多扩展思路会变得不一样

我今天看这个 oisee/zmjs 仓库时,最吸引人的不是它把 JavaScript 语法做进了 ABAP,而是它选择了一条非常 SAP 的路线,纯 ABAP、无外部依赖、无 Kernel Module、以类和接口的形式运行在 SAP 应用服务器内部。仓库自己的定位很直接,ZMJS 是一个面向 SAP ABAP 的 Mini JavaScr…...