机器学习之实战篇——MNIST手写数字0~9识别(全连接神经网络模型)

机器学习之实战篇——Mnist手写数字0~9识别(全连接神经网络模型)

- 文章传送

- MNIST数据集介绍:

- 实验过程

- 实验环境

- 导入模块

- 导入MNIST数据集

- 创建神经网络模型进行训练,测试,评估

- 模型优化

文章传送

机器学习之监督学习(一)线性回归、多项式回归、算法优化[巨详细笔记]

机器学习之监督学习(二)二元逻辑回归

机器学习之监督学习(三)神经网络基础

机器学习之实战篇——预测二手房房价(线性回归)

机器学习之实战篇——肿瘤良性/恶性分类器(二元逻辑回归)

MNIST数据集介绍:

MNIST数据集是机器学习和计算机视觉领域中最知名和广泛使用的数据集之一。它是一个大型手写数字数据库,包含 70,000 张手写数字图像,60,000 张训练图像,10,000 张测试图像。每张图像是 28x28 像素的灰度图像素值范围从 0(白色)到 255(黑色),每张图像对应一个 0 到 9 的数字标签。

在实验开始前,为了熟悉这个伟大的数据集,读者可以先做一下下面的小实验,测验你的手写数字识别能力。尽管识别手写数字对于人类来说小菜一碟,但由于图像分辨率比较低同时有些数字写的比较抽象,因此想要达到100%准确率还是很难的,实验表明类的平均准确率约为97.5%到98.5%,实验代码如下:

import numpy as np

from tensorflow.keras.datasets import mnist

import matplotlib.pyplot as plt

from random import sample# 加载MNIST数据集

(_, _), (x_test, y_test) = mnist.load_data()# 随机选择100个样本

indices = sample(range(len(x_test)), 100)correct = 0

total = 100for i, idx in enumerate(indices, 1):# 显示图像plt.imshow(x_test[idx], cmap='gray')plt.axis('off')plt.show()# 获取用户输入user_answer = input(f"问题 {i}/100: 这个数字是什么? ")# 检查答案if int(user_answer) == y_test[idx]:correct += 1print("正确!")else:print(f"错误. 正确答案是 {y_test[idx]}")print(f"当前正确率: {correct}/{i} ({correct/i*100:.2f}%)")print(f"\n最终正确率: {correct}/{total} ({correct/total*100:.2f}%)")

实验过程

实验环境

pycharm+jupyter notebook

导入模块

import numpy as np

import matplotlib.pyplot as plt

import tensorflow as tf

from sklearn.metrics import accuracy_score

from tensorflow.keras.layers import Input,Dense,Dropout

from tensorflow.keras.regularizers import l2

from tensorflow.keras.optimizers import Adam

from tensorflow.keras.models import Sequential

from tensorflow.keras.losses import SparseCategoricalCrossentropy

from tensorflow.keras.callbacks import EarlyStoppingimport matplotlib

matplotlib.rcParams['font.family'] = 'SimHei' # 或者 'Microsoft YaHei'

matplotlib.rcParams['axes.unicode_minus'] = False # 解决负号 '-'

导入MNIST数据集

导入mnist手写数字集(包括训练集和测试集)

from tensorflow.keras.datasets import mnist

(x_train, y_train), (x_test, y_test) = mnist.load_data()

查看训练、测试数据集的规模

print(f'x_train.shape:{x_train.shape}')

print(f'y_train.shape:{y_train.shape}')

print(f'x_test.shape:{x_test.shape}')

print(f'y_test.shape:{y_test.shape}')

x_train.shape:(60000, 28, 28)

y_train.shape:(60000,)

x_test.shape:(10000, 28, 28)

y_test.shape:(10000,)

查看64张手写图片

#查看64张训练手写图片内容

#获取训练集规模

m=x_train.shape[0]

#创建4*4子图布局

fig,axes=plt.subplots(8,8,figsize=(8,8))

#每张子图随机呈现一张手写图片

for i,ax in enumerate(axes.flat):idx=np.random.randint(m)#imshow():传入图片的像素矩阵,cmap='gray',显示黑白图片ax.imshow(x_train[idx],cmap='gray')#设置子图标题,将图片标签显示在图片上方ax.set_title(y_train[idx])# 移除坐标轴ax.axis('off')

#调整子图之间的间距

plt.tight_layout()

(由于空间限制,没有展现全64张图片)

将图片灰度像素矩阵转为灰度像素向量[展平],同时进行归一化[/255](0-255->0-1)

x_train_flat=x_train.reshape(60000,28*28).astype('float32')/255

x_test_flat=x_test.reshape(10000,28*28).astype('float32')/255

查看展平后数据集规模

print(f'x_train.shape:{x_train_flat.shape}')

print(f'x_test.shape:{x_test_flat.shape}')

x_train.shape:(60000, 784)

x_test.shape:(10000, 784)

创建神经网络模型进行训练,测试,评估

初步创建第一个三层全连接神经网络,隐层中采用‘relu’激活函数,使用分类交叉熵损失函数(设置from_logits=True,减少训练过程计算误差),Adam学习率自适应器(设置初始学习率0.001)

#创建神经网络

model1=Sequential([Input(shape=(784,)),Dense(128,activation='relu',name='L1'),Dense(32,activation='relu',name='L2'),Dense(10,activation='linear',name='L3'),],name='model1',

)

#编译模型

model1.compile(loss=SparseCategoricalCrossentropy(from_logits=True),optimizer=Adam(learning_rate=0.001))

#查看模型总结

model1.summary()

Model: "model1"

_________________________________________________________________Layer (type) Output Shape Param #

=================================================================L1 (Dense) (None, 128) 100480 L2 (Dense) (None, 32) 4128 L3 (Dense) (None, 10) 330 =================================================================

Total params: 104,938

Trainable params: 104,938

Non-trainable params: 0

model1拟合训练集开始训练,迭代次数初步设置为20

model1.fit(x_train_flat,y_train,epochs=20)

Epoch 1/20

1875/1875 [==============================] - 12s 5ms/step - loss: 0.2502

Epoch 2/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.1057

Epoch 3/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0748

Epoch 4/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0547

Epoch 5/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0438

Epoch 6/20

1875/1875 [==============================] - 8s 5ms/step - loss: 0.0360

Epoch 7/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0300

Epoch 8/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0237

Epoch 9/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0223

Epoch 10/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0201

Epoch 11/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0166

Epoch 12/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0172

Epoch 13/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0131

Epoch 14/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0124

Epoch 15/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0133

Epoch 16/20

1875/1875 [==============================] - 10s 5ms/step - loss: 0.0108

Epoch 17/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0095

Epoch 18/20

1875/1875 [==============================] - 10s 5ms/step - loss: 0.0116

Epoch 19/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0090

Epoch 20/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0084

查看model1训练结果,由于模型直接输出Logits,需要通过softmax函数激活输出概率向量,然后通过最大概率索引得出模型识别的手写数字

#查看训练结果

z_train_hat=model1.predict(x_train_flat)

#经过softmax激活后得到概率向量构成的矩阵

p_train_hat=tf.nn.softmax(z_train_hat).numpy()

#找出每个概率向量最大概率对应的索引,即识别的数字

y_train_hat=np.argmax(p_train_hat,axis=1)

print(y_train_hat)

可以将上述代码编写为函数:

#神经网络输出->最终识别结果

def get_result(z):p=tf.nn.softmax(z)y=np.argmax(p,axis=1)return y

为了理解上面的输出处理过程,查看第一个样本的逻辑输出、概率向量和识别数字

print(f'Logits:{z_train_hat[0]}')

print(f'Probabilities:{p_train_hat[0]}')

print(f'targe:{y_train_hat[0]}')

Logits:[-21.427883 -11.558845 -15.150495 15.6205845 -58.351833 29.704205-23.925339 -30.009314 -11.389831 -14.521982 ]

Probabilities:[6.2175050e-23 1.2013921e-18 3.3101813e-20 7.6482343e-07 0.0000000e+009.9999928e-01 5.1166414e-24 1.1661356e-26 1.4226123e-18 6.2059749e-20]

targe:5

输出model1训练准确率,准确率达到99.8%

print(f'model1训练集准确率:{accuracy_score(y_train,y_train_hat)}')

model1训练集准确率:0.998133

测试model1,准确率达到97.9%,相当不戳

z_test_hat=model1.predict(x_test_flat)

y_test_hat=get_result(z_test_hat)

print(f'model1测试集准确率:{accuracy_score(y_test,y_test_hat)}')

313/313 [==============================] - 1s 3ms/step

model1测试集准确率:0.9789

为了方便后续神经网络模型的实验,编写run_model函数包含训练、测试模型的整个过程,引入早停机制,即当10个epoch内训练损失没有改善,则停止训练

early_stopping = EarlyStopping(monitor='loss', patience=10, # 如果10个epoch内训练损失没有改善,则停止训练restore_best_weights=True # 恢复最佳权重

)def run_model(model,epochs):model.fit(x_train_flat,y_train,epochs=epochs,callbacks=[early_stopping]) z_train_hat=model.predict(x_train_flat)y_train_hat=get_result(z_train_hat)print(f'{model.name}训练准确率:{accuracy_score(y_train,y_train_hat)}')z_test_hat=model.predict(x_test_flat)y_test_hat=get_result(z_test_hat)print(f'{model.name}测试准确率:{accuracy_score(y_test,y_test_hat)}')

查看模型在哪些图片上栽了跟头:

#显示n张错误识别图片的函数def show_error_pic(x, y, y_pred, n=64):wrong_idx = (y != y_pred)# 获取错误识别的图片和标签x_wrong = x[wrong_idx]y_wrong = y[wrong_idx]y_pred_wrong = y_pred[wrong_idx]# 选择前n张错误图片n = min(n, len(x_wrong))x_wrong = x_wrong[:n]y_wrong = y_wrong[:n]y_pred_wrong = y_pred_wrong[:n]# 设置图片网格rows = int(np.ceil(n / 8))fig, axes = plt.subplots(rows, 8, figsize=(20, 2.5*rows))axes = axes.flatten()for i in range(n):ax = axes[i]ax.imshow(x_wrong[i].reshape(28, 28), cmap='gray')ax.set_title(f'True: {y_wrong[i]}, Pred: {y_pred_wrong[i]}')ax.axis('off')# 隐藏多余的子图for i in range(n, len(axes)):axes[i].axis('off')plt.tight_layout()plt.show()show_error_pic(x_test,y_test,y_test_hat)

(出于空间限制,只展示部分图片)

模型优化

目前来看我们第一个较简单的神经网络表现得非常不错,训练准确率达到99.8%,测试准确率达到97.9%,而人类的平均准确率约为97.5%到98.5%,因此我们诊断模型存在一定高方差的问题,可以考虑引入正则化技术或增加数据量来优化模型,或者从另一方面,考虑采用更加大型的神经网络看看能否达到更优的准确率。

model2:model1基础上,增加迭代次数至40次

#创建神经网络

model2=Sequential([Input(shape=(784,)),Dense(128,activation='relu',name='L1'),Dense(32,activation='relu',name='L2'),Dense(10,activation='linear',name='L3'),],name='model2',

)

#编译模型

model2.compile(loss=SparseCategoricalCrossentropy(from_logits=True),optimizer=Adam(learning_rate=0.001))

#查看模型总结

model2.summary()run_model(model2,40)

Model: "model2"

_________________________________________________________________Layer (type) Output Shape Param #

=================================================================L1 (Dense) (None, 128) 100480 L2 (Dense) (None, 32) 4128 L3 (Dense) (None, 10) 330 =================================================================

Total params: 104,938

Trainable params: 104,938

Non-trainable params: 0

_________________________________________________________________

Epoch 1/40

1875/1875 [==============================] - 10s 5ms/step - loss: 0.2670

Epoch 2/40

1875/1875 [==============================] - 10s 5ms/step - loss: 0.1124

Epoch 3/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0786

Epoch 4/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0593

Epoch 5/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0468

Epoch 6/40

1875/1875 [==============================] - 8s 5ms/step - loss: 0.0377

Epoch 7/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0310

Epoch 8/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0266

Epoch 9/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0246

Epoch 10/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0183

Epoch 11/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0180

Epoch 12/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0160

Epoch 13/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0170

Epoch 14/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0133

Epoch 15/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0135

Epoch 16/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0117

Epoch 17/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0108

Epoch 18/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0110

Epoch 19/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0107

Epoch 20/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0086

Epoch 21/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0096

Epoch 22/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0101

Epoch 23/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0083

Epoch 24/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0079

Epoch 25/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0095

Epoch 26/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0087

Epoch 27/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0063

Epoch 28/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0087

Epoch 29/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0080

Epoch 30/40

1875/1875 [==============================] - 7s 4ms/step - loss: 0.0069

Epoch 31/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0053

Epoch 32/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0071

Epoch 33/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0056

Epoch 34/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0089

Epoch 35/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0062

Epoch 36/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0084

Epoch 37/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0051

Epoch 38/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0063

Epoch 39/40

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0074

Epoch 40/40

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0063

1875/1875 [==============================] - 5s 3ms/step

model2训练准确率:0.9984166666666666

313/313 [==============================] - 1s 3ms/step

model2测试准确率:0.98可以看到测试准确率达到98%,略有提升,但考虑到运行时间翻倍,收益并不明显

model3:采用宽度和厚度更大的神经网络,迭代次数20

#增加模型宽度和厚度

model3 = Sequential([Input(shape=(784,)),Dense(256, activation='relu', name='L1'),Dense(128, activation='relu', name='L2'),Dense(64, activation='relu', name='L3'),Dense(10, activation='linear', name='L4'),

], name='model3')#编译模型

model3.compile(loss=SparseCategoricalCrossentropy(from_logits=True),optimizer=Adam(learning_rate=0.001))

#查看模型总结

model3.summary()run_model(model3,20)

Model: "model3"

_________________________________________________________________Layer (type) Output Shape Param #

=================================================================L1 (Dense) (None, 256) 200960 L2 (Dense) (None, 128) 32896 L3 (Dense) (None, 64) 8256 L4 (Dense) (None, 10) 650 =================================================================

Total params: 242,762

Trainable params: 242,762

Non-trainable params: 0

_________________________________________________________________

Epoch 1/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.2152

Epoch 2/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0908

Epoch 3/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0623

Epoch 4/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0496

Epoch 5/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0390

Epoch 6/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0341

Epoch 7/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0291

Epoch 8/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0244

Epoch 9/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0223

Epoch 10/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0187

Epoch 11/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0206

Epoch 12/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0145

Epoch 13/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0176

Epoch 14/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0153

Epoch 15/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0120

Epoch 16/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0148

Epoch 17/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0125

Epoch 18/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0123

Epoch 19/20

1875/1875 [==============================] - 13s 7ms/step - loss: 0.0120

Epoch 20/20

1875/1875 [==============================] - 13s 7ms/step - loss: 0.0094

1875/1875 [==============================] - 6s 3ms/step

model3训练准确率:0.9989333333333333

313/313 [==============================] - 1s 4ms/step

model3测试准确率:0.9816

model3训练准确率达到99.9%,测试准确率也取得了目前为止的新高98.2%

model4:model1基础上,加入Dropout层引入正则化

#Dropout正则化

model4 = Sequential([Input(shape=(784,)),Dense(128, activation='relu', name='L1'),Dropout(0.3),Dense(64, activation='relu', name='L2'),Dropout(0.2),Dense(10, activation='linear', name='L3'),

], name='model4')#编译模型

model4.compile(loss=SparseCategoricalCrossentropy(from_logits=True),optimizer=Adam(learning_rate=0.001))

#查看模型总结

model4.summary()run_model(model4,20)

Model: "model5"

_________________________________________________________________Layer (type) Output Shape Param #

=================================================================L1 (Dense) (None, 128) 100480 dropout_2 (Dropout) (None, 128) 0 L2 (Dense) (None, 64) 8256 dropout_3 (Dropout) (None, 64) 0 L3 (Dense) (None, 10) 650 =================================================================

Total params: 109,386

Trainable params: 109,386

Non-trainable params: 0

_________________________________________________________________

Epoch 1/20

1875/1875 [==============================] - 15s 7ms/step - loss: 0.3686

Epoch 2/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.1855

Epoch 3/20

1875/1875 [==============================] - 17s 9ms/step - loss: 0.1475

Epoch 4/20

1875/1875 [==============================] - 17s 9ms/step - loss: 0.1289

Epoch 5/20

1875/1875 [==============================] - 20s 11ms/step - loss: 0.1124

Epoch 6/20

1875/1875 [==============================] - 19s 10ms/step - loss: 0.1053

Epoch 7/20

1875/1875 [==============================] - 22s 12ms/step - loss: 0.0976

Epoch 8/20

1875/1875 [==============================] - 15s 8ms/step - loss: 0.0907

Epoch 9/20

1875/1875 [==============================] - 12s 6ms/step - loss: 0.0861

Epoch 10/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0807

Epoch 11/20

1875/1875 [==============================] - 10s 5ms/step - loss: 0.0794

Epoch 12/20

1875/1875 [==============================] - 11s 6ms/step - loss: 0.0744

Epoch 13/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0733

Epoch 14/20

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0734

Epoch 15/20

1875/1875 [==============================] - 8s 4ms/step - loss: 0.0691

Epoch 16/20

1875/1875 [==============================] - 10s 5ms/step - loss: 0.0656

Epoch 17/20

1875/1875 [==============================] - 11s 6ms/step - loss: 0.0674

Epoch 18/20

1875/1875 [==============================] - 12s 7ms/step - loss: 0.0614

Epoch 19/20

1875/1875 [==============================] - 11s 6ms/step - loss: 0.0601

Epoch 20/20

1875/1875 [==============================] - 9s 5ms/step - loss: 0.0614

1875/1875 [==============================] - 5s 3ms/step

model5训练准确率:0.9951833333333333

313/313 [==============================] - 1s 2ms/step

model5测试准确率:0.98

model5训练准确率下降到了99.5%,但是相比model1测试准确率98%略有提升,Dropout正则化的确有效降低了模型方差,增强了模型的泛化能力

综上考虑,使用model3的框架同时引入Dropout正则化,迭代训练40次,构建model7

#最终全连接神经网络

model7 = Sequential([Input(shape=(784,)),Dense(256, activation='relu', name='L1'),Dropout(0.3),Dense(128, activation='relu', name='L2'),Dropout(0.2),Dense(64, activation='relu', name='L3'),Dropout(0.1),Dense(10, activation='linear', name='L4'),

], name='model7')#编译模型

model7.compile(loss=SparseCategoricalCrossentropy(from_logits=True),optimizer=Adam(learning_rate=0.001))

#查看模型总结

model7.summary()run_model(model7,40)

Model: "model7"

_________________________________________________________________Layer (type) Output Shape Param #

=================================================================L1 (Dense) (None, 256) 200960 dropout_4 (Dropout) (None, 256) 0 L2 (Dense) (None, 128) 32896 dropout_5 (Dropout) (None, 128) 0 L3 (Dense) (None, 64) 8256 dropout_6 (Dropout) (None, 64) 0 L4 (Dense) (None, 10) 650 =================================================================

Total params: 242,762

Trainable params: 242,762

Non-trainable params: 0

_________________________________________________________________

Epoch 1/40

1875/1875 [==============================] - 16s 8ms/step - loss: 0.3174

Epoch 2/40

1875/1875 [==============================] - 14s 7ms/step - loss: 0.1572

Epoch 3/40

1875/1875 [==============================] - 16s 9ms/step - loss: 0.1255

Epoch 4/40

1875/1875 [==============================] - 23s 12ms/step - loss: 0.1047

Epoch 5/40

1875/1875 [==============================] - 19s 10ms/step - loss: 0.0935

Epoch 6/40

1875/1875 [==============================] - 30s 16ms/step - loss: 0.0839

Epoch 7/40

1875/1875 [==============================] - 20s 11ms/step - loss: 0.0776

Epoch 8/40

1875/1875 [==============================] - 21s 11ms/step - loss: 0.0728

Epoch 9/40

1875/1875 [==============================] - 17s 9ms/step - loss: 0.0661

Epoch 10/40

1875/1875 [==============================] - 14s 8ms/step - loss: 0.0629

Epoch 11/40

1875/1875 [==============================] - 16s 8ms/step - loss: 0.0596

Epoch 12/40

1875/1875 [==============================] - 26s 14ms/step - loss: 0.0566

Epoch 13/40

1875/1875 [==============================] - 22s 12ms/step - loss: 0.0533

Epoch 14/40

1875/1875 [==============================] - 16s 8ms/step - loss: 0.0520

Epoch 15/40

1875/1875 [==============================] - 14s 7ms/step - loss: 0.0467

Epoch 16/40

1875/1875 [==============================] - 15s 8ms/step - loss: 0.0458

Epoch 17/40

1875/1875 [==============================] - 15s 8ms/step - loss: 0.0451

Epoch 18/40

1875/1875 [==============================] - 19s 10ms/step - loss: 0.0443

Epoch 19/40

1875/1875 [==============================] - 43s 23ms/step - loss: 0.0417

Epoch 20/40

1875/1875 [==============================] - 38s 20ms/step - loss: 0.0409

Epoch 21/40

1875/1875 [==============================] - 21s 11ms/step - loss: 0.0392

Epoch 22/40

1875/1875 [==============================] - 16s 9ms/step - loss: 0.0396

Epoch 23/40

1875/1875 [==============================] - 20s 11ms/step - loss: 0.0355

Epoch 24/40

1875/1875 [==============================] - 17s 9ms/step - loss: 0.0368

Epoch 25/40

1875/1875 [==============================] - 18s 10ms/step - loss: 0.0359

Epoch 26/40

1875/1875 [==============================] - 18s 10ms/step - loss: 0.0356

Epoch 27/40

1875/1875 [==============================] - 16s 8ms/step - loss: 0.0360

Epoch 28/40

1875/1875 [==============================] - 17s 9ms/step - loss: 0.0326

Epoch 29/40

1875/1875 [==============================] - 19s 10ms/step - loss: 0.0335

Epoch 30/40

1875/1875 [==============================] - 19s 10ms/step - loss: 0.0310

Epoch 31/40

1875/1875 [==============================] - 21s 11ms/step - loss: 0.0324

Epoch 32/40

1875/1875 [==============================] - 16s 9ms/step - loss: 0.0301

Epoch 33/40

1875/1875 [==============================] - 17s 9ms/step - loss: 0.0303

Epoch 34/40

1875/1875 [==============================] - 15s 8ms/step - loss: 0.0319

Epoch 35/40

1875/1875 [==============================] - 17s 9ms/step - loss: 0.0300

Epoch 36/40

1875/1875 [==============================] - 17s 9ms/step - loss: 0.0305

Epoch 37/40

1875/1875 [==============================] - 14s 7ms/step - loss: 0.0290

Epoch 38/40

1875/1875 [==============================] - 19s 10ms/step - loss: 0.0288

Epoch 39/40

1875/1875 [==============================] - 20s 11ms/step - loss: 0.0272

Epoch 40/40

1875/1875 [==============================] - 38s 20ms/step - loss: 0.0264

1875/1875 [==============================] - 18s 9ms/step

model7训练准确率:0.9984333333333333

313/313 [==============================] - 2s 5ms/step

model7测试准确率:0.9831

model7训练准确率99.8%,测试准确率达到了98.3%,相比model1的97.9%,取得了接近0.4%的提升。

本实验是学习了神经网络基础后的一个实验练习,因此只采用全连接神经网络模型。我们知道CNN模型在图像识别上能力更强,因此在实验最后创建一个CNN网络进行测试(gpt生成网络框架)。

from tensorflow.keras.layers import Conv2D, MaxPooling2D, Flattenmodel8 = Sequential([Input(shape=(28, 28, 1)),Conv2D(32, kernel_size=(3, 3), activation='relu'),MaxPooling2D(pool_size=(2, 2)),Conv2D(64, kernel_size=(3, 3), activation='relu'),MaxPooling2D(pool_size=(2, 2)),Flatten(),Dense(128, activation='relu'),Dense(10, activation='linear')

], name='cnn_model')#编译模型

model8.compile(loss=SparseCategoricalCrossentropy(from_logits=True),optimizer=Adam(learning_rate=0.001))

#查看模型总结

model8.summary()model8.fit(x_train,y_train,epochs=20,callbacks=[early_stopping])

z_train_hat=model8.predict(x_train)

y_train_hat=get_result(z_train_hat)

print(f'{model8.name}训练准确率:{accuracy_score(y_train,y_train_hat)}')z_test_hat=model8.predict(x_test)

y_test_hat=get_result(z_test_hat)

print(f'{model8.name}测试准确率:{accuracy_score(y_test,y_test_hat)}')cnn网络:

cnn_model训练准确率:0.9982333333333333

cnn_model测试准确率:0.9878

可以看到测试准确率达到了98.8%,比我们上面的全连接神经网络要优异。

相关文章:

机器学习之实战篇——MNIST手写数字0~9识别(全连接神经网络模型)

机器学习之实战篇——Mnist手写数字0~9识别(全连接神经网络模型) 文章传送MNIST数据集介绍:实验过程实验环境导入模块导入MNIST数据集创建神经网络模型进行训练,测试,评估模型优化 文章传送 机器学习之监督学习&#…...

ICLR2024: 大视觉语言模型中对象幻觉的分析和缓解

https://arxiv.org/pdf/2310.00754 https://github.com/YiyangZhou/LURE 背景 对象幻觉:生成包含图像中实际不存在的对象的描述 早期的工作试图通过跨不同模式执行细粒度对齐(Biten et al.,2022)或通过数据增强减少对象共现模…...

数据库系统 第54节 数据库优化器

数据库优化器是数据库管理系统(DBMS)中的一个关键组件,它的作用是分析用户的查询请求,并生成一个高效的执行计划。这个执行计划定义了如何访问数据和执行操作,以最小化查询的执行时间和资源消耗。以下是数据库优化器的…...

微服务杂谈

几个概念 还是第一次听说Spring Cloud Alibaba ,真是孤陋寡闻了,以前只知道 SpringCloud 是为了搭建微服务的,spring boot 则是快速创建一个项目,也可以是一个微服务 。那么SpringCloud 和 Spring boot 有什么区别呢?S…...

【Pandas操作2】groupby函数、pivot_table函数、数据运算(map和apply)、重复值清洗、异常值清洗、缺失值处理

1 数据清洗 #### 概述数据清洗是指对原始数据进行处理和转换,以去除无效、重复、缺失或错误的数据,使数据符合分析的要求。#### 作用和意义- 提高数据质量:- 通过数据清洗,数据质量得到提升,减少错误分析和错误决策。…...

如何分辨IP地址是否能够正常使用

在互联网的日常使用中,无论是进行网络测试、网站访问、数据抓取还是远程访问,一个正常工作的IP地址都是必不可少的。然而,由于各种原因,IP地址可能无法正常使用,如被封禁、网络连接问题或配置错误等。本文将详细介绍如…...

Sqoop 数据迁移

Sqoop 数据迁移 一、Sqoop 概述二、Sqoop 优势三、Sqoop 的架构与工作机制四、Sqoop Import 流程五、Sqoop Export 流程六、Sqoop 安装部署6.1 下载解压6.2 修改 Sqoop 配置文件6.3 配置 Sqoop 环境变量6.4 添加 MySQL 驱动包6.5 测试运行 Sqoop6.5.1 查看Sqoop命令语法6.5.2 测…...

【数据结构】排序算法系列——希尔排序(附源码+图解)

希尔排序 算法思想 希尔排序(Shell Sort)是一种改进的插入排序算法,希尔排序的创造者Donald Shell想出了这个极具创造力的改进。其时间复杂度取决于步长序列(gap)的选择。我们在插入排序中,会发现是对整体…...

c++(继承、模板进阶)

一、模板进阶 1、非类型模板参数 模板参数分类类型形参与非类型形参。 类型形参即:出现在模板参数列表中,跟在class或者typename之类的参数类型名称。 非类型形参,就是用一个常量作为类(函数)模板的一个参数,在类(函数)模板中…...

【机器学习】从零开始理解深度学习——揭开神经网络的神秘面纱

1. 引言 随着技术的飞速发展,人工智能(AI)已从学术研究的实验室走向现实应用的舞台,成为推动现代社会变革的核心动力之一。而在这一进程中,深度学习(Deep Learning)因其在大规模数据处理和复杂问题求解中的卓越表现,迅速崛起为人工智能的最前沿技术。深度学习的核心是…...

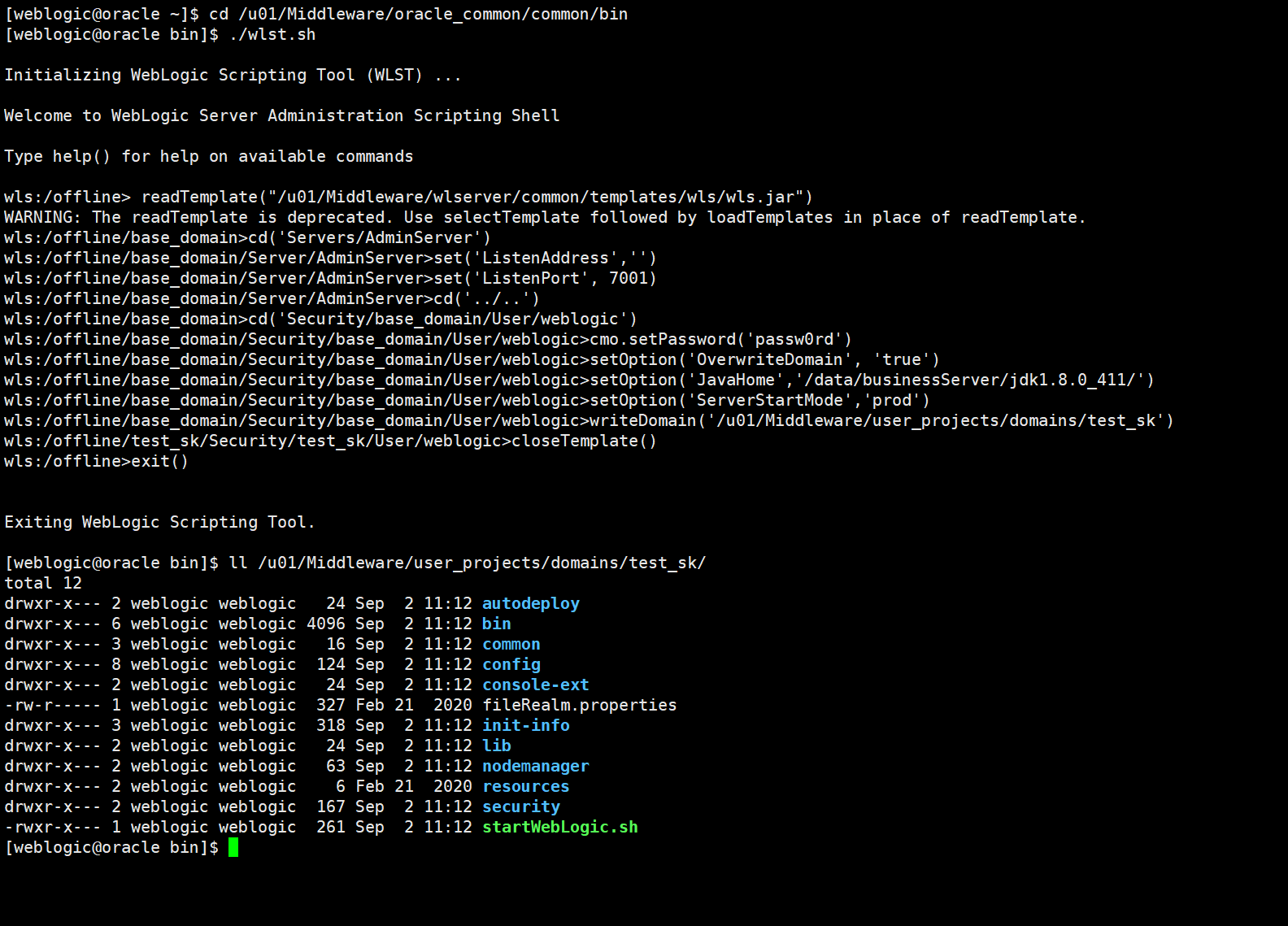

WebLogic 笔记汇总

WebLogic 笔记汇总 一、weblogic安装 1、创建用户和用户组 groupadd weblogicuseradd -g weblogic weblogic # 添加用户,并用-g参数来制定 web用户组passwd weblogic # passwd命令修改密码# 在文件末尾增加以下内容 cat >>/etc/security/limits.conf<<EOF web…...

leetcode:2710. 移除字符串中的尾随零(python3解法)

难度:简单 给你一个用字符串表示的正整数 num ,请你以字符串形式返回不含尾随零的整数 num 。 示例 1: 输入:num "51230100" 输出:"512301" 解释:整数 "51230100" 有 2 个尾…...

Python GUI入门详解-学习篇

一、简介 GUI就是图形用户界面的意思,在Python中使用PyQt可以快速搭建自己的应用,自己的程序看上去就会更加高大上。 有时候使用 python 做自动化运维操作,开发一个简单的应用程序非常方便。程序写好,每次都要通过命令行运行 pyt…...

QT5实现https的post请求(QNetworkAccessManager、QNetworkRequest和QNetworkReply)

QT5实现https的post请求 前言一、一定要有sslErrors处理1、问题经过2、代码示例 二、要利用抓包工具1、问题经过2、wireshark的使用3、利用wireshark查看服务器地址4、利用wireshark查看自己构建的请求报文 三、返回数据只能读一次1、问题描述2、部分代码 总结 前言 QNetworkA…...

vscode 使用git bash,路径分隔符缺少问题

window使用bash --login -i 使用bash时候,在系统自带的terminal里面进入,测试conda可以正常输出,但是在vscode里面输入conda发现有问题 bash: C:\Users\marswennaconda3\Scripts: No such file or directory实际路径应该要为 C:\Users\mars…...

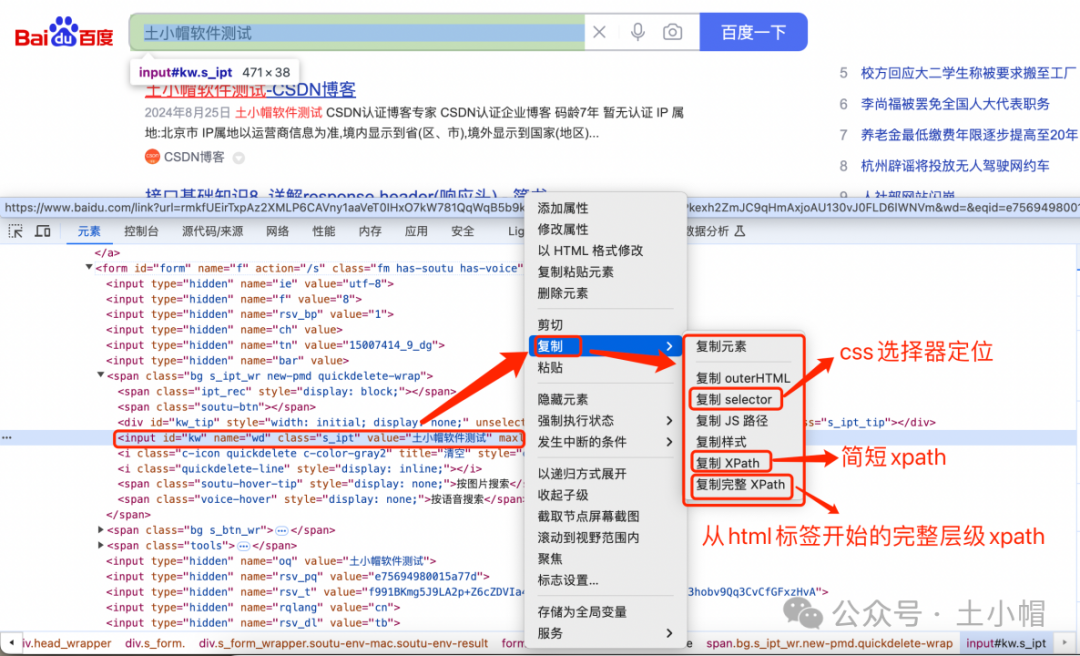

F12抓包10:UI自动化 - Elements(元素)定位页面元素

课程大纲 1、前端基础 1.1 元素 元素是构成HTML文档的基本组成部分之一,定义了文档的结构和内容,比如段落、标题、链接等。 元素大致分为3种:基本结构、自闭合元素(self-closing element)、嵌套元素。 1、基本结构&…...

android 删除系统原有的debug.keystore,系统运行的时候,重新生成新的debug.keystore,来完成App的运行。

1、先上一个图:这个是keystore无效的原因 之前在安装这个旧版本android studio的时候呢,安装过一版最新的android studio,然后通过模拟器跑过测试的demo。 2、运行旧的项目到模拟器的时候,就报错了: Execution failed…...

SQL入门题

作者SQL入门小白,此栏仅是记录一些解题过程 1、题目 用户访问表users,记录了用户id(usr_id)和访问日期(log_date),求出连续3天以上访问的用户id。 2、解答过程 2.1数据准备 通过navicat创建数据…...

Python实战:实战练习案例汇总

Python实战:实战练习案例汇总 **Python世界系列****Python实践系列****Python语音处理系列** 本文逆序更新,汇总实践练习案例。 Python世界系列 Python世界:力扣题43大数相乘算法实践Python世界:求解满足某完全平方关系的整数实…...

zabbix之钉钉告警

钉钉告警设置 我们可以将同一个运維组的人员加入到同一个钉钉工作群中,当有异常出现后,Zabbix 将告警信息发送到钉钉的群里面,此时,群内所有的运维人员都能在第一时间看到这则告警详细。 Zabbix 监控系统默认没有开箱即用…...

新手首次使用 Taotoken 从注册到完成第一个 API 调用的完整指南

🚀 告别海外账号与网络限制!稳定直连全球优质大模型,限时半价接入中。 👉 点击领取海量免费额度 新手首次使用 Taotoken 从注册到完成第一个 API 调用的完整指南 本文旨在为初次接触 Taotoken 的开发者提供一份清晰的入门指引。我…...

前后端分离项目避坑指南:为什么你的网关CORS配置了还是报跨域错误?

前后端分离项目避坑指南:为什么你的网关CORS配置了还是报跨域错误? 在前后端分离架构中,跨域资源共享(CORS)问题一直是开发者绕不开的"拦路虎"。即便在网关层正确配置了CORS规则,开发者仍可能遇到…...

【亲测免费】 探索卷积神经网络之美:一键绘制专业结构图的利器

探索卷积神经网络之美:一键绘制专业结构图的利器 【下载地址】卷积神经网络结构绘制工具 本资源适用于需要展示卷积神经网络具体结构的研究人员。用户下载本项目后,按照README官方教程中的“Getting Started”部分进行操作,简单学习语法后即可…...

央视刷屏燃了!82 岁“中国刻蚀机之父”放狠话:我们已有能力来做最先进的设备

5 月 16 日央视《对话》播出后,82 岁的“中国刻蚀机之父”尹志尧一夜刷屏,相关话题冲上热搜,背后是他的硬核宣言:我们现在已经有能力来做最先进的设备。①尹志尧早年赴美深造,在半导体设备领域深耕数十年。他曾先后在英…...

【Nginx】Nginx index 指令全解:从首页加载失败到高性能目录服务的生产实践

Nginx index 指令全解:从首页加载失败到高性能目录服务的生产实践 本文面向已部署过简单 Nginx 服务、了解反向代理概念,但尚未系统掌握其静态文件目录索引与默认首页机制的中高级工程师。我们将彻底拆解 index 指令的工作原理、继承规则、与 try_files 的协作边界,揭示为何…...

构建Web化配置中心:从环境变量管理到实时热更新的工程实践

1. 项目概述与核心价值最近在折腾一个挺有意思的小项目,叫Laliet/cc-switch-web。乍一看这个标题,可能有点摸不着头脑,但如果你是一个经常需要处理不同环境配置、或者在不同服务之间切换的前端或全栈开发者,这个项目很可能就是你一…...

腾讯混元调用代码实践

目录 查看资源是否用尽: ai3d的资源包,可以免费领取 api调用实例,亲测ok: 查看资源是否用尽: https://console.cloud.tencent.com/hunyuan/packages ai3d的资源包,可以免费领取 https://console.clou…...

2026本地视频怎么去水印?5款免费去水印软件对比和实用方法指南

很多人都遇到过这个问题:辛辛苦苦保存下来的视频、素材库里的片段,上面都贴了水印,想要二次编辑或重新发布时,这些水印就成了"眼中钉"。本地视频怎么去水印?2026年有哪些靠谱的免费去水印方法?今…...

从电机控制到服务器电源:详解功率MOSFET栅极外加电容CGS与CGD的选型计算与布局要点

功率MOSFET栅极电容设计实战:从电机驱动到服务器电源的差异化策略 在电力电子系统的核心地带,功率MOSFET如同精密交响乐团的指挥,其开关性能直接决定整个系统的效率与可靠性。当我们面对电机驱动系统要求快速切换以降低损耗,或是服…...

——run with profiler查看我们所运行程序的描述、计算指标、内存、峰值内存和数量)

Google Earth Engine(GEE)——run with profiler查看我们所运行程序的描述、计算指标、内存、峰值内存和数量

分析器显示有关特定算法和计算的其他部分消耗的资源(CPU 时间、内存)的信息。这有助于诊断脚本运行缓慢或由于内存限制而失败的原因。要使用探查器,请单击“运行”按钮下拉菜单中的“使用探查器运行”选项。作为快捷方式,按住 Alt(或 Mac 上的 Option)并单击运行,或按 C…...