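YOLO11 Improvements | Attention Mechanisms | Introducing the HAT Super-Resolution Reconstruction Module

Contents

- 1. The HAttention Mechanism

- 1.1 Introduction to HAttention

- 1.2 HAT Core Code

- 2. Adding the HAT Attention Mechanism

- 2.1 STEP1

- 2.2 STEP2

- 2.3 STEP3

- 2.4 STEP4

- 3. YAML File and Running

- 3.1 YAML File

- 3.2 Screenshot of a Successful Run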

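1. The HAttention Mechanism

1.1 Introduction to HAttention

The HAT (Hybrid Attention Transformer) model combines convolutional feature extraction with hybrid attention (channel attention plus window-based self-attention), which gives it strong image reconstruction capability. Its strength is that it integrates local and global information effectively and uses residual connections and channel attention to improve the network's representational power and reconstruction quality. It is designed for image super-resolution and image restoration tasks.

Below are HAT's workflow and the role of each of its main modules: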

- Shallow Feature Extraction

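The input image first passes through a convolution (conv_first, a single 3×3 convolution in the code) that extracts low-level features. This step captures basic image information such as edges and colors and forms an initial feature map.

- Deep Feature Extraction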

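The shallow features then go through several RHAG modules for deep feature extraction. Each RHAG consists of several HABs (Hybrid Attention Blocks) followed by one OCAB (Overlapping Cross-Attention Block):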

HAB: contains a CAB (Channel Attention Block) and an (S)W-MSA (Shifted Window Multi-Head Self-Attention) branch. Consecutive HABs alternate between regular and shifted windows.

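CAB (Channel Attention Block): two 3×3 convolutions followed by channel attention. The channel attention uses global average pooling and a small bottleneck to model the dependencies between channels and reweight them, strengthening the features of the most informative channels.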
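A minimal PyTorch sketch of the channel-attention step, assuming the squeeze-and-excitation pattern that `ChannelAttention` in the core code follows (inside CAB it sits after two 3×3 convolutions, which are left out here). The class name `ChannelAttentionSketch` and the tensor sizes are illustrative only; the point is that each channel receives one weight in (0, 1) and the spatial shape is unchanged.

```python
import torch
import torch.nn as nn


class ChannelAttentionSketch(nn.Module):
    """Hypothetical minimal channel attention (squeeze-and-excitation style)."""

    def __init__(self, num_feat: int, squeeze_factor: int = 16):
        super().__init__()
        self.attention = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),                              # squeeze: (b, c, h, w) -> (b, c, 1, 1)
            nn.Conv2d(num_feat, num_feat // squeeze_factor, 1),  # bottleneck
            nn.ReLU(inplace=True),
            nn.Conv2d(num_feat // squeeze_factor, num_feat, 1),  # expand back to c channels
            nn.Sigmoid(),                                         # one weight in (0, 1) per channel
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # (b, c, 1, 1) weights broadcast over the h and w dimensions
        return x * self.attention(x)


torch.manual_seed(0)
x = torch.randn(2, 64, 8, 8)
block = ChannelAttentionSketch(num_feat=64, squeeze_factor=16)
weights = block.attention(x)
y = block(x)
print(weights.shape, y.shape)
```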

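(S)W-MSA: window-based self-attention. The feature map is split into non-overlapping windows and attention is computed only within each window, which reduces computational cost; shifting the windows in every other block lets information flow across window borders, strengthening the interaction between local and global information.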
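The window mechanics can be sketched with two helpers that mirror `window_partition` and `window_reverse` in the core code (re-declared here so the snippet runs on its own; all sizes are illustrative). The sketch checks that partitioning and merging are exact inverses, that the cyclic shift used for SW-MSA is undone by rolling back, and how much smaller the attention matrices become when attention is restricted to windows.

```python
import torch


def window_partition(x: torch.Tensor, ws: int) -> torch.Tensor:
    """(b, h, w, c) -> (num_windows * b, ws, ws, c); h and w must be multiples of ws."""
    b, h, w, c = x.shape
    x = x.view(b, h // ws, ws, w // ws, ws, c)
    return x.permute(0, 1, 3, 2, 4, 5).reshape(-1, ws, ws, c)


def window_reverse(windows: torch.Tensor, ws: int, h: int, w: int) -> torch.Tensor:
    """Inverse of window_partition: (num_windows * b, ws, ws, c) -> (b, h, w, c)."""
    b = windows.shape[0] // ((h // ws) * (w // ws))
    x = windows.view(b, h // ws, w // ws, ws, ws, -1)
    return x.permute(0, 1, 3, 2, 4, 5).reshape(b, h, w, -1)


b, h, w, c, ws, shift = 2, 8, 8, 3, 4, 2
x = torch.arange(b * h * w * c, dtype=torch.float32).view(b, h, w, c)

windows = window_partition(x, ws)             # attention would run inside each window
restored = window_reverse(windows, ws, h, w)  # merging the windows gives back the map

# Shifted windows: roll the map before partitioning, roll it back after merging.
shifted = torch.roll(x, shifts=(-shift, -shift), dims=(1, 2))
merged = window_reverse(window_partition(shifted, ws), ws, h, w)
unshifted = torch.roll(merged, shifts=(shift, shift), dims=(1, 2))

# Attention-matrix entries per image: global attention vs. windowed attention.
n_tokens = h * w
global_entries = n_tokens ** 2                             # 64 * 64
window_entries = (n_tokens // (ws * ws)) * (ws * ws) ** 2  # 4 windows * 16 * 16
print(windows.shape, global_entries, window_entries)
```

Global attention is quadratic in the number of tokens, while windowed attention is linear in it for a fixed window size, so the saving grows with the feature-map size.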

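OCAB: uses cross-attention in which the queries come from non-overlapping windows while the keys and values come from larger, overlapping windows. The overlap lets neighboring windows share context, keeping information coherent and continuous across window borders.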
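In the core code the overlap is implemented with `nn.Unfold`. The sketch below reuses the same kernel, stride, and padding arithmetic as `OCAB.__init__` (window size 8, overlap ratio 0.5, and the tensor sizes are illustrative assumptions, not values taken from a YOLO config) to show that each key/value window is larger than its query window, yet there is exactly one key/value window per query window.

```python
import torch
import torch.nn as nn

window_size, overlap_ratio = 8, 0.5
overlap_win_size = int(window_size * overlap_ratio) + window_size  # same formula as OCAB.__init__

# Same Unfold settings as OCAB: kernel = enlarged window, stride = original window size,
# padding chosen so the enlarged window is centered on each query window.
unfold = nn.Unfold(kernel_size=(overlap_win_size, overlap_win_size),
                   stride=window_size,
                   padding=(overlap_win_size - window_size) // 2)

b, c, h, w = 1, 16, 32, 32
kv = torch.randn(b, c, h, w)
kv_windows = unfold(kv)  # (b, c * ows * ows, number of windows)

num_query_windows = (h // window_size) * (w // window_size)  # non-overlapping query windows
queries_per_window = window_size * window_size
keys_per_window = overlap_win_size * overlap_win_size
print(kv_windows.shape, num_query_windows, queries_per_window, keys_per_window)
```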

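Advantage: by combining several attention modules that draw on both local and global information, the deep feature extraction stage captures complex features efficiently while keeping computational cost relatively low.

- Image Reconstruction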

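After the RHAG modules, the deep features are fused with the shallow features (the output of the first convolution) through a residual connection and then upsampled (PixelShuffle in the code) to reconstruct a higher-quality, high-resolution image.

- Module Advantages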

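RHAG (Residual Hybrid Attention Group): its residual connection improves gradient flow and helps avoid vanishing gradients in deep networks, while the combination of several attention mechanisms makes feature extraction more accurate and efficient.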
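The residual structure of an RHAG (see `RHAG.forward` in the core code) can be sketched in isolation. In this hypothetical simplification, a single `nn.Linear` stands in for the stack of HABs plus the OCAB, and `ResidualGroupSketch` is an illustrative name. The sketch shows how tokens are turned back into a feature map for the 3×3 convolution and why the skip connection keeps a direct gradient path: when the residual branch outputs zero, the group is exactly the identity and the input gradient is 1.

```python
import torch
import torch.nn as nn


class ResidualGroupSketch(nn.Module):
    """Hypothetical simplified RHAG: body on tokens, 3x3 conv on the map, plus a skip."""

    def __init__(self, dim: int, body: nn.Module):
        super().__init__()
        self.body = body                          # stand-in for the HAB stack + OCAB
        self.conv = nn.Conv2d(dim, dim, 3, 1, 1)  # the '1conv' residual connection

    def forward(self, tokens: torch.Tensor, x_size) -> torch.Tensor:
        h, w = x_size
        b, n, c = tokens.shape
        y = self.body(tokens)                      # (b, h*w, c)
        y = y.transpose(1, 2).reshape(b, c, h, w)  # tokens -> feature map (like PatchUnEmbed)
        y = self.conv(y)
        y = y.flatten(2).transpose(1, 2)           # feature map -> tokens (like PatchEmbed)
        return y + tokens                          # residual (identity) path


torch.manual_seed(0)
dim, h, w = 32, 6, 6
group = ResidualGroupSketch(dim, body=nn.Linear(dim, dim))
tokens = torch.randn(2, h * w, dim)
out = group(tokens, (h, w))  # same shape as the input tokens

# Zero the residual branch's conv: the group collapses to the identity,
# and the gradient w.r.t. the input flows entirely through the skip path.
with torch.no_grad():
    group.conv.weight.zero_()
    group.conv.bias.zero_()
identity_out = group(tokens, (h, w))
t = tokens.clone().requires_grad_(True)
group(t, (h, w)).sum().backward()
print(out.shape, torch.equal(identity_out, tokens), torch.allclose(t.grad, torch.ones_like(t)))
```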

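HAB (Hybrid Attention Block): combines channel attention with window self-attention to capture image features at different scales. Channel attention strengthens the representation of each feature channel, while window self-attention improves overall feature perception through the exchange of local and global context. In the code, both branches take the same normalized input and their outputs are added, with the convolutional branch scaled by conv_scale (0.01 by default).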
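Reading `HAB.forward` in the core code, the hybrid block can be written as follows, where $X$ is the input token sequence, $\mathrm{LN}$ is LayerNorm, and $\alpha$ is `conv_scale` (0.01 by default). Stochastic depth (`drop_path`) is omitted for clarity.

$$
\begin{aligned}
X_N &= \mathrm{LN}(X) \\
X_M &= \text{(S)W-MSA}(X_N) + \alpha \, \mathrm{CAB}(X_N) + X \\
Y &= \mathrm{MLP}\big(\mathrm{LN}(X_M)\big) + X_M
\end{aligned}
$$

With the default $\alpha = 0.01$, the CAB output enters the sum at a much smaller scale than the attention output.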

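OCAB (Overlapping Cross-Attention Block): by combining cross-attention with overlapping windows, it lets the model capture local features while staying aware of the wider context, avoiding fragmentation of information at window borders.

1.2 HAT Core Code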

import math

import torch
import torch.nn as nn
# Dependencies outside PyTorch: pip install basicsr einops
from basicsr.utils.registry import ARCH_REGISTRY
from basicsr.archs.arch_util import to_2tuple, trunc_normal_
from einops import rearrange


def drop_path(x, drop_prob: float = 0., training: bool = False):
    """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

    From: https://github.com/rwightman/pytorch-image-models/blob/master/timm/models/layers/drop.py
    """
    if drop_prob == 0. or not training:
        return x
    keep_prob = 1 - drop_prob
    shape = (x.shape[0], ) + (1, ) * (x.ndim - 1)  # work with diff dim tensors, not just 2D ConvNets
    random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)
    random_tensor.floor_()  # binarize
    output = x.div(keep_prob) * random_tensor
    return output


class DropPath(nn.Module):
    """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

    From: https://github.com/rwightman/pytorch-image-models/blob/master/timm/models/layers/drop.py
    """

    def __init__(self, drop_prob=None):
        super(DropPath, self).__init__()
        self.drop_prob = drop_prob

    def forward(self, x):
        return drop_path(x, self.drop_prob, self.training)


class ChannelAttention(nn.Module):
    """Channel attention used in RCAN.

    Args:
        num_feat (int): Channel number of intermediate features.
        squeeze_factor (int): Channel squeeze factor. Default: 16.
    """

    def __init__(self, num_feat, squeeze_factor=16):
        super(ChannelAttention, self).__init__()
        self.attention = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),
            nn.Conv2d(num_feat, num_feat // squeeze_factor, 1, padding=0),
            nn.ReLU(inplace=True),
            nn.Conv2d(num_feat // squeeze_factor, num_feat, 1, padding=0),
            nn.Sigmoid())

    def forward(self, x):
        y = self.attention(x)
        return x * y


class CAB(nn.Module):

    def __init__(self, num_feat, compress_ratio=3, squeeze_factor=30):
        super(CAB, self).__init__()
        self.cab = nn.Sequential(
            nn.Conv2d(num_feat, num_feat // compress_ratio, 3, 1, 1),
            nn.GELU(),
            nn.Conv2d(num_feat // compress_ratio, num_feat, 3, 1, 1),
            ChannelAttention(num_feat, squeeze_factor)
        )

    def forward(self, x):
        return self.cab(x)


class Mlp(nn.Module):

    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x


def window_partition(x, window_size):
    """
    Args:
        x: (b, h, w, c)
        window_size (int): window size

    Returns:
        windows: (num_windows*b, window_size, window_size, c)
    """
    b, h, w, c = x.shape
    x = x.view(b, h // window_size, window_size, w // window_size, window_size, c)
    windows = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(-1, window_size, window_size, c)
    return windows


def window_reverse(windows, window_size, h, w):
    """
    Args:
        windows: (num_windows*b, window_size, window_size, c)
        window_size (int): Window size
        h (int): Height of image
        w (int): Width of image

    Returns:
        x: (b, h, w, c)
    """
    b = int(windows.shape[0] / (h * w / window_size / window_size))
    x = windows.view(b, h // window_size, w // window_size, window_size, window_size, -1)
    x = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(b, h, w, -1)
    return x


class WindowAttention(nn.Module):
    r""" Window based multi-head self attention (W-MSA) module with relative position bias.
    It supports both of shifted and non-shifted window.

    Args:
        dim (int): Number of input channels.
        window_size (tuple[int]): The height and width of the window.
        num_heads (int): Number of attention heads.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set
        attn_drop (float, optional): Dropout ratio of attention weight. Default: 0.0
        proj_drop (float, optional): Dropout ratio of output. Default: 0.0
    """

    def __init__(self, dim, window_size, num_heads, qkv_bias=True, qk_scale=None, attn_drop=0., proj_drop=0.):
        super().__init__()
        self.dim = dim
        self.window_size = window_size  # Wh, Ww
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim**-0.5

        # define a parameter table of relative position bias
        self.relative_position_bias_table = nn.Parameter(
            torch.zeros((2 * window_size[0] - 1) * (2 * window_size[1] - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH

        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

        trunc_normal_(self.relative_position_bias_table, std=.02)
        self.softmax = nn.Softmax(dim=-1)

    def forward(self, x, rpi, mask=None):
        """
        Args:
            x: input features with shape of (num_windows*b, n, c)
            mask: (0/-inf) mask with shape of (num_windows, Wh*Ww, Wh*Ww) or None
        """
        b_, n, c = x.shape
        qkv = self.qkv(x).reshape(b_, n, 3, self.num_heads, c // self.num_heads).permute(2, 0, 3, 1, 4)
        q, k, v = qkv[0], qkv[1], qkv[2]  # make torchscript happy (cannot use tensor as tuple)

        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))

        relative_position_bias = self.relative_position_bias_table[rpi.view(-1)].view(
            self.window_size[0] * self.window_size[1], self.window_size[0] * self.window_size[1], -1)  # Wh*Ww,Wh*Ww,nH
        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, Wh*Ww, Wh*Ww
        attn = attn + relative_position_bias.unsqueeze(0)

        if mask is not None:
            nw = mask.shape[0]
            attn = attn.view(b_ // nw, nw, self.num_heads, n, n) + mask.unsqueeze(1).unsqueeze(0)
            attn = attn.view(-1, self.num_heads, n, n)
            attn = self.softmax(attn)
        else:
            attn = self.softmax(attn)

        attn = self.attn_drop(attn)

        x = (attn @ v).transpose(1, 2).reshape(b_, n, c)
        x = self.proj(x)
        x = self.proj_drop(x)
        return x


class HAB(nn.Module):
    r""" Hybrid Attention Block.

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        num_heads (int): Number of attention heads.
        window_size (int): Window size.
        shift_size (int): Shift size for SW-MSA.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float, optional): Stochastic depth rate. Default: 0.0
        act_layer (nn.Module, optional): Activation layer. Default: nn.GELU
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self,
                 dim,
                 input_resolution,
                 num_heads,
                 window_size=7,
                 shift_size=0,
                 compress_ratio=3,
                 squeeze_factor=30,
                 conv_scale=0.01,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop=0.,
                 attn_drop=0.,
                 drop_path=0.,
                 act_layer=nn.GELU,
                 norm_layer=nn.LayerNorm):
        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.num_heads = num_heads
        self.window_size = window_size
        self.shift_size = shift_size
        self.mlp_ratio = mlp_ratio
        if min(self.input_resolution) <= self.window_size:
            # if window size is larger than input resolution, we don't partition windows
            self.shift_size = 0
            self.window_size = min(self.input_resolution)
        assert 0 <= self.shift_size < self.window_size, 'shift_size must in 0-window_size'

        self.norm1 = norm_layer(dim)
        self.attn = WindowAttention(
            dim,
            window_size=to_2tuple(self.window_size),
            num_heads=num_heads,
            qkv_bias=qkv_bias,
            qk_scale=qk_scale,
            attn_drop=attn_drop,
            proj_drop=drop)

        self.conv_scale = conv_scale
        self.conv_block = CAB(num_feat=dim, compress_ratio=compress_ratio, squeeze_factor=squeeze_factor)

        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

    def forward(self, x, x_size, rpi_sa, attn_mask):
        h, w = x_size
        b, _, c = x.shape
        # assert seq_len == h * w, "input feature has wrong size"

        shortcut = x
        x = self.norm1(x)
        x = x.view(b, h, w, c)

        # Conv_X
        conv_x = self.conv_block(x.permute(0, 3, 1, 2))
        conv_x = conv_x.permute(0, 2, 3, 1).contiguous().view(b, h * w, c)

        # cyclic shift
        if self.shift_size > 0:
            shifted_x = torch.roll(x, shifts=(-self.shift_size, -self.shift_size), dims=(1, 2))
            attn_mask = attn_mask
        else:
            shifted_x = x
            attn_mask = None

        # partition windows
        x_windows = window_partition(shifted_x, self.window_size)  # nw*b, window_size, window_size, c
        x_windows = x_windows.view(-1, self.window_size * self.window_size, c)  # nw*b, window_size*window_size, c

        # W-MSA/SW-MSA (to be compatible for testing on images whose shapes are the multiple of window size)
        attn_windows = self.attn(x_windows, rpi=rpi_sa, mask=attn_mask)

        # merge windows
        attn_windows = attn_windows.view(-1, self.window_size, self.window_size, c)
        shifted_x = window_reverse(attn_windows, self.window_size, h, w)  # b h' w' c

        # reverse cyclic shift
        if self.shift_size > 0:
            attn_x = torch.roll(shifted_x, shifts=(self.shift_size, self.shift_size), dims=(1, 2))
        else:
            attn_x = shifted_x
        attn_x = attn_x.view(b, h * w, c)

        # FFN
        x = shortcut + self.drop_path(attn_x) + conv_x * self.conv_scale
        x = x + self.drop_path(self.mlp(self.norm2(x)))

        return x


class PatchMerging(nn.Module):
    r""" Patch Merging Layer.

    Args:
        input_resolution (tuple[int]): Resolution of input feature.
        dim (int): Number of input channels.
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self, input_resolution, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.input_resolution = input_resolution
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)
        self.norm = norm_layer(4 * dim)

    def forward(self, x):
        """
        x: b, h*w, c
        """
        h, w = self.input_resolution
        b, seq_len, c = x.shape
        assert seq_len == h * w, 'input feature has wrong size'
        assert h % 2 == 0 and w % 2 == 0, f'x size ({h}*{w}) are not even.'

        x = x.view(b, h, w, c)

        x0 = x[:, 0::2, 0::2, :]  # b h/2 w/2 c
        x1 = x[:, 1::2, 0::2, :]  # b h/2 w/2 c
        x2 = x[:, 0::2, 1::2, :]  # b h/2 w/2 c
        x3 = x[:, 1::2, 1::2, :]  # b h/2 w/2 c
        x = torch.cat([x0, x1, x2, x3], -1)  # b h/2 w/2 4*c
        x = x.view(b, -1, 4 * c)  # b h/2*w/2 4*c

        x = self.norm(x)
        x = self.reduction(x)

        return x


class OCAB(nn.Module):
    # overlapping cross-attention block

    def __init__(self, dim,
                 input_resolution,
                 window_size,
                 overlap_ratio,
                 num_heads,
                 qkv_bias=True,
                 qk_scale=None,
                 mlp_ratio=2,
                 norm_layer=nn.LayerNorm):
        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.window_size = window_size
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim**-0.5
        self.overlap_win_size = int(window_size * overlap_ratio) + window_size

        self.norm1 = norm_layer(dim)
        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.unfold = nn.Unfold(kernel_size=(self.overlap_win_size, self.overlap_win_size),
                                stride=window_size,
                                padding=(self.overlap_win_size - window_size) // 2)

        # define a parameter table of relative position bias
        self.relative_position_bias_table = nn.Parameter(
            torch.zeros((window_size + self.overlap_win_size - 1) * (window_size + self.overlap_win_size - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH

        trunc_normal_(self.relative_position_bias_table, std=.02)
        self.softmax = nn.Softmax(dim=-1)

        self.proj = nn.Linear(dim, dim)

        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=nn.GELU)

    def forward(self, x, x_size, rpi):
        h, w = x_size
        b, _, c = x.shape

        shortcut = x
        x = self.norm1(x)
        x = x.view(b, h, w, c)

        qkv = self.qkv(x).reshape(b, h, w, 3, c).permute(3, 0, 4, 1, 2)  # 3, b, c, h, w
        q = qkv[0].permute(0, 2, 3, 1)  # b, h, w, c
        kv = torch.cat((qkv[1], qkv[2]), dim=1)  # b, 2*c, h, w

        # partition windows
        q_windows = window_partition(q, self.window_size)  # nw*b, window_size, window_size, c
        q_windows = q_windows.view(-1, self.window_size * self.window_size, c)  # nw*b, window_size*window_size, c

        kv_windows = self.unfold(kv)  # b, c*w*w, nw
        kv_windows = rearrange(kv_windows, 'b (nc ch owh oww) nw -> nc (b nw) (owh oww) ch',
                               nc=2, ch=c, owh=self.overlap_win_size, oww=self.overlap_win_size).contiguous()  # 2, nw*b, ow*ow, c
        k_windows, v_windows = kv_windows[0], kv_windows[1]  # nw*b, ow*ow, c

        b_, nq, _ = q_windows.shape
        _, n, _ = k_windows.shape
        d = self.dim // self.num_heads
        q = q_windows.reshape(b_, nq, self.num_heads, d).permute(0, 2, 1, 3)  # nw*b, nH, nq, d
        k = k_windows.reshape(b_, n, self.num_heads, d).permute(0, 2, 1, 3)  # nw*b, nH, n, d
        v = v_windows.reshape(b_, n, self.num_heads, d).permute(0, 2, 1, 3)  # nw*b, nH, n, d

        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))

        relative_position_bias = self.relative_position_bias_table[rpi.view(-1)].view(
            self.window_size * self.window_size, self.overlap_win_size * self.overlap_win_size, -1)  # ws*ws, wse*wse, nH
        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, ws*ws, wse*wse
        attn = attn + relative_position_bias.unsqueeze(0)

        attn = self.softmax(attn)
        attn_windows = (attn @ v).transpose(1, 2).reshape(b_, nq, self.dim)

        # merge windows
        attn_windows = attn_windows.view(-1, self.window_size, self.window_size, self.dim)
        x = window_reverse(attn_windows, self.window_size, h, w)  # b h w c
        x = x.view(b, h * w, self.dim)

        x = self.proj(x) + shortcut

        x = x + self.mlp(self.norm2(x))
        return x


class AttenBlocks(nn.Module):
    """ A series of attention blocks for one RHAG.

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        depth (int): Number of blocks.
        num_heads (int): Number of attention heads.
        window_size (int): Local window size.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float | tuple[float], optional): Stochastic depth rate. Default: 0.0
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
        downsample (nn.Module | None, optional): Downsample layer at the end of the layer. Default: None
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False.
    """

    def __init__(self,
                 dim,
                 input_resolution,
                 depth,
                 num_heads,
                 window_size,
                 compress_ratio,
                 squeeze_factor,
                 conv_scale,
                 overlap_ratio,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop=0.,
                 attn_drop=0.,
                 drop_path=0.,
                 norm_layer=nn.LayerNorm,
                 downsample=None,
                 use_checkpoint=False):
        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.depth = depth
        self.use_checkpoint = use_checkpoint

        # build blocks
        self.blocks = nn.ModuleList([
            HAB(
                dim=dim,
                input_resolution=input_resolution,
                num_heads=num_heads,
                window_size=window_size,
                shift_size=0 if (i % 2 == 0) else window_size // 2,
                compress_ratio=compress_ratio,
                squeeze_factor=squeeze_factor,
                conv_scale=conv_scale,
                mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias,
                qk_scale=qk_scale,
                drop=drop,
                attn_drop=attn_drop,
                drop_path=drop_path[i] if isinstance(drop_path, list) else drop_path,
                norm_layer=norm_layer) for i in range(depth)
        ])

        # OCAB
        self.overlap_attn = OCAB(
            dim=dim,
            input_resolution=input_resolution,
            window_size=window_size,
            overlap_ratio=overlap_ratio,
            num_heads=num_heads,
            qkv_bias=qkv_bias,
            qk_scale=qk_scale,
            mlp_ratio=mlp_ratio,
            norm_layer=norm_layer)

        # patch merging layer
        if downsample is not None:
            self.downsample = downsample(input_resolution, dim=dim, norm_layer=norm_layer)
        else:
            self.downsample = None

    def forward(self, x, x_size, params):
        for blk in self.blocks:
            x = blk(x, x_size, params['rpi_sa'], params['attn_mask'])

        x = self.overlap_attn(x, x_size, params['rpi_oca'])

        if self.downsample is not None:
            x = self.downsample(x)
        return x


class RHAG(nn.Module):
    """Residual Hybrid Attention Group (RHAG).

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        depth (int): Number of blocks.
        num_heads (int): Number of attention heads.
        window_size (int): Local window size.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float | tuple[float], optional): Stochastic depth rate. Default: 0.0
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
        downsample (nn.Module | None, optional): Downsample layer at the end of the layer. Default: None
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False.
        img_size: Input image size.
        patch_size: Patch size.
        resi_connection: The convolutional block before residual connection.
    """

    def __init__(self,
                 dim,
                 input_resolution,
                 depth,
                 num_heads,
                 window_size,
                 compress_ratio,
                 squeeze_factor,
                 conv_scale,
                 overlap_ratio,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop=0.,
                 attn_drop=0.,
                 drop_path=0.,
                 norm_layer=nn.LayerNorm,
                 downsample=None,
                 use_checkpoint=False,
                 img_size=224,
                 patch_size=4,
                 resi_connection='1conv'):
        super(RHAG, self).__init__()

        self.dim = dim
        self.input_resolution = input_resolution

        self.residual_group = AttenBlocks(
            dim=dim,
            input_resolution=input_resolution,
            depth=depth,
            num_heads=num_heads,
            window_size=window_size,
            compress_ratio=compress_ratio,
            squeeze_factor=squeeze_factor,
            conv_scale=conv_scale,
            overlap_ratio=overlap_ratio,
            mlp_ratio=mlp_ratio,
            qkv_bias=qkv_bias,
            qk_scale=qk_scale,
            drop=drop,
            attn_drop=attn_drop,
            drop_path=drop_path,
            norm_layer=norm_layer,
            downsample=downsample,
            use_checkpoint=use_checkpoint)

        if resi_connection == '1conv':
            self.conv = nn.Conv2d(dim, dim, 3, 1, 1)
        elif resi_connection == 'identity':
            self.conv = nn.Identity()

        self.patch_embed = PatchEmbed(
            img_size=img_size, patch_size=patch_size, in_chans=0, embed_dim=dim, norm_layer=None)

        self.patch_unembed = PatchUnEmbed(
            img_size=img_size, patch_size=patch_size, in_chans=0, embed_dim=dim, norm_layer=None)

    def forward(self, x, x_size, params):
        return self.patch_embed(self.conv(self.patch_unembed(self.residual_group(x, x_size, params), x_size))) + x


class PatchEmbed(nn.Module):
    r""" Image to Patch Embedding

    Args:
        img_size (int): Image size. Default: 224.
        patch_size (int): Patch token size. Default: 4.
        in_chans (int): Number of input image channels. Default: 3.
        embed_dim (int): Number of linear projection output channels. Default: 96.
        norm_layer (nn.Module, optional): Normalization layer. Default: None
    """

    def __init__(self, img_size=224, patch_size=4, in_chans=3, embed_dim=96, norm_layer=None):
        super().__init__()
        img_size = to_2tuple(img_size)
        patch_size = to_2tuple(patch_size)
        patches_resolution = [img_size[0] // patch_size[0], img_size[1] // patch_size[1]]
        self.img_size = img_size
        self.patch_size = patch_size
        self.patches_resolution = patches_resolution
        self.num_patches = patches_resolution[0] * patches_resolution[1]

        self.in_chans = in_chans
        self.embed_dim = embed_dim

        if norm_layer is not None:
            self.norm = norm_layer(embed_dim)
        else:
            self.norm = None

    def forward(self, x):
        x = x.flatten(2).transpose(1, 2)  # b Ph*Pw c
        if self.norm is not None:
            x = self.norm(x)
        return x


class PatchUnEmbed(nn.Module):
    r""" Image to Patch Unembedding

    Args:
        img_size (int): Image size. Default: 224.
        patch_size (int): Patch token size. Default: 4.
        in_chans (int): Number of input image channels. Default: 3.
        embed_dim (int): Number of linear projection output channels. Default: 96.
        norm_layer (nn.Module, optional): Normalization layer. Default: None
    """

    def __init__(self, img_size=224, patch_size=4, in_chans=3, embed_dim=96, norm_layer=None):
        super().__init__()
        img_size = to_2tuple(img_size)
        patch_size = to_2tuple(patch_size)
        patches_resolution = [img_size[0] // patch_size[0], img_size[1] // patch_size[1]]
        self.img_size = img_size
        self.patch_size = patch_size
        self.patches_resolution = patches_resolution
        self.num_patches = patches_resolution[0] * patches_resolution[1]

        self.in_chans = in_chans
        self.embed_dim = embed_dim

    def forward(self, x, x_size):
        x = x.transpose(1, 2).contiguous().view(x.shape[0], self.embed_dim, x_size[0], x_size[1])  # b c h w
        return x


class Upsample(nn.Sequential):
    """Upsample module.

    Args:
        scale (int): Scale factor. Supported scales: 2^n and 3.
        num_feat (int): Channel number of intermediate features.
    """

    def __init__(self, scale, num_feat):
        m = []
        if (scale & (scale - 1)) == 0:  # scale = 2^n
            for _ in range(int(math.log(scale, 2))):
                m.append(nn.Conv2d(num_feat, 4 * num_feat, 3, 1, 1))
                m.append(nn.PixelShuffle(2))
        elif scale == 3:
            m.append(nn.Conv2d(num_feat, 9 * num_feat, 3, 1, 1))
            m.append(nn.PixelShuffle(3))
        else:
            raise ValueError(f'scale {scale} is not supported. ' 'Supported scales: 2^n and 3.')
        super(Upsample, self).__init__(*m)


@ARCH_REGISTRY.register()
class HAT(nn.Module):
    r""" Hybrid Attention Transformer
        A PyTorch implementation of : `Activating More Pixels in Image Super-Resolution Transformer`.
        Some codes are based on SwinIR.

    Args:
        img_size (int | tuple(int)): Input image size. Default 64
        patch_size (int | tuple(int)): Patch size. Default: 1
        in_chans (int): Number of input image channels. Default: 3
        embed_dim (int): Patch embedding dimension. Default: 96
        depths (tuple(int)): Depth of each Swin Transformer layer.
        num_heads (tuple(int)): Number of attention heads in different layers.
        window_size (int): Window size. Default: 7
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim. Default: 4
        qkv_bias (bool): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float): Override default qk scale of head_dim ** -0.5 if set. Default: None
        drop_rate (float): Dropout rate. Default: 0
        attn_drop_rate (float): Attention dropout rate. Default: 0
        drop_path_rate (float): Stochastic depth rate. Default: 0.1
        norm_layer (nn.Module): Normalization layer. Default: nn.LayerNorm.
        ape (bool): If True, add absolute position embedding to the patch embedding. Default: False
        patch_norm (bool): If True, add normalization after patch embedding. Default: True
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False
        upscale: Upscale factor. 2/3/4/8 for image SR, 1 for denoising and compress artifact reduction
        img_range: Image range. 1. or 255.
        upsampler: The reconstruction module. Only 'pixelshuffle' is implemented in this version.
        resi_connection: The convolutional block before residual connection. '1conv'/'identity'
    """

    def __init__(self,
                 in_chans=3,
                 img_size=64,
                 patch_size=1,
                 embed_dim=96,
                 depths=(6, 6, 6, 6),
                 num_heads=(6, 6, 6, 6),
                 window_size=7,
                 compress_ratio=3,
                 squeeze_factor=30,
                 conv_scale=0.01,
                 overlap_ratio=0.5,
                 mlp_ratio=4.,
                 qkv_bias=True,
                 qk_scale=None,
                 drop_rate=0.,
                 attn_drop_rate=0.,
                 drop_path_rate=0.1,
                 norm_layer=nn.LayerNorm,
                 ape=False,
                 patch_norm=True,
                 use_checkpoint=False,
                 upscale=2,
                 img_range=1.,
                 upsampler='',
                 resi_connection='1conv',
                 **kwargs):
        super(HAT, self).__init__()

        self.window_size = window_size
        self.shift_size = window_size // 2
        self.overlap_ratio = overlap_ratio

        num_in_ch = in_chans
        num_out_ch = in_chans
        num_feat = 64
        self.img_range = img_range
        if in_chans == 3:
            rgb_mean = (0.4488, 0.4371, 0.4040)
            self.mean = torch.Tensor(rgb_mean).view(1, 3, 1, 1)
        else:
            self.mean = torch.zeros(1, 1, 1, 1)
        self.upscale = upscale
        self.upsampler = upsampler

        # relative position index
        relative_position_index_SA = self.calculate_rpi_sa()
        relative_position_index_OCA = self.calculate_rpi_oca()
        self.register_buffer('relative_position_index_SA', relative_position_index_SA)
        self.register_buffer('relative_position_index_OCA', relative_position_index_OCA)

        # ------------------------- 1, shallow feature extraction ------------------------- #
        self.conv_first = nn.Conv2d(num_in_ch, embed_dim, 3, 1, 1)

        # ------------------------- 2, deep feature extraction ------------------------- #
        self.num_layers = len(depths)
        self.embed_dim = embed_dim
        self.ape = ape
        self.patch_norm = patch_norm
        self.num_features = embed_dim
        self.mlp_ratio = mlp_ratio

        # split image into non-overlapping patches
        self.patch_embed = PatchEmbed(
            img_size=img_size,
            patch_size=patch_size,
            in_chans=embed_dim,
            embed_dim=embed_dim,
            norm_layer=norm_layer if self.patch_norm else None)
        num_patches = self.patch_embed.num_patches
        patches_resolution = self.patch_embed.patches_resolution
        self.patches_resolution = patches_resolution

        # merge non-overlapping patches into image
        self.patch_unembed = PatchUnEmbed(
            img_size=img_size,
            patch_size=patch_size,
            in_chans=embed_dim,
            embed_dim=embed_dim,
            norm_layer=norm_layer if self.patch_norm else None)

        # absolute position embedding
        if self.ape:
            self.absolute_pos_embed = nn.Parameter(torch.zeros(1, num_patches, embed_dim))
            trunc_normal_(self.absolute_pos_embed, std=.02)

        self.pos_drop = nn.Dropout(p=drop_rate)

        # stochastic depth
        dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth decay rule

        # build Residual Hybrid Attention Groups (RHAG)
        self.layers = nn.ModuleList()
        for i_layer in range(self.num_layers):
            layer = RHAG(
                dim=embed_dim,
                input_resolution=(patches_resolution[0], patches_resolution[1]),
                depth=depths[i_layer],
                num_heads=num_heads[i_layer],
                window_size=window_size,
                compress_ratio=compress_ratio,
                squeeze_factor=squeeze_factor,
                conv_scale=conv_scale,
                overlap_ratio=overlap_ratio,
                mlp_ratio=self.mlp_ratio,
                qkv_bias=qkv_bias,
                qk_scale=qk_scale,
                drop=drop_rate,
                attn_drop=attn_drop_rate,
                drop_path=dpr[sum(depths[:i_layer]):sum(depths[:i_layer + 1])],  # no impact on SR results
                norm_layer=norm_layer,
                downsample=None,
                use_checkpoint=use_checkpoint,
                img_size=img_size,
                patch_size=patch_size,
                resi_connection=resi_connection)
            self.layers.append(layer)
        self.norm = norm_layer(self.num_features)

        # build the last conv layer in deep feature extraction
        if resi_connection == '1conv':
            self.conv_after_body = nn.Conv2d(embed_dim, embed_dim, 3, 1, 1)
        elif resi_connection == 'identity':
            self.conv_after_body = nn.Identity()

        # ------------------------- 3, high quality image reconstruction ------------------------- #
        if self.upsampler == 'pixelshuffle':
            # for classical SR
            self.conv_before_upsample = nn.Sequential(
                nn.Conv2d(embed_dim, num_feat, 3, 1, 1), nn.LeakyReLU(inplace=True))
            self.upsample = Upsample(upscale, num_feat)
            self.conv_last = nn.Conv2d(num_feat, num_out_ch, 3, 1, 1)

        self.apply(self._init_weights)

    def _init_weights(self, m):
        if isinstance(m, nn.Linear):
            trunc_normal_(m.weight, std=.02)
            if isinstance(m, nn.Linear) and m.bias is not None:
                nn.init.constant_(m.bias, 0)
        elif isinstance(m, nn.LayerNorm):
            nn.init.constant_(m.bias, 0)
            nn.init.constant_(m.weight, 1.0)

    def calculate_rpi_sa(self):
        # calculate relative position index for SA
        coords_h = torch.arange(self.window_size)
        coords_w = torch.arange(self.window_size)
        coords = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, Wh, Ww
        coords_flatten = torch.flatten(coords, 1)  # 2, Wh*Ww
        relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, Wh*Ww, Wh*Ww
        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
        relative_coords[:, :, 0] += self.window_size - 1  # shift to start from 0
        relative_coords[:, :, 1] += self.window_size - 1
        relative_coords[:, :, 0] *= 2 * self.window_size - 1
        relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
        return relative_position_index

    def calculate_rpi_oca(self):
        # calculate relative position index for OCA
        window_size_ori = self.window_size
        window_size_ext = self.window_size + int(self.overlap_ratio * self.window_size)

        coords_h = torch.arange(window_size_ori)
        coords_w = torch.arange(window_size_ori)
        coords_ori = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, ws, ws
        coords_ori_flatten = torch.flatten(coords_ori, 1)  # 2, ws*ws

        coords_h = torch.arange(window_size_ext)
        coords_w = torch.arange(window_size_ext)
        coords_ext = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, wse, wse
        coords_ext_flatten = torch.flatten(coords_ext, 1)  # 2, wse*wse

        relative_coords = coords_ext_flatten[:, None, :] - coords_ori_flatten[:, :, None]  # 2, ws*ws, wse*wse

        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # ws*ws, wse*wse, 2
        relative_coords[:, :, 0] += window_size_ori - window_size_ext + 1  # shift to start from 0
        relative_coords[:, :, 1] += window_size_ori - window_size_ext + 1

        relative_coords[:, :, 0] *= window_size_ori + window_size_ext - 1
        relative_position_index = relative_coords.sum(-1)
        return relative_position_index

    def calculate_mask(self, x_size):
        # calculate attention mask for SW-MSA
        h, w = x_size
        img_mask = torch.zeros((1, h, w, 1))  # 1 h w 1
        h_slices = (slice(0, -self.window_size), slice(-self.window_size,
                                                       -self.shift_size), slice(-self.shift_size, None))
        w_slices = (slice(0, -self.window_size), slice(-self.window_size,
                                                       -self.shift_size), slice(-self.shift_size, None))
        cnt = 0
        for h in h_slices:
            for w in w_slices:
                img_mask[:, h, w, :] = cnt
                cnt += 1

        mask_windows = window_partition(img_mask, self.window_size)  # nw, window_size, window_size, 1
        mask_windows = mask_windows.view(-1, self.window_size * self.window_size)
        attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)
        attn_mask = attn_mask.masked_fill(attn_mask != 0, float(-100.0)).masked_fill(attn_mask == 0, float(0.0))

        return attn_mask

    @torch.jit.ignore
    def no_weight_decay(self):
        return {'absolute_pos_embed'}

    @torch.jit.ignore
    def no_weight_decay_keywords(self):
        return {'relative_position_bias_table'}

    def forward_features(self, x):
        x_size = (x.shape[2], x.shape[3])

        # Calculate attention mask and relative position index in advance to speed up inference.
        # The original code is very time-consuming for large window size.
        attn_mask = self.calculate_mask(x_size).to(x.device)
        params = {'attn_mask': attn_mask, 'rpi_sa': self.relative_position_index_SA, 'rpi_oca': self.relative_position_index_OCA}

        x = self.patch_embed(x)
        if self.ape:
            x = x + self.absolute_pos_embed
        x = self.pos_drop(x)

        for layer in self.layers:
            x = layer(x, x_size, params)

        x = self.norm(x)  # b seq_len c
        x = self.patch_unembed(x, x_size)

        return x

    def forward(self, x):
        self.mean = self.mean.type_as(x)
        x = (x - self.mean) * self.img_range

        # Only the 'pixelshuffle' branch is implemented. With the default upsampler='',
        # this branch is skipped and forward() returns x unchanged apart from the
        # normalization round trip (x - mean) * img_range / img_range + mean.
        if self.upsampler == 'pixelshuffle':
            # for classical SR
            x = self.conv_first(x)
            x = self.conv_after_body(self.forward_features(x)) + x
            x = self.conv_before_upsample(x)
            x = self.conv_last(self.upsample(x))

        x = x / self.img_range + self.mean

        return x
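One detail of the core code that is easy to gloss over is how `calculate_rpi_sa` indexes `relative_position_bias_table`. The standalone sketch below repeats the same arithmetic for an illustrative 7×7 window (it passes `indexing="ij"`, which matches the default behavior and avoids the warning newer PyTorch versions print when the argument is omitted) and checks that every (query, key) pair lands in the table of $(2 \cdot ws - 1)^2$ rows.

```python
import torch


def relative_position_index(window_size: int) -> torch.Tensor:
    """Same arithmetic as HAT.calculate_rpi_sa: map each (query, key) pair in a
    window to a row of the (2*ws - 1)**2 relative-position bias table."""
    coords = torch.stack(torch.meshgrid(torch.arange(window_size), torch.arange(window_size), indexing="ij"))
    coords_flatten = torch.flatten(coords, 1)                           # 2, ws*ws
    relative = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, ws*ws, ws*ws
    relative = relative.permute(1, 2, 0).contiguous()                   # ws*ws, ws*ws, 2
    relative[:, :, 0] += window_size - 1  # dy: [-(ws-1), ws-1] -> [0, 2ws-2]
    relative[:, :, 1] += window_size - 1  # dx: same shift
    relative[:, :, 0] *= 2 * window_size - 1  # row-major index into a (2ws-1) x (2ws-1) grid
    return relative.sum(-1)                                             # ws*ws, ws*ws


ws = 7
idx = relative_position_index(ws)
table_rows = (2 * ws - 1) ** 2  # size of relative_position_bias_table in WindowAttention
print(idx.shape, int(idx.min()), int(idx.max()), table_rows, idx.unique().numel())
```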

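2. Adding the HAT Attention Mechanism

2.1 STEP1

First, go to the ultralytics/nn directory and create a new Python package named Add-module (make sure it is a Python package: creating one automatically generates an `__init__.py` file). If you already created it while following one of my earlier tutorials, you can skip this part. Then create a new file HAT.py in it and paste all of the attention code above into that file, as shown in the figure below.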

2.2 STEP2

In the `__init__.py` file created in STEP1, add the import for the new module, as shown in the figure below.
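STEP1 and STEP2 amount to making the new file importable as part of a package. The sketch below reproduces that layout in a temporary directory using only the standard library; the package name `AddModule` and the stub `HAT` class are hypothetical stand-ins. It also checks one pitfall: if the folder is literally named `Add-module`, the hyphen makes it invalid in an ordinary import statement, so an underscore or CamelCase name is the safer choice.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# A hyphen is not allowed in a Python identifier, so a folder literally named
# "Add-module" cannot appear in a normal `from ... import ...` statement.
print("Add-module".isidentifier(), "AddModule".isidentifier())  # False True

# Hypothetical layout mirroring STEP1/STEP2:
#   AddModule/HAT.py       -> the module code (a stub class here)
#   AddModule/__init__.py  -> re-exports the class
root = Path(tempfile.mkdtemp())
pkg = root / "AddModule"
pkg.mkdir()
(pkg / "HAT.py").write_text("class HAT:\n    pass\n")
(pkg / "__init__.py").write_text("from .HAT import HAT\n")

sys.path.insert(0, str(root))
importlib.invalidate_caches()
add_module = importlib.import_module("AddModule")
print(add_module.HAT)
```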

2.3 STEP3

Open the tasks.py file in the ultralytics/nn folder and add the code shown in the figure below.
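Why STEP3 is needed: the YAML file refers to modules by name (the string `HAT`), so the model builder has to find an object with that name among the things defined or imported in tasks.py. The toy below imitates such a lookup with a plain dictionary; the `resolve` helper and the stub classes are hypothetical and only illustrate the mechanism, they are not Ultralytics code.

```python
class Conv:  # stub for a module that tasks.py already imports
    pass


def resolve(name: str, namespace: dict):
    """Look up a module class by the name written in the YAML file."""
    if name not in namespace:
        raise NameError(f"'{name}' is not defined in this namespace; was it imported?")
    return namespace[name]


# Before the import is added, the name "HAT" cannot be resolved.
before = dict(globals())
try:
    resolve("HAT", before)
    found_before = True
except NameError as err:
    found_before = False
    print(err)


class HAT:  # stands in for the class that the STEP3 import brings into scope
    pass


after = dict(globals())
print(resolve("Conv", after).__name__, resolve("HAT", after).__name__, found_before)
```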

2.4 STEP4

In the same tasks.py file, locate the function `def parse_model(d, ch, verbose=True):  # model_dict, input_channels(3)` and add the code shown in the figure (if it is hard to find, press Ctrl+F and search for it).
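The figure for STEP4 is not reproduced here, so the exact code the author adds is unknown. As a hedged illustration of what such a branch typically has to do for a channel-preserving block, the toy builder below prepends the incoming channel count to the YAML arguments (the HAT class above does take `in_chans` as its first parameter) and records that the output channel count is unchanged. `parse_layers`, `Layer`, and these rules are hypothetical simplifications (only `from = -1`, no width/depth scaling, repeats ignored), not the Ultralytics `parse_model` implementation.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    args: list  # constructor arguments after channel bookkeeping
    c_out: int  # channels produced by this layer


def parse_layers(layers, in_ch=3):
    """Toy imitation of a YAML-driven builder; only supports from == -1."""
    ch = [in_ch]  # ch[-1] is the channel count of the latest output
    built = []
    for f, n, name, args in layers:  # n (repeats) is ignored in this toy
        assert f == -1, "toy version only handles the previous layer as input"
        c1 = ch[f]
        if name == "Conv":
            c2 = args[0]
            args = [c1, *args]  # Conv(c1, c2, k, s)
        elif name == "HAT":
            c2 = c1             # HAT keeps the channel count
            args = [c1, *args]  # hypothetical: pass input channels as in_chans
        else:
            raise KeyError(f"unknown module {name}")
        built.append(Layer(name, args, c2))
        ch.append(c2)
    return built


cfg = [
    [-1, 1, "Conv", [64, 3, 2]],
    [-1, 1, "Conv", [128, 3, 2]],
    [-1, 1, "HAT", []],
]
for layer in parse_layers(cfg):
    print(layer)
```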

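3. YAML File and Running

3.1 YAML File

Below is the YAML file with the HAT attention mechanism added to the backbone. You can comment out lines and adjust it yourself; judge the effect by the results on your own dataset.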

# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, HAT, []] # 9
  - [-1, 1, SPPF, [1024, 5]] # 10
  - [-1, 2, C2PSA, [1024]] # 11

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 14
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 17 (P3/8-small)
  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 14], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 20 (P4/16-medium)
  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 11], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 23 (P5/32-large)
  - [[17, 20, 23], 1, Detect, [nc]] # Detect(P3, P4, P5)

The insertion position above is for reference only; the best placement and the module's actual effect should be judged by the results on your own dataset.
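Inserting the HAT row at index 9 pushes every later layer down by one, so each absolute from index that points at layer 9 or later has to grow by one, while -1 (the previous layer) and references to earlier layers such as 4 and 6 stay unchanged. That is why this head uses 14, 11 and [17, 20, 23] where the stock YOLO11 yaml uses 13, 10 and [16, 19, 22]. The sketch below does that renumbering on a simplified (from, module) view of the layer list; the helper names are hypothetical.

```python
# Simplified (from, module) view of the stock YOLO11 layer list, indices 0-23.
YOLO11_LAYERS = [
    (-1, "Conv"), (-1, "Conv"), (-1, "C3k2"), (-1, "Conv"), (-1, "C3k2"),  # 0-4
    (-1, "Conv"), (-1, "C3k2"), (-1, "Conv"), (-1, "C3k2"), (-1, "SPPF"),  # 5-9
    (-1, "C2PSA"),                                                         # 10
    (-1, "nn.Upsample"), ([-1, 6], "Concat"), (-1, "C3k2"),                # 11-13
    (-1, "nn.Upsample"), ([-1, 4], "Concat"), (-1, "C3k2"),                # 14-16
    (-1, "Conv"), ([-1, 13], "Concat"), (-1, "C3k2"),                      # 17-19
    (-1, "Conv"), ([-1, 10], "Concat"), (-1, "C3k2"),                      # 20-22
    ([16, 19, 22], "Detect"),                                              # 23
]


def insert_layer(layers, index, new_layer):
    """Insert new_layer at index and renumber absolute 'from' references."""
    def shift(ref):
        if isinstance(ref, list):
            return [shift(r) for r in ref]
        # negative refs are relative (-1 = previous layer); earlier layers keep their index
        return ref + 1 if ref >= index else ref

    shifted = [(shift(frm), module) for frm, module in layers]
    return shifted[:index] + [new_layer] + shifted[index:]


def refs_point_backwards(layers):
    """True if every absolute 'from' index refers to an earlier layer."""
    for i, (frm, _) in enumerate(layers):
        refs = frm if isinstance(frm, list) else [frm]
        if any(r >= i for r in refs):
            return False
    return True


with_hat = insert_layer(YOLO11_LAYERS, 9, (-1, "HAT"))
for i, (frm, module) in enumerate(with_hat):
    if isinstance(frm, list):
        print(i, module, frm)
# 13 Concat [-1, 6]
# 16 Concat [-1, 4]
# 19 Concat [-1, 14]
# 22 Concat [-1, 11]
# 24 Detect [17, 20, 23]
```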

3.2 Screenshot of a successful run

OK, that is the whole process of adding the HAT attention mechanism. More updates will follow, so stay tuned.

相关文章:

YOLO11改进|注意力机制篇|引入HAT超分辨率重建模块

目录 一、HAttention注意力机制1.1HAttention注意力介绍1.2HAT核心代码 二、添加HAT注意力机制2.1STEP12.2STEP22.3STEP32.4STEP4 三、yaml文件与运行3.1yaml文件3.2运行成功截图 一、HAttention注意力机制 1.1HAttention注意力介绍 HAT模型 通过结合卷积特征提取与多尺度注意…...

老牛也想吃嫩草,思科为何巨资投入云初创CoreWeave?

【科技明说 | 科技热点关注】 当我看到前些天思科(Cisco)的新闻时笑了。业内朋友对我说,老牛也想吃嫩草,人之常情尔,都是为了好好活着。 作为全球著名的网络产品巨头,思科Cisco论是遭遇到何种市场与行业巨变ÿ…...

Spring Boot 事务管理入门

在 Spring Boot 应用中,事务管理是一个至关重要的方面,它确保了数据的一致性和完整性。本文将深入探讨 Spring Boot 中事务管理的机制、使用方法以及注意事项,并提供丰富的示例代码。 其它教程: mysql事务详解 一、事务基础概念…...

20年408数据结构

第一题: 解析:这种题可以先画个草图分析一下,一下就看出来了。 这里的m(7,2)对应的是这图里的m(2,7),第一列存1个元素,第二列存2个元素,第三列存3个元素,第四列存4个元素,第五列存5个元素&#…...

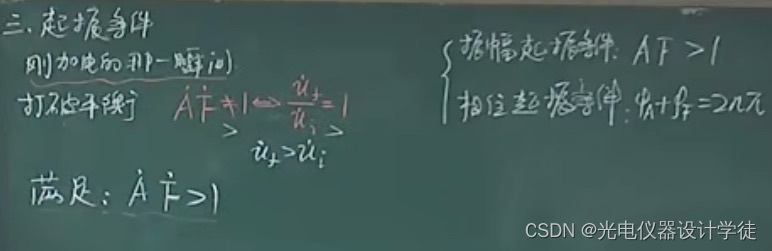

4反馈、LC、石英、RC振荡器

1什么是振荡器? 我们看看振荡器在无线通信中扮演什么角色? 1)无线通信的波是指电磁波。 2)电磁波的频率高于100KHz才能在空气中传播。 3)空气中的高频电磁波的相位和振幅可以排列组合包含信息。 4)无…...

go 的 timer reset

在 Go 语言 1.23 版本之前,与Timer(定时器)关联的通道是异步的(有缓冲,容量为 1)。这意味着即使在调用Timer.Stop(停止定时器)或Timer.Reset(重置定时器)并返…...

每日一面 day03

Q:介绍一下MySQL的三种日志(redo,undo,bin) Redo Log 和 Undo Log 是存储引擎 InnoDB 层面实现的,Bin Log 是 MySQL 层面实现的。 下面是三种日志的简要介绍: Redo Log:保证事务的…...

ssm基于SSM框架的餐馆点餐系统的设计+VUE

系统包含:源码论文 所用技术:SpringBootVueSSMMybatisMysql 免费提供给大家参考或者学习,获取源码请私聊我 需要定制请私聊 目 录 摘要 I Abstract II 1绪论 1 1.1研究背景与意义 1 1.1.1研究背景 1 1.1.2研究意义 1 1.2国内外研究…...

多人播报配音怎么弄?简单4招分享

想象一下,你手中的小说突然间活了起来,每个角色都有了自己的声音和情感。 这就是多人配音的魅力所在。它让文字跃然纸上,赋予了故事新的生命。 那么,如何制作一部引人入胜的小说呢?多人配音怎么制作的呢?…...

《Windows PE》4.1导入表

导入表顾名思义,就是记录外部导入函数信息的表。这些信息包括外部导入函数的序号、名称、地址和所属的DLL动态链接库的名称。Windows程序中使用的所有API接口函数都是从系统DLL中调用的。当然也可能是自定义的DLL动态链接库。对于调用方,我们称之为导入函…...

计算机专业大学生应该如何规划大学四年?

计算机专业的大学生在学习过程中应该注重以下几个方面,以确保他们在快速变化的技术领域中保持竞争力: 基础知识: 数学基础:离散数学、线性代数、概率论等数学课程对于理解算法和数据结构至关重要。编程基础:学习至少一…...

R知识图谱1—tidyverse玩转数据处理120题

以下是本人依据张老师提供的tidyverse题库自行刷题后的tidyverse Rmd文件,部分解法参考张老师提示,部分解法我本人灵感提供 数据下载来源https://github.com/zhjx19/tidyverse120/tree/main/data 参考https://github.com/MaybeBio/R_cheatsheet/tree/mai…...

【赵渝强老师】K8s中的有状态控制器StatefulSet

在K8s中,StatefulSets将Pod部署成有状态的应用程序。通过使用StatefulSets控制器,可以为Pod提供持久存储和持久的唯一性标识符。StatefulSets控制器与Deployment控制器不同的是,StatefulSets控制器为管理的Pod维护了一个有粘性的标识符。无论…...

机器学习笔记(持续更新)

使用matplotlib绘图: import matplotlib.pyplot as plt fig, axplt.subplots() #创建一个图形窗口 plt.show() #不绘制任何内容,直接显示空图 重复值处理: 重复值处理代码: import pandas as pd data pd.DataFrame({学号: [1…...

Nginx 配置之server块

在 Nginx 配置中使用两个 server 块是为了处理 HTTP 和 HTTPS 请求的不同需求。具体来说: 第一个 server 块: 监听 80 端口(HTTP)。将所有 HTTP 请求重定向到 HTTPS(443 端口)。 第二个 server 块ÿ…...

魅族Lucky 08惊艳亮相:极窄四等边设计引领美学新风尚

在这个智能手机设计趋于同质化的时代,魅族以其独特的设计理念和创新技术,再次为市场带来了一股清新之风。 近日,魅族全新力作——Lucky 08手机正式曝光,其独特的“极窄物理四等边”设计瞬间吸引了众多消费者的目光,而…...

自动化的抖音

文件命名 main.js var uiModule require("ui_module.js"); if (!auto.service) {toast("请开启无障碍服务");auto.waitFor();} var isRunning true; var swipeCount 0; var targetSwipeCount random(1, 10); var window uiModule.createUI(); uiMo…...

无人机之巡航控制篇

一、巡航控制的基本原理 无人机巡航控制的基本原理是通过传感器检测无人机的飞行状态和环境信息,并将其反馈给控制器。控制器根据反馈信息和任务需求,计算出无人机的控制指令,并将其发送给执行机构。执行机构根据控制器的控制指令,…...

面试必问的7大测试分类!一文说清楚!

在日常测试工作中,我们经常会听到“单元测试,集成测试,系统测试”之类的词汇,大家都知道这是按照开发阶段进行测试活动的划分。 这种划分完整的分类,其实是分为四种“单元测试,集成测试,系统测…...

深信服上网行为管理AC无法注销在线用户

下图用户认证成功后无法注销 很多入网的用户都是使用的这个账号 针对单个IP强制注销也不生效 解决步骤: 接入管理-用户管理-用户绑定管理-用户绑定 删除绑定免认证的配置 删除后所有用户会强制注销掉,重新登录即可 可添加主页联系方式帮忙远程解决问…...

终极指南:如何解锁光猫全部性能?RTL960x开源方案深度解析

终极指南:如何解锁光猫全部性能?RTL960x开源方案深度解析 【免费下载链接】RTL960x Hacking & Reverse Engineering RTL960x-based xPON ONTs to suit your OLT 项目地址: https://gitcode.com/gh_mirrors/rt/RTL960x RTL960x开源光猫固件是基…...

)

Vue2项目实战:手把手教你用Antv X6的Dnd插件实现可拖拽流程图(附完整代码)

Vue2项目实战:Antv X6 Dnd插件实现可拖拽流程图的深度实践 在Vue2项目中集成Antv X6的Dnd插件实现拖拽功能,是构建流程图编辑器、数据编排工具等复杂交互系统的常见需求。不同于简单的拖拽实现,我们需要考虑Vue2的组件化特性、业务逻辑与拖拽…...

)

Midjourney快速模式 vs 标准模式实测对比:27组图像生成数据、GPU资源占用率与成本折算表(限时公开)

更多请点击: https://codechina.net 第一章:Midjourney快速模式与标准模式的核心差异解析 Midjourney 的快速模式(Relaxed Mode)与标准模式(Turbo/Standard Mode)在资源调度、生成质量、排队机制及计费逻辑…...

从Pooling到MetaFormer:深入解析PoolFormer如何用极简算子重塑视觉Transformer架构

1. 为什么说PoolFormer是Transformer的"极简主义革命"? 第一次看到PoolFormer的论文时,我正坐在咖啡馆调试一个复杂的Vision Transformer模型。当读到"用平均池化替代注意力机制"的设计时,差点把咖啡喷在键盘上——这简…...

FPGA时序约束避坑指南:Set Bus Skew与Set Max Delay到底有什么区别?

FPGA时序约束深度解析:Set Bus Skew与Set Max Delay的核心差异与工程实践 在FPGA设计的时序收敛过程中,工程师们常常面临一个关键抉择:何时使用Set Max Delay,何时又该选择Set Bus Skew?这两种约束看似都与路径延迟相关…...

3大AI创作效率瓶颈的模块化解法:ComfyUI企业级工作流自动化实践

3大AI创作效率瓶颈的模块化解法:ComfyUI企业级工作流自动化实践 【免费下载链接】ComfyUI The most powerful and modular diffusion model GUI, api and backend with a graph/nodes interface. 项目地址: https://gitcode.com/GitHub_Trending/co/ComfyUI …...

OpenArm开源机械臂终极指南:从零开始构建你的7自由度人形手臂

OpenArm开源机械臂终极指南:从零开始构建你的7自由度人形手臂 【免费下载链接】openarm A fully open-source humanoid arm for physical AI research and deployment in contact-rich environments. 项目地址: https://gitcode.com/GitHub_Trending/op/openarm …...

高性能自动化网页信息提取工具实战指南:大规模目标扫描与安全检测技术方案

高性能自动化网页信息提取工具实战指南:大规模目标扫描与安全检测技术方案 【免费下载链接】URLFinder 一款快速、全面、易用的页面信息提取工具,可快速发现和提取页面中的JS、URL和敏感信息。 项目地址: https://gitcode.com/gh_mirrors/ur/URLFinder…...

)

别再踩坑了!手把手教你解决RPM安装时的‘事务锁定’报错(附spec文件编写避坑指南)

RPM事务锁定的深度解析与实战避坑指南 在Linux系统管理中,RPM包管理器的"事务锁定"错误堪称开发者和管理员的噩梦。当你精心编写的spec文件在关键时刻抛出cant create transaction lock错误时,那种挫败感足以让任何技术专家抓狂。本文将带你深…...

LeetCode 前K个高频元素题解

LeetCode 前K个高频元素题解 题目描述 给定一个数组,找到前 k 个高频元素。 示例: 输入:nums [1,1,1,2,2,3], k 2输出:[1,2] 解题思路 方法:堆 思路: 使用哈希表统计每个元素出现的次数。使用最小堆维护前…...