windows 11 配置 kafka 使用SASL SCRAM-SHA-256 认证

1. 下载安装apache-zookeeper-3.9.2

配置 \conf\zoo.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=D:/server/data/zookeeper

dataLogDir=D:/server/data/zookeeperLog

# admin管理端端口

#admin.serverPort=8887# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# https://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1## Metrics Providers

#

# https://prometheus.io Metrics Exporter

#metricsProvider.className=org.apache.zookeeper.metrics.prometheus.PrometheusMetricsProvider

#metricsProvider.httpHost=0.0.0.0

#metricsProvider.httpPort=7000

#metricsProvider.exportJvmInfo=true2. 启动 zkServer.cmd

3. 下载并重命名 kafka , kafka2.12.3.8.0

4. 编辑配置 \config\server.properties 以使用 SCRAM-SHA-256

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.#

# This configuration file is intended for use in ZK-based mode, where Apache ZooKeeper is required.

# See kafka.server.KafkaConfig for additional details and defaults

############################## Server Basics ############################## The id of the broker. This must be set to a unique integer for each broker.

broker.id=0############################# Socket Server Settings ############################## The address the socket server listens on. If not configured, the host name will be equal to the value of

# java.net.InetAddress.getCanonicalHostName(), with PLAINTEXT listener name, and port 9092.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

#listeners=PLAINTEXT://:9092

# 启用 SASL 机制

sasl.enabled.mechanisms=SCRAM-SHA-256# 配置 SASL 认证方式

sasl.mechanism.inter.broker.protocol=SCRAM-SHA-256

security.inter.broker.protocol=SASL_PLAINTEXT# 启用用户认证的 Kafka 认证控制

allow.everyone.if.no.acl.found=false

# 配置 Kafka 用户的 JAAS 配置

listener.name.sasl_plaintext.scram-sha-256.sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required \username="kafka" \password="kafkaAdmin#20240304";# 如果使用 SSL, 配置 SSL 文件路径(可选)

# ssl.keystore.location=/path/to/keystore.jks

# ssl.keystore.password=your_keystore_password

# ssl.key.password=your_key_password# 设置Kafka 端口

listeners=SASL_PLAINTEXT://127.0.0.1:9092

advertised.listeners=SASL_PLAINTEXT://127.0.0.1:9092# Listener name, hostname and port the broker will advertise to clients.

# If not set, it uses the value for "listeners".

#advertised.listeners=PLAINTEXT://your.host.name:9092# Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details

#listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL# The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=3# The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=8# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600############################# Log Basics ############################## A comma separated list of directories under which to store log files

# 设置Kafka日志

log.dirs=D:/server/data/kafka-logs# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1############################# Internal Topic Settings #############################

# The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"

# For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1############################# Log Flush Policy ############################## Messages are immediately written to the filesystem but by default we only fsync() to sync

# the OS cache lazily. The following configurations control the flush of data to disk.

# There are a few important trade-offs here:

# 1. Durability: Unflushed data may be lost if you are not using replication.

# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.

# The settings below allow one to configure the flush policy to flush data after a period of time or

# every N messages (or both). This can be done globally and overridden on a per-topic basis.# The number of messages to accept before forcing a flush of data to disk

#log.flush.interval.messages=10000# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000############################# Log Retention Policy ############################## The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168# A size-based retention policy for logs. Segments are pruned from the log unless the remaining

# segments drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824# The maximum size of a log segment file. When this size is reached a new log segment will be created.

#log.segment.bytes=1073741824# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000############################# Zookeeper ############################## Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

#设置ZK地址

zookeeper.connect=localhost:2181# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000############################# Group Coordinator Settings ############################## The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

配置 JAAS 文件 \kafka2.12.3.8.0\config\kafka_server_jaas.conf

KafkaServer {org.apache.kafka.common.security.scram.ScramLoginModule requiredusername="kafka"password="kafkaAdmin#20240304";

};Client {org.apache.kafka.common.security.scram.ScramLoginModule requiredusername="kafka"password="kafkaAdmin#20240304";

};设置环境变量 (optional)

set KAFKA_OPTS=-Djava.security.auth.login.config=D:/server/kafka2.12.3.8.0/config/kafka_server_jaas.conf

为 kafka 用户创建 SCRAM-SHA-256 凭据

\kafka2.12.3.8.0\bin\windows\kafka-configs --zookeeper localhost:2181 --alter --add-config SCRAM-SHA-256=[iterations=4096,password=kafkaAdmin#20240304],SCRAM-SHA-512=[password=kafkaAdmin#20240304] --entity-type users --entity-name kafka

配置 Kafka 客户端 \kafka2.12.3.8.0\config\client.properties

security.protocol=SASL_PLAINTEXT

sasl.mechanism=SCRAM-SHA-256

sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required \

username="kafka" \

password="kafkaAdmin#20240304";

5. 启动kafka kafka-server-start.bat ..\..\config\server.properties

6. 测试配置

新建主题

kafka-topics --create --topic test-topic --bootstrap-server localhost:9092 --partitions 1 --replication-factor 1 --command-config ../../config/client.properties

新建生产者 并输入数据

kafka-console-producer --topic test-topic --bootstrap-server localhost:9092 --producer.config ../../config/client.properties

新建消费者以显示生产者数据

kafka-console-consumer --topic test-topic --bootstrap-server localhost:9092 --from-beginning --consumer.config ../../config/client.properties

测试成功

7. 安装配置kafka UI 消息查看工具

下载git 代码打包生产 kafdrop-4.0.3-SNAPSHOT.jar (git 跳过 test), 路径D:\soft\kafka

配置UI客户端连接文件 kafka.properties

security.protocol=SASL_PLAINTEXT

sasl.mechanism=SCRAM-SHA-256

sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="kafka" password="kafkaAdmin#20240304";命令行启动UI

java --add-opens=java.base/sun.nio.ch=ALL-UNNAMED -jar kafdrop-4.0.3-SNAPSHOT.jar --kafka.brokerConnect=127.0.0.1:9092 --kafka.properties=kafka.properties

或者指定控制台端口

java --add-opens=java.base/sun.nio.ch=ALL-UNNAMED -jar kafdrop-4.0.3-SNAPSHOT.jar --kafka.brokerConnect=localhost:9092 --server.port=9999 --management.server.port=9999 --kafka.properties=kafka.properties

登录控制台查看 URL: http://localhost:9000/ 可查看先前 topic: test-topic

windows11 WSL 配置后只能WSL下使用,主机 SCRAM-SHA-256 配置访问不成功,故放弃.

看来WSL只能简单使用一些工具,多安全网络相关配置不适用

相关文章:

windows 11 配置 kafka 使用SASL SCRAM-SHA-256 认证

1. 下载安装apache-zookeeper-3.9.2 配置 \conf\zoo.cfg # The number of milliseconds of each tick tickTime2000 # The number of ticks that the initial # synchronization phase can take initLimit10 # The number of ticks that can pass between # sending a requ…...

Elasticsearch —— ES 环境搭建、概念、基本操作、文档操作、SpringBoot继承ES

文章中会用到的文件,如果官网下不了可以在这下 链接: https://pan.baidu.com/s/1SeRdqLo0E0CmaVJdoZs_nQ?pwdxr76 提取码: xr76 一、 ES 环境搭建 注:环境搭建过程中的命令窗口不能关闭,关闭了服务就会关闭(除了修改设置后重启的…...

ElSelect 组件的 onChange 和 onInput 事件的区别

偶然遇到一个问题,在 ElSelect 组件中设置 filterable 属性后,监测不到复制粘贴的内容,也就意味着不能调用接口,下拉框内容为空。 简要代码如下: <ElSelectstyle"width: 256px"multiplev-model{siteIdL…...

加密与数据提取:保护隐私的新途径

加密与数据提取:保护隐私的新途径 在数字化时代,数据已成为驱动社会进步和经济发展的关键要素。然而,随着数据量的爆炸性增长,个人隐私保护成为了一个亟待解决的问题。如何在利用数据价值的同时,确保个人隐私不被侵犯…...

波澜壮阔的一生」2024年11月1日)

博客摘录「 宋宝华:Linux文件读写(BIO)波澜壮阔的一生」2024年11月1日

同时内核会给第2页标识一个PageReadahead标记,意思就是如果app接着读第2页,就可以预判app在做顺序读,这样我们在app读第2页的时候,内核可以进一步异步预读。 每个bio对应的硬盘里面一块连续的位置,每一块硬盘里面连续…...

使用华为云数字人可以做什么

在数字化和智能化快速发展的今天,企业面临着如何提升客户体验、优化运营效率的挑战。华为云数字人作为一种创新的智能交互解决方案,为企业提供了全新的可能性,助力企业在各个领域实现智能化升级。 提升客户服务体验 华为云数字人能够模拟真…...

leetcode刷题记录——(十六)349. 两个数组的交集

(一)问题描述 . - 力扣(LeetCode). - 备战技术面试?力扣提供海量技术面试资源,帮助你高效提升编程技能,轻松拿下世界 IT 名企 Dream Offer。https://leetcode.cn/problems/intersection-of-two-arrays/ …...

vue3实现规则编辑器

组件用于创建和编辑复杂的条件规则,支持添加、删除条件和子条件,以及选择不同的条件类型。 可实现json数据和页面显示的转换。 代码实现 : index.vue: <template><div class"allany-container"><div class"co…...

【快速上手】pyspark 集群环境下的搭建(Standalone模式)

目录 前言 : 一、spark运行的五种模式 二、 安装步骤 安装前准备 1.第一步:安装python 2.第二步:在bigdata01上安装spark 3.第三步:同步bigdata01中的spark到bigdata02和03上 三、集群启动/关闭 四、打开监控界面验证 前…...

中文NLP地址要素解析【阿里云:天池比赛】

比赛地址:中文NLP地址要素解析 https://tianchi.aliyun.com/notebook/467867?spma2c22.12281976.0.0.654b265fTnW3lu长期赛: 分数:87.7271 排名:长期赛:56(本次)/6990(团体或个人)方案…...

使用AddressSanitizer内存检测

修改cmakelist.txt,在project(xxxx)后面追加: option(MEM_CHECK "memory check with AddressSanitizer" OFF) if(MEM_CHECK)set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} -fsanitizeaddress")set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS…...

11月1日星期五今日早报简报微语报早读

11月1日星期五,农历十月初一,早报#微语早读。 1、六大行今日起实施存量房贷利率新机制。 2、谷歌被俄罗斯罚款35位数,罚款远超全球GDP。 3、山西吕梁:女性35岁前登记结婚,给予1500元奖励。 4、我国人均每日上网时间…...

实用篇:Postman历史版本下载

postman历史版本下载步骤 1.官方历史版本发布信息 2.点进去1中的链接,往下滑动;选择你想要的版本 例如下载v11.18版本 3.根据操作系统选择 mac:mac系统postman下载 window:window系统postman下载 4.在old version里找到对应版本下载即可 先点击download 再点击free downlo…...

微服务实战系列之玩转Docker(十七)

导览 前言Q:如何实现etcd数据的可视化管理一、创建etcd集群1. 节点定义2. 集群成员2.1 docker ps2.2 docker exec2.3 etcdctl member list 二、发布数据1. 添加数据2. 数据共享 三、可视化管理1. ETCD Keeper入门1.1 简介1.2 安装1.2.1 定义compose.yml1.2.2 启动ke…...

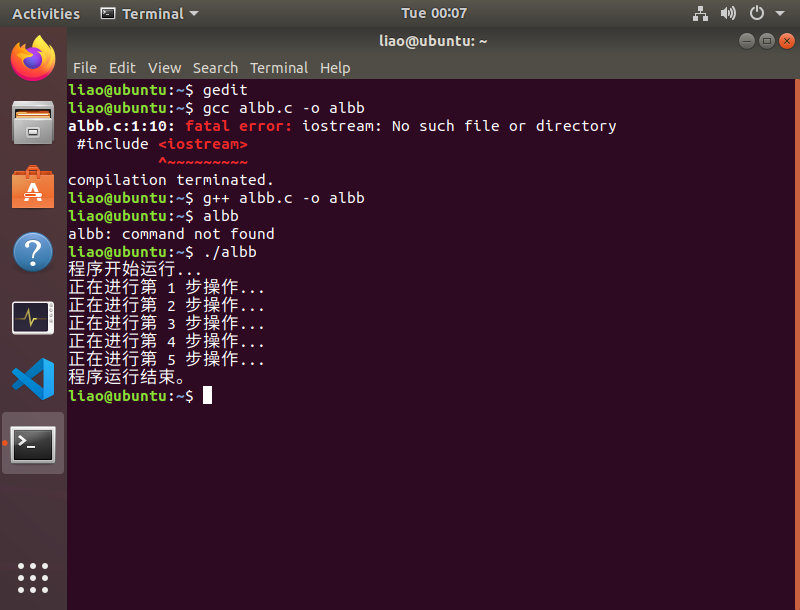

操作系统-实验报告单(1)

目录 1 实验目标 2 实验工具 3 实验内容、实验步骤及实验结果 一、安装虚拟机及Ubuntu 5、*存在虚拟机不能安装的问题 二、Ubuntu基本操作 1、桌面操作 2、终端命令行操作 三、在Ubuntu下运行C程序 3、*Ubuntu中编写一个Hello.c的主要程序 4 实验总结 实 验 报 告…...

rom定制系列------小米8青春版定制安卓14批量线刷固件 原生系统

💝💝💝小米8青春版。机型代码platina。官方最终版为 12.5.1安卓10的版本。客户需要安卓14的固件以便使用他们的软件。根据测试,原生pixeExpe固件适配兼容性较好。为方便客户批量进行刷写。修改固件为可fast批量刷写。整合底层分区…...

CATIA许可证常见问题解答

在使用CATIA软件的过程中,许可证问题常常是用户关心的焦点。为了帮助大家更好地理解和解决这些问题,我们整理了一份CATIA许可证常见问题解答,希望能为您提供便捷的参考。 问题一:如何激活CATIA许可证? 解答:…...

PySpark Standalone 集群部署教程

目录 1. 环境准备 1.1 配置免密登录 2. 下载并配置Spark 3. 配置Spark集群 3.1 配置spark-env.sh 3.2 配置spark-defaults.conf 3.3 设置Master和Worker节点 3.4 设配置log4j.properties 3.5 同步到所有Worker节点 4. 启动Spark Standalone集群 4.1 启动Master节点 …...

【源码+文档】基于SpringBoot+Vue旅游网站系统【提供源码+答辩PPT+参考文档+项目部署】

作者简介:✌CSDN新星计划导师、Java领域优质创作者、掘金/华为云/阿里云/InfoQ等平台优质作者、专注于Java技术领域和学生毕业项目实战,高校老师/讲师/同行前辈交流。✌ 主要内容:🌟Java项目、Python项目、前端项目、PHP、ASP.NET、人工智能…...

9.排队模型-M/M/1

1.排队模型 在Excel中建立排队模型可以帮助分析系统中的客户流动和服务效率。以下是如何构建简单排队模型的步骤: 1.确定模型参数 到达率(λ):客户到达系统的平均速率(例如每小时到达的客户数)。服务率&…...

)

动手实验:在QEMU上模拟调试ATF安全启动全流程(含常见错误排查)

在QEMU虚拟环境中实战调试ATF安全启动全流程指南 1. 实验环境搭建与工具链配置 构建ATF调试环境需要精心准备工具链和依赖组件。我们推荐使用Ubuntu 20.04 LTS作为基础系统,这是目前对ARM虚拟化支持最完善的Linux发行版之一。以下是关键组件的版本要求: …...

二代壳脱壳新思路:Hook CreateFromRawDexFile捕获原始DEX

1. 为什么“二代壳”让传统脱壳方法集体失效?——从Dex加载链路说起你有没有试过用经典的dumpdex脚本在Android 10设备上跑,结果dump出来的dex文件一打开就是满屏java.lang.ClassNotFoundException?或者用dex2oat反编译出的odex,反…...

【+1】)

Unity AI 编程(VS Code + Cline + DeepSeek-V4)【+1】

Unity AI 编程操作流演示(VS Code + Cline + DeepSeek-V4-Pro)目标:通过 AI 直接在 Unity 项目内进行代码修改与功能迭代,实现“让 AI 进入工程并完成修改”,而不是仅输出代码片段供手动复制。 Unity AI 编程操作流: 步骤一:在 Assets 目录下创建名为 “C# Scripts” 的…...

:7类发音错误+5个未公开参数修复方案)

ElevenLabs印地文语音质量崩塌真相(印地语TTS失效深度溯源):7类发音错误+5个未公开参数修复方案

更多请点击: https://intelliparadigm.com 第一章:ElevenLabs印地文语音质量崩塌的全局现象与影响评估 近期,ElevenLabs平台在印地语(Hindi)TTS合成任务中出现系统性语音质量退化,表现为音素错读、韵律断裂…...

0 基础转码学 AI:Java+Python 双语言入门,3 个月可落地实战项目

如今 AI 应用开发岗位需求持续上涨,不少零基础上班族、应届生、跨行业人群都想走转码路线入局技术行业。但很多人纠结不知道先学哪门语言,也不清楚零基础该以怎样的节奏入门,更担心学习周期太长,迟迟做不出能用于求职的实战项目。 结合当下企业真实用人需求来看,单纯只学…...

中年以后,真正有效的抗衰老运动,其实就这 4 种

过了 30 岁,肌肉每年流失 1%-2%,基础代谢下降,精力大不如前——这不是错觉,是生理规律。 但运动的选择,决定了你是「老得快」还是「逆生长」。分享 4 种被科学验证的抗衰老运动,中年人越早开始越好。 1️⃣…...

配置Hermes Agent使用自定义Taotoken作为模型供应商的步骤

🚀 告别海外账号与网络限制!稳定直连全球优质大模型,限时半价接入中。 👉 点击领取海量免费额度 配置Hermes Agent使用自定义Taotoken作为模型供应商的步骤 1. 准备工作:获取必要的凭证 在开始配置之前,你…...

Adobe-GenP 3.0终极指南:三步免费解锁Adobe全家桶的完整教程

Adobe-GenP 3.0终极指南:三步免费解锁Adobe全家桶的完整教程 【免费下载链接】Adobe-GenP Adobe CC 2019/2020/2021/2022/2023 GenP Universal Patch 3.0 项目地址: https://gitcode.com/gh_mirrors/ad/Adobe-GenP 想要免费使用Adobe Creative Cloud专业软件…...

机器人仿真终极指南:使用WPR系列从零构建ROS虚拟测试环境 [特殊字符]

机器人仿真终极指南:使用WPR系列从零构建ROS虚拟测试环境 🚀 【免费下载链接】wpr_simulation 项目地址: https://gitcode.com/gh_mirrors/wp/wpr_simulation 在机器人开发领域,硬件成本高昂、测试周期漫长是每个开发者面临的现实挑战…...

嵌入式异构多处理器评估板:从核心原理到工业应用实战

1. 项目概述:当“异构”不再是PPT上的概念在嵌入式开发领域,尤其是边缘计算、工业控制和智能物联网设备中,我们正面临一个越来越普遍的困境:单一架构的处理器越来越难以满足复杂且矛盾的系统需求。一方面,我们需要强大…...