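Deploying K8S 1.29.2 from Binaries on Ubuntu

Environment Notes

This setup is not highly available: a single Master plus one Node.

- Freshly installed Ubuntu 22.04

- k8s 1.29.2

- To use this guide, globally replace the IP 192.168.44.141 with your own Master IP; everything else can be copied and pasted as-is.

- You must manually configure a static DNS server (I used 8.8.8.8); otherwise CoreDNS will fail to start.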
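If you save the commands from this guide into a local file, you can do the IP substitution mentioned above with sed instead of by hand. The sketch below is minimal. It assumes the commands were saved to a text file; the helper name `replace_master_ip` is hypothetical. Each dot is bracketed so that it matches literally:

```shell
#!/usr/bin/env bash
# Sketch: swap the guide's Master IP for your own in a saved copy of the commands.
# OLD_IP is the IP used throughout this guide; replace_master_ip is a hypothetical name.
OLD_IP="192.168.44.141"

replace_master_ip() {
  local new_ip=$1 file=$2
  # Turn every "." into "[.]" so sed matches a literal dot, not "any character".
  local pattern=${OLD_IP//./[.]}
  sed -i "s/${pattern}/${new_ip}/g" "$file"
}
```

For example: `replace_master_ip 192.168.1.10 guide.txt`, where guide.txt is wherever you saved the commands.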

1 Basic Environment Preparation

1.1 Change the apt mirror

cat > /etc/apt/sources.list << "eof"

deb http://mirrors.aliyun.com/ubuntu/ jammy main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ jammy-security main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ jammy-updates main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ jammy-proposed main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ jammy-backports main restricted universe multiverse

eof

apt-get update

apt-get -y install lrzsz net-tools gcc

apt-get -y install ipvsadm ipset sysstat conntrack

1.2 Disable swap

swapoff -a

# Disable swap permanently: comment out the swap line in /etc/fstab

vim /etc/fstab
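You can also comment out the swap entry non-interactively instead of editing /etc/fstab in vim. The sketch below is minimal and uses GNU sed. It assumes swap entries have `swap` as their third field, as the `/swap.img none swap sw 0 0` line on a typical Ubuntu 22.04 install does. The helper name is hypothetical:

```shell
#!/usr/bin/env bash
# Sketch: comment out active swap entries in an fstab-style file (GNU sed).
# Assumes swap lines have "swap" as their third field; comment_out_swap is hypothetical.
comment_out_swap() {
  local fstab="${1:-/etc/fstab}"
  # Skip lines that are already comments; prefix "# " to lines whose 3rd field is swap.
  sed -ri '/^[[:space:]]*#/!{/^[^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]]/s/^/# /;}' "$fstab"
}
```

Usage: `cp /etc/fstab /etc/fstab.bak && comment_out_swap /etc/fstab`.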

1.3 Other settings

# Disable the firewall

iptables -P FORWARD ACCEPT

/etc/init.d/ufw stop

ufw disable

# Pass bridged IPv4 traffic to the iptables chains

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward=1

vm.max_map_count=262144

EOF

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf

# Load the IPVS kernel modules (used by ipvsadm and kube-proxy's IPVS mode)

mkdir -p /etc/sysconfig/modules

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack
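On Ubuntu, nothing executes /etc/sysconfig/modules at boot because that directory is a Red Hat-style convention. The modules loaded above are therefore gone after a reboot. systemd-modules-load, which step 3.4.2 also relies on, reads one module name per line from /etc/modules-load.d/*.conf. The sketch below is minimal; the file name ipvs.conf and the helper name are assumptions:

```shell
#!/usr/bin/env bash
# Sketch: persist the IPVS modules for systemd-modules-load (one module per line).
# The directory is a parameter so the function can be tried on a scratch directory.
write_ipvs_modules_conf() {
  local dir="${1:-/etc/modules-load.d}"
  mkdir -p "$dir"
  printf '%s\n' ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack > "$dir/ipvs.conf"
}
```

Usage: `write_ipvs_modules_conf && systemctl restart systemd-modules-load.service`.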

# Adjust the Kubernetes network settings

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

# Apply sysctl params without reboot

sysctl --system

1.4 Configure ulimit

ulimit -SHn 65535

cat >> /etc/security/limits.conf <<EOF

* soft nofile 655360

* hard nofile 655360

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF
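A soft limit higher than the hard limit for the same item cannot take effect, because setrlimit rejects a soft value above the hard value. It is worth checking the file after appending to it. The small awk sketch below lists domain/item pairs in a limits.conf-style file whose soft value exceeds the hard one. The helper name `check_limits` is hypothetical, and `unlimited`/`infinity`/`-1` are treated as no limit:

```shell
#!/usr/bin/env bash
# Sketch: print "domain item" for every entry whose soft value exceeds its hard value.
check_limits() {
  awk '
    /^[[:space:]]*#/ || NF < 4 { next }          # skip comments and short lines
    {
      key = $1 " " $3                            # e.g. "* nofile"
      v = ($4 == "unlimited" || $4 == "infinity") ? -1 : $4 + 0
      if ($2 == "soft") soft[key] = v
      else if ($2 == "hard") hard[key] = v
    }
    END {
      for (k in soft)                            # -1 means "no limit"
        if ((k in hard) && hard[k] != -1 && (soft[k] == -1 || soft[k] > hard[k]))
          print k
    }
  ' "${1:-/etc/security/limits.conf}"
}
```

Usage: `check_limits` (no output means every soft value is within its hard value).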

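2 Preparing the CA Certificates

Note: all commands in this part are run in /data/k8s-work.

2.1 Install the cfssl tools

mkdir -p /data/k8s-work # keep all downloaded software in one place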

cd /data/k8s-work

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

chmod +x cfssl*

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

2.2 Generate the CA files

cat > ca-csr.json <<"EOF"

{"CN": "kubernetes","key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "Jcrose","OU": "CN"}],"ca": {"expiry": "87600h"}

}

EOF

2.2.1 Create the CA certificate

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2.2.2 Configure the CA signing policy

cfssl print-defaults config > ca-config.json

cat > ca-config.json <<"EOF"

{"signing": {"default": {"expiry": "87600h"},"profiles": {"kubernetes": {"usages": ["signing","key encipherment","server auth","client auth"],"expiry": "87600h"}}}

}

EOF

2.3 Run a single-node etcd

2.3.1 etcd certificate files

Create the CSR file, etcd-csr.json:

cat > etcd-csr.json <<"EOF"

{"CN": "etcd","hosts": ["127.0.0.1","192.168.44.141"],"key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "Jcrose","OU": "CN"}]

}

EOF

Generate the etcd certificate:

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd
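Every CSR file in this guide follows the same template and differs only in CN, O, and hosts. Below is a sketch of a generator for that template. `make_csr` is a hypothetical helper, and OU is fixed to "CN" as in the etcd CSR. Values are inserted without JSON escaping, so it assumes plain names and IP addresses:

```shell
#!/usr/bin/env bash
# Sketch: make_csr CN O [HOST...] prints a cfssl CSR JSON document on stdout.
make_csr() {
  local cn=$1 o=$2 hosts="" h
  shift 2
  # Join the remaining arguments as a JSON string array: "a","b",...
  for h in "$@"; do
    hosts+="${hosts:+,}\"$h\""
  done
  printf '{"CN": "%s","hosts": [%s],"key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "%s","OU": "CN"}]}\n' \
    "$cn" "$hosts" "$o"
}
```

For example, `make_csr etcd Jcrose 127.0.0.1 192.168.44.141 > etcd-csr.json` reproduces the etcd CSR above on a single line.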

2.3.2 Deploy etcd

Download the binaries:

wget https://github.com/etcd-io/etcd/releases/download/v3.5.2/etcd-v3.5.2-linux-amd64.tar.gz

tar -xvf etcd-v3.5.2-linux-amd64.tar.gz

cp -p etcd-v3.5.2-linux-amd64/etcd* /usr/local/bin/

mkdir -p /etc/etcd/ssl

mkdir -p /var/lib/etcd/default.etcd

cd /data/k8s-work

cp ca*.pem /etc/etcd/ssl

cp etcd*.pem /etc/etcd/ssl

Create the configuration file:

cat > /etc/etcd/etcd.conf << "eof"

#[Member]

ETCD_NAME="default"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://127.0.0.1:2380"

ETCD_LISTEN_CLIENT_URLS="https://127.0.0.1:2379"

eof

Create the systemd unit:

cat > /etc/systemd/system/etcd.service << "EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-cert-file=/etc/etcd/ssl/etcd.pem \
  --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
  --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --advertise-client-urls=https://127.0.0.1:2379 \
  --peer-client-cert-auth \
  --client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable --now etcd.service

systemctl status etcd

Check the service status:

/usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://127.0.0.1:2379 endpoint health

Example output:

+------------------------+--------+------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+------------------------+--------+------------+-------+

| https://127.0.0.1:2379 | true | 4.006819ms | |

+------------------------+--------+------------+-------+
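etcd and the other services may need a few seconds after `systemctl enable --now` before they answer. A small retry wrapper helps when these steps are scripted. The sketch below is minimal; `retry` is a hypothetical helper and is not part of etcd or systemd:

```shell
#!/usr/bin/env bash
# Sketch: retry ATTEMPTS DELAY COMMAND [ARGS...]
# Runs COMMAND up to ATTEMPTS times, sleeping DELAY seconds between failed tries.
# Returns 0 on the first success, 1 if every attempt failed.
retry() {
  local attempts=$1 delay=$2 i
  shift 2
  for ((i = 1; i <= attempts; i++)); do
    "$@" && return 0
    # No sleep after the final failed attempt.
    if ((i < attempts)); then
      sleep "$delay"
    fi
  done
  return 1
}
```

Usage: `retry 10 3 /usr/local/bin/etcdctl --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://127.0.0.1:2379 endpoint health`.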

3 Preparing the Kubernetes Components

cd /data/k8s-work

wget https://cdn.dl.k8s.io/release/v1.29.2/kubernetes-server-linux-amd64.tar.gz

tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

3.1 kube-apiserver

3.1.1 Certificate files

mkdir -p /etc/kubernetes/

mkdir -p /etc/kubernetes/ssl

mkdir -p /var/log/kubernetes

cd /data/k8s-work

cat > kube-apiserver-csr.json << "EOF"

{

"CN": "kubernetes","hosts": ["127.0.0.1","192.168.44.141","10.96.0.1","kubernetes","kubernetes.default","kubernetes.default.svc","kubernetes.default.svc.cluster","kubernetes.default.svc.cluster.local"],"key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "Jcrose","OU": "CN"}]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

# Generate the token file

cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF
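Each line of token.csv has the form `token,user,uid,"group"`, and section 3.5.1 reads the token back as the first comma-separated field. The minimal sketch below generates the same line as above and extracts the token again. The helper names are hypothetical. The 32-hex-digit check only reflects how this guide builds the token (16 random bytes printed by od); kube-apiserver does not require that format:

```shell
#!/usr/bin/env bash
# Sketch: build a token.csv line the same way as above (16 random bytes as hex).
gen_token_line() {
  local token
  token=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token"
}

# Read the token back, as section 3.5.1 does with awk -F ",".
token_of() {
  awk -F ',' '{print $1}' "$1"
}
```

Usage: `gen_token_line > token.csv && token_of token.csv`.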


3.1.2 Copy the certificate files

cp ca.pem ca-key.pem kube-apiserver.pem kube-apiserver-key.pem token.csv /etc/kubernetes/ssl/

3.1.3 Enable the API aggregation layer

cat > kube-metrics-csr.json << "eof"

{"CN": "aggregator","hosts": [],"key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "system:masters","OU": "CN"}]

}

eof

cfssl gencert -profile=kubernetes -ca=/etc/kubernetes/ssl/ca.pem -ca-key=/etc/kubernetes/ssl/ca-key.pem -config=ca-config.json kube-metrics-csr.json | cfssljson -bare kube-metrics

cp kube-metrics*.pem /etc/kubernetes/ssl/


3.1.4 Create the kube-apiserver configuration file

cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--anonymous-auth=true \
  --v=2 \
  --allow-privileged=true \
  --bind-address=0.0.0.0 \
  --secure-port=6443 \
  --advertise-address=192.168.44.141 \
  --service-cluster-ip-range=10.96.0.0/12,fd00:1111::/112 \
  --service-node-port-range=80-32767 \
  --etcd-servers=https://127.0.0.1:2379 \
  --etcd-cafile=/etc/etcd/ssl/ca.pem \
  --etcd-certfile=/etc/etcd/ssl/etcd.pem \
  --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
  --client-ca-file=/etc/kubernetes/ssl/ca.pem \
  --tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
  --tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
  --proxy-client-cert-file=/etc/kubernetes/ssl/kube-metrics.pem \
  --proxy-client-key-file=/etc/kubernetes/ssl/kube-metrics-key.pem \
  --kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \
  --kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \
  --service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-issuer=https://kubernetes.default.svc.cluster.local \
  --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
  --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
  --authorization-mode=Node,RBAC \
  --runtime-config=api/all=true \
  --enable-bootstrap-token-auth \
  --token-auth-file=/etc/kubernetes/ssl/token.csv \
  --requestheader-allowed-names=aggregator \
  --requestheader-group-headers=X-Remote-Group \
  --requestheader-extra-headers-prefix=X-Remote-Extra- \
  --requestheader-username-headers=X-Remote-User \
  --enable-aggregator-routing=true"

EOF
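The kube-apiserver certificate in 3.1.1 lists 10.96.0.1 because the built-in `kubernetes` Service is assigned the first address of `--service-cluster-ip-range`. The clusterDNS address 10.96.0.2 in kubelet.json must also fall inside that range. Below is a pure-bash sketch for checking such values. It handles IPv4 only, so pass just the IPv4 part of the dual-stack ranges; all helper names are hypothetical:

```shell
#!/usr/bin/env bash
# Sketch (IPv4 only): check addresses against a CIDR in pure bash.
ip2int() {
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

int2ip() {
  local n=$1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Netmask for a prefix length, e.g. 12 -> 0xFFF00000.
prefix_mask() {
  echo $(( (0xFFFFFFFF << (32 - $1)) & 0xFFFFFFFF ))
}

# first_service_ip CIDR: network address + 1 (the IP of the "kubernetes" Service).
first_service_ip() {
  local net=${1%/*} mask
  mask=$(prefix_mask "${1#*/}")
  int2ip $(( ($(ip2int "$net") & mask) + 1 ))
}

# in_cidr IP CIDR: exit status 0 if IP lies inside CIDR.
in_cidr() {
  local net=${2%/*} mask
  mask=$(prefix_mask "${2#*/}")
  (( ($(ip2int "$1") & mask) == ($(ip2int "$net") & mask) ))
}
```

For example, `first_service_ip 10.96.0.0/12` prints 10.96.0.1, and `in_cidr 10.96.0.2 10.96.0.0/12` succeeds.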

3.1.5 Create the kube-apiserver systemd unit

cat > /etc/systemd/system/kube-apiserver.service << "EOF"

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

3.1.6 Start kube-apiserver

cp ca*.pem /etc/kubernetes/ssl/

cp kube-apiserver*.pem /etc/kubernetes/ssl/

cp token.csv /etc/kubernetes/

systemctl daemon-reload

systemctl enable --now kube-apiserver

systemctl status kube-apiserver

# Test

curl --insecure https://192.168.44.141:6443/

3.2 kubectl

3.2.1 Certificate files

cat > admin-csr.json << "EOF"

{"CN": "admin","hosts": [],"key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "system:masters", "OU": "system"}]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

cp admin*.pem /etc/kubernetes/ssl/

3.2.2 Create the kubeconfig and RBAC binding

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.44.141:6443 --kubeconfig=kube.config

kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

kubectl config use-context kubernetes --kubeconfig=kube.config

mkdir -p ~/.kube

cp kube.config ~/.kube/config

# With the kubectl config in place, bind system:kubelet-api-admin to the user "kubernetes" (the CN of the apiserver's kubelet client certificate)

kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes --kubeconfig=/root/.kube/config

3.2 kube-controller-manager

3.2.1 Certificate files

cat > kube-controller-manager-csr.json << "EOF"

{"CN": "system:kube-controller-manager","key": {"algo": "rsa","size": 2048},"hosts": ["127.0.0.1","192.168.44.141"],"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "system:kube-controller-manager","OU": "system"}]

}

EOF

3.2.2 Generate the certificate and kubeconfig

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.44.141:6443 --kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

cp kube-controller-manager*.pem /etc/kubernetes/ssl/

cp kube-controller-manager.kubeconfig /etc/kubernetes/
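The same four `kubectl config` calls are repeated for kube-controller-manager, kube-scheduler, and kube-proxy. Below is a minimal sketch of a wrapper that generates them. `make_kubeconfig` and the `APISERVER` variable are hypothetical names; setting `KUBECTL=echo` prints the commands instead of running them. Here the context name simply equals the user name; kube-proxy's `kube-proxy@kubernetes` naming works equally well:

```shell
#!/usr/bin/env bash
# Sketch: make_kubeconfig USER CERT_PREFIX OUTFILE
# Expects ca.pem, CERT_PREFIX.pem and CERT_PREFIX-key.pem in the current directory.
APISERVER="${APISERVER:-https://192.168.44.141:6443}"

make_kubeconfig() {
  local user=$1 cert_prefix=$2 out=$3
  local k="${KUBECTL:-kubectl}"   # override with KUBECTL=echo for a dry run
  "$k" config set-cluster kubernetes --certificate-authority=ca.pem \
    --embed-certs=true --server="$APISERVER" --kubeconfig="$out"
  "$k" config set-credentials "$user" --client-certificate="$cert_prefix.pem" \
    --client-key="$cert_prefix-key.pem" --embed-certs=true --kubeconfig="$out"
  "$k" config set-context "$user" --cluster=kubernetes --user="$user" --kubeconfig="$out"
  "$k" config use-context "$user" --kubeconfig="$out"
}
```

Usage: `make_kubeconfig system:kube-scheduler kube-scheduler kube-scheduler.kubeconfig`.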

3.2.3 Create the kube-controller-manager configuration file

cat > kube-controller-manager.conf << "EOF"

KUBE_CONTROLLER_MANAGER_OPTS="--v=2 \
  --bind-address=127.0.0.1 \
  --root-ca-file=/etc/kubernetes/ssl/ca.pem \
  --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \
  --cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \
  --leader-elect=true \
  --use-service-account-credentials=true \
  --node-monitor-grace-period=40s \
  --node-monitor-period=5s \
  --controllers=*,bootstrapsigner,tokencleaner \
  --allocate-node-cidrs=true \
  --service-cluster-ip-range=10.96.0.0/12,fd00:1111::/112 \
  --cluster-cidr=172.16.0.0/12,fc00:2222::/112 \
  --node-cidr-mask-size-ipv4=24 \
  --node-cidr-mask-size-ipv6=120"

EOF

3.2.4 Create the systemd unit

cat > kube-controller-manager.service << "EOF"

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

3.2.5 Copy the configuration files

cp kube-controller-manager*.pem /etc/kubernetes/ssl/

cp kube-controller-manager.kubeconfig /etc/kubernetes/

cp kube-controller-manager.conf /etc/kubernetes/

cp kube-controller-manager.service /usr/lib/systemd/system/

3.2.6 Start the service

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

3.3 kube-scheduler

3.3.1 Certificate files

cat > kube-scheduler-csr.json << "EOF"

{"CN": "system:kube-scheduler","hosts": ["127.0.0.1","192.168.44.141"],"key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "system:kube-scheduler","OU": "system"}]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.44.141:6443 --kubeconfig=kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

cp kube-scheduler*.pem /etc/kubernetes/ssl/

cp kube-scheduler.kubeconfig /etc/kubernetes/

3.3.2 Create the service configuration file

cat > kube-scheduler.conf << "EOF"

KUBE_SCHEDULER_OPTS="--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig --leader-elect=true --v=2"

EOF

3.3.3 Create the systemd unit

cat > kube-scheduler.service << "EOF"

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

3.3.4 Copy the service files

cp kube-scheduler*.pem /etc/kubernetes/ssl/

cp kube-scheduler.kubeconfig /etc/kubernetes/

cp kube-scheduler.conf /etc/kubernetes/

cp kube-scheduler.service /usr/lib/systemd/system/

3.3.5 Start the service

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

3.4 Containerd

3.4.1 Download the containerd packages

wget https://ghproxy.com/https://github.com/containerd/containerd/releases/download/v1.6.10/cri-containerd-cni-1.6.10-linux-amd64.tar.gz

wget https://ghproxy.com/https://github.com/containernetworking/plugins/releases/download/v1.1.1/cni-plugins-linux-amd64-v1.1.1.tgz

wget https://ghproxy.com/https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.26.0/crictl-v1.26.0-linux-amd64.tar.gz

# Create the directories required by the CNI plugins

mkdir -p /etc/cni/net.d /opt/cni/bin

# Extract the CNI plugin binaries

tar xf cni-plugins-linux-amd64-v*.tgz -C /opt/cni/bin/

# Extract the containerd bundle

tar -xzf cri-containerd-cni-*-linux-amd64.tar.gz -C /

# Create the systemd unit

cat > /etc/systemd/system/containerd.service <<EOF

[Unit]

Description=containerd container runtime

Documentation=https://containerd.io

After=network.target local-fs.target

[Service]

ExecStartPre=-/sbin/modprobe overlay

ExecStart=/usr/local/bin/containerd

Type=notify

Delegate=yes

KillMode=process

Restart=always

RestartSec=5

LimitNPROC=infinity

LimitCORE=infinity

LimitNOFILE=infinity

TasksMax=infinity

OOMScoreAdjust=-999

[Install]

WantedBy=multi-user.target

EOF

3.4.2 Configure the kernel modules required by containerd

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

# Load the modules

systemctl restart systemd-modules-load.service

3.4.3 Create the containerd configuration file

# Generate the default configuration file

mkdir -p /etc/containerd

containerd config default | tee /etc/containerd/config.toml

# Modify the containerd configuration file

sed -i "s#SystemdCgroup\ \=\ false#SystemdCgroup\ \=\ true#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep SystemdCgroup

sed -i "s#registry.k8s.io#registry.cn-hangzhou.aliyuncs.com/chenby#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep sandbox_image

sed -i "s#config_path\ \=\ \"\"#config_path\ \=\ \"/etc/containerd/certs.d\"#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep certs.d

mkdir /etc/containerd/certs.d/docker.io -pv

cat > /etc/containerd/certs.d/docker.io/hosts.toml << EOF

server = "https://docker.io"

[host."https://dockerpull.cn"]
  capabilities = ["pull", "resolve"]

EOF
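You can wrap the three sed edits above in a function and try them on a copy of config.toml before touching the real file. Below is a sketch with a hypothetical helper name. The patterns are the ones used above, written without the redundant backslash escapes:

```shell
#!/usr/bin/env bash
# Sketch: apply this guide's three containerd config edits to a given file.
patch_containerd_config() {
  local cfg="${1:-/etc/containerd/config.toml}"
  # Use the systemd cgroup driver (matches "cgroupDriver": "systemd" in kubelet.json).
  sed -i 's#SystemdCgroup = false#SystemdCgroup = true#g' "$cfg"
  # Pull images such as the sandbox (pause) image from the mirror registry.
  sed -i 's#registry.k8s.io#registry.cn-hangzhou.aliyuncs.com/chenby#g' "$cfg"
  # Read per-registry mirror settings from /etc/containerd/certs.d.
  sed -i 's#config_path = ""#config_path = "/etc/containerd/certs.d"#g' "$cfg"
}
```

Usage: `cp /etc/containerd/config.toml /tmp/config.toml && patch_containerd_config /tmp/config.toml && diff /etc/containerd/config.toml /tmp/config.toml`.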

3.4.4 Start the service and enable it at boot

systemctl daemon-reload

systemctl enable --now containerd

systemctl restart containerd

3.4.5 Point the crictl client at the container runtime

tar xf crictl-v*-linux-amd64.tar.gz -C /usr/bin/

# Generate the configuration file

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

# Test

systemctl restart containerd

crictl info

3.5 Kubelet

The kubelet is the agent through which kube-apiserver interacts with each node.

3.5.1 Create the configuration files

Create kubelet-bootstrap.kubeconfig:

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/ssl/token.csv)

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/ssl/ca.pem \
  --embed-certs=true --server=https://192.168.44.141:6443 \
  --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-credentials tls-bootstrap-token-user \
  --token=${BOOTSTRAP_TOKEN} \
  --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config set-context tls-bootstrap-token-user@kubernetes \
  --cluster=kubernetes \
  --user=tls-bootstrap-token-user \
  --kubeconfig=kubelet-bootstrap.kubeconfig

kubectl config use-context tls-bootstrap-token-user@kubernetes \
  --kubeconfig=kubelet-bootstrap.kubeconfig

cp kubelet-bootstrap.kubeconfig /etc/kubernetes

3.5.2 Create the kubelet.json file

cat > kubelet.json << "EOF"

{"kind": "KubeletConfiguration","apiVersion": "kubelet.config.k8s.io/v1beta1","authentication": {"x509": {"clientCAFile": "/etc/kubernetes/ssl/ca.pem"},"webhook": {"enabled": true,"cacheTTL": "2m0s"},"anonymous": {"enabled": false}},"authorization": {"mode": "Webhook","webhook": {"cacheAuthorizedTTL": "5m0s","cacheUnauthorizedTTL": "30s"}},"address": "192.168.44.141","port": 10250,"readOnlyPort": 10255,"cgroupDriver": "systemd", "hairpinMode": "promiscuous-bridge","serializeImagePulls": false,"clusterDomain": "cluster.local.","clusterDNS": ["10.96.0.2"]

}

EOF

# Sync the files to the cluster node

cp kubelet-bootstrap.kubeconfig /etc/kubernetes/

cp kubelet.json /etc/kubernetes/

3.5.3 Create the kubelet systemd unit

cat > kubelet.service << "EOF"

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \
  --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \
  --kubeconfig=/root/.kube/config \
  --config=/etc/kubernetes/kubelet.json \
  --container-runtime-endpoint=unix:///run/containerd/containerd.sock \
  --node-labels=node.kubernetes.io/node=

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

3.5.4 Copy the configuration files

cp kubelet-bootstrap.kubeconfig /etc/kubernetes/

cp kubelet.json /etc/kubernetes/

cp kubelet.service /usr/lib/systemd/system/

3.5.5 Start the service

mkdir -p /var/lib/kubelet

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

3.6 kube-proxy

3.6.1 Create the kube-proxy CSR file

cat > kube-proxy-csr.json << "EOF"

{"CN": "system:kube-proxy","key": {"algo": "rsa","size": 2048},"names": [{"C": "CN","ST": "Sichuan","L": "Chengdu","O": "Jcrose","OU": "CN"}]

}

EOF

3.6.2 Generate the certificate and kubeconfig

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.44.141:6443 --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=kube-proxy.pem \
  --client-key=kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context kube-proxy@kubernetes \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context kube-proxy@kubernetes --kubeconfig=kube-proxy.kubeconfig

3.6.3 Create the configuration file

cat > kube-proxy.yaml << EOF

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
  qps: 5

clusterCIDR: 172.16.0.0/12,fc00:2222::/112

configSyncPeriod: 15m0s

conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: ""

iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s

ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s

kind: KubeProxyConfiguration

metricsBindAddress: 127.0.0.1:10249

mode: "ipvs"

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

udpIdleTimeout: 250ms

EOF

3.6.4 Create the systemd unit

cat > kube-proxy.service << "EOF"

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

3.6.5 Move the configuration files into place

cp kube-proxy*.pem /etc/kubernetes/ssl/

cp kube-proxy.kubeconfig kube-proxy.yaml /etc/kubernetes/

cp kube-proxy.service /usr/lib/systemd/system/

mkdir -p /var/lib/kube-proxy

3.6.6 Start the service

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

4 Installing In-Cluster Services

4.1 Install kubens and Tab completion

Tab completion

apt install bash-completion

source <(kubectl completion bash)

echo 'source <(kubectl completion bash)' >> ~/.bashrc

source ~/.bashrc

Install jq and yq

wget https://mirror.ghproxy.com/https://github.com/mikefarah/yq/releases/latest/download/yq_linux_amd64 -O /usr/bin/yq && chmod +x /usr/bin/yq

wget https://mirror.ghproxy.com/https://github.com/stedolan/jq/releases/download/jq-1.6/jq-linux64 -O /usr/bin/jq && chmod +x /usr/bin/jq

kubens

wget https://mirror.ghproxy.com/https://github.com/ahmetb/kubectx/releases/download/v0.9.5/kubens

chmod +x kubens && mv kubens /usr/local/sbin

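4.2 Deploy the network plugin: Calico

wget https://mirror.ghproxy.com/https://raw.githubusercontent.com/projectcalico/calico/v3.25.0/manifests/calico.yaml

sed -i 's#192.168.0.0/16#172.16.0.0/12#' calico.yaml

The Calico IPv4 pool should fall within the --cluster-cidr (172.16.0.0/12) given to kube-controller-manager and the clusterCIDR in kube-proxy.yaml. In calico.yaml, the CALICO_IPV4POOL_CIDR entry is commented out by default. Uncomment its name and value lines as well, or the new value has no effect. Then apply the manifest:

kubectl apply -f calico.yaml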

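5 Joining a Node to the Cluster

On the Master node:

Add an entry to /etc/hosts:

192.168.44.142 k8s-master02

Set up passwordless SSH login:

ssh-keygen # just keep pressing Enter

ssh-copy-id root@192.168.44.142

Create the required directories: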

mkdir -p /etc/kubernetes/ssl

mkdir -p /root/.kube

5.1 On the Node: containerd

Repeat the steps in section 1 (Basic Environment Preparation) above.

5.1.1 Download the containerd packages

wget https://ghproxy.com/https://github.com/containerd/containerd/releases/download/v1.6.10/cri-containerd-cni-1.6.10-linux-amd64.tar.gz

wget https://ghproxy.com/https://github.com/containernetworking/plugins/releases/download/v1.1.1/cni-plugins-linux-amd64-v1.1.1.tgz

wget https://ghproxy.com/https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.26.0/crictl-v1.26.0-linux-amd64.tar.gz

# Create the directories required by the CNI plugins

mkdir -p /etc/cni/net.d /opt/cni/bin

# Extract the CNI plugin binaries

tar xf cni-plugins-linux-amd64-v*.tgz -C /opt/cni/bin/

# Extract the containerd bundle

tar -xzf cri-containerd-cni-*-linux-amd64.tar.gz -C /

# Create the systemd unit

cat > /etc/systemd/system/containerd.service <<EOF

[Unit]

Description=containerd container runtime

Documentation=https://containerd.io

After=network.target local-fs.target

[Service]

ExecStartPre=-/sbin/modprobe overlay

ExecStart=/usr/local/bin/containerd

Type=notify

Delegate=yes

KillMode=process

Restart=always

RestartSec=5

LimitNPROC=infinity

LimitCORE=infinity

LimitNOFILE=infinity

TasksMax=infinity

OOMScoreAdjust=-999

[Install]

WantedBy=multi-user.target

EOF

5.1.2 Configure the kernel modules required by containerd

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

# Load the modules

systemctl restart systemd-modules-load.service

5.1.3 Create the containerd configuration file

# Generate the default configuration file

mkdir -p /etc/containerd

containerd config default | tee /etc/containerd/config.toml

# Modify the containerd configuration file

sed -i "s#SystemdCgroup\ \=\ false#SystemdCgroup\ \=\ true#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep SystemdCgroup

sed -i "s#registry.k8s.io#registry.cn-hangzhou.aliyuncs.com/chenby#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep sandbox_image

sed -i "s#config_path\ \=\ \"\"#config_path\ \=\ \"/etc/containerd/certs.d\"#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep certs.d

mkdir /etc/containerd/certs.d/docker.io -pv

cat > /etc/containerd/certs.d/docker.io/hosts.toml << EOF

server = "https://docker.io"

[host."https://dockerpull.cn"]
  capabilities = ["pull", "resolve"]

EOF

5.1.4 Start the service and enable it at boot

systemctl daemon-reload

systemctl enable --now containerd

systemctl restart containerd

5.1.5 Point the crictl client at the container runtime

tar xf crictl-v*-linux-amd64.tar.gz -C /usr/bin/

# Generate the configuration file

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

# Test

systemctl restart containerd

crictl info

5.2 From the Master: kubelet

Run on k8s-master01.

Note:

The address field in kubelet.json must be changed to the IP address of the host the kubelet runs on.

for i in k8s-master02 ;do scp /usr/local/bin/kube-proxy $i:/usr/local/bin/;done

for i in k8s-master02 ;do scp /usr/local/bin/kubelet $i:/usr/local/bin/;done

for i in k8s-master02 ;do scp kubelet-bootstrap.kubeconfig kubelet.json $i:/etc/kubernetes/;done

for i in k8s-master02 ;do scp ca.pem $i:/etc/kubernetes/ssl/;done

for i in k8s-master02 ;do scp kubelet.service $i:/usr/lib/systemd/system/;done
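kubelet.json is copied from the Master unchanged, so its address field still holds 192.168.44.141. The minimal sketch below fixes it on the Node with sed. `set_kubelet_address` is hypothetical, and it assumes the `"address": "..."` layout produced in 3.5.2:

```shell
#!/usr/bin/env bash
# Sketch: set_kubelet_address IP [FILE] rewrites the "address" field of kubelet.json.
set_kubelet_address() {
  local ip=$1 file="${2:-/etc/kubernetes/kubelet.json}"
  # Replace whatever string value follows "address": with the given IP.
  sed -i -E "s/\"address\": *\"[^\"]*\"/\"address\": \"${ip}\"/" "$file"
}
```

Usage on the Node: `set_kubelet_address 192.168.44.142`, before starting kubelet.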

# The following commands run on the Node

mkdir -p /var/lib/kubelet

mkdir -p /var/log/kubernetes

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

5.3 Kube-proxy

for i in k8s-master02 ;do scp kube-proxy.kubeconfig kube-proxy.yaml $i:/etc/kubernetes/;done

for i in k8s-master02 ;do scp kube-proxy.service $i:/usr/lib/systemd/system/;done

# The following commands run on the Node

mkdir -p /var/lib/kube-proxy

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

6 Verify the Cluster Node Status

root@master:~# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-slave Ready <none> 21h v1.29.2

master Ready <none> 25h v1.29.2
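To check this from a script rather than by eye, you can filter the output above with awk. The sketch below prints every node whose STATUS is not exactly `Ready`, which includes cordoned nodes shown as `Ready,SchedulingDisabled`, and fails if there are any. `not_ready_nodes` is hypothetical. A built-in alternative is `kubectl wait --for=condition=Ready nodes --all --timeout=120s`:

```shell
#!/usr/bin/env bash
# Sketch: read "kubectl get node" output on stdin, print nodes that are not Ready,
# and exit with status 1 if any were found.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1; bad = 1 } END { exit bad }'
}
```

Usage: `kubectl get node | not_ready_nodes && echo "all nodes Ready"`.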

相关文章:

Ubuntu二进制部署K8S 1.29.2

本机说明 本版本非高可用,单Master,以及一个Node 新装的 ubuntu 22.04k8s 1.29.3使用该文档请使用批量替换 192.168.44.141这个IP,其余照着复制粘贴就可以成功需要手动 设置一个 固定DNS,我这里设置的是 8.8.8.8不然coredns无法…...

第05章 10 地形梯度场模拟显示

在 VTK(Visualization Toolkit)中,可以通过计算地形数据的梯度场,并用箭头或线条来表示梯度方向和大小,从而模拟显示地形梯度场。以下是一个示例代码,展示了如何使用 VTK 和 C 来计算和显示地形数据的梯度场…...

全程Kali linux---CTFshow misc入门

图片篇(基础操作) 第一题: ctfshow{22f1fb91fc4169f1c9411ce632a0ed8d} 第二题 解压完成后看到PNG,可以知道这是一张图片,使用mv命令或者直接右键重命名,修改扩展名为“PNG”即可得到flag。 ctfshow{6f66202f21ad22a2a19520cdd…...

[ Spring ] Spring Cloud Alibaba Message Stream Binder for RocketMQ 2025

文章目录 IntroduceProject StructureDeclare Plugins and ModulesApply Plugins and Add DependenciesSender PropertiesSender ApplicationSender ControllerReceiver PropertiesReceiver ApplicationReceiver Message HandlerCongratulationsAutomatically Send Message By …...

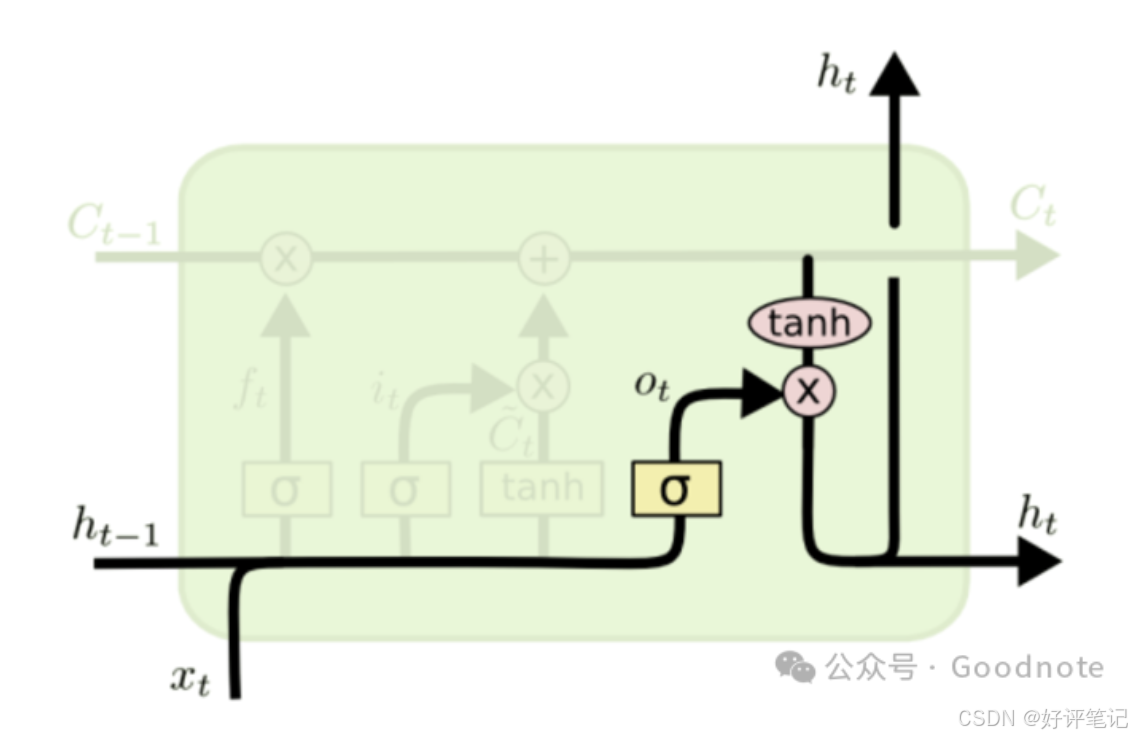

深度学习笔记——循环神经网络之LSTM

大家好,这里是好评笔记,公主号:Goodnote,专栏文章私信限时Free。本文详细介绍面试过程中可能遇到的循环神经网络LSTM知识点。 文章目录 文本特征提取的方法1. 基础方法1.1 词袋模型(Bag of Words, BOW)工作…...

AI 模型评估与质量控制:生成内容的评估与问题防护

在生成式 AI 应用中,模型生成的内容质量直接影响用户体验。然而,生成式模型存在一定风险,如幻觉(Hallucination)问题——生成不准确或完全虚构的内容。因此,在构建生成式 AI 应用时,模型评估与质…...

[MILP] Logical Constraints 0-1 (Note2)

1. 如果选择了项目1,则项目2,3也要求被选中 表示为: 2. 如果确定了选项目1,则接下来必须选项目2或者项目3 表示为: or 3. 如果同时选择了项目2和项目3,则不可以选择项目1 表示为: 4. 如果…...

DFFormer实战:使用DFFormer实现图像分类任务(二)

文章目录 训练部分导入项目使用的库设置随机因子设置全局参数图像预处理与增强读取数据设置Loss设置模型设置优化器和学习率调整策略设置混合精度,DP多卡,EMA定义训练和验证函数训练函数验证函数调用训练和验证方法 运行以及结果查看测试完整的代码 在上…...

蓝桥杯例题四

每个人都有无限潜能,只要你敢于去追求,你就能超越自己,实现梦想。人生的道路上会有困难和挑战,但这些都是成长的机会。不要被过去的失败所束缚,要相信自己的能力,坚持不懈地努力奋斗。成功需要付出汗水和努…...

如何复现o1模型,打造医疗 o1?

如何复现o1模型,打造医疗 o1? o1 树搜索一、起点:预训练规模触顶与「推理阶段(Test-Time)扩展」的动机二、Test-Time 扩展的核心思路与常见手段1. Proposer & Verifier 统一视角方法1:纯 Inference Sca…...

PostgreSQL TRUNCATE TABLE 操作详解

PostgreSQL TRUNCATE TABLE 操作详解 引言 在数据库管理中,经常需要对表进行操作以保持数据的有效性和一致性。TRUNCATE TABLE 是 PostgreSQL 中一种高效删除表内所有记录的方法。本文将详细探讨 PostgreSQL 中 TRUNCATE TABLE 的使用方法、性能优势以及注意事项。 什么是 …...

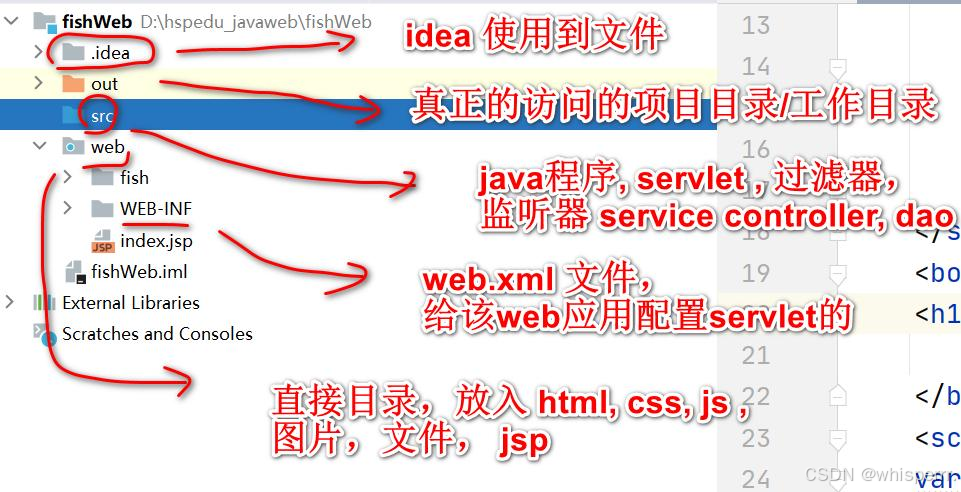

【JavaWeb06】Tomcat基础入门:架构理解与基本配置指南

文章目录 🌍一. WEB 开发❄️1. 介绍 ❄️2. BS 与 CS 开发介绍 ❄️3. JavaWeb 服务软件 🌍二. Tomcat❄️1. Tomcat 下载和安装 ❄️2. Tomcat 启动 ❄️3. Tomcat 启动故障排除 ❄️4. Tomcat 服务中部署 WEB 应用 ❄️5. 浏览器访问 Web 服务过程详…...

【NOI】C++程序结构入门之循环结构三-计数求和

文章目录 前言一、计数求和1.导入2.计数器3.累加器 二、例题讲解问题:1741 - 求出1~n中满足条件的数的个数和总和?问题:1002. 编程求解123...n问题:1004. 编程求1 * 2 * 3*...*n问题:1014. 编程求11/21/3...1/n问题&am…...

[Linux]Shell脚本中以指定用户运行命令

前言 当我们为Linux设置了用户自启动的shel脚本,默认会使用root用户执行启动脚本中的命令,那么我们如何在启动脚本中切换为指定用户指定命令呢。 命令 以下将列出两条命令,两条命令都可以实现以指定用户运行命令,凭喜好选择使用…...

通过 NAudio 控制电脑操作系统音量

根据您的需求,以下是通过 NAudio 获取和控制电脑操作系统音量的方法: 一、获取和控制系统音量 (一)获取系统音量和静音状态 您可以使用 NAudio.CoreAudioApi.MMDeviceEnumerator 来获取系统默认音频设备的音量和静音状态&#…...

新项目上传gitlab

Git global setup git config --global user.name “FUFANGYU” git config --global user.email “fyfucnic.cn” Create a new repository git clone gitgit.dev.arp.cn:casDs/sawrd.git cd sawrd touch README.md git add README.md git commit -m “add README” git push…...

【异步编程基础】FutureTask基本原理与异步阻塞问题

文章目录 一、FutureTask 的桥梁作用二、Future 模式与异步回调三、 FutureTask获取异步结果的逻辑1. 获取异步执行结果的步骤2. 举例说明3. FutureTask的异步阻塞问题 Runnable 用于定义无返回值的任务,而 Callable 用于定义有返回值的任务。然而,Calla…...

原生 Node 开发 Web 服务器

一、创建基本的 HTTP 服务器 使用 http 模块创建 Web 服务器 const http require("http");// 创建服务器const server http.createServer((req, res) > {// 设置响应头res.writeHead(200, { "Content-Type": "text/plain" });// 发送响应…...

—— 1.两数之和)

LeetCode热题100(一)—— 1.两数之和

LeetCode热题100(一)—— 1.两数之和 题目描述代码实现思路解析 你好,我是杨十一,一名热爱健身的程序员在Coding的征程中,不断探索与成长LeetCode热题100——刷题记录(不定期更新) 此系列文章用…...

二叉树高频题目——下——不含树型dp

一,普通二叉树上寻找两个节点的最近的公共祖先 1,介绍 LCA(Lowest Common Ancestor,最近公共祖先)是二叉树中经常讨论的一个问题。给定二叉树中的两个节点,它的LCA是指这两个节点的最低(最深&…...

vue事件总线(原理、优缺点)

目录 一、原理二、使用方法三、优缺点优点缺点 四、使用注意事项具体代码参考: 一、原理 在Vue中,事件总线(Event Bus)是一种可实现任意组件间通信的通信方式。 要实现这个功能必须满足两点要求: (1&#…...

PyCharm介绍

PyCharm的官网是https://www.jetbrains.com/pycharm/。 以下是在PyCharm官网下载和安装软件的步骤: 下载步骤 打开浏览器,访问PyCharm的官网https://www.jetbrains.com/pycharm/。在官网首页,点击“Download”按钮进入下载页面。选择适合自…...

《CPython Internals》读后感

一、 为什么选择这本书? Python 是本人工作中最常用的开发语言,为了加深对 Python 的理解,更好的掌握 Python 这门语言,所以想对 Python 解释器有所了解,看看是怎么使用C语言来实现Python的,以期达到对 Py…...

音频入门(一):音频基础知识与分类的基本流程

音频信号和图像信号在做分类时的基本流程类似,区别就在于预处理部分存在不同;本文简单介绍了下音频处理的方法,以及利用深度学习模型分类的基本流程。 目录 一、音频信号简介 1. 什么是音频信号 2. 音频信号长什么样 二、音频的深度学习分…...

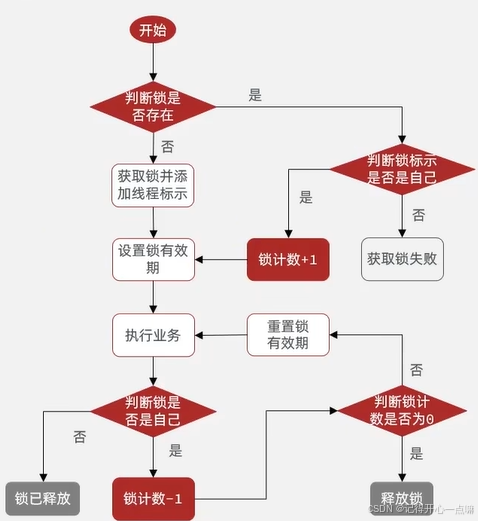

Redis --- 分布式锁的使用

我们在上篇博客高并发处理 --- 超卖问题一人一单解决方案讲述了两种锁解决业务的使用方法,但是这样不能让锁跨JVM也就是跨进程去使用,只能适用在单体项目中如下图: 为了解决这种场景,我们就需要用一个锁监视器对全部集群进行监视…...

用C++编写一个2048的小游戏

以下是一个简单的2048游戏的实现。这个实现使用了控制台输入和输出,适合在终端或命令行环境中运行。 2048游戏的实现 1.游戏逻辑 2048游戏的核心逻辑包括: • 初始化一个4x4的网格。 • 随机生成2或4。 • 处理玩家的移动操作(上、下、左、…...

【设计模式-行为型】状态模式

一、什么是状态模式 什么是状态模式呢,这里我举一个例子来说明,在自动挡汽车中,挡位的切换是根据驾驶条件(如车速、油门踏板位置、刹车状态等)自动完成的。这种自动切换挡位的过程可以很好地用状态模式来描述。状态模式…...

CentOS/Linux Python 2.7 离线安装 Requests 库解决离线安装问题。

root@mwcollector1 externalscripts]# cat /etc/os-release NAME=“Kylin Linux Advanced Server” VERSION=“V10 (Sword)” ID=“kylin” VERSION_ID=“V10” PRETTY_NAME=“Kylin Linux Advanced Server V10 (Sword)” ANSI_COLOR=“0;31” 这是我系统的版本,由于是公司内网…...

【flutter版本升级】【Nativeshell适配】nativeshell需要做哪些更改

flutter 从3.13.9 升级:3.27.2 nativeshell组合库中的 1、nativeshell_build库替换为github上的最新代码 可以解决两个问题: 一个是arg("--ExtraFrontEndOptions--no-sound-null-safety") 在新版flutter中这个构建参数不支持了导致的build错误…...

使用 Vue 3 的 watchEffect 和 watch 进行响应式监视

Vue 3 的 Composition API 引入了 <script setup> 语法,这是一种更简洁、更直观的方式来编写组件逻辑。结合 watchEffect 和 watch,我们可以轻松地监视响应式数据的变化。本文将介绍如何使用 <script setup> 语法结合 watchEffect 和 watch&…...