2. Multi-GPU Training and Mixed Precision in PyTorch with Fabric from PyTorch Lightning


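This post follows on from the previous one, which implemented multi-GPU training and mixed precision in plain PyTorch. Here we use Fabric to get the same functionality, so the two versions can be compared. I will keep covering Fabric in later posts, as much to learn it myself as to explain it. Fabric cuts down the amount of code and speeds up development.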

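Compared with the previous post, the model is changed slightly, only to see how this affects batch normalization (BN). Here is the code: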

    import torch
    from torch import nn
    from lightning import Fabric
    from torchinfo import summary


    def train(num_epochs, model, optimizer, data, target, fabric):
        model.train()
        data = fabric.to_device(data)
        target = fabric.to_device(target)
        # data = data.to(fabric.device)
        # target = target.to(fabric.device)
        # On a single node these three all identify the same rank
        print("fabric.device and local_rank and torch local rank:",
              fabric.device, fabric.local_rank, torch.distributed.get_rank())
        for epoch in range(num_epochs):
            out = model(data)
            loss = torch.nn.MSELoss()(out, target)
            optimizer.zero_grad()
            fabric.backward(loss)
            optimizer.step()
            # Prints the loss on every GPU
            print(f"Epoch: {epoch+1:04d}/{num_epochs:04d} | train loss:{loss}")
            # Gather the loss from all ranks: one entry per GPU
            all_loss = fabric.all_gather(loss)
            print(all_loss)
        # Save the model
        state = {"model": model, "optimizer": optimizer, "iter": epoch + 1}
        fabric.save("checkpoint.ckpt", state)


    class SimpleModel(nn.Module):
        def __init__(self):
            super(SimpleModel, self).__init__()
            self.conv = nn.Conv2d(3, 5, 3, 1)
            self.bn = nn.BatchNorm2d(5)
            self.avg_pool = nn.AdaptiveAvgPool2d((1, 1))
            self.flat = nn.Flatten()
            self.fc = nn.Linear(5, 1)

        def forward(self, x):
            x = self.conv(x)
            x = self.bn(x)
            x = self.avg_pool(x)
            x = self.flat(x)
            x = self.fc(x)
            return x


    if __name__ == "__main__":
        fabric = Fabric(accelerator="cuda", devices=[0, 1], strategy="ddp", precision='16-mixed')
        fabric.launch()
        fabric.seed_everything()

        # Initialize the model
        model = SimpleModel()
        fabric.print(f"before setup model,state dict:")  # printed on GPU 0 only
        # fabric.print(summary(model,input_size=(1,3,8,8)))
        fabric.print(model.state_dict().keys())
        fabric.print("*****************************************************************")

        optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

        if fabric.world_size > 1:
            model = torch.nn.SyncBatchNorm.convert_sync_batchnorm(model)
            fabric.print(f"after convert bn to sync bn,state dict:")
            # fabric.print(summary(model,input_size=(1,3,8,8)))
            print(f"after convert bn to sync bn device:{fabric.device} conv.weight.device:{model.conv.weight.device}")
            fabric.print(model.state_dict().keys())
            fabric.print("*****************************************************************")

        model, optimizer = fabric.setup(model, optimizer)
        print(f"after setup device:{fabric.device} conv.weight.device:{model.conv.weight.device}")
        fabric.print(f"after setup model,model state dict:")
        # fabric.print(summary(model,input_size=(1,3,8,8)))
        fabric.print(model.state_dict().keys())

        # Set up mock data (with a DataLoader, you would write everything
        # except the torch.utils.data.DistributedSampler part)
        data = torch.rand(5, 3, 8, 8)
        target = torch.rand(5, 1)

        # Start training
        epoch = 100
        train(epoch, model, optimizer, data, target, fabric)

Output:

Using 16-bit Automatic Mixed Precision (AMP)

Initializing distributed: GLOBAL_RANK: 0, MEMBER: 1/2

Initializing distributed: GLOBAL_RANK: 1, MEMBER: 2/2

----------------------------------------------------------------------------------------------------

distributed_backend=nccl

All distributed processes registered. Starting with 2 processes

----------------------------------------------------------------------------------------------------

/home/tl/anaconda3/envs/ptch/lib/python3.10/site-packages/lightning/fabric/utilities/seed.py:40: No seed found, seed set to 3183422672

[rank: 0] Seed set to 3183422672

before setup model,state dict:

odict_keys(['conv.weight', 'conv.bias', 'bn.weight', 'bn.bias', 'bn.running_mean', 'bn.running_var', 'bn.num_batches_tracked', 'fc.weight', 'fc.bias'])

*****************************************************************

after convert bn to sync bn,state dict:

after convert bn to sync bn device:cuda:0 conv.weight.device:cpu

odict_keys(['conv.weight', 'conv.bias', 'bn.weight', 'bn.bias', 'bn.running_mean', 'bn.running_var', 'bn.num_batches_tracked', 'fc.weight', 'fc.bias'])

*****************************************************************

[rank: 1] Seed set to 1590652679

after convert bn to sync bn device:cuda:1 conv.weight.device:cpu

after setup device:cuda:1 conv.weight.device:cuda:1

after setup device:cuda:0 conv.weight.device:cuda:0

after setup model,model state dict:

odict_keys(['conv.weight', 'conv.bias', 'bn.weight', 'bn.bias', 'bn.running_mean', 'bn.running_var', 'bn.num_batches_tracked', 'fc.weight', 'fc.bias'])

fabric.device and local_rank and torch local rank: cuda:1 1 1

fabric.device and local_rank and torch local rank: cuda:0 0 0

Epoch: 0001/0100 | train loss:0.5391270518302917

Epoch: 0001/0100 | train loss:0.4002908766269684

tensor([0.5391, 0.4003], device='cuda:0')

tensor([0.5391, 0.4003], device='cuda:1')

Epoch: 0002/0100 | train loss:0.5391270518302917

Epoch: 0002/0100 | train loss:0.4002908766269684

tensor([0.5391, 0.4003], device='cuda:0')

tensor([0.5391, 0.4003], device='cuda:1')

Epoch: 0003/0100 | train loss:0.3809531629085541

Epoch: 0003/0100 | train loss:0.5164263844490051

tensor([0.5164, 0.3810], device='cuda:1')

tensor([0.5164, 0.3810], device='cuda:0')

Epoch: 0004/0100 | train loss:0.3625626266002655

Epoch: 0004/0100 | train loss:0.49487170577049255

tensor([0.4949, 0.3626], device='cuda:0')

tensor([0.4949, 0.3626], device='cuda:1')

Epoch: 0005/0100 | train loss:0.34520527720451355

Epoch: 0005/0100 | train loss:0.47438523173332214

tensor([0.4744, 0.3452], device='cuda:1')

tensor([0.4744, 0.3452], device='cuda:0')

Epoch: 0006/0100 | train loss:0.32876724004745483

Epoch: 0006/0100 | train loss:0.45497187972068787

tensor([0.4550, 0.3288], device='cuda:1')

tensor([0.4550, 0.3288], device='cuda:0')

Epoch: 0007/0100 | train loss:0.4365047514438629

Epoch: 0007/0100 | train loss:0.31321704387664795

tensor([0.4365, 0.3132], device='cuda:0')

tensor([0.4365, 0.3132], device='cuda:1')

Epoch: 0008/0100 | train loss:0.41904139518737793

Epoch: 0008/0100 | train loss:0.2985176146030426

tensor([0.4190, 0.2985], device='cuda:0')

tensor([0.4190, 0.2985], device='cuda:1')

Epoch: 0009/0100 | train loss:0.4022897183895111

Epoch: 0009/0100 | train loss:0.28452268242836

tensor([0.4023, 0.2845], device='cuda:0')

tensor([0.4023, 0.2845], device='cuda:1')

Epoch: 0010/0100 | train loss:0.38661184906959534

Epoch: 0010/0100 | train loss:0.2712869644165039

tensor([0.3866, 0.2713], device='cuda:0')

tensor([0.3866, 0.2713], device='cuda:1')

Epoch: 0011/0100 | train loss:0.37144994735717773

Epoch: 0011/0100 | train loss:0.2587887942790985

tensor([0.3714, 0.2588], device='cuda:0')

tensor([0.3714, 0.2588], device='cuda:1')

Epoch: 0012/0100 | train loss:0.3572254776954651

Epoch: 0012/0100 | train loss:0.24688617885112762

tensor([0.3572, 0.2469], device='cuda:0')

tensor([0.3572, 0.2469], device='cuda:1')

Epoch: 0013/0100 | train loss:0.34366878867149353

Epoch: 0013/0100 | train loss:0.23560750484466553

tensor([0.3437, 0.2356], device='cuda:0')

tensor([0.3437, 0.2356], device='cuda:1')

Epoch: 0014/0100 | train loss:0.33070918917655945

Epoch: 0014/0100 | train loss:0.22490985691547394

tensor([0.3307, 0.2249], device='cuda:0')

tensor([0.3307, 0.2249], device='cuda:1')

Epoch: 0015/0100 | train loss:0.318371444940567

Epoch: 0015/0100 | train loss:0.21479550004005432

tensor([0.3184, 0.2148], device='cuda:0')

tensor([0.3184, 0.2148], device='cuda:1')

Epoch: 0016/0100 | train loss:0.30663591623306274

Epoch: 0016/0100 | train loss:0.20525796711444855

tensor([0.3066, 0.2053], device='cuda:0')

tensor([0.3066, 0.2053], device='cuda:1')

Epoch: 0017/0100 | train loss:0.2955937087535858

Epoch: 0017/0100 | train loss:0.19613352417945862

tensor([0.2956, 0.1961], device='cuda:0')

tensor([0.2956, 0.1961], device='cuda:1')

Epoch: 0018/0100 | train loss:0.2850213646888733

Epoch: 0018/0100 | train loss:0.18744778633117676

tensor([0.2850, 0.1874], device='cuda:0')

tensor([0.2850, 0.1874], device='cuda:1')

Epoch: 0019/0100 | train loss:0.27490052580833435

Epoch: 0019/0100 | train loss:0.17930081486701965

tensor([0.2749, 0.1793], device='cuda:0')

tensor([0.2749, 0.1793], device='cuda:1')

Epoch: 0020/0100 | train loss:0.265290230512619

Epoch: 0020/0100 | train loss:0.17152751982212067

tensor([0.2653, 0.1715], device='cuda:0')

tensor([0.2653, 0.1715], device='cuda:1')

Epoch: 0021/0100 | train loss:0.25619110465049744

Epoch: 0021/0100 | train loss:0.16420160233974457

tensor([0.2562, 0.1642], device='cuda:0')

tensor([0.2562, 0.1642], device='cuda:1')

Epoch: 0022/0100 | train loss:0.24748849868774414

Epoch: 0022/0100 | train loss:0.15718798339366913

tensor([0.2475, 0.1572], device='cuda:0')

tensor([0.2475, 0.1572], device='cuda:1')

Epoch: 0023/0100 | train loss:0.23922590911388397

Epoch: 0023/0100 | train loss:0.15056990087032318

tensor([0.2392, 0.1506], device='cuda:0')

tensor([0.2392, 0.1506], device='cuda:1')

Epoch: 0024/0100 | train loss:0.2313191443681717

Epoch: 0024/0100 | train loss:0.14431701600551605

tensor([0.2313, 0.1443], device='cuda:0')

tensor([0.2313, 0.1443], device='cuda:1')

Epoch: 0025/0100 | train loss:0.22383789718151093

Epoch: 0025/0100 | train loss:0.13829165697097778

tensor([0.2238, 0.1383], device='cuda:0')

tensor([0.2238, 0.1383], device='cuda:1')

Epoch: 0026/0100 | train loss:0.2166999876499176

Epoch: 0026/0100 | train loss:0.13270090520381927

tensor([0.2167, 0.1327], device='cuda:0')

tensor([0.2167, 0.1327], device='cuda:1')

Epoch: 0027/0100 | train loss:0.12735657393932343

Epoch: 0027/0100 | train loss:0.2099115401506424

tensor([0.2099, 0.1274], device='cuda:1')

tensor([0.2099, 0.1274], device='cuda:0')

Epoch: 0028/0100 | train loss:0.2034330815076828

Epoch: 0028/0100 | train loss:0.12219982594251633

tensor([0.2034, 0.1222], device='cuda:0')

tensor([0.2034, 0.1222], device='cuda:1')

Epoch: 0029/0100 | train loss:0.19724245369434357

Epoch: 0029/0100 | train loss:0.11739777773618698

tensor([0.1972, 0.1174], device='cuda:0')

tensor([0.1972, 0.1174], device='cuda:1')

Epoch: 0030/0100 | train loss:0.1913725584745407

Epoch: 0030/0100 | train loss:0.11280806362628937

tensor([0.1914, 0.1128], device='cuda:0')

tensor([0.1914, 0.1128], device='cuda:1')

Epoch: 0031/0100 | train loss:0.1856645792722702

Epoch: 0031/0100 | train loss:0.10841526836156845

tensor([0.1857, 0.1084], device='cuda:0')

tensor([0.1857, 0.1084], device='cuda:1')

Epoch: 0032/0100 | train loss:0.18032146990299225

Epoch: 0032/0100 | train loss:0.10436604171991348

tensor([0.1803, 0.1044], device='cuda:0')

tensor([0.1803, 0.1044], device='cuda:1')

Epoch: 0033/0100 | train loss:0.17524836957454681

Epoch: 0033/0100 | train loss:0.10045601427555084

tensor([0.1752, 0.1005], device='cuda:0')

tensor([0.1752, 0.1005], device='cuda:1')

Epoch: 0034/0100 | train loss:0.1704605370759964

Epoch: 0034/0100 | train loss:0.0966917872428894

tensor([0.1705, 0.0967], device='cuda:0')

tensor([0.1705, 0.0967], device='cuda:1')

Epoch: 0035/0100 | train loss:0.1658073514699936

Epoch: 0035/0100 | train loss:0.09323866665363312

tensor([0.1658, 0.0932], device='cuda:0')

tensor([0.1658, 0.0932], device='cuda:1')

Epoch: 0036/0100 | train loss:0.16137376427650452

Epoch: 0036/0100 | train loss:0.08982827514410019

tensor([0.1614, 0.0898], device='cuda:0')

tensor([0.1614, 0.0898], device='cuda:1')

Epoch: 0037/0100 | train loss:0.15720796585083008

Epoch: 0037/0100 | train loss:0.0867210254073143

tensor([0.1572, 0.0867], device='cuda:0')

tensor([0.1572, 0.0867], device='cuda:1')

Epoch: 0038/0100 | train loss:0.15312625467777252

Epoch: 0038/0100 | train loss:0.08372923731803894

tensor([0.1531, 0.0837], device='cuda:0')

tensor([0.1531, 0.0837], device='cuda:1')

Epoch: 0039/0100 | train loss:0.14925920963287354

Epoch: 0039/0100 | train loss:0.0807720348238945

tensor([0.1493, 0.0808], device='cuda:0')

tensor([0.1493, 0.0808], device='cuda:1')

Epoch: 0040/0100 | train loss:0.14571939408779144

Epoch: 0040/0100 | train loss:0.07814785093069077

tensor([0.1457, 0.0781], device='cuda:0')

tensor([0.1457, 0.0781], device='cuda:1')

Epoch: 0041/0100 | train loss:0.1421670764684677

Epoch: 0041/0100 | train loss:0.07556602358818054

tensor([0.1422, 0.0756], device='cuda:0')

tensor([0.1422, 0.0756], device='cuda:1')

Epoch: 0042/0100 | train loss:0.13886897265911102

Epoch: 0042/0100 | train loss:0.07304538041353226

tensor([0.1389, 0.0730], device='cuda:0')

tensor([0.1389, 0.0730], device='cuda:1')

Epoch: 0043/0100 | train loss:0.13570688664913177

Epoch: 0043/0100 | train loss:0.07073201984167099

tensor([0.1357, 0.0707], device='cuda:0')

tensor([0.1357, 0.0707], device='cuda:1')

Epoch: 0044/0100 | train loss:0.13255445659160614

Epoch: 0044/0100 | train loss:0.06854959577322006

tensor([0.1326, 0.0685], device='cuda:0')

tensor([0.1326, 0.0685], device='cuda:1')

Epoch: 0045/0100 | train loss:0.12969191372394562

Epoch: 0045/0100 | train loss:0.06643456220626831

tensor([0.1297, 0.0664], device='cuda:0')

tensor([0.1297, 0.0664], device='cuda:1')

Epoch: 0046/0100 | train loss:0.12693797051906586

Epoch: 0046/0100 | train loss:0.06441470235586166

tensor([0.1269, 0.0644], device='cuda:0')

tensor([0.1269, 0.0644], device='cuda:1')

Epoch: 0047/0100 | train loss:0.12435060739517212

Epoch: 0047/0100 | train loss:0.06256702542304993

tensor([0.1244, 0.0626], device='cuda:0')

tensor([0.1244, 0.0626], device='cuda:1')

Epoch: 0048/0100 | train loss:0.12184498459100723

Epoch: 0048/0100 | train loss:0.06076086685061455

tensor([0.1218, 0.0608], device='cuda:0')

tensor([0.1218, 0.0608], device='cuda:1')

Epoch: 0049/0100 | train loss:0.11948590725660324

Epoch: 0049/0100 | train loss:0.05909023433923721

tensor([0.1195, 0.0591], device='cuda:0')

tensor([0.1195, 0.0591], device='cuda:1')

Epoch: 0050/0100 | train loss:0.11719142645597458

Epoch: 0050/0100 | train loss:0.05748440697789192

tensor([0.1172, 0.0575], device='cuda:0')

tensor([0.1172, 0.0575], device='cuda:1')

Epoch: 0051/0100 | train loss:0.11490301042795181

Epoch: 0051/0100 | train loss:0.05596492439508438

tensor([0.1149, 0.0560], device='cuda:0')

tensor([0.1149, 0.0560], device='cuda:1')

Epoch: 0052/0100 | train loss:0.11284526437520981

Epoch: 0052/0100 | train loss:0.05452785640954971

tensor([0.1128, 0.0545], device='cuda:0')

tensor([0.1128, 0.0545], device='cuda:1')

Epoch: 0053/0100 | train loss:0.11080770939588547

Epoch: 0053/0100 | train loss:0.053089436143636703

tensor([0.1108, 0.0531], device='cuda:0')

tensor([0.1108, 0.0531], device='cuda:1')

Epoch: 0054/0100 | train loss:0.1088673397898674

Epoch: 0054/0100 | train loss:0.05177140235900879

tensor([0.1089, 0.0518], device='cuda:0')

tensor([0.1089, 0.0518], device='cuda:1')

Epoch: 0055/0100 | train loss:0.10703599452972412

Epoch: 0055/0100 | train loss:0.05052466318011284

tensor([0.1070, 0.0505], device='cuda:0')

tensor([0.1070, 0.0505], device='cuda:1')

Epoch: 0056/0100 | train loss:0.10530979931354523

Epoch: 0056/0100 | train loss:0.049302320927381516

tensor([0.1053, 0.0493], device='cuda:0')

tensor([0.1053, 0.0493], device='cuda:1')

Epoch: 0057/0100 | train loss:0.10361965000629425

Epoch: 0057/0100 | train loss:0.048224009573459625

tensor([0.1036, 0.0482], device='cuda:0')

tensor([0.1036, 0.0482], device='cuda:1')

Epoch: 0058/0100 | train loss:0.10195320099592209

Epoch: 0058/0100 | train loss:0.04709456115961075

tensor([0.1020, 0.0471], device='cuda:0')

tensor([0.1020, 0.0471], device='cuda:1')

Epoch: 0059/0100 | train loss:0.10047540813684464

Epoch: 0059/0100 | train loss:0.04614344984292984

tensor([0.1005, 0.0461], device='cuda:0')

tensor([0.1005, 0.0461], device='cuda:1')

Epoch: 0060/0100 | train loss:0.09898962825536728

Epoch: 0060/0100 | train loss:0.045158226042985916

tensor([0.0990, 0.0452], device='cuda:0')

tensor([0.0990, 0.0452], device='cuda:1')

Epoch: 0061/0100 | train loss:0.097608782351017

Epoch: 0061/0100 | train loss:0.044237129390239716

tensor([0.0976, 0.0442], device='cuda:0')

tensor([0.0976, 0.0442], device='cuda:1')

Epoch: 0062/0100 | train loss:0.09622994810342789

Epoch: 0062/0100 | train loss:0.043375153094530106

tensor([0.0962, 0.0434], device='cuda:0')

tensor([0.0962, 0.0434], device='cuda:1')

Epoch: 0063/0100 | train loss:0.09495609253644943

Epoch: 0063/0100 | train loss:0.04254027456045151

tensor([0.0950, 0.0425], device='cuda:0')

tensor([0.0950, 0.0425], device='cuda:1')

Epoch: 0064/0100 | train loss:0.04172029718756676

Epoch: 0064/0100 | train loss:0.09371034801006317

tensor([0.0937, 0.0417], device='cuda:1')

tensor([0.0937, 0.0417], device='cuda:0')

Epoch: 0065/0100 | train loss:0.04094156622886658

Epoch: 0065/0100 | train loss:0.09246573597192764

tensor([0.0925, 0.0409], device='cuda:0')

tensor([0.0925, 0.0409], device='cuda:1')

Epoch: 0066/0100 | train loss:0.09130342304706573

Epoch: 0066/0100 | train loss:0.040253669023513794

tensor([0.0913, 0.0403], device='cuda:0')

tensor([0.0913, 0.0403], device='cuda:1')

Epoch: 0067/0100 | train loss:0.09026143699884415

Epoch: 0067/0100 | train loss:0.03958689793944359

tensor([0.0903, 0.0396], device='cuda:0')

tensor([0.0903, 0.0396], device='cuda:1')

Epoch: 0068/0100 | train loss:0.08916200697422028

Epoch: 0068/0100 | train loss:0.03885350748896599

tensor([0.0892, 0.0389], device='cuda:0')

tensor([0.0892, 0.0389], device='cuda:1')

Epoch: 0069/0100 | train loss:0.08816101402044296

Epoch: 0069/0100 | train loss:0.03830384090542793

tensor([0.0882, 0.0383], device='cuda:0')

tensor([0.0882, 0.0383], device='cuda:1')

Epoch: 0070/0100 | train loss:0.08718284964561462

Epoch: 0070/0100 | train loss:0.03767556697130203

tensor([0.0872, 0.0377], device='cuda:0')

tensor([0.0872, 0.0377], device='cuda:1')

Epoch: 0071/0100 | train loss:0.08624932169914246

Epoch: 0071/0100 | train loss:0.03716084733605385

tensor([0.0862, 0.0372], device='cuda:0')

tensor([0.0862, 0.0372], device='cuda:1')

Epoch: 0072/0100 | train loss:0.08536970615386963

Epoch: 0072/0100 | train loss:0.03657805919647217

tensor([0.0854, 0.0366], device='cuda:0')

tensor([0.0854, 0.0366], device='cuda:1')

Epoch: 0073/0100 | train loss:0.08444425463676453

Epoch: 0073/0100 | train loss:0.036069512367248535

tensor([0.0844, 0.0361], device='cuda:0')

tensor([0.0844, 0.0361], device='cuda:1')

Epoch: 0074/0100 | train loss:0.08365066349506378

Epoch: 0074/0100 | train loss:0.035561252385377884

tensor([0.0837, 0.0356], device='cuda:0')

tensor([0.0837, 0.0356], device='cuda:1')

Epoch: 0075/0100 | train loss:0.0828193947672844

Epoch: 0075/0100 | train loss:0.03512110188603401

tensor([0.0828, 0.0351], device='cuda:0')

tensor([0.0828, 0.0351], device='cuda:1')

Epoch: 0076/0100 | train loss:0.08206731826066971

Epoch: 0076/0100 | train loss:0.03470907360315323

tensor([0.0821, 0.0347], device='cuda:0')

tensor([0.0821, 0.0347], device='cuda:1')

Epoch: 0077/0100 | train loss:0.08136867731809616

Epoch: 0077/0100 | train loss:0.03429228812456131

tensor([0.0814, 0.0343], device='cuda:0')

tensor([0.0814, 0.0343], device='cuda:1')

Epoch: 0078/0100 | train loss:0.08061014115810394

Epoch: 0078/0100 | train loss:0.03388326242566109

tensor([0.0806, 0.0339], device='cuda:0')

tensor([0.0806, 0.0339], device='cuda:1')

Epoch: 0079/0100 | train loss:0.07996807247400284

Epoch: 0079/0100 | train loss:0.0334811694920063

tensor([0.0800, 0.0335], device='cuda:0')

tensor([0.0800, 0.0335], device='cuda:1')

Epoch: 0080/0100 | train loss:0.07923366874456406

Epoch: 0080/0100 | train loss:0.03312436491250992

tensor([0.0792, 0.0331], device='cuda:0')

tensor([0.0792, 0.0331], device='cuda:1')

Epoch: 0081/0100 | train loss:0.07861354202032089

Epoch: 0081/0100 | train loss:0.03278031200170517

tensor([0.0786, 0.0328], device='cuda:0')

tensor([0.0786, 0.0328], device='cuda:1')

Epoch: 0082/0100 | train loss:0.07789915800094604

Epoch: 0082/0100 | train loss:0.03244069963693619

tensor([0.0779, 0.0324], device='cuda:0')

tensor([0.0779, 0.0324], device='cuda:1')

Epoch: 0083/0100 | train loss:0.07733096927404404

Epoch: 0083/0100 | train loss:0.03207029029726982

tensor([0.0773, 0.0321], device='cuda:0')

tensor([0.0773, 0.0321], device='cuda:1')

Epoch: 0084/0100 | train loss:0.07673352211713791

Epoch: 0084/0100 | train loss:0.031769514083862305

tensor([0.0767, 0.0318], device='cuda:0')

tensor([0.0767, 0.0318], device='cuda:1')

Epoch: 0085/0100 | train loss:0.07619936764240265

Epoch: 0085/0100 | train loss:0.031524963676929474

tensor([0.0762, 0.0315], device='cuda:0')

tensor([0.0762, 0.0315], device='cuda:1')

Epoch: 0086/0100 | train loss:0.07563362270593643

Epoch: 0086/0100 | train loss:0.03119492344558239

tensor([0.0756, 0.0312], device='cuda:0')

tensor([0.0756, 0.0312], device='cuda:1')

Epoch: 0087/0100 | train loss:0.0750502347946167

Epoch: 0087/0100 | train loss:0.03095475398004055

tensor([0.0751, 0.0310], device='cuda:0')

tensor([0.0751, 0.0310], device='cuda:1')

Epoch: 0088/0100 | train loss:0.0746132880449295

Epoch: 0088/0100 | train loss:0.030701685696840286

tensor([0.0746, 0.0307], device='cuda:0')

tensor([0.0746, 0.0307], device='cuda:1')

Epoch: 0089/0100 | train loss:0.07409549504518509

Epoch: 0089/0100 | train loss:0.030368996784090996

tensor([0.0741, 0.0304], device='cuda:0')

tensor([0.0741, 0.0304], device='cuda:1')

Epoch: 0090/0100 | train loss:0.0735851600766182

Epoch: 0090/0100 | train loss:0.03020581416785717

tensor([0.0736, 0.0302], device='cuda:0')

tensor([0.0736, 0.0302], device='cuda:1')

Epoch: 0091/0100 | train loss:0.07305028289556503

Epoch: 0091/0100 | train loss:0.029953384771943092

tensor([0.0731, 0.0300], device='cuda:0')

tensor([0.0731, 0.0300], device='cuda:1')

Epoch: 0092/0100 | train loss:0.07270056009292603

Epoch: 0092/0100 | train loss:0.029726726934313774

tensor([0.0727, 0.0297], device='cuda:0')

tensor([0.0727, 0.0297], device='cuda:1')

Epoch: 0093/0100 | train loss:0.07219361513853073

Epoch: 0093/0100 | train loss:0.02954575978219509

tensor([0.0722, 0.0295], device='cuda:0')

tensor([0.0722, 0.0295], device='cuda:1')

Epoch: 0094/0100 | train loss:0.07180915772914886

Epoch: 0094/0100 | train loss:0.02932337485253811

tensor([0.0718, 0.0293], device='cuda:0')

tensor([0.0718, 0.0293], device='cuda:1')

Epoch: 0095/0100 | train loss:0.07139516621828079

Epoch: 0095/0100 | train loss:0.029103577136993408

tensor([0.0714, 0.0291], device='cuda:0')

tensor([0.0714, 0.0291], device='cuda:1')

Epoch: 0096/0100 | train loss:0.07094169408082962

Epoch: 0096/0100 | train loss:0.02893088571727276

tensor([0.0709, 0.0289], device='cuda:0')

tensor([0.0709, 0.0289], device='cuda:1')

Epoch: 0097/0100 | train loss:0.028796857222914696

Epoch: 0097/0100 | train loss:0.07059731334447861

tensor([0.0706, 0.0288], device='cuda:0')

tensor([0.0706, 0.0288], device='cuda:1')

Epoch: 0098/0100 | train loss:0.028585290536284447

Epoch: 0098/0100 | train loss:0.0701548159122467

tensor([0.0702, 0.0286], device='cuda:0')

tensor([0.0702, 0.0286], device='cuda:1')

Epoch: 0099/0100 | train loss:0.06985291093587875

Epoch: 0099/0100 | train loss:0.028429213911294937

tensor([0.0699, 0.0284], device='cuda:0')

tensor([0.0699, 0.0284], device='cuda:1')

Epoch: 0100/0100 | train loss:0.06947710365056992

Epoch: 0100/0100 | train loss:0.028299672529101372

tensor([0.0695, 0.0283], device='cuda:0')

tensor([0.0695, 0.0283], device='cuda:1')
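The log above prints the same `state_dict` keys before and after `convert_sync_batchnorm`. The conversion swaps the layer type but keeps the parameter and buffer names, so checkpoints stay compatible. The following CPU-only sketch checks this on a small stand-in model. `TinyNet` is a hypothetical name of mine, not part of the code above. The sketch only performs the conversion and never runs a forward pass, since `SyncBatchNorm` in training mode expects GPU inputs and an initialized process group.

```python
import torch
from torch import nn


class TinyNet(nn.Module):
    """Hypothetical stand-in with the same conv + bn front end as SimpleModel."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(3, 5, 3, 1)
        self.bn = nn.BatchNorm2d(5)

    def forward(self, x):
        return self.bn(self.conv(x))


model = TinyNet()
keys_before = list(model.state_dict().keys())

# Replace every BatchNorm layer with SyncBatchNorm (conversion only, no forward pass).
converted = nn.SyncBatchNorm.convert_sync_batchnorm(model)
keys_after = list(converted.state_dict().keys())

print(type(converted.bn).__name__)   # the layer type has changed
print(keys_before == keys_after)     # the state_dict keys have not
```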

The Fabric code above corresponds to Lightning 2.1. The tool is still under development, and more features will be added later.

相关文章:

2、基于pytorch lightning的fabric实现pytorch的多GPU训练和混合精度功能

文章目录 承接 上一篇,使用原始的pytorch来实现多GPU训练和混合精度,现在对比以上代码,我们使用Fabric来实现相同的功能。关于Fabric,我会在后续的博客中继续讲解,是讲解,也是在学习。通过fabric,可以减少代码量&#…...

python版opencv人脸训练与人脸识别

1.人脸识别准备 使用的两个opencv包 D:\python2023>pip list |findstr opencv opencv-contrib-python 4.8.1.78 opencv-python 4.8.1.78数据集使用前一篇Javacv的数据集,网上随便找的60张图片,只是都挪到了D:\face目录下方便遍历 D:\face\1 30张刘德华图片…...

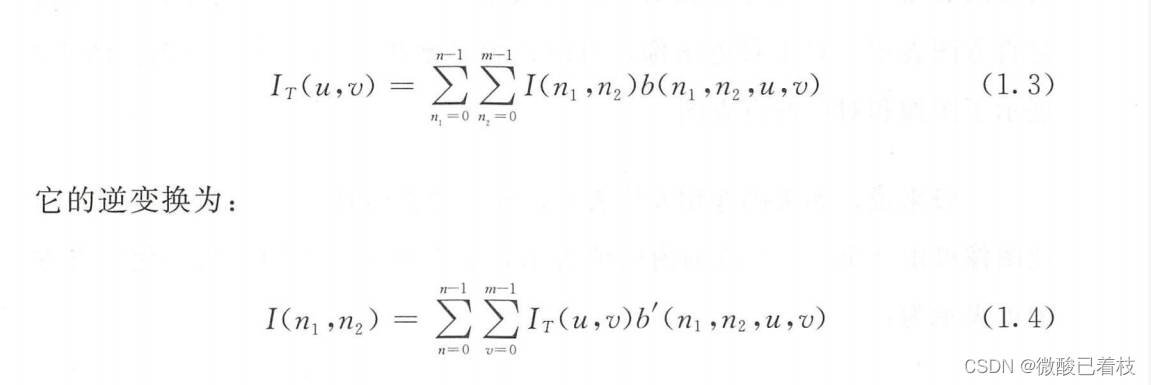

计算机视觉-数学基础*变换域表示

被研究最多的图像(或任何序列数据)变换域表示是通过傅 里叶分析 。所谓的傅里叶表示就是使用 正弦函数的线性组合来表示信号。对于一个给定的图像I(n1,n2) ,可以用如下方式分解它(即逆傅里叶变换): 其中&a…...

小程序如何设置自取规则

在小程序中,自取规则是指当客户下单时选择无需配送的情况下,如何设置相关的计费方式、指定时段费用、免费金额、预定时间和起取金额。下面将详细介绍如何设置这些规则,以便更好地满足客户的需求。 在小程序管理员后台->配送设置->自…...

Elasticsearch分词器-中文分词器ik

文章目录 使用standard analysis对英文进行分词使用standard analysis对中文进行分词安装插件对中文进行友好分词-ik中文分词器下载安装和配置IK分词器使用ik_smart分词器使用ik_max_word分词器 借助Nginx实现ik分词器自定义分词网络新词 ES官方文档Text Analysis 使用standard…...

ITSS信息技术服务运行维护标准符合性证书申请详解及流程

ITSS信息技术服务运行维护标准符合性证书 认证介绍 ITSS(InformationTechnologyServiceStandards,信息技术服务标准,简称ITSS)是一套成体系和综合配套的信息技术服务标准库,全面规范了IT服务产品及其组成要素,用于指导实施标准化…...

Inbound marketing的完美闭环:将官网作为营销枢纽,从集客进化为入站

Inbound marketing即入站营销的运作方式不同于付费广告,你需要不断地投入才能获得持续的访问量。而你的生意表达内容一经创建、发布,就能远远不断地带来流量。 Inbound marketing也被翻译作集客营销,也就是美国知名的营销SaaS企业hubspot所主…...

SQL On Pandas最佳实践

SQL On Pandas最佳实践 1、PandaSQL1.1、PandaSQL简介1.2、Pandas与PandaSQL解决方案对比1.3、PandaSQL支持的窗口函数1.4、PandaSQL综合使用案例2、DuckDB2.1、DuckDB简介2.2、SQL操作(SQL On Pandas)2.3、逻辑SQL(DSL on Pandas)2.4、DuckDB on Apache Arrow2.5、DuckDB …...

如何批量给视频添加logo水印?

如果你想为自己的视频添加图片水印,以增强视频的辨识度和个性化,那么你可以使用固乔剪辑助手软件来实现这一需求。下面就是详细的操作步骤: 1.下载并打开固乔剪辑助手软件,这是一款简单易用的视频剪辑软件,功能丰富&am…...

数据挖掘和大数据的区别

数据挖掘 一般用于对企业内部系统的数据库进行筛选、整合和分析。 操作对象是数据仓库,数据相对有规律,数据量较少。 大数据 一般指对互联网中杂乱无章的数据进行筛选、整合和分析。 操作对象一般是互联网的数据,数据无规律,…...

Go之流程控制大全: 细节、示例与最佳实践

引言 在计算机编程中,流程控制是核心的组成部分,它决定了程序应该如何根据给定的情况执行或决策。以下是Go语言所支持的流程控制结构的简要概览: 流程控制类型代码if-else条件分支if condition { } else { }for循环for initialization; con…...

FLStudio2024最新破解版注册机

水果音乐制作软件FLStudio是一款功能强大的音乐创作软件,全名:Fruity Loops Studio。水果音乐制作软件FLStudio内含教程、软件、素材,是一个完整的软件音乐制作环境或数字音频工作站... FL Studio21简称FL 21,全称 Fruity Loops Studio 21,因此国人习惯叫…...

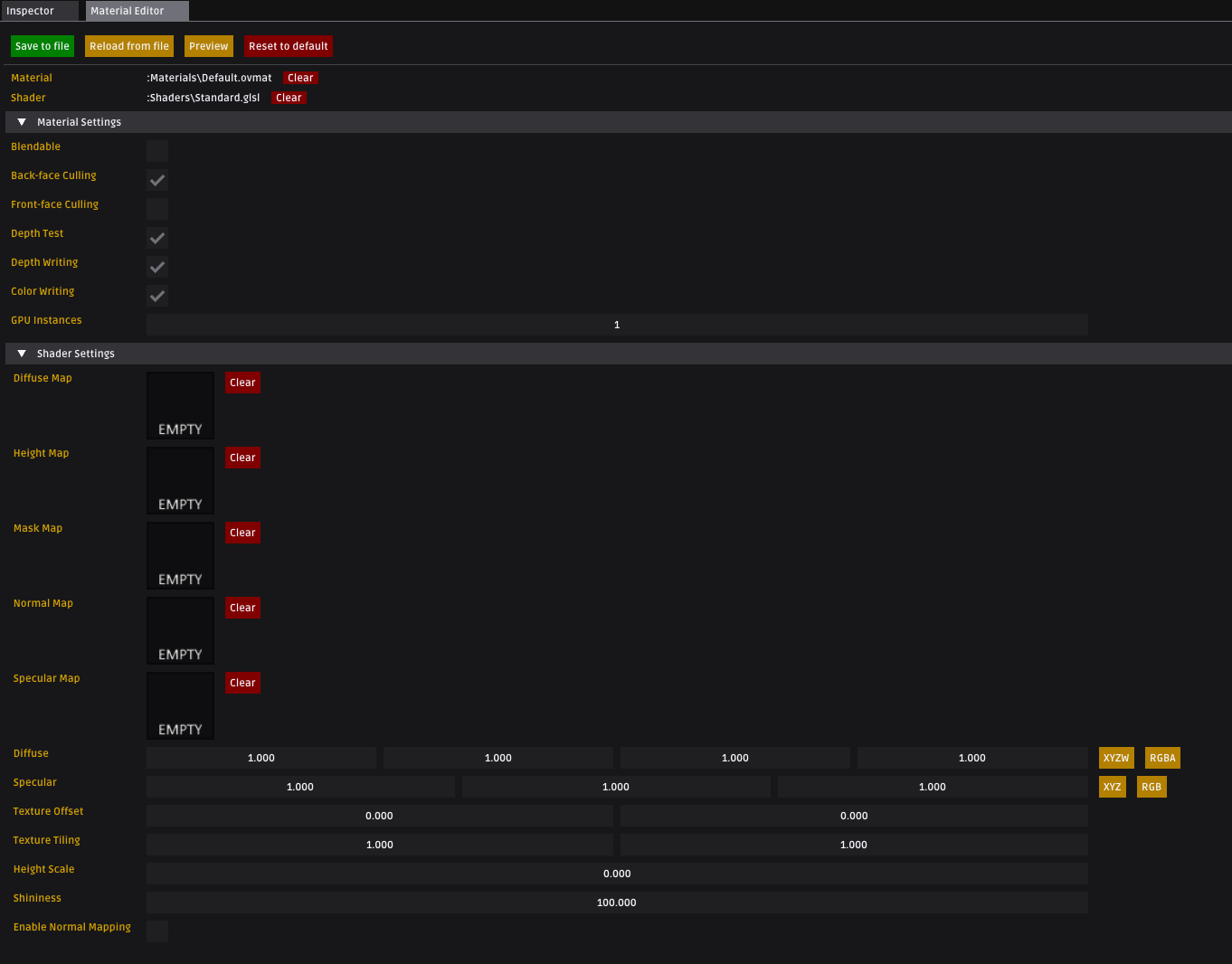

【Overload游戏引擎细节分析】standard材质Shader

提示:Shader属于GPU编程,难写难调试,阅读本文需有一定的OpenGL基础,可以写简单的Shader,不适合不会OpenGL的朋友 一、Blinn-Phong光照模型 Blinn-Phong光照模型,又称为Blinn-phong反射模型(Bli…...

Leetcode—7.整数反转【中等】

2023每日刷题(十) Leetcode—7.整数反转 关于为什么要设long变量 参考自这篇博客 long可以表示-2147483648而且只占4个字节,所以能满足题目要求 复杂逻辑版实现代码 int reverse(int x){int arr[32] {0};long y;int flag 1;if(x <…...

lua-web-utils和proxy设置示例

以下是一个使用lua-web-utils和proxy的下载器程序: -- 首先安装lua-web-utils库 local lwu require "lwu" -- 获取服务器 local function get_proxy()local proxy_url "duoipget_proxy"local resp, code, headers, err lwu.fetch(proxy_…...

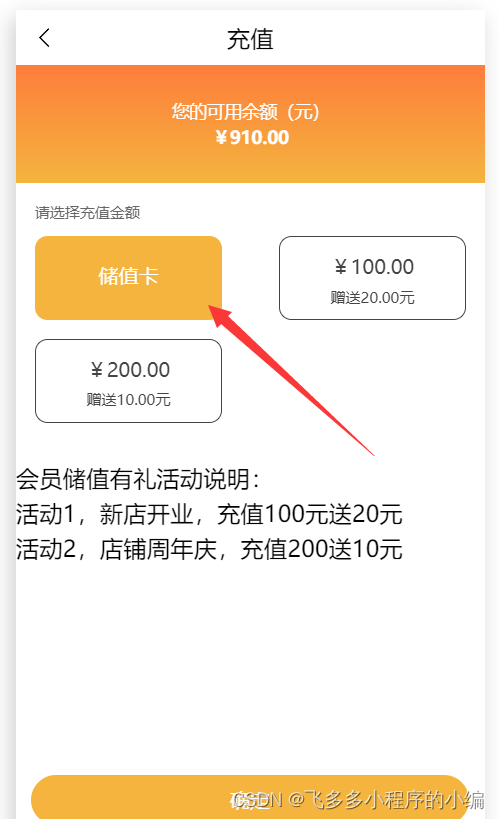

分享一下在微信小程序里怎么添加储值卡功能

在微信小程序中添加储值卡功能,可以让消费者更加便捷地管理和使用储值卡,同时也能增加商家的销售收入。下面是一篇关于如何在微信小程序中添加储值卡功能的软文。 标题:微信小程序添加储值卡功能,便捷与高效并存 随着科技的不断发…...

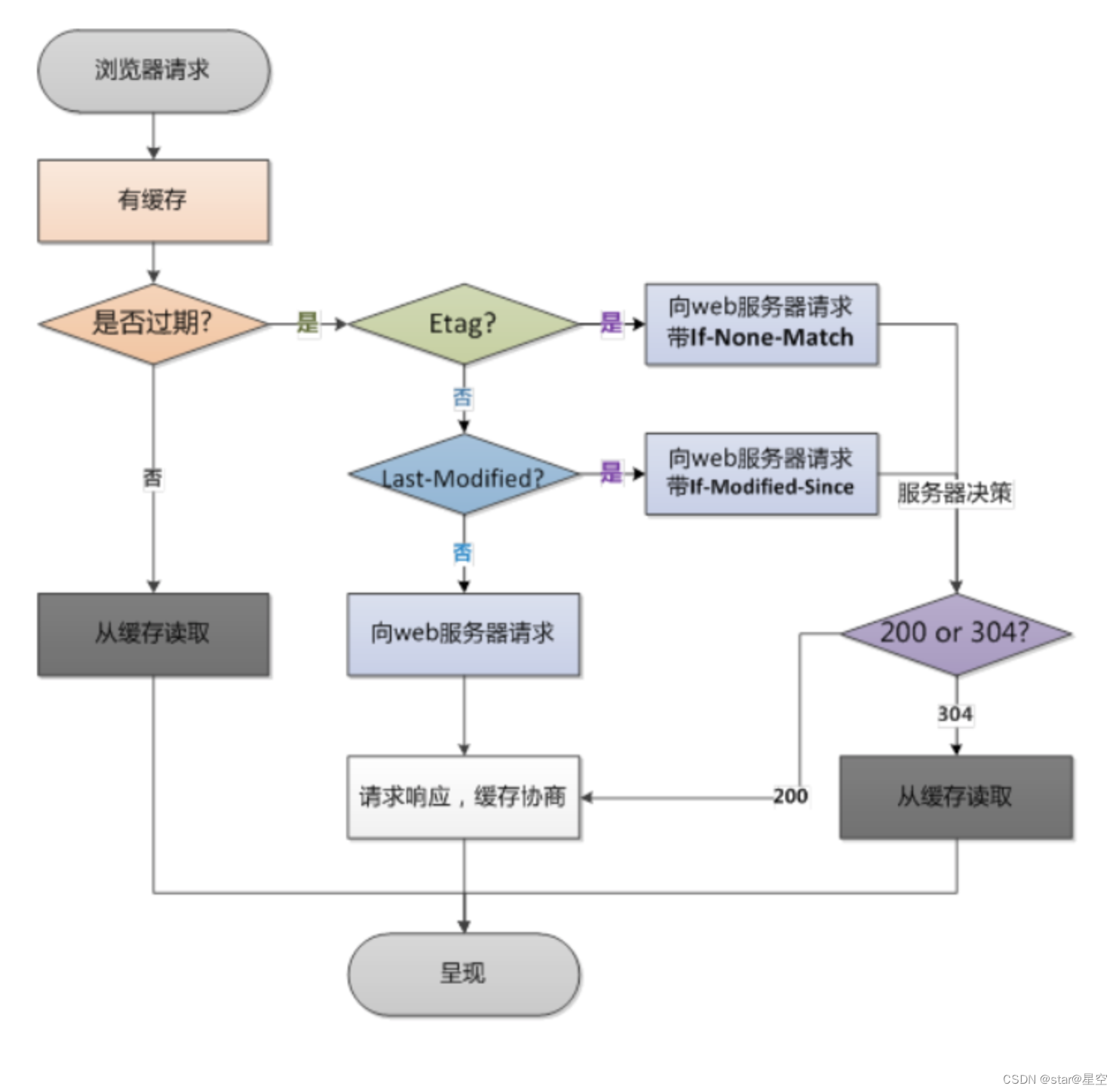

2023高频前端面试题-http

1. HTTP有哪些⽅法? HTTP 1.0 标准中,定义了3种请求⽅法:GET、POST、HEAD HTTP 1.1 标准中,新增了请求⽅法:PUT、PATCH、DELETE、OPTIONS、TRACE、CONNECT 2. 各个HTTP方法的具体作用是什么? 方法功能G…...

图像识别在自动驾驶汽车中的多传感器融合技术

摘要: 介绍文章的主要观点和发现。 引言: 自动驾驶汽车的兴起和重要性。多传感器融合技术在自动驾驶中的关键作用。 第一部分:图像识别技术 图像识别的基本原理。图像传感器和摄像头在自动驾驶中的应用。深度学习和卷积神经网络ÿ…...

Kafka To HBase To Hive

目录 1.在HBase中创建表 2.写入API 2.1普通模式写入hbase(逐条写入) 2.2普通模式写入hbase(buffer写入) 2.3设计模式写入hbase(buffer写入) 3.HBase表映射至Hive中 1.在HBase中创建表 hbase(main):00…...

python pandas.DataFrame 直接写入Clickhouse

import pandas as pd import sqlalchemy from clickhouse_sqlalchemy import Table, engines from sqlalchemy import create_engine, MetaData, Column import urllib.parsehost 1.1.1.1 user default password default db test port 8123 # http连接端口 engine create…...

用DeerFlow做竞品分析:5分钟自动生成全面竞品研究报告

用DeerFlow做竞品分析:5分钟自动生成全面竞品研究报告 1. DeerFlow简介:您的智能研究助手 DeerFlow是一款由字节跳动开源的深度研究自动化工具,它整合了语言模型、网络搜索和代码执行能力,能够快速完成复杂的研究任务。这个工具…...

放弃OpenVINO!在树莓派5上用Anaconda环境直接跑通YOLOv5摄像头检测

放弃OpenVINO!在树莓派5上用Anaconda环境直接跑通YOLOv5摄像头检测 树莓派作为嵌入式开发的明星产品,其第五代在性能上有了显著提升,4GB内存和2.4GHz四核处理器让它能够胜任更多AI推理任务。而YOLOv5作为目标检测领域的轻量级标杆,…...

Nanbeige 4.1-3B专属UI实战:一键部署沉浸式游戏风格聊天应用

Nanbeige 4.1-3B专属UI实战:一键部署沉浸式游戏风格聊天应用 1. 项目概述与核心价值 南北阁(Nanbeige)4.1-3B是一款性能优异的中英双语大语言模型,而今天我们要介绍的是为其量身打造的专属Web交互界面。这个界面最特别之处在于&…...

uniapp日期处理全攻略:获取某月首尾日、近七天日期等实用技巧

Uniapp日期处理实战:从基础格式化到高级业务场景解决方案 在移动应用开发中,日期处理几乎贯穿所有业务场景。无论是电商平台的限时抢购、医疗应用的预约挂号,还是企业系统的报表统计,精准高效的日期操作都是保障业务逻辑完整性的关…...

Neeshck-Z-lmage_LYX_v2实际作品:基于LoRA微调的专属IP形象批量生成

Neeshck-Z-lmage_LYX_v2实际作品:基于LoRA微调的专属IP形象批量生成 1. 引言:从零到一,打造你的专属数字形象 想象一下,你需要为你的品牌、游戏或者社交媒体账号设计一套统一的视觉形象。传统的做法是找设计师,沟通需…...

,错过将无法适配Mojo v1.2+运行时)

【限时开放】Mojo-Python互操作安全边界图谱(2024 Q3最新CVE影响评估+3类高危反模式代码扫描规则),错过将无法适配Mojo v1.2+运行时

第一章:Mojo-Python互操作安全边界图谱概览Mojo 作为面向 AI 原生开发的系统级编程语言,其与 Python 的互操作并非简单语法兼容,而是在运行时、内存模型、类型系统与异常传播四个维度上构建了显式、可审计的安全边界。这些边界共同构成一张动…...

以太网MAC与PHY接口技术详解

以太网PHY、MAC及其通信接口技术解析1. 以太网接口架构概述1.1 基本组成结构以太网接口电路从硬件角度可分为两大核心组件:MAC控制器(Media Access Control):负责数据链路层的媒体访问控制PHY芯片(Physical Layer&…...

Livox_ros_driver vs driver2:消息类型详解与ROS生态兼容性避坑指南

Livox_ros_driver与driver2深度对比:消息架构解析与ROS生态适配实战 当Livox发布HAP等新一代激光雷达时,技术团队常面临驱动版本选择的困境。livox_ros_driver与livox_ros_driver2看似只是版本迭代,实则反映了ROS生态中传感器接口标准化的深层…...

的两种实战配置与调参心得)

从图像分割到GAN生成:转置卷积(Transpose Conv)的两种实战配置与调参心得

转置卷积实战指南:图像分割与GAN生成中的核心技巧 在计算机视觉领域,我们常常需要将低分辨率特征图恢复到原始尺寸——无论是为了像素级预测的图像分割任务,还是从潜在空间生成逼真图像的GAN模型。传统插值方法如双线性插值虽然简单ÿ…...

从拼图游戏到自动驾驶:点云配准技术的跨领域进化史

从拼图游戏到自动驾驶:点云配准技术的跨领域进化史 1. 三维世界的数字拼图师 1987年,当Paul Besl和Neil McKay在实验室里尝试将两组扫描数据对齐时,他们可能不会想到,这项被称为迭代最近点(ICP)的技术会成为…...